A Prescription of Methodological Guidelines for Comparing Bio-inspired Optimization Algorithms

Bio-inspired optimization (including Evolutionary Computation and Swarm Intelligence) is a growing research topic, with many competitive bio-inspired algorithms being proposed every year. In such an active area, preparing a successful proposal of a new bio-inspired algorithm is not an easy task. Given the maturity of this research field, proposing a new optimization technique with innovative elements is no longer enough. Apart from the novelty, the results reported by the authors should be shown to achieve a significant advance over previous outcomes from the state of the art. Unfortunately, not all new proposals meet this requirement properly. Some of them fail to select appropriate benchmarks or reference algorithms to compare with. In other cases, the validation process is not defined in a principled way (or is not carried out at all). Consequently, the significance of the results presented in such studies cannot be guaranteed. In this work we review several recommendations in the literature and propose methodological guidelines to prepare a successful proposal, taking all these issues into account. We expect these guidelines to be useful not only for authors, but also for reviewers and editors in their assessment of new contributions to the field.

💡 Research Summary


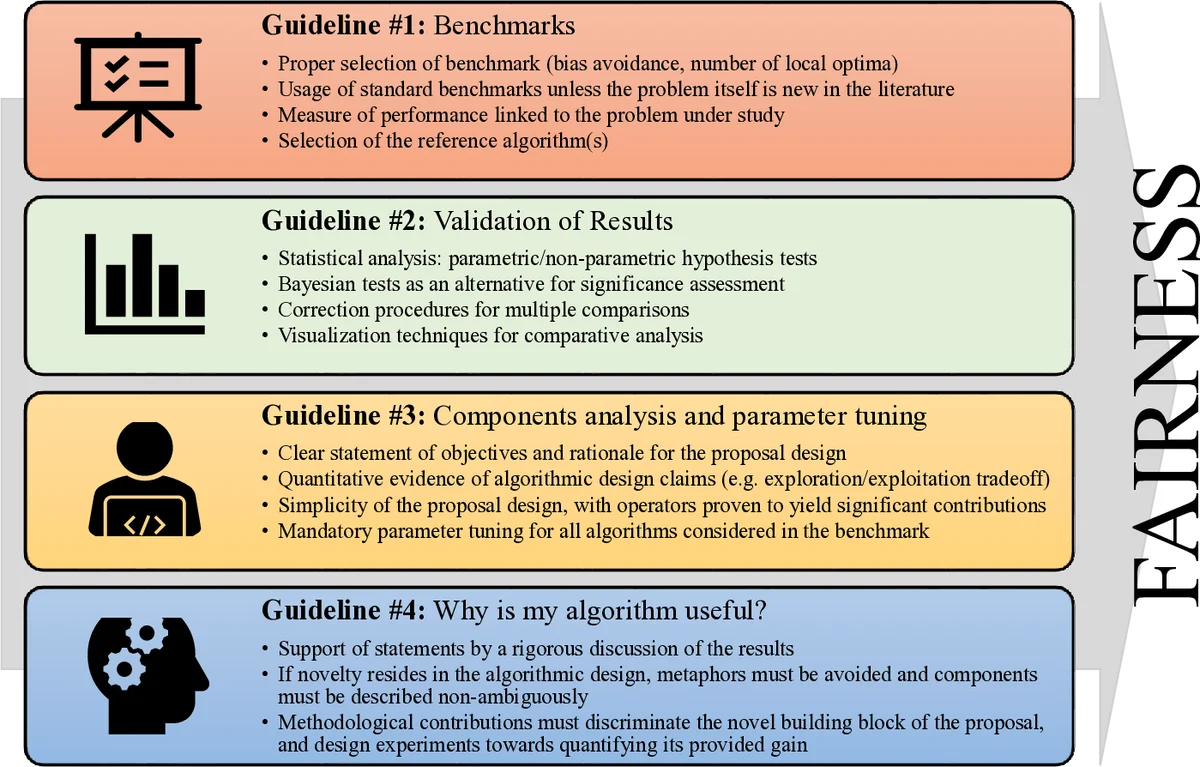

The paper addresses a critical gap in the field of bio‑inspired optimization, where a flood of new algorithms is published each year but many lack rigorous experimental validation. The authors first review existing literature on common pitfalls such as incomplete algorithm descriptions, biased search processes, inappropriate benchmark selection, insufficient statistical validation, and neglect of component‑wise analysis and parameter tuning. Building on this review, they propose four comprehensive methodological guidelines designed to help authors, reviewers, and editors ensure that any claimed improvement over the state‑of‑the‑art is truly substantiated.

Guideline #1 focuses on benchmark selection. It stresses the need for a diverse set of test problems that vary in dimensionality, separability, number of optima, and susceptibility to structural bias (e.g., central optimum placement, coordinate‑system sensitivity). Real‑world problems should be used when possible, and any known bias in the problem should be either mitigated or deliberately exploited, depending on the algorithm’s design goals.
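The central-optimum bias mentioned above can be illustrated with a shifted test function. The following is a minimal sketch, assuming a sphere function on a hypothetical domain of [-100, 100] per coordinate; the helper `make_shifted` and all parameter values are illustrative and not taken from the paper.

```python
import random

def sphere(x):
    """Classic sphere function; its optimum sits at the origin."""
    return sum(v * v for v in x)

def make_shifted(func, shift):
    """Return a version of `func` whose optimum is moved from the origin to `shift`."""
    def shifted(x):
        return func([xi - si for xi, si in zip(x, shift)])
    return shifted

# Hypothetical setup: 10 dimensions, domain [-100, 100] in every coordinate.
rng = random.Random(0)
dim, lower, upper = 10, -100.0, 100.0
shift = [rng.uniform(0.8 * lower, 0.8 * upper) for _ in range(dim)]
shifted_sphere = make_shifted(sphere, shift)

# A "search" that only ever samples the centre of the domain.
center = [(lower + upper) / 2.0] * dim

print(sphere(center))          # 0.0: the centre is already the optimum
print(shifted_sphere(center))  # strictly positive: the centre no longer helps
print(shifted_sphere(shift))   # 0.0: the optimum now sits at `shift`
```

An algorithm that is drawn towards the centre of the search space looks perfect on the unshifted function and loses that advantage on the shifted one, which is why benchmarks with displaced optima give a fairer picture.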

Guideline #2 deals with result validation. The authors advocate multiple independent runs (typically ≥30) under identical settings, followed by a thorough check of the assumptions behind parametric tests (normality, homoscedasticity). When those assumptions do not hold, non‑parametric tests are recommended: Friedman to compare several algorithms at once, Wilcoxon for pairwise comparisons, and Nemenyi as a post‑hoc procedure. Clear visualisations (box‑plots, convergence curves, performance profiles) should accompany the tests to make the statistical evidence readily interpretable.
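As a rough illustration of the ranking step behind the Friedman test, the sketch below computes average ranks and the Friedman chi-square statistic in plain Python. The error table is invented for the example, the function names are hypothetical, and no tie correction is applied; in practice a library routine such as `scipy.stats.friedmanchisquare` would normally be used instead.

```python
def average_ranks(row):
    """Rank one row of errors (1 = lowest error); tied values share the mean rank."""
    order = sorted(range(len(row)), key=lambda i: row[i])
    ranks = [0.0] * len(row)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the block of values tied with position i.
        while j + 1 < len(order) and row[order[j + 1]] == row[order[i]]:
            j += 1
        mean_rank = (i + j) / 2.0 + 1.0
        for pos in range(i, j + 1):
            ranks[order[pos]] = mean_rank
        i = j + 1
    return ranks

def friedman_statistic(errors):
    """errors[p][a] is the error of algorithm a on problem p.

    Returns (mean rank per algorithm, Friedman chi-square without tie correction).
    """
    n = len(errors)      # number of problems
    k = len(errors[0])   # number of algorithms
    rank_rows = [average_ranks(row) for row in errors]
    mean_ranks = [sum(r[a] for r in rank_rows) / n for a in range(k)]
    chi2 = 12.0 * n / (k * (k + 1)) * (
        sum(r * r for r in mean_ranks) - k * (k + 1) ** 2 / 4.0
    )
    return mean_ranks, chi2

# Invented mean errors: 5 problems (rows) x 3 algorithms (columns).
errors = [
    [0.10, 0.30, 0.20],
    [0.05, 0.40, 0.10],
    [0.20, 0.50, 0.30],
    [0.15, 0.25, 0.35],
    [0.01, 0.09, 0.03],
]
mean_ranks, chi2 = friedman_statistic(errors)
print(mean_ranks, chi2)
```

The statistic is compared against a chi-square distribution with k − 1 degrees of freedom (critical value 5.991 for k = 3 at the 0.05 level). With so few problems the chi-square approximation is coarse, so the numbers here only illustrate the mechanics.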

Guideline #3 requires a detailed component analysis and systematic parameter tuning. The algorithm should be decomposed into its logical modules (exploration, exploitation, mutation, selection, etc.), and the contribution of each module to overall performance must be quantified. Parameter sensitivity analysis, grid search, Bayesian optimisation, or specialised tuning frameworks (SMAC, Hyperopt) should be employed, and all settings must be reported to guarantee reproducibility. The authors also encourage the release of source code and configuration files.
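A component analysis of this kind can be organised as a small ablation harness. The sketch below is only an illustration under stated assumptions: the optimizer is a toy elitist hill climber on the sphere function, the success-based step-size rule is the hypothetical component being switched off, and none of the names or settings come from the paper.

```python
import random
import statistics

def sphere(x):
    return sum(v * v for v in x)

def hill_climber(seed, adapt_step, dim=5, budget=500):
    """Elitist (1+1) search; `adapt_step` toggles the component under study."""
    rng = random.Random(seed)
    x = [rng.uniform(-5.0, 5.0) for _ in range(dim)]
    fx = sphere(x)
    step = 1.0
    for _ in range(budget):
        y = [xi + rng.gauss(0.0, step) for xi in x]
        fy = sphere(y)
        improved = fy < fx
        if improved:
            x, fx = y, fy  # keep the candidate only if it is strictly better
        if adapt_step:
            # Hypothetical success-based rule: grow the step on success, shrink otherwise.
            step *= 1.5 if improved else 0.9
    return fx

# Each variant disables one component; every variant sees the same 30 seeds,
# so the runs start from the same initial points and are directly comparable.
variants = {"full": True, "no_step_adaptation": False}
seeds = range(30)
results = {
    name: [hill_climber(seed, flag) for seed in seeds]
    for name, flag in variants.items()
}

for name, final_errors in results.items():
    print(name, statistics.median(final_errors))
```

The per-variant error lists in `results` are the raw material for the statistical tests of Guideline #2; reporting the seeds, budget, and step-size constants alongside them is what makes the comparison reproducible.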

Guideline #4 asks authors to explicitly justify the usefulness of their proposal. Beyond raw performance numbers, the paper should explain why the new method matters—whether it offers superior results on a specific class of problems, introduces a novel mechanism that can inspire further research, or provides a more efficient solution for a real‑world application.

To illustrate the practical application of these guidelines, the authors present a case study using the CEC 2013 Large‑Scale Global Optimization benchmark. They select a standard set of functions, adopt ranking‑based performance measures, and compare the new algorithm against several recent meta‑heuristics. Statistical validation is performed using Friedman‑Nemenyi testing, and visual tools are employed to summarise the findings. Component‑wise ablation studies and a systematic parameter‑tuning protocol are also reported, demonstrating that each part of the algorithm contributes positively to the overall outcome. The case study confirms that the proposed methodology successfully guides the design, evaluation, and reporting of a new bio‑inspired optimizer.

In conclusion, the paper delivers a pragmatic, step‑by‑step framework that standardises experimental practice in bio‑inspired optimization. By adhering to the four guidelines, researchers can produce more credible, reproducible, and impactful contributions, while reviewers and editors gain clear criteria for assessing the scientific merit of new algorithmic proposals. This work therefore strengthens the methodological foundations of the field and promotes genuine innovation.
