Identifying Sparse Low-Dimensional Structures in Markov Chains: A Nonnegative Matrix Factorization Approach

We consider the problem of learning low-dimensional representations for large-scale Markov chains. We formulate the task of representation learning as that of mapping the state space of the model to a low-dimensional state space, called the kernel space. The kernel space contains a set of meta states which are desired to be representative of only a small subset of original states. To promote this structural property, we constrain the number of nonzero entries of the mappings between the state space and the kernel space. By imposing the desired characteristics of the representation, we cast the problem as a constrained nonnegative matrix factorization. To compute the solution, we propose an efficient block coordinate gradient descent and theoretically analyze its convergence properties.

💡 Research Summary

The paper addresses the challenge of representing large‑scale discrete‑time Markov chains with a compact, low‑dimensional model while preserving essential dynamical properties. The authors formalize the representation learning task as a factorization of the original transition matrix P into three non‑negative stochastic matrices: U (mapping from the original state space to a reduced “kernel” space), (\tilde P) (the transition matrix within the kernel space), and V (mapping back to the original space), such that P ≈ U (\tilde P) V. This decomposition is motivated by the concept of non‑negative rank, which guarantees that if the rank is k, a factorization with k meta‑states exists.

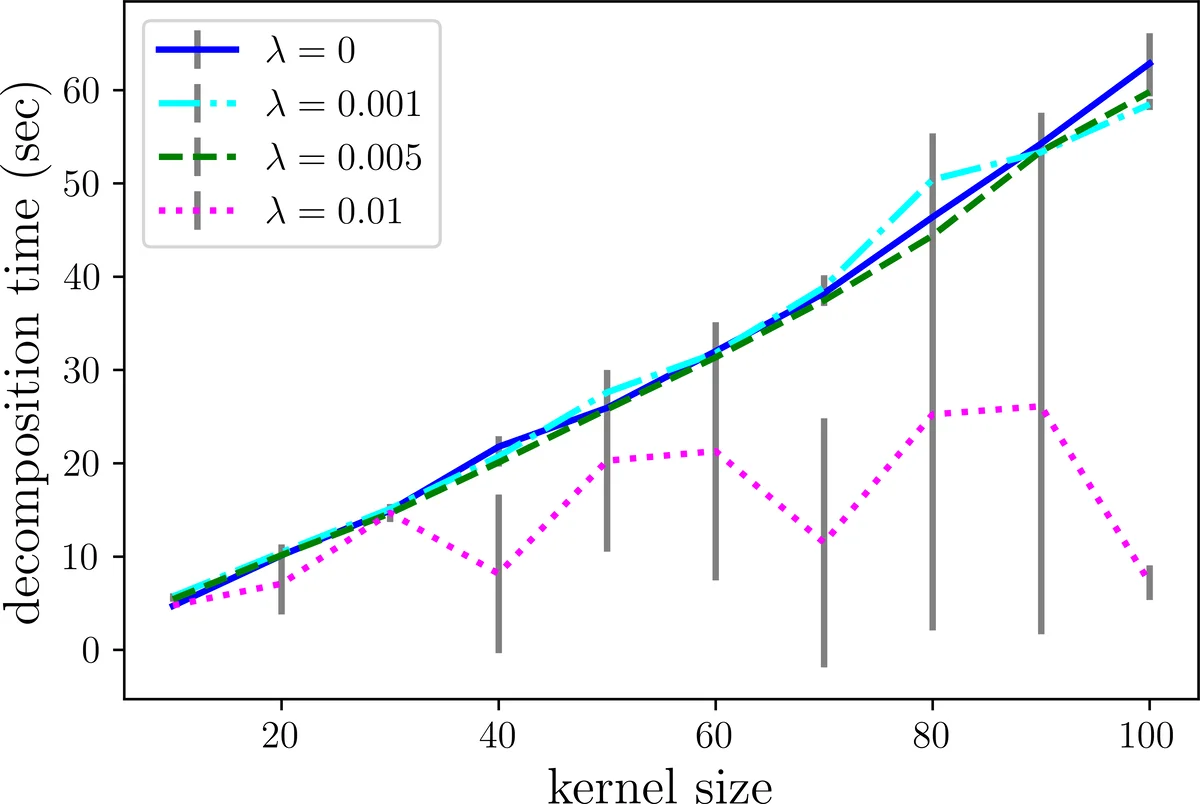

To ensure that each meta‑state represents only a small subset of original states, the authors impose sparsity on the rows of U and V. Directly enforcing an ℓ₀‑norm constraint is combinatorial and NP‑hard, so they relax it to an ℓ₁‑norm regularization, leading to the convex surrogate objective:

\

Comments & Academic Discussion

Loading comments...

Leave a Comment