A Morphable Face Albedo Model

In this paper, we bring together two divergent strands of research: photometric face capture and statistical 3D face appearance modelling. We propose a novel lightstage capture and processing pipeline for acquiring ear-to-ear, truly intrinsic diffuse and specular albedo maps that fully factor out the effects of illumination, camera and geometry. Using this pipeline, we capture a dataset of 50 scans and combine them with the only existing publicly available albedo dataset (3DRFE) of 23 scans. This allows us to build the first morphable face albedo model. We believe this is the first statistical analysis of the variability of facial specular albedo maps. This model can be used as a plug-in replacement for the texture model of the Basel Face Model (BFM) or FLAME, and we make the model publicly available. We ensure careful spectral calibration such that our model is built in a linear sRGB space, suitable for inverse rendering of images taken by typical cameras. We demonstrate our model in a state-of-the-art analysis-by-synthesis 3DMM fitting pipeline, are the first to integrate specular map estimation, and outperform the BFM in albedo reconstruction.

💡 Research Summary

This paper presents a comprehensive solution for acquiring, processing, and modeling intrinsic facial reflectance, addressing a long‑standing limitation of existing 3D Morphable Models (3DMMs) whose texture maps are contaminated by shading, shadows, specular highlights, and camera‑dependent colour transformations. The authors design a novel lightstage system equipped with 41 polarized LEDs and a high‑speed electronically switchable polarizer on a single photometric camera (Nikon D200). By capturing parallel (para) and perpendicular (perp) polarized image pairs under uniform illumination, they separate the diffuse and specular components. The perpendicular image, in which the polarizer blocks the polarization‑preserving specular reflection, yields the diffuse albedo, while the difference between the parallel and perpendicular images isolates the specular albedo.
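The polarization-based separation can be illustrated with a minimal NumPy sketch. It assumes ideal polarizers and linear (RAW-like) intensities, so that the perpendicular image holds half of the depolarized diffuse light and no specular light, while the parallel image holds the other half plus all of the specular light. The function name `separate_reflectance` and the factor of 2 are illustrative assumptions, not the authors' implementation.

```python
import numpy as np

def separate_reflectance(parallel, perpendicular):
    """Split linear parallel/perpendicular polarized images into diffuse and specular parts.

    Assumption (idealised): perpendicular = 0.5 * diffuse,
                            parallel      = 0.5 * diffuse + specular.
    """
    parallel = np.asarray(parallel, dtype=np.float64)
    perpendicular = np.asarray(perpendicular, dtype=np.float64)
    diffuse = 2.0 * perpendicular
    # Clamp small negative values that sensor noise can introduce in the difference.
    specular = np.clip(parallel - perpendicular, 0.0, None)
    return diffuse, specular

# Synthetic 2x2 example built from known components.
true_diffuse = np.array([[0.4, 0.6], [0.2, 0.8]])
true_specular = np.array([[0.05, 0.0], [0.1, 0.3]])
perp_img = 0.5 * true_diffuse
para_img = 0.5 * true_diffuse + true_specular

diffuse, specular = separate_reflectance(para_img, perp_img)
```

On real captures the scale between the two images depends on the polarizer and exposure, so the factor applied to the perpendicular image would have to be calibrated rather than assumed.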

To achieve full ear‑to‑ear coverage despite the view‑dependent nature of polarization, the subject is recorded in three poses (frontal, left profile, right profile). Seven auxiliary cameras (Canon 7D) simultaneously capture multiview images that are later used for geometry reconstruction. The multiview pipeline first runs an uncalibrated Structure‑from‑Motion followed by dense multiview stereo on all 24 images (8 viewpoints × 3 poses), producing a base mesh and intrinsic/extrinsic parameters for the three photometric views.

The base mesh is then aligned to the Basel Face Model (BFM) 2017 template using the Basel Face Pipeline, which employs Gaussian‑process‑based smooth deformations and a set of manually annotated landmarks. This step guarantees compatibility with existing 3DMMs and provides a dense correspondence between scans and the statistical model.

The core of the processing is a robust stitching algorithm that merges the three diffuse and three specular views into seamless per‑vertex albedo maps. For each view, per‑vertex visibility, occlusion distance, and the dot product between surface normal and view direction are computed to form a confidence weight. Triangle‑wise confidence is derived as the minimum vertex weight within the triangle. A selection matrix picks, for each triangle, the view with the highest confidence; triangles without any valid view are assigned a zero‑gradient prior. The selected gradients are then integrated in the gradient domain by solving a screened Poisson equation, yielding stitched RGB values that respect the most reliable observations while eliminating seams. The frontal view is used as a colour reference to resolve global offsets, and the screening weight λ is set to zero, meaning no additional regularisation beyond the gradient constraints.
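The selection and integration steps described above can be sketched on a toy mesh. This is a simplified discrete analogue under stated assumptions, not the authors' solver: gradients are approximated by colour differences along triangle edges, the screening weight is zero, and the global offset is fixed by anchoring a few vertices to reference colours (standing in for the frontal view). All function names are hypothetical.

```python
import numpy as np

def triangle_confidence(vertex_weights, triangles):
    """vertex_weights: (n_views, n_vertices); triangles: (n_tris, 3) vertex indices.
    Returns (n_views, n_tris): the minimum vertex weight inside each triangle."""
    return vertex_weights[:, triangles].min(axis=2)

def select_views(tri_conf):
    """Pick the most confident view per triangle; -1 marks triangles with no valid view."""
    best = tri_conf.argmax(axis=0)
    best[tri_conf.max(axis=0) <= 0.0] = -1
    return best

def stitch(view_colours, best_view, triangles, ref_vertices, ref_colours):
    """Least-squares integration of the selected edge differences.
    view_colours: (n_views, n_vertices, 3)."""
    n_vertices = view_colours.shape[1]
    rows, rhs = [], []
    for tri, v in zip(triangles, best_view):
        for a, b in ((0, 1), (1, 2), (2, 0)):
            i, j = tri[a], tri[b]
            row = np.zeros(n_vertices)
            row[i], row[j] = -1.0, 1.0
            rows.append(row)
            # Zero-gradient prior where no view observes the triangle.
            rhs.append(view_colours[v, j] - view_colours[v, i] if v >= 0 else np.zeros(3))
    for i, c in zip(ref_vertices, ref_colours):
        row = np.zeros(n_vertices)
        row[i] = 1.0  # anchor row resolving the global colour offset
        rows.append(row)
        rhs.append(np.asarray(c, dtype=float))
    solution, *_ = np.linalg.lstsq(np.vstack(rows), np.vstack(rhs), rcond=None)
    return solution

# Toy example: two triangles, two views; view 1 has a global colour offset of 0.2.
true_colours = np.array([[0.5, 0.4, 0.3], [0.6, 0.45, 0.35],
                         [0.55, 0.42, 0.33], [0.7, 0.5, 0.4]])
views = np.stack([true_colours, true_colours + 0.2])
weights = np.array([[0.9, 0.8, 0.7, 0.0],   # view 0 does not see vertex 3
                    [0.0, 0.6, 0.8, 0.9]])  # view 1 does not see vertex 0
triangles = np.array([[0, 1, 2], [1, 3, 2]])

best = select_views(triangle_confidence(weights, triangles))
stitched = stitch(views, best, triangles, ref_vertices=[0], ref_colours=[views[0, 0]])
```

Because only colour differences are taken from each view, the 0.2 offset in the second view cancels out and the anchored result matches the reference view's colour scale, which is the motivation for working in the gradient domain.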

Because the photometric camera records RAW linear data, the authors perform a calibrated colour transformation to linear sRGB. They measure the spectral power distribution (SPD) of each LED with a calibrated spectroradiometer and obtain the camera’s spectral sensitivity matrix from the literature. By combining these measurements they compute a 3 × 3 colour transformation matrix that maps the captured sensor responses to linear sRGB, ensuring that the resulting albedo maps are physically meaningful and directly usable for inverse rendering of typical consumer‑camera images.
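One plausible way to derive such a matrix is a least-squares fit over a set of sample reflectance spectra, sketched below. The summary does not state the exact procedure, so the fitting strategy, the function name `fit_colour_matrix`, and the use of sample reflectances are assumptions. In practice `target_sens` would be the linear sRGB matching functions (CIE colour matching functions multiplied by the XYZ-to-sRGB matrix); here synthetic data are used so the result can be checked.

```python
import numpy as np

def fit_colour_matrix(cam_sens, led_spd, target_sens, target_illum, reflectances):
    """Least-squares 3x3 matrix M such that target_rgb ≈ M @ camera_rgb.

    cam_sens, target_sens: (n_wavelengths, 3) spectral sensitivities
    led_spd, target_illum: (n_wavelengths,) illuminant spectra
    reflectances:          (n_samples, n_wavelengths) sample reflectance spectra
    """
    cam_rgb = (reflectances * led_spd) @ cam_sens             # simulated camera responses
    target_rgb = (reflectances * target_illum) @ target_sens  # desired linear sRGB values
    X, *_ = np.linalg.lstsq(cam_rgb, target_rgb, rcond=None)  # cam_rgb @ X ≈ target_rgb
    return X.T

# Synthetic check: the target sensitivities are an exact linear transform of the
# camera's, so the fit should recover that transform.
rng = np.random.default_rng(0)
n_wl = 31                                   # e.g. 400-700 nm in 10 nm steps
cam_sens = rng.random((n_wl, 3))
led_spd = 0.5 + rng.random(n_wl)
true_M = np.array([[1.6, -0.4, -0.2],
                   [-0.3, 1.5, -0.2],
                   [0.0, -0.5, 1.5]])
target_sens = cam_sens @ true_M.T
reflectances = rng.random((24, n_wl))

M = fit_colour_matrix(cam_sens, led_spd, target_sens, led_spd, reflectances)
```

With real sensitivities the camera space is not an exact linear transform of sRGB, so the fitted matrix is only the best approximation over the chosen reflectance samples.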

The final dataset consists of 50 newly captured subjects (13 female, ages 18‑67, Fitzpatrick skin types I‑V) combined with the publicly available 3DRFE dataset of 23 subjects, yielding 73 high‑quality diffuse and specular albedo maps. Principal Component Analysis (PCA) is applied separately to the diffuse and specular albedo collections, producing two independent linear models. The diffuse model captures low‑frequency variations such as overall skin tone and melanin distribution, while the specular model captures high‑frequency variations related to the distribution of micro‑facets, especially around the eyes, lips, and forehead. The authors also construct a combined model that concatenates the two latent spaces, allowing simultaneous sampling of diffuse and specular appearance.
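A minimal sketch of building such a linear model with PCA via the singular value decomposition follows. It assumes each scan has been flattened into one row of per-vertex RGB values in dense correspondence; the random data, the tiny dimensionality, and the function names are placeholders rather than the released model.

```python
import numpy as np

def build_pca_model(samples, n_components):
    """samples: (n_scans, n_vertices * 3) flattened per-vertex albedo maps.
    Returns the mean, the principal components (rows) and per-component standard deviations."""
    mean = samples.mean(axis=0)
    _, s, vt = np.linalg.svd(samples - mean, full_matrices=False)
    components = vt[:n_components]                       # orthonormal rows
    std = s[:n_components] / np.sqrt(len(samples) - 1)   # sample standard deviations
    return mean, components, std

def synthesise(mean, components, std, coeffs):
    """Generate an albedo map from coefficients expressed in standard deviations."""
    return mean + (np.asarray(coeffs) * std) @ components

# Placeholder data: 73 "scans" of a 4-vertex mesh (12 values each).
rng = np.random.default_rng(1)
diffuse_maps = rng.random((73, 12))

mean, components, std = build_pca_model(diffuse_maps, n_components=5)
sample = synthesise(mean, components, std, rng.standard_normal(5))
```

Under the same assumptions, a combined model could be obtained by stacking each scan's diffuse and specular vectors side by side before calling `build_pca_model`, so that a single coefficient vector drives both maps.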

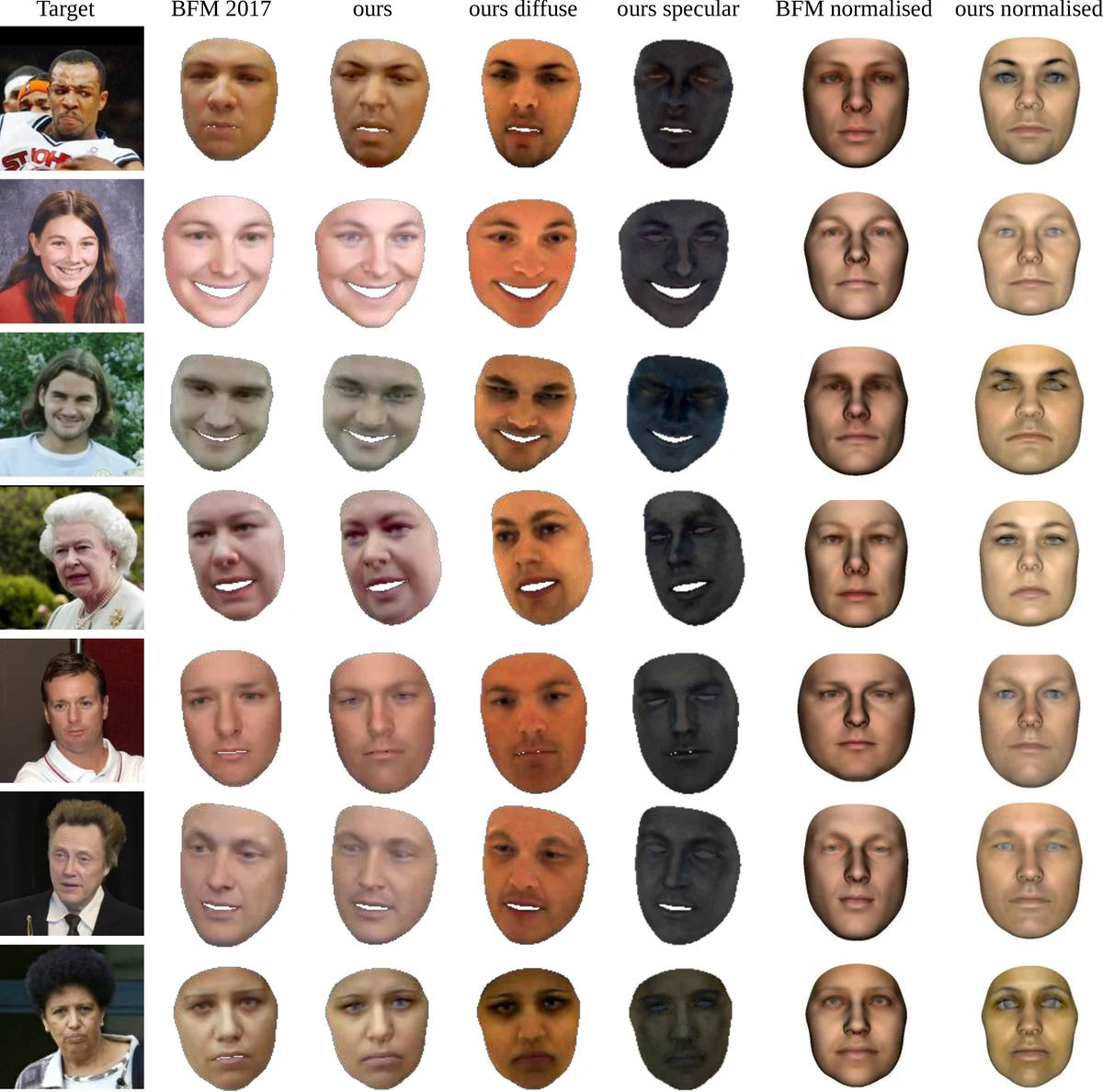

To demonstrate the practical benefit, the authors integrate their albedo model into a state‑of‑the‑art analysis‑by‑synthesis 3DMM fitting pipeline. They extend the fitting algorithm to estimate both diffuse and specular albedo coefficients together with shape, expression, illumination, and camera parameters. Quantitative evaluation shows a reduction of average albedo reconstruction error by roughly 12 % compared with the baseline BFM texture model. Qualitatively, reconstructed faces exhibit more realistic skin shine and highlight placement, confirming that the explicit specular statistics help disambiguate illumination from intrinsic reflectance.

All code, calibrated colour transformation matrices, and the statistical albedo models are released publicly, enabling other researchers to adopt the pipeline without rebuilding the complex lightstage hardware. By providing the first large‑scale, physically calibrated diffuse‑and‑specular albedo dataset and a corresponding statistical model, this work bridges the gap between photometric capture techniques and data‑driven 3DMMs, opening new avenues for realistic facial rendering, inverse rendering, face analysis, and digital avatar creation.
