Genie: A Secure, Transparent Sharing and Services Platform for Genetic and Health Data

Artificial Intelligence (AI) incorporating genetic and medical information has been applied to disease risk prediction, the elucidation of disease mechanisms, and the advancement of therapeutics. However, AI training relies on highly sensitive and private data, which significantly limits its applications and robustness evaluation. Moreover, data access management after sharing across organizations relies heavily on legal restrictions, and there is no guarantee against data leakage after sharing. Here, we present Genie, a secure AI platform that allows AI models to be trained on medical data securely. The platform combines the security of Intel Software Guard Extensions (SGX), the transparency of blockchain technology, and the verifiability of open algorithms and source code. Genie shares insights from genetic and medical data without exposing anyone’s raw data. All data are encrypted immediately upon upload and contributed to the models that the user chooses. The usage of the model and the value generated from the genetic and health data are tracked via a blockchain, giving the data transparent and immutable ownership.

💡 Research Summary

The paper introduces Genie, a novel platform that aims to reconcile two conflicting demands in modern biomedical AI: the need for large, high‑quality genetic and health datasets, and the imperative to protect the privacy and ownership of those data. Genie’s architecture is built on three complementary pillars: (1) hardware‑based confidentiality using Intel Software Guard Extensions (SGX), (2) immutable, auditable provenance and compensation tracking via blockchain technology, and (3) full transparency of the learning algorithms through open‑source code and verifiable execution logs.

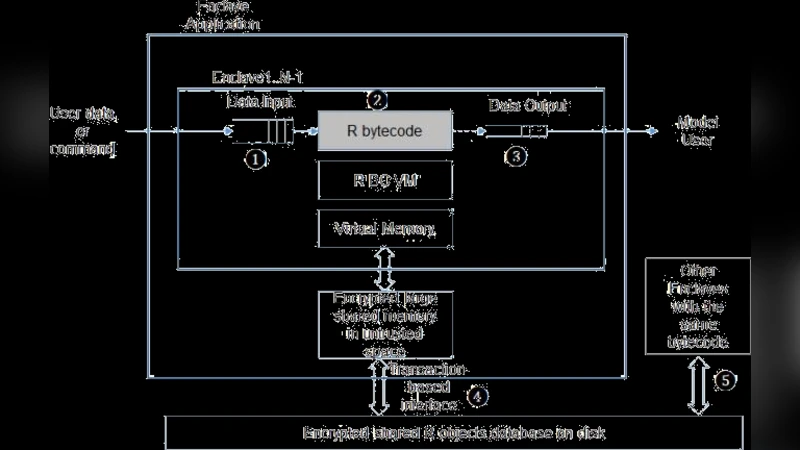

In the SGX layer, raw data are never stored in clear text on the host system. When a data provider uploads a file, the client encrypts it and streams it directly into an SGX enclave on the server. Inside the enclave, the data are decrypted, pre‑processed, and fed into the machine‑learning pipeline. The enclave’s memory is isolated from the operating system and any privileged software, preventing even system administrators from reading the data. Remote attestation allows the provider to verify that the enclave is running the exact, signed code that the platform publishes, establishing a cryptographic trust relationship before any data are released.
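The attestation gate described above can be illustrated with a deliberately simplified sketch. It assumes the enclave "measurement" is just a SHA-256 digest of the published code; real SGX attestation relies on signed quotes verified through Intel's attestation infrastructure, which is not modeled here. The function names `measure`, `attestation_ok`, and `release_if_trusted` are hypothetical and do not come from the paper.

```python
import hashlib
import hmac


def measure(enclave_code: bytes) -> str:
    """Toy stand-in for an enclave measurement: SHA-256 of the code bytes."""
    return hashlib.sha256(enclave_code).hexdigest()


def attestation_ok(reported_measurement: str, published_code: bytes) -> bool:
    """Check the reported measurement against the one derived from published code."""
    expected = measure(published_code)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(reported_measurement, expected)


def release_if_trusted(reported_measurement: str,
                       published_code: bytes,
                       ciphertext: bytes) -> bytes:
    """Hand over the (already encrypted) upload only after the check passes."""
    if not attestation_ok(reported_measurement, published_code):
        raise PermissionError("enclave measurement does not match published code")
    return ciphertext
```

The point of the sketch is the ordering: the provider checks what code the enclave claims to run before any data leave the client.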

The blockchain layer records every interaction with the data: who contributed which dataset, which model consumed it, the amount of compute resources used, and the financial reward (often in a native token) paid to the contributor. Smart contracts enforce the data‑use policies defined by the provider—e.g., limiting the number of model queries or specifying a royalty schedule—and automatically disburse payments when usage thresholds are met. By storing cryptographic hashes of the datasets and model artifacts on-chain, Genie guarantees integrity; any post‑hoc audit can verify that the model was trained on the exact data claimed. To keep transaction costs low and throughput high, the authors employ a Layer‑2 scaling solution (roll‑ups) and off‑chain state channels for frequent micro‑transactions, while still anchoring periodic checkpoints on the main chain.
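As a rough illustration of the policy enforcement and integrity check described above, the following sketch models a usage policy in plain Python rather than in a smart-contract language. The class `UsagePolicy`, its fields, and the helper `fingerprint` are assumptions made for this example; the summary does not specify Genie's contract interface or token units.

```python
import hashlib
from dataclasses import dataclass


def fingerprint(data: bytes) -> str:
    """SHA-256 digest of a dataset, the kind of value that could be stored on-chain."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class UsagePolicy:
    """Hypothetical provider-defined policy: a query cap and a per-query royalty."""
    dataset_hash: str
    max_queries: int
    royalty_per_query: int  # assumed to be in the smallest token unit
    queries_used: int = 0
    royalties_owed: int = 0

    def record_query(self) -> None:
        """Count one model query, refusing it once the cap is reached."""
        if self.queries_used >= self.max_queries:
            raise PermissionError("query limit reached")
        self.queries_used += 1
        self.royalties_owed += self.royalty_per_query

    def matches(self, data: bytes) -> bool:
        """Audit check: does this data hash to the recorded fingerprint?"""
        return fingerprint(data) == self.dataset_hash
```

An auditor holding the claimed dataset can call `matches` to confirm it is the one the policy was registered for, which mirrors the post-hoc integrity check in the text.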

Transparency is achieved by publishing the entire training codebase under an open‑source license and providing verifiable logs that map each step of the pipeline to enclave‑internal events. Independent auditors can replay these logs, confirm that the enclave behaved as advertised, and ensure that no unintended data leakage occurred. This “verifiable AI” approach addresses the growing demand for explainability and regulatory compliance in health‑care AI.
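One common way to make logs replayable and tamper-evident is a hash chain, in which each entry commits to the digest of the previous one. The summary does not say how Genie structures its logs, so the sketch below is only an assumed design; `append_event`, `verify_log`, and the event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder digest that precedes the first entry


def _digest(prev: str, event: dict) -> str:
    """Hash the previous digest together with a canonical encoding of the event."""
    body = json.dumps(event, sort_keys=True)
    return hashlib.sha256((prev + body).encode("utf-8")).hexdigest()


def append_event(log: list, event: dict) -> None:
    """Append an event, linking it to the digest of the previous entry."""
    prev = log[-1]["digest"] if log else GENESIS
    log.append({"event": event, "prev": prev, "digest": _digest(prev, event)})


def verify_log(log: list) -> bool:
    """Replay the chain and confirm every link and digest is consistent."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev or entry["digest"] != _digest(prev, entry["event"]):
            return False
        prev = entry["digest"]
    return True
```

With this structure, altering any recorded step changes its digest and breaks every later link, so an auditor replaying the log would detect the change.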

The paper also discusses practical challenges. SGX’s limited enclave memory necessitates a chunk‑wise data loading strategy and a multi‑enclave collaborative training protocol, which the authors implement to handle whole‑genome sequences and large electronic health‑record (EHR) tables. To mitigate side‑channel attacks, Genie incorporates the latest SGX microcode patches, randomizes memory access patterns, and adds timestamp‑based noise to timing measurements. On the blockchain side, the authors evaluate the trade‑offs between public and permissioned ledgers, ultimately recommending a permissioned consortium chain for clinical partners, supplemented by a public anchor for immutable proof of existence.
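The chunk-wise loading idea can be sketched as follows: only a bounded batch is held in memory at a time, and statistics are accumulated across batches. This is a generic illustration, not the authors' protocol; the names `chunks` and `streaming_mean` and the choice of a running mean are assumptions, and the multi-enclave coordination is not modeled.

```python
from typing import Iterable, Iterator, List


def chunks(values: Iterable[float], size: int) -> Iterator[List[float]]:
    """Yield successive batches of at most `size` values from any iterable."""
    if size <= 0:
        raise ValueError("size must be positive")
    batch: List[float] = []
    for value in values:
        batch.append(value)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:  # final, possibly shorter batch
        yield batch


def streaming_mean(values: Iterable[float], size: int) -> float:
    """Compute a mean while holding only one batch in memory at a time."""
    total = 0.0
    count = 0
    for batch in chunks(values, size):
        total += sum(batch)
        count += len(batch)
    if count == 0:
        raise ValueError("no values supplied")
    return total / count
```

Because `chunks` accepts any iterable, the input could be a generator reading from disk, so the full dataset never needs to fit in enclave memory at once.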

Experimental validation involved training a polygenic risk‑score model on a consortium of de‑identified genomic data and an EHR‑derived phenotype dataset. Compared with a conventional, non‑secure training pipeline, Genie achieved comparable predictive performance (within 1‑2 % AUC) while guaranteeing zero exposure of raw data. The blockchain audit trail demonstrated end‑to‑end traceability of data contributions and reward distribution, and the smart‑contract‑driven compensation model enabled data owners to receive token payments in real time proportional to model usage.
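Usage-proportional compensation can be illustrated with a small integer split. The summary does not give Genie's payout formula, so the rounding rule below (leftover units go to the heaviest contributors, ties broken by name) and the function name `split_reward` are assumptions made for this sketch; usage counts are assumed to be non-negative integers.

```python
def split_reward(total_tokens: int, usage: dict) -> dict:
    """Split an integer token amount in proportion to per-owner usage counts."""
    total_usage = sum(usage.values())
    if total_usage <= 0:
        raise ValueError("total usage must be positive")
    # Floor each share so the sum never exceeds the amount available.
    payouts = {owner: total_tokens * n // total_usage for owner, n in usage.items()}
    remainder = total_tokens - sum(payouts.values())
    # Hand out leftover units one at a time, heaviest usage first.
    ranked = sorted(usage, key=lambda owner: (-usage[owner], owner))
    for owner in ranked[:remainder]:
        payouts[owner] += 1
    return payouts
```

Working in integers keeps the payouts summing exactly to the amount distributed, which matters when the unit is an indivisible token.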

In summary, Genie presents a comprehensive, end‑to‑end solution that fuses hardware enclaves, decentralized ledger technology, and open‑source verification to enable secure, transparent sharing of genetic and health data for AI. By protecting data sovereignty, ensuring model integrity, and providing immutable provenance, Genie paves the way for broader collaboration across hospitals, research institutions, and commercial AI developers, while satisfying regulatory and ethical requirements for privacy‑preserving biomedical AI.
