Machine learning shadowgraph for particle size and shape characterization

Conventional image processing of particle shadow images is usually time-consuming and suffers from degraded segmentation when the images contain complex-shaped, clustered particles against varying backgrounds. In this paper, we introduce a robust learning-based method that uses a single convolutional neural network (CNN) to analyze particle shadow images. Our approach employs a two-channel-output U-Net model to generate a binary particle image and a particle centroid image. The binary particle image is subsequently segmented by a marker-controlled watershed approach, with the particle centroid image as the marker image. Assessment of this method on both synthetic and experimental bubble images shows better performance than the state-of-the-art non-machine-learning method. The proposed machine learning shadow image processing approach provides a promising tool for real-time particle image analysis.

💡 Research Summary

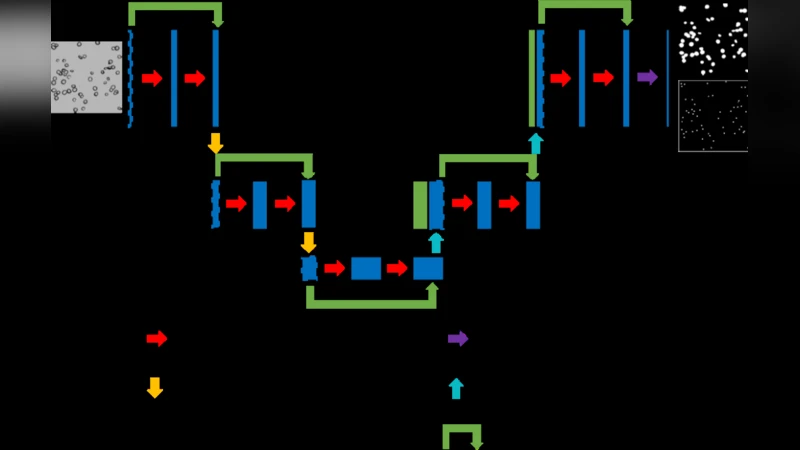

The paper addresses the long‑standing challenge of analyzing particle shadow images, where conventional image‑processing pipelines often fail due to complex backgrounds, irregular particle shapes, and dense clustering. To overcome these limitations, the authors propose a learning‑based framework that relies on a single convolutional neural network (CNN) – specifically a two‑channel‑output U‑Net – to simultaneously predict a binary particle mask and a particle‑centroid heatmap from a raw shadow image.

The binary mask provides a coarse segmentation of all particle pixels, while the centroid heatmap highlights the approximate location of each particle’s center. These two outputs are complementary: the centroid map serves as a set of markers for a marker‑controlled watershed algorithm, which refines the segmentation by splitting touching or overlapping particles based on the watershed basins defined by the markers. This hybrid approach combines the high‑level feature extraction capability of deep learning with the well‑understood geometric properties of watershed segmentation, thereby mitigating over‑segmentation and under‑segmentation problems that plague pure watershed or threshold‑based methods.
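The marker idea can be illustrated with a minimal sketch. It is a simplified stand-in rather than the authors' implementation: every marker is grown outward at equal speed inside the binary mask, which is what a marker-controlled watershed reduces to on a flat elevation map. A real pipeline would more likely call a library routine such as scikit-image's `watershed` on a distance transform or on the image intensity. The function names `label_markers` and `flood_from_markers`, the 0.5 threshold, and the two-disc test image are all assumptions made for illustration.

```python
from collections import deque

import numpy as np

NEIGHBOURS = ((-1, 0), (1, 0), (0, -1), (0, 1))  # 4-connectivity


def label_markers(heatmap, threshold=0.5):
    """Give each 4-connected blob of heatmap > threshold its own integer label."""
    seeds = heatmap > threshold  # threshold value is an assumption
    labels = np.zeros(heatmap.shape, dtype=int)
    count = 0
    for start in zip(*np.nonzero(seeds)):
        if labels[start]:
            continue  # pixel already belongs to an earlier blob
        count += 1
        labels[start] = count
        queue = deque([start])
        while queue:
            r, c = queue.popleft()
            for dr, dc in NEIGHBOURS:
                nr, nc = r + dr, c + dc
                if (0 <= nr < seeds.shape[0] and 0 <= nc < seeds.shape[1]
                        and seeds[nr, nc] and not labels[nr, nc]):
                    labels[nr, nc] = count
                    queue.append((nr, nc))
    return labels, count


def flood_from_markers(mask, markers):
    """Grow all markers outward at equal speed, never leaving the mask."""
    labels = np.where(mask, markers, 0)
    queue = deque(zip(*np.nonzero(labels)))
    while queue:
        r, c = queue.popleft()
        for dr, dc in NEIGHBOURS:
            nr, nc = r + dr, c + dc
            if (0 <= nr < mask.shape[0] and 0 <= nc < mask.shape[1]
                    and mask[nr, nc] and not labels[nr, nc]):
                labels[nr, nc] = labels[r, c]  # inherit the label that arrived first
                queue.append((nr, nc))
    return labels


# Two overlapping discs: one connected blob in the mask, two centroid markers.
yy, xx = np.mgrid[0:40, 0:60]
disc_a = (yy - 20) ** 2 + (xx - 20) ** 2 <= 12 ** 2
disc_b = (yy - 20) ** 2 + (xx - 38) ** 2 <= 12 ** 2
mask = disc_a | disc_b
heatmap = np.zeros(mask.shape)
heatmap[19:22, 19:22] = 1.0  # synthetic centroid response for disc_a
heatmap[19:22, 37:40] = 1.0  # synthetic centroid response for disc_b

markers, n_markers = label_markers(heatmap)
instances = flood_from_markers(mask, markers)
```

Plain connected-component labeling of `mask` would report a single object here, whereas `instances` carries one label per marker, which is the separation of touching particles that the centroid channel is meant to provide.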

Training data are generated in two ways. First, a large synthetic dataset of bubble images is created by procedurally varying particle size, shape, overlap degree, illumination, and background noise. Second, a smaller set of experimentally captured bubble images is used for fine‑tuning, ensuring that the network learns realistic imaging artefacts. Data augmentation (random rotations, scaling, intensity jitter) further improves robustness. The loss function is a weighted sum of binary cross‑entropy for the mask and mean‑squared error for the centroid heatmap, encouraging both outputs to converge simultaneously. The network is trained with the Adam optimizer, an initial learning rate of 1e‑4, and early‑stopping based on validation loss.
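As a rough illustration of the combined objective described above, the following NumPy sketch evaluates a weighted sum of binary cross-entropy and mean-squared error. The weights `w_mask` and `w_heat` are placeholders, since the summary does not report their values, and the function name `combined_loss` is likewise an assumption. An actual training loop would use the equivalent loss in a deep-learning framework so that gradients are available.

```python
import numpy as np


def combined_loss(mask_prob, mask_true, heat_pred, heat_true,
                  w_mask=1.0, w_heat=1.0, eps=1e-7):
    """Weighted sum of binary cross-entropy (mask) and MSE (centroid heatmap).

    w_mask and w_heat are hypothetical weights, not values from the paper.
    """
    p = np.clip(mask_prob, eps, 1.0 - eps)  # keep log() finite
    bce = -np.mean(mask_true * np.log(p) + (1.0 - mask_true) * np.log(1.0 - p))
    mse = np.mean((heat_pred - heat_true) ** 2)
    return w_mask * bce + w_heat * mse


# Toy inputs: an uninformative mask prediction and a heatmap that is off by 0.1.
mask_true = np.array([[1.0, 0.0], [0.0, 1.0]])
mask_prob = np.full((2, 2), 0.5)
heat_true = np.zeros((2, 2))
heat_pred = np.full((2, 2), 0.1)

loss = combined_loss(mask_prob, mask_true, heat_pred, heat_true)
```

With these toy inputs the cross-entropy term equals ln 2 and the squared-error term equals 0.01, so the two contributions can be checked by hand.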

During inference, a raw shadow image is fed through the trained U‑Net, producing the two channels in under 30 ms on an NVIDIA RTX 3080 GPU. The binary mask undergoes connected‑component labeling, and the centroid heatmap is thresholded to extract marker points. The marker‑controlled watershed then produces a final instance‑level segmentation. From each segmented region, standard morphological descriptors (area, major/minor axis lengths, aspect ratio, circularity) are computed, enabling quantitative particle‑size and shape analysis.
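The descriptor step can be sketched as follows for a single segmented region. This is one common moment-based convention, assumed here for illustration, and not necessarily the one the authors use: axis lengths are taken as four times the square root of the eigenvalues of the pixel-coordinate covariance, which recovers the full axes of an ideal ellipse. Circularity is left out because it needs a perimeter estimate, and the summary does not say how the perimeter is measured. The function name `shape_descriptors` is hypothetical.

```python
import numpy as np


def shape_descriptors(region):
    """Area, equivalent diameter, axis lengths and aspect ratio of a boolean region."""
    rows, cols = np.nonzero(region)
    area = rows.size  # pixel count
    # Second central moments of the pixel coordinates (population covariance).
    cov = np.cov(np.stack([rows, cols]), bias=True)
    eigvals = np.sort(np.linalg.eigvalsh(cov))[::-1]  # descending order
    eigvals = np.maximum(eigvals, 0.0)  # guard against tiny negative round-off
    major, minor = 4.0 * np.sqrt(eigvals)
    return {
        "area": area,
        "equivalent_diameter": float(np.sqrt(4.0 * area / np.pi)),
        "major_axis": float(major),
        "minor_axis": float(minor),
        "aspect_ratio": float(major / minor) if minor > 0 else float("inf"),
    }


# Synthetic regions: a disc of radius 10 and an ellipse with semi-axes 16 and 8.
yy, xx = np.mgrid[0:41, 0:41]
disc = (yy - 20) ** 2 + (xx - 20) ** 2 <= 10 ** 2
yy2, xx2 = np.mgrid[0:21, 0:37]
ellipse = ((xx2 - 18) / 16.0) ** 2 + ((yy2 - 10) / 8.0) ** 2 <= 1.0

disc_stats = shape_descriptors(disc)
ellipse_stats = shape_descriptors(ellipse)
```

In a full pipeline the function would be applied to `labels == k` for each instance label `k` produced by the watershed, and the pixel quantities would be scaled by the optical calibration to obtain physical sizes.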

The authors evaluate the method on both synthetic test sets and real experimental bubble images. Quantitative metrics include Intersection‑over‑Union (IoU), F1‑score, centroid localization error, and size/shape estimation error. Compared with the state‑of‑the‑art non‑machine‑learning pipeline (global thresholding + distance‑transform watershed), the proposed approach raises IoU from ~0.85 to ~0.94 and F1‑score from ~0.81 to ~0.93, and yields markedly lower centroid errors, especially in densely clustered scenarios. Visual inspection confirms that the hybrid method correctly separates touching bubbles that the baseline either merges or splits incorrectly.
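For reference, the two overlap metrics can be computed from a predicted and a ground-truth mask as in the sketch below. It uses the pixel-wise definitions, which is an assumption: the summary does not state whether the reported scores are pixel-wise or per particle. The function name `iou_and_f1` and the toy masks are illustrative only.

```python
import numpy as np


def iou_and_f1(pred, truth):
    """Pixel-wise Intersection-over-Union and F1-score of two binary masks."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    tp = np.count_nonzero(pred & truth)   # particle pixels found
    fp = np.count_nonzero(pred & ~truth)  # background marked as particle
    fn = np.count_nonzero(~pred & truth)  # particle pixels missed
    if tp + fp + fn == 0:
        return 1.0, 1.0  # both masks empty: treat as perfect agreement
    iou = tp / (tp + fp + fn)
    f1 = 2 * tp / (2 * tp + fp + fn)
    return iou, f1


truth = [[1, 1, 0],
         [1, 1, 0]]
pred = [[1, 1, 1],
        [1, 0, 0]]

iou, f1 = iou_and_f1(pred, truth)  # tp=3, fp=1, fn=1
```

Under these pixel-wise definitions F1 = 2·IoU / (1 + IoU), so F1 can never be smaller than IoU. The baseline figures quoted above (IoU ~0.85, F1 ~0.81) therefore suggest that the F1-score was computed on a different basis, for example per detected particle, although the summary does not say so.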

Processing speed is another major advantage: the end‑to‑end pipeline runs at >30 fps, satisfying real‑time requirements for high‑speed imaging systems. The authors acknowledge limitations: performance degrades when applied to particle types or imaging conditions that differ substantially from the training data (e.g., metallic particles, highly specular surfaces). They suggest domain‑adaptation or transfer‑learning strategies to address this issue. Additionally, the current implementation is limited to 2‑D images; extending the framework to 3‑D volumetric data (e.g., CT or confocal stacks) would require architectural modifications and 3‑D watershed variants.

In conclusion, the paper demonstrates that a single CNN producing both a segmentation mask and centroid markers, when coupled with a classic watershed post‑processor, yields a robust, accurate, and fast solution for particle shadowgraph analysis. This hybrid methodology bridges the gap between data‑driven deep learning and deterministic geometric segmentation, offering a promising tool for real‑time monitoring in fields such as fluid dynamics, aerosol research, and industrial particle diagnostics.