NLP-assisted software testing: A systematic mapping of the literature

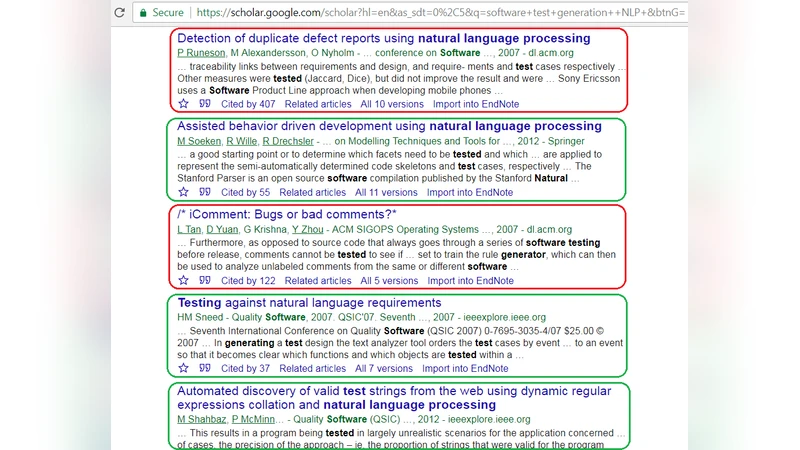

Context: To reduce the manual effort of extracting test cases from natural-language requirements, many approaches based on Natural Language Processing (NLP) have been proposed in the literature. Given the large number of approaches in this area, and since many practitioners are eager to use such techniques, it is important to synthesize and provide an overview of the state of the art.

Objective: Our objective is to summarize the state of the art in NLP-assisted software testing, which could help practitioners adopt those NLP-based techniques and give researchers an overview of the research landscape.

Method: To address this need, we conducted a survey in the form of a systematic literature mapping (classification). After compiling an initial pool of 95 papers, we conducted a systematic voting, and our final pool included 67 technical papers.

Results: This review paper provides an overview of the contribution types presented in the papers, the types of NLP approaches used to assist software testing, the types of input requirements, and a review of tool support in this area. Some key results are: (1) only four of the 38 tools (11%) presented in the papers are available for download; (2) a large share of the papers (30 of 67) provide only shallow exposure to the NLP aspects (almost no details).

Conclusion: This paper would benefit both practitioners and researchers by serving as an “index” to the body of knowledge in this area. The results could help practitioners use the existing NLP-based techniques, which in turn reduces the cost of test-case design and the human resources spent on test activities. After we shared this review with some of our industrial collaborators, initial insights indicate that it can indeed be useful to practitioners.

💡 Research Summary

The paper presents a systematic mapping study of research that applies Natural Language Processing (NLP) techniques to software testing, aiming to reduce the manual effort required to derive test cases from natural‑language requirements. The authors collected an initial set of 95 papers published between 2001 and 2017, applied inclusion/exclusion criteria and a voting process, and arrived at a final pool of 67 peer‑reviewed technical papers. Using the systematic literature mapping (SLM) methodology, they defined four research questions (RQs) to classify the literature along four dimensions: (1) contribution type (e.g., requirements analysis, test‑case generation, test‑case prioritization), (2) NLP approaches employed (syntactic parsing, morphological analysis, semantic role labeling, named‑entity recognition, etc.), (3) input requirement formats (free‑text, semi‑structured formats such as HTML or XML), and (4) tool support (number of tools, availability, underlying NLP libraries).

Key findings include: only 4 of the 38 tools mentioned in the papers (≈11%) are publicly available for download, which severely limits reproducibility and industrial adoption. A substantial portion of the literature (30 of 67 papers, ≈45%) provides only a “shallow exposure” to the NLP components, offering little detail about algorithms, pipelines, or parameter settings. The majority of approaches rely on syntactic and morphological analysis; more sophisticated semantic techniques (e.g., semantic role labeling, named‑entity recognition) appear in a minority of studies. Most input requirements are unstructured natural language, with relatively few works handling semi‑structured formats. Language support is heavily skewed toward English, with limited exploration of multilingual or domain‑specific corpora.

Empirical evaluations are sparse: only a handful of studies report quantitative metrics such as precision, recall, or coverage of generated test cases, and most evaluations are case‑study based rather than benchmarked against a common dataset. Consequently, the comparative effectiveness of different NLP‑assisted testing techniques remains unclear.
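As a rough illustration of the quantitative metrics mentioned above, the following minimal sketch computes precision and recall for a set of generated test cases against a manually written reference set. The function name `precision_recall` and the test-case identifiers are hypothetical, chosen only for this example; they do not come from the mapped papers, and individual studies may define a "matching" test case differently.

```python
def precision_recall(generated, reference):
    """Compare generated test cases with a reference set by identifier.

    Assumption: two test cases match when their identifiers are equal.
    """
    generated, reference = set(generated), set(reference)
    true_positives = len(generated & reference)
    # Precision: share of generated test cases that are in the reference set.
    precision = true_positives / len(generated) if generated else 0.0
    # Recall: share of reference test cases that the tool generated.
    recall = true_positives / len(reference) if reference else 0.0
    return precision, recall


# Hypothetical identifiers, for illustration only.
generated = {"login_valid", "login_invalid_password", "logout"}
reference = {
    "login_valid",
    "login_invalid_password",
    "login_locked_account",
    "password_reset",
}

p, r = precision_recall(generated, reference)
print(f"precision={p:.2f} recall={r:.2f}")
```

Reporting both numbers on a shared, publicly available dataset is what would make results from different techniques comparable.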

The authors argue that their mapping serves as an “index” for practitioners seeking existing NLP‑based testing solutions and for researchers needing a comprehensive overview of the field. They identify several research gaps: (1) the need for open‑source, downloadable tools; (2) detailed reporting of NLP pipelines to enable replication; (3) expansion of multilingual support and domain‑specific models; (4) establishment of standardized evaluation frameworks and publicly available benchmark datasets. Addressing these gaps would accelerate the transfer of NLP‑assisted testing methods from research prototypes to industrial practice, ultimately reducing test‑case design costs and human effort in software quality assurance.
