The Recognition Of Persian Phonemes Using PPNet

In this paper, a novel approach is proposed for the recognition of Persian phonemes in the Persian Consonant-Vowel Combination (PCVC) speech dataset. Deep neural networks now play a crucial role in classification tasks. Deep learning techniques show outstanding performance on many classification tasks, such as image and document classification, and sometimes even outperform humans. However, the best results in speech recognition still fall short of the human recognition rate. The reason automatic speech recognition (ASR) systems do not yet match human speech recognition depends mostly on the features of the data fed to the deep neural networks. Methods: First, the sound samples are cut into 50 ms segments so that the phoneme sounds are extracted exactly. The phonemes are then divided into 30 groups: 23 consonants, 6 vowels, and a silence phoneme. The short-time Fourier transform (STFT) is computed on the segments, and the results are given to the PPNet classifier (a new deep convolutional neural network architecture). Results: A total average accuracy of 75.87% is reached, which is the best result reported so far among algorithms evaluated on separated Persian phonemes (as in the PCVC speech dataset). Conclusion: This method can be used not only for recognizing mono-phonemes but also as an input for selecting the best words in speech transcription.

💡 Research Summary

The paper “The Recognition Of Persian Phonemes Using PPNet” presents a new deep‑learning pipeline for phoneme‑level speech recognition on the Persian Consonant‑Vowel Combination (PCVC) dataset. The dataset contains 23 Persian consonants, 6 vowels, and a silence class, yielding 30 phoneme categories. Each speaker contributes 2‑second recordings sampled at 48 kHz, with roughly half of each file containing speech and the remainder silence.

Data preprocessing begins by detecting silence (near‑zero amplitude) and vowels (amplitude > 0.25 × maximum) to segment each 2‑second file into 50 ms windows: the 50 ms preceding a vowel is taken as the consonant, and the vowel itself is also 50 ms long. Each window is transformed using a short‑time Fourier transform (STFT) with a 5 ms analysis window, producing 150 frequency bins per frame and 100 time frames, resulting in a 100 × 150 spectrogram per phoneme. The authors argue that STFT outperforms MFCC and raw waveforms in their experiments.
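
The segmentation and STFT steps described above can be sketched roughly as follows. This is a minimal NumPy illustration, not the authors' code. The function names, the Hann window, the evenly spaced frame starts, and the zero‑padded FFT length of 298 (chosen only because it yields 150 bins) are all assumptions, since the summary states neither the hop size nor the FFT length.

```python
import numpy as np

SR = 48_000  # sampling rate stated in the summary


def segment_cv(signal, sr=SR, ratio=0.25, seg_ms=50):
    """Return (onset, consonant, vowel) using the amplitude rule from the text.

    The vowel onset is taken as the first sample whose magnitude exceeds
    ratio * max(|signal|); the consonant is the seg_ms before that onset.
    """
    seg = int(sr * seg_ms / 1000)
    threshold = ratio * np.max(np.abs(signal))
    above = np.flatnonzero(np.abs(signal) > threshold)
    if above.size == 0:
        raise ValueError("no sample exceeds the vowel threshold")
    onset = int(above[0])
    if onset < seg or onset + seg > len(signal):
        raise ValueError("not enough samples around the vowel onset")
    consonant = signal[onset - seg:onset]
    vowel = signal[onset:onset + seg]
    return onset, consonant, vowel


def stft_magnitude(segment, sr=SR, win_ms=5, n_frames=100, n_fft=298):
    """Magnitude STFT with a 5 ms window, shaped (n_frames, n_fft // 2 + 1).

    ASSUMPTION: frame starts are spread evenly over the segment and each
    frame is zero-padded to n_fft samples before the FFT.
    """
    win = int(sr * win_ms / 1000)  # 240 samples at 48 kHz
    starts = np.linspace(0, len(segment) - win, n_frames).round().astype(int)
    window = np.hanning(win)
    frames = np.stack([segment[s:s + win] * window for s in starts])
    return np.abs(np.fft.rfft(frames, n=n_fft, axis=1))
```

With these assumed settings, a 50 ms segment (2400 samples) maps to a 100 × 150 array, which matches the input shape quoted in the summary. Other hop and FFT choices would give different shapes.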

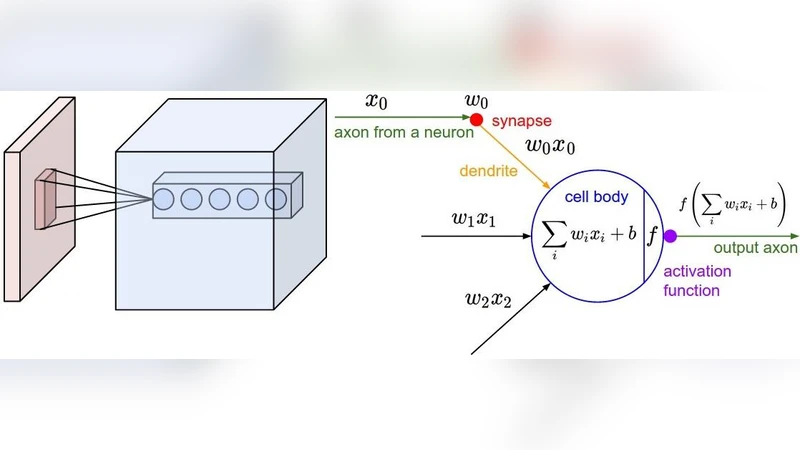

The extracted spectrograms are fed to a custom convolutional neural network called PPNet. PPNet consists of six convolutional layers with kernel size 3 × 3 and stride 1. The first two layers have 32 filters, the next two have 64, and the final two have 128. After the first convolution a batch‑normalization layer is applied; dropout layers are inserted after each convolution to mitigate over‑fitting, and three max‑pooling layers reduce spatial dimensions. The network ends with a fully‑connected softmax layer over the 30 phoneme classes. Training uses a batch size of 16, 50 epochs, and an input shape of 100 × 150; however, details such as optimizer, learning‑rate schedule, and regularization parameters are omitted.
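
To make the layer description concrete, the sketch below traces feature‑map shapes and parameter counts through a network of this form. It is an illustration under stated assumptions, not the published architecture. The summary does not say where the three max‑pooling layers sit, what padding is used, or how many input channels there are. Here pooling is assumed to be 2 × 2 after every second convolution, padding is assumed to be "same", and the input is assumed to have one channel. `trace_ppnet` and `conv_params` are hypothetical helper names.

```python
def conv_params(in_ch, out_ch, k=3):
    """Weights plus biases of a k x k convolution."""
    return k * k * in_ch * out_ch + out_ch


def trace_ppnet(height=100, width=150, in_ch=1, n_classes=30):
    """Trace shapes and parameter counts for the assumed PPNet-like layout."""
    filters = [32, 32, 64, 64, 128, 128]  # filter counts from the summary
    h, w, ch = height, width, in_ch
    conv_total = 0
    for i, out_ch in enumerate(filters):
        # 3x3 conv, stride 1, 'same' padding (ASSUMED): spatial size unchanged
        conv_total += conv_params(ch, out_ch)
        ch = out_ch
        # ASSUMPTION: 2x2 max-pooling after every second convolution
        if i % 2 == 1:
            h, w = h // 2, w // 2
    # Batch norm after the first conv: one scale and one shift per channel
    bn = 2 * filters[0]
    flat = h * w * ch
    dense = flat * n_classes + n_classes  # fully connected softmax layer
    return {
        "feature_map": (h, w, ch),
        "flattened": flat,
        "conv_params": conv_total,
        "batchnorm_params": bn,
        "dense_params": dense,
        "total_params": conv_total + bn + dense,
    }


if __name__ == "__main__":
    for name, value in trace_ppnet().items():
        print(f"{name}: {value}")
```

Under these assumptions the final feature map is 12 × 18 × 128, and most of the trainable parameters sit in the last fully connected layer. Dropout adds no parameters.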

For evaluation, 85 % of the samples are randomly selected for training and the remaining 15 % for testing, without speaker‑independent splitting. The reported overall accuracy is 75.87 % and the macro‑averaged F1‑score is 75.78 %. Class‑wise results show perfect scores for some vowels and the silence class, while several consonants achieve lower precision/recall (e.g., /tʃ/ and /dʒ/ around 0.5–0.7). The authors claim this performance surpasses previous approaches on the same dataset, such as MFCC‑based ANN models.
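
The evaluation protocol above, a random 85/15 split scored by accuracy and macro‑averaged F1, can be illustrated with plain Python. This is a sketch for clarity only. The function names and the seed are assumptions, and the paper's own tooling is not described in the summary.

```python
import random
from collections import Counter


def random_split(n_samples, train_frac=0.85, seed=0):
    """Shuffle sample indices and split them into train and test lists."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    cut = round(train_frac * n_samples)
    return idx[:cut], idx[cut:]


def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label equals the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class gains a false positive
            fn[t] += 1  # true class gains a false negative
    classes = sorted(set(y_true) | set(y_pred))
    scores = []
    for c in classes:
        denom = 2 * tp[c] + fp[c] + fn[c]
        scores.append(2 * tp[c] / denom if denom else 0.0)
    return sum(scores) / len(scores)
```

Because macro F1 weights every class equally, weak consonant classes pull it down as much as strong vowel classes lift it, regardless of how many samples each class has.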

Critical analysis reveals several strengths and weaknesses. Strengths include the use of a publicly available, phoneme‑level annotated Persian dataset and a clear description of the segmentation and STFT preprocessing pipeline. The architecture, while not novel compared to standard VGG‑style CNNs, is adequately sized for the modest dataset and demonstrates that a simple CNN can achieve competitive results.

Weaknesses are more pronounced. The random split mixes utterances from the same speakers across train and test sets, preventing assessment of speaker‑independent generalization—a crucial factor for real‑world ASR systems. The PPNet architecture is described only at a high level; no ablation studies or comparisons with alternative architectures (e.g., residual networks, attention mechanisms) are provided, making it difficult to gauge the contribution of each component. The choice of STFT parameters (5 ms window, 150 bins) is justified only by “experience” without systematic evaluation against MFCC, log‑mel, or raw waveform features. Moreover, the dataset is relatively small (12 speakers, 132 utterances per speaker), raising concerns about overfitting despite the use of dropout. No data‑augmentation strategies (time‑stretching, pitch shifting, noise addition) are employed, limiting robustness to acoustic variability. Finally, the paper lacks statistical significance testing and does not report confidence intervals for the reported accuracy.
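
As an illustration of the missing uncertainty estimate, a Wilson score interval can be attached to a reported accuracy once the number of test samples is known. The sketch below is generic. The summary does not state the test‑set size, so the value of `n` in the usage note is a hypothetical placeholder, and `wilson_interval` is an illustrative helper name.

```python
import math


def wilson_interval(accuracy, n, z=1.96):
    """Wilson score interval for a proportion; z=1.96 gives about 95 %."""
    if n <= 0:
        raise ValueError("n must be positive")
    denom = 1 + z * z / n
    center = (accuracy + z * z / (2 * n)) / denom
    half = z * math.sqrt(accuracy * (1 - accuracy) / n
                         + z * z / (4 * n * n)) / denom
    return center - half, center + half
```

For example, `wilson_interval(0.7587, 500)` would give the interval for a hypothetical test set of 500 samples. The interval narrows as `n` grows, which is why the test‑set size matters when comparing against prior results.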

Future work could address these issues by: (1) adopting speaker‑independent cross‑validation (e.g., leave‑one‑speaker‑out) to evaluate true generalization; (2) expanding the dataset or augmenting it with synthetic variations to improve robustness; (3) experimenting with richer feature sets (MFCC, log‑mel, raw waveforms) and multimodal fusion; (4) incorporating modern architectural advances such as residual blocks, depthwise separable convolutions, or self‑attention to capture longer temporal dependencies; (5) providing detailed hyper‑parameter studies, optimizer choices, and learning‑rate schedules; and (6) reporting statistical analyses (e.g., paired t‑tests) to substantiate claims of superiority.
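
Suggestion (1) can be sketched as a small generator of leave‑one‑speaker‑out folds. This assumes each sample carries a speaker identifier. The name `leave_one_speaker_out` and the speaker labels in the usage note are hypothetical.

```python
def leave_one_speaker_out(speaker_ids):
    """Yield (held_out, train_idx, test_idx), one fold per distinct speaker.

    All samples from the held-out speaker go to the test fold, so no
    speaker appears in both the training and the test indices.
    """
    for held_out in sorted(set(speaker_ids)):
        train = [i for i, s in enumerate(speaker_ids) if s != held_out]
        test = [i for i, s in enumerate(speaker_ids) if s == held_out]
        yield held_out, train, test
```

Calling `list(leave_one_speaker_out(["s1", "s1", "s2", "s3"]))` yields three folds, one per speaker. Averaging the metric over folds then estimates performance on unseen speakers, which a random sample‑level split cannot do.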

In summary, the paper introduces a straightforward STFT‑CNN pipeline (PPNet) that achieves 75.87 % phoneme recognition accuracy on the PCVC Persian dataset. While the results are promising relative to prior work, the methodological limitations, particularly the lack of speaker‑independent evaluation and the limited architectural innovation, temper the claim of state‑of‑the‑art performance. With the suggested enhancements, the approach could become a solid baseline for Persian phoneme recognition and potentially contribute to larger‑scale Persian ASR systems.
