Improved Attention Models for Memory Augmented Neural Network Adaptive Controllers

We introduced a {\it working memory} augmented adaptive controller in our recent work. The controller uses attention to read from and write to the working memory. Attention allows the controller to read specific information that is relevant and updat…

Authors: Deepan Muthirayan, Scott Nivison, Pramod P. Khargonekar

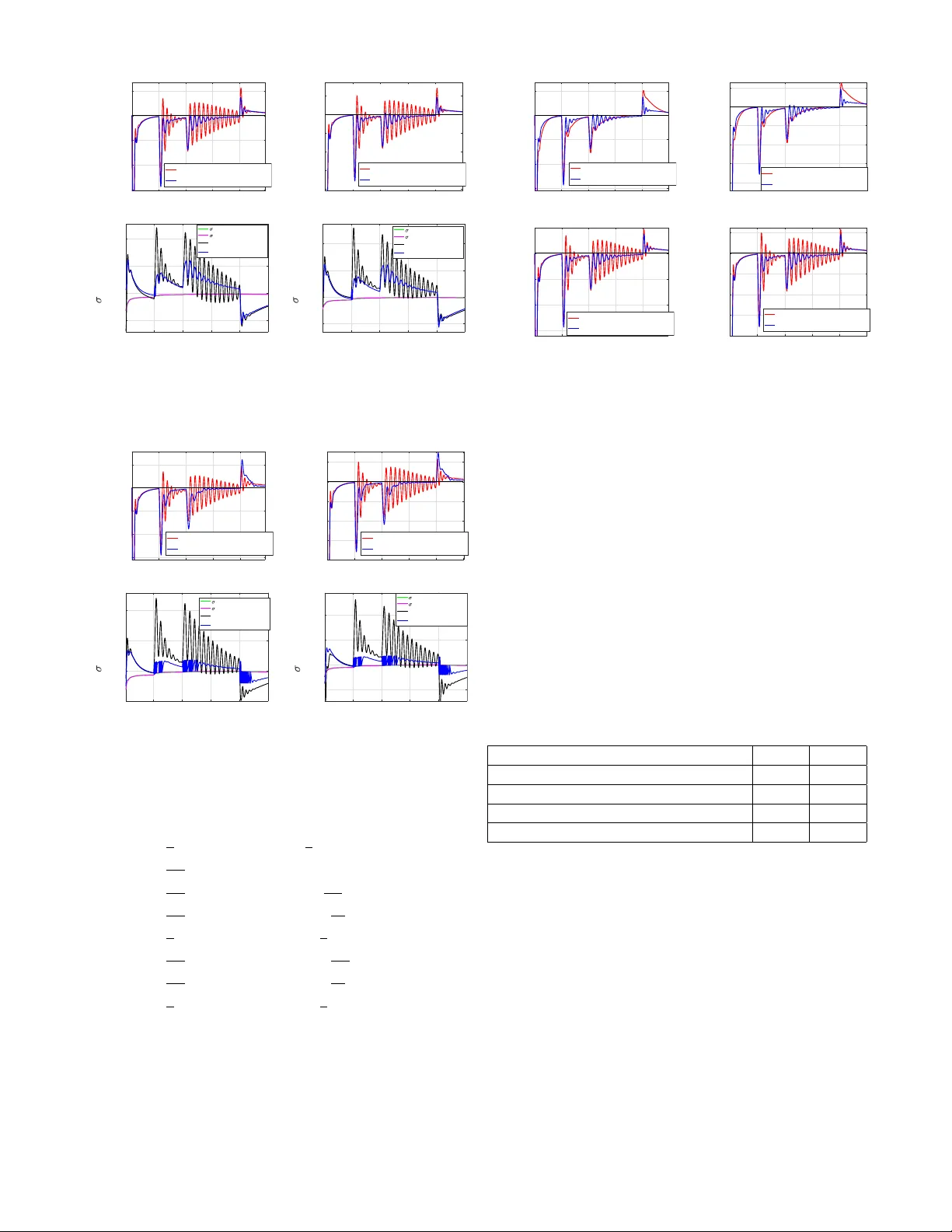

Abstract: We introduced a working memory augmented adaptive controller in our recent work. The controller uses attention to read from and write to the working memory. Attention allows the controller to read specific information that is relevant and update its working memory with information based on its relevance, similar to how humans pick relevant information from the enormous amount of information that is received through the various senses. The retrieved information is used to modify the final control input computed by the controller. We showed that this modification speeds up learning.

In the above work, we used a soft-attention mechanism for the adaptive controller. Controllers that use soft attention update and read information from all memory locations at all times, to an extent determined by the relevance of each location. For the same reason, the information stored in the memory can be lost. In contrast, hard attention updates and reads from only one location at any point of time, which allows the memory to retain the information stored in other locations. The downside is that the controller can fail to shift attention when the information in the current location becomes less relevant.

We propose an attention mechanism that comprises (i) a hard attention mechanism and (ii) an attention reallocation mechanism. The attention reallocation enables the controller to reallocate attention to a different location when the relevance of the location it is reading from diminishes. The reallocation also ensures that the information stored in the memory before the shift in attention is retained, which can be lost in both soft and hard attention mechanisms.
Through detailed simulations of various scenarios for two-link robot arm systems, we illustrate the effectiveness of the proposed attention mechanism.

I. INTRODUCTION

Even though there is remarkable progress in machine learning, robotics and autonomous systems, humans still outperform intelligent machines in a wide variety of tasks and situations [1]. Central to the functioning of the human cognitive system are several functions like perception, memory, attention, reasoning, problem solving, thinking and creativity. Thus, it is only natural to ask: can functions inspired by human cognition improve control algorithms? With this inspiration from neurocognitive science, we recently introduced the concept of memory augmented neural adaptive controllers in [2], [3]. In this paper, we focus on the notion of attention in this setting.

Deepan Muthirayan and Pramod P. Khargonekar are with the Department of Electrical Engineering and Computer Sciences, University of California, Irvine, CA 92697. Email: {dmuthira, pramod.khargonekar}@uci.edu. Scott Nivison is with the Munitions Directorate, Air Force Research Laboratory, Eglin AFB, FL, USA. Email: scott.nivison@us.af.mil. Supported in part by the National Science Foundation under Grant Number ECCS-1839429.

Attention is the state of focused awareness on some aspects of the environment. Attention allows humans to focus on the sensory information that is relevant and essential at a particular point of time. This is especially important considering that, at any moment of time, a human takes in an enormous amount of information from the visual, auditory, olfactory, tactile and taste senses. Human-inspired attention mechanisms have been instrumental in machine learning applications such as machine translation [4].
Attention based models have also improved deep learning models like neural networks [5] and reinforcement learning (RL) [6], and are the state of the art in meta learning [7] and generative adversarial networks [8]. We believe that the idea of attention holds tremendous potential for learning in cyber-physical systems.

Inspired by human attention and the recent successes in deep learning, we explore attention models for control algorithms in a specific context. We consider the working memory augmented NN adaptive controllers proposed in our recent work [2], [3]. Here, the controller stores and retrieves specific information to modify its final control input. We showed that this modification speeds up the response of the controller to abrupt changes. Attention is relevant here because the main controller uses an attention mechanism to read from and write to the working memory. The natural question to ask is: what is a good attention mechanism for such an application?

Our previous work [2] used a specific form of attention called soft attention, where the central controller reads from and writes to all locations in the working memory. Here, the extent to which the memory contents are erased and rewritten, or contribute to the final read output, depends on the relevance of the content at a particular location in the memory. While soft attention mechanisms can be effective because they retrieve relevant information from all locations, the same feature can lead to loss of information.

In contrast, hard attention mechanisms [9] read from and write to only one location at any point of time. A controller that uses hard attention does not lose the information stored in other locations, since at any point of time only one location is read from or updated. The disadvantage is that the controller can fail to shift attention when the current information becomes less relevant. In addition, this information can be lost due to continual modifications.
In this paper, we propose an attention mechanism that is a combination of hard attention and an attention reallocation mechanism. The attention reallocation mechanism allows the controller to shift attention to a different location when the current location becomes less relevant. This also allows the memory to retain the information stored in it before the shift was forced by the reallocation mechanism. Thus, the mechanism we propose can overcome the limitations of prior hard and soft attention mechanisms.

The setting we consider is a well-studied NN adaptive control setting. This setting comprises an unknown nonlinear function that is to be compensated. Typically, the neural network is used to directly compensate the unknown function. The literature on NN based adaptive control is extensive [10]–[17]. In the setting here, we consider nonlinear uncertainties that can vary with time, including variations that are abrupt or sudden. The objective for the controller is to adapt quickly even after such abrupt changes. We make the assumptions that (i) the abrupt changes are not large and (ii) the system state is observable.

In Section II, we revisit the Memory Augmented Neural Network (MANN) adaptive controller proposed in our recent works [2], [3]. In Section III, we discuss the working memory interface and the proposed attention mechanism. Finally, in Section IV, we provide a detailed discussion substantiating the improvements in learning obtained by using the attention mechanisms proposed in this paper. We do not include the stability proof for the controller described here. The proof outlined in [2] can be trivially extended to the closed loop system discussed here.

II. CONTROL ARCHITECTURE

In this section, we briefly introduce the memory augmented control architecture for adaptive control of continuous time systems proposed in our earlier work [2].
The central inspiration behind this idea is the working memory in the human memory system.

Proposed Architecture: The general architecture is depicted in Fig. 1. The proposed architecture augments a general dynamic feedback controller with an external working memory. In our previous work, we specialized it to the specific controller that augments a neural network with an external working memory. In this paper, we focus on the attention models for the working memory. We propose several attention mechanisms for the controller. The novelty of our proposal lies in how the controller can reallocate its attention under certain circumstances.

Fig. 1: Left: general controller augmented with working memory. Right: memory augmented neural adaptive controller. $f$ is the uncertainty.

Specialization to NN Adaptive Control: In neural adaptive control, the control input $u$ to the plant is computed based on state feedback, error feedback and the NN output. The control input is a combination of the base controller $u_{bl}$, which is problem specific, the NN output $u_{ad}$ and a "robustifying term" $v$ [12], [18]. The final control input is given by

$$u = u_{bl} + u_{ad} + v. \tag{1}$$

Since it is assumed that the system state is observable, the output of the plant is the system state. The control term $u_{bl}$ is computed based on the output of an error evaluator, for example, the error between the system output and the desired trajectory in a trajectory tracking problem. The control term $u_{ad}$ is the final NN output. This output is typically used to compensate an unknown nonlinear function in the system dynamics. The robustifying term is introduced to compensate the higher order terms that are left out in the compensation of the unknown function by the term $u_{ad}$.
In the memory augmented NN adaptive control we proposed in [2], the working memory acts as a complementary memory system to the NN. The output of the NN block, $u_{ad}$, is computed by combining the information read from the external working memory and the NN. The exact form of this term, and how it is modified based on the contents of the working memory, is described in Section III. We showed in [2] that this modification speeds up the response of the closed loop system to abrupt changes.

Notation: The system state is denoted by $x \in \mathbb{R}^n$. The estimated NN weight matrices are given by $\hat{W}$, $\hat{V}$, $\hat{b}_w$ and $\hat{b}_v$. We denote the NN used to approximate the function $f(x)$ by $\hat{f}$, which is given by $\hat{f} = \hat{W}^T \sigma(\hat{V}^T x + \hat{b}_v) + \hat{b}_w$. We introduce a function called softmax($\cdot$), which takes as input a vector $a$ and outputs a vector of the same length. The $i$th component of softmax($\cdot$) is given by

$$\mathrm{softmax}(a)_i = \frac{\exp(a_i)}{\sum_j \exp(a_j)}. \tag{2}$$

We introduce two other vector functions which appear in the NN update laws. We denote these functions by $\hat{\sigma}$ and $\hat{\sigma}'$, which are defined by

$$\hat{\sigma} = \begin{bmatrix} \sigma(\hat{V}^T x + \hat{b}_v) \\ 1 \end{bmatrix}, \qquad \hat{\sigma}' = \begin{bmatrix} \mathrm{diag}\big(\sigma(\hat{V}^T x + \hat{b}_v) \odot (1 - \sigma(\hat{V}^T x + \hat{b}_v))\big) \\ 0^T \end{bmatrix}, \tag{3}$$

where $0$ is a zero vector of dimension equal to the number of hidden layer neurons.

III. WORKING MEMORY AND ATTENTION

In this section, we first summarize the two working memory operations, i.e., Memory Write and Memory Read, introduced in our previous works [2], [3]. We then provide a detailed discussion on the various attention mechanisms and the attention reallocation mechanism. Finally, we discuss how the Memory Read output is used to modify the final output $u_{ad}$.

A.
Memory Write: The Memory Write equation for the working memory is

$$\dot{h}_i = -w_r(i)\, h_i + c_w\, w_r(i)\, h_w + w_r(i)\, \hat{W} h_e^T, \tag{4}$$

where $h_w$ is the write vector, $w_r(i)$ is the factor that determines whether the memory vector $i$ is eligible for update or not, and $c_w$ is a constant. The write vector $h_w$ corresponds to the new information that can be used to update the contents of the memory. The write vector $h_w$ for this interface is given by

$$h_w = \sigma(\hat{V}^T \tilde{x} + \hat{b}_v). \tag{5}$$

Specifying the write vector to be the current hidden layer value allows the memory to store these values and reuse them later to modify the final NN output.

The factors $w_r(i)$ determine the eligibility of the respective locations for update and are determined by an addressing mechanism. The controller generates a query $q$ which could be, for example, the current state or the current hidden layer output itself. Each memory location $i$ is typically associated with a key $k_i$, which serves as its identifier. The keys $k_i$ are compared with the generated query to determine the factors $w_r(i)$. The factor $w_r(i)$ is set to 1 for the $i$ whose $k_i$ is closest to the query $q$, and the rest are set to zero. The location whose corresponding factor is one becomes eligible and is updated. In machine learning parlance, this type of addressing mechanism is referred to as hard attention [19]. Later, we discuss several attention mechanisms that differ based on how the keys are determined and how the query is specified.

B. Memory Read: The Memory Read output, $h_o$, for the interface is given by

$$h_o = h\, w_r, \tag{6}$$

where the $w_r(i)$ are the same set of factors discussed earlier. Essentially, the Memory Read output is a weighted combination of the respective memory contents, with the weights being the $w_r(i)$, i.e., $h_o = \sum_i w_r(i)\, h_i$. The factors $w_r(i)$ are a natural choice for the weights because they have a direct correspondence to the relevance of the information in their respective locations.

C.
Attention Mechanism

We propose an attention mechanism that comprises (i) a hard attention mechanism and (ii) an attention reallocation mechanism. The attention reallocation mechanism shifts the attention to a different location when the relevance of the information in the current location diminishes. After the shift, the information in the previous location is retained because, in the period before the next shift, the proposed mechanism uses hard attention. Mechanisms that use hard attention without attention reallocation can fail to shift attention and continue to read from and modify this information, eventually losing it. Thus, attention reallocation plays a complementary role to hard attention.

Below, we discuss two different hard attention mechanisms for the proposed controller: (i) where the key is state based and (ii) where the key is representation based. We then discuss the attention reallocation mechanism.

1) State based Hard Attention: In this design, the keys are specified to be a set of points in the state space. The update equations for the keys are specified to be an asymptotically stable first order dynamic system whose state is the key vector $k_i$ and whose input is a sub-vector of the state $x$. Thus, the key update equations are given by

$$\dot{k}_i = -c_k\, w_r(i)\, (k_i - x), \tag{7}$$

where $c_k$ is a design constant. The constant $c_k$ determines the response time of this equation and is set such that the final output of this equation is a good representation of the states visited when its corresponding memory location was updated.

The query needs to be set such that the controller can retrieve values relevant to the current state of the system. Given that the keys are points in the state space, we set the query $q$ to be the current state itself, i.e.,

$$q = x. \tag{8}$$

In the simulation examples that we discuss later, $x$ is chosen to be the position variables in the state vector.
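To make the state based design concrete, the following is a minimal NumPy sketch of one hard attention selection followed by one forward-Euler step of the key update (7) with the query (8). It is an illustration under stated assumptions, not the implementation used in the simulations: the function names, the step size `dt`, the value of `c_k` and the example keys are hypothetical, and the distance is taken in the infinity norm, as in the selection rule (9).

```python
import numpy as np

def hard_attention_weights(query, keys):
    """Return one-hot factors w_r: 1 for the key closest to the query, 0 elsewhere."""
    # Infinity-norm distance between the query and each key (one key per row).
    dists = np.max(np.abs(keys - query), axis=1)
    w_r = np.zeros(len(keys))
    w_r[np.argmin(dists)] = 1.0
    return w_r

def key_step(keys, x, w_r, c_k, dt):
    """One forward-Euler step of the key dynamics k_i' = -c_k * w_r(i) * (k_i - x)."""
    # Only the selected key (w_r(i) = 1) moves toward the current state x.
    return keys - dt * c_k * w_r[:, None] * (keys - x)

# Hypothetical example: two keys in a two-dimensional position space.
keys = np.array([[0.0, 0.0], [1.0, 1.0]])
x = np.array([0.9, 0.8])            # query q = x, as in (8)
w_r = hard_attention_weights(x, keys)
new_keys = key_step(keys, x, w_r, c_k=2.0, dt=0.1)
```

In this example only the second key is selected and drifts toward $x$; the first key, and hence the scenario it identifies, is left untouched.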
A hard attention mechanism selects the location whose key is closest to the query. In this design, this location is determined by

$$i^* = \arg\min_i \| q - k_i \|_\infty. \tag{9}$$

Given $i^*$, the factors $w_r(i)$ are naturally given by

$$w_r(i) = \begin{cases} 1, & \text{if } i = i^*, \\ 0, & \text{otherwise.} \end{cases} \tag{10}$$

2) Representation based Hard Attention: Alternatively, we can specify the keys as a set of points in the hidden layer feature space of the neural network (NN). This is a reasonable approach because the hidden layer features are, by definition, a representation of the NN input space. In this design, the keys for the respective memory locations are given by

$$k_i = h_i. \tag{11}$$

We choose the memory vectors themselves as the keys for the following reasons. Firstly, the memory vectors provide a dynamic set of points in the feature space. Secondly, a key by design should contain information about the memory content and the scenario it represents, and the memory vectors satisfy this criterion. The keys for the respective locations are thus formally given by (11).

The query $q$ should be such that the controller can retrieve information from the memory that is relevant. Assuming that the current scenario and the scenario that the memory contents correspond to are not very different, the location that is likely to hold relevant information is the one whose key is closest to the current hidden layer output. The controller can retrieve this information by specifying the query $q$ to be the current hidden layer output of the NN itself. Hence, we define $q$ as

$$q = \sigma(\hat{V}^T \tilde{x} + \hat{b}_v). \tag{12}$$

In this design, the location that is selected by the hard attention mechanism is given by

$$i^* = \arg\min_i \left\| q - \tfrac{1}{c_w} k_i \right\|_\infty. \tag{13}$$

The factor $1/c_w$ in the above equation accounts for the factor $c_w$ in the Memory Write equation (4), which is also the key update equation in this case. Having defined $i^*$, the factors $w_r(i)$ are given by the same equation (10).

D.
Attention Reallocation

The attention reallocation mechanism that we propose continually checks whether the content of any of the memory locations is within a threshold $\theta$ of the current hidden layer output. If, at some point, the hidden layer output deviates from all of the contents beyond this threshold $\theta$, then the controller reallocates attention to the least relevant location, with its value re-initialized at the current hidden layer value. Such a design is likely to ensure that, at any point of time, there is at least one memory location whose content is 'very' relevant to the current scenario.

Attention reallocation also ensures that the memory does not forget the information stored before the shift in attention. This is because once the shift occurs, the location where the information is stored is neither updated nor read, at least for a certain period, until the attention shifts back to this location. We note that, in both hard and soft attention, this information is likely to be lost.

The decision to shift attention is determined by

$$a_r = \begin{cases} 0, & \text{if } \exists\, i \text{ s.t. } \left\| \sigma(\hat{V}^T \tilde{x} + \hat{b}_v) - \tfrac{1}{c_w} \mu_i \right\|_\infty < \theta, \\ 1, & \text{otherwise,} \end{cases} \tag{14}$$

where $\mu_i$ denotes the content of memory location $i$. When $a_r = 1$, the attention is to be shifted. In this design, the location $i_s$ that the attention is shifted to is given by

$$i_s = \arg\max_i \left\| \sigma(\hat{V}^T \tilde{x} + \hat{b}_v) - \tfrac{1}{c_w} \mu_i \right\|_\infty. \tag{15}$$

The attention mechanism is initialized with the possible range of selections limited to just one location. The mechanism can expand this range to include other locations progressively if doing so could be beneficial. The decision to include new locations is specified by the same decision rule (14). This ensures that the controller starts with a limited set of memory locations and increases this set only when required. This can avoid unwanted jumps in attention, which could be the case with a mechanism that uses only hard attention.

E. NN Output: The learning system (NN) modifies its output using the information $h_o$ retrieved from the memory.
For this memory interface, the NN output is modified by adding the output of the Memory Read to the output of the hidden layer, as given below.

NN Output:

$$u_{ad} = -\hat{W}^T \left( \sigma(\hat{V}^T \tilde{x} + \hat{b}_v) + h_o \right) - \hat{b}_w. \tag{16}$$

We postulated and showed empirical evidence in our earlier work [2] that such a modification improves the speed of learning by inducing the search in a particular direction, which facilitates quick convergence to a neural network that is a good approximation of the unknown function. For a detailed discussion on how memory augmentation improves learning, we refer the reader to [2]. The computed NN output is fed to the controller (Fig. 1), which then computes the final control input by Eq. (1).

NN Update Law: The NN update law, which constitutes the learning algorithm for the proposed architecture, is the regular update law for a two layer NN [18]:

$$\begin{bmatrix} \dot{\hat{W}} \\ \dot{\hat{b}}_w^T \end{bmatrix} = C_w \left( \hat{\sigma} - \hat{\sigma}' (\hat{V}^T \tilde{x} + \hat{b}_v) \right) h_e - \kappa\, C_w \|e\| \begin{bmatrix} \hat{W} \\ \hat{b}_w^T \end{bmatrix}, \qquad \begin{bmatrix} \dot{\hat{V}} \\ \dot{\hat{b}}_v^T \end{bmatrix} = C_v \begin{bmatrix} \tilde{x} \\ 1 \end{bmatrix} h_e \begin{bmatrix} \hat{W} \\ \hat{b}_w^T \end{bmatrix}^T \hat{\sigma}' - \kappa\, C_v \|e\| \begin{bmatrix} \hat{V} \\ \hat{b}_v^T \end{bmatrix}. \tag{17}$$

The variable $h_e$ in (4) is problem specific and depends on the Lyapunov function (without the NN error term).

IV. SIMULATION RESULTS AND DISCUSSION

In this section, we consider several scenarios and show that the controller that uses the proposed attention mechanism results in improved performance. We also provide a detailed discussion on how the attention mechanism proposed here improves the performance. Finally, we provide a brief comparative study of the two dynamic key design approaches.

A. Robot Arm Controller

We briefly discuss the control equations for a typical robot arm controller augmented by an external working memory.
The dynamics of a multi-arm robot system are given by, as in [12],

$$M(x)\ddot{x} + V_m(x, \dot{x})\dot{x} + G(x) + F(\dot{x}) = \tau, \tag{18}$$

where $x \in \mathbb{R}^n$ is the joint variable vector, $M(x)$ is the inertia matrix, $V_m(x, \dot{x})$ is the Coriolis/centripetal matrix, $G(x)$ is the gravity vector, $F(\dot{x})$ is the friction vector and $\tau$ is the torque control input. Let $s(t)$ be the desired trajectory (reference signal); then the error in tracking the desired trajectory is

$$e(t) = s(t) - x(t). \tag{19}$$

Define the filtered tracking error by

$$r = \dot{e} + \Lambda e, \tag{20}$$

where $\Lambda = \Lambda^T > 0$. Then the system equation in terms of the filtered tracking error $r$, as given in [12], is

$$M\dot{r} = -V_m r - \tau + f, \tag{21}$$

where $f = M(\ddot{s} + \Lambda \dot{e}) + V_m(\dot{s} + \Lambda e) + N(x, \dot{x})$ and $N(x, \dot{x}) = G(x) + F(\dot{x})$. Given $f$, it follows that the input to the NN is $\tilde{x} = [e, \dot{e}, s, \dot{s}, \ddot{s}]$. For this system, the control law is

$$\tau = -u = -u_{bl} - u_{ad} - v, \tag{22}$$

where $u_{bl} = -K_v r$, $v = -k_v \left( \|\hat{W}\|_F + \|\hat{V}\|_F + \|\mu\|_F + Z_m \right) r$, and $u_{ad}$ is the NN output as defined in (16). The NN update laws are the same as (17). The vector $h_e = r^T$ in this case.

B. Two Link Robot Arm System

The system matrices for a typical two-link planar robot arm system are given below.

$$M(x) = \begin{bmatrix} \phi + \rho + 2\psi\cos(x_2) & \rho + \psi\cos(x_2) \\ \rho + \psi\cos(x_2) & \rho \end{bmatrix}, \tag{23}$$

$$V_m(x, \dot{x}) = \begin{bmatrix} -\psi \dot{x}_2 \sin(x_2) & -\psi(\dot{x}_1 + \dot{x}_2)\sin(x_2) \\ \psi \dot{x}_1 \sin(x_2) & 0 \end{bmatrix}, \tag{24}$$

$$N(x, \dot{x}) = \begin{bmatrix} \phi\gamma\cos(x_1) + \psi\gamma\cos(x_1 + x_2) \\ \psi\gamma\cos(x_1 + x_2) \end{bmatrix}. \tag{25}$$

In the examples we consider here, the initial masses of the two links are set as $m_1 = 0.8$ kg and $m_2 = 2.3$ kg. Their arm lengths are set as $l_1 = l_2 = 1$ m. The parameters in the matrices, in terms of the link masses and the link lengths, are: $\phi = (m_1 + m_2) l_1^2 = 3.1$ kg m$^2$, $\rho = m_2 l_2^2 = 2.3$ kg m$^2$, $\psi = m_2 l_1 l_2 = 2.3$ kg m$^2$, $\gamma = g/l_1 = 9.8$ s$^{-2}$.

The control algorithm parameters are set as: $c_w = 3/4$, $\theta = 0.2$, $K_v = 20$, $k_v = 10$, $\kappa = 0$, $C_w = C_v = 10$. The number of hidden layer neurons is $N = 10$. To start with, the number of memory vectors is initialized to one, i.e., $n_s = 1$. The memory is allowed to include up to a maximum of 5 memory locations. For the memory interface, the dynamic representation based key is used. Later, we draw a comparison between this approach and the dynamic state based key approach. In all the scenarios described below, the attention reallocation is on only during the initializing phase.

C. Illustration of Attention Reallocation

In the scenario we consider here, the masses undergo the following sequence of abrupt changes:

$$m_i \to 2 m_i \text{ at } t = 10, \quad m_i \to \sqrt{2}\, m_i \text{ at } t = 20, \quad \text{and} \quad m_i \to \tfrac{1}{\sqrt{2}}\, m_i \text{ at } t = 40. \tag{26}$$

The link lengths are $l_1 = 1$, $l_2 = 2$. The values of the other parameters are as given above. In the first set of results, the attention reallocation is on only during the initial phase (case 1). In the second set of results, the reallocation mechanism is on throughout (case 2).

Case 1: Fig. 2 shows the response of the joint angles for the controller that uses soft attention and for the attention mechanism proposed here. Fig. 4 shows the response of the joint angles for the controller that uses hard attention and for a regular NN controller with $N = 14$ hidden layer neurons. We find that the responses for the controllers that use soft attention and hard attention show large and sustained oscillations after every abrupt change compared to the mechanism proposed in this work. From the figures, it is clear that this is caused by the large change and the oscillations observed in $h_o$ after every abrupt change for these two controllers.

The attention mechanism we propose in this work shows an improved performance for the following reason. In the initial phase, the mechanism reallocates attention if the memory content deviates beyond the threshold $\theta$, and continues to reallocate if the deviations continue to occur.
This continues until the number of memory locations grows to 5, the maximum that the memory can include. After this initial phase, the reallocation mechanism is turned off. But the information in the memory vector just before the reallocation is still retained, and so the attention can shift back to this location if any subsequent jump exceeds the limit implied by this information. As a result, the Memory Read output is restricted from any deviation that exceeds the limit implied by the information in the memory contents, even after the initial phase. This results in the diminished oscillations, and thus the improved performance, that we observe for the controller that uses hard attention and attention reallocation in the initial phase.

Case 2: Fig. 3 shows the response of the joint angles for the controller that uses attention reallocation throughout. The response shows almost negligible oscillations, unlike the previous case. This is clearly attributable to the reallocation mechanism being active throughout, which restricts the deviation of the memory contents much more than in the previous case, as is evident from the $h_o$ and $\sigma$ plots shown in Fig. 3. But we observe that the initial peak after every abrupt change exceeds that of the controller that uses soft attention, and also the peak for the same controller in the previous case. This is observed because the quick correction provided by the second update term (or the third term) in the Memory Write equation (4), which necessarily results in a deviation, is restricted more than necessary in this case. Thus, having the attention reallocation on throughout reduces the oscillations but exaggerates the initial peak just after the abrupt change.

In the following, we present several scenarios to illustrate the effectiveness of the mechanism we propose in this work. In all these scenarios, the major contributing factor to why the proposed mechanism performs better is the explanation that we provided in this section.
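As a concrete illustration of the mechanism discussed in this section, the decision rule (14) and the shift rule (15) can be sketched as follows. This is a minimal NumPy sketch under stated assumptions, not the code used for the simulations: the function name `reallocation_step`, the boolean mask `active` that tracks which locations are in use, and the choice to fill an unused location first and fall back to (15) only when the memory is full are an illustrative reading of Section III-D. The selected location is re-initialized at the current hidden layer value, as stated there.

```python
import numpy as np

def reallocation_step(sigma, memory, active, c_w, theta):
    """Apply decision rule (14); if a shift is triggered, pick the target location.

    sigma  : current hidden layer output, shape (N,)
    memory : memory contents mu_i stored as rows, shape (n_max, N)
    active : boolean mask of the locations currently in use, shape (n_max,)
    Returns (a_r, memory, active, i_s); i_s is None when no shift occurs.
    """
    # Infinity-norm deviation of every location from the hidden layer output.
    dists = np.max(np.abs(sigma - memory / c_w), axis=1)
    if np.any(dists[active] < theta):
        return 0, memory, active, None          # a_r = 0: some content is still relevant
    memory, active = memory.copy(), active.copy()
    free = np.flatnonzero(~active)
    if free.size > 0:
        i_s = int(free[0])                      # assumption: grow the memory while room remains
    else:
        i_s = int(np.argmax(dists))             # rule (15): least relevant location
    memory[i_s] = sigma                         # re-initialize at the current hidden layer value
    active[i_s] = True
    return 1, memory, active, i_s

# Hypothetical example with N = 2 features and at most 3 locations.
c_w, theta = 0.75, 0.2
memory = np.zeros((3, 2))
memory[0] = [0.375, 0.375]                      # mu_0 / c_w = [0.5, 0.5]
active = np.array([True, False, False])

a_small, _, _, i_small = reallocation_step(np.array([0.55, 0.5]), memory, active, c_w, theta)
a_large, mem2, act2, i_large = reallocation_step(np.array([0.9, 0.5]), memory, active, c_w, theta)
```

In the example, the small deviation leaves the attention where it is, while the large one allocates a second location and leaves the content of the first location unchanged, which is the retention property described above.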
1) Scenario 1: In this scenario, the values of the parameters are as given above. The attention reallocation is active only during the initial phase. It is set this way in all the scenarios we consider below. The command signals that the two arm angles have to track are $s_1 = \sin(0.5t)$, $s_2 = 0$, and the masses undergo the following sequence of abrupt changes:

$$\begin{aligned}
& m_i \to \sqrt{2}\, m_i \text{ at } t = 5, && m_i \to \sqrt{2}\, m_i \text{ at } t = 25, && m_i \to \sqrt{2.5}\, m_i \text{ at } t = 50, && m_i \to 0.63\, m_i \text{ at } t = 75, \\
& m_i \to \sqrt{0.5}\, m_i \text{ at } t = 90, && m_i \to \sqrt{0.5}\, m_i \text{ at } t = 110, && m_i \to \sqrt{0.1}\, m_i \text{ at } t = 130, && m_i \to \sqrt{10}\, m_i \text{ at } t = 150, \\
& m_i \to \sqrt{2}\, m_i \text{ at } t = 170, && m_i \to \sqrt{5}\, m_i \text{ at } t = 190, && m_i \to \sqrt{0.2}\, m_i \text{ at } t = 210, && m_i \to \sqrt{0.5}\, m_i \text{ at } t = 230, \\
& m_i \to \sqrt{0.1}\, m_i \text{ at } t = 250, && m_i \to \sqrt{10}\, m_i \text{ at } t = 270, && m_i \to \sqrt{2}\, m_i \text{ at } t = 290, && m_i \to \sqrt{5}\, m_i \text{ at } t = 310.
\end{aligned} \tag{27}$$

Fig. 2: Above: response of the first and second joint angles, $x_1$ and $x_2$; reallocation is on only during the initial period. Below: plot of $\sigma$, $h_o$. Curves: MANN Cont. (SA) and MANN Cont. (HA+AR).

Fig. 3: Above: response of the first and second joint angles, $x_1$ and $x_2$; reallocation is on throughout. Below: plot of $\sigma$, $h_o$. Curves: MANN Cont. (SA) and MANN Cont. (HA+AR).

In the results below, we compare the performance of this controller with the MANN controller discussed in our earlier work [2] (which uses soft attention) and an equivalent NN controller. An equivalent NN controller is a controller that has the same number of parameters as the MANN controller, where the parameter count for the MANN controller includes the size of the memory, which is $n_s \times N$. For the robot arm system considered in this section, the NN controller that is equivalent to a MANN controller with $N = 10$ hidden layer neurons and $n_s = 5$ memory vectors is a network with $N = 14$ hidden layer neurons.

Fig. 4: Above: response of the first and second joint angles, $x_1$ and $x_2$; comparison with a NN controller with $N = 14$. Below: response of the first and second joint angles, $x_1$ and $x_2$; comparison with the MANN controller that uses hard attention (HA).

Table I provides the values of the sample root mean square error (SRMSE) for the two joint angles. The SRMSE values clearly indicate that the MANN controller version that uses hard attention (HA) and attention reallocation (AR) outperforms the other controllers by a notable margin.

TABLE I: Robot Arm System, SRMSE $\times 10^3$, Scenario 1

| Controller | Joint Angle 1 | Joint Angle 2 |
|---|---|---|
| NN Cont. (N = 14) | 10.2 | 4.4 |
| MANN Cont. (soft att., N = 10) - I | 8.8 | 3.8 |
| MANN Cont. (this paper, N = 10) - II | 8.2 | 3.6 |
| % Reduction (From I to II) | 7.3 % | 5.3 % |

2) Scenario 2: In this scenario, the command signal for joint angle 2 is $s_2 = 0.1$ instead of 0. The masses undergo the same sequence of changes as given in (27).
The MANN controller that uses soft attention is provided with $n_s = 5$ memory vectors, and the equivalent NN controller is provided with a NN that has $N = 14$ hidden layer neurons. The rest of the setting for this scenario is similar to that of scenario 1. For lack of space, we do not provide the plots of the responses for this scenario. Table II provides the values of the sample root mean square error (SRMSE) for the two joint angles. We note that the proposed MANN controller outperforms the other controllers by a notable margin.

TABLE II: Robot Arm System, SRMSE $\times 10^3$, Scenario 2

| Controller | Joint Angle 1 | Joint Angle 2 |
|---|---|---|
| NN Cont. (N = 14) | 10 | 5.2 |
| MANN Cont. (soft att., N = 10) - I | 9.4 | 4.9 |
| MANN Cont. (this paper, N = 10) - II | 8.3 | 4.5 |
| % Reduction (From I to II) | 11.7 % | 8.2 % |

3) Scenario 3: In the scenarios considered so far, the sequence of changes was periodic in nature. The scenario we consider here is the same as scenario 1 except that the abrupt changes the masses undergo result in a monotonic increase of the mass values. More specifically, we consider the following sequence of abrupt changes:

$$m_i^2 \to m_i^2 + 0.2\, m_i^2(0), \quad \text{after every } 20 \text{ s}. \tag{28}$$

Table III provides the values of the sample root mean square error (SRMSE) for the two joint angles. We note that, here too, the proposed MANN controller outperforms the other controllers by a notable margin.

TABLE III: Robot Arm System, SRMSE $\times 10^3$, Scenario 3

| Controller | Joint Angle 1 | Joint Angle 2 |
|---|---|---|
| NN Cont. (N = 14) | 5.7 | 2.6 |
| MANN Cont. (soft att., N = 10) - I | 5.6 | 2.5 |
| MANN Cont. (this paper, N = 10) - II | 5.2 | 2.2 |
| % Reduction (From I to II) | 7 % | 12 % |

4) Scenario 4: In this scenario, the initial masses of the links are given by $m_1 = 3$ and $m_2 = 2$. The rest of the setting considered here is the same as that of scenario 1. The parameters for the interface and in the control and update laws are also equal to the values used for scenario 1. Table IV provides the values of the sample root mean square error (SRMSE) for the two joint angles.
The SRMSE values indicate that the proposed controller is only marginally better than the controller that uses soft attention in this scenario. We report this scenario to illustrate that the improvements are scenario dependent.

TABLE IV: Robot Arm System, SRMSE $\times 10^3$, Scenario 4

| Joint Angle | 1 | 2 |
|---|---|---|
| NN Cont. (N = 14) | 12.0 | 3.6 |
| MANN Cont. (soft att., N = 10) - I | 10.2 | 3.1 |
| MANN Cont. (this paper, N = 10) - II | 10.0 | 3.0 |
| % Reduction (From I to II) | 2 % | 3 % |

5) Scenario 5: In this scenario, the setting is similar to scenario 1 except that the link lengths are different. The link lengths are $l_1 = 1$ and $l_2 = 2$. The command signals are $s_1 = 0$ and $s_2 = \sin(0.5t)$. Table V gives the SRMSE values for the three controllers. Here too, we observe that the controller that uses the proposed attention mechanism performs better than the controller that uses soft attention.

TABLE V: Robot Arm System, SRMSE $\times 10^3$, Scenario 5

| Joint Angle | 1 | 2 |
|---|---|---|
| NN Cont. (N = 14) | 11.0 | 7.6 |
| MANN Cont. (soft att., N = 10) - I | 9.8 | 7.4 |
| MANN Cont. (this paper, N = 10) - II | 9.3 | 6.8 |
| % Reduction (From I to II) | 5 % | 8 % |

D. Comparison with Hard Attention

Here, we report several scenarios where the controller that uses the proposed attention mechanism is noticeably better than the controller that uses hard attention.

First, we consider scenario 6. In this scenario, the masses undergo the same set of abrupt changes as given in scenario 1. The arm lengths of the robot arm are $l_1 = 1$ and $l_2 = 2$. The command signals are $s_1 = 0$ and $s_2 = 0.1$. The threshold for attention reallocation is $\theta = 0.25$. Table VI gives the SRMSE values for the two joint angles. We report the SRMSE values of the signals only after the first 10 s, so that the values exclude the initial peak. We find that the mechanism proposed here shows a noticeable improvement in performance. Similar responses are observed in scenario 4 when the command signals are $s_1 = 0$ and $s_2 = 0.1$.
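
The two quantities reported in the tables can be sketched as follows. This is a minimal illustration, assuming that the SRMSE is the square root of the mean squared tracking error over the retained samples and that the percent reduction is taken relative to controller I. The function names `srmse` and `percent_reduction` and the synthetic error signal are hypothetical and are not taken from the paper.

```python
import math


def srmse(times, errors, t_start=0.0):
    """Root mean square of the errors sampled at times strictly greater than t_start."""
    kept = [e for t, e in zip(times, errors) if t > t_start]
    if not kept:
        raise ValueError("no samples after t_start")
    return math.sqrt(sum(e * e for e in kept) / len(kept))


def percent_reduction(srmse_i, srmse_ii):
    """Reduction of the SRMSE from controller I to controller II, in percent."""
    return 100.0 * (srmse_i - srmse_ii) / srmse_i


# Synthetic tracking error (illustrative only, not simulation data from the paper):
# a large initial peak that decays, plus a small persistent oscillation.
times = [0.1 * k for k in range(1, 401)]  # samples from 0.1 s to 40 s
errors = [0.5 * math.exp(-t) + 0.005 * math.sin(t) for t in times]

full = srmse(times, errors)                     # includes the initial peak
windowed = srmse(times, errors, t_start=10.0)   # only samples with t > 10 s

# Percent reductions recomputed from the Table V entries (I -> II).
joint_1 = percent_reduction(9.8, 9.3)
joint_2 = percent_reduction(7.4, 6.8)

print(f"SRMSE over all samples: {full:.4f}")
print(f"SRMSE for t > 10 s:     {windowed:.4f}")
print(f"Table V reductions:     {joint_1:.1f} %, {joint_2:.1f} %")
```

Discarding the first 10 s removes the transient that both controllers share, so the remaining error reflects how each controller copes with the abrupt changes rather than with the initial condition.
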
Next, we consider scenario 5. Table VII gives the SRMSE values for the two joint angles. The error values are reported for the responses starting from 10 s, so that the values exclude the initial peak. Clearly, the SRMSE values for the controller that uses the proposed attention mechanism show a noticeable improvement relative to the controller that uses hard attention. We find similar performance improvements in scenarios 1 and 4 when the link lengths are set as $l_1 = 1$ and $l_2 = 2$. In scenarios 1, 2 and 3, the responses for the two controllers turned out to be similar. In scenario 4, we found the controller that uses hard attention to be marginally superior.

TABLE VI: Robot Arm System, SRMSE $\times 10^3$ ($t > 10$), Scenario 6

| Joint Angle | 1 | 2 |
|---|---|---|
| NN Cont. (N = 14) | 9.4 | 5.7 |
| MANN Cont. (hard att., N = 10) - I | 7.8 | 4.9 |
| MANN Cont. (this paper, N = 10) - II | 7.3 | 4.5 |
| % Reduction (From I to II) | 6.4 % | 8.2 % |

TABLE VII: Robot Arm System, SRMSE $\times 10^3$ ($t > 10$), Scenario 5

| Joint Angle | 1 | 2 |
|---|---|---|
| NN Cont. (N = 14) | 9.7 | 6.9 |
| MANN Cont. (hard att., N = 10) - I | 7.9 | 6.3 |
| MANN Cont. (this paper, N = 10) - II | 7.5 | 5.9 |
| % Reduction (From I to II) | 5 % | 6.3 % |

E. Discussion on Key Design Methods

In this section, we provide a comparative study of representation based key design and state based key design. Table VIII gives the SRMSE values for the two key design approaches in scenarios 1 and 4. The dynamic state based key design is better than the dynamic representation based key design in scenario 4 but worse in scenario 1. This suggests that a clear conclusion cannot be drawn on which key design is superior.

TABLE VIII: Robot Arm System, SRMSE $\times 10^3$

| Scenario | Controller | Joint Angle 1 | Joint Angle 2 |
|---|---|---|---|
| scenario 1 | MANN Cont. (soft att.) | 8.8 | 3.8 |
| scenario 1 | MANN Cont. (dyn rep key) | 8.2 | 3.6 |
| scenario 1 | MANN Cont. (dyn state key) | 8.5 | 3.7 |
| scenario 4 | MANN Cont. (soft att.) | 10.2 | 3.1 |
| scenario 4 | MANN Cont. (dyn rep key) | 10.0 | 3.0 |
| scenario 4 | MANN Cont. (dyn state key) | 9.5 | 2.9 |

V. CONCLUSION

In this work, we proposed an improved attention mechanism for working memory augmented neural network adaptive controllers. The attention mechanism we proposed is a combination of a hard attention mechanism and an attention reallocation mechanism. The attention reallocation enables the memory to reallocate attention to a different location when the information in the location it is reading from becomes less relevant. This shifts the attention to a new location, which is initialized with the most relevant information, and also retains the older information in the location used prior to the shift. Thus, the memory is able to overcome the limitations of prior soft and hard attention mechanisms. We showed through extensive simulations of various scenarios that the attention mechanism we proposed in this paper is more effective than the standard soft and hard attention mechanisms.
