Resilience in Collaborative Optimization: Redundant and Independent Cost Functions

💡 Research Summary

This report presents a comprehensive analysis of Byzantine fault-tolerance in multi-agent collaborative optimization. The system consists of n agents, each possessing a local cost function f_i(x) defined over a d-dimensional real vector space. The canonical goal is to find a global minimum of the aggregate of all agents’ cost functions. However, in the presence of up to t Byzantine faulty agents—which may behave arbitrarily and disseminate incorrect information about their functions—this ideal goal becomes unattainable. Consequently, the report adopts the objective of t-resilience: outputting a minimum of the aggregate of the true cost functions of the non-faulty agents only.
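In symbols, and writing S for the set of non-faulty agents (a notation assumed here for illustration), the two objectives can be sketched as:

$$
x^{*} \in \arg\min_{x \in \mathbb{R}^{d}} \sum_{i=1}^{n} f_i(x) \quad \text{(ideal goal)}, \qquad
\hat{x} \in \arg\min_{x \in \mathbb{R}^{d}} \sum_{i \in S} f_i(x) \quad \text{($t$-resilience)}.
$$

The server cannot tell which agents belong to S, which is what makes the second objective non-trivial.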

The central theoretical contribution is an exact characterization of the necessary and sufficient condition for achieving t-resilience. The authors prove that t-resilience is achievable if and only if the agents’ cost functions satisfy a property termed 2t-redundancy. This property, defined in two equivalent forms, essentially requires that the information held by any subset of n-2t agents is sufficient to determine the optimum for the entire set of non-faulty agents. Specifically, for any two subsets of n-2t agents, the intersection of the individual minimizer sets taken over one subset must equal the intersection taken over the other, and this common intersection must be non-empty. Under the assumption of convex and differentiable cost functions, Lemma 2 shows this is equivalent to the following condition: a point minimizes the sum of all non-faulty agents’ functions if and only if it minimizes the sum of any n-2t non-faulty agents’ functions. Theorem 1 formally establishes the necessity and sufficiency of 2t-redundancy for t-resilience. Sufficiency is proven constructively by outlining an algorithm in which the server computes candidate minimizers from all possible subsets of n-2t agents and identifies a common solution.
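The subset-based construction can be illustrated with a small sketch. This is one possible reading of the idea, not the authors' exact procedure. It assumes scalar quadratic costs f_i(x) = a_i (x - c_i)^2 with a_i > 0, so every subset sum has a unique, closed-form minimizer. The names `argmin_sum`, `resilient_minimum`, and `reported` are hypothetical.

```python
from itertools import combinations

def argmin_sum(agents):
    """Minimizer of sum of a*(x - c)**2 over (a, c) pairs: the a-weighted mean of c."""
    total_weight = sum(a for a, _ in agents)
    return sum(a * c for a, c in agents) / total_weight

def resilient_minimum(reported, t, tol=1e-9):
    """Search for a point agreed on by a set of n-t agents and all its (n-2t)-subsets.

    reported: list of (a, c) pairs as received by the server (assumed cost model).
    Returns the agreed minimizer, or None if no candidate set is consistent.
    """
    n = len(reported)
    for candidate in combinations(range(n), n - t):
        x = argmin_sum([reported[i] for i in candidate])
        # Accept x only if every (n-2t)-subset of the candidate set has the same minimizer.
        if all(
            abs(argmin_sum([reported[i] for i in subset]) - x) <= tol
            for subset in combinations(candidate, n - 2 * t)
        ):
            return x
    return None

# Illustrative data: agent 0 is faulty and reports a shifted cost; the others share minimizer 2.0.
reported = [(1.0, 10.0), (1.0, 2.0), (2.0, 2.0), (3.0, 2.0), (0.5, 2.0)]
print(resilient_minimum(reported, t=1))
```

In this toy instance, every candidate set that contains the faulty agent is rejected because its (n-2t)-subsets disagree, and the search returns the shared minimizer of the non-faulty agents. The enumeration is exponential in n, which reflects the trade-off between algorithmic complexity and redundancy that the report discusses.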

Recognizing that the strong condition of 2t-redundancy may not hold in many scenarios (e.g., with independent cost functions), the report introduces a relaxed but quantifiable metric called (u,t)-weak resilience. Here S appears to denote the set of non-faulty agents. An algorithm is (u,t)-weakly resilient if, for its output point x̂, there exists a subset of at least (|S|-u) non-faulty agents whose aggregate cost at x̂ is no greater than the optimal aggregate cost over all non-faulty agents. The metric thus degrades gracefully: the smaller u is, the closer the guarantee comes to exact t-resilience. The authors show that for independent or non-redundant cost functions, if the cost functions are non-negative and faulty agents are in the minority (t < n/2), an algorithm achieving (u,t)-weak resilience with u ≥ t can be constructed.
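The weak-resilience condition can be checked numerically for a given output point. The sketch below is illustrative only. It assumes non-negative costs, that the non-faulty costs are known to the checker, and that a minimizer `x_star` of their sum is supplied by the caller. The names `is_weakly_resilient` and `costs` are hypothetical.

```python
def is_weakly_resilient(costs, x_hat, x_star, u):
    """Check the (u, t)-weak resilience inequality at x_hat.

    costs:  list of callables, the non-faulty agents' non-negative cost functions.
    x_star: a minimizer of the sum of all costs (assumed to be supplied by the caller).
    """
    optimal = sum(f(x_star) for f in costs)
    # With non-negative costs, the cheapest subset of size |S| - u is obtained by
    # dropping the u largest individual costs at x_hat.
    values = sorted(f(x_hat) for f in costs)
    keep = max(len(values) - u, 0)
    return sum(values[:keep]) <= optimal

# Illustrative independent costs f_i(x) = (x - c_i)**2; their sum is minimized at x = 1.5.
costs = [lambda x, c=c: (x - c) ** 2 for c in (0.0, 1.0, 2.0, 3.0)]
print(is_weakly_resilient(costs, x_hat=2.5, x_star=1.5, u=1))
print(is_weakly_resilient(costs, x_hat=2.5, x_star=1.5, u=0))
```

Sorting suffices here only because the costs are non-negative. Without that assumption, a larger subset could have a smaller aggregate cost, and the check would need to search over subsets.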

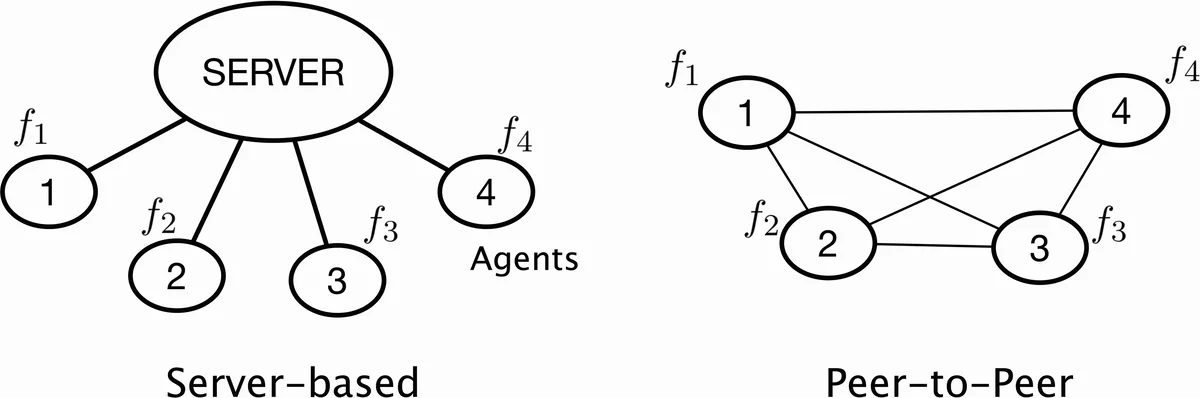

The report considers both server-based (with a trusted server) and peer-to-peer system architectures. For the P2P case, it notes that any server-based algorithm can be simulated using Byzantine broadcast primitives provided t < n/3. The discussion also covers the trade-off between algorithmic complexity and the degree of redundancy in the cost functions. Furthermore, Section 4 summarizes a gradient-based algorithm for t-resilience from the authors’ prior work, and Section 5 briefly discusses the potential extension of the results to non-differentiable and non-convex cost functions.

In summary, this work provides a foundational framework for understanding resilience in distributed optimization against Byzantine failures. It precisely delineates the informational redundancy (2t-redundancy) required for exact fault-tolerant optimization and proposes a practical fallback guarantee (weak resilience) for scenarios lacking such redundancy, thereby offering a holistic view of the problem landscape.
