Causality-Guided Adaptive Interventional Debugging

Runtime nondeterminism is a fact of life in modern database applications. Previous research has shown that nondeterminism can cause applications to intermittently crash, become unresponsive, or experience data corruption. We propose Adaptive Interventional Debugging (AID) for debugging such intermittent failures. AID combines existing statistical debugging, causal analysis, fault injection, and group testing techniques in a novel way to (1) pinpoint the root cause of an application’s intermittent failure and (2) generate an explanation of how the root cause triggers the failure. AID works by first identifying a set of runtime behaviors (called predicates) that are strongly correlated to the failure. It then utilizes temporal properties of the predicates to (over)-approximate their causal relationships. Finally, it uses fault injection to execute a sequence of interventions on the predicates and discover their true causal relationships. This enables AID to identify the true root cause and its causal relationship to the failure. We theoretically analyze how fast AID can converge to the identification. We evaluate AID with six real-world applications that intermittently fail under specific inputs. In each case, AID was able to identify the root cause and explain how the root cause triggered the failure, much faster than group testing and more precisely than statistical debugging. We also evaluate AID with many synthetically generated applications with known root causes and confirm that the benefits also hold for them.

💡 Research Summary

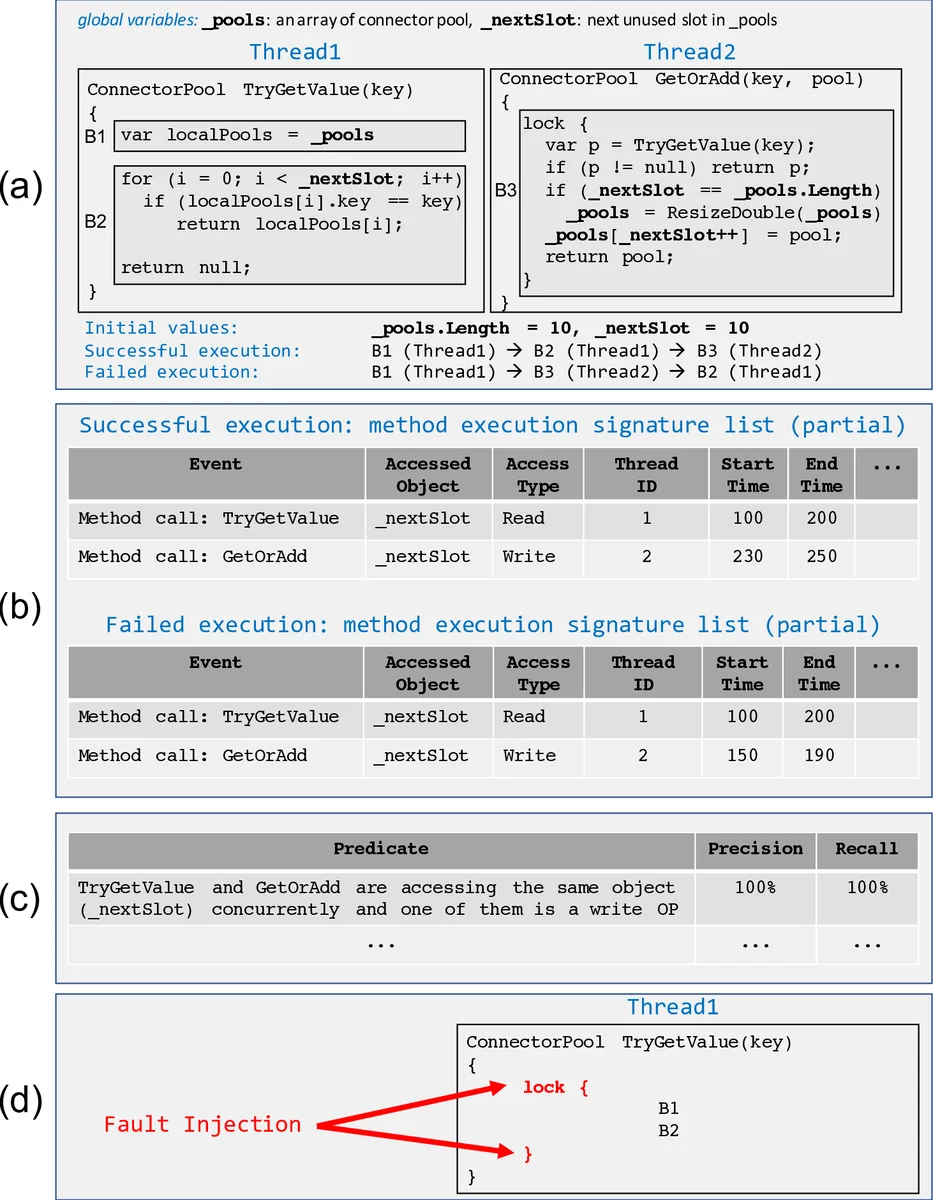

The paper tackles the persistent problem of intermittent failures in modern database‑backed applications, which often arise from nondeterministic runtime phenomena such as thread scheduling variability, transient faults, and timing races. Traditional statistical debugging (SD) can collect large numbers of Boolean predicates from successful and failed runs and rank them by precision and recall, but it suffers from two major drawbacks: (1) it typically returns a long list of highly correlated predicates, many of which are merely symptoms rather than causes, and (2) it provides little insight into how a candidate predicate could actually lead to the observed failure. Consequently, developers must manually sift through many candidates and rely on deep domain knowledge to pinpoint the true root cause.

Adaptive Interventional Debugging (AID) is introduced as a data‑driven technique that augments SD with causal analysis, fault injection, and adaptive group testing. The workflow proceeds in four stages.

-

Predicate Extraction and Full Discriminativity – AID first runs the instrumented program many times, collecting predicate logs. It filters for fully discriminative predicates, i.e., those that appear in every failed execution and never in a successful one. This guarantees that the true root‑cause predicate, if present in the log set, will be among the candidates.

-

Construction of an Approximate Causal DAG (AC‑DAG) – Using temporal ordering information, AID infers possible causal edges: if predicate P₁ always precedes predicate P₂ in all logs where both appear, an edge P₁ → P₂ is added. The resulting directed acyclic graph is an over‑approximation: it contains all genuine causal relationships but may also include spurious edges and nodes.

-

Structure‑Guided Adaptive Group Testing with Fault Injection – Rather than testing predicates independently or selecting random groups (as in classic group testing), AID exploits the AC‑DAG to choose a subset of predicates whose simultaneous intervention will maximally reduce uncertainty. An intervention is realized via fault injection: the program is instrumented so that the selected predicate’s value is forced to match its successful‑run value (e.g., forcing a method to return a non‑null object, inserting a lock to break a data race, etc.).

-

Counterfactual Causality Evaluation and DAG Pruning – After each intervention round, the program is re‑executed. If the failure disappears, the intervened predicate is a counterfactual cause (the failure would not have occurred without it) and is marked as a root‑cause candidate. If the failure persists, the predicate (and any downstream edges) are eliminated from consideration. Repeating this process refines the AC‑DAG until only a single root‑cause node and a causal path from that node to the failure remain.

The authors provide an information‑theoretic analysis showing that leveraging the DAG’s structure dramatically increases the expected information gain per test, yielding a theoretical bound of O(log N) intervention rounds in the worst case (N = number of predicates), compared with the O(D log N) bound of generic adaptive group testing (D = number of defective items).

Empirical Evaluation spans six real‑world systems—Npgsql (a .NET PostgreSQL driver), Apache Kafka, Microsoft Azure Cosmos DB, and three proprietary Microsoft applications—each exhibiting intermittent failures under identical inputs. For every case, AID identified the exact root cause and automatically generated a causal chain (root → intermediate predicates → failure) that matched the developers’ own explanations. AID required dramatically fewer intervention rounds (typically 5–12) than a baseline adaptive group‑testing implementation (often 20–50 rounds) and produced far fewer candidate predicates than pure SD (average 1–2 vs. ~15). Additional synthetic workloads with known ground‑truth root causes confirmed that AID consistently outperforms both baselines in terms of intervention count and worst‑case performance.

The paper also discusses limitations: the current formulation assumes a single root cause and relies on the presence of a discriminative predicate in the initial log set. Extending AID to handle multiple interacting root causes, richer predicate languages, and online, low‑overhead fault injection are identified as promising future directions.

In summary, Adaptive Interventional Debugging unifies statistical correlation, temporal causality, and targeted fault injection into a coherent, efficient pipeline. By iteratively refining an over‑approximated causal graph through structure‑aware interventions, AID not only pinpoints the true source of intermittent failures with far fewer experiments than traditional group testing, but also produces a human‑readable explanation of how that source propagates to the observed crash. This represents a substantial step forward for developers confronting nondeterministic bugs in complex, concurrent database applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment