Experimental Design under Network Interference

💡 Research Summary

The paper tackles the problem of designing experiments for causal inference when units are linked through a single network and interference creates both spillover effects and statistical dependence. The authors propose a two‑wave (pilot‑main) experimental framework. A small pilot study is used to estimate the variance‑covariance structure of the outcomes. These estimates then guide the selection of participants and the assignment of treatments in the main experiment, with the goal of minimizing the conditional variance of a user‑specified linear estimator of the treatment effect(s).

Key modeling assumptions are: (i) local interference: each unit’s potential outcome depends only on its own treatment and on a known exposure mapping of its neighbors’ treatments; (ii) one‑degree dependence: error terms are independent across units that are not directly connected; (iii) sparsity: the maximum degree of the underlying network grows more slowly than the square root of the total number of nodes. Under these conditions the authors define a model‑based estimand τ that aggregates direct, spillover, and overall effects, and they focus on linear estimators (difference‑in‑means, weighted differences, or linear regression) that are unbiased for τ conditional on the design.
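To make assumption (ii) concrete, the following is a minimal sketch of how the conditional variance of a linear estimator could be computed when only directly connected units have correlated errors. The function name, the per‑unit variances `sigma2`, and the single shared correlation `rho` are illustrative assumptions, not the paper's parameterization.

```python
import numpy as np

def linear_estimator_variance(weights, adjacency, sigma2, rho):
    """Variance of sum_i w_i * Y_i under one-degree dependence.

    weights   : (n,) estimator weights, fixed given the design
    adjacency : (n, n) symmetric 0/1 matrix with zero diagonal
    sigma2    : (n,) error variances (hypothetical inputs)
    rho       : correlation between directly connected units (assumed
                common to all edges, purely for illustration)
    """
    w = np.asarray(weights, dtype=float)
    A = np.asarray(adjacency, dtype=float)
    sd = np.sqrt(np.asarray(sigma2, dtype=float))
    # Covariance is nonzero only on the diagonal and on network edges.
    cov = rho * A * np.outer(sd, sd)
    np.fill_diagonal(cov, sd ** 2)
    return float(w @ cov @ w)

# Path graph 0 - 1 - 2 with unit variances.
A = np.array([[0, 1, 0],
              [1, 0, 1],
              [0, 1, 0]])
variance = linear_estimator_variance([0.5, -1.0, 0.5], A, [1.0, 1.0, 1.0], rho=0.5)
```

Because the weights depend on who participates and who is treated, this quantity is a function of the design, which is what makes it a natural optimization target.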

The experimental protocol consists of three steps: (1) select a small, well‑separated subset of nodes and run a pilot experiment; (2) using the pilot’s variance estimates, solve an optimization problem that chooses the main‑experiment participant set and treatment vector to minimize the estimated variance of the estimator of τ; (3) collect outcomes from the main experiment. Because the pilot units (and their neighbors) must be excluded from the main sample to preserve unconfoundedness, the pilot selection problem is cast as a min‑cut problem on the network graph. This yields a tractable algorithm that balances two opposing forces: a larger pilot improves variance estimation, but it also shrinks the pool of eligible main‑experiment units and thereby tightens the design constraints.
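The exclusion rule in step (1) can be illustrated with a simple greedy heuristic. This is not the min‑cut formulation described in the summary; it is a stand‑in sketch that picks pilot nodes with non‑overlapping closed neighborhoods and then removes pilots and their neighbors from the main‑experiment pool. The function name `select_pilot` and the low‑degree‑first ordering are assumptions made for illustration.

```python
def select_pilot(adjacency, m):
    """Greedily choose up to m well-separated pilot nodes.

    adjacency : dict mapping each node to an iterable of its neighbors
    m         : target pilot size

    Returns (pilot, eligible_main), where eligible_main excludes every
    pilot node and every neighbor of a pilot node.
    """
    pilot = []
    blocked = set()  # pilot nodes together with their neighbors
    # Visit low-degree nodes first so each pilot unit removes few main units.
    for node in sorted(adjacency, key=lambda v: (len(adjacency[v]), v)):
        if len(pilot) == m:
            break
        closed = {node} | set(adjacency[node])
        # Skip nodes that are, touch, or share a neighbor with a chosen pilot.
        if closed & blocked:
            continue
        pilot.append(node)
        blocked |= closed
    eligible_main = sorted(set(adjacency) - blocked)
    return pilot, eligible_main

# Path graph 0 - 1 - 2 - 3 - 4 - 5.
graph = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3, 5], 5: [4]}
pilot, eligible_main = select_pilot(graph, m=2)
```

Even in this toy graph, two pilot units remove four of the six nodes from the main sample, which shows why pilot size has to be traded off against the size of the eligible pool.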

To formalize this trade‑off, the authors introduce a regret measure: the excess variance of the two‑wave design relative to an “oracle” design that knows the true variance‑covariance matrix and faces no pilot‑related constraints. They prove that regret is of order O(1/m + m/n), where m is the pilot size and n is the main‑experiment size. The first term shrinks as the pilot grows, while the second term grows with it. Consequently, the regret‑minimizing pilot size satisfies m ≈ √n, providing a clear rule of thumb for practitioners. The proof hinges on lower‑bounding the oracle objective under the stricter feasible set imposed by the pilot and on establishing concentration of the pilot‑based variance estimator.
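The rule of thumb follows from a one‑line calculation. As a sketch, suppose the regret bound takes the form below, where the constants $c_1, c_2 > 0$ are hypothetical placeholders (the summary gives only the order of the bound):

```latex
R(m) = \frac{c_1}{m} + \frac{c_2\, m}{n},
\qquad
\frac{dR}{dm} = -\frac{c_1}{m^{2}} + \frac{c_2}{n} = 0
\;\Longrightarrow\;
m^{*} = \sqrt{\frac{c_1\, n}{c_2}} \propto \sqrt{n},
\qquad
R(m^{*}) = 2\sqrt{\frac{c_1 c_2}{n}} = O\!\left(n^{-1/2}\right).
```

Under this assumed form, a main experiment with n = 10,000 units would call for a pilot on the order of 100 units, up to the unknown constant factor.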

The optimization naturally induces dependence among treatment assignments; the authors handle this by formulating a discrete Lagrangian and solving it with standard combinatorial optimization tools. They also extend the framework to incorporate randomization for design‑based inference and to accommodate partially observed networks.

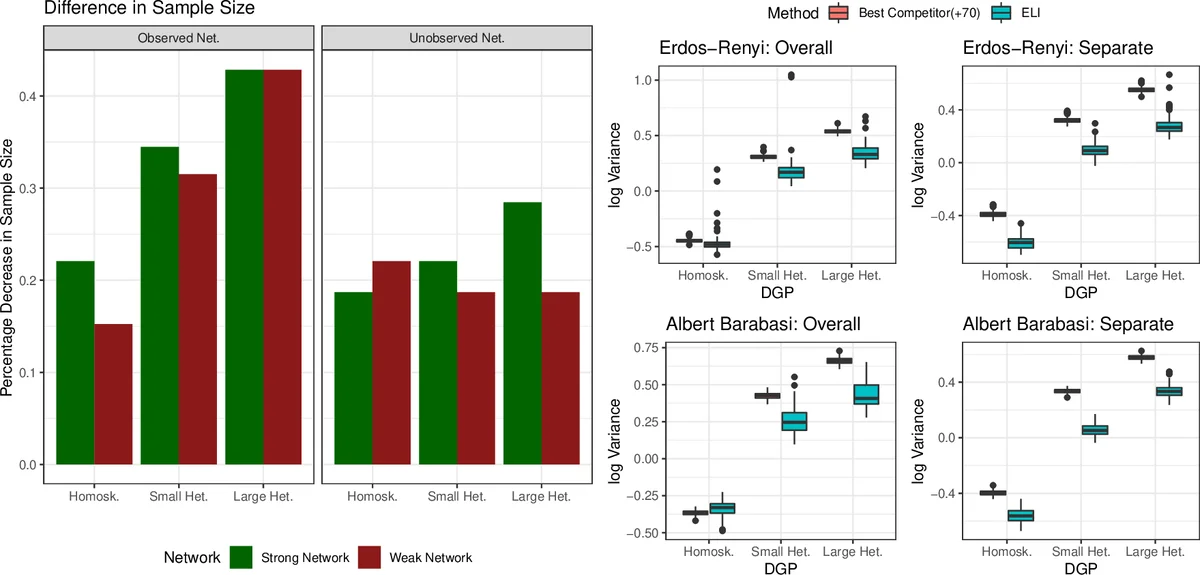

Simulation studies on synthetic graphs and real‑world social networks demonstrate substantial gains over state‑of‑the‑art clustered or saturation designs, especially when outcomes exhibit heteroskedasticity and non‑zero covariances. The proposed method reduces mean‑squared error for overall, direct, and spillover effect estimators by up to 20% in realistic settings.

In the literature review, the paper situates itself among clustered experiments, saturation designs, and i.i.d. batch experimental design, noting that none of these prior works exploit pilot information to achieve variance‑optimal designs under network interference. It also connects to the broader literature on causal inference under interference, which focuses on identification and inference rather than design optimization. By bridging these strands, the paper fills a notable gap: it provides a theoretically grounded, computationally feasible, and empirically validated approach to designing two‑wave experiments that achieve near‑oracle precision in the presence of network spillovers.
