Protocol and Tools for Conducting Agile Software Engineering Research in an Industrial-Academic Setting: A Preliminary Study

Conducting empirical research in the software engineering industry is a process, and as such, it should be generalizable. The aim of this paper is to discuss how academic researchers may address some of the challenges they encounter while conducting empirical research in the software industry by means of a systematic and structured approach. The protocol developed in this paper should serve as a practical guide for researchers and help them conduct empirical research in this complex environment.

💡 Research Summary

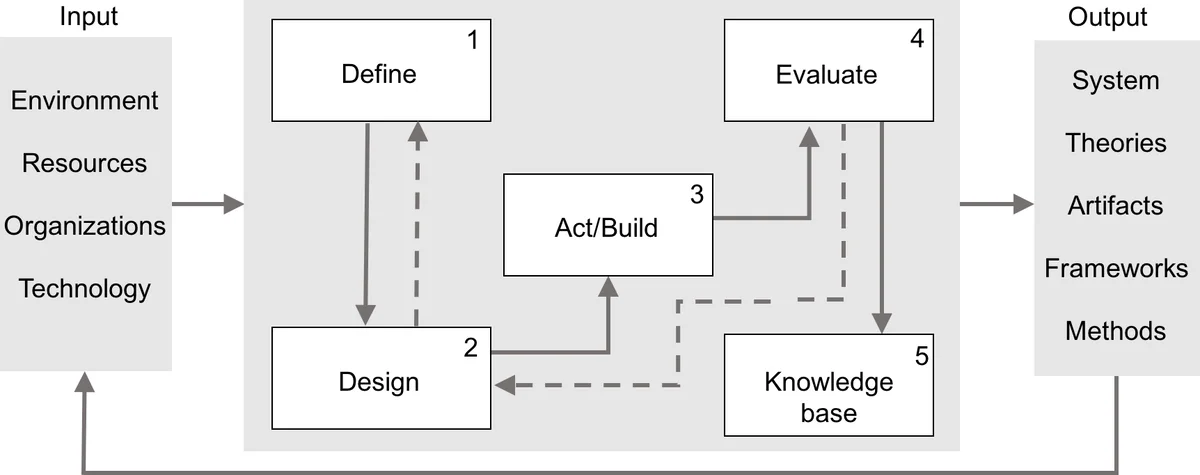

The paper presents a structured protocol and a set of supporting tools designed to help academic researchers conduct empirical software engineering studies within an industrial‑academic collaboration, specifically when the research focuses on Agile development practices. The authors argue that existing collaboration models describe phases but lack internal control mechanisms and systematic feedback loops. To address this gap, they integrate three methodological traditions—Action Research, Design Science, and the Lean Research Approach (LRA)—and overlay them with the DMAIC (Define‑Measure‑Analyze‑Improve‑Control) cycle from Six Sigma, creating a dual‑loop framework: an outer loop that captures the iterative nature of Action Research and an inner loop that provides continuous process improvement.

The protocol is divided into five sequential phases:

- Define – Clarify the research rationale, formulate research questions, select a strategy, conduct a state‑of‑the‑art review, and establish roles, responsibilities, terminology, dissemination plans, and a review schedule. Tools suggested include problem‑framing approaches, stakeholder analysis matrices, GQM+Strategy, CTB matrices, and organizational culture diagnostics such as OCAI or the Congruence Model.

- Design – Develop courses of action, set baselines, define data collection and analysis procedures, design the solution, plan validation activities, create a communication plan, and devise a control plan. The authors recommend systematic literature mapping studies, descriptive and inferential statistics, and solution modeling techniques (architecture and requirements envisioning).

- Act/Build – Implement the data extraction strategy and build a prototype. Agile engineering practices such as XP, Test‑Driven Development (TDD), Behavior‑Driven Development (BDD), and rapid feedback mechanisms (e.g., the method by Vetrò et al.) are emphasized to keep iteration cycles short.

- Evaluate – Conduct both academic validation (peer reviews, conference papers) and business validation (case studies, questionnaires, structured/unstructured interviews). Evaluation criteria are drawn from Sandberg et al.’s ten‑factor framework, ensuring methodological rigor and practical relevance.

- Knowledge Base – Disseminate findings internally (company trainings, workshops) and externally (publications, patents, conferences). The authors stress the importance of sharing lessons learned to create a lasting knowledge repository.

Table 2 in the paper maps each phase to concrete tools, providing a ready‑to‑use toolbox for researchers. For example, the Define phase uses RACI matrices for responsibility allocation, while the Design phase leverages statistical packages for data analysis, and the Evaluate phase employs qualitative analysis software for interview data.
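As a rough illustration of how such a phase-to-tool mapping could be kept in machine-readable form, the sketch below encodes only the tools named in this summary, not the full contents of Table 2. The names `PROTOCOL_TOOLBOX`, `tools_for`, and `phases_using` are hypothetical and chosen for this example; they do not come from the paper.

```python
from typing import Dict, List

# Phase -> tools, limited to what this summary mentions.
# Assumption: this is NOT a transcription of the paper's Table 2.
PROTOCOL_TOOLBOX: Dict[str, List[str]] = {
    "Define": [
        "problem-framing approaches",
        "stakeholder analysis matrix",
        "GQM+Strategy",
        "CTB matrix",
        "OCAI",
        "Congruence Model",
        "RACI matrix",
    ],
    "Design": [
        "systematic literature mapping study",
        "descriptive and inferential statistics",
        "solution modeling",
        "statistical packages",
    ],
    "Act/Build": ["XP", "TDD", "BDD", "rapid feedback mechanisms"],
    "Evaluate": [
        "peer reviews",
        "conference papers",
        "case studies",
        "questionnaires",
        "interviews",
        "qualitative analysis software",
    ],
    "Knowledge Base": [
        "company trainings",
        "workshops",
        "publications",
        "patents",
        "conferences",
    ],
}


def tools_for(phase: str) -> List[str]:
    """Return the tools recorded for a phase, or raise on an unknown phase."""
    if phase not in PROTOCOL_TOOLBOX:
        raise ValueError(f"unknown phase: {phase!r}")
    return PROTOCOL_TOOLBOX[phase]


def phases_using(keyword: str) -> List[str]:
    """Return phases (in protocol order) with a tool whose name contains keyword."""
    needle = keyword.lower()
    return [
        phase
        for phase, tools in PROTOCOL_TOOLBOX.items()
        if any(needle in tool.lower() for tool in tools)
    ]


if __name__ == "__main__":
    for phase in PROTOCOL_TOOLBOX:
        print(f"{phase}: {', '.join(tools_for(phase))}")
```

A plain dictionary keeps the phases in their protocol order and makes it easy for a research team to extend the toolbox with its own entries as the collaboration evolves.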

The authors illustrate the protocol through an ongoing three‑year Industrial Doctorate project between a global software company and the Universitat Politècnica de Catalunya. The project aims to improve Agile development processes using a data‑driven approach. Throughout the collaboration, the partners co‑located on the university campus, which facilitated frequent interaction, rapid feedback, and joint ownership of research artifacts. The protocol guided the project from problem definition (automatic dependency detection in Agile environments) through prototype development, iterative validation, and finally to the dissemination of methodological guidelines and a prototype tool that adds value to the company.

Key insights include:

- Context awareness – Understanding the organization’s structure, culture, and communication patterns (Conway’s Law) is essential for accessing data, identifying champions, and aligning research objectives with business needs.

- Iterative feedback – Short, sprint‑like cycles (research sprints of three months) combined with regular gate reviews keep the project aligned with both academic standards and business expectations.

- Dual validation – Simultaneous academic and business validation ensures that research contributions are scientifically sound and practically useful.

- Process improvement – Embedding DMAIC and Lean Six Sigma techniques provides an internal control mechanism that can measure the effectiveness of the collaboration and suggest continuous improvements.

In conclusion, the proposed protocol offers a reproducible, scalable, and adaptable roadmap for conducting Agile‑focused empirical software engineering research in industry settings. It bridges the gap between high‑level methodological guidance and day‑to‑day operational tools, thereby enhancing the likelihood of successful, impactful collaborations. Future work should test the protocol across diverse domains and organizational sizes to further validate its generalizability.
