Natural Language Interaction to Facilitate Mental Models of Remote Robots

Increasingly complex and autonomous robots are being deployed in real-world environments with far-reaching consequences. High-stakes scenarios, such as emergency response or offshore energy platform and nuclear facility inspections, require robot operators to have clear mental models of what the robots can and can’t do. However, operators are often not the original designers of the robots and thus do not necessarily have such clear mental models, especially if they are novice users. This lack of mental model clarity can slow adoption and can negatively impact human-machine teaming. We propose that interaction with a conversational assistant, which acts as a mediator, can help operators understand the functionality of remote robots, increase transparency through natural language explanations, and facilitate the evaluation of operators’ mental models.

💡 Research Summary

The paper addresses a critical challenge in the deployment of complex, autonomous robots in hazardous, high‑stakes environments such as nuclear facilities, offshore platforms, and emergency response sites. In these scenarios, operators often lack a clear mental model of what each robot can and cannot do, especially when they are not the original designers. This knowledge gap can lead to over‑trust, under‑trust, misuse, or delayed decision‑making, ultimately hampering adoption and effective human‑machine teaming.

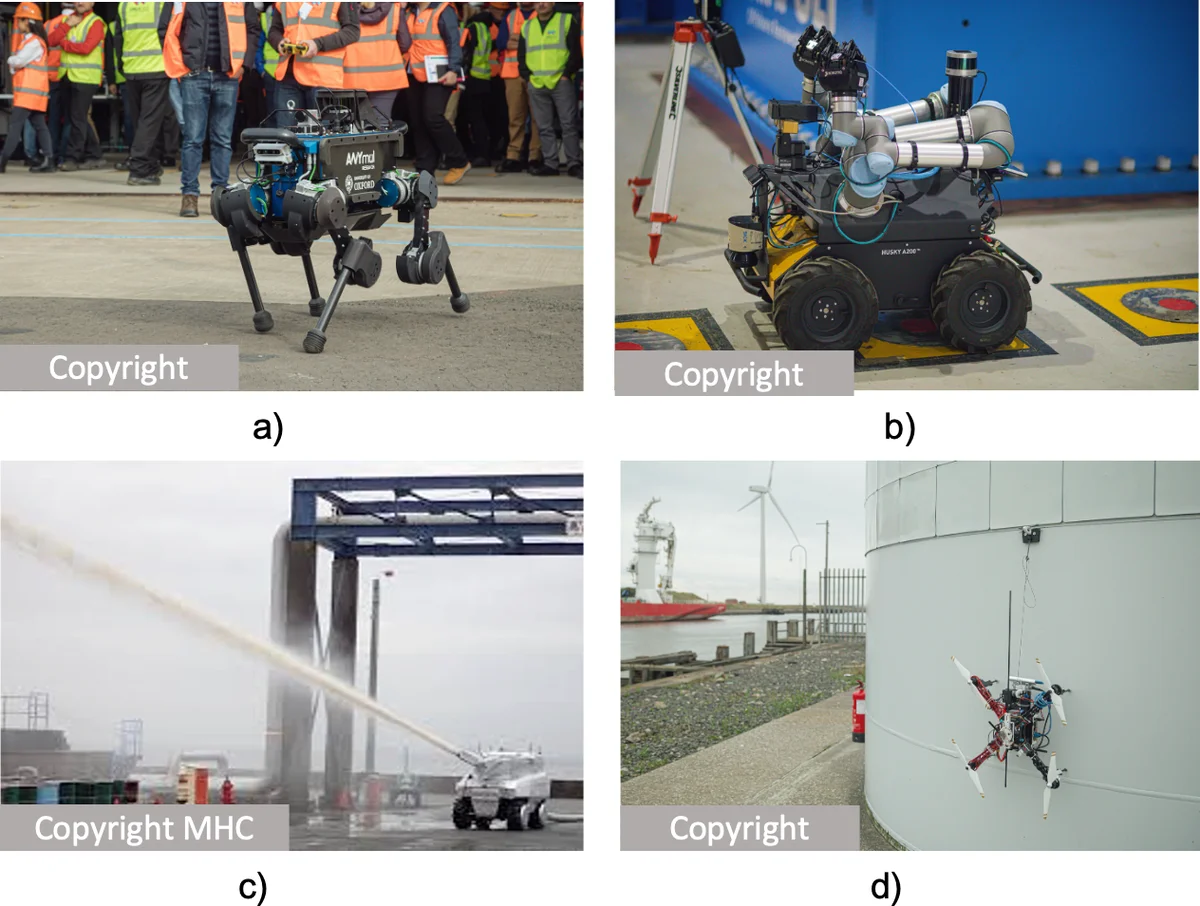

To bridge this gap, the authors propose MIRIAM, a conversational intelligent assistant. MIRIAM is a chat-based or spoken dialogue system that mediates between the operator and a fleet of heterogeneous remote robots, including aerial drones, ground vehicles, and underwater platforms. The system continuously gathers status updates (battery level, sensor health, location, etc.) from each robot and integrates them into a dynamic world model. It then uses this model to generate natural-language explanations tailored to the operator’s queries and intents.
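The status-to-explanation pipeline described above can be illustrated with a minimal sketch. It assumes a simple "latest report wins" world model keyed by robot ID. The class names (`RobotStatus`, `WorldModel`), the field layout, and the sentence template are all hypothetical illustrations, not MIRIAM's actual data structures or wording.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass
class RobotStatus:
    """One status report from a robot (hypothetical schema)."""
    robot_id: str
    platform: str                      # e.g. "aerial", "ground", "underwater"
    battery: float                     # fraction of full charge, 0.0-1.0
    sensors_ok: Dict[str, bool] = field(default_factory=dict)
    location: Tuple[float, float] = (0.0, 0.0)


class WorldModel:
    """Keeps the most recent status report received from each robot."""

    def __init__(self) -> None:
        self.robots: Dict[str, RobotStatus] = {}

    def update(self, status: RobotStatus) -> None:
        # A newer report replaces the previous one for the same robot.
        self.robots[status.robot_id] = status

    def describe(self, robot_id: str) -> str:
        """Turn the stored status into a one-sentence natural-language answer."""
        s = self.robots.get(robot_id)
        if s is None:
            return f"I have no status reports from {robot_id} yet."
        faulty = [name for name, ok in s.sensors_ok.items() if not ok]
        health = "all sensors nominal" if not faulty else "faulty: " + ", ".join(faulty)
        return (f"{robot_id} ({s.platform}) is at {s.location} with "
                f"{s.battery:.0%} battery; {health}.")
```

For example, after `wm.update(RobotStatus("ugv1", "ground", 0.42, {"camera": False, "lidar": True}, (12.0, 3.5)))`, the call `wm.describe("ugv1")` yields `"ugv1 (ground) is at (12.0, 3.5) with 42% battery; faulty: camera."`. A real system would also need to handle stale reports and time stamps, which this sketch ignores.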

Key technical contributions include:

- Dynamic Capability Reasoning – MIRIAM evaluates each robot’s current capabilities against the task at hand, automatically filtering out unsuitable assets (e.g., a robot with a broken camera cannot be assigned to visual inspection).

- Dual‑Level Explanations – The assistant provides both functional explanations (what the robot is doing or can do) and structural explanations (how the robot’s internal processes work). This duality supports both “what‑does‑it‑do” and “how‑does‑it‑work” aspects of mental models.

- Mixed‑Initiative Interaction – Operators can issue high‑level commands (“inspect this area”) while MIRIAM clarifies intent, suggests appropriate robots, and informs the operator of any constraints, thereby reducing the cognitive load of managing multiple heterogeneous platforms.

- Evaluation Framework – The authors measured mental‑model clarity using pre‑ and post‑interaction questionnaires that assess functional understanding, structural understanding, trust, and situational awareness. Experiments conducted with robots from the ORCA Hub showed statistically significant improvements in both functional and structural mental‑model scores after exposure to MIRIAM’s explanations. Notably, the amount and presentation style of information influenced how mental models evolved over time, highlighting the importance of calibrated communication.
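The first two contributions, capability filtering and functional versus structural explanations, can be illustrated with a small rule-based sketch. The task-to-sensor table, the 20% battery threshold, and every function name are hypothetical assumptions chosen for illustration. The summary does not describe how MIRIAM represents capabilities internally. In this sketch, a "structural" explanation is approximated by exposing the checks behind a decision.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple

# Hypothetical task-to-sensor requirements; not taken from the paper.
TASK_REQUIREMENTS: Dict[str, Set[str]] = {
    "visual_inspection": {"camera"},
    "gas_detection": {"gas_sensor"},
}
MIN_BATTERY = 0.2  # hypothetical minimum charge (fraction of full)


@dataclass
class Robot:
    robot_id: str
    battery: float  # fraction of full charge, 0.0-1.0
    sensors_ok: Dict[str, bool] = field(default_factory=dict)


def assess(robot: Robot, task: str) -> Tuple[bool, List[str]]:
    """Check a robot against a task; return (suitable, blocking reasons)."""
    reasons: List[str] = []
    for sensor in sorted(TASK_REQUIREMENTS[task]):
        if sensor not in robot.sensors_ok:
            reasons.append(f"it has no {sensor}")
        elif not robot.sensors_ok[sensor]:
            reasons.append(f"its {sensor} reports a fault")
    if robot.battery < MIN_BATTERY:
        reasons.append(f"its battery is at {robot.battery:.0%}")
    return (not reasons, reasons)


def suitable_robots(robots: List[Robot], task: str) -> List[str]:
    """Capability filtering: keep only robots that pass every check."""
    return [r.robot_id for r in robots if assess(r, task)[0]]


def functional_explanation(robot: Robot) -> str:
    """'What can it do?': list the known tasks the robot currently passes."""
    able = [t for t in TASK_REQUIREMENTS if assess(robot, t)[0]]
    listed = ", ".join(able) if able else "none of the known tasks"
    return f"{robot.robot_id} can currently do: {listed}."


def structural_explanation(robot: Robot, task: str) -> str:
    """'How does it decide?': expose the checks behind one assignment."""
    needed = ", ".join(sorted(TASK_REQUIREMENTS[task]))
    ok, reasons = assess(robot, task)
    verdict = "meets both conditions" if ok else "fails because " + " and ".join(reasons)
    return (f"For {task} I require working {needed} and at least "
            f"{MIN_BATTERY:.0%} battery; {robot.robot_id} {verdict}.")
```

Consider a fleet of `Robot("uav1", 0.8, {"camera": True})`, `Robot("ugv1", 0.9, {"camera": False, "gas_sensor": True})`, and `Robot("auv1", 0.1, {"camera": True})`. With that fleet, `suitable_robots(fleet, "visual_inspection")` returns `["uav1"]`. Asking why `ugv1` was excluded yields `"For visual_inspection I require working camera and at least 20% battery; ugv1 fails because its camera reports a fault."` Keeping the reasons as data, rather than only a yes/no answer, is what lets the same check drive both the filtering and the explanation.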

The paper also discusses limitations and future directions. Current mental‑model assessments rely on self‑report scales, which can be subjective. The experimental context is limited to offshore inspection tasks, so generalization to other domains (e.g., space exploration, disaster relief) remains to be validated. To enhance naturalness and efficiency, the authors are exploring the embodiment of MIRIAM in a socially expressive robot (the Furhat platform), leveraging visual cues such as gaze, facial expression, and prosody. These social signals could convey implicit information about robot capabilities without explicit verbal repetition, mirroring human‑to‑human conversational dynamics.

Furthermore, the authors propose integrating Theory‑of‑Mind reasoning to infer the operator’s prior knowledge and misconceptions, enabling personalized corrective feedback. This could improve trust calibration and accelerate the formation of high‑fidelity mental models, especially in time‑critical, high‑risk missions.
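The proposed operator modelling is future work in the paper, so the following is only a deliberately crude, deterministic sketch of the idea. The assistant tracks what the operator appears to believe each robot can do and compares those beliefs with the world model. Mismatches are reported as corrective messages. The inference rule is an assumption: commanding a robot to do a task implies a belief that it can, and explicit statements override such inferences. The names `OperatorModel` and `corrective_feedback` are hypothetical. A realistic Theory-of-Mind component would likely reason probabilistically instead.

```python
from typing import Dict, List, Tuple

Capability = Tuple[str, str]  # (robot_id, task)


class OperatorModel:
    """Crude belief model: what the operator appears to think each robot can do."""

    def __init__(self) -> None:
        self.beliefs: Dict[Capability, bool] = {}

    def observe_command(self, robot_id: str, task: str) -> None:
        # Assumption: asking a robot to do a task implies believing it can.
        self.beliefs[(robot_id, task)] = True

    def observe_statement(self, robot_id: str, task: str, can_do: bool) -> None:
        # Explicit statements override beliefs inferred from commands.
        self.beliefs[(robot_id, task)] = can_do

    def misconceptions(self, actual: Dict[Capability, bool]) -> List[Capability]:
        # Compare only beliefs the operator has revealed and the world model can check.
        return sorted(k for k, v in self.beliefs.items() if k in actual and actual[k] != v)


def corrective_feedback(model: OperatorModel, actual: Dict[Capability, bool]) -> List[str]:
    """Generate one personalised correction per detected misconception."""
    messages = []
    for robot_id, task in model.misconceptions(actual):
        if actual[(robot_id, task)]:
            messages.append(f"{robot_id} can in fact do {task}.")
        else:
            messages.append(f"{robot_id} cannot currently do {task}.")
    return messages
```

Suppose an operator commands `ugv1` to do `visual_inspection` while its camera is faulty, and states that `uav1` cannot do `gas_detection` when it can. This sketch would then produce one correction for each belief. It stays silent about capabilities the operator has not yet revealed any belief about.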

In summary, the work demonstrates that a natural‑language conversational assistant can serve as an effective mediator for remote robot teams, enhancing transparency, supporting mental‑model development, and ultimately fostering safer and more efficient human‑robot collaboration. The authors’ roadmap—adding socially‑enhanced cues, automated user modeling, and broader domain testing—offers a promising path toward widespread adoption of such assistants in real‑world, high‑stakes robotic operations.
