Complete Subset Averaging for Quantile Regressions

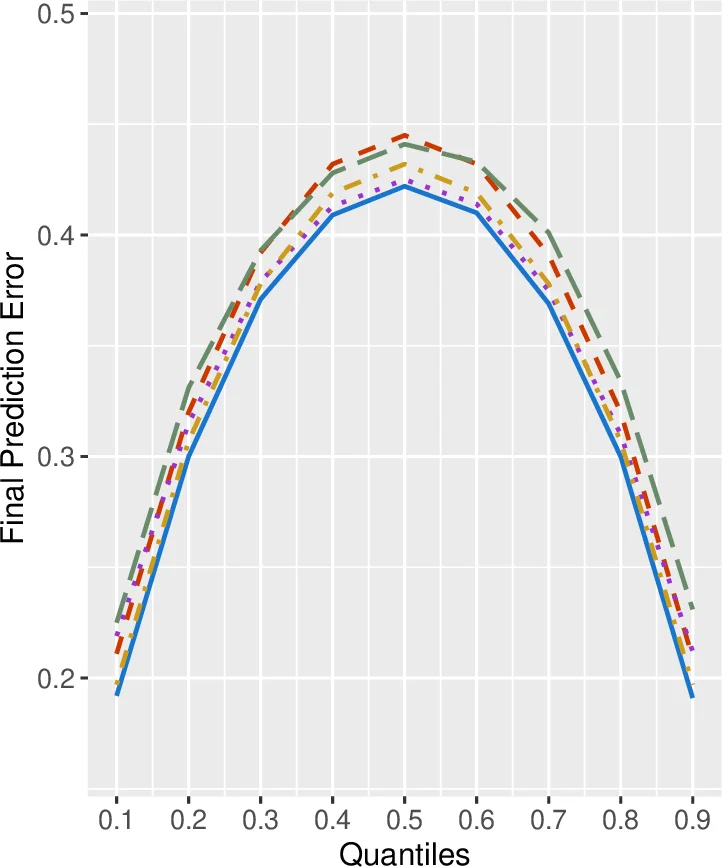

We propose a novel conditional quantile prediction method based on complete subset averaging (CSA) for quantile regressions. All models under consideration are potentially misspecified, and the dimension of the regressors goes to infinity as the sample size increases. Because we average over complete subsets, the number of models is much larger than in conventional model averaging methods, which adopt sophisticated weighting schemes. We propose to use equal weights but to select the size of the complete subset by leave-one-out cross-validation. Building upon the theory of Lu and Su (2015), we investigate the large-sample properties of CSA and establish its asymptotic optimality in the sense of Li (1987). We examine the finite-sample performance via Monte Carlo simulations and empirical applications.
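The procedure outlined in the abstract (equal weights within a subset size, with the subset size chosen by leave-one-out cross-validation) can be sketched in plain Python. This is a minimal sketch under our own assumptions, not the authors' implementation. We assume the check (pinball) loss is the cross-validation criterion and that no intercept is added beyond the supplied regressors. All function names are hypothetical. `fit_qr` is a brute-force quantile regression solver that enumerates basic solutions, so it only works for tiny n and K with full-column-rank designs; a real application would call a linear-programming quantile regression routine instead.

```python
from itertools import combinations

def check_loss(u, tau):
    """Check (pinball) loss rho_tau(u) = u * (tau - 1{u < 0})."""
    return u * (tau - (1.0 if u < 0 else 0.0))

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting; None if singular."""
    n = len(A)
    M = [list(row) + [bi] for row, bi in zip(A, b)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        if abs(M[p][c]) < 1e-12:
            return None
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for j in range(c, n + 1):
                M[r][j] -= f * M[c][j]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][j] * x[j] for j in range(r + 1, n))) / M[r][r]
    return x

def fit_qr(X, y, tau):
    """Exact linear quantile regression for TINY problems only (hypothetical helper).

    If X has full column rank p, some minimiser fits p observations exactly,
    so we try every p-row subset; the cost grows like C(n, p)."""
    p = len(X[0])
    best, best_loss = None, float("inf")
    for rows in combinations(range(len(y)), p):
        beta = solve([X[i] for i in rows], [y[i] for i in rows])
        if beta is None:
            continue  # singular p x p block, not a valid basic solution
        loss = sum(check_loss(yi - sum(a * b for a, b in zip(xi, beta)), tau)
                   for xi, yi in zip(X, y))
        if loss < best_loss:
            best, best_loss = beta, loss
    return best

def csa_predict(X, y, x_new, k, tau):
    """Equal-weight average of the predictions of all size-k regressor subsets."""
    preds = []
    for m in combinations(range(len(X[0])), k):
        beta = fit_qr([[row[j] for j in m] for row in X], y, tau)
        preds.append(sum(x_new[j] * b for j, b in zip(m, beta)))
    return sum(preds) / len(preds)

def loo_select_k(X, y, tau):
    """Choose k minimising the leave-one-out average check loss (assumed criterion)."""
    n, K = len(y), len(X[0])
    scores = {}
    for k in range(1, K + 1):
        total = 0.0
        for i in range(n):
            # drop observation i, refit every subset model, predict y_i
            X_tr, y_tr = X[:i] + X[i + 1:], y[:i] + y[i + 1:]
            total += check_loss(y[i] - csa_predict(X_tr, y_tr, X[i], k, tau), tau)
        scores[k] = total / n
    return min(scores, key=scores.get), scores

# Made-up noiseless toy data generated as y = x1 + 2*x2 - x3.
X = [[1, 0, 1], [0, 1, 1], [1, 1, 0], [2, 1, 1], [1, 2, 3], [3, 1, 2]]
y = [0, 1, 3, 3, 2, 3]
k_hat, scores = loo_select_k(X, y, tau=0.5)
print(k_hat, scores)
```

Every candidate k requires refitting all M(K,k) subset models n times, so the cost grows quickly with K. The brute-force solver is used here only so that the sketch runs without third-party dependencies.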

💡 Research Summary

This paper introduces a novel prediction framework for conditional quantile regression called Complete Subset Averaging (CSA). The authors consider a setting where the number of potential regressors K may increase with the sample size n, and every candidate model can be misspecified. Rather than selecting a limited set of models and estimating sophisticated weight vectors, CSA averages over all possible subsets of size k drawn from the K regressors. For each subset m (of size k) a standard quantile regression is estimated, yielding a conditional quantile predictor x_i′(m,k) Θ̂(m,k). The final CSA predictor is the simple arithmetic mean of these M(K,k)=K!/(k!(K−k)!) individual predictions, i.e.,

$$\hat{q}_i(k) = \frac{1}{M(K,k)} \sum_{m=1}^{M(K,k)} x_i'(m,k)\,\hat{\Theta}(m,k).$$
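Because each subset prediction is linear in x_i, the equal-weight average described above can also be written as x_i′ multiplied by a single K-vector. That vector is obtained by zero-padding each Θ̂(m,k) to length K and averaging over the M(K,k) subsets. The sketch below is our own illustration and computes the CSA prediction both ways. The function names are hypothetical, and the coefficient values are made up, standing in for the output of any quantile regression fit on each subset.

```python
from itertools import combinations
from math import comb

def csa_prediction(x, K, k, fitted):
    """Mean of the M(K, k) subset predictions x(m, k)' theta_hat(m, k).

    `fitted` maps each size-k subset (a sorted tuple of column indices)
    to its estimated coefficients, listed in the same column order."""
    assert len(fitted) == comb(K, k)  # one estimate per subset
    preds = [sum(x[j] * t for j, t in zip(m, fitted[m]))
             for m in combinations(range(K), k)]
    return sum(preds) / comb(K, k)

def averaged_coefficients(K, k, fitted):
    """Same average as one K-vector: zero-pad each theta_hat(m, k), then average."""
    avg = [0.0] * K
    for m in combinations(range(K), k):
        for j, t in zip(m, fitted[m]):
            avg[j] += t  # columns outside m contribute zero
    return [a / comb(K, k) for a in avg]

# Made-up estimates for K = 3 regressors and subsets of size k = 2.
example = {(0, 1): [1.0, 2.0], (0, 2): [0.5, -1.0], (1, 2): [3.0, 0.0]}
x_i = [1.0, 1.0, 1.0]
theta_bar = averaged_coefficients(3, 2, example)
print(csa_prediction(x_i, 3, 2, example), sum(a * b for a, b in zip(x_i, theta_bar)))
```

The two printed values agree up to floating-point rounding, which shows that the averaged predictor remains a linear function of the full regressor vector.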
