waLBerla: A block-structured high-performance framework for multiphysics simulations

Programming current supercomputers efficiently is a challenging task. Multiple levels of parallelism on the core, on the compute node, and between nodes need to be exploited to make full use of the system. Heterogeneous hardware architectures with accelerators further complicate the development process. waLBerla addresses these challenges by providing the user with highly efficient building blocks for developing simulations on block-structured grids. The block-structured domain partitioning is flexible enough to handle complex geometries, while the structured grid within each block allows for highly efficient implementations of stencil-based algorithms. We present several example applications realized with waLBerla, ranging from lattice Boltzmann methods to rigid particle simulations. Most importantly, these methods can be coupled together, enabling multiphysics simulations. The framework uses meta-programming techniques to generate highly efficient code for CPUs and GPUs from a symbolic method formulation. To ensure software quality and performance portability, a continuous integration toolchain automatically runs an extensive test suite encompassing multiple compilers, hardware architectures, and software configurations.

💡 Research Summary

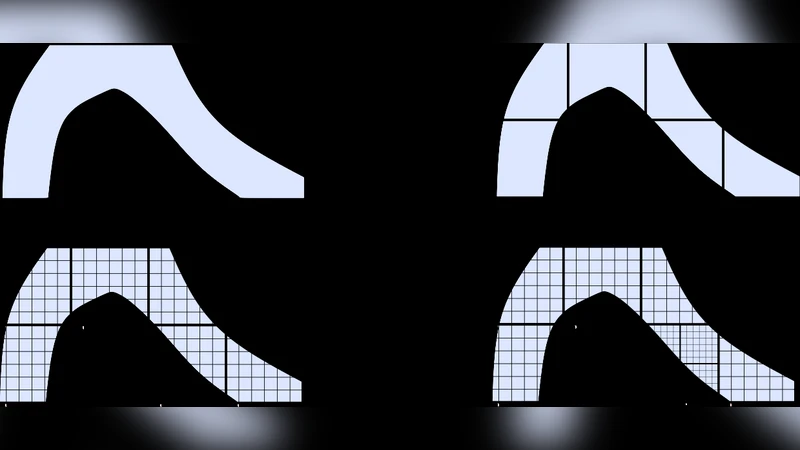

The paper presents waLBerla, an open-source, block-structured high-performance framework designed to enable large-scale multiphysics simulations on modern supercomputers. At its core lies a domain partitioning scheme called BlockForest, which decomposes the global computational domain into cuboidal blocks and enforces a 2:1 balance: neighboring blocks may differ by at most one refinement level. These blocks are organized as leaves of a forest of octrees, allowing each MPI rank to store only information about its own blocks and their immediate neighbors. With this distributed data layout, per-process memory consumption does not grow with the total number of processes, which supports extreme scalability.
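The 2:1 constraint on neighboring blocks can be illustrated with a small sketch. This is not waLBerla's BlockForest API: the tuple encoding `(level, x, y, z)`, the helper names, and the choice to count face, edge, and corner contact as "neighboring" are all assumptions made for illustration, and the all-pairs check is only sensible for tiny examples.

```python
from itertools import combinations

# Hypothetical encoding: a leaf block is (level, x, y, z), where x, y, z are
# integer coordinates on the uniform grid of that refinement level.

def refine(leaves, block):
    """Replace one leaf by its eight octree children."""
    level, x, y, z = block
    children = [(level + 1, 2 * x + dx, 2 * y + dy, 2 * z + dz)
                for dx in (0, 1) for dy in (0, 1) for dz in (0, 1)]
    return [b for b in leaves if b != block] + children

def bounds(block, max_level):
    """Axis-aligned box of a block, measured in finest-level cells."""
    level, x, y, z = block
    size = 1 << (max_level - level)
    lo = (x * size, y * size, z * size)
    hi = tuple(c + size for c in lo)
    return lo, hi

def are_neighbors(a, b, max_level):
    """True if the closed boxes touch (face, edge, or corner contact)."""
    (alo, ahi), (blo, bhi) = bounds(a, max_level), bounds(b, max_level)
    return all(alo[i] <= bhi[i] and blo[i] <= ahi[i] for i in range(3))

def is_two_to_one_balanced(leaves):
    """Check that touching leaves differ by at most one refinement level."""
    max_level = max(b[0] for b in leaves)
    return all(abs(a[0] - b[0]) <= 1
               for a, b in combinations(leaves, 2)
               if are_neighbors(a, b, max_level))
```

Refining one corner block of a uniformly split root keeps the forest balanced; refining one of its children a second time, next to a still-coarse block, violates the constraint, which is the situation a real implementation would resolve by refining the coarse neighbor as well.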

The framework supports pure MPI as well as hybrid MPI/OpenMP parallelism, and it provides a layered communication infrastructure. The lowest layer offers thin C++ wrappers around MPI calls; on top of that, a “buffered MPI” layer implements flexible serialization using C++ stream‑like operators, enabling safe packing and unpacking of native types, STL containers, and waLBerla‑specific data structures. Metadata attached to buffers allows runtime type checking and detection of mismatched messages, reducing programmer errors in high‑performance communication patterns.
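The idea of stream-style packing with attached type metadata can be sketched as follows. This is a simplified Python analogue and not waLBerla's C++ buffer classes: the class names `SendBuffer` and `RecvBuffer`, the one-byte tags, and the `read(kind)` interface are hypothetical, and only `int`, `float`, and lists are handled.

```python
import struct

# Hypothetical one-byte type tags and struct formats (little-endian).
TAGS = {int: (b"i", "<q"), float: (b"d", "<d")}
LIST_TAG = b"l"

class SendBuffer:
    """Packs values with `<<`, prefixing each with a type tag."""
    def __init__(self):
        self._data = bytearray()

    def __lshift__(self, value):
        if isinstance(value, list):
            self._data += LIST_TAG + struct.pack("<q", len(value))
            for item in value:
                self << item          # each element carries its own tag
            return self
        if type(value) not in TAGS:
            raise TypeError(f"unsupported type: {type(value).__name__}")
        tag, fmt = TAGS[type(value)]
        self._data += tag + struct.pack(fmt, value)
        return self

    def to_bytes(self):
        return bytes(self._data)

class RecvBuffer:
    """Unpacks values and checks that each tag matches the requested type."""
    def __init__(self, data):
        self._data = data
        self._pos = 0

    def _expect(self, tag):
        found = self._data[self._pos:self._pos + 1]
        if found != tag:
            raise TypeError(f"expected tag {tag!r}, found {found!r}")
        self._pos += 1

    def _unpack(self, fmt):
        (value,) = struct.unpack_from(fmt, self._data, self._pos)
        self._pos += struct.calcsize(fmt)
        return value

    def read(self, kind):
        tag, fmt = TAGS[kind]
        self._expect(tag)
        return self._unpack(fmt)

    def read_list(self, kind):
        self._expect(LIST_TAG)
        count = self._unpack("<q")
        return [self.read(kind) for _ in range(count)]
```

A sender would write `buf << 42 << 2.5 << [1, 2, 3]` and hand `buf.to_bytes()` to the message layer; a receiver that asks for the wrong type at any position gets a `TypeError` instead of silently misinterpreting bytes. The tags cost one byte per value, so a production design might make them a debug-only option.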

Data structures are built around high‑performance fields and buffers. Fields are three‑dimensional arrays laid out to maximize SIMD and vector‑instruction utilization (e.g., AVX‑512). Accessors are heavily templated and inlined, ensuring zero‑overhead abstraction. Buffers support both CPU and GPU back‑ends; the same field operations can be executed on CUDA or HIP devices without changing user code, providing performance portability across heterogeneous architectures.
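Why memory layout matters for vectorization can be seen from the index arithmetic alone. The sketch below compares two common ways to linearize a field with `nf` values per cell; the function names and the `shape` tuple are assumptions for illustration and do not reproduce waLBerla's field classes.

```python
def index_aos(x, y, z, f, shape):
    """Array-of-structures: all nf values of one cell are contiguous."""
    nx, ny, nz, nf = shape
    return ((z * ny + y) * nx + x) * nf + f

def index_soa(x, y, z, f, shape):
    """Structure-of-arrays: one contiguous 3D array per component f."""
    nx, ny, nz, nf = shape
    return ((f * nz + z) * ny + y) * nx + x

def x_stride(index_fn, shape):
    """Distance in memory between x and x+1 for a fixed component."""
    return index_fn(1, 0, 0, 0, shape) - index_fn(0, 0, 0, 0, shape)
```

For a field with 19 values per cell, the x-stride is 19 in the array-of-structures layout and 1 in the structure-of-arrays layout. A unit stride along the innermost loop lets a vector unit load several neighboring cells of the same component with one contiguous load, which is the usual argument for the second layout in stencil kernels.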

A standout feature is the meta-programming based code generation pipeline. Users describe numerical methods (e.g., lattice Boltzmann collision operators, discrete element contact laws, generic PDE stencils) at a symbolic level. The framework translates these high-level specifications into optimized kernels for CPUs and GPUs. This approach separates algorithmic intent from low-level implementation, allowing rapid prototyping of new physics while retaining near-hand-tuned performance.
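The principle of generating a specialized kernel from a declarative description can be shown in a few lines. This toy generator is only an illustration of the idea: it emits Python source for a one-dimensional stencil and compiles it with `exec`, whereas the framework targets compiled CPU and GPU code. The function name `generate_kernel` and the dictionary format for the stencil are hypothetical.

```python
def generate_kernel(stencil, name="kernel"):
    """Emit and compile a 1D stencil kernel.

    `stencil` maps integer offsets to weights, e.g. {-1: 1.0, 0: -2.0, 1: 1.0}.
    Returns the compiled function and its generated source text.
    """
    ghost = max(abs(offset) for offset in stencil)
    # The weights are baked into the source as literals, so the generated
    # loop body contains no lookups into the stencil description.
    terms = " + ".join(f"({weight!r}) * src[i + ({offset})]"
                       for offset, weight in sorted(stencil.items()))
    source = (
        f"def {name}(src, dst):\n"
        f"    for i in range({ghost}, len(src) - {ghost}):\n"
        f"        dst[i] = {terms}\n"
    )
    namespace = {}
    exec(source, namespace)
    return namespace[name], source

# Second-difference (discrete Laplacian) stencil as the symbolic input.
laplace, laplace_source = generate_kernel({-1: 1.0, 0: -2.0, 1: 1.0})
```

Printing `laplace_source` shows a plain loop with the three weighted reads written out. The design point is that the user edits only the stencil description, while loop structure, ghost-layer width, and constant folding are decided by the generator.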

Adaptive mesh refinement (AMR) and load balancing are tightly integrated. Users register callbacks that mark blocks for refinement or coarsening based on problem‑specific criteria such as vorticity magnitude or particle count. A lightweight proxy BlockForest, containing only topological information and estimated workloads, is constructed. The proxy is then redistributed using space‑filling curves (Morton, Hilbert) or external libraries like ParMETIS. Because the proxy carries no simulation data, the refinement‑balancing cycle incurs minimal overhead. After redistribution, the real BlockForest is updated, and data migration is performed automatically.
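Balancing along a space-filling curve can be sketched with the Morton (Z-order) variant: blocks are sorted by an interleaved-bit key, and the resulting one-dimensional sequence is cut into pieces of roughly equal estimated workload. The function names, the `(coords, weight)` input format, and the midpoint rule for cutting are assumptions for illustration, not the framework's actual balancing routine.

```python
def morton_key(x, y, z, bits=10):
    """Interleave the low `bits` bits of x, y, z into one Z-order key."""
    key = 0
    for i in range(bits):
        key |= ((x >> i) & 1) << (3 * i)
        key |= ((y >> i) & 1) << (3 * i + 1)
        key |= ((z >> i) & 1) << (3 * i + 2)
    return key

def partition_along_curve(blocks, num_ranks):
    """Assign blocks to ranks by cutting the Morton order by weight.

    `blocks` is a list of ((x, y, z), weight) pairs; returns {coords: rank}.
    """
    ordered = sorted(blocks, key=lambda block: morton_key(*block[0]))
    total = sum(weight for _, weight in ordered)
    assignment = {}
    running = 0.0
    for coords, weight in ordered:
        # The midpoint of the block's weight interval selects the rank.
        midpoint = running + 0.5 * weight
        rank = min(int(midpoint * num_ranks / total), num_ranks - 1)
        assignment[coords] = rank
        running += weight
    return assignment
```

Because only coordinates and weights are needed, this step can run on lightweight proxy data, in line with the proxy-based cycle described above. Blocks that are close on the curve tend to be close in space, so each rank receives a reasonably compact set of blocks.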

The paper showcases several application domains. The Lattice Boltzmann Method (LBM) module provides a suite of collision models (BGK, TRT, MRT) and boundary conditions, achieving strong scaling on hundreds of thousands of cores. The Rigid Particle Dynamics (RPD) module implements hard-contact and soft-contact DEM algorithms, sharing the same block infrastructure as LBM. Coupling LBM and RPD within one framework enables fluid-particle simulations such as sedimentation, fluidized beds, and particulate flows without sacrificing performance. Additional modules for generic PDE solvers, phase-field models, and earth-mantle dynamics illustrate the framework's extensibility.
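Of the collision models listed, BGK is the simplest, and a single-cell version fits in a short sketch. The code below uses the standard D2Q9 lattice with the usual second-order equilibrium; it is a textbook formulation in plain Python, not the framework's optimized kernel, and the function names are chosen for illustration.

```python
# Standard D2Q9 lattice: rest direction, four axis directions, four diagonals.
WEIGHTS = [4 / 9] + [1 / 9] * 4 + [1 / 36] * 4
VELOCITIES = [(0, 0), (1, 0), (0, 1), (-1, 0), (0, -1),
              (1, 1), (-1, 1), (-1, -1), (1, -1)]

def moments(f):
    """Density and velocity of one cell from its nine populations."""
    rho = sum(f)
    ux = sum(fi * cx for fi, (cx, _) in zip(f, VELOCITIES)) / rho
    uy = sum(fi * cy for fi, (_, cy) in zip(f, VELOCITIES)) / rho
    return rho, ux, uy

def equilibrium(rho, ux, uy):
    """Second-order equilibrium distribution in lattice units."""
    usq = ux * ux + uy * uy
    feq = []
    for w, (cx, cy) in zip(WEIGHTS, VELOCITIES):
        cu = cx * ux + cy * uy
        feq.append(w * rho * (1.0 + 3.0 * cu + 4.5 * cu * cu - 1.5 * usq))
    return feq

def bgk_collide(f, omega):
    """Relax every population toward equilibrium with rate omega."""
    feq = equilibrium(*moments(f))
    return [fi + omega * (fe - fi) for fi, fe in zip(f, feq)]
```

The collision conserves density and momentum by construction, since the equilibrium is built from the same moments as the incoming populations. TRT and MRT follow the same pattern but relax different combinations of populations at different rates.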

Software quality is ensured through an extensive continuous integration (CI) pipeline. The CI system automatically builds and tests waLBerla with multiple compilers (GCC, Clang, Intel), operating systems (Linux, macOS), and hardware targets (CPU, NVIDIA GPU, AMD GPU). The test suite includes unit tests, integration tests, and performance regression benchmarks, with results visualized on a dashboard for immediate feedback. This automated testing helps catch correctness problems across diverse environments and guards against performance regressions as the code evolves.

User productivity is further enhanced by a Python interface and a graphical user interface (GUI). The Python bindings expose configuration handling, pre‑ and post‑processing utilities, and enable seamless interaction with NumPy, facilitating data analysis and coupling with machine‑learning workflows. The GUI assists in setting up simulations, visualizing domain partitions, and monitoring runtime statistics.

In summary, waLBerla combines a flexible block-structured domain decomposition, sophisticated meta-programming code generation, adaptive refinement, and robust parallel communication to deliver a high-performance, portable, and extensible platform for multiphysics simulations. Its design addresses three key challenges of modern HPC (scalability, performance portability, and software maintainability), making it a valuable tool for researchers tackling complex, large-scale computational problems.
