FMore: An Incentive Scheme of Multi-dimensional Auction for Federated Learning in MEC

Federated learning coupled with Mobile Edge Computing (MEC) is considered one of the most promising solutions for AI-driven service provision. Many studies address federated learning from the performance and security perspectives, but they neglect the incentive mechanism. In MEC, edge nodes may be unwilling to participate in learning voluntarily, and they differ in the multi-dimensional resources they can provide. Both factors can degrade the performance of federated learning. In addition, edge nodes in MEC favor lightweight schemes. These features require an incentive mechanism designed specifically for MEC. In this paper, we present FMore, an incentive mechanism based on a multi-dimensional procurement auction with K winners. FMore is lightweight and incentive compatible, and it encourages more high-quality, low-cost edge nodes to participate in learning, which ultimately improves the performance of federated learning. We also present theoretical results on the Nash equilibrium strategy for edge nodes and use expected utility theory to provide guidance to the aggregator. Both extensive simulations and real-world experiments demonstrate that the proposed scheme can effectively reduce the number of training rounds and drastically improve model accuracy for challenging AI tasks.

💡 Research Summary

The paper “FMore: An Incentive Scheme of Multi-dimensional Auction for Federated Learning in MEC” addresses a critical barrier to the practical deployment of federated learning (FL) in Mobile Edge Computing (MEC) environments: the lack of incentive for edge nodes to participate. While the combination of FL and MEC is a promising paradigm for privacy-preserving, distributed AI model training, existing research has primarily focused on performance optimization and security, neglecting the economic and resource-related motivations of rational edge device owners.

The core problem is twofold. First, edge nodes are reluctant to voluntarily contribute their computational, communication, and battery resources to the collaborative training process without compensation. Second, these resources are multi-dimensional (e.g., data quality, CPU cycles, bandwidth) and heterogeneous across nodes, which can severely degrade FL performance if not managed properly. The proposed solution, FMore, is an incentive framework based on a multi-dimensional procurement auction model extended for selecting K winners per training round.

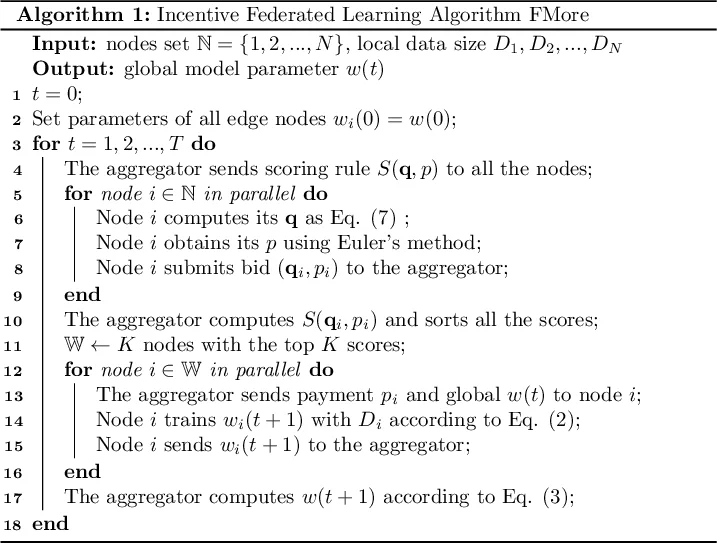

In FMore’s design, the aggregator (buyer) initiates each FL round by broadcasting a scoring rule, S(q, p) = s(q) - p, where q is a vector representing the qualities of multiple resources a node offers, and p is the payment the node requests. Edge nodes (sellers), each with a private cost parameter θ and a cost function c(q, θ), then strategically submit their bids (q, p) to maximize their profit π = p - c(q, θ). The aggregator selects the K nodes with the highest scores to form the winner set for that round, followed by the standard FL steps of model distribution, local training, and global aggregation.
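To make one round concrete, here is a minimal Python sketch of the aggregator's side. It computes S(q, p) = s(q) - p for each submitted bid and keeps the top K. The `Bid` type, the helper names, the linear s(q), the cost function, and all numbers are hypothetical illustrations, not FMore's implementation. The later model distribution, local training, and aggregation steps are omitted.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

ScoreFn = Callable[[Sequence[float]], float]


@dataclass(frozen=True)
class Bid:
    node_id: str
    quality: Tuple[float, ...]  # q: offered quality for each resource dimension
    payment: float              # p: payment the node asks for


def score(bid: Bid, s: ScoreFn) -> float:
    """Aggregator's scoring rule S(q, p) = s(q) - p."""
    return s(bid.quality) - bid.payment


def select_winners(bids: List[Bid], s: ScoreFn, k: int) -> List[Bid]:
    """Keep the K highest-scoring bids; ties are broken by node_id for determinism."""
    return sorted(bids, key=lambda b: (-score(b, s), b.node_id))[:k]


def profit(bid: Bid, cost: Callable[[Sequence[float], float], float], theta: float) -> float:
    """A winning node's profit pi = p - c(q, theta)."""
    return bid.payment - cost(bid.quality, theta)


# Hypothetical example with two resource dimensions (e.g. data quality, bandwidth).
def s_linear(q: Sequence[float]) -> float:
    return 2.0 * q[0] + 1.0 * q[1]


def linear_cost(q: Sequence[float], theta: float) -> float:
    # Hypothetical cost: proportional to the total quality offered.
    return theta * sum(q)


bids = [
    Bid("A", (3.0, 1.0), 4.0),  # score 7.0 - 4.0 = 3.0
    Bid("B", (1.0, 1.0), 1.0),  # score 3.0 - 1.0 = 2.0
    Bid("C", (2.0, 2.0), 5.5),  # score 6.0 - 5.5 = 0.5
    Bid("D", (4.0, 0.0), 4.0),  # score 8.0 - 4.0 = 4.0
]
winners = select_winners(bids, s_linear, k=2)
print([b.node_id for b in winners])  # ['D', 'A']
```

Node C offers more total resources than node B, but it asks for so much payment that its score falls below B's. The scoring rule trades quality against price instead of ranking nodes on quality alone.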

The paper’s key theoretical contributions are providing a Nash equilibrium bidding strategy for the edge nodes and guidance for the aggregator based on expected utility theory. The authors prove that under reasonable assumptions (e.g., single-crossing conditions), a strategy where nodes bid truthfully according to their private type θ constitutes a Bayesian Nash equilibrium, ensuring the scheme’s Incentive Compatibility (IC). This means it is futile for a node to misreport its resource capabilities. The framework is flexible, allowing the scoring function s(q) to take various forms (e.g., linear for substitutable resources, min for complementary resources, Cobb-Douglas) to match different aggregator utility preferences.
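The scoring-function families named above, and one way a node might choose the quality part of its bid, can be sketched as follows. The sketch assumes the standard multi-dimensional procurement auction result that, in equilibrium, a node of type θ offers the quality q*(θ) that maximizes s(q) - c(q, θ). The quadratic cost function, the weights, and the grid search are hypothetical choices made for illustration. The equilibrium payment is not reproduced here.

```python
import itertools
import math
from typing import Callable, Sequence, Tuple

ScoreFn = Callable[[Sequence[float]], float]


def linear_score(alpha: Sequence[float]) -> ScoreFn:
    """Perfect substitutes: s(q) = sum_i alpha_i * q_i."""
    return lambda q: sum(a * x for a, x in zip(alpha, q))


def min_score(alpha: Sequence[float]) -> ScoreFn:
    """Perfect complements: s(q) = min_i alpha_i * q_i."""
    return lambda q: min(a * x for a, x in zip(alpha, q))


def cobb_douglas_score(alpha: Sequence[float]) -> ScoreFn:
    """Cobb-Douglas: s(q) = prod_i q_i ** alpha_i."""
    return lambda q: math.prod(x ** a for a, x in zip(alpha, q))


def quadratic_cost(q: Sequence[float], theta: float) -> float:
    """Hypothetical cost c(q, theta) = theta * sum_i q_i^2 (larger theta = less efficient node)."""
    return theta * sum(x * x for x in q)


def best_quality(s: ScoreFn, cost, theta: float,
                 levels: Sequence[float], dims: int) -> Tuple[float, ...]:
    """Grid search for q*(theta) = argmax_q [s(q) - c(q, theta)] over a discrete grid."""
    return max(itertools.product(levels, repeat=dims),
               key=lambda q: s(q) - cost(q, theta))


levels = [0.5 * i for i in range(9)]  # candidate quality levels 0.0, 0.5, ..., 4.0
s = linear_score((2.0, 1.0))
q_efficient = best_quality(s, quadratic_cost, 0.5, levels, 2)
q_costly = best_quality(s, quadratic_cost, 1.0, levels, 2)
print(q_efficient, q_costly)  # (2.0, 1.0) (1.0, 0.5)
print(min_score((1.0, 1.0))((2.0, 1.0)))  # 1.0
print(cobb_douglas_score((0.5, 0.5))((4.0, 1.0)))  # 2.0
```

With this cost function and a linear s(q), the analytic optimum is q_i = α_i / (2θ). The less efficient node (θ = 1.0) therefore offers half the quality of the more efficient one (θ = 0.5), which is the monotone behavior a single-crossing assumption is meant to guarantee.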

Extensive evaluations demonstrate FMore’s effectiveness. Simulations using CNN and LSTM models on datasets like MNIST, CIFAR-10, and Sentiment140 show that FMore reduces the number of training rounds required for convergence by an average of 51.3% and improves final model accuracy by up to 28% compared to random participant selection (RandFL). A real-world implementation with 31 physical edge nodes and one aggregator confirmed these results, showing a 44.9% improvement in model accuracy and a 38.4% reduction in total training time. These gains stem from FMore's selection of high-quality, low-cost nodes, which accelerates convergence and yields better models. The paper concludes that FMore is a lightweight, incentive-compatible mechanism that solves a crucial practical problem for federated learning in MEC, paving the way for its wider adoption.
