Underwater Color Restoration Using U-Net Denoising Autoencoder

Visual inspection of underwater structures by vehicles, e.g. remotely operated vehicles (ROVs), plays an important role in scientific, military, and commercial sectors. However, the automatic extraction of information using software tools is hindered…

Authors: Yousif Hashisho, Mohamad Albadawi, Tom Krause

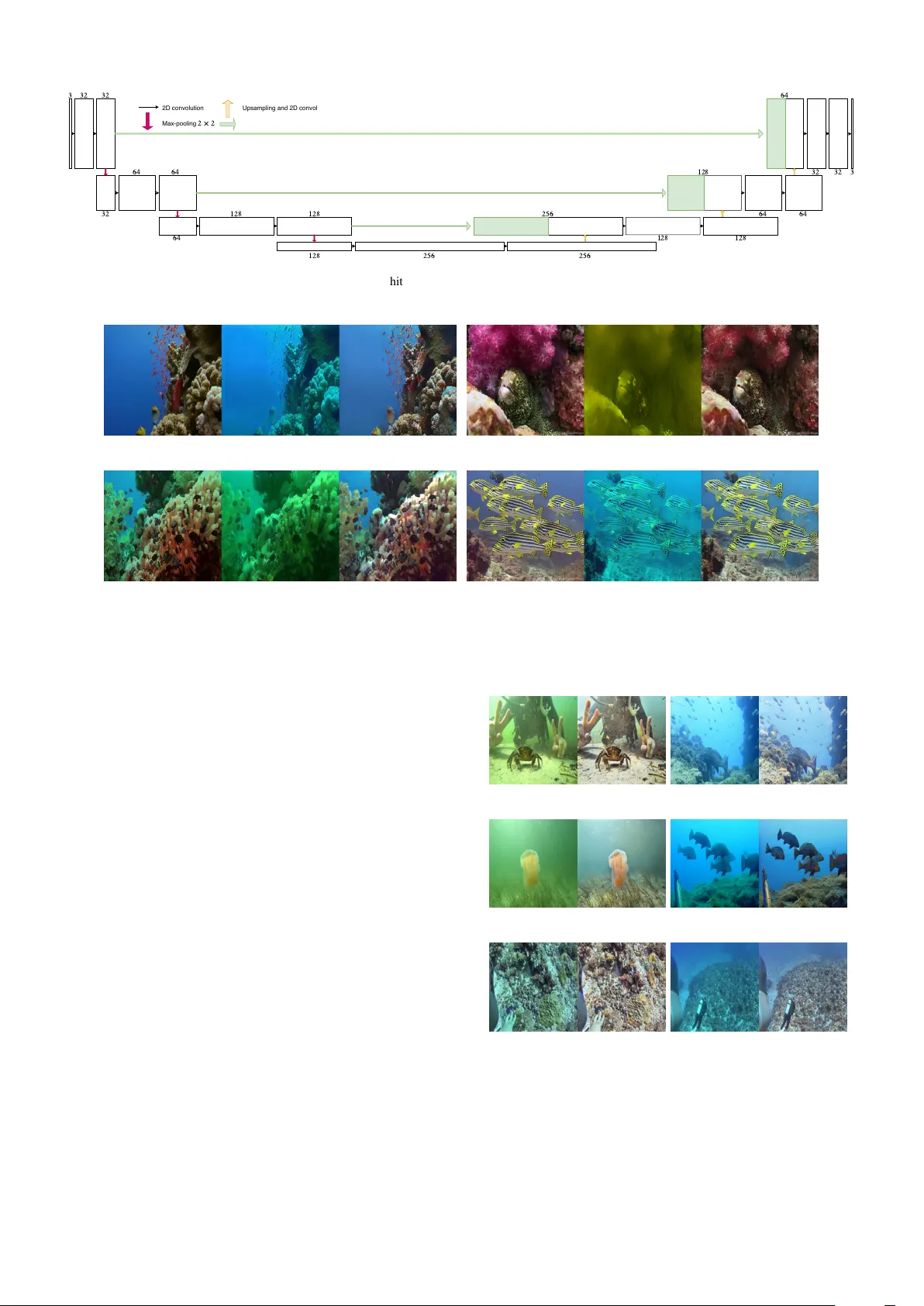

Yousif Hashisho, Department of Maritime Graphics, Fraunhofer Institute for Computer Graphics Research (IGD), Rostock, Germany, yousif.hashisho@igd-r.fraunhofer.de

Tom Krause, Department of Maritime Graphics, Fraunhofer Institute for Computer Graphics Research (IGD), Rostock, Germany, tom.krause@igd-r.fraunhofer.de

Mohamad Albadawi, Department of Maritime Graphics, Fraunhofer Institute for Computer Graphics Research (IGD), Rostock, Germany, mohamad.albadawi@igd-r.fraunhofer.de

Uwe Freiherr von Lukas, Department of Maritime Graphics, Fraunhofer Institute for Computer Graphics Research (IGD), and Department of Computer Science, University of Rostock, Rostock, Germany, uwe.freiherr.von.lukas@igd-r.fraunhofer.de

Abstract

Visual inspection of underwater structures by vehicles, e.g. remotely operated vehicles (ROVs), plays an important role in scientific, military, and commercial sectors. However, the automatic extraction of information using software tools is hindered by the characteristics of water, which degrade the quality of captured videos. As a contribution to restoring the color of underwater images, the Underwater Denoising Autoencoder (UDAE) model is developed using a denoising autoencoder with a U-Net architecture. The proposed network takes both accuracy and computation cost into consideration to enable real-time implementation of underwater visual tasks using an end-to-end autoencoder network. The perception of underwater vehicles is improved by reconstructing captured frames, which leads to better performance in underwater tasks. Related learning methods use generative adversarial networks (GANs) to generate color-corrected underwater images, and to our knowledge this paper is the first to deal with a single autoencoder capable of producing the same or better results.
Moreover, image pairs are constructed for training the proposed network, since it is hard to obtain such a dataset from underwater scenery. Finally, the proposed model is compared to a state-of-the-art method.

Index Terms: autoencoders, underwater image, image restoration, Generative Adversarial Networks, real-time

I. INTRODUCTION

Marine robots, such as remotely operated vehicles (ROVs), are being increasingly used in the scientific, military, and commercial sectors. They are critical in collecting data and performing certain underwater operations. Due to safety and health concerns, human intervention can be risky and limited when executing underwater missions [1]. Thus, underwater vehicles are equipped with camera systems for performing numerous vision tasks. For instance, Choi et al., 2017 [2] operated an ROV manually for inspecting harbour structures and acquiring high-quality videos. Manjunatha et al., 2018 [3] built a robot equipped with a high-definition camera for visual inspection at a specified depth in a water body.

However, the automatic extraction of information using software tools is hindered by underwater image degradation caused by the poor water medium and the behaviour of light. Contrast loss and color distortion affect the algorithms and ultimately the performance of the vehicle in gathering and processing data. An image enhancement technique is therefore needed to support vehicle navigation by a human operator and to facilitate underwater tasks. Furthermore, the processing speed should be taken into consideration for a real-time implementation.

This paper proposes the Underwater Denoising Autoencoder (UDAE), a deep learning network based on a single denoising autoencoder [4] that uses U-Net [5] as its CNN architecture, for improving the quality of underwater imagery and video material. The contributions presented in this paper can be summarized as follows:

• The UDAE network is proposed, which is specialized in underwater color restoration.
• A faster processing speed than that of the state-of-the-art method is achieved, which improves the real-time capability.
• A new dataset combining different underwater scenarios (turbidity, depth, temperature, attenuation type, etc.) is synthesized for training the proposed network. The synthetic dataset is generated using a generative deep learning method.
• The proposed fully end-to-end model generalizes well to real underwater images with different degradation types.

The rest of the paper is organized as follows: Section II discusses relevant work; Section III describes the experiments and the methods followed to restore underwater images; Section IV presents corrected underwater images and the performance of the proposed network; finally, Section V summarizes the paper.

II. RELATED WORK

Numerous attempts have been made to restore the color of raw underwater images using different image improvement methods. These methods fall into two categories [6]: hardware-based methods [7], [8] and software-based methods [9]–[11]. Software-based methods invert the formation of underwater images and construct physical models for image enhancement, in addition to modifying the image pixel values. Hardware-based methods capture multiple images with the help of polarization filters, stereo setups, or specialized hardware devices and use the additional information obtained [6], [12]. Both categories show good performance; however, they are limited to certain scenarios and do not suit the variety of underwater lighting conditions. They are also expensive to implement, since some of them use specialized sensors and multiple images for the enhancement.

Recent approaches have focused on Generative Adversarial Networks (GANs) as a new way of achieving better results. When improving underwater imagery using deep learning models (e.g. GANs), image pairs consisting of clean and distorted underwater images are needed for training the model.
It is hard to capture clean underwater images free of light attenuation and other underwater effects. Thus, several works have synthesized training images instead. Li et al., 2018 [13] used two types of networks: the Water Generative Adversarial Network (WaterGAN) for generating realistic underwater images and the Underwater Image Restoration Network for correcting the color. The generator of WaterGAN models the formation of an underwater image in three stages: attenuation, scattering, and camera model. The learned generator is then used to generate training image samples for the color restoration network. In that network, a relative depth map is first estimated and reconstructed from the input image, and both are used for color restoration. The authors showed efficiency for real-time applications; however, their network is limited to certain appearances of degradation because of the way the images are generated. Figure 1 shows the images that were used for training the network, which do not reflect underwater structures. The clean images consist of in-air images, whereas the corruption process is limited to certain degradation types (e.g. a greenish mask).

Fig. 1. The synthesized underwater images by WaterGAN. The color restoration might be limited to certain types of color degradation appearance. [13]

As an improvement over the aforementioned data generation method, Li et al., 2018 [14] and Fabbri et al., 2018 [15] used CycleGAN [16] for generating underwater images. The synthesized data was then used for training their color restoration models. These deep learning methods showed good performance in restoring the color. However, in certain scenarios they led to an unrealistic color correction of underwater images, as in Li et al., 2018 [14]. The training dataset lacked the true colors of underwater structures such as coral reefs and fish.
Furthermore, a drawback of the color restoration model of Fabbri et al., 2018 [15], the Underwater Generative Adversarial Network (UGAN), is its limited efficiency for real-time implementation with high-resolution images, as the model's architecture makes it computationally costly.

We follow the same procedure as Fabbri et al., 2018 [15] for generating synthetic images. However, a different set of images is used for training CycleGAN. Fabbri et al., 2018 [15] collected clear underwater images and style-transferred the characteristics of degradation from distorted underwater images to them. Our generated dataset is composed of various underwater locations with different degradation types, leading to better generalization than their network.

III. METHODOLOGY

Two important aspects of building the UDAE model are discussed. The first is the methodology followed to generate the underwater dataset for training the network. The second is the architecture of the UDAE model and the benefits of using a denoising autoencoder.

A. Dataset

A dataset is gathered and filtered to be used for generating the image pairs. This section is divided into two subsections. The first discusses the collection of underwater images, while the second discusses data generation for obtaining underwater image pairs.

1) Data Collection: To train a network capable of restoring the true underwater color from distorted images, clear images without light scattering were gathered. These images were taken from different sources on the Internet. Because clear images are hard to get, they were obtained from:

• Large fish aquariums such as the ones in museums and touristic towers.
• Underwater images that were captured at a close distance to the structures with artificial light exposure.
• Various images and frames taken from videos that were enhanced and processed by commercial software tools.
The clean images were selected based on the contrast loss and degradation present in underwater images. After that, distorted images with different attenuation types were gathered from various locations. Some of them were captured by Fraunhofer IGD in the Baltic Sea, while the others were gathered from the Internet and correspond to different locations, depths, temperatures, and other degradation factors.

2) Image Pairs Data Generation: In total, 15,131 clear and distorted images were collected. The collected images were then filtered, based on a subjective quality evaluation, into two categories: A (clear), containing 7,055 images, and B (distorted), containing 8,076 images. The two categories are shown in Figure 2. All images were resized to 512 × 512 using the area interpolation method.

After suitable images had been gathered, the CycleGAN generative model was used for style transfer. It uses an adversarial loss for learning a mapping from a source domain X to a target domain Y (G : X → Y) [16]. It was used to transfer the underwater style from the B images to the A images, and the result was the category A′ (Figure 3). The image pairs in A and A′ were then used to train the autoencoder. Training the CycleGAN model took around 9 days on 4 NVIDIA TITAN X GPU devices. Afterwards, 7,055 image pairs were generated, of which 5,194 remained after removing failure cases. The failure cases are due to limitations in the style transfer of CycleGAN.

Fig. 2. Samples of images used for the style transfer. (a) A (clean image samples). (b) B (distorted image samples).

Fig. 3. Samples of the UDAE dataset after the generation of image pairs. (a) A (clean image samples). (b) A′ (distorted image samples).

B. Proposed Network

A denoising autoencoder is used for restoring the color of underwater images. We consider the problem of color restoration as the reconstruction of a corrupted input.
Let $x$ be the clean image and $\tilde{x}$ the version of it corrupted by the style transfer $c(\tilde{x} \mid x)$. We then try to reconstruct a repaired input by learning a decoding distribution $p_\theta(x \mid z)$ from an encoded distribution $q_\phi(z \mid \tilde{x})$. Denoising autoencoders are expected to capture implicit invariances in the data and extract the key features from the input images [4], [17]. U-Net is used as the CNN architecture due to its efficiency in computation and training (the parameters learn well even with a small dataset), in addition to its ability to propagate context information to higher-resolution layers [5].

The proposed UDAE network is illustrated in Figure 4. The same kernel sizes and layers were used as in U-Net [5]. First, a distorted RGB underwater image is fed into the encoder of the denoising autoencoder. In the encoder, subsequent convolutions gradually downsample the image to a latent variable. In each downsampling stage, 3 × 3 2-D convolutions are used twice, followed by a rectified linear unit (ReLU) and a 2 × 2 max-pooling with a stride of 2. The number of feature maps is doubled in each stage. In the decoder, upsampling is done sequentially from the latent variable back to the original input size. After each upsampling, the tensor (image) is concatenated with the output of the corresponding symmetric layer on the encoder side, followed by 3 consecutive convolutions. The feature maps are reduced gradually to 3 channels. The concatenation of the layer outputs combines the contextual information from the downsampling step [5].

The reconstructed image should bear resemblance to the clean image. Therefore, inspired by the work of Zhao et al. [18], the Multi-Scale Structural SIMilarity (MS-SSIM) index and the absolute value ($L_1$) loss functions were used. The loss function can be expressed as:

$$\mathcal{L} = \alpha \cdot \mathcal{L}_{\text{MS-SSIM}} + (1 - \alpha) \cdot \mathcal{L}_{L_1}, \tag{1}$$

where $\mathcal{L}$ represents the loss of the reconstructed image and $\alpha$ is set to 0.80, the value that gave the best reconstruction over several experiments. The objective of the autoencoder is to minimize the loss function. Weight decay is omitted in the proposed network, since the noise present in the input images has a regularization effect similar to that of weight decay, with faster training dynamics [19]. The TensorFlow framework was used for the training.

IV. RESULTS AND DISCUSSION

The training of UDAE took around 1 day on an NVIDIA Quadro M5000. The network was then tested on 1,040 images with a resolution of 512 × 512. The average time per image was 0.01601 seconds (62.45 fps) on an NVIDIA RTX 2080 Ti. The selected loss function was capable of preserving details when reconstructing the image: SSIM is sensitive to various types of image degradation [20], whereas $L_1$ preserves colors and luminance [18]. The proposed network produced good results, as shown in Figure 5, at a speed suitable for real-time implementation. In certain scenarios where the clean image is only partially clear, such as the one in subfigure 5c, the reconstructed image showed a better recovery from the distorted color than the clean image itself. The reason is that the network learned general encoding and decoding distributions capable of reconstructing color-recovered images.

Additionally, the UDAE network was tested on real data, namely underwater videos extracted from YouTube, to evaluate its generalization ability.

Fig. 4. Architecture of the proposed UDAE model. Legend: 2D convolution; 2 × 2 max-pooling; upsampling and 2D convolution; concatenation. Feature-map labels in the figure: 3, 32, 64, 128, 256.

Fig. 5. Testing images (a) to (d) with their clean and reconstructed versions using UDAE. The images from left to right correspond to the clean image, the input image, and the output image, respectively.
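The weighting in Eq. (1) can be illustrated with a small, dependency-free sketch. It is not the training code used for UDAE: as a loudly stated assumption, a single-scale SSIM computed once over the whole image stands in for MS-SSIM, images are flat lists of values in [0, 1], and the function names `l1_loss`, `global_ssim`, and `combined_loss` are hypothetical.

```python
# Sketch of Eq. (1): L = alpha * L_MS-SSIM + (1 - alpha) * L_L1.
# ASSUMPTION: a single-scale, whole-image SSIM replaces MS-SSIM so that the
# example needs no external library. Images are flat lists of floats in [0, 1].

def l1_loss(clean, recon):
    """Mean absolute error between two equally sized pixel lists."""
    return sum(abs(a - b) for a, b in zip(clean, recon)) / len(clean)


def global_ssim(clean, recon, c1=0.01 ** 2, c2=0.03 ** 2):
    """SSIM evaluated once over the whole image (no window, one scale)."""
    n = len(clean)
    mu_x = sum(clean) / n
    mu_y = sum(recon) / n
    var_x = sum((a - mu_x) ** 2 for a in clean) / n
    var_y = sum((b - mu_y) ** 2 for b in recon) / n
    cov = sum((a - mu_x) * (b - mu_y) for a, b in zip(clean, recon)) / n
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return numerator / denominator


def combined_loss(clean, recon, alpha=0.80):
    """Weighted sum of the structural term (1 - SSIM) and the L1 term."""
    structural_term = 1.0 - global_ssim(clean, recon)
    return alpha * structural_term + (1.0 - alpha) * l1_loss(clean, recon)
```

With `alpha=0.80` the structural term dominates, while the remaining 20% of the weight goes to the L1 term, which the text credits with preserving colors and luminance. In a TensorFlow setting, a multi-scale term would more likely come from a library routine such as `tf.image.ssim_multiscale`; whether the authors used it is not stated in the paper.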
Figure 6 shows samples of the reconstructed images from the following videos: Baltic Sea (https://www.youtube.com/watch?v=Y-SVGO0r6n0), Scuba Diving (https://www.youtube.com/watch?v=OSdrb1XNXZI), and Fish Hunting (https://www.youtube.com/watch?v=aLt7aGFcVkM). The color of the input underwater images with different degradation types was restored and the details were preserved.

Fig. 6. Samples of the reconstructed frames of the color restoration task extracted from YouTube videos. UDAE performs well on real data even though it was trained on a synthetic dataset. (a) Case 1, "Baltic Sea" video. (b) Case 2, "Fish Hunting" video. (c) Case 3, "Baltic Sea" video. (d) Case 4, "Fish Hunting" video. (e) Case 5, "Scuba Diving" video. (f) Case 6, "Scuba Diving" video.

A. Comparison with UGAN

UDAE was compared to the Underwater Generative Adversarial Network (UGAN) [15]. First, both networks were tested on the dataset described in Section III-A (1,040 images), because clean images are available for it, which allows an objective evaluation. The testing images are of size 256 × 256. Three metrics were used for the evaluation: MSE, SSIM, and MS-SSIM-L1 (Eq. 1). In all three metrics, UDAE showed a better reconstruction error than UGAN (Table I). MSE and MS-SSIM-L1 give a score of 0 for identical images, while SSIM gives a score of 1.

TABLE I. Evaluation of UGAN and UDAE using three metrics over 1,040 images with a resolution of 256 × 256.

| Network | MSE | SSIM | MS-SSIM-L1 |
|---|---|---|---|
| UDAE | 0.0028 | 0.9653 | 0.0753 |
| UGAN | 0.0061 | 0.9186 | 0.1415 |

For a fair comparison, both networks were then evaluated on the testing images that the authors of UGAN published in their paper (Figure 7; the images are best compared when viewed in digital form). The average processing time was calculated over 1,813 testing images resized to 256 × 256. The average time per image of UGAN was 0.0099 seconds (100.94 fps), whereas that of UDAE was 0.0043 seconds (230.67 fps). The processing was conducted on an NVIDIA RTX 2080 Ti. Since clean images were not available, the evaluation was based only on human perception.

Fig. 7. Comparison on testing images (a) to (d) published with UGAN. The first column shows the original image, the second column the image reconstructed by UGAN, and the third column the image reconstructed by UDAE.

UDAE showed good generalization: the color was restored and the details were preserved. UGAN achieved good performance in restoring the colors of some images, such as subfigure 7a; however, UDAE produced better color brightness. Another observation concerns the background reconstruction. In many images, UGAN failed to reconstruct the background properly, for example in images with a plain background as in subfigure 7b, whereas our proposed network was capable of restoring the color of both the foreground and the background without any artifacts. An example of such artifacts is the halo effect visible in the image reconstructed by UGAN.

As for the high frequencies, the images of subfigure 7c were zoomed in by a factor of 9 using bilinear interpolation in Figure 8. UDAE outperforms the UGAN network in preserving and reconstructing details. The coral reefs in the image reconstructed by UGAN were blurry and many details were lost. The details are important for object detection and tracking by underwater vehicles.

Fig. 8. The input image is zoomed in by a factor of 9 using bilinear interpolation. The details in the image reconstructed by UDAE are preserved. (a) Input. (b) UGAN. (c) UDAE.

Some failure cases of our proposed network were observed, such as the one in subfigure 7d. Addressing them is left for future work, in which a better dataset with more degradation types would be established for better generalization.
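The simpler quantities used in this comparison can be sketched as follows. This is a minimal illustration under stated assumptions, not the evaluation script behind Table I: images are flat lists of values in [0, 1], and the helper names `mse`, `mean_score`, and `frames_per_second` are hypothetical.

```python
# Sketch of the evaluation bookkeeping: per-image MSE, its average over a
# test set, and the conversion from seconds per image to frames per second.
# ASSUMPTION: images are flat lists of floats in [0, 1]; names are illustrative.

def mse(clean, recon):
    """Mean squared error; 0 for identical images."""
    return sum((a - b) ** 2 for a, b in zip(clean, recon)) / len(clean)


def mean_score(metric, pairs):
    """Average a per-image metric over (clean, reconstructed) pairs."""
    scores = [metric(clean, recon) for clean, recon in pairs]
    return sum(scores) / len(scores)


def frames_per_second(seconds_per_image):
    """Throughput implied by an average per-image processing time."""
    return 1.0 / seconds_per_image
```

Applied to the rounded timings quoted above, `frames_per_second(0.0043)` is roughly 232.6 and `frames_per_second(0.0099)` roughly 101.0, close to but not identical with the reported 230.67 fps and 100.94 fps. Presumably the reported rates were derived from unrounded timings, although the paper does not say so.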
V. CONCLUSION

This paper proposed the Underwater Denoising Autoencoder (UDAE), a new way of restoring the color of underwater images using a single denoising autoencoder with real-time capability. We showed that it is possible to reconstruct underwater images using a network based on a single denoising autoencoder, which gave the same or better results than a network based on a GAN. Moreover, a single autoencoder is better suited for real-time implementation. Additionally, as an improvement over previous networks, UDAE is capable of restoring better color in images and preserving the details.

We believe that there is room for improving the network, in particular its generalization ability. The network was trained on a relatively small dataset; obtaining a larger one with various color distortions would lead to great improvement. The processing speed could also be improved by trying a different CNN baseline or latent space size.

REFERENCES

[1] R. B. Wynn, V. A. Huvenne, T. P. Le Bas, B. J. Murton, D. P. Connelly, B. J. Bett, H. A. Ruhl, K. J. Morris, J. Peakall, D. R. Parsons et al., "Autonomous underwater vehicles (AUVs): Their past, present and future contributions to the advancement of marine geoscience," Marine Geology, vol. 352, pp. 451–468, 2014. [Online]. Available: https://doi.org/10.1016/j.margeo.2014.03.012

[2] J. Choi, Y. Lee, T. Kim, J. Jung, and H.-T. Choi, "Development of a ROV for visual inspection of harbor structures," in 2017 IEEE Underwater Technology (UT). IEEE, 2017, pp. 1–4. [Online]. Available: https://doi.org/10.1109/UT.2017.7890285

[3] M. Manjunatha, A. A. Selvakumar, V. P. Godeswar, and R. Manimaran, "A low cost underwater robot with grippers for visual inspection of external pipeline surface," Procedia Computer Science, vol. 133, pp. 108–115, 2018. [Online]. Available: https://doi.org/10.1016/j.procs.2018.07.014

[4] P. Vincent, H. Larochelle, Y. Bengio, and P.-A. Manzagol, "Extracting and composing robust features with denoising autoencoders," in Proceedings of the 25th International Conference on Machine Learning. ACM, 2008, pp. 1096–1103. [Online]. Available: https://doi.org/10.1145/1390156.1390294

[5] O. Ronneberger, P. Fischer, and T. Brox, "U-net: Convolutional networks for biomedical image segmentation," in International Conference on Medical Image Computing and Computer-Assisted Intervention. Springer, 2015, pp. 234–241. [Online]. Available: https://doi.org/10.1007/978-3-319-24574-4_28

[6] H. Lu, Y. Li, Y. Zhang, M. Chen, S. Serikawa, and H. Kim, "Underwater optical image processing: a comprehensive review," Mobile Networks and Applications, vol. 22, no. 6, pp. 1204–1211, 2017. [Online]. Available: https://doi.org/10.1007/s11036-017-0863-4

[7] Y. Y. Schechner and N. Karpel, "Recovery of underwater visibility and structure by polarization analysis," IEEE Journal of Oceanic Engineering, vol. 30, no. 3, pp. 570–587, 2005. [Online]. Available: https://doi.org/10.1109/JOE.2005.850871

[8] T. Treibitz and Y. Y. Schechner, "Active polarization descattering," IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 31, no. 3, pp. 385–399, 2009. [Online]. Available: https://doi.org/10.1109/TPAMI.2008.85

[9] C. O. Ancuti, C. Ancuti, C. De Vleeschouwer, and P. Bekaert, "Color balance and fusion for underwater image enhancement," IEEE Transactions on Image Processing, vol. 27, no. 1, pp. 379–393, 2018. [Online]. Available: https://doi.org/10.1109/TIP.2017.2759252

[10] F. Farhadifard, Z. Zhou, and U. F. von Lukas, "Learning-based underwater image enhancement with adaptive color mapping," in 2015 9th International Symposium on Image and Signal Processing and Analysis (ISPA). IEEE, 2015, pp. 48–53. [Online]. Available: https://doi.org/10.1109/ISPA.2015.7306031

[11] J. Y. Chiang and Y.-C. Chen, "Underwater image enhancement by wavelength compensation and dehazing," IEEE Transactions on Image Processing, vol. 21, no. 4, pp. 1756–1769, 2012. [Online]. Available: https://doi.org/10.1109/TIP.2011.2179666

[12] R. Schettini and S. Corchs, "Underwater image processing: state of the art of restoration and image enhancement methods," EURASIP Journal on Advances in Signal Processing, vol. 2010, no. 1, p. 746052, 2010. [Online]. Available: https://doi.org/10.1155/2010/746052

[13] J. Li, K. A. Skinner, R. M. Eustice, and M. Johnson-Roberson, "WaterGAN: unsupervised generative network to enable real-time color correction of monocular underwater images," IEEE Robotics and Automation Letters, vol. 3, no. 1, pp. 387–394, 2018. [Online]. Available: https://doi.org/10.1109/LRA.2017.2730363

[14] C. Li, J. Guo, and C. Guo, "Emerging from water: Underwater image color correction based on weakly supervised color transfer," IEEE Signal Processing Letters, vol. 25, no. 3, pp. 323–327, 2018. [Online]. Available: https://doi.org/10.1109/LSP.2018.2792050

[15] C. Fabbri, M. J. Islam, and J. Sattar, "Enhancing underwater imagery using generative adversarial networks," in 2018 IEEE International Conference on Robotics and Automation (ICRA). IEEE, 2018, pp. 7159–7165. [Online]. Available: https://doi.org/10.1109/ICRA.2018.8460552

[16] J.-Y. Zhu, T. Park, P. Isola, and A. A. Efros, "Unpaired image-to-image translation using cycle-consistent adversarial networks," in Proceedings of the IEEE International Conference on Computer Vision, 2017, pp. 2223–2232. [Online]. Available: https://doi.org/10.1109/ICCV.2017.244

[17] A. Creswell and A. A. Bharath, "Denoising adversarial autoencoders," IEEE Transactions on Neural Networks and Learning Systems, no. 99, pp. 1–17, 2018.

[18] H. Zhao, O. Gallo, I. Frosio, and J. Kautz, "Loss functions for image restoration with neural networks," IEEE Transactions on Computational Imaging, vol. 3, no. 1, pp. 47–57, 2017. [Online]. Available: https://doi.org/10.1109/TCI.2016.2644865

[19] A. Pretorius, S. Kroon, and H. Kamper, "Learning dynamics of linear denoising autoencoders," in Proceedings of the 35th International Conference on Machine Learning, ser. Proceedings of Machine Learning Research, J. Dy and A. Krause, Eds., vol. 80. Stockholmsmässan, Stockholm, Sweden: PMLR, 10–15 Jul 2018, pp. 4141–4150. [Online]. Available: http://proceedings.mlr.press/v80/pretorius18a.html

[20] A. Hore and D. Ziou, "Image quality metrics: PSNR vs. SSIM," in 2010 20th International Conference on Pattern Recognition. IEEE, 2010, pp. 2366–2369. [Online]. Available: https://doi.org/10.1109/ICPR.2010.579
