MOBIUS: Model-Oblivious Binarized Neural Networks

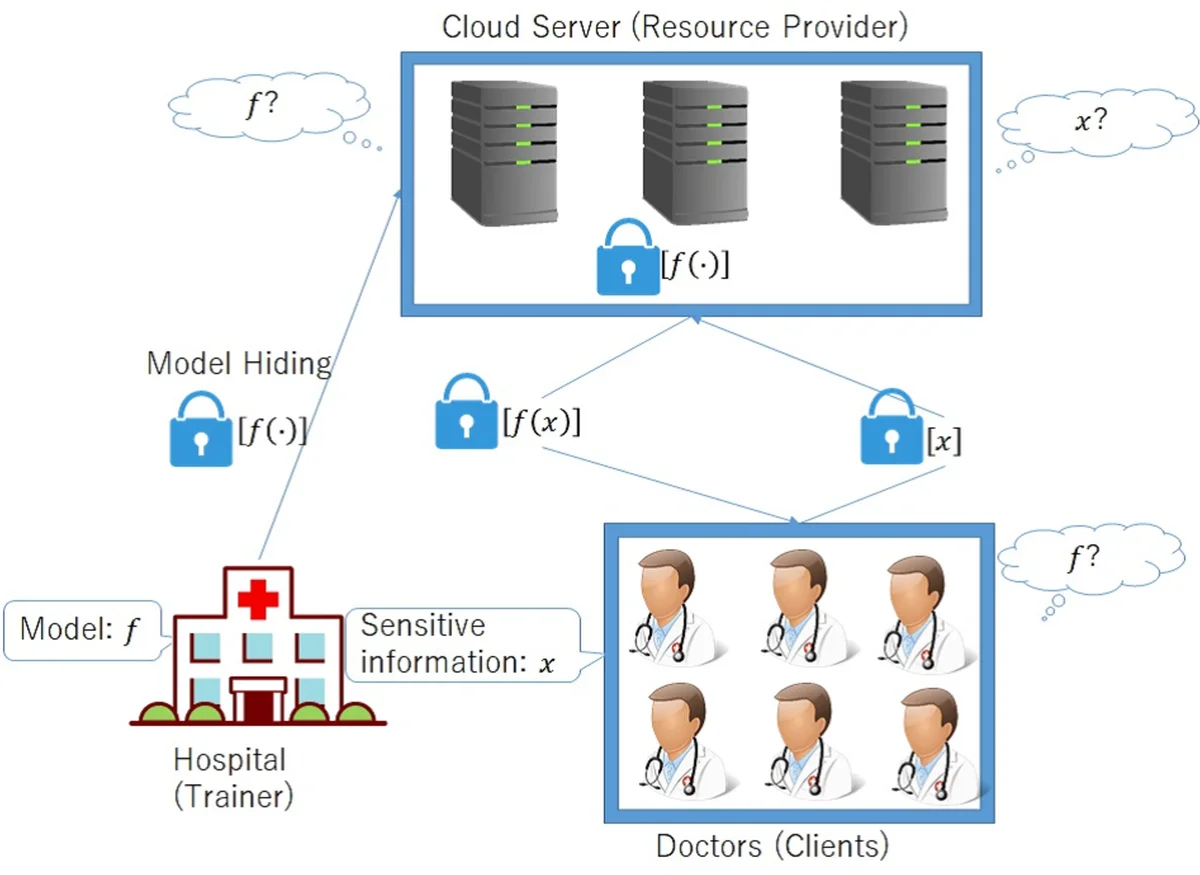

Privacy-preserving frameworks in which a computational resource provider receives encrypted data from a client and returns prediction results without decrypting the data, known as oblivious neural networks or encrypted prediction, have been studied for machine learning prediction services. In this work, we present MOBIUS (Model-Oblivious BInary neUral networkS), a new system that combines Binarized Neural Networks (BNNs) and secure computation based on secret sharing as tools for scalable and fast privacy-preserving machine learning. BNNs improve computational performance by binarizing values in training to $-1$ and $+1$, while secure computation based on secret sharing supports fast and varied computations on encrypted data via modular operations with a short bit length. However, combining these tools is not trivial because their operations have different algebraic structures and the use of BNNs generally degrades prediction accuracy. MOBIUS uses improved procedures for BNNs and secure computation that have compatible algebraic structures and do not degrade prediction accuracy. We implemented MOBIUS in C++ using the ABY library (NDSS 2015). We then conducted experiments using the MNIST dataset, and the results show that MOBIUS can return a prediction within 0.76 seconds, which is six times faster than SecureML (IEEE S&P 2017). MOBIUS allows a client to request encrypted predictions and allows a trainer to obliviously publish an encrypted model to a cloud provided by a computational resource provider, i.e., without revealing the original model itself to the provider.

💡 Research Summary

MOBIUS (Model‑Oblivious BInary neUral networkS) is a privacy‑preserving inference framework that simultaneously hides both the client’s input data and the service provider’s trained model from the cloud resource provider. The system combines Binarized Neural Networks (BNNs), which restrict weights and activations to the binary set {‑1, +1}, with secret‑sharing based secure multi‑party computation (MPC). By binarizing the network, all arithmetic can be expressed as integer operations, which aligns naturally with the modular arithmetic used in secret‑sharing schemes. However, the original BNN design relies on real‑valued batch normalization that is incompatible with integer‑only MPC. MOBIUS resolves this incompatibility by converting batch‑normalization parameters (γ, β, μ, σ) into scaled integers, truncating lower‑order bits, and applying a constant multiplier. This integer‑only batch‑normalization preserves the statistical benefits of the original method while avoiding the accuracy loss caused by the bit‑shift technique used in earlier BNN implementations.
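The integer-only batch normalization described above can be illustrated with a minimal sketch. It is not the paper's exact procedure: it assumes the normalization is folded into a single affine map `scale * x + shift`, that both coefficients are multiplied by `2**k` and rounded (the summary mentions truncating low-order bits instead), and that only the sign of the result matters because the next layer binarizes it. The function names and parameter values are hypothetical.

```python
def sign(v):
    """BNN-style sign: maps 0 to +1 so the output is always in {-1, +1}."""
    return 1 if v >= 0 else -1


def bn_sign_float(x, gamma, beta, mu, sigma):
    """Reference: real-valued batch normalization followed by sign."""
    return sign(gamma * (x - mu) / sigma + beta)


def fold_bn_to_int(gamma, beta, mu, sigma, k=8):
    """Fold BN into scale*x + shift, then scale both by 2**k and round.

    Assumption: rounding is used here for simplicity; the choice of k
    trades precision against the bit length needed in the MPC ring.
    """
    scale = gamma / sigma
    shift = beta - gamma * mu / sigma
    return round(scale * (1 << k)), round(shift * (1 << k))


def bn_sign_int(x, int_scale, int_shift):
    """Integer-only evaluation: one multiplication, one addition, one sign."""
    return sign(int_scale * x + int_shift)


# Hypothetical parameters for a single neuron.
GAMMA, BETA, MU, SIGMA = 1.5, 0.3, 2.2, 0.8
S, B = fold_bn_to_int(GAMMA, BETA, MU, SIGMA)

# Integer pre-activations on which the two versions disagree (none here).
mismatches = [
    x for x in range(-20, 21)
    if bn_sign_float(x, GAMMA, BETA, MU, SIGMA) != bn_sign_int(x, S, B)
]
```

Rounding can flip the sign for inputs that sit extremely close to the decision threshold; a larger `k` narrows that window at the cost of wider integers.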

The authors implemented MOBIUS in C++ using the ABY library, which provides two‑party protocols for addition, multiplication, constant multiplication, comparison, and a “Half” operation over a residue class ring $\mathbb{Z}_{2^m}$ ($m$ = 8, 16, 32, 64). The security model assumes semi‑honest adversaries and a t‑out‑of‑n secret‑sharing scheme; as long as fewer than t servers are compromised, neither the client’s query nor the trainer’s model can be reconstructed. The workflow is as follows: the trainer builds a plaintext BNN, secret‑shares the model parameters, and uploads the shares to the cloud servers. The client secret‑shares its input and sends the shares to the same servers. The servers jointly perform the BNN inference using MPC primitives on the shared data, producing a shared output vector. The client reconstructs the vector and selects the class with the highest score as the prediction.
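The workflow above can be simulated in a few lines to show why neither server sees the model or the input. The sketch below is a toy, single-process illustration and does not use the ABY API: it assumes two-party additive secret sharing modulo $2^{32}$ and a trusted dealer that hands out Beaver multiplication triples, and every function name in it is hypothetical.

```python
import secrets

M = 32            # bit length of the ring (assumption: m = 32)
MOD = 1 << M


def share(v):
    """Split v into two additive shares modulo 2**M."""
    r = secrets.randbelow(MOD)
    return r, (v - r) % MOD


def reconstruct(s0, s1):
    """Recombine two shares into a ring element."""
    return (s0 + s1) % MOD


def to_signed(v):
    """Interpret a ring element as a signed integer."""
    return v - MOD if v >= MOD // 2 else v


def beaver_mul(x_sh, y_sh):
    """Multiply two shared values using a Beaver triple.

    Assumption: a trusted dealer supplies shares of random a, b and c = a*b.
    The servers only open the masked values d = x - a and e = y - b.
    """
    a, b = secrets.randbelow(MOD), secrets.randbelow(MOD)
    a_sh, b_sh, c_sh = share(a), share(b), share((a * b) % MOD)
    d = reconstruct((x_sh[0] - a_sh[0]) % MOD, (x_sh[1] - a_sh[1]) % MOD)
    e = reconstruct((y_sh[0] - b_sh[0]) % MOD, (y_sh[1] - b_sh[1]) % MOD)
    z0 = (c_sh[0] + d * b_sh[0] + e * a_sh[0] + d * e) % MOD
    z1 = (c_sh[1] + d * b_sh[1] + e * a_sh[1]) % MOD
    return z0, z1


def shared_dot(w_shs, x_shs):
    """Dot product of two shared vectors; additions are local to each server."""
    acc0, acc1 = 0, 0
    for w_sh, x_sh in zip(w_shs, x_shs):
        z0, z1 = beaver_mul(w_sh, x_sh)
        acc0, acc1 = (acc0 + z0) % MOD, (acc1 + z1) % MOD
    return acc0, acc1


# Trainer shares binarized weights; client shares its integer input.
weights = [1, -1, 1, -1]
inputs = [3, 5, -2, 7]
w_shares = [share(w) for w in weights]
x_shares = [share(x) for x in inputs]

# Servers compute on shares; the client reconstructs the score.
score = to_signed(reconstruct(*shared_dot(w_shares, x_shares)))
```

Each server holds only one share of every weight and input, and a single share is uniformly random on its own. A full inference would also need shared comparison for the sign activation, which this sketch leaves out.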

Experimental evaluation on the MNIST handwritten‑digit dataset demonstrates that MOBIUS achieves near‑state‑of‑the‑art BNN accuracy (≈98 %) while delivering a full encrypted prediction in an average of 0.76 seconds. This makes MOBIUS about six times faster than SecureML, the closest prior work that also supports model‑oblivious inference but relies on different cryptographic primitives. Notably, the reported performance is obtained without any low‑level optimizations, indicating substantial headroom for further speedups.

MOBIUS therefore provides a practical solution for “model‑oblivious” inference: the model remains encrypted and inaccessible to the cloud provider, the client’s data stays private, and the inference latency is compatible with real‑time applications. The paper suggests future extensions to deeper architectures (e.g., convolutional or recurrent networks) and to multi‑party settings, positioning the combination of BNNs and secret‑sharing MPC as a promising direction for scalable, privacy‑preserving machine learning.
