LUNAR: Cellular Automata for Drifting Data Streams

With the advent of huge volumes of data produced in the form of fast streams, real-time machine learning has become a relevant challenge that emerges in a plethora of real-world applications. Processing such fast streams often demands high memory an…

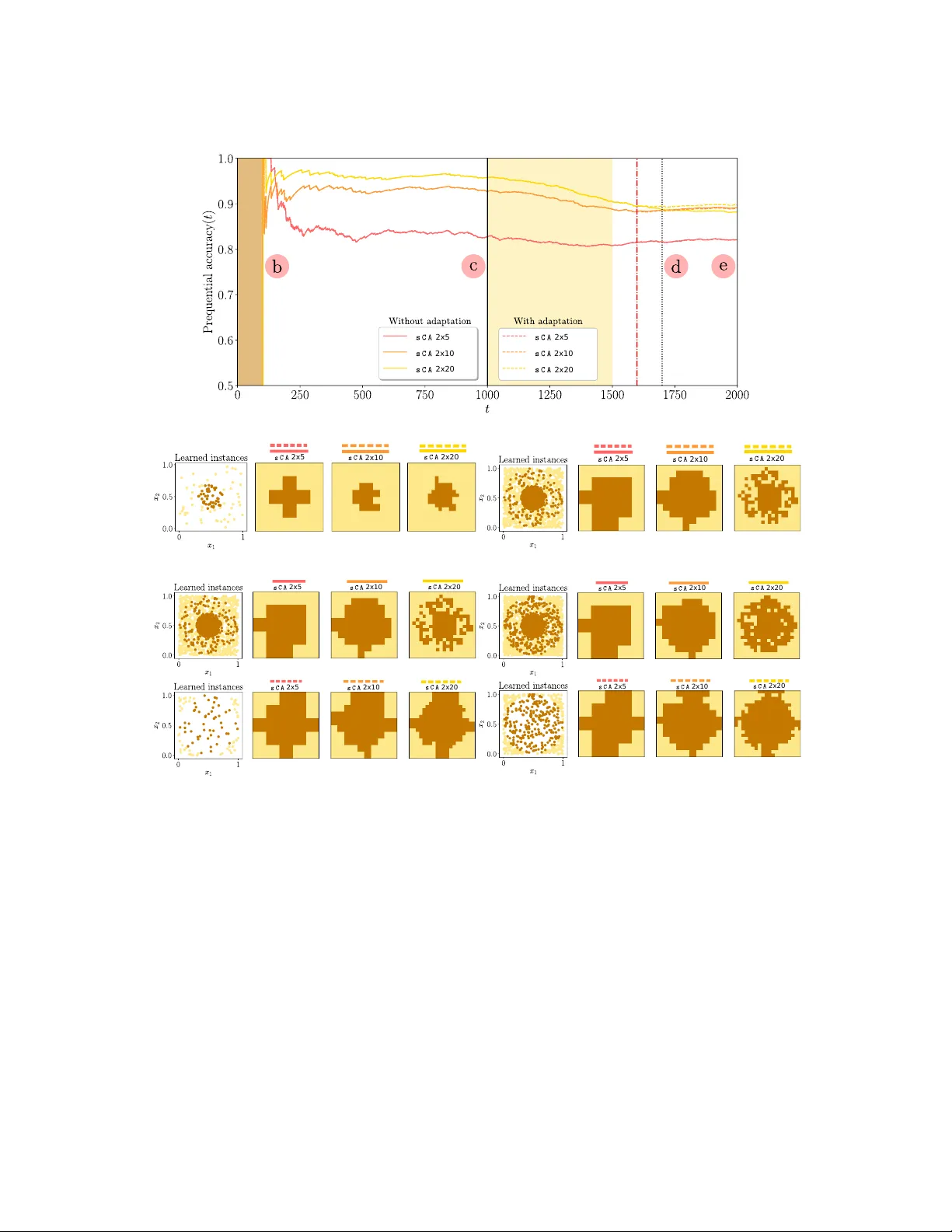

Authors: Jesus L. Lobo, Javier Del Ser, Francisco Herrera