Towards Explainable Bit Error Tolerance of Resistive RAM-Based Binarized Neural Networks

Non-volatile memory, such as resistive RAM (RRAM), is an emerging energy-efficient storage, especially for low-power machine learning models on the edge. It is reported, however, that the bit error rate of RRAMs can be up to 3.3% in the ultra low-pow…

Authors: Sebastian Buschj"ager, Jian-Jia Chen, Kuan-Hsun Chen

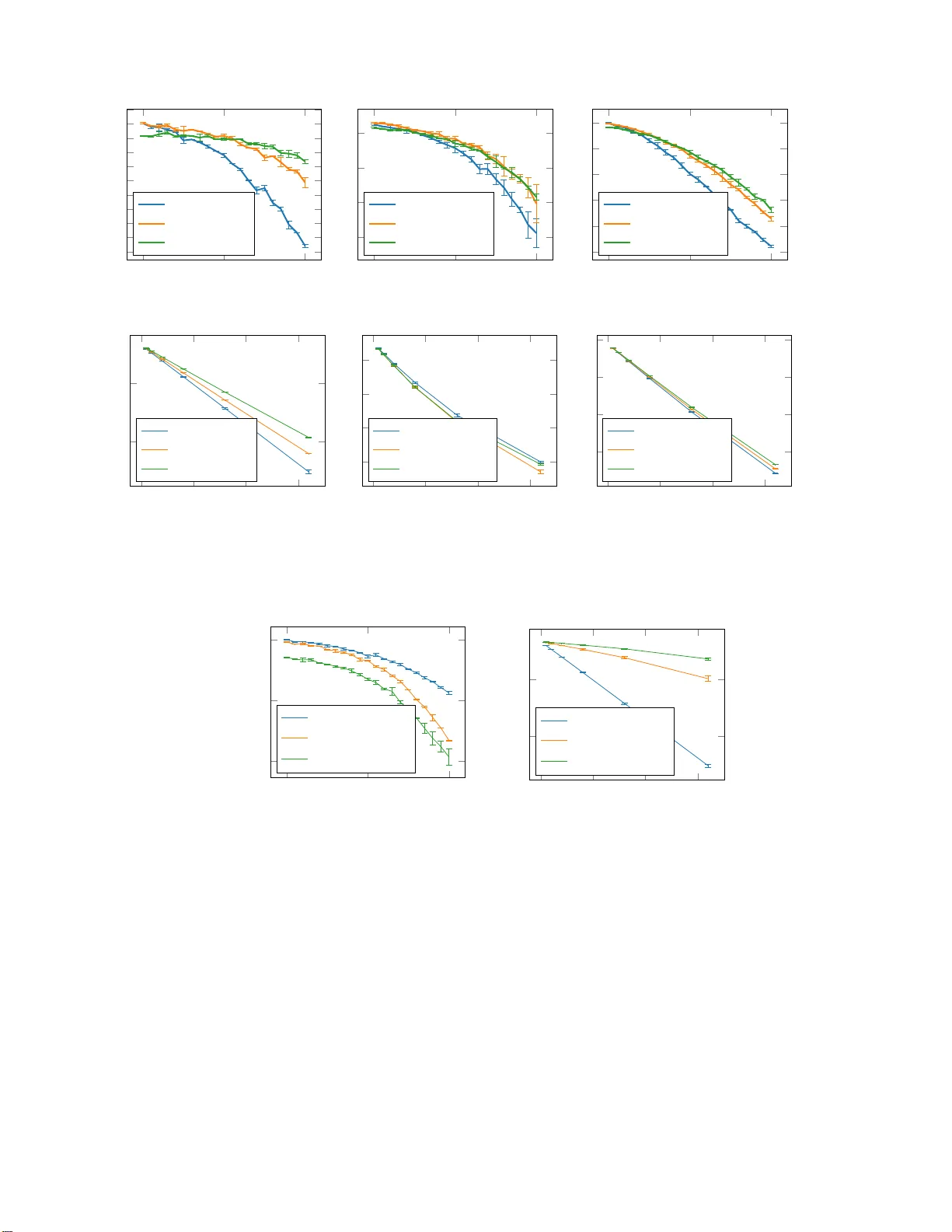

T o wa rds Explainable Bit Error T olerance of Resisti v e RAM-Based Binarized Neural Netw orks Sebastian Buschj ¨ ager ∗‡ , Jian-Jia Chen † , Kuan-Hsun Chen † , Mario G ¨ unzel † ‡ , Christian Hakert † , Katharina Morik ∗ , Rodion Novkin † , Lukas Pfahler ∗ ‡ , and Mikail Y ayla † ‡ ∗ Artificial Intelligence Gr oup , TU Dortmund Un iversity , Germany † Design Automation for Embedded Systems Gr o up , TU Dortmund University , Germany ‡ These authors contributed equally to this r esear ch { sebastian.buschjaeger , jian-jia.chen , kuan-hsun.ch e n, mario.gu enzel, chr istian.hakert, katharina. morik, rodion .novkin, lukas.pfahler, mikail.y ayla } @tu-dor tmund.de Abstract —Non-volatile memory , su ch as resistive RAM (RRAM), is an emerging energy-efficient storage , especially for low-power machine learning models on the edge. It is reported, howev er , th at the bit er ror rate of RRAMs can be up to 3.3% in the ultra low-power setting, which might be crucial fo r many use cases. Binary neural networks (BNNs), a resource efficient variant of neural networks (NNs), can tolerate a certain percentage of errors without a loss in accuracy and demand lower reso urces in computation and storage. The bit erro r tolerance (BET) in BNNs can b e achieved by flipp ing the weight signs d u ring training, as proposed by Hirtzli n et al., but their meth od has a significant drawback, especially for fully connected neural networks (FCNN): The FC NN s overfit to the error rate u sed in training, wh ich leads to low accuracy und er lower error rates. In addition, t h e und erlying princip les of BET are not in vestigated. In this work, we impro ve the traini n g for BE T of BNNs and aim to explain this p roperty . W e propose straight-through gradient approximation to improv e the w eigh t-sign-flip training, b y which BNNs adapt l ess to the b it error rates. T o explain the achieved robustness, we defin e a metric th at aims to measure BE T without fault inj ection . W e ev aluate the metric and find t hat it correlates with accuracy ov er erro r rate for all FCNNs tested. Fi n ally , we explore the influence of a novel regularizer that optimizes with respect to this metric, with the aim of pro viding a configurable trade-off in accuracy and BET . I . I N T RO D U C T I O N In the age of u biquitous com puting, sensors and comp uting facilities are embedded into various physical e nvironmen ts for data collection. Small devices ap ply m achine learn ing models on data streams directly on the edge. Since the edge devices have resource constraints, such as in c omputation and storag e, these models ne e d to be efficient in execution and mem o ry usage. Binary n eural networks (BNNs) ar e one resource- e f ficient variant of neural network s (NNs), w h ich are especially we ll su ited fo r small embed d ed devices. The ir weight param eters a r e stor ed as b inary values, and th e con- volution operations are compute d with XNOR f ollowed by populatio n c o unt (POPCOUNT) instructions, which coun t the number of set bits. The trade- off for resou rce-efficiency is a This paper ha s been support ed by Deutsche Forsc hungsgemein - schaft (DFG ), as part of the Collaborati ve Research Center SFB 876 ”Provi ding Information by Resource- Constrain ed Analysis” , project A1 (http:/ /sfb876.tu-do rtmund.de ) and Project OneMemory (CH 985/13-1). decrease in a c curacy by a few percen tage poin ts comp ared to full-p recision neura l network s. The efficient execution of BNNs has been researched in several recent works [ 1], [2], [3], but the memor y type to use for BNN m odels in a low- power setting has received limited attention so far, desp ite the energy saving po te n tial. Non-volatile memor ies ( NVMs), such as resisti ve rando m - access memory ( RRAM), are e m erging memory tech nologies for low-power storag e. They a r e expected to be dep loyed in fu- ture comp uting systems with resour c e co nstraints [4]. Because of t heir non-volatility , they make no r mally-off compu ting efficient: The device is only powered o n if th ere is co mputation to b e done. This is especially conv enient f o r inferen ce o n the low-po wer edge. RRAM, which stores inform ation in th e f orm of non- volatile resisti ve states, has co mparable perfo rmance to DRAM, b ut uses less energy b e c ause no refreshs are needed and the rea d /write en ergy is lower [4]. Moreover , wh en u sing an u ltra lo w-power setting for RRAM cell p rogramm ing, the energy consumptio n can be lowered furth er , u p to 30 times for the p rogramm ing energy , as repor te d b y Hirtzlin et al. [5 ]. This addre sses one the major disadvantages of RRAMs: Cell lifetime. The lower prog ramming en ergy stresses th e cells less, which leads to increased lifetime. The crucial drawback of th e ultra low-power RRAM setting is, howe ver, the high bit error rate o f ∼ 3 . 3 % . H ir tzlin et al. p r opose to use th is setting fo r in-memo ry pr ocessing of BNNs, i.e. executing the BNN operatio ns inside the memo ry , and show th at BNNs can be trained to be more erro r tolerant, up to a rate of 4% witho ut a significant accuracy drop. T he increased bit err or toleranc e (BET) lowers the r e quirements on the mem ory and makes p ossible the use of the ultra low-po wer setting fo r BNNs. RRAM is therefo re a highly pr omising low- power memory for BNNs. The metho d propo sed by Hirtzlin et a l. [5] is simple: During training a certain percentage of bit err ors are introduced into the network by rando mly flip ping weights. Even thoug h this method is very effecti ve, it has sev eral drawbacks. First, th e accuracy drop is consider able, esp ecially for fully conn ected neural networks (FCNN). In their experimen ts, the FCNNs adapt to the err or rates they were trained for, which mean s that the accur acy of the BNNs drop in cases in which a lower percentag e of error s is p resent. Second ly , faults have to be injected dur ing training , which adds com plexity to the train ing process. As discussed in [6], train ing NNs fo r gener al BET ( i.e. without accu racy drops fo r lower error r ates) is not a trivial task. T he explanation o f the und erlying principles of the BET of BNNs is not explicitly studied in the literatur e as well. In th is work, we r eport on o ur prog ress and futur e directions for a chieving general b it err o r tolerance and for explaining this prope rty: • W e impr ove the BET tr aining of the previous work b y using stra ight-throu gh gr a dient app roximation , so that the NNs do no t overfit to the e r ror rates used during trainin g . • W e present a metric th a t aim s to measure the achieved BET of BNNs, without injecting faults. • Based on this m etric, we explore the impact of a regu - larizer with th e go al of achieving g eneral BET , wh ich is a prop erty in depende n t fro m the err or mod el. The paper is organized as the fo llowing. Section II intr o- duces BNNs form ally . Section I I I f ormalizes the BET o f NNs, whereas Sec tio n IV presents a novel regularizer to en hance the BET of NNs. Section VI sur veys the related work , wher e as Section V p resents o ur experimen ts. Sectio n VII co ncludes the paper . I I . B I NA R I Z E D N E U R A L N E T W O R K S T o train a binarized neura l n etwork with weights in F 2 = {− 1 , +1 } we follow the approa c h p resented b y Hubar a et al. [7] in wh ic h the weights are stored as flo ating po int nu mbers, but both weig hts an d a cti vations are determ inistically rou nded to F 2 during fo r ward computatio n. Th e grad ient updates are perfor med with full precision on the floatin g p oint weights. A. Notatio n Before we describe th e tr aining p rocedur e in more detail, we intro d uce th e notation used to descr ibe neural network s. W e assume a feed -forward network in which ea c h layer l is associated with a weigh t tenso r W l . Each layer per forms a generic operation ◦ to compute its o utput h l ( X ) := W l ◦ X giv en its in put tensor X l − 1 and weights in W l . For ex- ample, a fu lly-conne cted layer comp u tes the m a trix p roduct h l ( X l − 1 ) = W l X l − 1 or the co n volution layer compu tes a conv olu tio n with a n umber of filters defin ed by W deno ted by h l ( X l − 1 ) = W l ∗ X l − 1 . Between layers we apply an activ ation function σ ( h l ( X l )) in order to obtain a non - linear decision function . In BNNs it is co mmon to use the sign function as activ ation functio n. B. T raining Floating point networks are typically train e d with gradien t- based appro aches such as min i- batched stochastic g radient descent (SGD) to minimize a loss function. Let D = { ( x 1 , y 1 ) , . . . , ( x I , y I ) } with x i ∈ X and y i ∈ Y de n ote the training data and let ℓ : Y × Y → R be th e loss f unction. Let W = ( W 1 , . . . , W L ) d enote th e weig ht tensor s of each layer in th e neur a l ne twork and let f W ( x ) b e the outp ut of Algorithm 1 Binar ized forward pass f W ( x ) . 1: functio n F O RW A R D (m o del, x ) 2: for l ∈ { 1 , . . . , L } do 3: x ← B ( B ( W l ) ◦ x ) 4: return x the network given its we ig hts W , th en we aim to solve the following optimizatio n p roblem arg min W 1 I X ( x,y ) ∈D ℓ ( f W ( x ) , y ) by a grad ient descent strategy that computes the gradien t ∇ W ℓ using back p ropagatio n. Unfo r tunately , in the case of binary neu ral networks we ca n not perf orm gradient-b a sed optimization dir ectly . T his is due to two reaso n s: First, the space of weights is discr e te and thus the p arameter-vector obtained by tak in g a sm a ll step in the op posite dire ction of the gradient is almost c ertainly not b inary . Secon d, th e sign - function is no t differentiable and also its sub- differential is useless f or op timization, as it is ze ro everywhere other than zero. T o mitigate the first problem, Hub ara et al. [7] pr o pose a scheme that du r ing train ing stores weig hts as floating poin t number s co n strained to values betwe e n -1 and 1 and ‘bina- rizes’ the network d uring the forward p ass. More for mally , let b : R → F 2 be a binarization f unction with b ( x ) = ( 1 x > 0 − 1 else and let B ( W l ) den ote th e element-wise applicatio n of b to W l . W e su mmarize the for ward-pass in Algo r ithm 1. The outer application of B in Algorith m 1 acts as the activation fun ction and tha t b c a n also be interpreted as a n un-sm ooth version of the tanh activ ation fu nction. T h erefore it is sometimes called hard-tan h o r H tanh . Then, d u ring the b a ckward pass they u se full flo ating point precision. T o mitigate the seco nd p roblem – b is not differentiable – they replace the gradien t of b with the so-called straight-thro ugh estimato r . Consider th e forward co mputation Y = B ( X ) . Let ∇ Y ℓ denote the gradient with resp ect to Y . The straight-thr ough e stimator ap proximate s ∇ X ℓ := ∇ Y ℓ, (1) essentially pr e te n ding that B is the iden tity functio n. Using this gr a d ient ap proximatio n we can a pply standard sto chastic gradient descen t techn iq ues with the small addition that all floating poin t weights a r e clipped to be between -1 an d 1 after each gradien t update. For faster and more reliable train ing, we use the stand ard deep lea rning te c hnique Batch Normalization . W e insert batch normalizatio n layers between layers and the following a c - ti vation fun ctions. The batch normalizatio n layers shift and scale the ou tputs compu ted b y the respectiv e layers, then the sign fun c tion is applied. Hen ce the forward p ass can still b e comp uted u sin g only b inary arithmetics, the ba tc h normalizatio n ju st shif ts th e thr e sh old of the bin ary activ ation from zero to a d a ta-depend ent numbe r . One peculiarity of our models is that we apply normalization also af ter the last linear lay er , befor e the o utputs are fed into a softmax- la y er with subsequent cross-entr opy lo ss. While this seems coun ter- intuitive a t fir st, we fin d that it improves loss and eases train ing substantially . W e suspect that this is du e to th e r escaling of outputs: Plain b inary n etworks o u tput large integer activ ations on the last lay e r which , fed into softmax ac ti vations, often result in vanishing grad ients. I I I . B I T E R RO R T O L E R A N C E O F B N N S T o und e rstand th e e r ror tolerance of BNNs we pr opose to formalize it using a metric that is calculated on the neu r on lev el with only one pass over the evaluation set. T o do so we focus o ur efforts o n CNNs. Please note tha t all of our definition are also ap plicable fo r FCNN if we view their inputs as 1 × 1 images. W e first define the local err or tolerance of a 2 d fea tu re map in a CNN. Then we lev erage this definition into the error tolerance of a single neu ron, which finally enables us to d e fin e the erro r toleranc e of the whole network . Consider a CNN and let n be the index of one neuro n. Recall th at th e outp u t of a n e uron is a 2 d f eature m ap with height U and width V . W e define the neur on’ s lo cal err o r tolerance T i,n,u,v at position u, v by modelin g the n umber of weight sign flips it can tolerate with out a chan ge of its output given the input x i . T o do so, let h i,n,u,v be th e ou tput of n -th neuron b e for e applying the activation function. For neuron s which are not in the first layer, we note two th ings: First, each n euron’ s output is compu ted by a weighted sum of inputs that are ± 1 with weights tha t are also ± 1 . Second , th e sign fun ction is applied to this output. Th us as long a s weigh t flips do n ot ch ange the sign of the weigh ted sum, a neuro n is error toler ant. Formally , we can quan tify the er ror toleranc e of a neuro n gi ven the input x i by th e d istance of its ou tput from 0 : T i,n,u,v = h i,n,u,v − s n − 1 2 . (2) W e inclu de s n to acco u nt for activ ation shifts d ue to the Batch Normalization Layer (without BatchNorm s n = 0 ), and to av oid amb iguity at 0 we subtract 1 2 . Note that T i,n,u,v is a measure for the worst case err or toler ance, in the sense that at least ⌊ T i,n,u,v 2 ⌋ + 1 weig h t sign flips, are necessary . W ith each weight sign flip h i,n,u,v can get closer to s n and finally flip the outp u t. The definition of T i,n,u,v yields the following theorem : Theorem 1 . Let b ∈ R ≥ 0 . If T i,n,u,v ≥ b fo r all u , v th en the neur on c a n tolerate at least ⌊ b 2 ⌋ bitflips, i.e. any bitflip of ⌊ b 2 ⌋ weights of the neur on do es n ot a ffect its o utput. The proof can be fo und in the ap pendix. Intuitively , a neu ron is error tolerant if it is robust acr oss all positions. Thus, we may demand that each position h as a local erro r toleran ce of at least b . More fo rmally , the err or tolerance T b i,n of a neur o n n given the input x i is d efined as: T b i,n = 1 U V U X u =1 V X v =1 1 { T i,n,u,v ≥ b } . (3) The error tolerance of the whole network can then be defined a s the average erro r toler ance acro ss all ne urons: T b i = 1 N N X n =1 T b i,n . (4) W e d etermine T b for a network by ev alu a ting th e BNN on the f ull data set: T b = 1 I I X i =1 T b i . (5) For neuro ns in the first lay er , we assume that the inputs ar e not in { ± 1 } but { 0 , . . . , Z } . T hus we have to scale the local error tolerance: T i,n,u,v = h i,n,u,v − s n − 1 2 Z . (6) W ith the definitio n o f T b , we aim to explain the robustness of BNNs against b it e rrors without fault in jection. I V . T R A I N I N G B I T E R RO R T O L E R A N T N E U R A L N E T W O R K S In this section we pro pose two different ways to regularize the BNN tr aining objective to account for b it errors dur in g training and achiev e bit erro r tole r ance. The first approa ch is based d irectly on the insights in Section III, and the second one is based on flip -training as prop osed by Hirtzlin et al. [5]. A. Direct Regularization As discussed in Sectio n III, a hig h T b -value for a n euron indicates that many weight signs can flip witho ut ch a nging the acti vation of the ne u ron. Th e quantity T b is n ot a d if - ferentiable function but e ssentially a coun t. Howev er , we can still construct a regularizer th at pu nishes those neuron s that do no t have a flip-toler ance of at least b . W e rely o n the we ll- known h inge fu nction to build a conve x and su b-differentiable regularizer . For a g iven bit-flip tolerance le vel b , we pr opose to regulariz e each neuro n n for eac h inpu t examp le i using the h inge-fun ction R b n,u,v ( x i ) = ma x(0 , b − T i,n,u,v ) . (7) Whenever a neuro n has a T i,n,u,v -value of at least b , the minimum of R is ach ie ved. T o r egularize th e who le network, we com pute the mean of all neur on r egularizers. W e weigh t the r egularizer with λ > 0 and add it to the loss. B. Flip Regularization Flip regula r ization is a techniq u e first prop osed by Hirtzlin et al. [5]. The idea is simple: T o make the network robust against bit err ors, we simulate those error s alrea d y during training time . Dur ing each forward-pass com p utation, we generate a random bitflip-m ask and apply it to the binary weights. Howe ver, ther e are two ways to im plement this. Let M denote a ran dom b itflip ma sk with entr ies ± 1 o f the same Name # Trai n # T est # Dim # classes Fashio nMNIST 1 60000 10000 (1,28,28) 10 CIF AR10 50000 10000 (3,32,32) 10 T ABLE I : Datasets used for experimen ts. Parame ter Range Regu lariza tion λ ∈ { 10 − 4 , 10 − 3 , 10 − 2 } Flip probabili ty p ∈ { 0 . 01 , 0 . 05 , 0 . 1 , 0 . 2 } Robust ness b ∈ { 32 , 64 , 128 } Fashio n FCNN In → FC 2048 → FC 2048 → 10 Fashio n CNN In → C64 → MP 2 → C64 → MP 2 → FC2048 → FC2048 → 10 CIF AR10 CNN In → C128 → C128 → MP 2 → C256 → C256 → MP 2 → C256 → C256 → MP 2 → FC 2048 → FC 2048 → 10 T ABLE I I : Parameters used fo r experiments. size as W that we multiply comp onent-wise to the binar ized weights. W e first con sider co mputing the bit-flip op eration as H = ( B ( W ) · M ) ◦ X . Stan dard ba ckpropa g ation o n a loss ℓ that is a f u nction of H yields the fo llowing g radient of ℓ with respect to B ( W ) ∇ B ( W ) ℓ = M · ∇ B ( W ) · M ℓ which e. g. for fully connected layers amounts to a gradien t update ∇ B ( W ) ℓ = M · ( ∇ H ℓ X T ) . W e see that an update com p uted this way is aware of the bit- flips that were perfor m ed and accoun ts for them. W e prop ose instead to use a special flip-opera to r with straight-th rough gradient ap proxima tio n. W e den ote by e p the bit er r or functio n that flips its input with prob ability p and let E p denote its compon ent-wise co unterpart. Durin g training we ch ange th e forward p a ss such that it com putes X l +1 := B ( E p ( B ( W l )) ◦ X l ) . W e rep lace the grad ient of E p with a straight-th rough appr ox- imation as in (1). This way , in the example above we now have H = E p ( B ( W )) ◦ X with gradient up dates ∇ B ( W ) ℓ = ∇ E p ( B ( W )) ℓ which for fully connected layers yields th e update ∇ B ( W ) ℓ = ∇ H ℓ X T which is unaware o f bit-flip s an d just uses the corrup ted outputs H . W e b e lieve that the appr oach usin g straight-thr ough gra d ient approx imation is superior and that the prob lems r eported by Hirtzlin et al. [5] can be sourced to th e m using the native implementatio n. Particularly as we will see in Sec tio n V, our imp le m entation d o es n ot overfit to a particular error probab ility . V . E X P E R I M E N T S In this sectio n we pr esent our experiment r esults. W e ev aluate fully co nnected neural networks (FCNNs) an d conv o- lutional ne u ral n etworks (CNNs) in the co nfiguration s shown in T a b le II FashionMNIST an d CIF AR10 (see T able I). In all experiments we run the Ad a m optim izer for 100 epoch s for FashionMNIST an d 250 ep ochs fo r CIF AR10 to min imize th e cross en tropy loss. W e use a batch size of 128 and an initial learning rate of 10 − 3 . T o stabilize tr aining we expon entially decrease the lear ning rate every 25 epoch s by 50 percen t. All experiments ar e repeated 5 tim es. Fir st, we p lot the accu r acy over bit error rate for NNs trained with straight-thro u gh gradient app roximation in the top r ow of Figure 1. T hen, we show the correlation between T b and accuracy over bit error rate in the bottom r ow of Figure 1. Fina lly , w e ev aluate th e impact of our pro posed dir ect regularizer on th e error tolerance in Fig u re 2 . W e notice that flip regularizatio n imp roves the accuracy when b it errors are intro duced. This effect is stronger f or FCNNs. Mo reover we see that we can trade a high accuracy at small er ror rates with a high accu racy at larger error rates. Howe ver we do not observe an overfitting to a particu lar bit error pro bability . Seco nd, in the case of FCNNs trained on FashionMNIST and CNNs traine d on CIF AR10, we ob serve that the accu racy over different error r ates indeed corre la te s with T b . For th e case of CNNs on FashionMNI ST , a co rrela- tion cannot be observed. Overall we see that CNNs are mor e brittle than FCNNs. This is likely du e to th e weight-sh a ring in CNNs, where a flip in a conv olu tion filter has effects at every position in the f eature m ap. This difference is also reflected in th e T b values. I n co nclusion we see that the T b measure is better suited for fully conn ected networks. Figure 2 depicts the results f or the direct r egu larization training intro duced in Section I V -A. W e observe that this training meth od does not increase the accu racy over erro r rate, althoug h the T b values are high. Instead regular izin g the training objective this way decreases accu racy at any error rate. Similar curve pr o gressions can be o b served for other hyperp arameter settings and the CIF AR10 dataset. For sm a ller regularization scalings λ , the observed curves app roach th e unregularized cu rves, howe ver we never obtain higher accu- racies at any error r ate. W e conclude that o ur regular izer is currently unusable : While it effectively inc r eases T b , it doe s so by sacrificin g accur acy thereby r endering the resulting mod els useless. V I . R E L AT E D W O R K Deep Nets offer re markable p e rforman c e in state of th e art imag e classification tasks, but req uire immense com p u- tation p ower during training an d during inferen c e. Thus, a natural research question in this context is to ask, whether we ca n r educe th e co m putation and memo ry req u irements of Dee p Nets with out hurtin g its p erforman ce. A commo n approa c h to red uce both , mem ory and computation d emands, is to q u antize the weights o f an alread y trained network after tr aining is complete d . This way , weights can be stored using fe wer b its and fixed p oint arithmetics can be exploited during infer ence. Howe ver, this p ost-processing step u sually degrades the classification pe r formanc e , wh ich leads to sub- optimal pe r formanc e [8], [ 9], [10]. More evolved app roaches 0 5 10 81 82 83 84 85 86 87 88 89 90 91 Bit Erro r Rate (%) Accuracy (%) FCNN F ashionMNIST No Reg. flip, p = 0 . 1 flip, p = 0 . 2 0 5 10 75 80 85 90 Bit Erro r Rate (%) CNN F ashionMNIST No Reg. flip, p = 0 . 05 flip, p = 0 . 1 0 5 10 30 40 50 60 70 80 Bit Erro r Rate (%) CNN CIF AR10 No Reg. flip, p = 0 . 05 flip, p = 0 . 1 0 20 40 60 0 . 5 0 . 6 b T b FCNN F ashionMNIST No Reg. flip, p = 0 . 1 flip, p = 0 . 2 0 20 40 60 0 . 4 0 . 5 0 . 6 0 . 7 b CNN F ashionMNIST No Reg. flip, p = 0 . 05 flip, p = 0 . 1 0 20 40 60 0 . 6 0 . 7 0 . 8 0 . 9 b CNN CIF AR10 No Reg. flip, p = 0 . 05 flip, p = 0 . 1 Fig. 1 : Th e experim e nt results for our pr oposed flip-trainin g. In the top row are the a c c uracies plotted over b it erro r rate. In the bottom row are the T b values plotted over b . 0 5 10 70 80 90 Bit Erro r Rate (%) Accuracy (%) FCNN F ashionMNIST No reg. b : 3 2 , λ : 10 − 4 b : 6 4 , λ : 10 − 3 0 20 40 60 0 . 5 0 . 6 b T b FCNN F ashionMNIST No reg. b : 3 2 , λ : 1 0 − 4 b : 6 4 , λ : 1 0 − 3 Fig. 2 : The experiment results fo r the direct regular ization. incorpo rate qua n tization directly into the training , so that nets can retain their accu r acy . Here two appr o aches exists: The first app roach aims to perform a ll oper ations during training (inclu ding gradien t c omputation ) with fixed point arithmetics. Th is way , the network is alw ays r estricted to fixed p oint values an d e fficient acce lerations of the training is enab led by the mea n s of FPGAs an d GPUs. Howev er , such an approach must guarantee a certain num erical precision so that gradient updates are still meaningful a nd it h a s to m ake sure that gradient estimates du ring trainin g are still unb ia sed [11]. The seco n d a p proach on ly q uantizes the network durin g the forward pass, but perfo rms a ll gradient o perations with full floatin g point p recision. This way , gradient update s can be perf ormed with fu ll floating point pr e cision, while th e networks per f ormance is based on its fixed-po int we ig hts. In its most extreme version this appro ach restricts all intermed iate calculations and weig hts to only two values, e.g. ‘+1 ’ and ‘-1’[1 2 ] , [7]. Since gradient compu tations are still perfo rmed with flo ating-poin t precision, this ap proach still enables regular optimization with stoch a stic gradien t descen t and possible reg- ularization, e.g . to enh ance the robustness of neur a l n etworks against bit er rors. Nonetheless, the error toler a nce training o f BNNs f or low- power memo ries has n ot received m u ch atten tion yet. Recent work related to BNNs on NVMs fo cuses more on the real- ization of the low-po wer in-mem ory proc essing o f BNNs th an on the erro r to lerance training aspect. E.g ., in [1 3] H ir tzlin et al. pro pose to use Spin T orqu e Mag netoresistive RAM (ST - MRAM) for th e in -memory imp lementation of NNs. In th eir work they h ighlight the inheren t b it erro r tolerance of BNNs that we r e train e d withou t robustness training. Th ey show th at half th e en ergy can be saved without accuracy loss when using a low power setting to write to the m e m ory cells. V I I . C O N C L U S I O N In this work, we impr oved the state-of-the-a r t bit error tolerance training for BNNs and evaluated a metric that aims to explain the achieved tolerance. W e we r e able to elimina te the NNs’ overfitting to the er r or rates b y employin g a special flip-opera tor with straight-th rough grad ient app roximation in the gr adient comp utation. For BNNs trained with our flip- regularization, we e valuated the robustness metric and found that it corr e la te s with accu racy over err o r rate fo r all FCNNs tested. CNNs train ed on FashionMNIST with our imp roved flip-regularization do no t sho w a h igh robustness value T b ; we h ypothesize this is because of the weight sharing pro perty of CNNs and their mor e com plex layer struc tu re. W e also trie d to optimize the NNs with respect to the robustness metric T b . Althoug h we can achieve high T b values, it d oes n ot lead to a better accur acy over erro r rate. W e th ink th a t th is is main ly du e to regular izing e ach neuron equally in ou r mo del. This ignores th e ef f ect that a highly robust secon d layer can com p ensate low robustness of the first layer . In the futu re, we aim to improve the robustness metric T b , so that error toler a nce is better descr ib ed by it. I n th e flip- regularization, only the er r or rate can be configu red, th erefore we also aim to improve our direct regularization metho d, so that the error tolerance can be mo re finely tun e d , e.g. with a configu rable tr a de-off between accuracy and bit error tolerance. R E F E R E N C E S [1] M. Courbaria ux and Y . Bengio, “Binarynet : Training deep neural net- works with weights and act i v ations constrained to +1 or -1, ” CoRR , vol. abs/1602.02830 , 2016. [2] S. Mehta, M. Rasteg ari, L. G. Shapiro, and H. Hajishirz i, “Espnetv2: A light-we ight, powe r effici ent, and general purpose con volutiona l neural netw ork, ” CoRR , vol. abs/1811.11431, 2018. [3] H. Y ang, M. Fritzsche, C. Bartz, and C. Meinel, “Bmxnet: An open- source binary neural netwo rk implementation based on mxnet, ” in Pr oceedi ngs of the 25th ACM Internati onal Confer ence on Multime dia , MM ’17, (Ne w Y ork, NY , USA), pp. 1209–1212, A CM, 2017. [4] J. Boukhobza , S. Rubini, R. Chen, and Z. Shao, “Emergin g n vm: A surve y on archi tectura l integra tion and research challe nges, ” ACM T rans. Des. Autom. Electr on. Syst. , vol. 23, pp. 14:1–14:32, 2017. [5] T . Hirtzlin , M. Bocquet, J. . Klein, E. Nowak, E. V ianel lo, J. . Portal, and D. Querlioz, “Outstanding bit error tolerance of resisti ve ram-based binariz ed neural netwo rks, ” in 2019 IEEE Internatio nal Confer ence on Artificial Intellig ence Circu its and Systems (AICAS) , pp. 288–292, 2019. [6] C. T orres-Huitzi l and B. Girau, “Fault and error toleran ce in neural netw orks: A revie w , ” IEEE Access , vol. 5, pp. 17322–17341, 2017. [7] I. Hubara, M. Courbaria ux, D. Soudry , R. E l-Y aniv , and Y . Bengio, “Bi- narize d neural networks, ” in A dvance s in neural information pro cessing systems , pp. 4107–4115, 2016. [8] D. Lin, S. T alathi, and S. Annapureddy , “Fixed point quantiz ation of deep con vol utional networks, ” in International Confer ence on Machine Learning , pp. 2849–2858, 2016. [9] M. Courbariaux, J.-P . Davi d, and Y . Bengio, “Low precisi on storage for deep learning, ” arX iv prep rint arXiv:1412.7024 , 2014. [10] S. Han, H. Mao, and W . J. Dally , “Deep compression : Compressing deep neural netw orks with pruning, trained quantizat ion and huffman coding, ” arXiv preprint , 2015. [11] S. Gupta, A. Agra wal, K. Gopal akrishnan , and P . Narayanan, “Deep learni ng with limited numerical precision, ” in International Confere nce on Machine Learning , pp. 1737–1746, 2015. [12] M. Raste gari, V . Ordonez, J. Redmon, and A. Farhadi , “Xnor-net: Imagenet classification using binary con volutiona l neural networks, ” in Eur opean Confer ence on Computer V ision , pp. 525–542, Springer , 2016. [13] T . Hirtzli n, B. Penko vsky , J.-O. Klein, N. Locatelli , A. F . V incent , M. Bocque t, J. -M. Portal, and D. Querlioz, “Implemen ting binarized neural net works with magnetore sisti ve ram without error correc tion, ” 2019. A P P E N D I X Pr oof for Theorem 1. At first we consider the n - th n e uron an d assume that it is not in the first layer . Let u, v be a position for the con volution result. As described in (2 ), we know th at T i,n,u,v = | h i,n,u,v − s n − 1 2 | . For improved readability , we write h fo r h i,n,u,v and s fo r s n . By con struction of the activ ation function , th e output o f the neuro n at position u , v is +1 for h − s − 1 2 > 0 an d − 1 f or h − s − 1 2 < 0 since h and s are assumed to b e integer values. Further more, the case h − s − 1 2 = 0 does not oc cur . I f T i,n,u,v ≥ b th en either h − s − 1 2 ≥ b or h − s − 1 2 ≤ − b . In the first case we have h − s − 1 2 ≥ b ≥ 0 and the o utput at u, v is + 1 . W e d enote by ˜ y the value o f h after the bitflips of up to ⌊ b 2 ⌋ weigh ts. By definition , h is a w e ig hted sum where e a c h summan d is one in put of the neuro n multiplied with one weig ht. Since each summand is in {± 1 } , chan ging one sign chan ges h by 2. Therefore ˜ h can dif fer by up to 2 · ⌊ b 2 ⌋ fr om h a n d is in [ h − ⌊ b ⌋ , h + ⌊ b ⌋ ] . For ˜ h we still have ˜ h − s − 1 2 ≥ b − ⌊ b ⌋ ≥ 0 wh ich causes an outpu t of + 1 at u, v . The second case is proven an alogously: W e have h − s − 1 2 ≤ − b ≤ 0 an d the outpu t of the neur on at u, v is − 1 . Changin g the sign of ⌊ b 2 ⌋ su m mands o f h can in crease the value of h by up to ⌊ b ⌋ . After at most ⌊ b 2 ⌋ bitflips, we obtain a new value ˜ h ∈ [ h − ⌊ b ⌋ , h + ⌊ b ⌋ ] . W e h av e ˜ h − s − 1 2 ≤ − b + ⌊ b ⌋ ≤ 0 and the output of this ne u ron at u, v is still − 1 . If the n -th n euron is in the first layer w e have T i,n,u,v = | h − s − 1 2 | Z by (6). Since the summ ands of h are in [ − Z, Z ] , th e value of h ca n chan ge by up to 2 · Z p er bitflip. Thus, after ⌊ b 2 ⌋ bitflips the value of ˜ h is in the interval [ h − Z · ⌊ b ⌋ , h + Z · ⌊ b ⌋ ] . But the p roof still works as above since | h − s − 1 2 | ≥ Z · b by assumption.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment