Towards Brain-Computer Interfaces for Drone Swarm Control

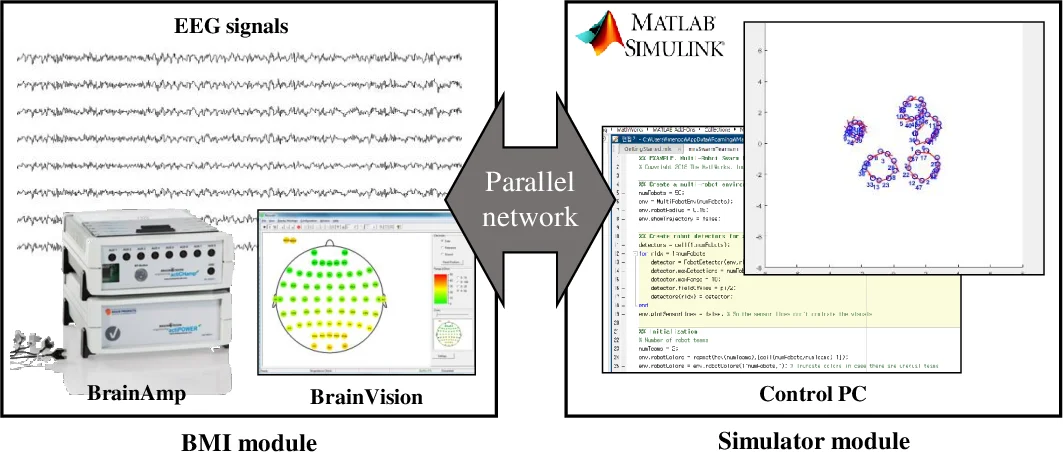

A noninvasive brain-computer interface (BCI) decodes brain signals to infer user intention. As demand for drone control grows, recent work has advanced BCI-based drone control systems. In particular, drone swarm control based on brain signals could benefit sectors such as military service and industrial disaster response. This paper presents a prototype brain-swarm interface system for a variety of scenarios using a visual imagery paradigm. We designed an experimental environment that acquires brain signals within a drone swarm control simulator. With this system, we collected electroencephalogram (EEG) signals for four different scenarios. Seven subjects participated in the experiment, and we evaluated classification performance using a basic machine learning algorithm. The grand-average classification accuracy was higher than the chance level. Hence, we could confirm the feasibility of an EEG-based drone swarm control system for performing high-level tasks.

💡 Research Summary

This paper investigates the feasibility of controlling a swarm of drones using a non‑invasive brain‑computer interface (BCI) based solely on electroencephalogram (EEG) signals. While previous BCI work has demonstrated control of single devices such as robotic arms, spellers, wheelchairs, and even individual quadcopters, the notion of steering multiple drones as a coordinated swarm remains largely unexplored. To address this gap, the authors designed a visual‑imagery paradigm that maps four high‑level swarm commands—Hovering, Splitting, Dispersing, and Aggregating—onto imagined visual scenes. These commands represent fundamental maneuvers required for swarm operation in simulated tactical scenarios.
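As a minimal sketch of how the four commands might be represented on the decoding side, the snippet below maps integer class labels to swarm commands. The `SwarmCommand` name, the label order, and the `command_from_label` helper are assumptions made for illustration; the source does not specify how classes are encoded.

```python
from enum import Enum


class SwarmCommand(Enum):
    """Four high-level swarm commands; the integer order is an assumption."""
    HOVERING = 0
    SPLITTING = 1
    DISPERSING = 2
    AGGREGATING = 3


def command_from_label(label: int) -> SwarmCommand:
    """Translate a classifier's integer output into a swarm command."""
    return SwarmCommand(label)


# With four equally likely classes, random guessing is right 1 time in 4.
CHANCE_LEVEL = 1 / len(SwarmCommand)
```

Keeping the commands in one enumeration means the chance level follows directly from the number of classes rather than being hard-coded.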

Seven healthy, BCI‑naïve participants (five males, two females, ages 22‑33) took part in the study. Each subject completed 200 trials (50 trials per class) while seated 90 cm from a monitor that presented visual cues. A trial consisted of a 3‑second rest period, a 3‑second visual cue/preparation phase, a 3‑second fixation period, and a 4‑second visual‑imagery period during which participants imagined the instructed swarm state. EEG was recorded with a 64‑channel BrainVision system (Ag/AgCl electrodes, 10‑20 layout), sampled at 1 kHz, with a 60 Hz notch filter and impedances kept below 10 kΩ.
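The trial structure above can be turned into sample offsets for epoching. The following is a sketch under stated assumptions: the phase names and the `phase_window` helper are hypothetical, and the session length ignores any breaks between trials, which the summary does not describe.

```python
FS_HZ = 1000  # sampling rate reported in the summary

# (phase name, duration in seconds), in the order described above
TRIAL_PHASES = [
    ("rest", 3.0),
    ("cue", 3.0),
    ("fixation", 3.0),
    ("imagery", 4.0),
]


def phase_window(phase, fs=FS_HZ):
    """Return (start, stop) sample offsets of a phase relative to trial onset."""
    start = 0.0
    for name, duration in TRIAL_PHASES:
        if name == phase:
            return int(start * fs), int((start + duration) * fs)
        start += duration
    raise KeyError(phase)


trial_seconds = sum(duration for _, duration in TRIAL_PHASES)
imagery_start, imagery_stop = phase_window("imagery")

# 200 trials per subject, back to back (breaks are not modeled here)
session_minutes = 200 * trial_seconds / 60
```

Under these assumptions each trial lasts 13 s, and the imagery epoch covers the final 4 s of the trial, which is the segment later used for feature extraction.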

Data preprocessing applied a zero‑phase 2nd‑order Butterworth band‑pass filter (8‑30 Hz), targeting the mu and beta rhythms known to be modulated by visual imagery. The continuous signal was segmented into 4‑second epochs aligned with the imagery interval. Spatial features were extracted using the Common Spatial Pattern (CSP) algorithm; the logarithmic variances of the first three and last three CSP components (six features total) were used for classification. A Linear Discriminant Analysis (LDA) classifier, employing a one‑versus‑rest scheme for the four classes, was trained and evaluated using 5‑fold cross‑validation so that accuracy is estimated on trials not seen during training.
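The filtering and CSP feature step can be sketched with NumPy and SciPy as follows. This is an illustration on synthetic data, not the authors' code: the function names, the trace normalization of covariances, and the one-class-versus-rest framing of CSP are assumptions. Note also that forward-backward filtering doubles the effective filter order, and the summary does not say whether "2nd-order" refers to the design order used here.

```python
import numpy as np
from scipy.linalg import eigh
from scipy.signal import butter, sosfiltfilt

FS = 1000  # sampling rate in Hz, as reported


def bandpass(epochs, low=8.0, high=30.0, fs=FS, order=2):
    """Zero-phase Butterworth band-pass along the last (time) axis."""
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, epochs, axis=-1)


def mean_cov(epochs):
    """Average trace-normalized spatial covariance over trials."""
    covs = [x @ x.T / np.trace(x @ x.T) for x in epochs]
    return np.mean(covs, axis=0)


def fit_csp(epochs_a, epochs_b, n_pairs=3):
    """Return 2 * n_pairs spatial filters, shape (filters, channels)."""
    ca, cb = mean_cov(epochs_a), mean_cov(epochs_b)
    # Generalized eigenproblem ca w = lambda (ca + cb) w, eigenvalues ascending
    _, vecs = eigh(ca, ca + cb)
    picks = np.r_[0:n_pairs, -n_pairs:0]  # first three and last three
    return vecs[:, picks].T


def log_var_features(filters, epochs):
    """Log-variance of each CSP component, shape (trials, filters)."""
    projected = np.einsum("fc,tcs->tfs", filters, epochs)
    return np.log(projected.var(axis=-1))


# Synthetic stand-in for EEG epochs: (trials, channels, samples)
rng = np.random.default_rng(0)
n_trials, n_channels, n_samples = 20, 8, 4 * FS
target = rng.standard_normal((n_trials, n_channels, n_samples))
rest = rng.standard_normal((n_trials, n_channels, n_samples))
target[:, 0, :] *= 3.0  # give the target class extra variance on channel 0

target_f, rest_f = bandpass(target), bandpass(rest)
W = fit_csp(target_f, rest_f)
features = log_var_features(W, np.concatenate([target_f, rest_f]))
```

The resulting six-column feature matrix is what a classifier such as scikit-learn's `LinearDiscriminantAnalysis` would consume. In a cross-validated evaluation, the CSP filters should be fitted on the training folds only, so that test trials do not influence the spatial filters.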

The results show a grand‑average classification accuracy of 36.7 % ± 4.6 % across all participants, significantly above the chance level of 25 % for a four‑class problem. Individual performance varied, with the best subject achieving 41.3 % and the lowest 28.4 %, suggesting that the quality of visual‑imagery execution influences decoding success. The authors argue that these findings demonstrate that EEG captured under a controlled simulator environment contains discriminative information sufficient to differentiate high‑level swarm commands, even when using a relatively simple machine‑learning pipeline.
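One common way to make "above chance" concrete is a one-sided binomial test against the 25 % guessing rate. The sketch below is an assumption-laden illustration: it treats a subject's 200 trials as independent and uses a 5 % significance level, and the summary does not state which statistical test, if any, the authors applied.

```python
from math import comb


def binom_tail(k, n, p):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def min_correct_above_chance(n_trials, n_classes, alpha=0.05):
    """Smallest number of correct trials whose one-sided p-value is below alpha."""
    p = 1.0 / n_classes
    for k in range(n_trials + 1):
        if binom_tail(k, n_trials, p) < alpha:
            return k
    return None


# 200 trials per subject, four classes (values taken from the summary)
threshold = min_correct_above_chance(n_trials=200, n_classes=4)
threshold_accuracy = threshold / 200
```

Comparing each subject's accuracy with `threshold_accuracy` gives a per-subject check that accounts for the finite number of trials, rather than relying on the nominal 25 % alone.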

Nevertheless, the reported accuracy remains modest for practical real‑time control. The paper acknowledges several limitations: (1) the use of a basic CSP‑LDA approach, which may not fully exploit the rich spatiotemporal dynamics of EEG; (2) a limited command set and a simulated rather than physical swarm; (3) variability in participants’ ability to perform visual imagery, which directly impacts classification performance. To overcome these constraints, the authors propose future work that includes (a) adopting deep‑learning architectures (e.g., convolutional neural networks, recurrent networks, or transformer models) to automatically learn robust features; (b) expanding the command vocabulary and investigating sequential or hierarchical command structures; (c) integrating real drones and establishing a low‑latency feedback loop for closed‑loop control; and (d) exploring hybrid BCI modalities (e.g., combining EEG with eye‑tracking or EMG) to improve reliability and reduce user fatigue.

In conclusion, the study provides the first empirical evidence that a visual‑imagery‑based EEG BCI can distinguish four fundamental swarm‑control commands above chance level, thereby laying groundwork for brain‑swarm interfaces applicable to military operations, industrial disaster response, and advanced AI‑driven autonomous systems. The authors anticipate that with improved signal processing, richer command sets, and real‑world testing, EEG‑driven swarm control could become a viable human‑machine interaction paradigm.
