Quantum tensor singular value decomposition with applications to recommendation systems

In this paper, we present a quantum singular value decomposition algorithm for third-order tensors inspired by the classical algorithm of tensor singular value decomposition (t-svd) and then extend it to order-$p$ tensors. It can be proved that the q…

Authors: Xiaoqiang Wang, Lejia Gu, Joseph Heung-wing Lee, Guofeng Zhang

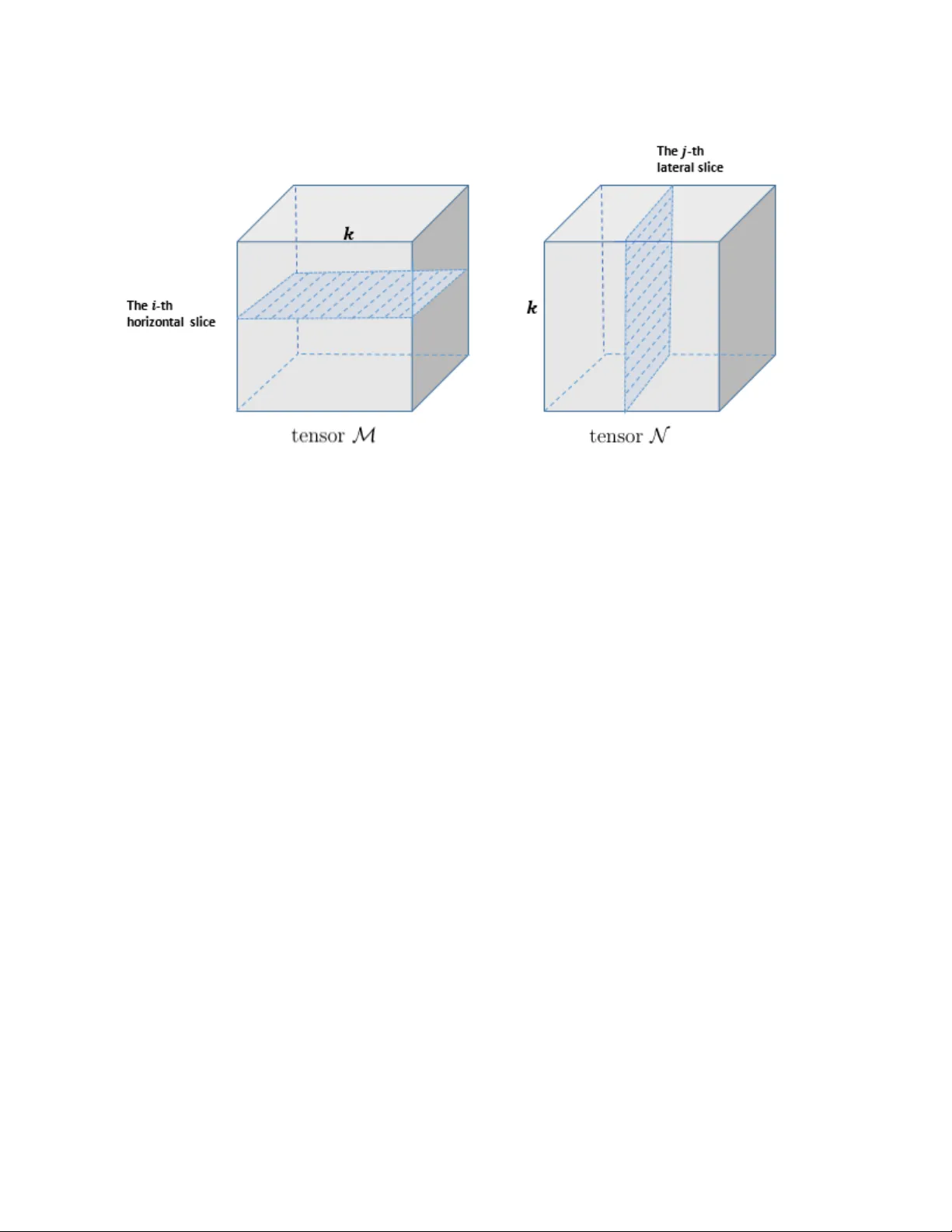

Xiaoqiang Wang∗, Lejia Gu†, Joseph Heung-wing Lee‡, and Guofeng Zhang§

Department of Applied Mathematics, The Hong Kong Polytechnic University, Hong Kong

February 4, 2020

Abstract

In this paper, we present a quantum singular value decomposition algorithm for third-order tensors inspired by the classical algorithm of tensor singular value decomposition (t-svd) and then extend it to order-$p$ tensors. It can be proved that the quantum version of the t-svd for a third-order tensor $\mathcal{A} \in \mathbb{R}^{N \times N \times N}$ achieves the complexity of $O(N\,\mathrm{polylog}(N))$, an exponential speedup compared with its classical counterpart. As an application, we propose a quantum algorithm for recommendation systems which incorporates the contextual situation of users into the personalized recommendation. We provide recommendations varying with contexts by measuring the output quantum state corresponding to an approximation of this user's preferences. This algorithm runs in expected time $O(N\,\mathrm{polylog}(N)\,\mathrm{poly}(k))$ if every frontal slice of the preference tensor has a good rank-$k$ approximation. At last, we provide a quantum algorithm for tensor completion based on a different truncation method which is tested to have a good performance in dynamic video completion.

Keywords: tensor singular value decomposition (t-svd), quantum algorithms, tensor completion.

1 Introduction

Tensor refers to a multi-dimensional array of objects. The order of a tensor is the number of modes. For example, $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$ is a third-order tensor of real numbers with dimension $N_i$ for mode $i$, $i = 1, 2, 3$, respectively.
Due to their flexibility in representing data, tensors have versatile applications in many areas such as image deblurring, video recovery, denoising, data completion, multi-partite quantum systems, networks and machine learning [13, 38, 39, 5, 40, 14, 23, 22, 29, 28, 10, 35, 25, 24, 26, 37, 34, 16]. Some of these practical problems are addressed by different ways of tensor decomposition, including, but not limited to, CANDECOMP/PARAFAC (CP) [3], TUCKER [33], higher-order singular value decomposition (HOSVD) [4, 7], tensor-train decomposition (TT) [20] and tensor singular value decomposition (t-svd) [13, 39, 16].

Plenty of research has been carried out on t-svd recently. The concept of t-svd was first proposed by Kilmer and Martin [13] for third-order tensors. Later, Martin et al. [17] extended it to higher-order tensors. The t-svd algorithm is superior to TUCKER and CP decompositions in the sense that it extends the familiar matrix svd strategy to tensors efficiently, thus avoiding the loss of information inherent in flattening tensors used in TUCKER and CP decompositions. One can obtain a t-svd by computing matrix svd in the Fourier domain, and it also allows other matrix factorization techniques like QR decomposition to be extended to tensors easily in a similar way.

∗ xiaoqiang.wang@connect.polyu.hk, † le-jia.gu@connect.polyu.hk, ‡ joseph.lee@polyu.edu.hk, § Guofeng.Zhang@polyu.edu.hk

In this paper, we propose a quantum version of t-svd. An important step in a (classical) t-svd algorithm is performing the fast Fourier transform (FFT) along the third mode of a tensor $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$, obtaining $\hat{\mathcal{A}}$ with computational complexity $O(N_3 \log N_3)$ for each tube $\mathcal{A}(i, j, :)$, $i = 0, \cdots, N_1 - 1$, $j = 0, \cdots, N_2 - 1$.
In the quantum t-svd algorithm to be proposed, this procedure is accelerated by the quantum Fourier transform (QFT) [19] whose complexity is only $O((\log N_3)^2)$. Moreover, due to quantum superposition, the QFT can be performed on the third register of the state $|\mathcal{A}\rangle$, which is equivalent to performing the FFT for all tubes of $\mathcal{A}$ in parallel, so the total complexity of this step is still $O((\log N_3)^2)$.

After performing the QFT, in order to further accelerate the second step in the classical t-svd algorithm (the matrix svd), we apply the quantum singular value estimation (QSVE) algorithm [12] to the frontal slices $\hat{\mathcal{A}}(:, :, i)$ in parallel with complexity $O(\mathrm{polylog}(N_1 N_2)/\epsilon_{\mathrm{SVE}})$, where $\epsilon_{\mathrm{SVE}}$ is the minimum precision of the estimated singular values of $\hat{\mathcal{A}}(:, :, i)$, $i = 0, \cdots, N_3 - 1$. Traditionally, the quantum singular value decomposition of matrices involves exponentiating non-sparse low-rank matrices and outputs the superposition state of singular values and their associated singular vectors in time $O(\mathrm{polylog}(N_1, N_2))$ [27]. However, to achieve polylogarithmic complexity, this Hamiltonian simulation method requires the matrix to be exponentiated to be low-rank, a condition that is difficult to satisfy in general. In our algorithm, we use the QSVE algorithm proposed in [12], where the matrix need not be low-rank, sparse or Hermitian, and the output is a superposition state of estimated singular values and their associated singular vectors. However, the original QSVE algorithm proposed in [12] has to be carefully modified to become a useful subroutine in our quantum tensor-svd algorithm. In fact, an important result, Theorem 4, is developed to address this tricky issue.
In Section 3.2, we show that the proposed quantum t-svd algorithm for tensors $\mathcal{A} \in \mathbb{R}^{N \times N \times N}$ ($N_1 = N_2 = N_3 = N$ for simplification), Algorithm 2, achieves the complexity of $O(N\,\mathrm{polylog}(N)/\epsilon_{\mathrm{SVE}})$, a time exponentially faster than its classical counterpart $O(N^4)$. In Section 3.3, we extend the quantum t-svd algorithm to order-$p$ tensors.

In [12], Kerenidis and Prakash designed a quantum algorithm for recommendation systems modeled by an $m \times n$ preference matrix, which makes recommendations by just sampling from an approximation of the preference matrix. Therefore, the running time is only $O(\mathrm{poly}(k)\,\mathrm{polylog}(mn))$ if the preference matrix has a good rank-$k$ approximation. To achieve this, they projected a state corresponding to a user's preferences to the approximated row space spanned by singular vectors with singular values greater than the chosen threshold. After measuring this projected state in the computational basis, they obtained the recommended product index for the input user.

In a recommendation system, the task is to predict a user's preferences for a product and then make recommendations. Most recommendation systems do not take context into account. In contrast, context-aware recommendation systems incorporate the contextual situation of a user into personalized recommendation, i.e., the product recommended to a user varies with the context (time, location, etc.). Thus, taking context into account renders a dynamic recommendation system of three elements, user, product and context, which can be neatly modeled by a third-order tensor. We apply our quantum tensor-svd algorithm to these context-aware recommendation systems.
Since the product that a user preferred in a certain context is very likely to affect the recommendation for him/her in other contexts, the t-svd factorization technique suits the problem very well, because in t-svd the QFT is performed first to bind a user's preferences in different contexts together.

t-svd factorization approaches have been shown to have better performance than other tensor decomposition techniques, such as HOSVD, when applied to facial recognition [8]. They also perform well in tensor completion [38]. However, the computational cost of the t-svd is too high. Compared with the classical t-svd with high complexity, our quantum t-svd is able to both model the context information and reduce the computational complexity. Indeed, our quantum recommendation systems algorithm provides recommendations for a user $i$ by just measuring the output quantum state corresponding to an approximation of the $i$-th frontal slice of the preference tensor. It is designed based on the low-rank tensor reconstruction using t-svd, that is, the full preference tensor can be approximated by the truncated t-svd of the subsample tensor. We also show that this new quantum recommendation systems algorithm is exponentially faster than its classical counterpart.

The rest of the paper is organized as follows. The classical t-svd algorithm and several related concepts are introduced in Section 2.1; Section 2.2 summarizes the quantum singular value estimation algorithm proposed in [12]. Section 3 provides our main algorithm, quantum t-svd, and its complexity analysis, then extends this algorithm to order-$p$ tensors. In Section 4, we propose a quantum algorithm for context-aware recommendation systems, whose performance and complexity are analyzed in Sections 4.3 and 4.4 respectively.
We prove that for a specified user $i$ to be recommended products, the output state corresponds to an approximation of this user's preference information. Therefore, measuring the output state in the computational basis yields a good recommendation for user $i$ with high probability. In Section 4.5, we consider a tensor completion problem and design a quantum algorithm which is similar to Algorithm 4 but in which truncation is performed in another way.

Notation. In this paper, script letters are used to denote tensors. Capital nonscript letters are used to represent matrices, and boldface lower case letters refer to vectors. For a third-order tensor $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$, subtensors are formed when a subset of indices is fixed. Specifically, a tube of size $1 \times 1 \times N_3$ can be regarded as a vector and it is defined by fixing all indices but the last one, e.g., $\mathcal{A}(i, j, :)$. A slice of a tensor $\mathcal{A}$ can be regarded as a matrix defined by fixing one index, e.g., $\mathcal{A}(i, :, :)$, $\mathcal{A}(:, i, :)$, $\mathcal{A}(:, :, i)$ represent the $i$-th horizontal, lateral, frontal slice respectively. We use $A^{(i)}$ to denote the $i$-th frontal slice $\mathcal{A}(:, :, i)$, $i = 0, \cdots, N_3 - 1$. The $i$-th row of the matrix $A$ is denoted by $A_i$. The tensor after the Fourier transform (the FFT for the classical t-svd or the QFT for the quantum t-svd) along the third mode of $\mathcal{A}$ is denoted by $\hat{\mathcal{A}}$ and its $m$-th frontal slice is $\hat{A}^{(m)}$.

2 Preliminaries

In this preliminary section, we first review the definition of the t-product and the classical (namely, non-quantum) t-svd algorithm proposed by Kilmer et al. [13] in 2011. Then in Section 2.2, we briefly review the quantum singular value estimation algorithm (QSVE) proposed by Kerenidis and Prakash [12] in 2017.
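The indexing conventions of the Notation paragraph can be made concrete with a small NumPy sketch. The tensor sizes and variable names below are illustrative assumptions, not part of the paper; indices are zero-based, as in the text.

```python
import numpy as np

# Hypothetical small tensor with N1 = 2, N2 = 3, N3 = 4 (sizes chosen for illustration).
A = np.arange(24, dtype=float).reshape(2, 3, 4)

tube = A[0, 1, :]        # tube A(i, j, :), a vector of length N3
horizontal = A[0, :, :]  # horizontal slice A(i, :, :)
lateral = A[:, 1, :]     # lateral slice A(:, j, :)
frontal = A[:, :, 2]     # frontal slice A^(2) = A(:, :, 2)

# Fourier transform along the third mode; A_hat[:, :, m] plays the role of
# the m-th frontal slice of A-hat in the classical setting.
A_hat = np.fft.fft(A, axis=2)
```

Here `A_hat[:, :, 0]` is simply the sum of all frontal slices of `A`, which is a quick sanity check on the transform axis.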
2.1 The t-svd algorithm based on t-product

In this subsection, we first review the definition of circular convolution between vectors, based on which we present the t-product between tensors. Finally, we present a t-svd algorithm.

Definition 1. Given a vector $u \in \mathbb{R}^{N}$ and a tensor $\mathcal{B} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$ with frontal slices $B^{(\ell)}$, $\ell = 0, \cdots, N_3 - 1$, the matrices $\mathrm{circ}(u)$ and $\mathrm{circ}(\mathcal{B})$ are defined as

$$\mathrm{circ}(u) \triangleq \begin{bmatrix} u_0 & u_{N-1} & \cdots & u_1 \\ u_1 & u_0 & \cdots & u_2 \\ \vdots & \vdots & \ddots & \vdots \\ u_{N-1} & u_{N-2} & \cdots & u_0 \end{bmatrix}, \qquad \mathrm{circ}(\mathcal{B}) \triangleq \begin{bmatrix} B^{(0)} & B^{(N_3-1)} & \cdots & B^{(1)} \\ B^{(1)} & B^{(0)} & \cdots & B^{(2)} \\ \vdots & \vdots & \ddots & \vdots \\ B^{(N_3-1)} & B^{(N_3-2)} & \cdots & B^{(0)} \end{bmatrix},$$

respectively.

Definition 2. Let $u, v \in \mathbb{R}^{N}$. The circular convolution between $u$ and $v$ produces a vector $x$ of the same size, defined as $x \equiv u \circledast v \triangleq \mathrm{circ}(u)\, v$.

As a circulant matrix can be diagonalized by means of the fast Fourier transform (FFT), from Definition 2 we have $\mathrm{FFT}(x) = \mathrm{diag}(\mathrm{FFT}(u))\,\mathrm{FFT}(v)$, where $\mathrm{diag}(u)$ returns a square diagonal matrix with the elements of the vector $u$ on the main diagonal. $\mathrm{FFT}(u)$ computes the DFT of $u$, i.e., if $\hat{u} = \mathrm{FFT}(u)$, then $\hat{u}_k = \sum_{j=0}^{N-1} e^{-2 i \pi k j / N} u_j$, and it reduces the complexity of computing the DFT from $O(N^2)$ to only $O(N \log N)$. The next result formalizes the above discussion.

Theorem 1. [32] (Cyclic Convolution Theorem) Given $u, v \in \mathbb{R}^{N}$, let $x = u \circledast v$. We have

$$\mathrm{FFT}(x) = \mathrm{FFT}(u) \odot \mathrm{FFT}(v), \tag{1}$$

where $\odot$ is the Hadamard product.

If a tensor $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$ is regarded as an $N_1 \times N_2$ matrix of tubes of dimension $N_3$, whose $(i, j)$-th entry (a tube) is $\mathcal{A}(i, j, :)$, then based on the definition of circular convolution between vectors, the t-product between tensors can be defined.

Definition 3. [13] Let $\mathcal{M} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$ and $\mathcal{N} \in \mathbb{R}^{N_2 \times N_4 \times N_3}$. The t-product $\mathcal{M} * \mathcal{N}$ is an $N_1 \times N_4 \times N_3$ tensor, denoted by $\mathcal{A}$, whose $(i, j)$-th tube $\mathcal{A}(i, j, :)$ is the sum of the circular convolutions between corresponding tubes in the $i$-th horizontal slice of the tensor $\mathcal{M}$ and the $j$-th lateral slice of the tensor $\mathcal{N}$, i.e.,

$$\mathcal{A}(i, j, :) = \sum_{k=0}^{N_2-1} \mathcal{M}(i, k, :) \circledast \mathcal{N}(k, j, :). \tag{2}$$

Figure 1: The illustration of the t-product $\mathcal{M} * \mathcal{N}$ in Definition 3.

According to Theorem 1 and Definition 3, we have

$$\mathrm{FFT}(\mathcal{A}(i, j, :)) = \sum_{k=0}^{N_2-1} \mathrm{FFT}(\mathcal{M}(i, k, :)) \odot \mathrm{FFT}(\mathcal{N}(k, j, :)), \tag{3}$$

for $i = 0, \cdots, N_1 - 1$, $j = 0, \cdots, N_4 - 1$. Let $\hat{\mathcal{A}}$ be the tensor whose $(i, j)$-th tube is $\mathrm{FFT}(\mathcal{A}(i, j, :))$. Then equation (3) becomes $\hat{\mathcal{A}}(i, j, :) = \sum_{k=0}^{N_2-1} \hat{\mathcal{M}}(i, k, :) \odot \hat{\mathcal{N}}(k, j, :)$, which can also be written in the form $\hat{A}^{(\ell)}(i, j) = \sum_{k=0}^{N_2-1} \hat{M}^{(\ell)}(i, k)\, \hat{N}^{(\ell)}(k, j)$ for the $\ell$-th frontal slices of these tensors. Therefore, $\hat{A}^{(\ell)} = \hat{M}^{(\ell)} \hat{N}^{(\ell)}$. The following theorem summarizes the idea stated above.

Theorem 2. [13] For any tensors $\mathcal{M} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$ and $\mathcal{N} \in \mathbb{R}^{N_2 \times N_4 \times N_3}$, we have

$$\mathcal{A} = \mathcal{M} * \mathcal{N} \iff \hat{A}^{(\ell)} = \hat{M}^{(\ell)} \hat{N}^{(\ell)} \tag{4}$$

for $\ell = 0, 1, \cdots, N_3 - 1$. Moreover, for another tensor $\mathcal{T} \in \mathbb{R}^{N_4 \times N_5 \times N_3}$, we have

$$\mathcal{A} = \mathcal{M} * \mathcal{N} * \mathcal{T} \iff \hat{A}^{(\ell)} = \hat{M}^{(\ell)} \hat{N}^{(\ell)} \hat{T}^{(\ell)}, \tag{5}$$

for $\ell = 0, \cdots, N_3 - 1$.

Now we can get the tensor decomposition for a tensor $\mathcal{A}$ using the t-product by performing matrix factorization strategies on $\hat{A}^{(\ell)}$. For example, the tensor QR decomposition $\mathcal{A} = \mathcal{Q} * \mathcal{R}$ is defined as performing the matrix QR decomposition on each frontal slice of the tensor $\hat{\mathcal{A}}$, i.e., $\hat{A}^{(\ell)} = \hat{Q}^{(\ell)} \cdot \hat{R}^{(\ell)}$, for $\ell = 0, \cdots, N_3 - 1$, where $\hat{Q}^{(\ell)}$ is an orthogonal matrix and $\hat{R}^{(\ell)}$ is an upper triangular matrix [9]. If we compute the matrix svd on $\hat{A}^{(\ell)}$, i.e., $\hat{A}^{(\ell)} = \hat{U}^{(\ell)} \hat{S}^{(\ell)} \hat{V}^{(\ell)\dagger}$, the t-svd of the tensor $\mathcal{A}$ is obtained; see Algorithm 1.
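Theorems 1 and 2 can be checked numerically. The following NumPy sketch, with function names that are our own illustrative choices, computes the t-product once directly from equation (2) and once slice by slice in the Fourier domain as in (4); under the stated definitions the two results should agree up to floating-point error.

```python
import numpy as np

def circ(u):
    """Circulant matrix of Definition 1: entry (i, j) is u[(i - j) mod N]."""
    n = len(u)
    return np.array([[u[(i - j) % n] for j in range(n)] for i in range(n)])

def t_product_direct(M, N):
    """t-product via equation (2): sums of circular convolutions of tubes."""
    n1, n2, n3 = M.shape
    n4 = N.shape[1]
    A = np.zeros((n1, n4, n3))
    for i in range(n1):
        for j in range(n4):
            for k in range(n2):
                A[i, j, :] += circ(M[i, k, :]) @ N[k, j, :]
    return A

def t_product_fft(M, N):
    """t-product via Theorem 2: matrix products of frontal slices in the Fourier domain."""
    M_hat = np.fft.fft(M, axis=2)
    N_hat = np.fft.fft(N, axis=2)
    A_hat = np.einsum("ikl,kjl->ijl", M_hat, N_hat)
    # The inputs are real, so the imaginary part is only rounding noise.
    return np.real(np.fft.ifft(A_hat, axis=2))
```

For random real inputs of compatible shapes, `np.allclose(t_product_direct(M, N), t_product_fft(M, N))` is expected to hold; the FFT route replaces the triple loop of circular convolutions by $N_3$ ordinary matrix products.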
Before presenting the t-svd algorithm for third-order tensors, we first introduce some related definitions.

Definition 4. (tensor transpose) [13] The transpose of a tensor $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$, denoted $\mathcal{A}^T$, is obtained by transposing all the frontal slices and then reversing the order of the transposed frontal slices 2 through $N_3$.

Definition 5. (tensor Frobenius norm) [13] The Frobenius norm of a third-order tensor $\mathcal{A}$ is defined as $\|\mathcal{A}\|_F = \sqrt{\sum_{i,j,k} |\mathcal{A}(i, j, k)|^2}$.

Definition 6. (identity tensor) [13] The identity tensor $\mathcal{I} \in \mathbb{R}^{N_1 \times N_1 \times N_3}$ is a tensor whose first frontal slice $I^{(0)}$ is an $N_1 \times N_1$ identity matrix and all the other frontal slices are zero matrices.

Definition 7. (orthogonal tensor) [13] A tensor $\mathcal{U} \in \mathbb{R}^{N_1 \times N_1 \times N_3}$ is an orthogonal tensor if it satisfies $\mathcal{U}^T * \mathcal{U} = \mathcal{U} * \mathcal{U}^T = \mathcal{I}$.

The tensor transpose defined in Definition 4 has the same property as the matrix transpose, e.g., $(\mathcal{A} * \mathcal{B})^T = \mathcal{B}^T * \mathcal{A}^T$. Similarly, just like orthogonal matrices, the orthogonality defined in Definition 7 preserves the Frobenius norm of a tensor, i.e., $\|\mathcal{Q} * \mathcal{A}\|_F = \|\mathcal{A}\|_F$ if $\mathcal{Q}$ is an orthogonal tensor. Moreover, when the tensor is two-dimensional, Definition 7 coincides with the definition of orthogonal matrices. Finally, note that frontal slices of an orthogonal tensor are not necessarily orthogonal.

Theorem 3. [13] (tensor singular value decomposition (t-svd)) For $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$, its t-svd is given by $\mathcal{A} = \mathcal{U} * \mathcal{S} * \mathcal{V}^T$, where $\mathcal{U} \in \mathbb{R}^{N_1 \times N_1 \times N_3}$ and $\mathcal{V} \in \mathbb{R}^{N_2 \times N_2 \times N_3}$ are orthogonal tensors, and every frontal slice of $\mathcal{S} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$ is a diagonal matrix.

There are several versions of t-svd algorithms. In what follows we present the one proposed in [13].

Algorithm 1 t-svd for third-order tensors [13]
Input: $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$.
Output: $\mathcal{U} \in \mathbb{R}^{N_1 \times N_1 \times N_3}$, $\mathcal{S} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$, $\mathcal{V} \in \mathbb{R}^{N_2 \times N_2 \times N_3}$.
  $\hat{\mathcal{A}} = \mathrm{fft}(\mathcal{A}, [\,], 3)$;
  for $i = 0, \cdots, N_3 - 1$ do
    $[U, S, V] = \mathrm{svd}(\hat{\mathcal{A}}(:, :, i))$;
    $\hat{\mathcal{U}}(:, :, i) = U$; $\hat{\mathcal{S}}(:, :, i) = S$; $\hat{\mathcal{V}}(:, :, i) = V$;
  end for
  $\mathcal{U} = \mathrm{ifft}(\hat{\mathcal{U}}, [\,], 3)$; $\mathcal{S} = \mathrm{ifft}(\hat{\mathcal{S}}, [\,], 3)$; $\mathcal{V} = \mathrm{ifft}(\hat{\mathcal{V}}, [\,], 3)$.

Remark 1. In the t-svd literature, the diagonal elements of the tensor $\mathcal{S}$ are called the singular values of $\mathcal{A}$. Moreover, the $\ell_2$ norms of the nonzero tubes $\mathcal{S}(i, i, :)$ are in descending order, i.e.,

$$\|\mathcal{S}(1, 1, :)\|_2 \geq \|\mathcal{S}(2, 2, :)\|_2 \geq \cdots \geq \|\mathcal{S}(\min(N_1, N_2), \min(N_1, N_2), :)\|_2.$$

However, it can be noticed that the diagonal elements of $\mathcal{S}$ may be unordered and even negative due to the inverse FFT. As a result, when doing tensor truncation in Section 4 to get quantum recommendation systems, we use $\hat{\mathcal{S}}$ instead of $\mathcal{S}$, as the diagonal elements of the former are non-negative and ordered in descending order.

Next, we present the definition of the tensor nuclear norm (TNN), which is frequently used as an objective function to be minimized in many optimization algorithms for data completion [38]-[40]. Since directly minimizing the tensor multi-rank (defined as a vector whose $i$-th entry is the rank of $\hat{A}^{(i)}$) is NP-hard, some works approximate the rank function by its convex surrogate, i.e., TNN [39]. It is proved that TNN is the tightest convex relaxation to the $\ell_1$ norm of the tensor multi-rank [39], and the problem is reduced to a convex one when transformed into minimizing TNN.

Definition 8. [39] (Tensor nuclear norm) The tensor nuclear norm (TNN) of $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$, denoted by $\|\mathcal{A}\|_{TNN}$, is defined as the sum of the singular values of $\hat{A}^{(\ell)}$, the $\ell$-th frontal slice of $\hat{\mathcal{A}}$, i.e., $\|\mathcal{A}\|_{TNN} = \sum_{\ell=0}^{N_3-1} \|\hat{A}^{(\ell)}\|_*$, where $\|\cdot\|_*$ refers to the matrix nuclear norm, namely the sum of the singular values.
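The Fourier-domain part of Algorithm 1 and the TNN of Definition 8 can be sketched in NumPy as follows. This is a minimal illustration under our own assumptions, not the authors' implementation: the function names are hypothetical, and the sketch deliberately keeps the factors $\hat{\mathcal{U}}$, $\hat{\mathcal{S}}$, $\hat{\mathcal{V}}$ in the Fourier domain, because independently computed slice-wise SVDs carry arbitrary phases and their inverse FFTs need not be real.

```python
import numpy as np

def t_svd(A):
    """Slice-wise svd of A-hat (the loop of Algorithm 1); returns Fourier-domain factors."""
    n1, n2, n3 = A.shape
    r = min(n1, n2)
    A_hat = np.fft.fft(A, axis=2)
    U_hat = np.zeros((n1, n1, n3), dtype=complex)
    S_hat = np.zeros((n1, n2, n3), dtype=complex)
    V_hat = np.zeros((n2, n2, n3), dtype=complex)
    for m in range(n3):
        U, s, Vh = np.linalg.svd(A_hat[:, :, m])
        U_hat[:, :, m] = U
        S_hat[np.arange(r), np.arange(r), m] = s  # non-negative, descending
        V_hat[:, :, m] = Vh.conj().T
    return U_hat, S_hat, V_hat

def reconstruct(U_hat, S_hat, V_hat):
    """Rebuild A from A_hat^(m) = U_hat^(m) S_hat^(m) V_hat^(m)^dagger for every m."""
    A_hat = np.einsum("ikm,klm,jlm->ijm", U_hat, S_hat, V_hat.conj())
    return np.real(np.fft.ifft(A_hat, axis=2))

def tensor_nuclear_norm(A):
    """TNN of Definition 8: sum of the singular values of all frontal slices of A-hat."""
    A_hat = np.fft.fft(A, axis=2)
    return sum(np.linalg.svd(A_hat[:, :, m], compute_uv=False).sum()
               for m in range(A.shape[2]))
```

For a real input tensor `A`, `reconstruct(*t_svd(A))` should return `A` up to rounding, and `tensor_nuclear_norm(A)` equals the sum of the diagonal entries of all slices of `S_hat`, which is the quantity Remark 1 prefers over the entries of $\mathcal{S}$.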
An important application of the t-svd algorithm is the optimality of the truncated t-svd for data approximation, which is the theoretical basis of our quantum algorithms for recommendation systems and tensor completion to be developed in Sections 4.2 and 4.5 respectively. This property is stated in the following lemma.

Lemma 1. [13, 36] Suppose the t-svd of the tensor $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$ is $\mathcal{A} = \mathcal{U} * \mathcal{S} * \mathcal{V}^T$. Then we have

$$\mathcal{A} = \sum_{i=0}^{\min(N_1, N_2)-1} \mathcal{U}(:, i, :) * \mathcal{S}(i, i, :) * \mathcal{V}(:, i, :)^T,$$

where the matrices $\mathcal{U}(:, i, :)$ and $\mathcal{V}(:, i, :)$ and the vector $\mathcal{S}(i, i, :)$ are regarded as tensors of order 3. For $1 \leq k < \min(N_1, N_2)$ define $\mathcal{A}_k \triangleq \sum_{i=0}^{k-1} \mathcal{U}(:, i, :) * \mathcal{S}(i, i, :) * \mathcal{V}(:, i, :)^T$. Then

$$\mathcal{A}_k = \arg\min_{\tilde{\mathcal{A}} \in \mathcal{M}_k} \|\mathcal{A} - \tilde{\mathcal{A}}\|_F,$$

where $\mathcal{M}_k = \{\mathcal{X} * \mathcal{Y} \mid \mathcal{X} \in \mathbb{R}^{N_1 \times k \times N_3}, \mathcal{Y} \in \mathbb{R}^{k \times N_2 \times N_3}\}$. Therefore, $\|\mathcal{A} - \mathcal{A}_k\|_F$ is the theoretical minimal error, given by

$$\|\mathcal{A} - \mathcal{A}_k\|_F = \sqrt{\sum_{i=k}^{\min(N_1, N_2)-1} \|\mathcal{S}(i, i, :)\|_2^2}.$$

2.2 Quantum singular value estimation

Kerenidis and Prakash [12] proposed a quantum algorithm to estimate the singular values of a matrix, named the quantum singular value estimation (QSVE). With the introduction of a data structure in which the rows of the matrix are stored (see Lemma 2 below), the QSVE algorithm can prepare the quantum states corresponding to the rows of the matrix efficiently.

Lemma 2. [12] Consider a matrix $A \in \mathbb{R}^{N_1 \times N_2}$ with $\omega$ nonzero entries. Let $A_i$ be its $i$-th row, and $s_A = \frac{1}{\|A\|_F} \left[\|A_0\|_2, \|A_1\|_2, \cdots, \|A_{N_1-1}\|_2\right]^T$. There exists a data structure storing the matrix $A$ in $O(\omega \log^2(N_1 N_2))$ space such that a quantum algorithm having access to this data structure can perform the mapping $U_P : |i\rangle |0\rangle \to |i\rangle |A_i\rangle$, for $i = 0, \cdots, N_1 - 1$, and $U_Q : |0\rangle |j\rangle \to |s_A\rangle |j\rangle$, for $j = 0, \cdots, N_2 - 1$, in time $\mathrm{polylog}(N_1 N_2)$.
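The classical quantities behind Lemma 2 are easy to write down explicitly. The sketch below (the function name is a hypothetical choice, and it assumes every row of $A$ is nonzero) computes the amplitude vectors of the row states $|A_i\rangle$ and of $|s_A\rangle$, and can be used to confirm that $\sum_i (s_A)_i\, |i\rangle |A_i\rangle$ is the vectorized matrix $A / \|A\|_F$. It says nothing about the tree data structure itself or its polylogarithmic access time.

```python
import numpy as np

def row_states_and_norm_vector(A):
    """Return the normalized rows |A_i> and the vector s_A of Lemma 2 as arrays."""
    row_norms = np.linalg.norm(A, axis=1)   # ||A_i||_2 for every row
    s_A = row_norms / np.linalg.norm(A)     # divide by the Frobenius norm ||A||_F
    rows = A / row_norms[:, None]           # assumes no row is identically zero
    return rows, s_A
```

With `rows, s_A = row_states_and_norm_vector(A)`, the product `s_A[:, None] * rows` reproduces `A / np.linalg.norm(A)`, i.e. the amplitudes of the state $|A\rangle$ used later in Remark 2.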
The explicit description of the QSVE is given in [12], and the following lemma summarizes the main ideas. Unlike the singular value decomposition technique proposed in Refs. [15, 27], which requires the matrix $A$ to be exponentiated to be low-rank, in the QSVE algorithm the matrix $A$ is not necessarily sparse or low-rank.

Lemma 3. [12] Let $A \in \mathbb{R}^{N_1 \times N_2}$ and $x \in \mathbb{R}^{N_2}$ be stored in the data structure as mentioned in Lemma 2. Let the singular value decomposition of $A$ be $A = \sum_{\ell=0}^{r-1} \sigma_\ell |u_\ell\rangle \langle v_\ell|$, where $r = \min(N_1, N_2)$. The input state $|x\rangle$ can be represented in the eigenstates of $A$, i.e., $|x\rangle = \sum_{\ell=0}^{N_2-1} \beta_\ell |v_\ell\rangle$. Let $\epsilon > 0$ be the precision parameter. Then there is a quantum algorithm, denoted as $U_{\mathrm{SVE}}$, that runs in $O(\mathrm{polylog}(N_1 N_2)/\epsilon)$ and achieves

$$U_{\mathrm{SVE}}(|x\rangle |0\rangle) = \sum_{\ell=0}^{N_2-1} \beta_\ell |v_\ell\rangle |\overline{\sigma}_\ell\rangle,$$

where $\overline{\sigma}_\ell$ is the estimated value of $\sigma_\ell$ satisfying $|\overline{\sigma}_\ell - \sigma_\ell| \leq \epsilon \|A\|_F$ for all $\ell$ with probability at least $1 - 1/\mathrm{poly}(N_2)$.

Remark 2. In the QSVE algorithm on the matrix $A$ stated in Lemma 3, we can also choose the input state as $|A\rangle = \frac{1}{\|A\|_F} \sum_{\ell=0}^{r-1} \sigma_\ell |u_\ell\rangle |v_\ell\rangle$, corresponding to the vectorized form of the normalized matrix $\frac{A}{\|A\|_F} = \frac{1}{\|A\|_F} \sum_{i,j} a_{ij} |i\rangle \langle j|$ represented in the svd form. This representation of the input state is adopted in Section 3. Note that we can express the state $|A\rangle$ in the above form even if we do not know the singular pairs of $A$. According to Lemma 3, we can obtain $\overline{\sigma}_\ell$, an estimation of $\sigma_\ell$, stored in the third register superposed with the singular pair $\{|u_\ell\rangle, |v_\ell\rangle\}$ after performing $U_{\mathrm{SVE}}$, i.e., the output state is $\frac{1}{\|A\|_F} \sum_{\ell=0}^{r-1} \sigma_\ell |u_\ell\rangle |v_\ell\rangle |\overline{\sigma}_\ell\rangle$, where $|\overline{\sigma}_\ell - \sigma_\ell| \leq \epsilon \|A\|_F$ for all $\ell = 0, \cdots, r - 1$.

3 Quantum t-svd algorithms

In this section, we present our quantum t-svd algorithm for third-order tensors.
We also show that the running time of this algorithm is exponentially faster than its classical counterpart, provided that every frontal slice of the tensor is stored in the data structure introduced in Lemma 2 and the tensor as a quantum state can be efficiently prepared. We first present the algorithm (Algorithm 2) in Section 3.1, then we analyze its computational complexity in Section 3.2. Finally, we extend it to order-$p$ tensors in Section 3.3.

For a third-order tensor $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$, we assume that every frontal slice of $\mathcal{A}$ is stored in the tree structure introduced in Lemma 2 such that the algorithm having quantum access to this data structure can return the desired quantum state.

Assumption 1. Let the tensor $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$, where $N_i = 2^{n_i}$ with $n_i$ being the number of qubits on the $i$-th mode, $i = 1, 2, 3$. Assume that we have an efficient quantum algorithm (e.g., QRAM) to achieve the quantum state preparation

$$|\mathcal{A}\rangle = \frac{1}{\|\mathcal{A}\|_F} \sum_{i=0}^{N_1-1} \sum_{j=0}^{N_2-1} \sum_{k=0}^{N_3-1} \mathcal{A}(i, j, k)\, |i\rangle |j\rangle |k\rangle \tag{6}$$

efficiently. That is, we can encode $\mathcal{A}(i, j, k)$ as the amplitude of a three-partite system. Without loss of generality, we assume that $\|\mathcal{A}\|_F = 1$.

3.1 Quantum t-svd for third-order tensors

In this section, we first present our quantum t-svd algorithm, Algorithm 2, for third-order tensors, then explain each step in detail.

Algorithm 2 Quantum t-svd for third-order tensors
Input: tensor $\mathcal{A} \in \mathbb{R}^{N_1 \times N_2 \times N_3}$ prepared in a quantum state $|\mathcal{A}\rangle$ in (6), precision $\epsilon^{(m)}_{\mathrm{SVE}}$, $m = 0, \cdots, N_3 - 1$, $r = \min\{N_1, N_2\}$.
Output: the state $|\phi\rangle$.
1: Perform the QFT on the third register of the quantum state $|\mathcal{A}\rangle$, to obtain the state $|\hat{\mathcal{A}}\rangle$.
2: Perform the controlled-$U_{\mathrm{SVE}}$ on the state $|\hat{\mathcal{A}}\rangle$ to get the state

$$|\psi\rangle = \sum_{m=0}^{N_3-1} \left( \sum_{\ell=0}^{r-1} \hat{\sigma}^{(m)}_\ell\, |\hat{u}^{(m)}_\ell\rangle_c\, |\hat{v}^{(m)}_\ell\rangle_d\, |\overline{\hat{\sigma}}^{(m)}_\ell\rangle_a \right) |m\rangle_e. \tag{7}$$

3: Perform the inverse QFT on the last register of $|\psi\rangle$ and output the state

$$|\phi\rangle = \frac{1}{\sqrt{N_3}} \sum_{t,m=0}^{N_3-1} \sum_{\ell=0}^{r-1} \hat{\sigma}^{(m)}_\ell\, \omega^{-tm}\, |\hat{u}^{(m)}_\ell\rangle_c\, |\hat{v}^{(m)}_\ell\rangle_d\, |\overline{\hat{\sigma}}^{(m)}_\ell\rangle_a\, |t\rangle_e. \tag{8}$$

The quantum circuit of Algorithm 2 is shown in FIG. 2, where the block of $U^{(m)}_{\mathrm{SVE}}$, $m = 0, \cdots, N_3 - 1$, is illustrated in FIG. 3.

In Step 1, we consider the input state $|\mathcal{A}\rangle$ in (6) and perform the QFT on the third register of this state, obtaining

$$|\hat{\mathcal{A}}\rangle = \frac{1}{\sqrt{N_3}} \sum_{m=0}^{N_3-1} \sum_{i,j,k} \omega^{km} \mathcal{A}(i, j, k)\, |i\rangle_c |j\rangle_d |m\rangle_e, \tag{9}$$

where $\omega = e^{2\pi i / N_3}$. For every fixed $m$, the unnormalized state

$$\frac{1}{\sqrt{N_3}} \sum_{i,j,k} \omega^{km} \mathcal{A}(i, j, k)\, |i\rangle |j\rangle \tag{10}$$

in (9) corresponds to the matrix

$$\hat{A}^{(m)} = \frac{1}{\sqrt{N_3}} \sum_{i,j,k} \omega^{km} \mathcal{A}(i, j, k)\, |i\rangle \langle j|, \tag{11}$$

namely, the $m$-th frontal slice of the tensor $\hat{\mathcal{A}}$. Normalizing the state in (10) produces a quantum state

$$|\hat{A}^{(m)}\rangle = \frac{1}{\sqrt{N_3}\, \|\hat{A}^{(m)}\|_F} \sum_{i,j,k} \omega^{km} \mathcal{A}(i, j, k)\, |i\rangle_c |j\rangle_d. \tag{12}$$

Therefore, the state $|\hat{\mathcal{A}}\rangle$ in (9) can be rewritten as

$$|\hat{\mathcal{A}}\rangle = \sum_{m=0}^{N_3-1} \|\hat{A}^{(m)}\|_F\, |\hat{A}^{(m)}\rangle_{cd}\, |m\rangle_e. \tag{13}$$

Figure 2: Circuit of Algorithm 2. $U_{\mathcal{A}}$ is the unitary operator for preparing the state $|\mathcal{A}\rangle$. The QFT is denoted by $F$. The blocks $U^{(m)}_{\mathrm{SVE}}$ are further illustrated in FIG. 3.

Figure 3: The circuit of $U^{(m)}_{\mathrm{SVE}}$, $m = 0, \cdots, N_3 - 1$. The unitary operators $\hat{U}^{(m)}_Q$ and $W_m$ are defined in (45) and (47) in the proof of Theorem 4. $d = n_2 - 1$. $U_{f_m}$ is a unitary operator implemented through an oracle with a computable function $f_m(x) = \|\hat{A}^{(m)}\|_F \cos(x/2)$.

In Step 2, we design a controlled-$U_{\mathrm{SVE}}$ operation to estimate the singular values of $\hat{A}^{(m)}$ in parallel, $m = 0, \cdots, N_3 - 1$.
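The decomposition (13) on which the controlled operation acts can be checked classically on the amplitude level. The sketch below is only a simulation of Step 1 under our own assumptions (a random tensor of hypothetical size $2 \times 4 \times 8$, NumPy's orthonormal inverse FFT standing in for the QFT, since it uses the sign and scaling of $\omega^{km}/\sqrt{N_3}$ in (9)); it does not model any quantum hardware or the QSVE itself.

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.standard_normal((2, 4, 8))
A /= np.linalg.norm(A)  # Assumption 1: ||A||_F = 1, so entries act as amplitudes

# Amplitudes of |A-hat> in (9): (1/sqrt(N3)) * sum_k omega^{km} A(i, j, k),
# with omega = exp(2*pi*i/N3).
A_hat = np.fft.ifft(A, axis=2, norm="ortho")

# ||A_hat^(m)||_F for each m, and the normalized slice states of (12).
slice_norms = np.linalg.norm(A_hat, axis=(0, 1))
slice_states = A_hat / slice_norms  # assumes no slice is identically zero
```

Because the transform is unitary, `np.sum(slice_norms**2)` should equal 1, so the coefficients $\|\hat{A}^{(m)}\|_F$ in (13) form a valid set of amplitudes over the register $|m\rangle_e$.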
Denote the procedure of the QSVE on the matrix $\hat{A}^{(m)}$ as $U^{(m)}_{\mathrm{SVE}}$; this procedure is unitary [12]. Let the svd of $\hat{A}^{(m)}$ in (11) be $\sum_{\ell=0}^{r-1} \hat{\sigma}^{(m)}_\ell \hat{u}^{(m)}_\ell \hat{v}^{(m)\dagger}_\ell$, where $\hat{u}^{(m)}_\ell$ and $\hat{v}^{(m)}_\ell$ are the left and right singular vectors corresponding to the singular value $\hat{\sigma}^{(m)}_\ell$. The controlled-$U_{\mathrm{SVE}}$ is defined as $\sum_{m=0}^{N_3-1} U^{(m)}_{\mathrm{SVE}} \otimes |m\rangle \langle m|$, and when acting on the input $|\hat{\mathcal{A}}\rangle$ it has the effect of performing the unitary transformation $U^{(m)}_{\mathrm{SVE}}$ on the state $|\hat{A}^{(m)}\rangle$ for $m = 0, \cdots, N_3 - 1$ in parallel. That is,

$$\left( \sum_{m=0}^{N_3-1} U^{(m)}_{\mathrm{SVE}} \otimes |m\rangle \langle m| \right) |\hat{\mathcal{A}}\rangle = \sum_{m=0}^{N_3-1} \|\hat{A}^{(m)}\|_F \left( U^{(m)}_{\mathrm{SVE}} |\hat{A}^{(m)}\rangle_{cd} \right) |m\rangle_e. \tag{14}$$

Note that the corresponding input of $U^{(m)}_{\mathrm{SVE}}$ is $|\hat{A}^{(m)}\rangle$ instead of an arbitrary quantum state commonly used in some quantum svd algorithms [27]. There are three main reasons for selecting this state as the input. First, after Step 1, the state $|\hat{\mathcal{A}}\rangle$ is the superposition state of $|\hat{A}^{(m)}\rangle$, $m = 0, \cdots, N_3 - 1$. Hence, the controlled-$U_{\mathrm{SVE}}$ operation performs $U^{(m)}_{\mathrm{SVE}}$ on each matrix $\hat{A}^{(m)}$ using the input $|\hat{A}^{(m)}\rangle$ simultaneously, as shown in (14). Second, we keep the entire singular information $(\hat{\sigma}^{(m)}_\ell, \hat{u}^{(m)}_\ell, \hat{v}^{(m)}_\ell)$ of $\hat{A}^{(m)}$ together, and thus get a quantum svd for tensors that is formally similar to the matrix svd. Third, we do not need information unrelated to the tensor $\mathcal{A}$ (e.g., an arbitrary state) to be involved in the quantum t-svd algorithm.

Next, we focus on the result of $U^{(m)}_{\mathrm{SVE}} |\hat{A}^{(m)}\rangle$ in (14).
Following the idea of Remark 2, the input $|\hat{A}^{(m)}\rangle$ can be rewritten in the form $\sum_\ell \frac{\hat{\sigma}^{(m)}_\ell}{\|\hat{A}^{(m)}\|_F} |\hat{u}^{(m)}_\ell\rangle |\hat{v}^{(m)}_\ell\rangle$, where $\frac{\hat{\sigma}^{(m)}_\ell}{\|\hat{A}^{(m)}\|_F}$ is the scaled singular value of $\frac{\hat{A}^{(m)}}{\|\hat{A}^{(m)}\|_F}$, because $|\hat{A}^{(m)}\rangle$ is the normalized state corresponding to the matrix $\hat{A}^{(m)}$. Theorem 4 summarizes the above discussion and illustrates Step 2 of Algorithm 2. The proof of Theorem 4 is given in Appendix A.

Theorem 4. Given every frontal slice of a tensor $\mathcal{A}$ stored in the data structure (Lemma 2), there is a quantum algorithm, denoted by $U^{(m)}_{\mathrm{SVE}}$, that runs in time $O(\mathrm{polylog}(N_1 N_2)/\epsilon^{(m)}_{\mathrm{SVE}})$ using the input $|\hat{A}^{(m)}\rangle = \sum_{\ell=0}^{r-1} \frac{\hat{\sigma}^{(m)}_\ell}{\|\hat{A}^{(m)}\|_F} |\hat{u}^{(m)}_\ell\rangle_c |\hat{v}^{(m)}_\ell\rangle_d$ and outputs the state

$$\frac{1}{\|\hat{A}^{(m)}\|_F} \sum_{\ell=0}^{r-1} \hat{\sigma}^{(m)}_\ell\, |\hat{u}^{(m)}_\ell\rangle_c\, |\hat{v}^{(m)}_\ell\rangle_d\, |\overline{\hat{\sigma}}^{(m)}_\ell\rangle_a \tag{15}$$

with probability at least $1 - 1/\mathrm{poly}(N_2)$, where $(\hat{\sigma}^{(m)}_\ell, \hat{u}^{(m)}_\ell, \hat{v}^{(m)}_\ell)$ are the singular pairs of the matrix $\hat{A}^{(m)}$ in (11), and $\epsilon^{(m)}_{\mathrm{SVE}}$ is the precision such that $|\overline{\hat{\sigma}}^{(m)}_\ell - \hat{\sigma}^{(m)}_\ell| \leq \epsilon^{(m)}_{\mathrm{SVE}} \|\hat{A}^{(m)}\|_F$ for all $\ell = 0, \cdots, r - 1$.

Based on Theorem 4, the state in (14) becomes $|\psi\rangle$ in (7). Since we have to perform the QSVE on all $\hat{A}^{(m)}$, $m = 0, \cdots, N_3 - 1$, the running time of Step 2 is $O(N_3\, \mathrm{polylog}(N_1 N_2)/\epsilon_{\mathrm{SVE}})$, where $\epsilon_{\mathrm{SVE}} = \min_{0 \leq m \leq N_3 - 1} \epsilon^{(m)}_{\mathrm{SVE}}$.

In Step 3, the inverse QFT is performed on the last register of the state $|\psi\rangle$ in (7) to obtain the final quantum state $|\phi\rangle$ in (8).

In what follows, we interpret the final quantum state $|\phi\rangle$ produced by Algorithm 2.
First, according to Algorithm 1 for the classical t-svd, the singular values of the tensor $\mathcal{A}$ are $\sigma^{(k)}_\ell = \frac{1}{\sqrt{N_3}} \sum_{m=0}^{N_3-1} \omega^{-km} \hat{\sigma}^{(m)}_\ell$, $\ell = 0, \cdots, r - 1$, $k = 0, \cdots, N_3 - 1$, where the estimated values of $\hat{\sigma}^{(m)}_\ell$ are $\overline{\hat{\sigma}}^{(m)}_\ell$ stored in the third register of $|\phi\rangle$, i.e., Algorithm 2 can produce estimates of the singular values of the original tensor $\mathcal{A}$. Second, in terms of the circulant matrix $\mathrm{circ}(\mathcal{A})$ defined in Definition 1, $\frac{1}{\sqrt{N_3}} \sum_{t=0}^{N_3-1} \omega^{-tm} |t\rangle |\hat{v}^{(m)}_\ell\rangle$ is the right singular vector corresponding to its singular value $\hat{\sigma}^{(m)}_\ell$. Similarly, the corresponding left singular vector is $\frac{1}{\sqrt{N_3}} \sum_{t=0}^{N_3-1} \omega^{-tm} |t\rangle |\hat{u}^{(m)}_\ell\rangle$. Finally, the singular values of $\hat{A}^{(m)}$ have wider applications than the singular values of $\mathcal{A}$. For example, some low-rank tensor completion problems are solved by minimizing the TNN of the tensor, which is defined as the sum of all the singular values of $\hat{A}^{(m)}$ [39, 40]; see Definition 8. Moreover, the theoretical minimal error truncation is also based on the singular values of $\hat{A}^{(m)}$; see Lemma 1. Therefore, in Algorithm 2, we estimate the values of $\hat{\sigma}^{(m)}_\ell$, $m = 0, \cdots, N_3 - 1$, $\ell = 0, \cdots, r - 1$, and store them in the third register of the final state for future use.

Our quantum t-svd algorithm can be used as a subroutine of other algorithms, that is, it is suitable for specific applications where the singular values of $\hat{A}^{(m)}$ are used. For example, in Section 4, we introduce a quantum recommendation systems algorithm for third-order tensors which extracts the singular values of $\hat{A}^{(m)}$ and keeps only the greater ones. By doing so, the original tensor $\mathcal{A}$ can be approximated and we can recommend a product to a user according to this reconstructed preference information.

3.2 Complexity analysis

For simplification, we consider the tensor $\mathcal{A} \in \mathbb{R}^{N \times N \times N}$ with the same dimensions on each mode.
In Steps 1 and 3, performing the QFT or the inverse QFT in parallel on the third register of the state $|\mathcal{A}\rangle$ achieves the complexity $O((\log N)^2)$, compared with the complexity $O(N^3 \log N)$ of the FFT performed on the $N^2$ tubes of the tensor $\mathcal{A}$ in the classical t-svd algorithm. Moreover, in the classical t-svd, the complexity of performing the matrix svd (Step 2) for all frontal slices of $\hat{\mathcal{A}}$ is $O(N^4)$. In contrast, in our quantum t-svd algorithm, this step is accelerated by the QSVE, whose complexity is $O(\mathrm{polylog}(N) / \epsilon_{\mathrm{SVE}})$ on each frontal slice $\hat{A}^{(m)}$, $m = 0, \cdots, N-1$, where $\epsilon_{\mathrm{SVE}} = \min_{0 \le m \le N-1} \epsilon_{\mathrm{SVE}}^{(m)}$; hence Step 2 of our quantum t-svd algorithm achieves the complexity $O(N\, \mathrm{polylog}(N) / \epsilon_{\mathrm{SVE}})$. If we choose $\epsilon_{\mathrm{SVE}} = 1/\mathrm{polylog}(N)$, the total computational complexity of Algorithm 2 is $O(N\, \mathrm{polylog}(N))$, which is exponentially faster than the classical t-svd with $O(N^4)$.

3.3 Quantum t-svd for order-$p$ tensors

Following a similar procedure, we can extend the quantum t-svd for third-order tensors to order-$p$ tensors easily. We assume that the quantum state $|\mathcal{A}\rangle$ corresponding to the tensor $\mathcal{A} \in \mathbb{R}^{N_1 \times \cdots \times N_p}$ can be prepared efficiently, where $N_i = 2^{n_i}$ with $n_i$ being the number of qubits on the corresponding mode, and

$$ |\mathcal{A}\rangle = \sum_{i_1=0}^{N_1-1} \cdots \sum_{i_p=0}^{N_p-1} \mathcal{A}(i_1, \cdots, i_p)\, |i_1, \cdots, i_p\rangle. \tag{16} $$

Next, we perform the QFT on the third to the $p$-th register of the state $|\mathcal{A}\rangle$, and then use one register $|m\rangle$ to denote $|m_3\rangle \cdots |m_p\rangle$, i.e., $m = m_3 2^{p-3} + m_4 2^{p-4} + \cdots + m_p$, $m = 0, \cdots, \iota - 1$, $\iota = N_3 N_4 \cdots N_p$, obtaining

$$ |\hat{\mathcal{A}}\rangle = \frac{1}{\sqrt{\iota}} \sum_{m=0}^{\iota-1} \sum_{i_1, \cdots, i_p} \omega^{\sum_{\ell=3}^{p} i_\ell m_\ell} \mathcal{A}(i_1, \cdots, i_p)\, |i_1, i_2\rangle |m\rangle. \tag{17} $$

Algorithm 3 Quantum t-svd for order-$p$ tensors
Input: tensor $\mathcal{A} \in \mathbb{R}^{N_1 \times \cdots \times N_p}$ prepared in a quantum state, precision $\epsilon_{\mathrm{SVE}}^{(m)}$, $m = 0, \cdots, \iota - 1$.
Output: the state $|\phi_p\rangle$.
1: Perform the QFT in parallel on the third to the $p$-th register of the quantum state $|\mathcal{A}\rangle$ to obtain the state $|\hat{\mathcal{A}}\rangle$.
2: Perform the QSVE for each matrix $\hat{A}^{(m)}$ with precision $\epsilon_{\mathrm{SVE}}^{(m)}$ in parallel, $m = 0, \cdots, \iota - 1$, by using the controlled-$U_{\mathrm{SVE}}$ acting on the state $|\hat{\mathcal{A}}\rangle$, to obtain the state

$$ |\psi_p\rangle = \sum_{m=0}^{\iota-1} \left( \sum_{\ell=0}^{r-1} \hat{\sigma}_\ell^{(m)} |\hat{u}_\ell^{(m)}\rangle |\hat{v}_\ell^{(m)}\rangle |\overline{\hat{\sigma}}_\ell^{(m)}\rangle \right) |m\rangle. \tag{18} $$

3: Perform the inverse QFT in parallel on the third to the $p$-th register of the above state and output the state

\begin{align}
|\phi_p\rangle = {} & \frac{1}{(\sqrt{N})^{p-2}} \sum_{m_3=0}^{N_3-1} \cdots \sum_{m_p=0}^{N_p-1} \sum_{\ell=0}^{r-1} \hat{\sigma}_\ell^{(m)} \omega^{-\sum_{\ell=3}^{p} i_\ell m_\ell} \tag{19} \\
& |\hat{u}_\ell^{(m)}\rangle |\hat{v}_\ell^{(m)}\rangle |\overline{\hat{\sigma}}_\ell^{(m)}\rangle |i_3\rangle \cdots |i_p\rangle. \tag{20}
\end{align}

Let the matrix

$$ \hat{A}^{(m)} = \frac{1}{\sqrt{\iota}} \sum_{i_1, i_2} \sum_{i_3, \cdots, i_p} \omega^{\sum_{\ell=3}^{p} i_\ell m_\ell} \mathcal{A}(i_1, \cdots, i_p)\, |i_1\rangle \langle i_2| $$

and perform the QSVE on $\hat{A}^{(m)}$, $m = 0, \cdots, \iota - 1$, in parallel using the same strategy described in Section 3.1; we then get the state $|\psi_p\rangle$ in (18) after Step 2. Finally, we recover the $|m_3\rangle \cdots |m_p\rangle$ expression and perform the inverse QFT on the third to the $p$-th register, obtaining the final state $|\phi_p\rangle$ in (19) corresponding to the quantum t-svd of the order-$p$ tensor $\mathcal{A}$.

4 Quantum algorithm for recommendation systems modeled by third-order tensors

In this section, we propose a quantum algorithm for recommendation systems modeled by third-order tensors as an application of the quantum t-svd algorithm developed in Section 3.1. To do this, Algorithm 2 is modified in the following ways. First, the input state to the new algorithm encodes the preference information of user $i$, because we want to output the recommended index for any specific user; see Step 2 of Algorithm 4 for details. Second, after the QFT and the QSVE steps of Algorithm 2, we truncate each frontal slice so that only its larger singular values are kept, and apply the inverse QFT just as in Step 3 of Algorithm 2.
In this way, we get a state which can be proved to be an approximation of the input state. Finally, projective measurement and postselection generate the recommendation index for user $i$.

We first introduce the notation adopted in this section and then give a brief overview of Algorithm 4. In Section 4.1, the main ideas and assumptions of the algorithm are summarized. In Section 4.2, Algorithm 4 is provided first, followed by a detailed explanation of each step. Theoretical analysis is given in Section 4.3 and complexity analysis is conducted in Section 4.4. Finally, a quantum algorithm for solving the problem of third-order tensor completion is introduced in Section 4.5.

Notation. The preference information of users is stored in a third-order tensor $\mathcal{T} \in \mathbb{R}^{N \times N \times N}$, called the preference tensor, whose three modes represent user ($i$), product ($j$) and context ($t$), respectively. The tube $\mathcal{T}(i, j, :)$ is regarded as the rating score of user $i$ for product $j$ under different contexts. The entry $\mathcal{T}(i, j, t)$ takes value 1, indicating that product $j$ is "good" for user $i$ in context $t$, and value 0 otherwise. The frontal slice $\mathcal{T}(:, :, m)$ is written as $T^{(m)}$. Let $\tilde{\mathcal{T}}$ be the random tensor obtained by sampling from the tensor $\mathcal{T}$ with probability $p$, and $\hat{\tilde{\mathcal{T}}}$ be the tensor obtained by performing the QFT along the third mode of $\tilde{\mathcal{T}}$. The tensor $\hat{\tilde{\mathcal{T}}}_{\ge \sigma}$ denotes the tensor whose $m$-th frontal slice is $\hat{\tilde{T}}^{(m)}_{\ge \sigma^{(m)}}$, formed by truncating the $m$-th frontal slice $\hat{\tilde{T}}^{(m)}$ with a given threshold $\sigma^{(m)}$. $\tilde{\mathcal{T}}_{\ge \sigma}$ denotes the tensor obtained by performing the inverse QFT along the third mode of $\hat{\tilde{\mathcal{T}}}_{\ge \sigma}$. The $i$-th horizontal slice of $\tilde{\mathcal{T}}_{\ge \sigma}$ is $\tilde{\mathcal{T}}_{\ge \sigma}(i, :, :)$. The $i$-th row of a matrix $T$ is written as $T_i$.

4.1 Main ideas

Given a hidden preference tensor $\mathcal{T}$, we propose Algorithm 4 to recommend a product $j$ to a user $i$ at a certain context $t_0$.
The algorithm is inspired by the matrix recommendation methods developed in [1, 12] and a tensor reconstruction algorithm [39]. The main idea is summarized in the following flow chart.

$$ \mathcal{T}(i,:,:) \xrightarrow{\text{sample}} \tilde{\mathcal{T}}(i,:,:) \xrightarrow{\text{QFT}} \hat{\tilde{\mathcal{T}}}(i,:,:) \xrightarrow{\text{tube}} \hat{\tilde{\mathcal{T}}}(i,:,m) \xrightarrow{\text{approximation}} \hat{\tilde{\mathcal{T}}}_{\ge \sigma}(i,:,m) \xrightarrow{\text{form slice}} \hat{\tilde{\mathcal{T}}}_{\ge \sigma}(i,:,:) \xrightarrow{\text{iQFT}} \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:). $$

In Algorithm 4, we first sample the preference tensor $\mathcal{T}$ with probability $p$, obtaining the tensor $\tilde{\mathcal{T}}$ which represents the preference information that we are able to collect. That is, $\tilde{\mathcal{T}}_{ijt} = \mathcal{T}_{ijt}/p$ with probability $p$ and $\tilde{\mathcal{T}}_{ijt} = 0$ otherwise. Clearly, $E\tilde{\mathcal{T}} = \mathcal{T}$. Given a state $|\tilde{\mathcal{T}}(i,:,:)\rangle$ representing the subsampled preference information of user $i$, we first perform the QFT on the last register of this state, obtaining the state $|\hat{\tilde{\mathcal{T}}}(i,:,:)\rangle$. By performing the QSVE on the $m$-th frontal slice $\hat{\tilde{T}}^{(m)}$ using the input state $|\hat{\tilde{\mathcal{T}}}(i,:,m)\rangle$ and truncating the resulting singular values with threshold $\sigma^{(m)}$, the state $|\hat{\tilde{\mathcal{T}}}_{\ge \sigma}(i,:,m)\rangle$ is obtained. Stacking the tubes $\hat{\tilde{\mathcal{T}}}_{\ge \sigma}(i,:,m)$ ($m = 0, \ldots, N-1$) yields the horizontal slice $\hat{\tilde{\mathcal{T}}}_{\ge \sigma}(i,:,:)$, which can be regarded as an approximation of $\hat{\tilde{\mathcal{T}}}(i,:,:)$. After the inverse QFT on $\hat{\tilde{\mathcal{T}}}_{\ge \sigma}(i,:,:)$, the horizontal slice $\tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)$ is obtained. We prove in Section 4.3 that $\tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)$ is an approximation of the original slice $\tilde{\mathcal{T}}(i,:,:)$.

Assumption 2. The following assumptions are used in Algorithm 4.
1. Each $T^{(m)}$, $m = 0, \cdots, N-1$, has a good rank-$k$ approximation.
2. Every frontal slice of the subsample tensor $\tilde{\mathcal{T}} \in \mathbb{R}^{N \times N \times N}$ is stored in the data structure as mentioned in Lemma 2.
3. For all $i, m = 0, \cdots, N-1$, we assume the tubes $\mathcal{T}(i,:,m)$ satisfy

$$ \frac{1}{1+\gamma} \frac{\|\mathcal{T}\|_F^2}{N^2} \le \|\mathcal{T}(i,:,m)\|_2^2 \le (1+\gamma) \frac{\|\mathcal{T}\|_F^2}{N^2} \tag{21} $$

for a given $\gamma > 0$.

The first assumption is reasonable because most users belong to a small number of types, and the third assumption indicates that the users in the preference tensor $\mathcal{T}$ are all typical users. In other words, the number of preferred products of each user is close to the average in any context $m$. These assumptions are also adopted in Kerenidis and Prakash's work [12] for matrices, where a detailed justification is given.

4.2 Quantum algorithm for recommendation systems modeled by third-order tensors

Algorithm 4 is a quantum algorithm that, given the dynamic preference tensor $\mathcal{T}$, the sampling probability $p$, the assumed low rank $k$, the threshold $\sigma^{(m)}$, and the precision $\epsilon_{\mathrm{SVE}}^{(m)}$ for the QSVE on each $\hat{\tilde{T}}^{(m)}$, outputs the state corresponding to the approximation of the $i$-th horizontal slice $\mathcal{T}(i,:,:)$. Algorithm 4 is given below; its circuit is shown in FIGs. 4 and 5.

Algorithm 4 Quantum algorithm for recommendation systems modeled by third-order tensors
Input: a user index $i$, the state $|\tilde{\mathcal{T}}(i,:,:)\rangle$ corresponding to the preference information of user $i$, precision $\epsilon_{\mathrm{SVE}}^{(m)}$, the truncation threshold $\sigma^{(m)}$, $m = 0, \cdots, N-1$, and a context $t_0$.
Output: the recommended index $j$ for the user $i$ at the context $t_0$.
1: Perform the QFT on the last register of the input state $|\tilde{\mathcal{T}}(i,:,:)\rangle$, to obtain $|\hat{\tilde{\mathcal{T}}}(i,:,:)\rangle$ in (23).
2: Perform the QSVE on the matrix $\hat{\tilde{T}}^{(m)}$ in parallel, using the input $|\hat{\tilde{\mathcal{T}}}(i,:,:)\rangle$ with precision $\epsilon_{\mathrm{SVE}}^{(m)}$, $m = 0, \cdots, N-1$, to get the state $|\xi_1\rangle$ defined in (27).
3: Add an ancilla qubit $|0\rangle_a$ and apply a unitary transformation $V$ on the registers $b$ and $a$, controlled by the register $e$ (see FIG. 4), to obtain $|\xi_2\rangle$ in (28).
4: Apply the inverse QSVE and discard the register $c$, to get $|\xi_3\rangle$ in (29).
5: Measure the ancilla register $a$ in the computational basis and postselect the outcome $|0\rangle$, then delete the register $a$, to obtain $|\xi_4\rangle$ in (30).
6: Perform the inverse QFT on the register $e$, to get $|\xi_5\rangle$ in (32).
7: Measure the register $e$ in the computational basis and postselect the outcome $|t_0\rangle$. Then measure the register $d$ in the computational basis to get the index $j$.

Next, we explain each step in detail. The dynamic preference tensor $\mathcal{T} \in \mathbb{R}^{N \times N \times N}$ can be interpreted as the preference matrix $\mathcal{T}(:,:,t)$ evolving over the context $t$. It is reasonable to believe that the tubes $\mathcal{T}(i,:,0), \cdots, \mathcal{T}(i,:,N-1)$ are related to each other, because the preferences of the same user $i$ in different contexts influence one another. Considering these relations, we merge the tubes in the same horizontal slice together through the QFT after getting the subsample tensor $\tilde{\mathcal{T}}$. In other words, in Step 1, the QFT is performed on the last register of the input state

$$ |\tilde{\mathcal{T}}(i,:,:)\rangle = \frac{1}{\|\tilde{\mathcal{T}}(i,:,:)\|_F} \sum_{j,t=0}^{N-1} \tilde{\mathcal{T}}(i,j,t)\, |j\rangle_d |t\rangle_e \tag{22} $$

to get

$$ |\hat{\tilde{\mathcal{T}}}(i,:,:)\rangle = \frac{1}{\|\tilde{\mathcal{T}}(i,:,:)\|_F} \sum_{m=0}^{N-1} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2\, |\hat{\tilde{\mathcal{T}}}(i,:,m)\rangle_d |m\rangle_e, \tag{23} $$

where $\omega = e^{2\pi \mathrm{i}/N}$ and

$$ |\hat{\tilde{\mathcal{T}}}(i,:,m)\rangle = \frac{1}{\sqrt{N}\, \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2} \sum_{j,t=0}^{N-1} \omega^{tm}\, \tilde{\mathcal{T}}(i,j,t)\, |j\rangle. \tag{24} $$

Note that $\|\hat{\tilde{\mathcal{T}}}(i,:,:)\|_F = \|\tilde{\mathcal{T}}(i,:,:)\|_F$, since the Frobenius norm of a horizontal slice does not change under the Fourier transform.

[Figure 4: circuit diagram over registers $a$, $b$, $c$, $d$, $e$.]

Figure 4: The circuit of Algorithm 4. $U_{\tilde{\mathcal{T}}(i,:,:)}$ is the unitary operator for preparing the initial state $|\tilde{\mathcal{T}}(i,:,:)\rangle$. The unitary operator $U = \sum_{m=0}^{N-1} U_{\mathrm{SVE}}^{(m)} \otimes |m\rangle\langle m|$, and the block of $U_{\mathrm{SVE}}^{(m)}$ is shown in FIG. 5.
After measuring the first register in the computational basis, we postselect the outcome $|0\rangle_a$, getting $|\xi_4\rangle$.

[Figure 5: circuit diagram over registers $b$, $c$, $d$.]

Figure 5: The implementation of $U_{\mathrm{SVE}}^{(m)}$.

In Step 2, the unitary operator $U = \sum_{m=0}^{N-1} U_{\mathrm{SVE}}^{(m)} \otimes |m\rangle\langle m|$, given in FIG. 4, is performed on the state $|\hat{\tilde{\mathcal{T}}}(i,:,:)\rangle$ from Step 1. Here, $U_{\mathrm{SVE}}^{(m)}$ denotes the QSVE procedure for the matrix $\hat{\tilde{T}}^{(m)}$ with the input $|\hat{\tilde{\mathcal{T}}}(i,:,m)\rangle$. This step borrows the idea of Step 2 of Algorithm 2. Based on Theorem 4, the analysis of Algorithm 2, and Lemma 3, Step 2 can be expressed as the following transformation:

$$ U |\hat{\tilde{\mathcal{T}}}(i,:,:)\rangle = \frac{1}{\|\tilde{\mathcal{T}}(i,:,:)\|_F} \sum_{m=0}^{N-1} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2 \left( U_{\mathrm{SVE}}^{(m)} |\hat{\tilde{\mathcal{T}}}(i,:,m)\rangle_d \right) |m\rangle_e. \tag{25} $$

Then we express $|\hat{\tilde{\mathcal{T}}}(i,:,m)\rangle$ in the basis $\hat{v}_j^{(m)}$, $j = 0, \cdots, N-1$, i.e.,

$$ |\hat{\tilde{\mathcal{T}}}(i,:,m)\rangle = \sum_{j=0}^{N-1} \beta_j^{(im)} |\hat{v}_j^{(m)}\rangle, \tag{26} $$

where $\sum_{j=0}^{N-1} \hat{\sigma}_j^{(m)} \hat{u}_j^{(m)} \hat{v}_j^{(m)\dagger}$ is the svd of $\hat{\tilde{T}}^{(m)}$. According to Lemma 3, (25) becomes

$$ \frac{1}{\|\tilde{\mathcal{T}}(i,:,:)\|_F} \sum_{m,j} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2\, \beta_j^{(im)} |\hat{v}_j^{(m)}\rangle_d |\overline{\hat{\sigma}}_j^{(m)}\rangle_b |m\rangle_e \triangleq |\xi_1\rangle, \tag{27} $$

where $\overline{\hat{\sigma}}_j^{(m)}$ is an estimate of $\hat{\sigma}_j^{(m)}$ such that $|\overline{\hat{\sigma}}_j^{(m)} - \hat{\sigma}_j^{(m)}| \le \epsilon_{\mathrm{SVE}}^{(m)} \|\hat{\tilde{T}}^{(m)}\|_F$.

In Steps 3-5, our goal is to project each tube $\hat{\tilde{\mathcal{T}}}(i,:,m)$ onto the subspace $\big(\hat{\tilde{T}}^{(m)}_{\ge \sigma^{(m)}}\big)^{+} \hat{\tilde{T}}^{(m)}_{\ge \sigma^{(m)}}$ spanned by the right singular vectors $\hat{v}_j^{(m)}$ corresponding to singular values greater than the threshold $\sigma^{(m)}$, where $\big(\hat{\tilde{T}}^{(m)}_{\ge \sigma^{(m)}}\big)^{+}$ denotes the Moore-Penrose inverse of $\hat{\tilde{T}}^{(m)}_{\ge \sigma^{(m)}}$.
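The projection targeted in Steps 3-5 has a simple classical counterpart, which may help in reading the states that follow. The sketch below is only an illustration on a small random complex matrix standing in for one frontal slice; the function names `truncate_by_threshold` and `row_space_projector`, the matrix size, and the threshold choice are assumptions made for this example and do not come from the paper. It checks that multiplying a row by the pseudoinverse-based projector gives the corresponding row of the threshold-truncated matrix.

```python
import numpy as np

def truncate_by_threshold(M, sigma):
    """Keep only the singular triplets of M whose singular value is >= sigma."""
    U, s, Vh = np.linalg.svd(M, full_matrices=False)
    keep = s >= sigma
    return (U[:, keep] * s[keep]) @ Vh[keep, :]

def row_space_projector(M_trunc, rcond=1e-10):
    """Projector pinv(M_trunc) @ M_trunc onto the retained right singular directions.

    rcond is set explicitly so that round-off-level singular values of
    M_trunc are treated as zero when forming the pseudoinverse.
    """
    return np.linalg.pinv(M_trunc, rcond) @ M_trunc

# Hypothetical stand-in for one Fourier-domain frontal slice.
rng = np.random.default_rng(0)
M = rng.standard_normal((5, 5)) + 1j * rng.standard_normal((5, 5))

# Pick a threshold strictly between the 3rd and 4th singular values.
s = np.linalg.svd(M, compute_uv=False)
sigma = 0.5 * (s[2] + s[3])

M_trunc = truncate_by_threshold(M, sigma)
Pi = row_space_projector(M_trunc)

# Each projected row of M should coincide with the same row of M_trunc.
print(np.allclose(M @ Pi, M_trunc))
```

In the quantum algorithm the projector is never formed explicitly; the ancilla flag and postselection play its role.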
In Step 3, we first add an ancillary register $|0\rangle_a$ and then apply a unitary operator $V = \sum_{m=0}^{N-1} V^{(m)} \otimes |m\rangle\langle m|_e$ acting on the registers $b$ and $a$, controlled by the register $e$, where $V^{(m)}$ maps $|t\rangle_b |0\rangle_a \to |t\rangle_b |1\rangle_a$ if $t < \sigma^{(m)}$ and $|t\rangle_b |0\rangle_a \to |t\rangle_b |0\rangle_a$ otherwise. Therefore, after Step 3, we get

$$ |\xi_2\rangle = \frac{1}{\|\tilde{\mathcal{T}}(i,:,:)\|_F} \sum_{m=0}^{N-1} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2 \Bigg( \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} \ge \sigma^{(m)}} \beta_j^{(im)} |\hat{v}_j^{(m)}\rangle_d |\overline{\hat{\sigma}}_j^{(m)}\rangle_b |0\rangle_a + \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} < \sigma^{(m)}} \beta_j^{(im)} |\hat{v}_j^{(m)}\rangle_d |\overline{\hat{\sigma}}_j^{(m)}\rangle_b |1\rangle_a \Bigg) |m\rangle_e. \tag{28} $$

After the inverse procedure of the QSVE in Step 4, (28) becomes

$$ |\xi_3\rangle = \frac{1}{\|\tilde{\mathcal{T}}(i,:,:)\|_F} \sum_{m=0}^{N-1} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2 \Bigg( \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} \ge \sigma^{(m)}} \beta_j^{(im)} |\hat{v}_j^{(m)}\rangle_d |0\rangle_a + \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} < \sigma^{(m)}} \beta_j^{(im)} |\hat{v}_j^{(m)}\rangle_d |1\rangle_a \Bigg) |m\rangle_e. \tag{29} $$

Then we measure the ancilla register $a$ of $|\xi_3\rangle$ and postselect the outcome $|0\rangle_a$, getting

$$ |\xi_4\rangle = \frac{1}{\alpha} \sum_{m=0}^{N-1} \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} \ge \sigma^{(m)}} \beta_j^{(im)} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2\, |\hat{v}_j^{(m)}\rangle_d |m\rangle_e, \tag{30} $$

where

$$ \alpha = \sqrt{ \sum_{m=0}^{N-1} \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} \ge \sigma^{(m)}} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2^2 \cdot |\beta_j^{(im)}|^2 }. $$

Comparing (26) with (30), we find that the unnormalized state $\sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} \ge \sigma^{(m)}} \beta_j^{(im)} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2\, |\hat{v}_j^{(m)}\rangle$, corresponding to the $i$-th row of the truncated matrix $\hat{\tilde{T}}^{(m)}_{\ge \sigma^{(m)}}$, can be seen as an approximation of $\hat{\tilde{\mathcal{T}}}(i,:,m)$, $m = 0, \cdots, N-1$. Hence, $|\xi_4\rangle$ corresponds to an approximation of $\hat{\tilde{\mathcal{T}}}_{\ge \sigma}(i,:,:)$. The probability that we obtain the outcome $|0\rangle$ in Step 5 is

$$ \frac{\|\hat{\tilde{\mathcal{T}}}_{\ge \sigma}(i,:,:)\|_F^2}{\|\tilde{\mathcal{T}}(i,:,:)\|_F^2}, \tag{31} $$

where $\hat{\tilde{\mathcal{T}}}_{\ge \sigma}(i,:,:)$ is the $i$-th horizontal slice of the tensor $\hat{\tilde{\mathcal{T}}}_{\ge \sigma}$ whose $m$-th frontal slice is $\hat{\tilde{T}}^{(m)}_{\ge \sigma^{(m)}}$.
Hence, based on amplitude amplification, we have to repeat the measurement $O\big( \|\tilde{\mathcal{T}}(i,:,:)\|_F / \|\hat{\tilde{\mathcal{T}}}_{\ge \sigma}(i,:,:)\|_F \big)$ times in order to ensure that the success probability of getting the outcome $|0\rangle$ is close to 1.

In Step 6, we perform the inverse QFT on $|\xi_4\rangle$ in (30) to get the final state

$$ |\xi_5\rangle = \frac{1}{\alpha \sqrt{N}} \sum_{t,m=0}^{N-1} \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} \ge \sigma^{(m)}} \beta_j^{(im)} \omega^{-tm} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2\, |\hat{v}_j^{(m)}\rangle_d |t\rangle_e, \tag{32} $$

which corresponds to an approximation of $\tilde{\mathcal{T}}(i,:,:)$, and thus it can also be regarded as an approximation of $\mathcal{T}(i,:,:)$; see the theoretical analysis in Section 4.3.

In the last step, user $i$ is recommended a product $j$, varying with the context as needed, by measuring the output state $|\xi_5\rangle$. For example, if we need the recommended index at a certain context $t_0$, we can first measure the last register of $|\xi_5\rangle$ in the computational basis and postselect the outcome $|t_0\rangle$, obtaining the state proportional to (unnormalized)

$$ \sum_{m=0}^{N-1} \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} \ge \sigma^{(m)}} \beta_j^{(im)} \omega^{-t_0 m} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2\, |\hat{v}_j^{(m)}\rangle_d. \tag{33} $$

We next measure this state in the computational basis to get an index $j$, which is proved to be a good recommendation for user $i$ at context $t_0$.

4.3 Theoretical analysis

In this section, we show that the $i$-th horizontal slice of the tensor $\tilde{\mathcal{T}}_{\ge \sigma}$ is an approximation of $\mathcal{T}(i,:,:)$. Sampling from the matrix $\tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)$ then yields good recommendations for user $i$; see Theorem 5. The conclusions of Lemmas 4 and 5 are used in the proof of Theorem 5, so we introduce them first. The proofs of Lemma 5 and Theorem 5 can be found in Appendices B and C, respectively.

Lemma 4. [12] Let $\tilde{A}$ be an approximation of the matrix $A$ such that $\|A - \tilde{A}\|_F \le \epsilon \|A\|_F$. Then, the probability that sampling from $\tilde{A}$ provides a bad recommendation is

$$ \Pr_{(i,j) \sim \tilde{A}} [(i,j)\ \text{bad}] \le \left( \frac{\epsilon}{1-\epsilon} \right)^2. \tag{34} $$

Lemma 5.
Let $A \in \mathbb{R}^{N \times N}$ be a matrix and $A_k$ be the best rank-$k$ approximation satisfying $\|A - A_k\|_F \le \epsilon \|A\|_F$. If the threshold for truncating the singular values of $A$ is chosen as $\sigma = \frac{\epsilon \|A\|_F}{\sqrt{k}}$, then

$$ \|A - A_{\ge \sigma}\|_F \le 2\epsilon \|A\|_F. \tag{35} $$

Theorem 5. Algorithm 4 outputs the state $|\tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)\rangle$ corresponding to the approximation of $\mathcal{T}(i,:,:)$ such that, for at least $(1-\delta)N$ users, user $i$ satisfies

$$ \|\mathcal{T}(i,:,:) - \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)\|_F \le \epsilon \|\mathcal{T}(i,:,:)\|_F \tag{36} $$

with probability at least

$$ p_1 p_2 p_3 = \left( 1 - e^{-\|\mathcal{T}\|_F^2 / 3p} \right) \left( 1 - e^{-\zeta^2 \left( \frac{1}{p} - p \right) \frac{\|\mathcal{T}\|_F^2}{3N(1+\gamma)}} \right) \left( 1 - 1/\mathrm{poly}\, N \right), $$

where $\gamma, \zeta \in [0, 1]$ and $p$ is the subsample probability. The precision is $\epsilon = \sqrt{(1+\zeta)\left(\frac{1}{p} - p\right)} + \epsilon_0 \sqrt{\frac{2(1+\gamma)}{\delta p}}$, with $\delta \in (0, 1)$ and $\epsilon_0 = \max_{m = 0, \cdots, N-1} 2\epsilon^{(m)}$, if the best rank-$k$ approximation satisfies $\|\hat{\tilde{T}}^{(m)} - \hat{\tilde{T}}^{(m)}_k\|_F \le \epsilon^{(m)} \|\hat{\tilde{T}}^{(m)}\|_F$ for a small constant $k$, and the corresponding threshold of each $\hat{\tilde{T}}^{(m)}$ is chosen as $\sigma^{(m)} = \frac{\epsilon^{(m)} \|\hat{\tilde{T}}^{(m)}\|_F}{\sqrt{k}}$. Moreover, based on Lemma 4, the probability that sampling according to $\tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)$ (which is equivalent to measuring the state $|\tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)\rangle$ in the computational basis) provides a bad recommendation is

$$ \Pr_{t \sim \mathcal{U}_N,\, j \sim \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)} [(i,j,t)\ \text{bad}] \le \left( \frac{\epsilon}{1-\epsilon} \right)^2. \tag{37} $$

4.4 Complexity analysis

The complexity of Algorithm 4 is given by the following result.

Theorem 6. For at least $(1-\delta)N$ users, Algorithm 4 outputs an approximation state of $|\mathcal{T}(i,:,:)\rangle$ with complexity $O\left( \frac{\sqrt{k}\, N\, \mathrm{polylog}(N) (1+\gamma)}{\min_m \epsilon^{(m)} (1+\epsilon) \sqrt{p}} \right)$. For suitable parameters, the running time of Algorithm 4 is $O(\sqrt{k}\, N\, \mathrm{polylog}(N))$.

The proof of Theorem 6 can be found in Appendix D. Note that the running time of our quantum algorithm depends heavily on the threshold $\sigma^{(m)} = \frac{\epsilon^{(m)} \|\hat{\tilde{T}}^{(m)}\|_F}{\sqrt{k}}$, which relies on the rank $k$ and the corresponding precision $\epsilon^{(m)}$.
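The threshold rule $\sigma = \epsilon \|A\|_F / \sqrt{k}$ from Lemma 5, which is also the per-slice rule used in Theorem 5, can be checked numerically on a classical matrix. The following is a minimal sketch under stated assumptions: the helper names (`best_rank_k`, `threshold_truncate`, `lemma5_quantities`), the test matrix (rank 3 plus small noise), and the sizes are all hypothetical choices for illustration and are not taken from the paper.

```python
import numpy as np

def best_rank_k(A, k):
    """Best rank-k approximation of A in Frobenius norm (truncated svd)."""
    U, s, Vh = np.linalg.svd(A, full_matrices=False)
    return (U[:, :k] * s[:k]) @ Vh[:k, :]

def threshold_truncate(A, sigma):
    """A_{>= sigma}: keep only singular values that are >= sigma."""
    U, s, Vh = np.linalg.svd(A, full_matrices=False)
    keep = s >= sigma
    return (U[:, keep] * s[keep]) @ Vh[keep, :]

def lemma5_quantities(A, k):
    """Return (eps, sigma, truncation error, Lemma 5 bound) for the matrix A."""
    fro = np.linalg.norm(A)                        # Frobenius norm of A
    eps = np.linalg.norm(A - best_rank_k(A, k)) / fro
    sigma = eps * fro / np.sqrt(k)                 # threshold from Lemma 5
    err = np.linalg.norm(A - threshold_truncate(A, sigma))
    return eps, sigma, err, 2 * eps * fro

# Hypothetical example: a 20 x 20 matrix that is rank 3 up to small noise.
rng = np.random.default_rng(1)
A = rng.standard_normal((20, 3)) @ rng.standard_normal((3, 20))
A += 0.05 * rng.standard_normal((20, 20))

eps, sigma, err, bound = lemma5_quantities(A, k=3)
print(err <= bound)
```

A smaller $\epsilon^{(m)}$ lowers the threshold and keeps more singular values, which is the trade-off behind the dependence of the running time on $\min_m \epsilon^{(m)}$.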
In summary, the running time of Algorithm 4 is $O(\sqrt{k}\, N\, \mathrm{polylog}(N))$ for suitable parameters.

4.5 A quantum algorithm for tensor completion

In this section, we propose a quantum algorithm for tensor completion based on our quantum t-svd algorithm. This method follows an idea similar to that of Algorithm 4 but truncates to the top $k$ singular values among all the frontal slices $\hat{\tilde{T}}^{(m)}$, $m = 0, \cdots, N-1$. More specifically, in Step 3 of Algorithm 4, after getting the state $|\xi_1\rangle$, we design another unitary transformation $V'$ acting on the ancillary register $|0\rangle$ that maps $|t\rangle |0\rangle \to |t\rangle |1\rangle$ if $t < \sigma$ and $|t\rangle |0\rangle \to |t\rangle |0\rangle$ otherwise, so the state becomes

$$ |\xi_2'\rangle = \frac{1}{\|\tilde{\mathcal{T}}(i,:,:)\|_F} \sum_{m=0}^{N-1} \Bigg( \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} \ge \sigma} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2\, \beta_j^{(im)} |\hat{v}_j^{(m)}\rangle |\overline{\hat{\sigma}}_j^{(m)}\rangle |0\rangle + \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} < \sigma} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2\, \beta_j^{(im)} |\hat{v}_j^{(m)}\rangle |\overline{\hat{\sigma}}_j^{(m)}\rangle |1\rangle \Bigg) |m\rangle. \tag{38} $$

Then, after the inverse QSVE, measuring the third register and postselecting the outcome $|0\rangle$, just as done in Steps 4 and 5 of Algorithm 4, we get

$$ |\xi_4'\rangle = \frac{1}{\alpha'} \sum_{m=0}^{N-1} \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} \ge \sigma} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2\, \beta_j^{(im)} |\hat{v}_j^{(m)}\rangle |m\rangle, \tag{39} $$

where $\alpha' = \Big( \sum_{m=0}^{N-1} \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} \ge \sigma} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2^2 \cdot |\beta_j^{(im)}|^2 \Big)^{1/2}$.

The last step is the inverse QFT, which outputs the final state

$$ |\xi_5'\rangle = \frac{1}{\alpha' \sqrt{N}} \sum_{t=0}^{N-1} \sum_{m=0}^{N-1} \sum_{j:\, \overline{\hat{\sigma}}_j^{(m)} \ge \sigma} \|\hat{\tilde{\mathcal{T}}}(i,:,m)\|_2\, \beta_j^{(im)} \omega^{-mt} |\hat{v}_j^{(m)}\rangle |t\rangle. \tag{40} $$

Our first truncation method, applied in the quantum recommendation systems algorithm of Section 4.2, is called t-svd-tubal compression, and the second, used in Section 4.5, is called t-svd compression.
According to the comparison and analysis of these two methods in [39], although the latter has better performance when applied to stationary camera videos, the former works much better on non-stationary panning camera videos because it better captures the convolution relations between different frontal slices of the tensor in dynamic video; we therefore design quantum versions of both methods in this paper.

5 Conclusion

The main contribution of this paper consists of two parts. First, we present a quantum t-svd algorithm for third-order tensors which achieves the complexity $O(N\, \mathrm{polylog}(N))$. Second, we propose the first quantum algorithm for recommendation systems modeled by third-order tensors. We prove that our algorithm can provide good recommendations varying with contexts and runs in expected time $O(N\, \mathrm{polylog}(N)\, \mathrm{poly}(k))$ for suitable parameters, which is exponentially faster than known classical algorithms. We also propose a variant of Algorithm 4 which deals with third-order tensor completion problems.

A The proof of Theorem 4

In this appendix, we prove Theorem 4. In the QSVE algorithm [12], Kerenidis and Prakash first constructed two isometries $P$ and $Q$, implemented efficiently through two unitary transformations $U_P$ and $U_Q$, such that the target matrix $A$ has the factorization $\frac{A}{\|A\|_F} = P^\dagger Q$. Based on these two isometries, the unitary operator $W = (2PP^\dagger - I_{mn})(2QQ^\dagger - I_{mn})$ can be implemented efficiently. The QSVE algorithm utilizes the connection between the eigenvalues $e^{\pm \mathrm{i}\theta_i}$ of $W$ and the singular values $\sigma_i$ of $A$, i.e., $\cos\frac{\theta_i}{2} = \frac{\sigma_i}{\|A\|_F}$. Therefore, we can perform phase estimation on $W$ to get an estimated value $\overline{\theta}_i$ and then compute the estimated singular value $\overline{\sigma}_i$, stored in a register superposed with its corresponding singular vector.
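The relation $\cos(\theta_i/2) = \sigma_i / \|A\|_F$ between the spectrum of $W$ and the singular values of $A$ can be verified on a small classical example. The sketch below builds $P$ and $Q$ as explicit dense matrices, following the definitions $P = \sum_i |i\rangle|A_i\rangle\langle i|$ and $Q = \sum_j |s_A\rangle|j\rangle\langle j|$ used later in this appendix. The function names, the $3 \times 4$ random real matrix, and the dense construction are assumptions made for illustration only; no phase estimation or quantum data structure is simulated.

```python
import numpy as np

def qsve_isometries(A):
    """Build dense P (mn x m) and Q (mn x n) with P^T Q = A / ||A||_F.

    Column i of P is e_i (x) (row i of A, normalized);
    column j of Q is s_A (x) e_j, where s_A holds the row norms over ||A||_F.
    Assumes A is real with no zero rows.
    """
    m, n = A.shape
    row_norms = np.linalg.norm(A, axis=1)
    s_A = row_norms / np.linalg.norm(A)
    P = np.zeros((m * n, m))
    Q = np.zeros((m * n, n))
    for i in range(m):
        P[i * n:(i + 1) * n, i] = A[i] / row_norms[i]
    for j in range(n):
        Q[j::n, j] = s_A          # entries at positions i*n + j, i = 0..m-1
    return P, Q

def walk_singular_value_candidates(A):
    """Values ||A||_F * cos(theta/2) over all eigenphases theta of W."""
    P, Q = qsve_isometries(A)
    I = np.eye(P.shape[0])
    W = (2 * P @ P.T - I) @ (2 * Q @ Q.T - I)
    theta = np.angle(np.linalg.eigvals(W))
    return np.linalg.norm(A) * np.cos(np.abs(theta) / 2)

rng = np.random.default_rng(0)
A = rng.standard_normal((3, 4))
P, Q = qsve_isometries(A)
candidates = walk_singular_value_candidates(A)
singular_values = np.linalg.svd(A, compute_uv=False)

# Every singular value of A should appear among the candidates.
print(all(np.min(np.abs(candidates - s)) < 1e-6 for s in singular_values))
```

Note that $W$ also has eigenvalues $\pm 1$ on directions outside the relevant subspace, so the candidate list contains extra values; only the presence of each singular value is checked here.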
In the proof of Theorem 4, we assume that every frontal slice of the tensor $\mathcal{A}$ is stored in the data structure stated in Lemma 2. Then, according to Theorem 5.1 in [12], the quantum states $|A_i^{(k)}\rangle$ and $|s_A^{(k)}\rangle$ can be prepared efficiently by the operators $P^{(k)}$ and $Q^{(k)}$, $k = 0, \cdots, N_3 - 1$. In our quantum t-svd algorithm, the QSVE is to be performed on each frontal slice of $\hat{\mathcal{A}}$, denoted as $\hat{A}^{(m)} = \frac{1}{\sqrt{N_3}} \sum_{k=0}^{N_3-1} \omega^{km} A^{(k)}$. To achieve this, we construct two isometries $\hat{P}^{(m)}$ and $\hat{Q}^{(m)}$. According to Remark 2, the input is chosen as the state $|\hat{A}^{(m)}\rangle = \frac{1}{\|\hat{A}^{(m)}\|_F} \sum_{i,j,k} \omega^{km} \mathcal{A}(i,j,k)\, |i\rangle |j\rangle$. Following a procedure similar to that of the QSVE algorithm [12], we can obtain the desired output state.

Proof. Since every $A^{(k)}$, $k = 0, \cdots, N_3 - 1$, is stored in the binary tree structure, the quantum computer can perform the following mappings in $O(\mathrm{polylog}(N_1 N_2))$ time, as shown in Theorem 5.1 in [12]:

\begin{align}
U_P^{(k)} &: |i\rangle |0\rangle \to |i\rangle |A_i^{(k)}\rangle = \frac{1}{\|A_i^{(k)}\|_2} \sum_{j=0}^{N_2-1} \mathcal{A}(i,j,k)\, |i\rangle |j\rangle, \nonumber \\
U_Q^{(k)} &: |0\rangle |j\rangle \to |s_A^{(k)}\rangle |j\rangle = \frac{1}{\|A^{(k)}\|_F} \sum_{i=0}^{N_1-1} \|A_i^{(k)}\|_2\, |i\rangle |j\rangle, \tag{41}
\end{align}

where $A_i^{(k)}$ is the $i$-th row of $A^{(k)}$ and $s_A^{(k)} \triangleq \frac{1}{\|A^{(k)}\|_F} \left[ \|A_0^{(k)}\|_2, \|A_1^{(k)}\|_2, \cdots, \|A_{N_1-1}^{(k)}\|_2 \right]^T$, $k = 0, \cdots, N_3 - 1$.

We can define two isometries $P^{(k)} \in \mathbb{R}^{N_1 N_2 \times N_1}$ and $Q^{(k)} \in \mathbb{R}^{N_1 N_2 \times N_2}$ related to $U_P^{(k)}$ and $U_Q^{(k)}$ as follows:

$$ P^{(k)} = \sum_{i=0}^{N_1-1} |i\rangle |A_i^{(k)}\rangle \langle i|, \qquad Q^{(k)} = \sum_{j=0}^{N_2-1} |s_A^{(k)}\rangle |j\rangle \langle j|. \tag{42} $$

Define another operator $\hat{P}^{(m)} \triangleq \frac{1}{\sqrt{N_3}} \sum_{k=0}^{N_3-1} \frac{\|A_i^{(k)}\|_2\, \omega^{km}}{\|\hat{A}_i^{(m)}\|_2} P^{(k)}$, which achieves the state preparation of the rows of the matrix $\hat{A}^{(m)}$.
Since every isometry $P^{(k)}$, $k = 0, \cdots, N_3 - 1$, can be implemented with complexity $O(\log N_1)$, $\hat{P}^{(m)}$ can also be implemented efficiently. Substituting $P^{(k)}$ into $\hat{P}^{(m)}$, we have, for each $m = 0, \cdots, N_3 - 1$,

$$ \hat{P}^{(m)} = \frac{1}{\sqrt{N_3}} \sum_{k=0}^{N_3-1} \frac{\|A_i^{(k)}\|_2\, \omega^{km}}{\|\hat{A}_i^{(m)}\|_2} \sum_i |i\rangle |A_i^{(k)}\rangle \langle i| = \sum_i |i\rangle |\hat{A}_i^{(m)}\rangle \langle i|, \tag{43} $$

where

$$ |\hat{A}_i^{(m)}\rangle = |\hat{\mathcal{A}}(i,:,m)\rangle = \frac{1}{\sqrt{N_3}} \sum_{k=0}^{N_3-1} \frac{\|A_i^{(k)}\|_2\, \omega^{km}}{\|\hat{A}_i^{(m)}\|_2} |A_i^{(k)}\rangle. $$

It is easy to check that $\hat{P}^{(m)}$ is an isometry:

$$ \hat{P}^{(m)\dagger} \hat{P}^{(m)} = \Big( \sum_i |i\rangle \langle i| \langle \hat{A}_i^{(m)}| \Big) \Big( \sum_j |j\rangle |\hat{A}_j^{(m)}\rangle \langle j| \Big) = I_{N_1}. \tag{44} $$

We construct another array of $N_3$ binary trees, each of which has the root storing $\|\hat{A}^{(m)}\|_F^2$ and the $i$-th leaf storing $\|\hat{A}_i^{(m)}\|_2^2$. Define $\hat{s}_A^{(m)} = \frac{1}{\|\hat{A}^{(m)}\|_F} \left[ \|\hat{A}_0^{(m)}\|_2, \|\hat{A}_1^{(m)}\|_2, \cdots, \|\hat{A}_{N_1-1}^{(m)}\|_2 \right]^T$. According to the proof of Lemma 5.3 in [12], we can perform the mapping

$$ \hat{U}_Q^{(m)} : |0\rangle |j\rangle \to |\hat{s}_A^{(m)}\rangle |j\rangle = \frac{1}{\|\hat{A}^{(m)}\|_F} \sum_i \|\hat{A}_i^{(m)}\|_2\, |i\rangle |j\rangle, \tag{45} $$

and the corresponding isometry $\hat{Q}^{(m)} = \sum_j |\hat{s}_A^{(m)}\rangle |j\rangle \langle j|$ satisfies $\hat{Q}^{(m)\dagger} \hat{Q}^{(m)} = I_{N_2}$.

Now we can perform the QSVE on the matrix $\hat{A}^{(m)}$. First, the factorization $\frac{\hat{A}^{(m)}}{\|\hat{A}^{(m)}\|_F} = \hat{P}^{(m)\dagger} \hat{Q}^{(m)}$ can be easily verified. Moreover, we can prove that $2\hat{P}^{(m)} \hat{P}^{(m)\dagger} - I_{N_1 N_2}$ is unitary and that it can be efficiently implemented in time $O(\mathrm{polylog}(N_1 N_2))$. Indeed,

\begin{align}
2\hat{P}^{(m)} \hat{P}^{(m)\dagger} - I_{N_1 N_2} &= 2 \sum_i |i\rangle |\hat{A}_i^{(m)}\rangle \langle i| \langle \hat{A}_i^{(m)}| - I_{N_1 N_2} \nonumber \\
&= U_{\hat{P}^{(m)}} \Big[ 2 \sum_i |i\rangle |0\rangle \langle i| \langle 0| - I_{N_1 N_2} \Big] U_{\hat{P}^{(m)}}^\dagger, \tag{46}
\end{align}

where $2 \sum_i |i\rangle |0\rangle \langle i| \langle 0| - I_{N_1 N_2}$ is a reflection. $U_{\hat{P}^{(m)}}$ is the unitary operator corresponding to the isometry $\hat{P}^{(m)}$, i.e., $U_{\hat{P}^{(m)}} = \sum_i |i\rangle |\hat{A}_i^{(m)}\rangle \langle i| \langle 0|$.
A similar result holds for $2\hat{Q}^{(m)} \hat{Q}^{(m)\dagger} - I_{N_1 N_2}$. Now denote

$$ W_m = \big( 2\hat{P}^{(m)} \hat{P}^{(m)\dagger} - I_{N_1 N_2} \big) \big( 2\hat{Q}^{(m)} \hat{Q}^{(m)\dagger} - I_{N_1 N_2} \big). \tag{47} $$

We can prove that the subspace spanned by $\{ \hat{Q}^{(m)} |\hat{v}_i^{(m)}\rangle, \hat{P}^{(m)} |\hat{u}_i^{(m)}\rangle \}$ is invariant under the unitary transformation $W_m$:

\begin{align*}
W_m \hat{Q}^{(m)} |\hat{v}_i^{(m)}\rangle &= \frac{2\hat{\sigma}_i^{(m)}}{\|\hat{A}^{(m)}\|_F} \hat{P}^{(m)} |\hat{u}_i^{(m)}\rangle - \hat{Q}^{(m)} |\hat{v}_i^{(m)}\rangle, \\
W_m \hat{P}^{(m)} |\hat{u}_i^{(m)}\rangle &= \left( \frac{4 \big(\hat{\sigma}_i^{(m)}\big)^2}{\|\hat{A}^{(m)}\|_F^2} - 1 \right) \hat{P}^{(m)} |\hat{u}_i^{(m)}\rangle - \frac{2\hat{\sigma}_i^{(m)}}{\|\hat{A}^{(m)}\|_F} \hat{Q}^{(m)} |\hat{v}_i^{(m)}\rangle.
\end{align*}

The matrix $W_m$ can be calculated under an orthonormal basis using the Schmidt orthogonalization, and it is a rotation in the subspace spanned by its eigenvectors $|\omega_{i\pm}^{(m)}\rangle$ corresponding to the eigenvalues $e^{\pm \mathrm{i}\theta_i^{(m)}}$, where $\theta_i^{(m)}$ is the rotation angle satisfying $\cos(\theta_i^{(m)}/2) = \frac{\hat{\sigma}_i^{(m)}}{\|\hat{A}^{(m)}\|_F}$, i.e.,

\begin{align*}
\hat{Q}^{(m)} |\hat{v}_i^{(m)}\rangle &= \sqrt{2} \big( |\omega_{i+}^{(m)}\rangle + |\omega_{i-}^{(m)}\rangle \big), \\
\hat{P}^{(m)} |\hat{u}_i^{(m)}\rangle &= \sqrt{2} \big( e^{\mathrm{i}\theta_i/2} |\omega_{i+}^{(m)}\rangle + e^{-\mathrm{i}\theta_i/2} |\omega_{i-}^{(m)}\rangle \big).
\end{align*}

In the QSVE algorithm on the matrix $\hat{A}^{(m)}$, $m = 0, \cdots, N_3 - 1$, we choose the input state as the Kronecker product form of the normalized matrix $\frac{\hat{A}^{(m)}}{\|\hat{A}^{(m)}\|_F}$ represented in the svd, i.e., $|\hat{A}^{(m)}\rangle = \frac{1}{\|\hat{A}^{(m)}\|_F} \sum_i \hat{\sigma}_i^{(m)} |\hat{u}_i^{(m)}\rangle |\hat{v}_i^{(m)}\rangle$. Then

$$ \big( I_{N_1} \otimes \hat{U}_{Q^{(m)}} \big) |\hat{A}^{(m)}\rangle = \frac{1}{\|\hat{A}^{(m)}\|_F} \sum_i \sqrt{2}\, \hat{\sigma}_i^{(m)} |\hat{u}_i^{(m)}\rangle \big( |\omega_{i+}^{(m)}\rangle + |\omega_{i-}^{(m)}\rangle \big). \tag{48} $$

Performing the phase estimation on $W_m$ and computing the estimated singular value of $\hat{A}^{(m)}$ through the oracle $\overline{\hat{\sigma}}_i^{(m)} = \|\hat{A}^{(m)}\|_F \cos(\overline{\theta}_i^{(m)}/2)$, we obtain

$$ \frac{1}{\|\hat{A}^{(m)}\|_F} \sum_i \sqrt{2}\, \hat{\sigma}_i^{(m)} |\hat{u}_i^{(m)}\rangle \Big( |\omega_{i+}^{(m)}\rangle |\overline{\theta}_i^{(m)}\rangle + |\omega_{i-}^{(m)}\rangle |{-\overline{\theta}_i^{(m)}}\rangle \Big) |\overline{\sigma}_i^{(m)}\rangle. \tag{49} $$

We next uncompute the phase estimation procedure and then apply the inverse of $I_{N_1} \otimes \hat{U}_{Q^{(m)}}$, obtaining the desired state (15) in Theorem 4.

B The proof of Lemma 5

Proof. Let $\sigma_i$ denote the singular values of $A$ and let $\ell$ be the largest integer for which $\sigma_\ell \ge \frac{\epsilon \|A\|_F}{\sqrt{k}}$. By the triangle inequality, $\|A - A_{\ge \sigma}\|_F \le \|A - A_k\|_F + \|A_k - A_{\ge \sigma}\|_F$. If $k \le \ell$, it is easy to conclude that $\|A_k - A_{\ge \sigma}\|_F \le \|A - A_k\|_F \le \epsilon \|A\|_F$. If $k > \ell$, $\|A_k - A_{\ge \sigma}\|_F^2 = \sum_{i=\ell+1}^{k} \sigma_i^2 \le k \sigma_{\ell+1}^2 \le k \left( \frac{\epsilon \|A\|_F}{\sqrt{k}} \right)^2 \le (\epsilon \|A\|_F)^2$. In both cases, we have $\|A - A_{\ge \sigma}\|_F \le 2\epsilon \|A\|_F$.

C The proof of Theorem 5

Proof. Based on Lemma 5 in the main text, if the best rank-$k$ approximation satisfies $\|\hat{\tilde{T}}^{(m)} - \hat{\tilde{T}}^{(m)}_k\|_F \le \epsilon^{(m)} \|\hat{\tilde{T}}^{(m)}\|_F$, then

$$ \|\hat{\tilde{T}}^{(m)} - \hat{\tilde{T}}^{(m)}_{\ge \sigma^{(m)}}\|_F \le 2\epsilon^{(m)} \|\hat{\tilde{T}}^{(m)}\|_F \le \epsilon_0 \|\hat{\tilde{T}}^{(m)}\|_F \tag{50} $$

for $\sigma^{(m)} = \frac{\epsilon^{(m)} \|\hat{\tilde{T}}^{(m)}\|_F}{\sqrt{k}}$, $m = 0, \cdots, N-1$. Squaring both sides of (50) and summing over $m$, we get

$$ \|\hat{\tilde{\mathcal{T}}} - \hat{\tilde{\mathcal{T}}}_{\ge \sigma}\|_F^2 = \sum_{m=0}^{N-1} \|\hat{\tilde{T}}^{(m)} - \hat{\tilde{T}}^{(m)}_{\ge \sigma^{(m)}}\|_F^2 \le \epsilon_0^2 \|\hat{\tilde{\mathcal{T}}}\|_F^2. \tag{51} $$

Since the inverse QFT along the third mode of a tensor does not change the Frobenius norm of its horizontal slices, (51) can be rewritten as

$$ \|\tilde{\mathcal{T}} - \tilde{\mathcal{T}}_{\ge \sigma}\|_F^2 \le \epsilon_0^2 \|\tilde{\mathcal{T}}\|_F^2. \tag{52} $$

Moreover, noticing that $\|\tilde{\mathcal{T}} - \tilde{\mathcal{T}}_{\ge \sigma}\|_F^2 = \sum_{i=0}^{N-1} \|\tilde{\mathcal{T}}(i,:,:) - \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)\|_F^2$, we have $E \|\tilde{\mathcal{T}}(i,:,:) - \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)\|_F^2 \le \frac{\epsilon_0^2 \|\tilde{\mathcal{T}}\|_F^2}{N}$. By Markov's inequality ([30, Proposition 2.6]),

$$ \Pr\left( \|\tilde{\mathcal{T}}(i,:,:) - \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)\|_F^2 > \frac{\epsilon_0^2 \|\tilde{\mathcal{T}}\|_F^2}{\delta N} \right) \le \frac{E \|\tilde{\mathcal{T}}(i,:,:) - \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)\|_F^2\ \delta N}{\epsilon_0^2 \|\tilde{\mathcal{T}}\|_F^2} = \delta \tag{53} $$

holds for some $\delta \in (0, 1)$. That means at least $(1-\delta)N$ users $i$ satisfy

$$ \|\tilde{\mathcal{T}}(i,:,:) - \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)\|_F^2 \le \frac{\epsilon_0^2 \|\tilde{\mathcal{T}}\|_F^2}{\delta N}. \tag{54} $$

During the preprocessing part of Algorithm 4, the tensor $\tilde{\mathcal{T}}$ is obtained by sampling the tensor $\mathcal{T}$ with uniform probability $p$, so $E \|\tilde{\mathcal{T}}\|_F^2 = \|\mathcal{T}\|_F^2 / p$. Using the Chernoff bound, we have $\Pr\big( \|\tilde{\mathcal{T}}\|_F^2 > (1+\theta) \|\mathcal{T}\|_F^2 / p \big) \le e^{-\theta^2 \|\mathcal{T}\|_F^2 / 3p}$ for $\theta \in [0, 1]$, which is exponentially small. Here, we choose $\theta = 1$; then the probability that

$$ \|\tilde{\mathcal{T}}\|_F^2 \le 2 \|\mathcal{T}\|_F^2 / p \tag{55} $$

holds is $p_1 = 1 - e^{-\|\mathcal{T}\|_F^2 / 3p}$.

Based on the third assumption in Assumption 2, we sum both sides of (21) over $m$ and over $i$, respectively, obtaining

$$ \frac{1}{1+\gamma} \frac{\|\mathcal{T}\|_F^2}{N} \le \|\mathcal{T}(i,:,:)\|_F^2 \le (1+\gamma) \frac{\|\mathcal{T}\|_F^2}{N} \tag{56} $$

and

$$ \frac{1}{1+\gamma} \frac{\|\mathcal{T}\|_F^2}{N} \le \|T^{(m)}\|_F^2 \le (1+\gamma) \frac{\|\mathcal{T}\|_F^2}{N}. \tag{57} $$

Then, (54) becomes

$$ \|\tilde{\mathcal{T}}(i,:,:) - \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)\|_F^2 \le \frac{2\epsilon_0^2 (1+\gamma)}{\delta p} \|\mathcal{T}(i,:,:)\|_F^2 \tag{58} $$

with probability $p_1$. Meanwhile, since $E \|\mathcal{T}(i,:,:) - \tilde{\mathcal{T}}(i,:,:)\|_F^2 = \left( \frac{1}{p} - p \right) \|\mathcal{T}(i,:,:)\|_F^2$, we have

$$ \Pr\left( \|\mathcal{T}(i,:,:) - \tilde{\mathcal{T}}(i,:,:)\|_F^2 > \nu \|\mathcal{T}(i,:,:)\|_F^2 \right) \le e^{-\zeta^2 \left( \frac{1}{p} - p \right) \frac{\|\mathcal{T}\|_F^2}{3N(1+\gamma)}}, \tag{59} $$

where $\nu = (1+\zeta)\left( \frac{1}{p} - p \right)$ and $\zeta \in [0, 1]$. That means that, with probability at least $p_2 = 1 - e^{-\zeta^2 \left( \frac{1}{p} - p \right) \frac{\|\mathcal{T}\|_F^2}{3N(1+\gamma)}}$,

$$ \|\mathcal{T}(i,:,:) - \tilde{\mathcal{T}}(i,:,:)\|_F^2 \le \nu \|\mathcal{T}(i,:,:)\|_F^2. \tag{60} $$

Combining (58) and (60) and using the triangle inequality, we obtain

\begin{align}
\|\mathcal{T}(i,:,:) - \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)\|_F &\le \|\mathcal{T}(i,:,:) - \tilde{\mathcal{T}}(i,:,:)\|_F + \|\tilde{\mathcal{T}}(i,:,:) - \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)\|_F \nonumber \\
&\le \epsilon \|\mathcal{T}(i,:,:)\|_F. \tag{61}
\end{align}

According to Lemma 4, the probability that sampling according to $\tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)$ provides a bad recommendation is

$$ \Pr_{t \sim \mathcal{U}_N,\, j \sim \tilde{\mathcal{T}}_{\ge \sigma}(i,:,:)} [(i,j,t)\ \text{bad}] \le \left( \frac{\epsilon}{1-\epsilon} \right)^2. \tag{62} $$

D The proof of Theorem 6

Proof. Similar to the complexity analysis of Algorithm 2, the QFT is performed with complexity $O((\log N)^2)$.
The QSVE algorithm takes time $O\left(N\,\mathrm{polylog}(N) / \epsilon^{(m)}_{\mathrm{SVE}}\right)$ and outputs the superposition state with probability $p_3 = 1 - 1/\mathrm{poly}(N)$. In Step 5, we need to repeat the measurement $O\left( \frac{\|\tilde{\mathcal{T}}(i,:,:)\|_F}{\|\hat{\tilde{\mathcal{T}}}_{\ge\sigma}(i,:,:)\|_F} \right)$ times in order to ensure that the probability of getting the outcome $|0\rangle$ in Step 5 is close to 1. For most users, we can prove that $\frac{\|\tilde{\mathcal{T}}(i,:,:)\|_F}{\|\hat{\tilde{\mathcal{T}}}_{\ge\sigma}(i,:,:)\|_F}$ is bounded above by a constant for appropriate parameters. The proof is as follows.

Since $\mathbb{E}\,\|\tilde{\mathcal{T}}(i,:,:)\|_F^2 = \frac{\|\mathcal{T}(i,:,:)\|_F^2}{p} \le \frac{(1+\gamma)\|\mathcal{T}\|_F^2}{pN}$, the Chernoff bound implies that

$$\|\tilde{\mathcal{T}}(i,:,:)\|_F^2 \le \frac{2(1+\gamma)\|\mathcal{T}\|_F^2}{pN} \tag{63}$$

holds with probability close to 1. Moreover, from the previous discussion, there are at least $(1-\delta)N$ users satisfying $\|\mathcal{T}(i,:,:) - \tilde{\mathcal{T}}_{\ge\sigma}(i,:,:)\|_F \le \epsilon \|\mathcal{T}(i,:,:)\|_F$, so by the triangle inequality $(1-\epsilon)\|\mathcal{T}(i,:,:)\|_F \le \|\tilde{\mathcal{T}}_{\ge\sigma}(i,:,:)\|_F \le (1+\epsilon)\|\mathcal{T}(i,:,:)\|_F$. Since the Frobenius norm is unchanged under the Fourier transform, we get

$$(1-\epsilon)\|\hat{\mathcal{T}}^{(i)}\|_F \le \|\hat{\tilde{\mathcal{T}}}_{\ge\sigma}(i,:,:)\|_F \le (1+\epsilon)\|\hat{\mathcal{T}}^{(i)}\|_F. \tag{64}$$

Therefore,

$$\|\hat{\tilde{\mathcal{T}}}_{\ge\sigma}(i,:,:)\|_F^2 \ge (1-\epsilon)^2 \|\hat{\mathcal{T}}^{(i)}\|_F^2 \ge \frac{(1-\epsilon)^2}{1+\gamma} \frac{\|\mathcal{T}\|_F^2}{N}. \tag{65}$$

Combining (63) and (65), we can conclude that for at least $(1-\delta)N$ users, $\frac{\|\tilde{\mathcal{T}}(i,:,:)\|_F}{\|\hat{\tilde{\mathcal{T}}}_{\ge\sigma}(i,:,:)\|_F}$ is bounded with probability $p_1 p_2$, that is,

$$\frac{\|\tilde{\mathcal{T}}(i,:,:)\|_F}{\|\hat{\tilde{\mathcal{T}}}_{\ge\sigma}(i,:,:)\|_F} \le \left( \frac{2(1+\gamma)\frac{\|\mathcal{T}\|_F^2}{pN}}{\frac{(1-\epsilon)^2}{1+\gamma}\frac{\|\mathcal{T}\|_F^2}{N}} \right)^{1/2} = \frac{\sqrt{2}(1+\gamma)}{(1-\epsilon)\sqrt{p}}. \tag{66}$$

The precision of the singular value estimation algorithm on the matrix $\hat{\tilde{T}}^{(m)}$ can be chosen as $\epsilon^{(m)}_{\mathrm{SVE}} = \frac{\sigma^{(m)}}{\|\hat{\tilde{T}}^{(m)}\|_F}$.
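The threshold $\sigma^{(m)}$ behind this precision comes from Lemma 5: if the best rank-$k$ approximation has relative error $\epsilon$, then keeping only the singular values of size at least $\sigma = \epsilon\|A\|_F/\sqrt{k}$ gives relative error at most $2\epsilon$. The sketch below checks this numerically on a classical matrix with NumPy. It only illustrates the inequality and is not part of the quantum algorithm; the function names `truncate_rank`, `truncate_threshold` and `check_lemma5`, and the synthetic low-rank-plus-noise matrix, are hypothetical choices made for this example.

```python
import numpy as np

def truncate_rank(A, k):
    """Best rank-k approximation A_k, computed from the SVD."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    return (U[:, :k] * s[:k]) @ Vt[:k]

def truncate_threshold(A, sigma):
    """A_{>=sigma}: keep only the singular values that are at least sigma."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    keep = s >= sigma
    return (U[:, keep] * s[keep]) @ Vt[keep]

def check_lemma5(A, k):
    """Return (eps, rel_err), where
    eps     = ||A - A_k||_F / ||A||_F, and
    rel_err = ||A - A_{>=sigma}||_F / ||A||_F with sigma = eps * ||A||_F / sqrt(k).
    """
    fro = np.linalg.norm(A)
    eps = np.linalg.norm(A - truncate_rank(A, k)) / fro
    sigma = eps * fro / np.sqrt(k)
    rel_err = np.linalg.norm(A - truncate_threshold(A, sigma)) / fro
    return eps, rel_err

# Hypothetical test matrix: rank 3 plus small Gaussian noise.
rng = np.random.default_rng(1)
low_rank = rng.standard_normal((30, 3)) @ rng.standard_normal((3, 20))
A = low_rank + 0.05 * rng.standard_normal((30, 20))

eps, rel_err = check_lemma5(A, k=3)
print(f"eps = {eps:.4f}, threshold error = {rel_err:.4f}, bound 2*eps = {2 * eps:.4f}")
```

Here $\epsilon$ is taken to be the exact relative error of the rank-$k$ truncation, which is the tightest value for which the hypothesis of Lemma 5 holds.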
Therefore, the total complexity of Algorithm 4 is

$$(\log N)^4 \cdot \frac{N\,\mathrm{polylog}(N)}{\min_m \epsilon^{(m)}_{\mathrm{SVE}}} \cdot \frac{\|\tilde{\mathcal{T}}(i,:,:)\|_F}{\|\hat{\tilde{\mathcal{T}}}_{\ge\sigma}(i,:,:)\|_F} \le (\log N)^4\, N\,\mathrm{polylog}(N)\, \max_m \frac{\|\hat{\tilde{T}}^{(m)}\|_F}{\sigma^{(m)}} \cdot \frac{\sqrt{2}(1+\gamma)}{(1-\epsilon)\sqrt{p}} \approx \frac{\sqrt{k}\, N\,\mathrm{polylog}(N)\,(1+\gamma)}{\min_m \epsilon^{(m)}\,(1-\epsilon)\sqrt{p}},$$

where $\epsilon^{(m)}$ and $\epsilon$ are defined individually in Theorem 5.

References

[1] Dimitris Achlioptas and Frank McSherry. Fast computation of low-rank matrix approximations. Journal of the ACM (JACM), 54(2):9, 2007.
[2] Gediminas Adomavicius and Alexander Tuzhilin. Context-aware recommender systems. In Recommender Systems Handbook, pages 217–253. Springer, 2011.
[3] Pierre Comon. Tensor decompositions. Mathematics in Signal Processing V, pages 1–24, 2002.
[4] Lieven De Lathauwer, Bart De Moor, and Joos Vandewalle. A multilinear singular value decomposition. SIAM Journal on Matrix Analysis and Applications, 21(4):1253–1278, 2000.
[5] Gregory Ely, Shuchin Aeron, Ning Hao, and Misha E Kilmer. 5D seismic data completion and denoising using a novel class of tensor decompositions. Geophysics, 80(4):V83–V95, 2015.
[6] Vittorio Giovannetti, Seth Lloyd, and Lorenzo Maccone. Quantum random access memory. Physical Review Letters, 100(16):160501, 2008.
[7] L. Gu, X. Wang, and G. Zhang. Quantum higher order singular value decomposition. In 2019 IEEE International Conference on Systems, Man and Cybernetics (SMC), pages 1166–1171, Oct 2019.
[8] Ning Hao, Misha Kilmer, Karen Braman, and Randy Hoover. Facial recognition using tensor-tensor decompositions. SIAM Journal on Imaging Sciences [electronic only], 6, 02 2013.
[9] Ning Hao, Misha E Kilmer, Karen Braman, and Randy C Hoover. Facial recognition using tensor-tensor decompositions. SIAM Journal on Imaging Sciences, 6(1):437–463, 2013.
[10] Shenglong Hu, Liqun Qi, and Guofeng Zhang.
Computing the geometric measure of entanglement of multipartite pure states by means of non-negative tensors. Physical Review A, 93(1):012304, 2016.
[11] Iordanis Kerenidis and Anupam Prakash. Quantum gradient descent for linear systems and least squares. arXiv preprint arXiv:1704.04992, 2017.
[12] Iordanis Kerenidis and Anupam Prakash. Quantum Recommendation Systems. In Christos H. Papadimitriou, editor, 8th Innovations in Theoretical Computer Science Conference (ITCS 2017), volume 67 of Leibniz International Proceedings in Informatics (LIPIcs), pages 49:1–49:21, Dagstuhl, Germany, 2017. Schloss Dagstuhl–Leibniz-Zentrum fuer Informatik.
[13] Misha E Kilmer and Carla D Martin. Factorization strategies for third-order tensors. Linear Algebra and its Applications, 435(3):641–658, 2011.
[14] Tamara G Kolda and Brett W Bader. Tensor decompositions and applications. SIAM Review, 51(3):455–500, 2009.
[15] Seth Lloyd, Masoud Mohseni, and Patrick Rebentrost. Quantum principal component analysis. Nature Physics, 10(9):631, 2014.
[16] Yunpu Ma, Yuyi Wang, and Volker Tresp. Quantum machine learning algorithm for knowledge graphs. arXiv preprint arXiv:2001.01077, 2020.
[17] Carla D Martin, Richard Shafer, and Betsy LaRue. An order-p tensor factorization with applications in imaging. SIAM Journal on Scientific Computing, 35(1):A474–A490, 2013.
[18] Yun Miao, Liqun Qi, and Yimin Wei. Generalized tensor function via the tensor singular value decomposition based on the t-product. arXiv preprint arXiv:1901.04255, 2019.
[19] M.A. Nielsen and I.L. Chuang. Quantum Computation and Information. Cambridge University Press, London, 2010.
[20] Ivan V Oseledets. Tensor-train decomposition. SIAM Journal on Scientific Computing, 33(5):2295–2317, 2011.
[21] Anupam Prakash. Quantum algorithms for linear algebra and machine learning. PhD thesis, UC Berkeley, 2014.
[22] Liqun Qi, Haibin Chen, and Yannan Chen.
Tensor eigenvalues and their applications, volume 39. Springer, 2018.
[23] Liqun Qi and Ziyan Luo. Tensor analysis: spectral theory and special tensors, volume 151. SIAM, 2017.
[24] Liqun Qi, Guofeng Zhang, and Guyan Ni. How entangled can a multi-party system possibly be? Physics Letters A, 382(22):1465–1471, 2018.
[25] LQ Qi, GF Zhang, D Braun, F Bohnet-Waldraff, and O Giraud. Regularly decomposable tensors and classical spin states. Communications in Mathematical Sciences, 2017.
[26] Patrick Rebentrost, Maria Schuld, Leonard Wossnig, Francesco Petruccione, and Seth Lloyd. Quantum gradient descent and Newton's method for constrained polynomial optimization. New Journal of Physics, 21(7):073023, 2019.
[27] Patrick Rebentrost, Adrian Steffens, Iman Marvian, and Seth Lloyd. Quantum singular-value decomposition of nonsparse low-rank matrices. Physical Review A, 97(1):012327, 2018.
[28] Steffen Rendle, Leandro Balby Marinho, Alexandros Nanopoulos, and Lars Schmidt-Thieme. Learning optimal ranking with tensor factorization for tag recommendation. In Proceedings of the 15th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pages 727–736, 2009.
[29] Steffen Rendle and Lars Schmidt-Thieme. Pairwise interaction tensor factorization for personalized tag recommendation. In Proceedings of the Third ACM International Conference on Web Search and Data Mining, pages 81–90, 2010.
[30] Sheldon M Ross. Introduction to Probability Models, ISE. Academic Press, 2006.
[31] Changpeng Shao and Hua Xiang. Quantum circulant preconditioner for a linear system of equations. Physical Review A, 98(6):062321, 2018.
[32] Marvi Teixeira and Domingo Rodriguez. A class of fast cyclic convolution algorithms based on block pseudocirculants. IEEE Signal Processing Letters, 2(5):92–94, 1995.
[33] Ledyard R Tucker. Some mathematical notes on three-mode factor analysis.
Psychometrika, 31(3):279–311, 1966.
[34] Mincheng Wu, Shibo He, Yongtao Zhang, Jiming Chen, Youxian Sun, Yang-Yu Liu, Junshan Zhang, and H Vincent Poor. A tensor-based framework for studying eigenvector multicentrality in multilayer networks. Proceedings of the National Academy of Sciences, 116(31):15407–15413, 2019.
[35] Guofeng Zhang. Dynamical analysis of quantum linear systems driven by multi-channel multi-photon states. Automatica, 83:186–198, 2017.
[36] Jiani Zhang, Arvind K Saibaba, Misha E Kilmer, and Shuchin Aeron. A randomized tensor singular value decomposition based on the t-product. Numerical Linear Algebra with Applications, 25(5):e2179, 2018.
[37] Mengshi Zhang, Guyan Ni, and Guofeng Zhang. Iterative methods for computing u-eigenvalues of non-symmetric complex tensors with application in quantum entanglement. Computational Optimization and Applications, pages 1–20, 2019.
[38] Zemin Zhang and Shuchin Aeron. Exact tensor completion using t-svd. IEEE Transactions on Signal Processing, 65(6):1511–1526, 2016.
[39] Zemin Zhang, Gregory Ely, Shuchin Aeron, Ning Hao, and Misha Kilmer. Novel methods for multilinear data completion and de-noising based on tensor-svd. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 3842–3849, 2014.
[40] Pan Zhou, Canyi Lu, Zhouchen Lin, and Chao Zhang. Tensor factorization for low-rank tensor completion. IEEE Transactions on Image Processing, 27(3):1152–1163, 2017.
