Driver Identification by Neural Network on Extracted Statistical Features from Smartphone Data

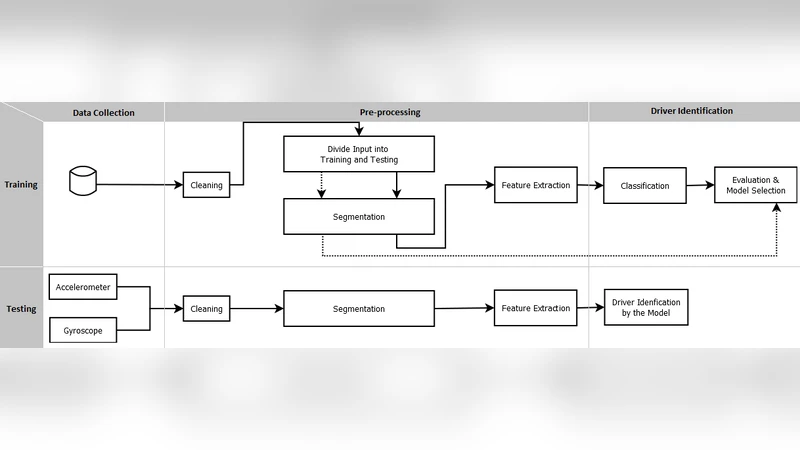

The future of transportation will be shaped by artificial intelligence applied to improve mobility and quality of life. This paper presents a neural network-based system for driver identification using data collected by a smartphone. The system identifies the driver automatically, reliably, and in real time, without facial recognition and without violating privacy. The system architecture consists of three modules: data collection, preprocessing, and identification. In the data collection module, accelerometer and gyroscope data are collected using a smartphone. The preprocessing module performs noise removal, data cleaning, and segmentation: missing values are recovered, and data recorded while the vehicle is stopped are deleted. Finally, effective statistical features are extracted from the data windows. In the identification module, machine learning algorithms are used to identify drivers’ patterns. According to the experiments, the best algorithm for driver identification is the MLP, with a maximum accuracy of 96%. This solution can be used in future transportation to develop driver-based insurance systems as well as systems for applying penalties and incentives.

💡 Research Summary

The paper proposes a privacy‑preserving driver identification system that relies solely on data collected from a standard smartphone’s built‑in accelerometer and gyroscope. The authors argue that such a solution can operate in real time, avoid the need for facial recognition or vehicle‑integrated hardware, and therefore be more acceptable to users while still providing reliable identification for applications such as usage‑based insurance, driver‑specific incentives, or enforcement.

The abstract describes three modules (data collection, preprocessing, and identification), with feature extraction as the final preprocessing step; this summary treats feature extraction as a separate fourth stage. In the data‑collection phase, raw three‑axis acceleration and angular‑velocity signals are sampled at roughly 100 Hz while the phone is assumed to be fixed on the dashboard or a dedicated mount. Ten participants each drove the same compact car for 30 minutes, providing a modest but controlled dataset.

Preprocessing first applies a fourth‑order Butterworth low‑pass filter to suppress high‑frequency noise, then fills missing samples using linear interpolation. Segments where the vehicle speed falls below 0.5 m/s are discarded as “stopped” periods, because they contain no discriminative driving behavior. The cleaned signal is then segmented into overlapping windows of five seconds with a 50 % overlap, preparing the data for statistical analysis.
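The preprocessing steps described above can be sketched roughly as follows. This is a minimal illustration using NumPy and SciPy, not the authors' implementation. The 10 Hz cutoff frequency, the function names, and the availability of a per-sample speed signal (for example from GPS) are assumptions that the summary does not specify. The sketch also interpolates before filtering, because a filter would otherwise spread NaN values through the signal; the summary lists those two steps in the opposite order.

```python
import numpy as np
from scipy.signal import butter, filtfilt

FS = 100.0        # sampling rate in Hz (the summary says "roughly 100 Hz")
CUTOFF_HZ = 10.0  # ASSUMPTION: the cutoff frequency is not given in the summary
WINDOW_S = 5.0    # window length in seconds, from the summary
OVERLAP = 0.5     # 50 % overlap, from the summary
STOP_SPEED = 0.5  # stop threshold in m/s, from the summary


def fill_missing(signal):
    """Linearly interpolate NaN samples in each column of (n_samples, n_channels)."""
    filled = np.asarray(signal, dtype=float).copy()
    idx = np.arange(len(filled))
    for ch in range(filled.shape[1]):
        missing = np.isnan(filled[:, ch])
        if missing.any() and not missing.all():
            filled[missing, ch] = np.interp(
                idx[missing], idx[~missing], filled[~missing, ch]
            )
    return filled


def lowpass(signal, fs=FS, cutoff=CUTOFF_HZ, order=4):
    """Fourth-order Butterworth low-pass filter applied along the time axis.

    filtfilt runs the filter forward and backward, which removes phase lag
    but needs the whole recording; a streaming system would need a causal
    filter instead.
    """
    b, a = butter(order, cutoff, btype="low", fs=fs)
    return filtfilt(b, a, signal, axis=0)


def drop_stopped(signal, speed, threshold=STOP_SPEED):
    """Keep only the samples recorded at or above the speed threshold."""
    return signal[np.asarray(speed) >= threshold]


def sliding_windows(signal, fs=FS, window_s=WINDOW_S, overlap=OVERLAP):
    """Cut the signal into overlapping windows: (n_windows, window_len, n_channels)."""
    size = int(round(window_s * fs))
    step = max(1, int(round(size * (1.0 - overlap))))
    if len(signal) < size:
        return np.empty((0, size, signal.shape[1]))
    starts = range(0, len(signal) - size + 1, step)
    return np.stack([signal[s:s + size] for s in starts])


def preprocess(raw, speed):
    """Interpolate, filter, remove stopped samples, then segment."""
    clean = lowpass(fill_missing(raw))
    return sliding_windows(drop_stopped(clean, speed))
```

At 100 Hz, a five-second window holds 500 samples and a 50 % overlap gives a step of 250 samples, so 50 seconds of moving data yields 19 windows.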

From each window, the authors compute a set of twenty statistical descriptors, including mean, standard deviation, median, max/min, RMS, coefficient of variation, signal energy, spectral entropy, peak‑to‑peak ratio, and cross‑correlation between acceleration and angular velocity. These features aim to capture driver‑specific habits such as acceleration aggressiveness, braking intensity, cornering style, and vibration patterns. All features are standardized (z‑score) before being fed to the classifier.
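As a rough illustration of window-level feature extraction, the sketch below computes a subset of the descriptors named above for every sensor channel and then standardizes them. The summary does not give the exact list of twenty features or how they map onto the six channels, so the feature set and the resulting vector length here are assumptions. The `peak_to_peak` value is the plain max-minus-min range, which may differ from the "peak‑to‑peak ratio" the summary mentions.

```python
import numpy as np


def spectral_entropy(window, eps=1e-12):
    """Shannon entropy (bits) of the normalized power spectrum, per channel."""
    psd = np.abs(np.fft.rfft(window, axis=0)) ** 2
    p = psd / (psd.sum(axis=0, keepdims=True) + eps)
    return -np.sum(p * np.log2(p + eps), axis=0)


def window_features(window):
    """Map one window of shape (n_samples, n_channels) to a 1-D feature vector."""
    vmax = window.max(axis=0)
    vmin = window.min(axis=0)
    return np.concatenate([
        window.mean(axis=0),
        window.std(axis=0),
        np.median(window, axis=0),
        vmax,
        vmin,
        np.sqrt(np.mean(window ** 2, axis=0)),  # RMS
        np.sum(window ** 2, axis=0),            # signal energy
        vmax - vmin,                            # peak-to-peak range
        spectral_entropy(window),
    ])


def extract_features(windows):
    """Apply window_features to every window: (n_windows, n_features)."""
    return np.stack([window_features(w) for w in windows])


def zscore(features, eps=1e-12):
    """Standardize each feature column to zero mean and unit variance.

    In a real train/test split, the mean and standard deviation should be
    estimated on the training windows only and reused for the test windows.
    """
    mu = features.mean(axis=0)
    sigma = features.std(axis=0)
    return (features - mu) / (sigma + eps)
```

With nine descriptors and six channels, this sketch produces 54 values per window rather than the 20 reported in the summary; a feature-selection step or a different channel mapping would be needed to match that dimension.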

The identification module evaluates several machine‑learning algorithms: linear and RBF‑kernel SVMs, K‑Nearest Neighbors (k = 5), Random Forest (100 trees), a one‑dimensional convolutional neural network, and a multilayer perceptron (MLP). The MLP architecture consists of an input layer matching the 20‑dimensional feature vector, two hidden layers with 64 and 32 ReLU neurons respectively, dropout (0.5) and batch normalization, and a soft‑max output layer for the ten driver classes. Training uses the Adam optimizer (learning rate = 0.001) and categorical cross‑entropy loss, with early stopping after ten epochs of no improvement.
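To make the layer sizes concrete, the following NumPy sketch implements only the inference-time forward pass of a 20‑64‑32‑10 network with ReLU hidden layers and a softmax output. It uses randomly initialized weights and is not a trained model or the authors' code. Dropout is omitted because it is inactive at inference, and batch normalization is left out because the summary does not say where it is placed; training with Adam and early stopping would normally be done in a deep-learning framework.

```python
import numpy as np

# Layer widths from the summary: 20 features -> 64 -> 32 -> 10 drivers.
LAYER_SIZES = [20, 64, 32, 10]


def init_params(rng, sizes=LAYER_SIZES):
    """He-style random initialization (an assumption; untrained weights)."""
    params = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        w = rng.normal(0.0, np.sqrt(2.0 / n_in), size=(n_in, n_out))
        b = np.zeros(n_out)
        params.append((w, b))
    return params


def softmax(logits):
    """Numerically stable softmax over the class axis."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)


def forward(params, x):
    """Return class probabilities for a batch x of shape (batch, 20)."""
    h = x
    for w, b in params[:-1]:
        h = np.maximum(h @ w + b, 0.0)  # ReLU hidden layer
    w, b = params[-1]
    return softmax(h @ w + b)


def count_params(params):
    """Total number of weights and biases in the dense layers."""
    return sum(w.size + b.size for w, b in params)
```

Counting only the dense layers, this architecture has 1,344 + 2,080 + 330 = 3,754 trainable parameters, which is consistent with the summary's description of the pipeline as lightweight.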

Experimental results show that the MLP achieves the highest performance, reaching 96 % accuracy and an F1‑score of 0.94. The SVM obtains 89 % accuracy, Random Forest 85 %, and the 1‑D CNN 92 %. Confusion‑matrix analysis reveals that a few drivers with similar acceleration‑braking patterns are occasionally misclassified, but overall the statistical feature set provides sufficient separability.
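For readers unfamiliar with how accuracy and F1 follow from a confusion matrix, here is a small sketch. The 3×3 matrix is a made-up toy example, not data from the paper, and the macro average is an assumption: the summary does not state which F1 averaging the authors used.

```python
import numpy as np

# Toy confusion matrix (rows: true driver, columns: predicted driver).
# These counts are invented for illustration only.
TOY_CM = np.array([
    [8, 1, 1],
    [0, 9, 1],
    [2, 0, 8],
])


def accuracy(cm):
    """Fraction of samples on the diagonal (correctly classified)."""
    return np.trace(cm) / cm.sum()


def macro_f1(cm):
    """Unweighted mean of the per-class F1 scores."""
    tp = np.diag(cm).astype(float)
    predicted = cm.sum(axis=0)  # column sums: samples predicted as each class
    actual = cm.sum(axis=1)     # row sums: samples truly in each class
    precision = np.divide(tp, predicted, out=np.zeros_like(tp), where=predicted > 0)
    recall = np.divide(tp, actual, out=np.zeros_like(tp), where=actual > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom, out=np.zeros_like(tp), where=denom > 0)
    return f1.mean()
```

Off-diagonal cells show which pairs of drivers are confused with each other, which is the kind of analysis the summary refers to.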

Key contributions include (1) demonstrating that a smartphone alone can reliably identify drivers in real time, (2) achieving high accuracy with a lightweight statistical‑feature‑plus‑MLP pipeline, which minimizes computational load and battery consumption, and (3) preserving user privacy by avoiding video or biometric data.

Nevertheless, the study has notable limitations. The dataset is small, homogeneous (single vehicle model, fixed phone position), and collected under limited road and weather conditions, raising concerns about generalization to diverse real‑world scenarios. The impact of varying phone placement (e.g., pocket, cup holder) and different vehicle dynamics is not examined. Moreover, the paper does not quantify real‑time latency, processing overhead, or energy usage on typical mobile hardware, which are critical for deployment.

Future work suggested by the authors includes expanding the dataset to cover a broader range of drivers, vehicle types, road surfaces, and environmental conditions; exploring deep sequential models such as LSTM, GRU, or Transformer that can learn temporal patterns directly from raw sensor streams; applying model compression techniques (pruning, quantization) to enable on‑device inference with minimal power draw; and conducting field trials that integrate driver identification outcomes with insurance pricing, incentive programs, or traffic‑law enforcement systems. By addressing these challenges, the proposed smartphone‑based driver identification approach could become a practical component of next‑generation intelligent transportation systems.
