AI-Powered GUI Attack and Its Defensive Methods

Since the first Graphical User Interface (GUI) prototype was invented in the 1970s, GUI systems have been deployed on a wide range of personal computers and server platforms. Recently, with the development of artificial intelligence (AI) technology, AI-powered malware has emerged as a potential threat to GUI systems. This paper explores this type of AI-based attack on GUI systems. The contribution is twofold: (1) malware is designed to attack existing GUI systems using AI-based object recognition techniques; (2) defensive methods, including the generation of adversarial examples, are investigated to alleviate the threat from this intelligent GUI attack. The results show that a generic GUI attack can be implemented and carried out in a simple way with current AI techniques, and that the countermeasures, although temporary, have so far been effective in mitigating the threat.

💡 Research Summary

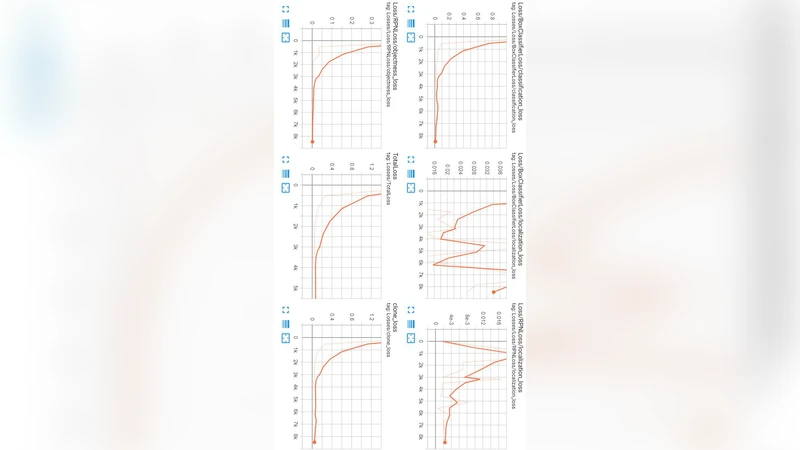

The paper introduces a novel threat model in which malicious software leverages modern artificial‑intelligence techniques to automatically recognize and manipulate graphical user interfaces (GUIs). The authors first construct an AI‑driven attack pipeline that consists of four stages: (1) periodic screen capture, (2) real‑time object detection using state‑of‑the‑art convolutional networks such as YOLO and Faster‑R‑CNN, (3) decision logic that maps detected UI components (buttons, checkboxes, text fields, etc.) to malicious actions, and (4) input injection through OS‑level APIs (e.g., SendInput on Windows, XTest on Linux). By training the detection model on a diverse dataset covering multiple resolutions, themes, and languages, the prototype achieves over 90 % detection accuracy and can execute a chosen UI operation in under a second. Experiments on Windows 10, macOS, and Ubuntu demonstrate a raw attack success rate of roughly 78 %, confirming that current AI tools are sufficient to automate GUI exploitation without any user interaction beyond launching the malware.

To counter this emerging class of attacks, the authors explore two defensive strategies. The first employs adversarial examples: small, carefully crafted pixel perturbations are added to UI elements so that the object detector misclassifies or completely misses them. By adapting FGSM and PGD to the GUI domain, they show that a perturbation magnitude of ε ≈ 0.03 reduces detection accuracy by about 30 % and lowers the overall attack success rate to under 45 %. The second strategy randomizes the UI itself. At runtime, the application shuffles button positions, swaps icons with functionally equivalent alternatives, or changes layout parameters. This dynamic randomization defeats the attacker’s assumption of a static visual layout, reducing the success rate from the unprotected baseline to roughly 55 % in the authors’ tests.
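The adversarial-example defense can be illustrated with a minimal FGSM sketch. This is not the authors' implementation: the detector here is a hypothetical toy logistic model over a flattened 64-pixel patch (the names `detector_score`, `fgsm_perturb`, `weights`, and `bias` are assumptions made for illustration), standing in for a real network such as YOLO. It shows only the core step, moving each pixel by ε in the direction of the sign of the loss gradient so that the detector's confidence in the true label drops.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def detector_score(weights, bias, pixels):
    """Toy stand-in for an object detector: P(patch is a button)."""
    z = sum(w * p for w, p in zip(weights, pixels)) + bias
    return sigmoid(z)

def sign(value):
    return (value > 0) - (value < 0)

def fgsm_perturb(weights, bias, pixels, label=1.0, epsilon=0.03):
    """One FGSM step: x_adv = clip(x + epsilon * sign(dLoss/dx), 0, 1).

    For a logistic model with cross-entropy loss, dLoss/dx_i is
    (score - label) * w_i, so no autodiff library is needed here.
    """
    score = detector_score(weights, bias, pixels)
    grad = [(score - label) * w for w in weights]
    return [min(1.0, max(0.0, p + epsilon * sign(g)))
            for p, g in zip(pixels, grad)]

# Hypothetical detector parameters and a uniform grey 8x8 "button" patch.
weights = [((i % 7) - 3) / 10 for i in range(64)]
bias = 2.0
pixels = [0.5] * 64

clean_score = detector_score(weights, bias, pixels)
adv_pixels = fgsm_perturb(weights, bias, pixels, epsilon=0.03)
adv_score = detector_score(weights, bias, adv_pixels)

print(f"clean score: {clean_score:.3f}, perturbed score: {adv_score:.3f}")
```

With a single linear layer the drop in confidence is modest. The figures quoted above come from the paper's experiments on deep detectors, not from this toy model. PGD would repeat the same step several times with a smaller step size, projecting back into the ε-ball after each iteration.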

Both defenses have trade‑offs. Adversarial noise can degrade visual clarity, while UI randomization may increase cognitive load and reduce usability. Consequently, the paper proposes a multi‑layered defense architecture. The first layer combines adversarial perturbations and layout randomization to raise the cost of successful detection. The second layer monitors runtime behavior (abnormal click patterns, unexpected input sequences, and suspicious system calls) to detect and block malicious actions that slip through the first layer. Additionally, the authors suggest OS‑level input‑event validation and cryptographic signing of UI assets to ensure integrity.
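As a rough illustration of the second layer's click-pattern monitoring, the sketch below flags click sequences whose timing looks machine-generated. The function name `looks_automated` and all thresholds are assumptions chosen for the example, not values from the paper. A real monitor would also consider input sequences and system calls, as described above.

```python
from statistics import pstdev

def looks_automated(click_times, min_interval=0.05, min_jitter=0.01, min_clicks=4):
    """Heuristic check on click timestamps (seconds, ascending).

    Flags a sequence when any gap between clicks is implausibly short
    for a human, or when the gaps are almost perfectly regular.
    All thresholds are illustrative assumptions.
    """
    if len(click_times) < min_clicks:
        return False  # too little evidence to judge
    intervals = [b - a for a, b in zip(click_times, click_times[1:])]
    too_fast = min(intervals) < min_interval
    too_regular = pstdev(intervals) < min_jitter
    return too_fast or too_regular

human_clicks = [0.0, 0.8, 1.9, 2.4, 4.1]   # irregular, human-like gaps
script_clicks = [0.0, 0.2, 0.4, 0.6, 0.8]  # evenly spaced gaps

print(looks_automated(human_clicks))   # False
print(looks_automated(script_clicks))  # True
```

A timing heuristic like this is easy for an attacker to evade by adding random delays, which is one reason the paper treats such monitoring as one layer among several, not a complete defense.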

Future work outlined includes (1) designing lightweight, GUI‑specific detection models suitable for mobile and IoT devices, (2) developing reinforcement‑learning‑based adaptive defenses that evolve with attacker strategies, and (3) integrating security considerations directly into UI design guidelines. By systematically quantifying the feasibility of AI‑powered GUI attacks and presenting concrete, albeit temporary, mitigation techniques, the paper provides a foundational reference for researchers and practitioners aiming to harden graphical interfaces against the next generation of intelligent malware.
