Towards a Framework for Certification of Reliable Autonomous Systems

A computational system is called autonomous if it is able to make its own decisions, or take its own actions, without human supervision or control. The capability and spread of such systems have reached the point where they are beginning to touch much of everyday life. However, regulators are still grappling with how to deal with autonomous systems: for example, how could we certify an Unmanned Aerial System for autonomous use in civilian airspace? Here we analyse what is needed to provide verified reliable behaviour of an autonomous system, assess what the current state of the art in automated verification can achieve, and propose a roadmap towards developing regulatory guidelines, including articulating challenges for researchers, engineers, and regulators. Case studies in seven distinct domains illustrate the article.

💡 Research Summary

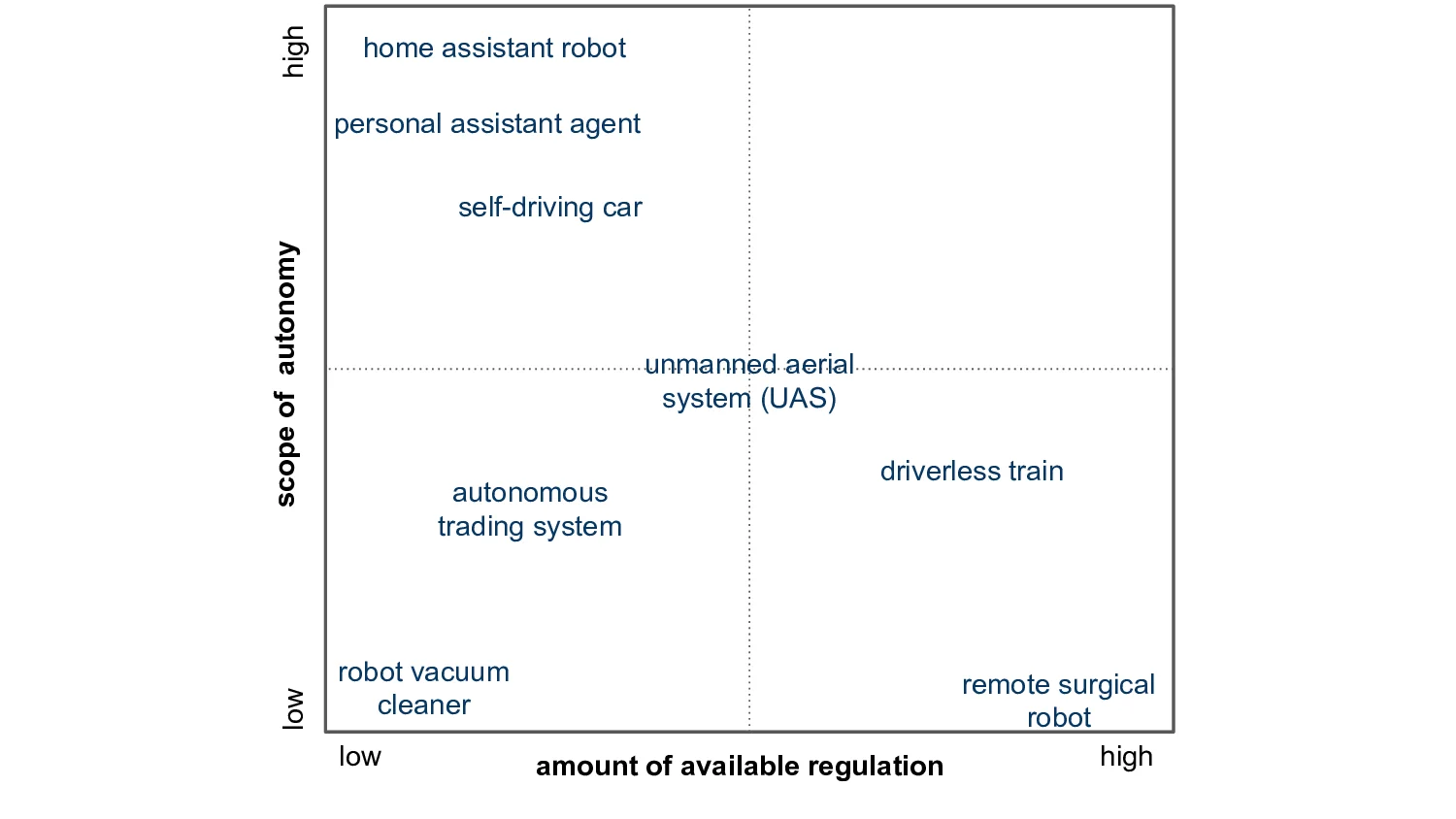

The paper tackles the pressing problem of how to certify the reliability of increasingly prevalent autonomous systems. It begins by distinguishing between “autonomy” – the ability of a system to make and act on decisions without human supervision – and “reliability,” defined as consistent fulfillment of functional requirements, including adherence to rules and appropriate handling of rare, exceptional situations. The authors adopt a six‑grade autonomy scale derived from SAE International and extend it with a “low” level, resulting in seven categories ranging from no autonomy to full autonomy. They also introduce the notion of “scope of autonomy,” which captures how many functional capabilities are covered by the autonomous behaviour.
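To make the two notions concrete, the sketch below encodes a seven-grade scale as an ordered enumeration and treats scope of autonomy as the fraction of a system's capabilities performed without human control. The grade names (SAE J3016's six levels plus a `LOW` grade placed directly after `NONE`), the class `AutonomousSystem`, and the fraction-based measure are illustrative assumptions, not definitions taken from the paper.

```python
from dataclasses import dataclass
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical seven-grade scale: SAE J3016's six levels plus an extra LOW grade.

    Where LOW sits in the ordering is an assumption made for this sketch.
    """
    NONE = 0
    LOW = 1
    ASSISTANCE = 2
    PARTIAL = 3
    CONDITIONAL = 4
    HIGH = 5
    FULL = 6


@dataclass(frozen=True)
class AutonomousSystem:
    """Illustrative record pairing an autonomy grade with a scope of autonomy."""
    name: str
    level: Autonomy
    capabilities: frozenset  # every functional capability the system offers
    autonomous: frozenset    # the subset performed without human supervision

    def __post_init__(self) -> None:
        if not self.autonomous <= self.capabilities:
            raise ValueError("autonomous capabilities must be a subset of all capabilities")

    def scope_of_autonomy(self) -> float:
        """Fraction of capabilities covered by autonomous behaviour (an assumed measure)."""
        if not self.capabilities:
            return 0.0
        return len(self.autonomous) / len(self.capabilities)


# Hypothetical example: a surgical robot that only positions its camera autonomously.
robot = AutonomousSystem(
    name="surgical-robot",
    level=Autonomy.LOW,
    capabilities=frozenset({"camera_positioning", "incision", "suturing"}),
    autonomous=frozenset({"camera_positioning"}),
)
print(robot.level.name, round(robot.scope_of_autonomy(), 2))  # LOW 0.33
```

Keeping the grade and the scope as separate fields reflects the distinction drawn above: a system can be highly autonomous within a narrow scope, or only mildly autonomous across many capabilities.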

A survey of the current regulatory landscape shows that many international standards (issued by bodies such as IEC, ISO, IEEE, and CENELEC) address generic safety and functional-safety concerns but largely ignore autonomy-specific issues such as uncertainty, learning, and dynamic decision making. Moreover, most standards remain textual and are not expressed in a formal language that automated verification tools can consume directly.
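As an illustration of the gap between textual and formal requirements, a prose rule such as "brake within two control cycles of detecting an obstacle" (a hypothetical rule, not one quoted from any standard) can be written as a bounded-response temporal property and checked mechanically against a recorded execution trace. The function below is a minimal finite-trace checker written for this sketch; the proposition names and the trace format are assumptions.

```python
from typing import Mapping, Sequence

# A trace is a finite sequence of observations; each maps a proposition name to its truth value.
Trace = Sequence[Mapping[str, bool]]


def bounded_response(trace: Trace, trigger: str, response: str, k: int) -> bool:
    """Check G(trigger -> F[0,k] response) on a finite trace.

    Every step where `trigger` holds must be followed, within k steps
    (counting the step itself), by a step where `response` holds.
    A window cut short by the end of the trace without a response counts
    as a violation, i.e. a pessimistic reading of finite-trace semantics.
    """
    for i, step in enumerate(trace):
        if step.get(trigger, False):
            window = trace[i : i + k + 1]
            if not any(obs.get(response, False) for obs in window):
                return False
    return True


# Hypothetical textual rule: "after detecting an obstacle, brake within 2 control cycles".
trace = [
    {"obstacle": False, "brake": False},
    {"obstacle": True, "brake": False},
    {"obstacle": True, "brake": False},
    {"obstacle": False, "brake": True},
]
print(bounded_response(trace, "obstacle", "brake", k=2))  # True
print(bounded_response(trace, "obstacle", "brake", k=1))  # False
```

Once the rule is in this form, a verification tool rather than a human reader decides whether a given behaviour complies.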

To bridge this gap, the authors propose a three‑layer reference framework for certification:

- Functional Layer: captures the services the system must provide and the safety requirements derived from existing functional-safety standards (e.g., ISO 26262, IEC 61508). Hazard analysis and safety goals are defined here.
- Behavioral Layer: models the set of executable actions and state transitions. Formal verification techniques such as model checking and theorem proving are applied to ensure that every reachable behaviour satisfies the functional safety properties, complemented by simulation-based testing.
- Intentional Layer: represents internal mental-state constructs (goals, beliefs, intentions) typical of rational agents. By formalising these constructs using BDI (Belief-Desire-Intention) models or goal-oriented logics, the framework can verify that the system's intentions remain consistent with ethical norms and regulatory constraints, even when it must override ordinary rules in emergency situations.
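For the behavioural layer, the core idea of explicit-state model checking can be sketched in a few lines: enumerate every reachable state of a finite model, check a safety invariant in each, and return a counterexample path if one fails. Production model checkers such as SPIN (explicit-state) and NuSMV (symbolic) use far more scalable algorithms; the driverless-train door model below is a hypothetical toy chosen for this sketch, and `check_invariant`, `train_successors`, and `doors_safe` are illustrative names.

```python
from collections import deque
from typing import Callable, Hashable, Iterable, List, Optional


def check_invariant(
    initial: Hashable,
    successors: Callable[[Hashable], Iterable[Hashable]],
    invariant: Callable[[Hashable], bool],
) -> Optional[List[Hashable]]:
    """Explicit-state model checking of a safety invariant by breadth-first search.

    Returns None if the invariant holds in every reachable state; otherwise
    returns a shortest path (counterexample) from `initial` to a violating state.
    """
    parent = {initial: None}
    queue = deque([initial])
    while queue:
        state = queue.popleft()
        if not invariant(state):
            path = [state]
            while parent[path[-1]] is not None:
                path.append(parent[path[-1]])
            return path[::-1]
        for nxt in successors(state):
            if nxt not in parent:  # visit each state once
                parent[nxt] = state
                queue.append(nxt)
    return None


MAX_SPEED = 2  # hypothetical discretised speed range 0..2


def train_successors(state, doors_guarded: bool):
    """Hypothetical driverless-train controller; state = (speed, doors_open)."""
    speed, doors_open = state
    if not doors_open and speed < MAX_SPEED:
        yield (speed + 1, doors_open)  # accelerate (only with doors closed)
    if speed > 0:
        yield (speed - 1, doors_open)  # brake
    if not doors_open and (speed == 0 or not doors_guarded):
        yield (speed, True)            # open doors
    if doors_open:
        yield (speed, False)           # close doors


def doors_safe(state) -> bool:
    """Safety property: doors are never open while the train is moving."""
    speed, doors_open = state
    return not (doors_open and speed > 0)


print(check_invariant((0, False), lambda s: train_successors(s, False), doors_safe))
# [(0, False), (1, False), (1, True)]
print(check_invariant((0, False), lambda s: train_successors(s, True), doors_safe))
# None
```

The unguarded controller yields a counterexample in which the train accelerates and then opens its doors while moving; adding the speed guard makes the invariant hold in every reachable state.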

The paper details a “property-derivation process” that starts from regulatory requirements, proceeds to functional specifications, then to behavioural models, and finally to intentional models. At each step, verification properties are extracted and passed to verification tools such as the SPIN and NuSMV model checkers, the Coq proof assistant, or the Alloy Analyzer. This systematic pipeline enables a compositional proof of reliability that regulators can inspect.
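The traceability that lets regulators inspect the evidence can be sketched as a chain of properties, each recording the property it was derived from. The `Property` class, the layer names as strings, the one-layer-at-a-time refinement rule, and the example requirements are assumptions for illustration; only the regulatory-to-intentional ordering comes from the text. `INVARSPEC` is a real NuSMV keyword, but the model it would refer to is not shown.

```python
from dataclasses import dataclass
from typing import List, Optional

# Layer order assumed from the derivation process: each step refines the previous one.
LAYERS = ("regulatory", "functional", "behavioural", "intentional")


@dataclass(frozen=True)
class Property:
    """A requirement at one layer, linked back to the requirement it was derived from."""
    layer: str
    text: str
    tool: Optional[str] = None          # verification tool targeted, if machine-checkable
    parent: Optional["Property"] = None

    def __post_init__(self) -> None:
        if self.layer not in LAYERS:
            raise ValueError(f"unknown layer: {self.layer}")

    def refine(self, layer: str, text: str, tool: Optional[str] = None) -> "Property":
        """Derive a property for the next layer; skipping a layer is rejected."""
        if LAYERS.index(layer) != LAYERS.index(self.layer) + 1:
            raise ValueError(f"cannot derive {layer} property from {self.layer} property")
        return Property(layer, text, tool, parent=self)

    def trace(self) -> List["Property"]:
        """Chain from the originating regulatory requirement down to this property."""
        chain, node = [], self
        while node is not None:
            chain.append(node)
            node = node.parent
        return chain[::-1]


# Hypothetical requirement chain for an unmanned aerial system.
reg = Property("regulatory", "Do not enter restricted airspace")
fun = reg.refine("functional", "Path planner rejects routes crossing restricted zones")
beh = fun.refine("behavioural", "INVARSPEC !(zone = restricted)", tool="NuSMV")
intn = beh.refine("intentional", "No intention is adopted whose plan enters a restricted zone")

for p in intn.trace():
    print(f"{p.layer:12} {p.tool or '-':7} {p.text}")
```

Because every derived property keeps a link to its parent, a reviewer can start from any verified property and walk back to the regulatory clause that motivated it.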

To demonstrate applicability, seven case studies are examined: home‑assistant robots, self‑driving cars, unmanned aerial systems (UAS), driverless trains, autonomous trading agents, personal‑assistant agents, and remote surgical robots (which currently have limited autonomy). For each domain the authors map expected autonomy levels against existing regulatory strength, highlighting gaps where the proposed framework can provide additional assurance. For instance, UAS operate at high autonomy but face weak regulation, suggesting a need for rigorous behavioural and intentional verification.
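The autonomy-versus-regulation mapping lends itself to a simple gap ranking. The sketch below assumes three-point ordinal scales for both axes and treats any positive difference as a candidate for extra behavioural and intentional verification. The scales, the function name `assurance_gaps`, and the ranking rule are illustrative assumptions; only the UAS placement is taken from the text above.

```python
from typing import Dict, List, Tuple

# Hypothetical ordinal scales; the paper's own scales may differ.
AUTONOMY_RANK = {"low": 0, "medium": 1, "high": 2}
REGULATION_RANK = {"weak": 0, "moderate": 1, "strong": 2}


def assurance_gaps(domains: Dict[str, Tuple[str, str]]) -> List[Tuple[str, int]]:
    """Rank domains whose autonomy level outstrips the strength of their regulation.

    `domains` maps a domain name to (autonomy, regulation). The gap is the
    difference in ranks; only positive gaps are reported, largest first,
    with ties broken alphabetically by domain name.
    """
    gaps = []
    for name, (autonomy, regulation) in domains.items():
        gap = AUTONOMY_RANK[autonomy] - REGULATION_RANK[regulation]
        if gap > 0:
            gaps.append((name, gap))
    return sorted(gaps, key=lambda item: (-item[1], item[0]))


# The UAS entry follows the text: high autonomy, weak regulation.
print(assurance_gaps({"UAS": ("high", "weak")}))  # [('UAS', 2)]
```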

The final sections articulate challenges for three stakeholder groups. Researchers are urged to develop formal languages for the intentional layer, handle probabilistic uncertainty, and create runtime verification mechanisms. Engineers must integrate formal specification into the design workflow and build automated verification pipelines. Regulators need to define autonomy‑specific certification criteria, align standards with formal specifications, and establish independent bodies capable of evaluating the three‑layer evidence package.

In summary, the paper offers a comprehensive roadmap: it clarifies terminology, critiques existing standards, introduces a layered certification framework, validates it across diverse domains, and outlines concrete research, engineering, and policy actions required to achieve trustworthy autonomous systems. This work serves as a bridge between academic advances in formal verification and the practical needs of industry and regulators seeking to safely deploy autonomous technologies.
