Effects of Persuasive Dialogues: Testing Bot Identities and Inquiry Strategies

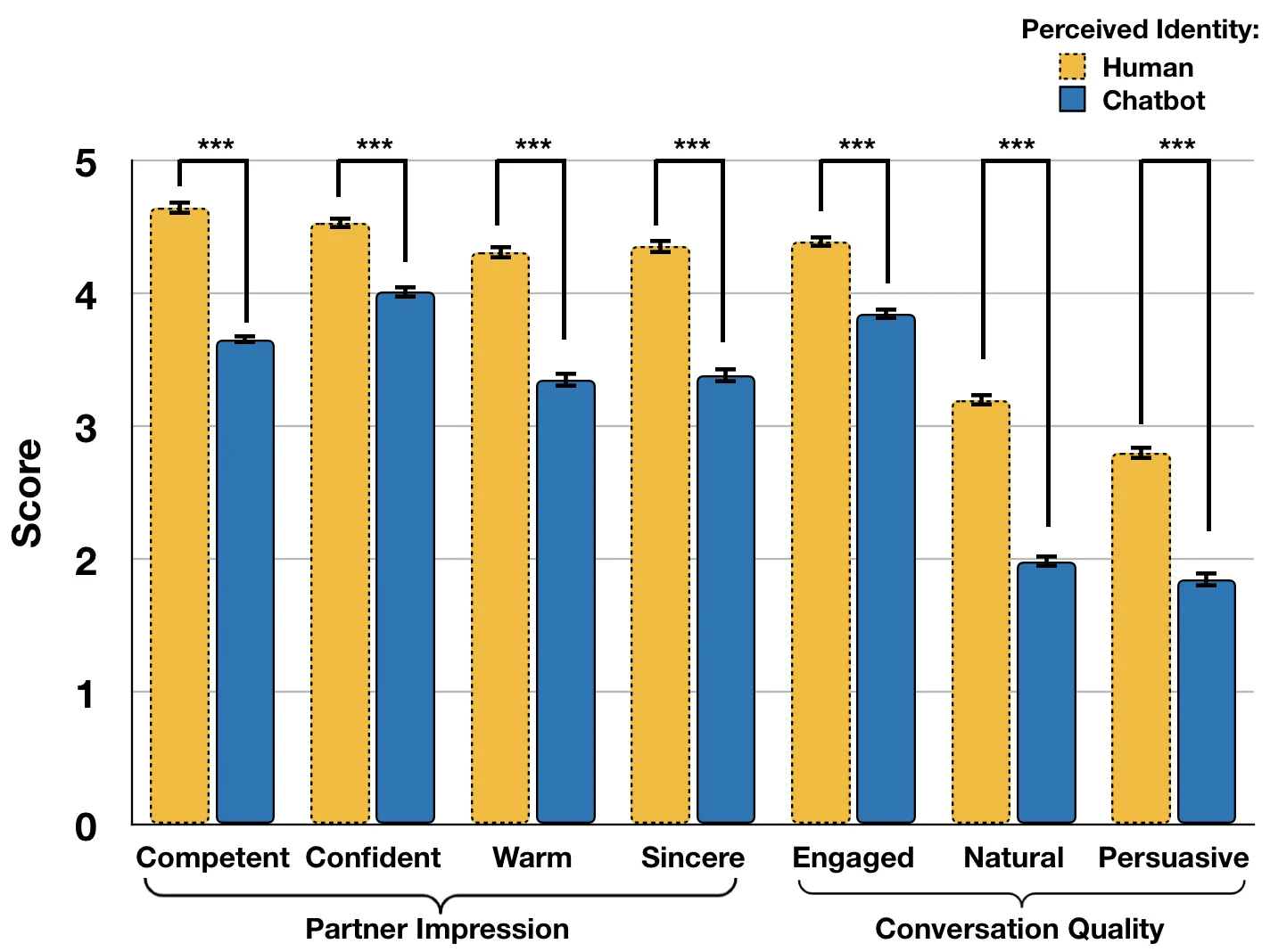

Intelligent conversational agents, or chatbots, can take on various identities and increasingly engage in human-centered conversations with persuasive goals. However, little is known about how identities and inquiry strategies influence a conversation’s effectiveness. We conducted an online study in which 790 participants were persuaded by a chatbot to donate to charity. We designed a two-by-four factorial experiment (two chatbot identities and four inquiry strategies) in which participants were randomly assigned to conditions. Findings showed that the perceived identity of the chatbot had significant effects on the persuasion outcome (i.e., donation) and interpersonal perceptions (i.e., competence, confidence, warmth, and sincerity). Further, we identified interaction effects among perceived identities and inquiry strategies. We discuss the theoretical and practical implications of the findings for developing ethical and effective persuasive chatbots. Our published data, code, and analyses serve as a first step towards building competent, ethical persuasive chatbots.

💡 Research Summary

This paper investigates how a chatbot’s disclosed identity and its inquiry strategy affect persuasive outcomes and interpersonal perceptions in a donation context. The authors built an agenda‑based persuasive dialogue system using the PersuasionForGood dataset, which includes ten persuasive strategies and three types of persuasive inquiries (personal, non‑personal, and mixed). The system consists of three modules: a Natural Language Understanding (NLU) module that classifies user utterances into 14 dialogue acts using a multimodal feature set (text, sentiment, context, and character features); a Dialogue Manager (DM) that selects the next system act based on the user’s act and a pre‑defined agenda; and a Natural Language Generation (NLG) module that renders the selected act as a human‑readable sentence via templates.
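
The three-module flow described above (NLU, then DM, then NLG) can be illustrated with a minimal sketch. This is not the authors' implementation: the keyword rules stand in for their trained 14-act classifier, and the act labels, agenda items, template wording, and the names `classify_act`, `DialogueManager`, `TEMPLATES`, and `respond` are all assumptions made for illustration.

```python
import re
from dataclasses import dataclass

# Hypothetical act labels; the system described above uses 14 dialogue acts.
def classify_act(utterance: str) -> str:
    """Toy NLU: keyword rules standing in for a trained classifier."""
    words = set(re.findall(r"[a-z']+", utterance.lower()))
    if words & {"hello", "hi", "hey"}:
        return "greeting"
    if words & {"no", "not", "never"}:
        return "disagree"
    if words & {"yes", "sure", "ok", "okay"}:
        return "agree"
    return "other"


@dataclass
class DialogueManager:
    """Toy DM: walks a pre-defined agenda, reacting to the user's act."""
    agenda: list          # ordered system acts still to perform (assumed)
    position: int = 0     # index of the next agenda item

    def next_act(self, user_act: str) -> str:
        # A greeting is answered directly and does not consume the agenda.
        if user_act == "greeting":
            return "greet_back"
        # Once every agenda item has been used, close the conversation.
        if self.position >= len(self.agenda):
            return "closing"
        act = self.agenda[self.position]
        self.position += 1
        return act


# Toy NLG: one illustrative template per system act (wording is invented).
TEMPLATES = {
    "greet_back": "Hello! How are you today?",
    "personal_inquiry": "Do you have children of your own?",
    "non_personal_inquiry": "Have you heard of this charity before?",
    "propose_donation": "Would you like to donate part of your payment?",
    "closing": "Thank you for talking with me.",
}


def respond(dm: DialogueManager, utterance: str) -> str:
    """Run one turn: NLU -> DM -> NLG."""
    user_act = classify_act(utterance)
    system_act = dm.next_act(user_act)
    return TEMPLATES[system_act]
```

In this sketch, swapping the agenda list is enough to model different inquiry conditions (for example, an agenda with only `personal_inquiry` before `propose_donation`), which mirrors how an agenda-based design keeps the conversation flow under experimental control.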

A 2 × 4 factorial experiment was conducted with 790 online participants recruited via a crowdsourcing platform. The two identity conditions were (1) a human‑like name “Jessie” (human identity) and (2) the same name with an explicit bot label “Jessie
