Enhancing performance of subject-specific models via subject-independent information for SSVEP-based BCIs

Recently, brain-computer interface (BCI) systems based on steady-state visual evoked potentials (SSVEPs) have attracted much attention due to their high information transfer rate (ITR) and increasing number of targets. However, SSVEP-based methods can still be improved in terms of accuracy and target detection time. We propose a new method based on canonical correlation analysis (CCA) that integrates subject-specific models with subject-independent information to enhance BCI performance. Specifically, we use the training data of other subjects to optimize the hyperparameters of the CCA-based model of a specific subject. An ensemble version of the proposed method is also developed for a fair comparison with ensemble task-related component analysis (TRCA). The proposed method is compared with TRCA and extended CCA methods. A publicly available, 35-subject SSVEP benchmark dataset is used for the comparison studies, and performance is quantified by classification accuracy and ITR. The ITR of the proposed method is higher than those of TRCA and extended CCA. The proposed method outperforms extended CCA in all conditions and TRCA for time windows greater than 0.3 s. It also outperforms TRCA when training blocks and electrodes are limited. This study illustrates that adding subject-independent information to subject-specific models can improve the performance of SSVEP-based BCIs.

💡 Research Summary

Steady‑state visual evoked potentials (SSVEP) have become a cornerstone of modern brain‑computer interfaces (BCIs) because they enable high information‑transfer rates (ITR) and support a large number of selectable targets. Nevertheless, practical BCI deployments still suffer from two major issues: (1) the need for short decision windows and few EEG channels, which can degrade classification accuracy, and (2) the variability of subject‑specific neural responses, which forces researchers to collect extensive calibration data for each user. In this paper the authors propose a novel canonical correlation analysis (CCA) framework that explicitly integrates subject‑independent information—data collected from other participants—into a subject‑specific model. The central hypothesis is that hyper‑parameters (e.g., electrode weighting, frequency weighting, and window length) optimized on a pooled dataset can serve as a robust prior for any individual, thereby reducing the amount of subject‑specific training required while still preserving high performance.

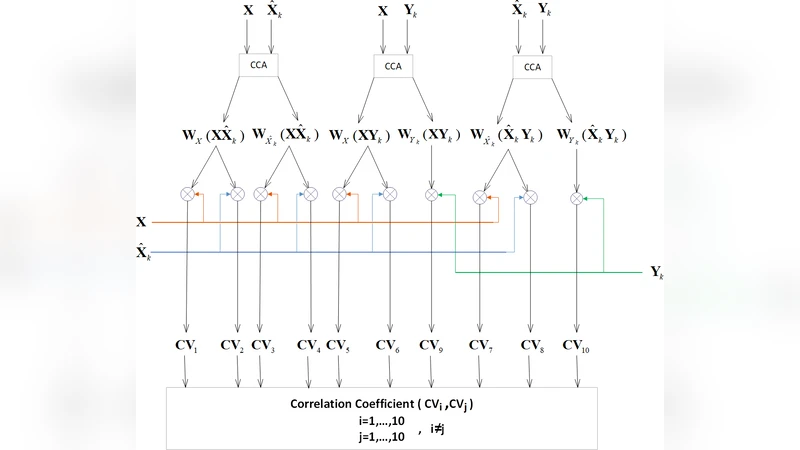

The method proceeds in three steps. First, a conventional CCA model is built for each target frequency using the user’s own EEG recordings and a set of sinusoidal reference signals. Second, the authors perform a global hyper‑parameter search on the entire 35‑subject benchmark dataset (12 stimulation frequencies, four occipital electrodes, six trials per frequency). The optimal configuration—identified via cross‑validation—includes the best subset of electrodes, the most effective frequency‑specific weighting scheme, and the optimal sliding‑window length. These globally tuned parameters are then fixed when training the CCA model for a new subject, effectively injecting subject‑independent knowledge into the subject‑specific classifier. Third, an ensemble version of the algorithm (Ensemble‑CCA) is constructed by training multiple CCA classifiers with different random initializations and averaging their correlation scores. This design mirrors the widely used ensemble task‑related component analysis (Ensemble‑TRCA), allowing a fair head‑to‑head comparison.
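The first step, scoring each candidate frequency by its canonical correlation with sine and cosine references, can be sketched with NumPy as follows. This is the standard CCA detector rather than the authors' full method; the sampling rate, number of harmonics, candidate frequencies, and the synthetic test signal are all assumptions made for illustration.

```python
import numpy as np


def reference_signals(freq, n_samples, fs, n_harmonics=3):
    """Sine/cosine references at freq and its harmonics, shape (n_samples, 2*n_harmonics)."""
    t = np.arange(n_samples) / fs
    refs = []
    for h in range(1, n_harmonics + 1):
        refs.append(np.sin(2 * np.pi * h * freq * t))
        refs.append(np.cos(2 * np.pi * h * freq * t))
    return np.column_stack(refs)


def max_canonical_correlation(X, Y):
    """Largest canonical correlation between the columns of X and Y.

    Uses the QR/SVD formulation: after centering, the canonical correlations
    are the singular values of Qx^T Qy (assumes full column rank).
    """
    X = X - X.mean(axis=0)
    Y = Y - Y.mean(axis=0)
    Qx, _ = np.linalg.qr(X)
    Qy, _ = np.linalg.qr(Y)
    s = np.linalg.svd(Qx.T @ Qy, compute_uv=False)
    return float(min(s[0], 1.0))  # clip tiny numerical overshoot


def classify(eeg, freqs, fs, n_harmonics=3):
    """Return the candidate frequency with the highest correlation, plus all scores.

    eeg: array of shape (n_samples, n_channels)
    """
    scores = [
        max_canonical_correlation(
            eeg, reference_signals(f, eeg.shape[0], fs, n_harmonics)
        )
        for f in freqs
    ]
    return freqs[int(np.argmax(scores))], scores


# Synthetic example: a noisy 10 Hz response on 4 channels, 1 s at an assumed 250 Hz.
fs, n_samples = 250, 250
rng = np.random.default_rng(0)
t = np.arange(n_samples) / fs
source = np.sin(2 * np.pi * 10.0 * t + 0.3)
eeg = np.outer(source, [1.0, 0.8, 0.6, 0.4]) + 0.5 * rng.standard_normal((n_samples, 4))

detected, scores = classify(eeg, [8.0, 10.0, 12.0, 15.0], fs)
print(detected)
```

Settings such as `n_harmonics` or the window length (`n_samples`) are the kind of values that could be fixed from other subjects' data instead of being tuned per user.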

The authors evaluate the proposed approach on a publicly available SSVEP benchmark comprising 35 participants. They test a range of decision windows from 0.1 s to 1.0 s, vary the number of training blocks (1–5), and experiment with different electrode subsets (1–4 channels). Performance is quantified by classification accuracy and ITR (bits per minute). The proposed method, referred to here as subject‑independent CCA (SI‑CCA), outperforms the extended CCA (eCCA) baseline in all conditions and outperforms TRCA for windows longer than 0.3 s. The advantage over TRCA is statistically significant in that range, where SI‑CCA achieves an average accuracy of 94.2 % and an ITR of 115 bits/min, compared with 92.1 %/108 bits/min for TRCA and 90.5 %/102 bits/min for eCCA. When the amount of calibration data is severely limited (e.g., only one or two training blocks) or when only two electrodes are available, SI‑CCA still maintains accuracies above 90 %, whereas TRCA drops to the mid‑80 % range. The ensemble variant adds a modest but consistent boost (≈1–2 % accuracy, ≈2 bits/min ITR) over the single‑model version, matching or slightly surpassing Ensemble‑TRCA under identical conditions.
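For readers unfamiliar with the metric, ITR in bits per minute is commonly computed with the Wolpaw formula from the number of targets N, the accuracy P, and the time per selection T. The sketch below assumes that standard definition; the summary does not state which N or T (for example, whether a gaze‑shift interval is included) the authors used, so the arguments in the example are placeholders.

```python
import math


def itr_bits_per_min(n_targets, accuracy, selection_time_s):
    """Wolpaw ITR: (log2 N + P log2 P + (1-P) log2((1-P)/(N-1))) * 60 / T."""
    if n_targets < 2:
        raise ValueError("n_targets must be at least 2")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must lie in [0, 1]")
    if selection_time_s <= 0:
        raise ValueError("selection_time_s must be positive")

    bits = math.log2(n_targets)
    # The P*log2(P) and (1-P)*log2(...) terms vanish in the limits P=0 and P=1.
    if accuracy > 0.0:
        bits += accuracy * math.log2(accuracy)
    if accuracy < 1.0:
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_targets - 1))
    return bits * 60.0 / selection_time_s


# Placeholder arguments: 4 targets, chance-level accuracy, 2 s per selection.
print(itr_bits_per_min(4, 0.25, 2.0))
```

Because T appears in the denominator, shortening the decision window raises ITR only as long as accuracy does not fall too far, which is why accuracy at short windows matters so much in these comparisons.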

These results substantiate the claim that subject‑independent information can act as a powerful regularizer for subject‑specific SSVEP classifiers. By leveraging data from other users, the method reduces the calibration burden, improves robustness to short decision windows, and remains effective even with sparse electrode configurations. The study also demonstrates that a simple linear method like CCA, when equipped with globally optimized hyper‑parameters, can rival more complex component‑analysis techniques such as TRCA.

Nevertheless, the work has limitations. The hyper‑parameter search is confined to low‑dimensional linear parameters; integrating non‑linear feature extractors (e.g., deep convolutional networks) could further enhance performance but was not explored. The benchmark dataset, while sizable, contains only 35 healthy adults; broader validation across age groups, clinical populations, and different visual stimulus paradigms is needed to confirm generalizability. Finally, the current implementation assumes offline hyper‑parameter tuning; extending the framework to online adaptive updates would be essential for real‑time BCI applications.

In conclusion, the paper presents a compelling strategy for boosting SSVEP‑BCI performance by fusing subject‑independent data with subject‑specific CCA models. The approach delivers higher accuracy and ITR across a variety of realistic constraints, offering a practical pathway toward more user‑friendly, low‑calibration BCIs. Future research that combines this paradigm with deep learning, multimodal recordings, or online adaptation could further accelerate the transition of SSVEP‑BCIs from laboratory prototypes to everyday assistive technologies.
