Frosting Weights for Better Continual Training

Training a neural network model can be a lifelong learning process and is a computationally intensive one. A severe adverse effect that may occur in deep neural network models is that they can suffer from catastrophic forgetting during retraining on …

Authors: Xiaofeng Zhu, Feng Liu, Goce Trajcevski, Dingding Wang

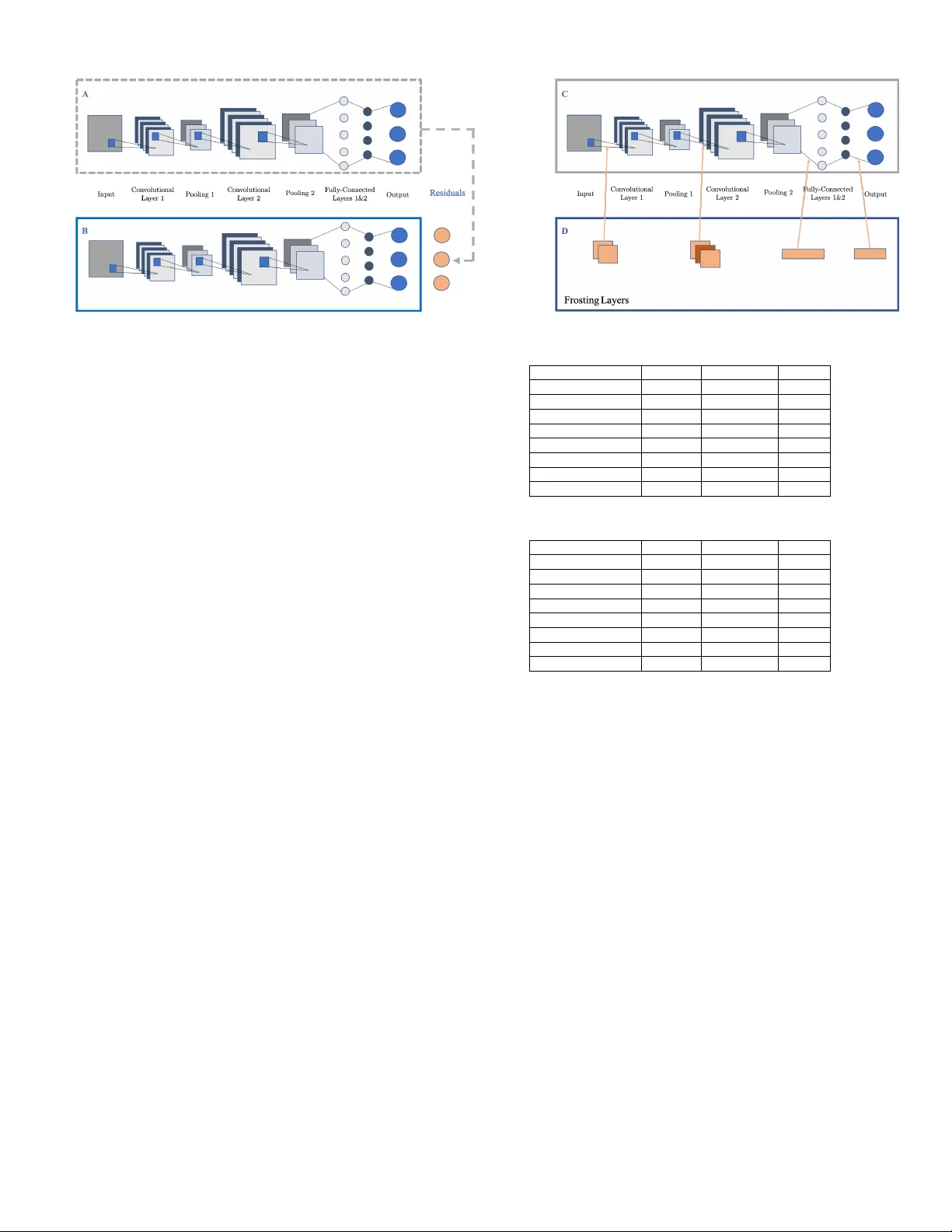

Xiaofeng Zhu, Department of CS, Northwestern University, Evanston, USA, xiaofengzhu2013@u.northwestern.edu

Feng Liu, Department of CEECS, Florida Atlantic University, Boca Raton, USA, fliu2016@fau.edu

Goce Trajcevski, Department of ECpE, Iowa State University, Ames, USA, gocet25@iastate.edu

Dingding Wang, Department of CEECS, Florida Atlantic University, Boca Raton, USA, wangd@fau.edu

Abstract: Training a neural network model can be a lifelong learning process and is a computationally intensive one. A severe adverse effect that may occur in deep neural network models is that they can suffer from catastrophic forgetting during retraining on new data. To avoid such disruptions in the continuous learning, one appealing property is the additive nature of ensemble models. In this paper, we propose two generic ensemble approaches, gradient boosting and meta-learning, to solve the catastrophic forgetting problem in tuning pre-trained neural network models.

Index Terms: incremental learning, continual learning, meta-learning, ensemble models

I. INTRODUCTION

With stationary training resources and various advanced neural network structures, deep learning models have exceeded human performance in many areas. However, a well-known limitation of deep learning models is the so-called "catastrophic forgetting." That is, while acquiring new knowledge, the models may forget the knowledge learned in the past. In other words, after tuning a pre-trained model with new data, the model can have a poor performance on patterns learned from old data. In this paper, we investigate the possibility of a model to generalize from old patterns and new patterns.

Towards formalizing the setting of our study, we assume that we have a model with a generic loss function, and it has been well-trained on a big dataset.
Our aim is that, upon presenting a new dataset, the model can be adjusted so that it handles the new dataset well while not forgetting the patterns learned from the old dataset. In many real-world systems, new and old data are commonly stored in a buffered manner, where the model always has access to a roughly fixed size of training data. This fact is at the core of what differentiates our model retraining from traditional continual learning with bounded computational complexity. In our study, the old and the new datasets share the same prediction space. For instance, the number of classes in the two datasets is the same, but the background and object angles may differ.

The issue of catastrophic forgetting in neural networks has been addressed in the literature (cf. [11], [20]). Once again, the source of the problem is that when neural networks are trained continually on datasets with different feature distributions and/or trained for different objectives, the knowledge learned in an old training session can be lost in a new training session [60].

(Xiaofeng Zhu and Feng Liu contributed equally to this work. Xiaofeng Zhu is the corresponding author.)

We note that our study aligns closely to continual/incremental learning and multi-task/sequential learning. Continual learning focuses on tuning a model with continual new data without forgetting old knowledge [1], [24], [36], [45], [50]. Compared to continual learning, multi-task learning focuses on more dissimilar tasks [14], [42], [43], [64], e.g., incremental class learning [13], [19], [23], [38], [41], where different tasks have different classes. Moreover, different tasks usually only have access to new data [4] or just a small portion of old data [28].
From the training objective aspect, multi-task learning solves task-specific problems: parameter space shifting using priors [22], [35], [53], path traversal among different tasks [10], [28], parameter sharing in different tasks [34], and hard attentions [48] or masks [30] for switching tasks. Their contributions address challenges in different experimental settings [31], such as how data in different tasks are distributed, how tasks are ordered [33], [52], and how performance should be evaluated among a sequence of tasks.

Our study may be perceived as a special case of continual learning; however, we note that our primary focus and data setting differ from continual learning and, especially, sequential learning. Our retraining models aim to have good performance on both new and old data. In continual learning and sequential learning, a model can only access new data. However, when tuning a pre-trained model in real-world systems, the model should access some old data to guarantee adequate retraining. It may not be feasible in the long run to train on the union of all old data and new data. In our study, old data and new data are stored in a buffered manner. Given limited computation resources that can process a fixed capacity of data, we feed the same full capacity of training data during training or retraining. In every future retraining process, a trained model is tuned on a fixed-size dataset, which is composed of the new dataset and a subset of the old dataset.

We boost the performance of a trained model by gradient boosting and by learning a meta-network. The two methods are generic and can be applied to any neural network models and loss functions. Our major contributions are that (1) we propose an additive ensemble model that can tune a pre-trained model based on its performance gap, and (2) we further propose a meta-network model that can balance new patterns and old patterns and eventually keep the number of parameters unchanged.

II. RELATED WORK

We now detail the major directions of continual learning: regularization, ensemble methods, and memory consolidation.

A. Regularization

Regularization is the most common way of solving catastrophic forgetting via approaches that measure the importance of weights of a trained model. The elastic weight consolidation (EWC) algorithm proposed in [20] uses the Fisher matrix to represent weight importance. A regularization term is added to the original loss function to constrain important weights to stay close to their old values when retraining on a new session/task. Despite the usefulness of the Fisher matrix, the diagonal Fisher matrix assumption may not hold; therefore, approximations of the Fisher matrix [27], [46], [56], [63] and additional KL-divergence constraints [5] were applied in later studies. Besides the Fisher matrix, gradient magnitude [2], unsupervised self-organizing maps [21], and the Hessian matrix [62] are common techniques for measuring weight importance.

In particular, the memory aware synapses (MAS) model [2] has become a new widely-used benchmark after EWC. MAS uses the gradient magnitude of network outputs with regard to weights to measure weight importance. In contrast, the Fisher matrix in EWC is based on the loss and the weights. When outputs are multi-dimensional, as in classification tasks, MAS calculates the squared l2 norm of outputs. An improvement over EWC and MAS by adding an additional regularization term on neurons was presented in [4], proposing that sparsity at the neuron level was also important. We show in our experiments that our ensemble models perform better than this regularization strategy.

B. Ensemble Methods

Intuitively, ensemble models try to solve catastrophic forgetting by training multiple sub-networks [8], [9], [15], [29], [37], [57], [61]. Ren et al. [39] and Juefei-Xu et al. [17] made breakthroughs by proposing dynamic ways of constructing an ensemble model by tuning a sub-network of a pre-trained model or expanding a pre-trained model. The general limitation is that the memory usage scales up with the number of training sessions.

Related meta-learning works generally focus on learning network architectures in order to adjust a pre-trained model to be robust on different datasets and tasks [16], [17], [26], [44], [58], [59]. For instance, knowledge distillation [15] tries to distill knowledge learned from a large (teacher) network to a small (student) network. The goal is to make the teacher network and the student network behave as similarly as possible. However, our goal is to learn a more generalized network continually, being able to fix errors in the old trained model by acquiring new data.

C. Memory Consolidation

Memory consolidation solves catastrophic forgetting from the data aspect. Memory consolidation models [6], [7], [18], [19], [28], [32], [51] learn to store the patterns/memories of different training sessions by strategically sampling a subset of the old data. Generative Adversarial Networks (GANs) are suitable in nature for learning networks [3], [25], [40], [47], [49], [54], [55] in an incremental way. A generator trained on old data can be used in a new training session for replaying old learning experiences. Our study solves a more generic issue from the modeling aspect, and memory consolidation can be applied to our models for providing a reasonable data buffer.

III. PROPOSED MODELS

In this section, we present two ways of "frosting" weights for better retraining. One is based on classical gradient boosting, the other is based on neural network meta-learning.

A. Frosting Network Weights by One-step Gradient Boosting

We train our proposed network, BoostNet, which can be simpler than or the same as the trained network.
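
As a rough illustration of the one-step boosting procedure this subsection describes, the sketch below freezes a base model, computes the softmax residuals y − p on a retraining set, fits a second model to those residuals with a squared-error loss, and sums the two outputs at inference. It is a minimal pure-Python sketch under stated assumptions, not the authors' implementation: both models are single linear layers, and the names `residuals`, `train_boostnet`, `predict`, the learning rate, and the epoch count are hypothetical choices made for illustration.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def linear(weights, x):
    """Linear layer without bias: one weight row per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def residuals(base_logits, one_hot):
    """Negative gradient of softmax cross-entropy w.r.t. the logits: y - p."""
    p = softmax(base_logits)
    return [y - pi for y, pi in zip(one_hot, p)]


def train_boostnet(base_w, data, n_classes, n_features,
                   lr=0.1, epochs=200, seed=0):
    """Fit a linear BoostNet to the frozen base model's residuals (MSE loss).

    Assumption: BoostNet is a single linear layer trained by plain SGD.
    base_w is only read, never updated.
    """
    rng = random.Random(seed)
    boost_w = [[rng.uniform(-0.01, 0.01) for _ in range(n_features)]
               for _ in range(n_classes)]
    for _ in range(epochs):
        for x, y in data:
            target = residuals(linear(base_w, x), y)  # regression "labels"
            pred = linear(boost_w, x)
            for k in range(n_classes):
                err = pred[k] - target[k]  # gradient of 0.5 * (pred - target)^2
                for j in range(n_features):
                    boost_w[k][j] -= lr * err * x[j]
    return boost_w


def predict(base_w, boost_w, x):
    """Inference: sum base and BoostNet outputs, then take the arg-max."""
    summed = [b + c for b, c in zip(linear(base_w, x), linear(boost_w, x))]
    return max(range(len(summed)), key=summed.__getitem__)
```

With a toy base model such as `base_w = [[2.0, 0.0], [-2.0, 0.0]]`, the old pattern `[1.0, 0.0]` is already classified confidently, so its residuals are near zero, while the new pattern `[0.0, 1.0]` produces tied logits and residuals of ±0.5 that BoostNet learns to supply.
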
We first apply a retraining dataset, composed of new training data and a subset of the old training data, to the trained model and calculate the model outputs and "residuals" [12]. Let $L$ denote the softmax cross-entropy loss function, $o_i$ the output of the $i$th neuron before the softmax layer, $p_i$ the softmax probability of the $i$th neuron, and $y_i$ the one-hot label. The residuals are calculated as the negative gradient $-\frac{\partial L}{\partial o_i} = y_i - p_i$, measuring how much the trained model needs to improve in order to perform well on the retraining dataset. It is very likely that the residual values are close to 0 for the old training data, since the loss was originally minimized, and that the residual values for the new training data are relatively larger. The weights of the trained model stay unchanged. Figure 1a illustrates this training procedure.

The BoostNet takes the residuals as the training targets ("labels"). The loss function for the simpler model is the mean squared error (MSE) loss. In the end, we sum up the outputs of the old trained model and the outputs of the BoostNet model for future inference. This method does not change the trained model but adds new weights, which come from the simpler model. The limitation is that the number of total weights increases every time a new dataset comes in. While in knowledge distillation (where a student model is trained to mimic the behavior of the teacher model) a base network was also trained and kept frozen [15], BoostNet aims to have different training targets, i.e., errors from former learners.

B. Frosting Network Weights by Meta-learning

We now explain our second ensemble model, which can keep the number of parameters unchanged for inference. We add "frosting" layers for weights in a pre-trained model by taking weights as "neurons." More specifically, we train a meta-network for weights in addition to the trained network.
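
A minimal sketch of the frosting idea for a single linear layer may help fix the mechanics this subsection describes: a frosting layer with the same shape as the weights, joint updates of both, and a final merge by element-wise product. Several details here are assumptions rather than the authors' choices: the frosting weights `F` start at 1 so the frosted layer initially equals the pre-trained one, the loss is squared error, the default activation is the identity, and all function names are hypothetical.

```python
def effective_weights(W, F, act=None):
    """Element-wise product of base weights and (optionally activated) frosting weights."""
    g = act if act is not None else (lambda v: v)
    return [[w * g(f) for w, f in zip(w_row, f_row)]
            for w_row, f_row in zip(W, F)]


def forward(W, x):
    """Linear layer without bias."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def frosted_forward(W, F, x, act=None):
    """Forward pass through the base layer modulated by its frosting layer."""
    return forward(effective_weights(W, F, act), x)


def sgd_step(W, F, x, target, lr=0.05):
    """One squared-error SGD step that updates BOTH W and F in place.

    Assumes the identity activation, so out_k = sum_j W[k][j] * F[k][j] * x[j].
    """
    out = frosted_forward(W, F, x)
    for k in range(len(W)):
        delta = out[k] - target[k]
        for j in range(len(x)):
            grad_w = delta * F[k][j] * x[j]  # d(loss)/dW[k][j]
            grad_f = delta * W[k][j] * x[j]  # d(loss)/dF[k][j]
            W[k][j] -= lr * grad_w
            F[k][j] -= lr * grad_f


def merge(W, F, act=None):
    """Fold the frosting layer into the base weights; F can then be discarded."""
    return effective_weights(W, F, act)
```

Because `merge` computes the same products that `frosted_forward` uses, a plain `forward` pass on the merged weights reproduces the frosted outputs, and the merged matrix has the same shape as `W`. Passing `act=math.tanh` would give a tanh-activated variant, although `sgd_step` as written covers only the identity case.
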
Both the weights in the meta-network and the weights in the base network are optimized during retraining. We have attempted freezing the weights in the base network, but optimizing both networks yielded faster convergence. Each frosting layer is of the same shape as its corresponding weights. In the end, the frosting layers are merged with the original weights so that the total number of weights is still unchanged. The merging operation is done by replacing the weights in the base network with the product of those weights and the weights in the corresponding frosting layer, after which the frosting layers are discarded. The base network with the updated weights is used for inference; thus, there is no performance loss after the merging operation. We call this ensemble model FrostNet, and it is illustrated in Figure 1b.

We have attempted common activation functions such as tanh and ReLU; tanh had a better performance compared to ReLU in our experiments. Although ReLU has many good properties when applied to neurons, we do not find that it contributes to our frosting layer activation. Regardless, we have found that simply applying the frosting layers to trained weights without any activation functions already outperformed the benchmark models in our experiments.

[Figure 1: (a) BoostNet; (b) FrostNet]

IV. EXPERIMENTS

We now discuss the experiments used in evaluating the benefits of the proposed approaches.

A. Datasets

We use the MNIST and the CIFAR-10 datasets for comparing our BoostNet and FrostNet models with benchmark models. Similar to the data variation experiments in continual learning, we create a retraining dataset from the training set of MNIST or CIFAR-10 by taking a random half of the original training set and augmenting the other half.
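
One way to realize this half-original, half-augmented mix is to draw the augmented half afresh inside every mini-batch. The sketch below is illustrative only: `make_retraining_batch` is a hypothetical name, `augment` is a placeholder for whatever image transform is applied, and `shift_right` is a toy stand-in rather than the augmentation used in the experiments.

```python
import random


def make_retraining_batch(batch, augment, rng=random):
    """Return a same-size batch in which a random half of the items is augmented."""
    n = len(batch)
    chosen = set(rng.sample(range(n), n // 2))  # indices to augment
    return [augment(item) if i in chosen else item
            for i, item in enumerate(batch)]


def shift_right(image, pixels=1):
    """Toy augmentation: shift each row of a 2-D list to the right, zero-filling."""
    return [[0] * pixels + row[:len(row) - pixels] for row in image]
```

Because the indices are resampled on each call, the same example can appear in original form in one epoch and augmented in another, while the batch size stays fixed.
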
The random half is taken by sampling each mini-batch during retraining instead of keeping it fixed before retraining. We obtain the augmented validation set using the same method. As a result, the sizes of the training and the validation sets stay unchanged between the initial training and retraining. We augment the entire test set as the new test set. The augmentations were conducted on the original training, validation, and test data separately to avoid data leakage. The augmentation factors are randomly rotating images by 45 degrees (clockwise and counterclockwise), shifting images by 20 percent (left and right), and zooming in by 80-90 percent. We have found that a well-trained model on the original MNIST or CIFAR-10 performs poorly on this augmentation setting.

Similarly to incremental class experiments in sequential learning, we split both the original MNIST and CIFAR-10 training datasets into two parts: the first part has classes 0-4; the second part has classes 5-9. We use the first part for training a LeNet model. We then take a random half of the first and the second parts during retraining. The original validation and test datasets are split into two parts under the same setting. Finally, we obtain a retraining dataset that is of the same size as the initial training dataset.

TABLE I: Variational MNIST

| Model | Original | Augmented | Total |
|---|---|---|---|
| Base | 0.9921 | 0.2829 | 0.6352 |
| Train-from-scratch | 0.9870 | 0.7547 | 0.8699 |
| Fine-tuning | 0.9921 | 0.2662 | 0.6267 |
| EWC | 0.9890 | 0.8117 | 0.8995 |
| MAS | 0.9889 | 0.6907 | 0.8385 |
| Selfless | 0.9860 | 0.3036 | 0.6430 |
| BoostNet | 0.9891 | 0.8830 | 0.9352 |
| FrostNet | 0.9920 | 0.9012 | 0.9818 |

TABLE II: Variational CIFAR-10

| Model | Original | Augmented | Total |
|---|---|---|---|
| Base | 0.6599 | 0.3013 | 0.4810 |
| Train-from-scratch | 0.6077 | 0.4309 | 0.5155 |
| Fine-tuning | 0.6207 | 0.4463 | 0.5200 |
| EWC | 0.6191 | 0.4640 | 0.5415 |
| MAS | 0.6094 | 0.4363 | 0.5230 |
| Selfless | 0.6198 | 0.3397 | 0.4795 |
| BoostNet | 0.6363 | 0.4580 | 0.5426 |
| FrostNet | 0.6456 | 0.4646 | 0.5552 |

B. Experimental Settings

We compare our models to three baseline models: the initial LeNet model (base), training a new model (train-from-scratch), and fine-tuning from pre-trained weights (fine-tuning); and to three state-of-the-art models: EWC, MAS, and Selfless [4]. We use LeNet with batch normalization as the network framework. The pre-trained weights from the base model are used for measuring weight and neuron importance for EWC, MAS, and Selfless. All benchmark models are tuned based on the optimized weights from the base LeNet model. We use the truncated normal distribution for weight initialization for all network models. All models are trained for at most 50 epochs, with early stopping after 10 consecutive epochs. The weights that have the highest accuracy and, secondarily, the lowest loss value (if the accuracy does not improve) on the validation dataset are used for inference. We report the accuracy on the corresponding test datasets in Tables I-IV. Boldface indicates the highest value in each column except the base model. We publish the code for our experiments on GitHub¹.

TABLE III: Split MNIST

| Model | Classes 0-4 | Classes 5-9 | Total |
|---|---|---|---|
| Base | 0.9984 | 0.0000 | 0.4992 |
| Train-from-scratch | 0.9957 | 0.9562 | 0.9760 |
| Fine-tuning | 0.9953 | 0.9626 | 0.9790 |
| EWC | 0.9949 | 0.9562 | 0.9756 |
| MAS | 0.9916 | 0.9008 | 0.9462 |
| Selfless | 0.9940 | 0.9074 | 0.9507 |
| BoostNet | 0.9973 | 0.9628 | 0.9800 |
| FrostNet | 0.9963 | 0.9665 | 0.9814 |

TABLE IV: Split CIFAR-10

| Model | Classes 0-4 | Classes 5-9 | Total |
|---|---|---|---|
| Base | 0.7590 | 0.0000 | 0.3795 |
| Train-from-scratch | 0.4359 | 0.6518 | 0.5439 |
| Fine-tuning | 0.6010 | 0.5639 | 0.5824 |
| EWC | 0.5921 | 0.5520 | 0.5721 |
| MAS | 0.6402 | 0.5184 | 0.5230 |
| Selfless | 0.6535 | 0.4344 | 0.5440 |
| BoostNet | 0.6252 | 0.5791 | 0.6022 |
| FrostNet | 0.6498 | 0.5693 | 0.6096 |

V. RESULTS

We average the accuracy of each model using three runs. Tables I-II summarize the performance of the data variation experiments using the baseline models, the benchmark models, and our ensemble models.
The base model has the highest accuracy on the original test datasets and the lowest accuracy on the augmented test datasets. Due to early stopping and data augmentation, fine-tuning and benchmark models do not necessarily outperform train-from-scratch models. All the models except the base one suffer from catastrophic forgetting to some degree, as their accuracy on an original test dataset is lower than that of the base model. All benchmark models have higher accuracy on the augmented dataset compared to the baseline models. EWC, MAS, and Selfless can prevent a trained model from forgetting old knowledge, but their common limitation is that they have trouble learning new knowledge. Our FrostNet model performs best among all compared models, and our BoostNet comes second. The FrostNet model yields the highest accuracy on the original datasets and the augmented datasets, as it uses a network to learn how to adjust weights. The BoostNet model learns from performance gaps and pushes its weights to minimize the gaps.

Tables III-IV summarize the incremental class experiments. The base model has the highest accuracy on the datasets of classes (0-4) and the lowest accuracy on the ones of classes (5-9). Since the initial training dataset only has classes (0-4), the trained base model cannot predict any data from classes (5-9). Training from scratch on the retraining dataset shows noticeable catastrophic forgetting on the initial training dataset. Although EWC, MAS, and especially Selfless models have an advantage in reducing catastrophic forgetting, they have inferior results on the new classes (5-9). Our BoostNet and FrostNet models can balance memorizing old knowledge and learning new knowledge, with a good overall performance.

¹ https://github.com/XiaofengZhu/frosting_weights

VI. CONCLUSION

In this paper, we propose two generic ensemble methods for boosting the performance of retraining.
Regarding parameter capacity, BoostNet slowly adds a small number of parameters to a pre-trained model, similar to adding new trees in random forest modeling. Although FrostNet adds frosting layers for trained weights, the merging operation brings the number of total parameters down to the original size. Our work can be extended to other fields, such as general continual learning and network compression. Our work can be improved by taking the time series characteristic of training into account to further boost the performance. An interesting future direction would be strategically selecting training data. Another interesting direction would be selecting synapse paths from a sophisticated network model trained for a large domain to specialize for small domains.

REFERENCES

[1] D. Albesano, R. Gemello, P. Laface, F. Mana, and S. Scanzio. Adaptation of artificial neural networks avoiding catastrophic forgetting. In Proceedings of the 2006 IEEE International Joint Conference on Neural Network, pages 1554–1561. IEEE, 2006.

[2] R. Aljundi, F. Babiloni, M. Elhoseiny, M. Rohrbach, and T. Tuytelaars. Memory aware synapses: Learning what (not) to forget. In Proceedings of the European Conference on Computer Vision (ECCV), pages 139–154, 2018.

[3] R. Aljundi, L. Caccia, E. Belilovsky, M. Caccia, M. Lin, L. Charlin, and T. Tuytelaars. Online continual learning with maximally interfered retrieval. 2019.

[4] R. Aljundi, M. Rohrbach, and T. Tuytelaars. Selfless sequential learning. arXiv preprint arXiv:1806.05421, 2018.

[5] A. Chaudhry, P. K. Dokania, T. Ajanthan, and P. H. Torr. Riemannian walk for incremental learning: Understanding forgetting and intransigence. In Proceedings of the European Conference on Computer Vision (ECCV), pages 532–547, 2018.

[6] A. Chaudhry, M. Ranzato, M. Rohrbach, and M. Elhoseiny. Efficient lifelong learning with a-gem. arXiv preprint, 2018.

[7] A. Chaudhry, M. Rohrbach, M. Elhoseiny, T. Ajanthan, P. K. Dokania, P. H. Torr, and M. Ranzato. Continual learning with tiny episodic memories. arXiv preprint arXiv:1902.10486, 2019.

[8] G. Chen, W. Choi, X. Yu, T. Han, and M. Chandraker. Learning efficient object detection models with knowledge distillation. In Advances in Neural Information Processing Systems, pages 742–751, 2017.

[9] W. Dai, Q. Yang, G.-R. Xue, and Y. Yu. Boosting for transfer learning. In International Conference on Machine Learning. IEEE, 2007.

[10] C. Fernando, D. Banarse, C. Blundell, Y. Zwols, D. Ha, A. A. Rusu, A. Pritzel, and D. Wierstra. Pathnet: Evolution channels gradient descent in super neural networks. CoRR, abs/1701.08734, 2017.

[11] R. M. French. Catastrophic forgetting in connectionist networks. Trends in cognitive sciences, 3(4):128–135, 1999.

[12] J. H. Friedman. Greedy function approximation: a gradient boosting machine. Annals of statistics, pages 1189–1232, 2001.

[13] T. Ganegedara, L. Ott, and F. Ramos. Lifelong learning with structurally adaptive cnns. International Conference on Machine Learning, 2017.

[14] P. Henderson, W.-D. Chang, F. Shkurti, J. Hansen, D. Meger, and G. Dudek. Benchmark environments for multitask learning in continuous domains. International Conference on Machine Learning, 2017.

[15] G. Hinton, O. Vinyals, and J. Dean. Distilling the knowledge in a neural network. arXiv preprint, 2015.

[16] K. Javed and M. White. Meta-learning representations for continual learning. arXiv preprint arXiv:1905.12588, 2019.

[17] F. Juefei-Xu, V. N. Boddeti, and M. Savvides. Perturbative Neural Networks. In IEEE Computer Vision and Pattern Recognition (CVPR), June 2018.

[18] N. Kamra, U. Gupta, and Y. Liu. Deep generative dual memory network for continual learning. abs/1710.10368, 2017.

[19] R. Kemker and C. Kanan. Fearnet: Brain-inspired model for incremental learning. International Conference on Learning Representations (ICLR), 2017.

[20] J. Kirkpatrick, R. Pascanu, N. Rabinowitz, J. Veness, G. Desjardins, A. A. Rusu, K. Milan, J. Quan, T. Ramalho, A. Grabska-Barwinska, et al. Overcoming catastrophic forgetting in neural networks. Proceedings of the national academy of sciences, 114(13):3521–3526, 2017.

[21] T. Kohonen. The self-organizing map. Proceedings of the IEEE, 78(9):1464–1480, 1990.

[22] S.-W. Lee, J.-H. Kim, J. Jun, J.-W. Ha, and B.-T. Zhang. Overcoming catastrophic forgetting by incremental moment matching. In Advances in neural information processing systems, pages 4652–4662, 2017.

[23] Y. Li, Z. Li, L. Ding, P. Yang, Y. Hu, W. Chen, and X. Gao. Supportnet: solving catastrophic forgetting in class incremental learning with support data. CoRR, abs/1806.02942, 2018.

[24] Z. Li and D. Hoiem. Learning without forgetting. IEEE transactions on pattern analysis and machine intelligence, 40(12):2935–2947, 2017.

[25] K. J. Liang, C. Li, G. Wang, and L. Carin. Generative adversarial network training is a continual learning problem, 2019.

[26] M. Lin, Q. Chen, and S. Yan. Network in network. arXiv preprint arXiv:1312.4400, 2013.

[27] X. Liu, M. Masana, L. Herranz, J. Van de Weijer, A. M. Lopez, and A. D. Bagdanov. Rotate your networks: Better weight consolidation and less catastrophic forgetting. In 2018 24th International Conference on Pattern Recognition (ICPR), pages 2262–2268. IEEE, 2018.

[28] D. Lopez-Paz et al. Gradient episodic memory for continual learning. In Advances in Neural Information Processing Systems, pages 6467–6476, 2017.

[29] M.-T. Luong, Q. V. Le, I. Sutskever, O. Vinyals, and L. Kaiser. Multi-task sequence to sequence learning. International Conference on Learning Representations (ICLR), 2016.

[30] A. Mallya and S. Lazebnik. Packnet: Adding multiple tasks to a single network by iterative pruning. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 7765–7773, 2018.

[31] D. Maltoni and V. Lomonaco. Continuous learning in single-incremental-task scenarios. CoRR, abs/1806.08568, 2018.

[32] S. V. Mehta, B. Paranjape, and S. Singh. Evaluating influence functions for memory replay in continual learning. 2019.

[33] E. Meyerson and R. Miikkulainen. Beyond shared hierarchies: Deep multitask learning through soft layer ordering. International Conference on Learning Representations (ICLR), 2018.

[34] E. Meyerson and R. Miikkulainen. Pseudo-task augmentation: From deep multitask learning to intratask sharing - and back. 2018.

[35] C. V. Nguyen, Y. Li, T. D. Bui, and R. E. Turner. Variational continual learning. In International Conference on Learning Representations, 2018.

[36] G. I. Parisi, R. Kemker, J. L. Part, C. Kanan, and S. Wermter. Continual lifelong learning with neural networks: A review. CoRR, abs/1802.07569, 2018.

[37] R. Polikar, L. Upda, S. S. Upda, and V. Honavar. Learn++: An incremental learning algorithm for supervised neural networks. IEEE transactions on systems, man, and cybernetics, part C (applications and reviews), 31(4):497–508, 2001.

[38] S.-A. Rebuffi, A. Kolesnikov, G. Sperl, and C. H. Lampert. icarl: Incremental classifier and representation learning. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 2001–2010, 2017.

[39] H. W. J. L. Ren, Boya and H. Gao. Life-long learning based on dynamic combination model. 2017.

[40] A. Rios and L. Itti. Closed-loop GAN for continual learning. CoRR, abs/1811.01146, 2018.

[41] D. Roy, P. Panda, and K. Roy. Tree-cnn: a hierarchical deep convolutional neural network for incremental learning. arXiv preprint arXiv:1802.05800, 2018.

[42] S. Ruder. An overview of multi-task learning in deep neural networks. CoRR, abs/1706.05098, 2017.

[43] A. A. Rusu, N. C. Rabinowitz, G. Desjardins, H. Soyer, J. Kirkpatrick, K. Kavukcuoglu, R. Pascanu, and R. Hadsell. Progressive neural networks. arXiv preprint, 2016.

[44] A. A. Rusu, N. C. Rabinowitz, G. Desjardins, H. Soyer, J. Kirkpatrick, K. Kavukcuoglu, R. Pascanu, and R. Hadsell. Progressive neural networks. CoRR, abs/1606.04671, 2016.

[45] P. Ruvolo and E. Eaton. Ella: An efficient lifelong learning algorithm. In International Conference on Machine Learning, pages 507–515, 2013.

[46] J. Schwarz, J. Luketina, W. M. Czarnecki, A. Grabska-Barwinska, Y. W. Teh, R. Pascanu, and R. Hadsell. Progress & compress: A scalable framework for continual learning. 2018.

[47] A. Seff, A. Beatson, D. Suo, and H. Liu. Continual learning in generative adversarial nets. CoRR, abs/1705.08395, 2017.

[48] J. Serrà, D. Surís, M. Miron, and A. Karatzoglou. Overcoming catastrophic forgetting with hard attention to the task. arXiv preprint arXiv:1801.01423, 2018.

[49] H. Shin, J. K. Lee, J. Kim, and J. Kim. Continual learning with deep generative replay. In Advances in Neural Information Processing Systems, pages 2990–2999, 2017.

[50] D. L. Silver, Q. Yang, and L. Li. Lifelong machine learning systems: Beyond learning algorithms. In 2013 AAAI spring symposium series, 2013.

[51] S. Sodhani, S. Chandar, and Y. Bengio. On training recurrent neural networks for lifelong learning. International Conference on Machine Learning, 2019.

[52] T. Standley, A. R. Zamir, D. Chen, L. Guibas, J. Malik, and S. Savarese. Which tasks should be learned together in multi-task learning? International Conference on Machine Learning, 2019.

[53] S. Swaroop, C. V. Nguyen, T. D. Bui, and R. E. Turner. Improving and understanding variational continual learning. arXiv:1905.02099 [cs, stat], 2019.

[54] C. Sübakan, M. Caccia, T. Lesort, and L. Charlin. Continual learning of generative models with maximum entropy generative replay. 2019.

[55] H. Thanh-Tung, T. Tran, and S. Venkatesh. On catastrophic forgetting and mode collapse in generative adversarial networks. CoRR, abs/1807.04015, 2018.

[56] M. Tu, V. Berisha, M. Woolf, J.-s. Seo, and Y. Cao. Ranking the parameters of deep neural networks using the fisher information. In Proceedings of 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 2647–2651. IEEE, 2016.

[57] C. Wang, X. Lan, and Y. Zhang. Model distillation with knowledge transfer from face classification to alignment and verification. arXiv preprint arXiv:1709.02929, 2017.

[58] J. Xu and Z. Zhu. Reinforced continual learning. In Advances in Neural Information Processing Systems, pages 899–908, 2018.

[59] J. Yoon, E. Yang, J. Lee, and S. J. Hwang. Lifelong learning with dynamically expandable networks. International Conference on Learning Representations (ICLR), 2018.

[60] J. Yosinski, J. Clune, Y. Bengio, and H. Lipson. How transferable are features in deep neural networks? In Advances in neural information processing systems, pages 3320–3328, 2014.

[61] J. Yu, L. Yang, N. Xu, J. Yang, and T. Huang. Slimmable neural networks. International Conference on Learning Representations (ICLR), 2019.

[62] F. Zenke, B. Poole, and S. Ganguli. Continual learning through synaptic intelligence. In Proceedings of the 34th International Conference on Machine Learning-Volume 70, pages 3987–3995. JMLR.org, 2017.

[63] C. Zeno, I. Golan, E. Hoffer, and D. Soudry. Task agnostic continual learning using online variational bayes. arXiv preprint arXiv:1803.10123, 2018.

[64] Y. Zhang and Q. Yang. A survey on multi-task learning. CoRR, abs/1707.08114, 2017.
