Real-time 3D reconstruction from single-photon lidar data using plug-and-play point cloud denoisers

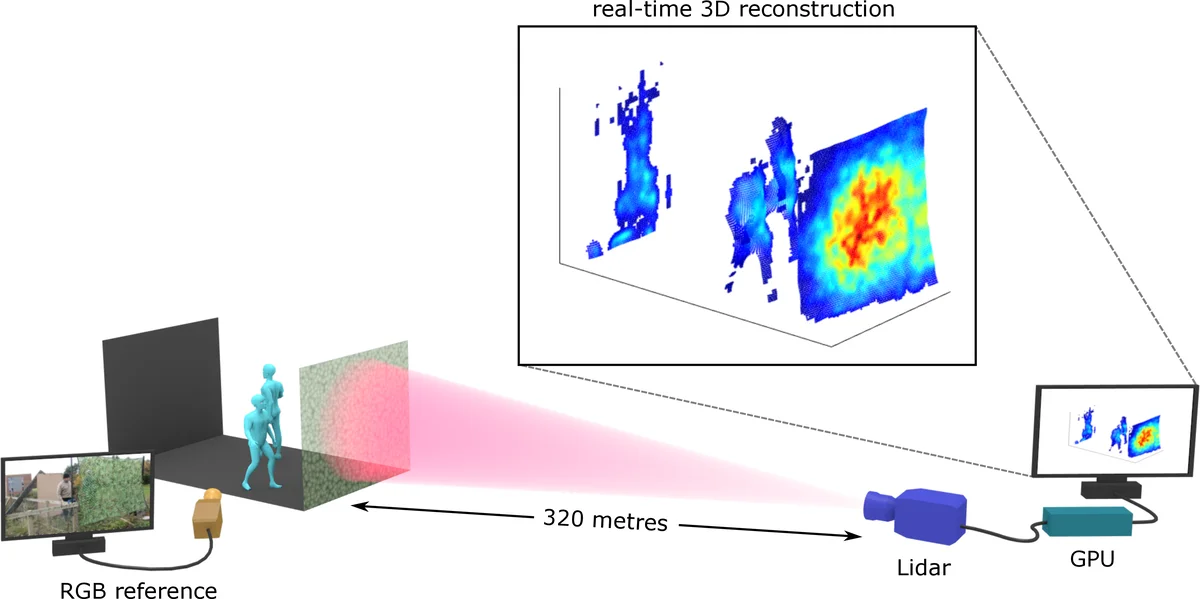

Single-photon lidar has emerged as a prime candidate technology for depth imaging through challenging environments. Until now, a major limitation has been the significant amount of time required for the analysis of the recorded data. Here we show a new computational framework for real-time three-dimensional (3D) scene reconstruction from single-photon data. By combining statistical models with highly scalable computational tools from the computer graphics community, we demonstrate 3D reconstruction of complex outdoor scenes with processing times of the order of 20 ms, where the lidar data was acquired in broad daylight from distances up to 320 metres. The proposed method can handle an unknown number of surfaces in each pixel, allowing for target detection and imaging through cluttered scenes. This enables robust, real-time target reconstruction of complex moving scenes, paving the way for single-photon lidar at video rates for practical 3D imaging applications.

💡 Research Summary

This paper addresses two major challenges that have limited the practical deployment of single‑photon lidar based on time‑correlated single‑photon counting (TCSPC) for real‑time three‑dimensional imaging: (1) extremely low signal‑to‑background ratios caused by ambient daylight and the Poisson nature of photon arrivals, and (2) the presence of multiple reflecting surfaces within a single pixel, which invalidates the common single‑surface assumption used by many existing algorithms.

The authors propose a novel plug‑and‑play (PnP) reconstruction framework that tightly couples a physics‑based observation model with powerful point‑cloud denoisers originally developed for computer graphics. The lidar data cube $Z$ (size $N_r \times N_c \times T$) is modeled as a Poisson mixture of signal photons (parameterized by depth $t$ and reflectivity $r$) and background photons $b$. The negative log‑likelihood $g(t,r,b)$ captures the full sensor statistics, including gain, dead pixels, and possible compressive sensing schemes.
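The observation model above can be illustrated for a single pixel. The following is a minimal sketch, not the authors' implementation: it assumes a Gaussian impulse response of hypothetical width `width`, ignores gain, dead pixels and compressive sensing, and the names `impulse_response` and `poisson_nll` are introduced here for illustration only.

```python
import numpy as np

def impulse_response(bins, t, width=2.0):
    """Hypothetical Gaussian instrument response centred at bin t, normalised to unit area."""
    h = np.exp(-0.5 * ((bins - t) / width) ** 2)
    return h / h.sum()

def poisson_nll(z, t, r, b, width=2.0):
    """Negative Poisson log-likelihood of one pixel histogram z.

    t and r may be scalars (one surface) or sequences (several surfaces);
    b is a constant background level per bin. The log(z!) constant is dropped.
    """
    bins = np.arange(z.size)
    lam = np.full(z.size, float(b))
    for t_k, r_k in zip(np.atleast_1d(t), np.atleast_1d(r)):
        lam = lam + r_k * impulse_response(bins, t_k, width)
    return float(np.sum(lam - z * np.log(lam)))

# Hand-made sparse histogram: four photons near bin 40, one stray photon at bin 10.
z = np.zeros(100)
z[40], z[41], z[10] = 3, 1, 1

nll_true = poisson_nll(z, t=40.0, r=5.0, b=0.05)   # depth placed at the photon cluster
nll_wrong = poisson_nll(z, t=70.0, r=5.0, b=0.05)  # depth placed far from it
print(nll_true < nll_wrong)
```

The depth hypothesis that matches the photon cluster gives the lower negative log‑likelihood, which is the quantity the gradient steps of the reconstruction act on.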

Reconstruction proceeds by alternating proximal‑gradient updates on the three variable blocks $(t, r, b)$ within a Proximal Alternating Linearized Minimization (PALM) scheme. Each block update consists of (i) a gradient step with respect to the negative log‑likelihood (data fidelity) and (ii) a denoising step that takes the place of the proximal operator. Crucially, the denoising step does not rely on handcrafted regularisers; instead, it invokes off‑the‑shelf point‑cloud denoisers:
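The alternation of a gradient step and a plugged‑in denoiser can be sketched on a toy 1‑D problem. This is an illustration under stated assumptions rather than the paper's algorithm: it has only an intensity block and a scalar background block (no depth block), the denoiser is a simple moving average instead of a point‑cloud denoiser, the background gradient is averaged over pixels to keep the step size simple, and all function names are hypothetical.

```python
import numpy as np

def nll(z, r, b):
    """Poisson negative log-likelihood for rate r + b (constant term dropped)."""
    lam = r + b
    return float(np.sum(lam - z * np.log(lam)))

def moving_average(x, k=5):
    """Stand-in denoiser (assumption): edge-padded moving average, clipped positive."""
    kernel = np.ones(k) / k
    smooth = np.convolve(np.pad(x, k // 2, mode="edge"), kernel, mode="valid")
    return np.maximum(smooth, 1e-3)

def keep_positive(x):
    """Trivial 'denoiser' for the scalar background block: positivity only."""
    return max(x, 1e-3)

def pnp_block_update(x, grad, denoise, step):
    """Gradient step on the data-fidelity term, then a denoiser in place of a prox."""
    return denoise(x - step * grad)

# Deterministic toy counts following a smooth profile.
i = np.arange(64)
z = np.round(5.0 + 2.0 * np.sin(2 * np.pi * i / 64))

r, b = np.ones(64), 0.5
nll_start = nll(z, r, b)
for _ in range(100):
    # Intensity block: per-pixel gradient of the NLL, then smoothing.
    r = pnp_block_update(r, 1.0 - z / (r + b), moving_average, step=0.5)
    # Background block: pixel-averaged gradient, then positivity.
    b = pnp_block_update(b, np.mean(1.0 - z / (r + b)), keep_positive, step=0.5)
nll_end = nll(z, r, b)
print(nll_start, nll_end)
```

Swapping `moving_average` for a different denoiser changes the implicit prior without touching the data‑fidelity code, which is the appeal of the plug‑and‑play structure.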

- Depth update – After the gradient step, the current point cloud $\Phi$ (each point stores a 3‑D location and intensity) is processed by the Algebraic Point Set Surfaces (APSS) algorithm. APSS fits a smooth manifold to the points using spherical kernels, effectively regularising depth while naturally handling an arbitrary number of surfaces per pixel because the points are not tied to a raster grid.

- Intensity update – The reflectivity vector $r$ is refined by a low‑pass filter applied on the manifold defined by $\Phi$. A nearest‑neighbour graph (similar to ISOMAP) is built, and each point’s intensity is smoothed using its local neighbours, preserving intra‑surface correlations while preventing cross‑surface leakage. Points whose intensity falls below a preset threshold are discarded.

- Background update – Depending on the lidar architecture, background denoising is either omitted (bistatic scanners, where background counts are spatially uncorrelated) or performed with a simple FFT‑based image denoiser (monostatic scanners, where background forms a passive image).
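The intensity update described in the list above can be sketched as neighbourhood averaging on a point cloud followed by thresholding. This is a simplified illustration: a brute‑force k‑nearest‑neighbour search stands in for the graph construction, and the function name, `k` and `threshold` are assumptions chosen for the example, not values from the paper.

```python
import numpy as np

def smooth_intensity_knn(points, intensity, k=4, threshold=0.5):
    """Average each point's intensity over itself and its k nearest 3D neighbours,
    then discard points whose smoothed intensity is below the threshold."""
    # Pairwise squared distances (brute force; fine for small clouds).
    d2 = np.sum((points[:, None, :] - points[None, :, :]) ** 2, axis=-1)
    # The first column of the sort is the point itself (distance 0).
    nbrs = np.argsort(d2, axis=1)[:, : k + 1]
    smoothed = intensity[nbrs].mean(axis=1)
    keep = smoothed >= threshold
    return points[keep], smoothed[keep]

# Two parallel 5 x 5 surfaces, 10 units apart in depth, with unit grid spacing.
gx, gy = np.meshgrid(np.arange(5.0), np.arange(5.0))
front = np.column_stack([gx.ravel(), gy.ravel(), np.zeros(25)])
back = front + np.array([0.0, 0.0, 10.0])
points = np.vstack([front, back])

# Front surface: noisy but bright. Back surface: weak, spurious returns.
intensity = np.concatenate([
    np.where(np.arange(25) % 2 == 0, 0.8, 1.2),
    np.full(25, 0.1),
])

kept_points, kept_intensity = smooth_intensity_knn(points, intensity)
print(kept_points.shape[0], kept_intensity.min(), kept_intensity.max())
```

Because neighbours are found in 3D rather than on the pixel grid, points on the two surfaces never average with each other, even where they share the same pixel coordinates.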

All three steps are highly parallelisable on GPUs, allowing the entire pipeline to run in tens of milliseconds per frame.

Experimental validation is carried out on two representative scenarios:

- Static high‑resolution scene – A life‑size polystyrene head scanned at 40 m with a raster‑scanning lidar (141 × 141 pixels, 4613 time bins, 0.3 mm depth resolution). The acquisition yields ~3 photons/pixel with SBR ≈ 13. The proposed method recovers 96.6 % of the points within a 4 cm error margin, outperforming cross‑correlation (83.5 %), a recent single‑surface Bayesian method (95.2 %), and ManiPoP (95.23 %). Processing time is 13 ms, compared with 201 s for ManiPoP and 37 s for the single‑surface method, i.e. speed‑ups of roughly 1.5 × 10⁴ and 2.8 × 10³, respectively.

- Dynamic long‑range scene – A 32 × 32 pixel single‑photon array (153 bins, 3.75 cm resolution) captures a binary stream at roughly 150,000 fps, which is aggregated into 50 fps lidar frames (each containing 3008 binary sub‑frames). A super‑resolution scheme expands the point cloud to 96 × 96 real‑world pixels. The algorithm processes each frame in ~20 ms, enabling real‑time 3D video of two people walking behind a camouflage net at 320 m under daylight. Most pixels contain two surfaces; some edge pixels exhibit up to three surfaces, all correctly reconstructed.
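The aggregation of binary sub‑frames into lidar frames for the dynamic scene can be sketched as a histogram accumulation. The data layout below is an assumption made for illustration (one integer per pixel per sub‑frame, `-1` for no detection, otherwise the time bin of the detected photon), as are the function name and the synthetic detection rate; the array sizes are the ones quoted above.

```python
import numpy as np

def aggregate_binary_frames(bin_index, n_bins):
    """Sum binary sub-frames into a per-pixel histogram cube.

    bin_index: int array of shape (n_sub, rows, cols); -1 means no detection,
    any other value is the time bin of the single detected photon.
    Returns an int array of shape (rows, cols, n_bins).
    """
    _, rows, cols = bin_index.shape
    hist = np.zeros((rows, cols, n_bins), dtype=np.int32)
    s, i, j = np.nonzero(bin_index >= 0)
    # np.add.at accumulates correctly when the same (pixel, bin) index repeats.
    np.add.at(hist, (i, j, bin_index[s, i, j]), 1)
    return hist

n_sub, rows, cols, n_bins = 3008, 32, 32, 153
sub_frames_per_second = n_sub * 50  # 150400, consistent with the ~150,000 fps stream

# Synthetic sparse detections (hypothetical 1 % detection probability per sub-frame).
rng = np.random.default_rng(0)
detected = rng.random((n_sub, rows, cols)) < 0.01
bins = rng.integers(0, n_bins, size=(n_sub, rows, cols))
bin_index = np.where(detected, bins, -1)

frame = aggregate_binary_frames(bin_index, n_bins)
print(frame.shape, int(frame.sum()) == int(detected.sum()))
```

Each aggregated cube plays the role of the data cube $Z$ for one 50 fps frame, so the per‑frame reconstruction budget is 20 ms.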

The authors also discuss theoretical limits on the minimum photons per pixel and SBR required for reliable reconstruction, and they demonstrate that the framework can be extended to super‑resolution, deblurring in scattering media, and other imaging modalities.

Key contributions:

- Introduction of a PnP framework that merges a rigorous Poisson observation model with graphics‑based point‑cloud denoisers, eliminating the need for handcrafted priors.

- Ability to handle an unknown number of surfaces per pixel without sacrificing computational efficiency.

- GPU‑friendly implementation achieving real‑time performance (≈20 ms per frame) while delivering reconstruction quality equal to or better than state‑of‑the‑art methods.

- Validation on both static high‑resolution and dynamic long‑range scenes, demonstrating robustness to low photon counts, high background, and complex surface geometry.

In summary, the paper presents a scalable, robust, and ultra‑fast solution for single‑photon lidar 3D reconstruction, paving the way for video‑rate depth imaging in autonomous vehicles, remote sensing, and other real‑world applications.
