Field Label Prediction for Autofill in Web Browsers

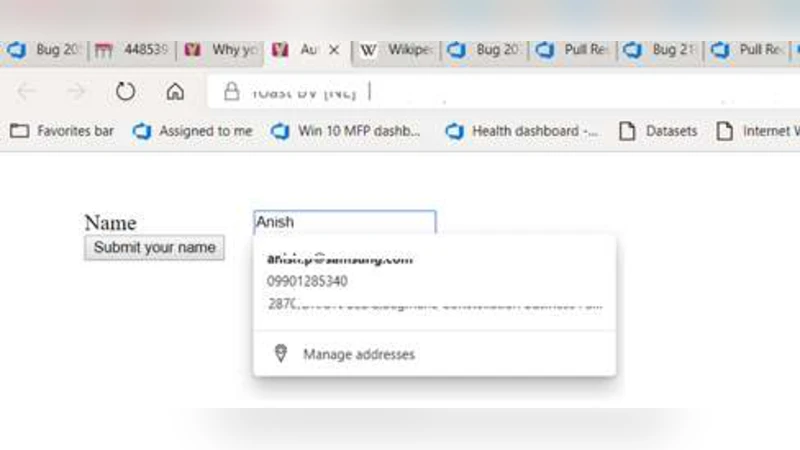

Automatic form fill is an important productivity-related feature of major web browsers: it predicts the field labels of a web form and fills each field with the values the user previously entered for the same field in other forms. This saves time for users who must enter similar information across multiple forms. In this paper we describe a machine learning solution for predicting form field labels, implemented as a web service using Azure ML Studio.

💡 Research Summary

The paper presents a machine‑learning driven solution for predicting field labels in web forms, thereby improving the autofill feature of modern browsers. The authors begin by outlining the productivity benefits of autofill and the necessity of correctly identifying field semantics for new forms. Traditional browsers rely on heuristic rules—often based on regular expressions applied to HTML attributes such as id, name, or label—to map a field to a known label (e.g., “email” or “address”). While effective for many static forms, these heuristics break down when identifiers are ambiguous, dynamically generated, or when forms are hidden behind login walls.
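The rule-based approach described above can be illustrated with a minimal sketch. The patterns and the `heuristic_label` helper below are hypothetical and are not taken from any browser's actual rule set; they only show how regular expressions over `id`, `name`, and label text map a field to a known label, and why opaque identifiers defeat them.

```python
import re

# Hypothetical rule table: label -> pattern tried against each attribute.
RULES = {
    "email": re.compile(r"e[-_ ]?mail", re.IGNORECASE),
    "password": re.compile(r"pass(word|wd)?|pwd", re.IGNORECASE),
    "username": re.compile(r"user[-_ ]?(name|id)|login", re.IGNORECASE),
    "address": re.compile(r"addr(ess)?|street", re.IGNORECASE),
}

def heuristic_label(field_id, name, label):
    """Return the first rule label whose pattern matches any attribute, else None."""
    for attribute in (field_id, name, label):
        for rule_label, pattern in RULES.items():
            if pattern.search(attribute or ""):
                return rule_label
    return None

# A descriptive identifier is matched by the "email" rule.
print(heuristic_label("user_email", "email", "E-mail address"))
# A dynamically generated identifier matches no rule.
print(heuristic_label("fld_83a1", "x17", ""))
```

Note that the label text "E-mail address" would also satisfy the "address" rule; the result here depends only on rule order, which is one form of the ambiguity the summary mentions.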

To address these shortcomings, the authors construct a labeled dataset of roughly 4,000 form fields collected from a variety of publicly available websites. For each field they extract four textual attributes: the visible label, the HTML name attribute, the id attribute, and the page URL. Human annotators assign one of a predefined set of common labels (email, username, address, password, age, etc.) to each entry. Basic preprocessing removes stop‑words and normalizes case. The categorical values are then transformed into a high‑dimensional binary vector using one‑hot encoding; each attribute contributes its own sub‑vector, and the final feature vector is the concatenation of these sub‑vectors.
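The encoding step can be sketched as follows, assuming each of the four attributes is treated as a single categorical value. The stop-word list, the sample rows, and the helper names are illustrative assumptions, not the authors' data or code.

```python
ATTRIBUTES = ("label", "name", "id", "url")
STOP_WORDS = {"your", "the", "a", "please", "enter"}  # illustrative list only

def normalize(text):
    """Lower-case the text and drop stop-words."""
    tokens = text.lower().split()
    return " ".join(t for t in tokens if t not in STOP_WORDS)

def build_vocab(rows):
    """Map each attribute to the sorted distinct values seen in the training rows."""
    return {a: sorted({row[a] for row in rows}) for a in ATTRIBUTES}

def one_hot(row, vocab):
    """Concatenate one binary sub-vector per attribute."""
    vector = []
    for a in ATTRIBUTES:
        vector.extend(1 if row[a] == value else 0 for value in vocab[a])
    return vector

# Two made-up form fields standing in for the labeled dataset.
raw_rows = [
    {"label": "Your Email", "name": "email", "id": "user_email", "url": "example.com/signup"},
    {"label": "Password", "name": "pwd", "id": "user_pwd", "url": "example.com/signup"},
]
rows = [{a: normalize(r[a]) for a in ATTRIBUTES} for r in raw_rows]
vocab = build_vocab(rows)

print(one_hot(rows[0], vocab))  # [1, 0, 1, 0, 1, 0, 1]
print(one_hot(rows[1], vocab))  # [0, 1, 0, 1, 0, 1, 1]
```

The vector length equals the total number of distinct values across all attributes, which is why the dimensionality grows quickly with dataset size. A value not seen during training yields an all-zero sub-vector in this sketch.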

Model development is carried out entirely within Azure Machine Learning Studio, a visual environment that supports data ingestion, preprocessing, algorithm selection, hyper‑parameter tuning, and deployment as a web service. The authors experiment with several algorithms, including linear regression, support vector machines, and decision trees, before settling on a multi‑class Decision Forest (an ensemble of randomized decision trees). The chosen forest uses bagging for resampling, 16 trees, a maximum depth of 100, 128 random splits per node, and a minimum of one sample per leaf node. A 70%/30% train‑test split yields an overall precision of about 95% for the binary “email” classifier, with comparable performance on the full multi‑class problem.
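The evaluation protocol can be sketched in plain Python. This is not the Azure ML Studio pipeline: the dictionary keys are illustrative names for the reported settings rather than Studio parameter identifiers, the 4,000-row count is a round stand-in for the "roughly 4,000" fields, and the label lists are made up to show how precision for a single label is computed.

```python
import random

# Settings as reported in the summary; key names are illustrative only.
FOREST_SETTINGS = {
    "resampling": "bagging",
    "trees": 16,
    "max_depth": 100,
    "random_splits_per_node": 128,
    "min_samples_per_leaf": 1,
}

def train_test_split(rows, train_fraction=0.7, seed=0):
    """Shuffle a copy of rows and cut it into train and test parts."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

def precision(y_true, y_pred, positive):
    """Precision for one label: TP / (TP + FP); 0.0 if the label is never predicted."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if p == positive and t == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if p == positive and t != positive)
    return tp / (tp + fp) if (tp + fp) else 0.0

train, test = train_test_split(range(4000))
print(len(train), len(test))  # 2800 1200

# Made-up predictions: two correct "email" calls and one false positive.
y_true = ["email", "email", "address", "email"]
y_pred = ["email", "address", "email", "email"]
print(precision(y_true, y_pred, "email"))  # 2 / 3
```

Precision counts only how often a predicted "email" is correct; it says nothing about email fields the model missed, so a recall figure would be needed for a complete picture.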

Deployment is realized by publishing the trained model as a RESTful API via Azure’s web‑service hosting. A browser extension (or any client‑side component) extracts the same four HTML attributes from a newly loaded form, sends the one‑hot encoded feature vector to the API, and receives the predicted label in real time. This architecture keeps the raw user data (actual form values) on the client side, transmitting only non‑sensitive structural features to the server, thereby respecting user privacy while allowing centralized model updates.
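A client-side call could look roughly like the sketch below. The endpoint URL, the API key, and the `{"features": [...]}` payload shape are placeholders chosen for illustration; the real request schema is defined by the published web service and is not given in the summary. The request is only constructed here, not sent.

```python
import json
import urllib.request

def build_request(feature_vector, endpoint_url, api_key):
    """Build a POST request carrying only the encoded structural features."""
    payload = {"features": feature_vector}  # hypothetical schema
    return urllib.request.Request(
        endpoint_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": "Bearer " + api_key,
        },
        method="POST",
    )

# Placeholder endpoint and key; no user-entered form values are included.
request = build_request([1, 0, 1, 0, 1, 0, 1], "https://example.invalid/score", "API_KEY")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Sending the request with `urllib.request.urlopen(request)` and parsing the JSON response would complete the round trip. Because the body contains only the feature vector, the values a user types into the form never leave the client.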

The paper concludes by acknowledging current limitations: the dataset is modest in size and language diversity, one‑hot encoding leads to high dimensionality, and the approach treats each label independently rather than exploiting inter‑label relationships. Future work will focus on scaling the dataset through large‑scale web crawling, adopting embedding‑based representations (e.g., Word2Vec, FastText) to reduce dimensionality, and exploring deep learning models that can capture richer semantic patterns. Moreover, the authors propose a hybrid system that combines rule‑based regular expressions, lookup tables, and machine‑learning predictions in an ensemble, aiming to achieve higher robustness across the wide variety of forms encountered on the modern web.
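One way the proposed hybrid could combine its three sources is weighted voting, sketched below. The summary does not say how the ensemble would be built, so the voting scheme, the source names, and the weights are all assumptions made for illustration.

```python
def combine(predictions, weights):
    """Weighted vote over per-source labels; sources that abstain return None."""
    scores = {}
    for source, label in predictions.items():
        if label is not None:
            scores[label] = scores.get(label, 0.0) + weights[source]
    return max(scores, key=scores.get) if scores else None

# Hypothetical weights: the trained model is trusted most, the regex rules least.
WEIGHTS = {"regex": 1.0, "lookup": 1.5, "model": 2.0}

# Regex and model agree while the lookup table abstains.
print(combine({"regex": "email", "lookup": None, "model": "email"}, WEIGHTS))
# Regex and model disagree; the higher-weighted model wins.
print(combine({"regex": "address", "lookup": None, "model": "email"}, WEIGHTS))
```

A design like this lets each source abstain when it has no match, so the rules and lookup table can cover well-known fields cheaply while the model handles fields with opaque identifiers.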
