Garbage In, Garbage Out? Do Machine Learning Application Papers in Social Computing Report Where Human-Labeled Training Data Comes From?

Many machine learning projects for new application areas involve teams of humans who label data for a particular purpose, from hired crowdworkers to the paper’s authors labeling the data themselves. Such a task is quite similar to (or a form of) structured content analysis, a longstanding methodology in the social sciences and humanities with many established best practices. In this paper, we investigate to what extent a sample of machine learning application papers in social computing (specifically, papers from arXiv and traditional publications performing an ML classification task on Twitter data) gives specific details about whether such best practices were followed. Our team conducted multiple rounds of structured content analysis of each paper, making determinations such as: Does the paper report who the labelers were, what their qualifications were, whether they independently labeled the same items, whether inter-rater reliability metrics were disclosed, what level of training and/or instructions were given to labelers, whether compensation for crowdworkers was disclosed, and whether the training data is publicly available? We find a wide divergence in whether such practices were followed and documented. Much of machine learning research and education focuses on what is done once a “gold standard” of training data is available, but we discuss issues around the equally important question of whether such data is reliable in the first place.

💡 Research Summary

This paper presents a meta‑research study of supervised machine learning (ML) application papers in the social computing domain that create original human‑labeled training sets from Twitter data. Drawing on the long‑standing methodology of structured content analysis in the social sciences and humanities, the authors treat each target paper as a “coding unit” and systematically assess how transparently it reports the labeling process.

The authors first assembled a corpus of papers from arXiv pre‑prints and Scopus‑indexed publications that performed a classification task on Twitter data and that claimed to have generated their own labeled dataset. They then designed a coding scheme comprising twelve items:

1. who performed the labeling (authors, crowdworkers, domain experts, etc.);
2. labeler qualifications;
3. whether a coding scheme or annotation guidelines were provided;
4. whether labelers received training or pilot testing;
5. whether items were labeled independently by multiple annotators;
6. whether inter‑rater reliability (IRR) metrics such as Cohen’s κ or Krippendorff’s α were reported;
7. the magnitude of IRR values;
8. whether crowdworker compensation was disclosed;
9. whether the compensation amount was specified;
10. whether the labeled dataset was publicly released;
11. whether a link or DOI to the dataset was given; and
12. any reconciliation procedures for disagreements.
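
To make the scheme concrete, here is a minimal Python sketch of one coder's record for one paper. The field names are hypothetical paraphrases of the twelve items, not the authors' actual codebook, and `None` stands for "not reported" or "not applicable". The sketch also encodes one structural property of the scheme: items 7, 9, and 11 only make sense when items 6, 8, and 10 are answered "yes".

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PaperCoding:
    """One coder's judgments about one paper (the coding unit).

    Hypothetical field names paraphrasing the twelve scheme items.
    None means "not reported" or "not applicable".
    """
    paper_id: str
    labeler_source: Optional[str]           # (1) "authors", "crowdworkers", ...
    qualifications_reported: bool           # (2)
    guidelines_provided: bool               # (3)
    training_or_pilot: bool                 # (4)
    independent_multiple_labelers: bool     # (5)
    irr_reported: bool                      # (6)
    irr_value: Optional[float]              # (7) depends on (6)
    compensation_disclosed: Optional[bool]  # (8) None if no crowdworkers
    compensation_amount: Optional[str]      # (9) depends on (8)
    dataset_public: bool                    # (10)
    dataset_link: Optional[str]             # (11) depends on (10)
    reconciliation_described: bool          # (12)

    def __post_init__(self) -> None:
        # Reject records where a dependent detail is filled in
        # although its parent item was coded "no".
        if self.irr_value is not None and not self.irr_reported:
            raise ValueError("irr_value set but irr_reported is False")
        if self.compensation_amount is not None and not self.compensation_disclosed:
            raise ValueError("compensation_amount set but compensation not disclosed")
        if self.dataset_link is not None and not self.dataset_public:
            raise ValueError("dataset_link set but dataset_public is False")


# Example: a paper whose authors labeled the data themselves (hypothetical).
example = PaperCoding(
    paper_id="paper-001", labeler_source="authors",
    qualifications_reported=False, guidelines_provided=True,
    training_or_pilot=False, independent_multiple_labelers=True,
    irr_reported=True, irr_value=0.61,
    compensation_disclosed=None, compensation_amount=None,
    dataset_public=False, dataset_link=None,
    reconciliation_described=True,
)
```

Validating dependencies at construction time means an inconsistent code (say, an IRR value recorded for a paper coded as not reporting IRR) is caught when it is entered rather than during analysis.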

Two or more researchers independently coded each paper, and disagreements were resolved through discussion, mirroring best practices for content analysis and supporting the reliability of the meta‑research coding itself.
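
The summary does not say which agreement statistic, if any, the authors computed for their own double coding. As a minimal sketch of the general procedure (two independent codings, an agreement score, then a list of items to reconcile in discussion), the Python below computes Cohen's κ for a single binary item. The paper IDs and codes are invented for illustration.

```python
from collections import Counter
from typing import Hashable, List, Sequence


def cohens_kappa(a: Sequence[Hashable], b: Sequence[Hashable]) -> float:
    """Cohen's kappa for two coders who coded the same items."""
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty label sequences of equal length")
    n = len(a)
    p_obs = sum(x == y for x, y in zip(a, b)) / n  # observed agreement
    count_a, count_b = Counter(a), Counter(b)
    categories = set(count_a) | set(count_b)
    # Chance agreement from each coder's own marginal distribution.
    p_exp = sum((count_a[c] / n) * (count_b[c] / n) for c in categories)
    if p_exp == 1.0:
        return float("nan")  # both coders used one identical category: undefined
    return (p_obs - p_exp) / (1 - p_exp)


def to_reconcile(ids: Sequence[str], a: Sequence[Hashable],
                 b: Sequence[Hashable]) -> List[str]:
    """Items the two coders disagreed on, to be resolved by discussion."""
    return [i for i, x, y in zip(ids, a, b) if x != y]


# Hypothetical codes for the item "does the paper report IRR?"
papers = ["p1", "p2", "p3", "p4", "p5", "p6"]
coder_a = [True, False, False, True, False, False]
coder_b = [True, False, True, True, False, False]

print(round(cohens_kappa(coder_a, coder_b), 3))  # 0.667
print(to_reconcile(papers, coder_a, coder_b))    # ['p3']
```

Here the coders agree on five of six papers (0.833), but because chance agreement given their marginals is 0.5, κ is only about 0.667. Cohen's κ assumes the same two coders labeled every item; with more coders or missing labels, Krippendorff's α is the more general choice.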

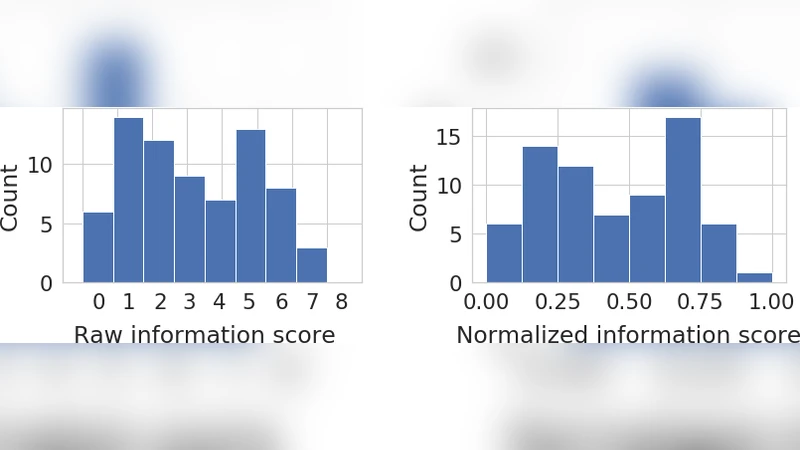

The findings reveal a striking lack of consistency and completeness in reporting. Only about 35 % of papers identified who performed the labeling, and fewer than 30 % described labeler qualifications or training. Independent labeling by multiple annotators was mentioned in roughly 22 % of cases, and IRR statistics were provided by just 12 % of papers; among those papers, the average reported α was about 0.58, indicating modest agreement that falls below the 0.667 Krippendorff proposes as the minimum for drawing even tentative conclusions. Compensation details for crowdworkers appeared in only 18 % of studies, and merely 19 % of papers made the labeled dataset publicly accessible.
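
Each percentage above is a share of coded papers, so its meaning depends on the denominator. The summary does not say, for example, whether the 18 % for crowdworker compensation is computed over all papers or only over papers that used crowdworkers. The hypothetical sketch below shows how both rates, and a mean over only those papers that report an IRR value, could be computed from a reconciled coding sheet. The four rows are toy data, not the study's.

```python
from statistics import mean
from typing import Dict, List, Optional

Row = Dict[str, object]


def reporting_rate(rows: List[Row], item: str,
                   applies_if: Optional[str] = None) -> float:
    """Share of papers coded True for `item`.

    If `applies_if` names another item, only papers coded True for it
    count toward the denominator.
    """
    pool = [r for r in rows if applies_if is None or r[applies_if]]
    if not pool:
        return float("nan")
    return sum(r[item] is True for r in pool) / len(pool)


# Toy, reconciled codes for four hypothetical papers.
rows: List[Row] = [
    {"who_reported": True,  "used_crowd": True,  "pay_disclosed": True,  "irr": 0.62},
    {"who_reported": True,  "used_crowd": False, "pay_disclosed": False, "irr": None},
    {"who_reported": False, "used_crowd": True,  "pay_disclosed": False, "irr": None},
    {"who_reported": False, "used_crowd": False, "pay_disclosed": False, "irr": 0.48},
]

print(reporting_rate(rows, "who_reported"))                            # 0.5
print(reporting_rate(rows, "pay_disclosed"))                           # 0.25
print(reporting_rate(rows, "pay_disclosed", applies_if="used_crowd"))  # 0.5
# Mean IRR only over papers that reported a value.
print(round(mean(r["irr"] for r in rows if r["irr"] is not None), 2))  # 0.55
```

The same coded data yields 25 % or 50 % for compensation disclosure depending on the denominator, which is why a reported rate is only interpretable alongside the population it was computed over.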

These results suggest that many ML application papers treat the creation of a “gold‑standard” dataset as a black‑box step, focusing instead on model architecture, performance metrics, and algorithmic refinements. When authors do not document the labeling pipeline, readers cannot assess the construct validity of their classifiers, and the work becomes harder to reproduce. The authors argue that labeling is essentially a structured content‑analysis activity and should adopt the same rigorous practices: clear coding schemes, pilot testing, independent annotation, systematic IRR measurement, and transparent reporting of annotator recruitment, training, and compensation.
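
Of the practices listed, systematic IRR measurement is the most directly computational. When crowdworkers each label only a subset of items, Cohen's κ no longer applies, whereas Krippendorff's α (one of the metrics named in the coding scheme) accommodates any number of annotators and missing labels. Below is a minimal from-scratch sketch for nominal labels, built on the standard coincidence-matrix formulation; it is meant for illustration, and a vetted library implementation is preferable for published work. The example labels are invented.

```python
from collections import Counter
from itertools import permutations
from typing import Hashable, Sequence


def krippendorff_alpha_nominal(units: Sequence[Sequence[Hashable]]) -> float:
    """Krippendorff's alpha for nominal labels.

    `units` holds, for each item, the labels it actually received; items
    may have different numbers of labels (missing labels are just absent).
    Items with fewer than two labels carry no pairing information and are skipped.
    """
    # Coincidence matrix: each ordered pair of labels from different
    # annotators on the same item contributes 1 / (m_u - 1).
    o: Counter = Counter()
    for labels in units:
        m = len(labels)
        if m < 2:
            continue
        for c, k in permutations(labels, 2):
            o[(c, k)] += 1.0 / (m - 1)

    n_c: Counter = Counter()  # marginal total per category
    for (c, _k), weight in o.items():
        n_c[c] += weight
    n = sum(n_c.values())     # total number of pairable labels

    observed = sum(w for (c, k), w in o.items() if c != k)
    expected = sum(n_c[c] * n_c[k] for c in n_c for k in n_c if c != k)
    if expected == 0:
        return float("nan")   # a single category overall: alpha undefined
    # alpha = 1 - D_o / D_e, rewritten as 1 - (n - 1) * observed / expected
    return 1.0 - (n - 1) * observed / expected


# Hypothetical labels from three crowdworkers; not every worker saw every tweet.
tweets = [
    ["abusive", "abusive", "abusive"],
    ["abusive", "neutral"],
    ["neutral", "neutral", "neutral"],
    ["neutral"],  # only one label: skipped
]
print(krippendorff_alpha_nominal(tweets))  # 0.5625
```

Running such a check on a pilot batch, before full-scale labeling, is one way to act on the pilot-testing recommendation: low agreement there signals that the guidelines or category definitions need revision.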

The paper situates its findings within broader movements toward open science, reproducibility, and emerging documentation frameworks such as “datasheets for datasets,” “model cards,” and “data statements.” It emphasizes that these initiatives must explicitly address human‑annotation processes, not only model performance or ethical considerations.
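
As a rough illustration of what such documentation could cover, the sketch below checks a datasheet-style annotation section for missing entries. The field names are hypothetical, chosen to mirror the items the study coded; they are not drawn from the published datasheet, model-card, or data-statement templates.

```python
import json
from typing import Any, Dict, List

# Hypothetical field names mirroring the coded reporting items.
REQUIRED_ANNOTATION_FIELDS = [
    "labeler_population",
    "labeler_qualifications",
    "guidelines",
    "training_or_pilot",
    "labels_per_item",
    "agreement_metric",
    "agreement_value",
    "compensation",
    "dataset_access",
]


def missing_fields(section: Dict[str, Any]) -> List[str]:
    """Required fields that are absent, None, or an empty string/list.

    False and 0 count as answered: "no pilot" is still a disclosure.
    """
    return [f for f in REQUIRED_ANNOTATION_FIELDS
            if section.get(f) is None or section.get(f) in ("", [])]


# Hypothetical annotation section for an author-labeled tweet dataset.
section = {
    "labeler_population": "three of the authors",
    "labeler_qualifications": "",
    "guidelines": "codebook released with the dataset",
    "training_or_pilot": False,
    "labels_per_item": 2,
    "agreement_metric": "Krippendorff's alpha (nominal)",
    "compensation": "not applicable (no crowdworkers)",
    "dataset_access": "tweet IDs on request",
}

print(json.dumps(section, indent=2))
print(missing_fields(section))  # ['labeler_qualifications', 'agreement_value']
```

Treating an explicit `False` as a valid answer keeps the distinction the study relies on: a paper that says "no pilot test was run" has reported its practice, whereas one that is silent has not.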

Limitations include the focus on Twitter‑based studies, which may not generalize to other domains, and the reliance on what the papers themselves disclose: the absence of a statement does not mean that no labeling protocol was followed. The authors recommend future work that directly accesses labeling platforms, interviews annotators, or examines provenance logs to obtain richer evidence.

In conclusion, the study provides empirical evidence that social‑computing ML papers often fail to report essential details about human‑labeled training data. Improving transparency in this early stage of the ML pipeline is crucial for ensuring data quality, model validity, and responsible deployment of automated systems.
