That and There: Judging the Intent of Pointing Actions with Robotic Arms

Collaborative robotics requires effective communication between a robot and a human partner. Extending existing models from the related literature, this work proposes a set of interpretive principles for how a robotic arm can use pointing actions to communicate task information to people. These principles are evaluated through studies in which English-speaking human subjects view animations of simulated robots instructing pick-and-place tasks. The evaluation distinguishes two classes of pointing actions that arise in pick-and-place tasks: referential pointing (identifying objects) and locating pointing (identifying locations). The study indicates that human subjects interpret the intent of referential pointing more flexibly than that of locating pointing, which needs to be more deliberate. The results also demonstrate the effects of variation in the environment and task context on the interpretation of pointing. Our corpus, experiments, and design principles advance models of context, commonsense reasoning, and communication in embodied interaction.

💡 Research Summary

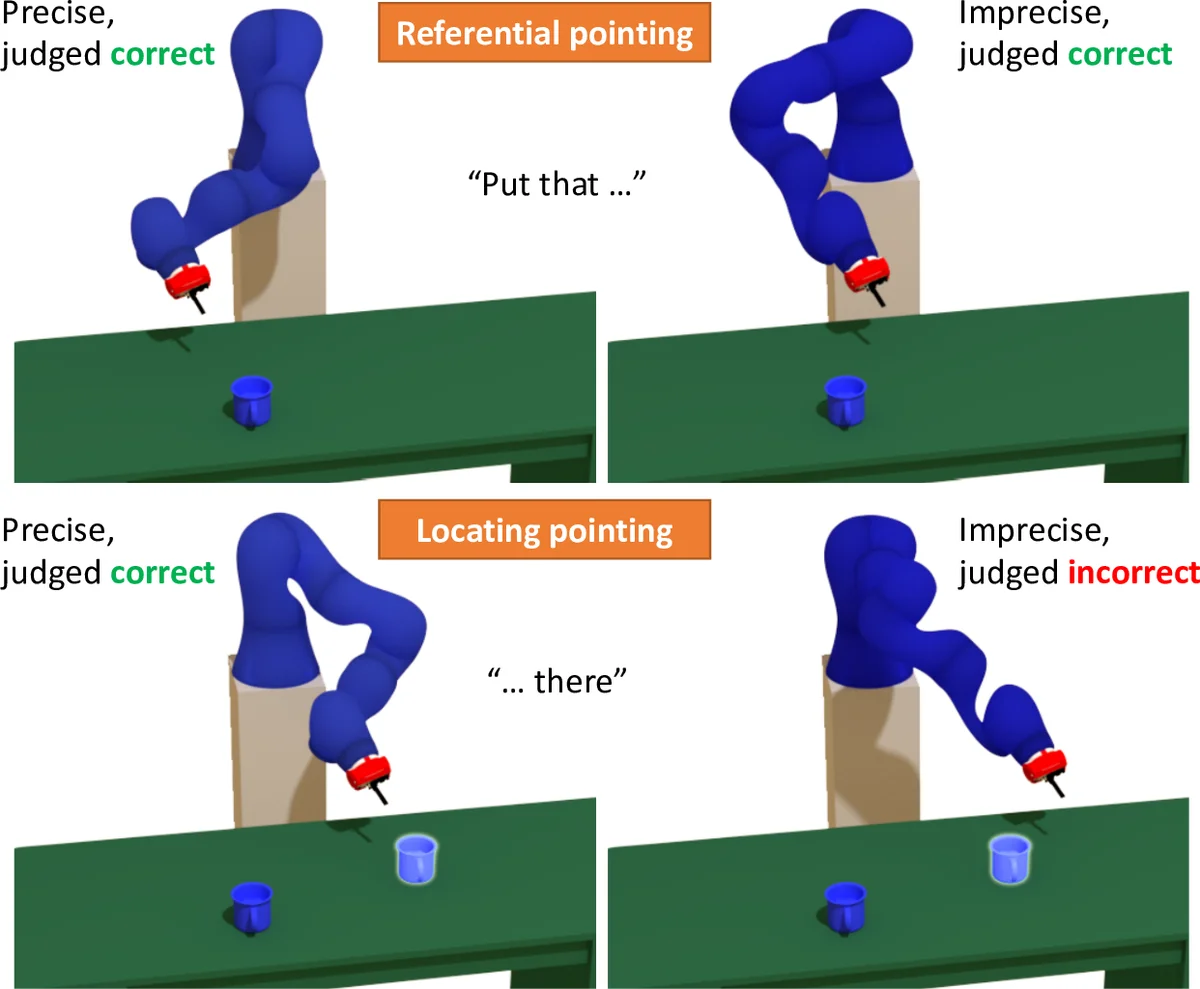

This paper investigates how a robotic arm can use pointing gestures to convey task‑relevant information to a human collaborator, focusing on pick‑and‑place scenarios. Building on prior work on deictic gestures and the “pointing cone” model, the authors distinguish two fundamentally different kinds of pointing: referential pointing, which identifies an object to be picked, and locating pointing, which designates the final placement location. They argue that these two intents have distinct requirements for precision and interpretability.

To test these ideas, the authors created a large‑scale dataset comprising over 7,000 human judgments collected via Amazon Mechanical Turk. Participants viewed short animations of simulated robots (a dual‑arm Baxter and a single‑arm Kuka) performing a pair of pointing gestures, first toward an object and then toward a target spot on a table, sometimes accompanied by synchronized speech (“Put that there”). The animations varied systematically along several dimensions: (1) pointing type (referential vs. locating), (2) angular precision of the pointing ray (tight cone vs. wide cone), (3) presence or absence of verbal cues, (4) scene complexity (single object, multiple objects, stacked objects), and (5) robot platform. After each animation, participants judged whether a displayed outcome image was correct, incorrect, or ambiguous.
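As a rough illustration of how the five manipulated dimensions define a set of stimulus conditions, the Python sketch below crosses the factor levels named above. It assumes a full factorial crossing, which the summary does not confirm, and the factor and level labels are hypothetical names chosen for readability.

```python
from itertools import product

# Factor levels as listed in the summary; the labels are hypothetical names,
# and a full crossing of all levels is an assumption.
FACTORS = {
    "pointing_type": ["referential", "locating"],
    "precision": ["tight_cone", "wide_cone"],
    "speech": ["with_speech", "no_speech"],
    "scene": ["single_object", "multiple_objects", "stacked_objects"],
    "robot": ["Baxter", "Kuka"],
}


def condition_grid(factors: dict[str, list[str]]) -> list[dict[str, str]]:
    """Return one dict per combination of factor levels (a full factorial design)."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*factors.values())]


if __name__ == "__main__":
    grid = condition_grid(FACTORS)
    print(len(grid))  # 48 = 2 * 2 * 2 * 3 * 2
    print(grid[0])
```

Under this assumption the grid has 48 cells; the actual study may have sampled or balanced conditions differently.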

Statistical analysis revealed two key patterns. First, referential pointing is robust to imprecision: even when the pointing ray deviated noticeably from the target object, participants still identified the intended object at a high rate (≈80 % correct). This suggests that humans treat the set of nearby objects as a candidate pool and select the closest one, tolerating moderate angular error. Second, locating pointing is highly sensitive to precision: only when the cone angle was small (≤ 5°) did participants reliably infer the intended placement location (≈70 % correct). With a wider cone, accuracy dropped below 30 %. The effect persisted across both robot platforms and was only modestly mitigated by verbal cues, indicating that visual precision dominates in spatially grounded instructions.
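The precision manipulation can be illustrated with a small geometric test. The Python sketch below measures the angle between the robot's pointing ray and the direction from the hand to a target, then checks it against a cone threshold. It assumes 5° is the cone's half-angle (the summary does not say whether it is a half-angle or a full aperture), and the function names and coordinates are hypothetical.

```python
import math


def angular_deviation_deg(origin, direction, target):
    """Angle (degrees) between the pointing ray and the hand-to-target direction."""
    v = tuple(t - o for t, o in zip(target, origin))
    if not any(v) or not any(direction):
        raise ValueError("direction and target offset must be non-zero")
    d = direction
    # Cross product d x v; its norm is |d||v|sin(angle).
    cross = (d[1] * v[2] - d[2] * v[1],
             d[2] * v[0] - d[0] * v[2],
             d[0] * v[1] - d[1] * v[0])
    # Dot product d . v equals |d||v|cos(angle).
    dot = sum(a * b for a, b in zip(d, v))
    # atan2(|d x v|, d . v) stays accurate for very small angles, unlike acos.
    return math.degrees(math.atan2(math.hypot(*cross), dot))


def within_cone(origin, direction, target, half_angle_deg=5.0):
    """True if target lies inside a cone of the given half-angle around the ray."""
    return angular_deviation_deg(origin, direction, target) <= half_angle_deg


if __name__ == "__main__":
    hand = (0.0, 0.0, 1.0)   # hypothetical fingertip position
    ray = (1.0, 0.0, -1.0)   # pointing 45 degrees down toward the table
    print(within_cone(hand, ray, (1.0, 0.1, 0.0)))
    print(within_cone(hand, ray, (1.0, 0.2, 0.0)))
```

With the hand at (0, 0, 1) pointing along (1, 0, -1), a target offset 0.1 units sideways from the table point (1, 0, 0) deviates by roughly 4° and falls inside a 5° cone, while a 0.2-unit offset deviates by roughly 8° and falls outside it.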

Additional findings highlighted the influence of context. In cluttered scenes with stacked items, participants favored physically stable placement locations over visually indicated but unstable points (e.g., the edge of a stack). This demonstrates that humans incorporate commonsense physical reasoning when interpreting robot gestures. Moreover, speech cues improved overall accuracy by about 5 % but did not compensate for large angular errors in locating pointing.

From these observations the authors derive a set of design principles for robotic pointing. The core idea is to define a candidate set of possible referents based on the current scene and the pointing ray, then discard any candidate that is “significantly farther” than the nearest alternative, using a statistically derived distance threshold. For referential pointing, this approach allows flexibility: the robot can point roughly toward a cluster of objects, and the human will still infer the intended one. For locating pointing, the robot must ensure that the ray aligns closely with the desired spot, possibly by adjusting arm trajectories to reduce angular deviation or by adding visual markers to narrow the candidate set. The principles also advise that verbal cues be used as supplementary information rather than a substitute for precise pointing, and that physical feasibility (stability, collision avoidance) be considered when selecting candidate locations.
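A minimal Python sketch of the candidate-filtering idea described above follows. It is not the authors' implementation: it assumes candidates are 3D points, measures each one's perpendicular distance to the pointing ray, and treats "significantly farther" as exceeding the nearest distance by a fixed `margin`. Both the margin value and the `is_feasible` hook (standing in for stability or collision checks on placement locations) are hypothetical, since the summary gives neither the statistically derived threshold nor the paper's distance measure.

```python
import math
from typing import Callable

Point = tuple[float, float, float]


def distance_to_ray(origin: Point, direction: Point, point: Point) -> float:
    """Perpendicular distance from point to the ray origin + t * direction, t >= 0."""
    norm = math.hypot(*direction)
    unit = [c / norm for c in direction]
    offset = [p - o for p, o in zip(point, origin)]
    # Project onto the ray; clamp so points behind the hand use the hand itself.
    t = max(0.0, sum(a * b for a, b in zip(offset, unit)))
    closest = [o + t * u for o, u in zip(origin, unit)]
    return math.hypot(*(p - c for p, c in zip(point, closest)))


def plausible_candidates(
    origin: Point,
    direction: Point,
    candidates: dict[str, Point],
    margin: float,
    is_feasible: Callable[[str], bool] = lambda name: True,
) -> list[str]:
    """Names of feasible candidates not 'significantly farther' from the ray than the nearest.

    `margin` is a hypothetical absolute threshold standing in for the paper's
    statistically derived one; `is_feasible` is a hypothetical hook for physical
    checks such as stability when the candidates are placement locations.
    """
    dists = {name: distance_to_ray(origin, direction, pos)
             for name, pos in candidates.items() if is_feasible(name)}
    if not dists:
        return []
    nearest = min(dists.values())
    return [name for name, d in dists.items() if d <= nearest + margin]


if __name__ == "__main__":
    hand = (0.0, 0.0, 1.0)   # hypothetical fingertip position
    ray = (1.0, 0.0, -1.0)   # pointing 45 degrees down toward the table
    objects = {"red_cup": (1.0, 0.05, 0.0),
               "blue_cup": (1.0, 0.12, 0.0),
               "bowl": (1.0, 0.40, 0.0)}
    print(plausible_candidates(hand, ray, objects, margin=0.1))   # ['red_cup', 'blue_cup']
    print(plausible_candidates(hand, ray, objects, margin=0.05))  # ['red_cup']
```

A single survivor means the gesture picks out one candidate; several survivors leave the human to choose among them, which the results above suggest people tolerate for referential pointing but not for locating pointing.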

The paper’s contributions are threefold: (1) a systematic, publicly released dataset of human interpretations of robot pointing gestures; (2) an empirical comparison of referential versus locating pointing, showing their differing tolerance for imprecision; and (3) a set of empirically grounded rules that can be integrated into motion‑planning or dialogue‑management modules to make robot gestures more legible and reliable.

Limitations include reliance on simulated animations rather than real‑world robot motion, which may omit factors such as joint vibration, occlusions, or lighting variations. The participant pool was limited to English‑speaking U.S. adults, leaving open the question of cross‑cultural differences in deictic interpretation. Future work is suggested in three directions: (a) validating the principles on physical robots in real collaborative tasks; (b) extending experiments to diverse linguistic and cultural groups; and (c) exploring multimodal deictic communication that combines pointing with gaze, body orientation, or haptic feedback.

Overall, the study advances our understanding of embodied communication in human‑robot interaction by quantifying how different pointing intents are perceived, and by providing actionable guidelines for designing robot gestures that are both expressive and interpretable.
