Linear Regression from Strategic Data Sources

Linear regression is a fundamental building block of statistical data analysis. It amounts to estimating the parameters of a linear model that maps input features to corresponding outputs. In the classical setting where the precision of each data poi…

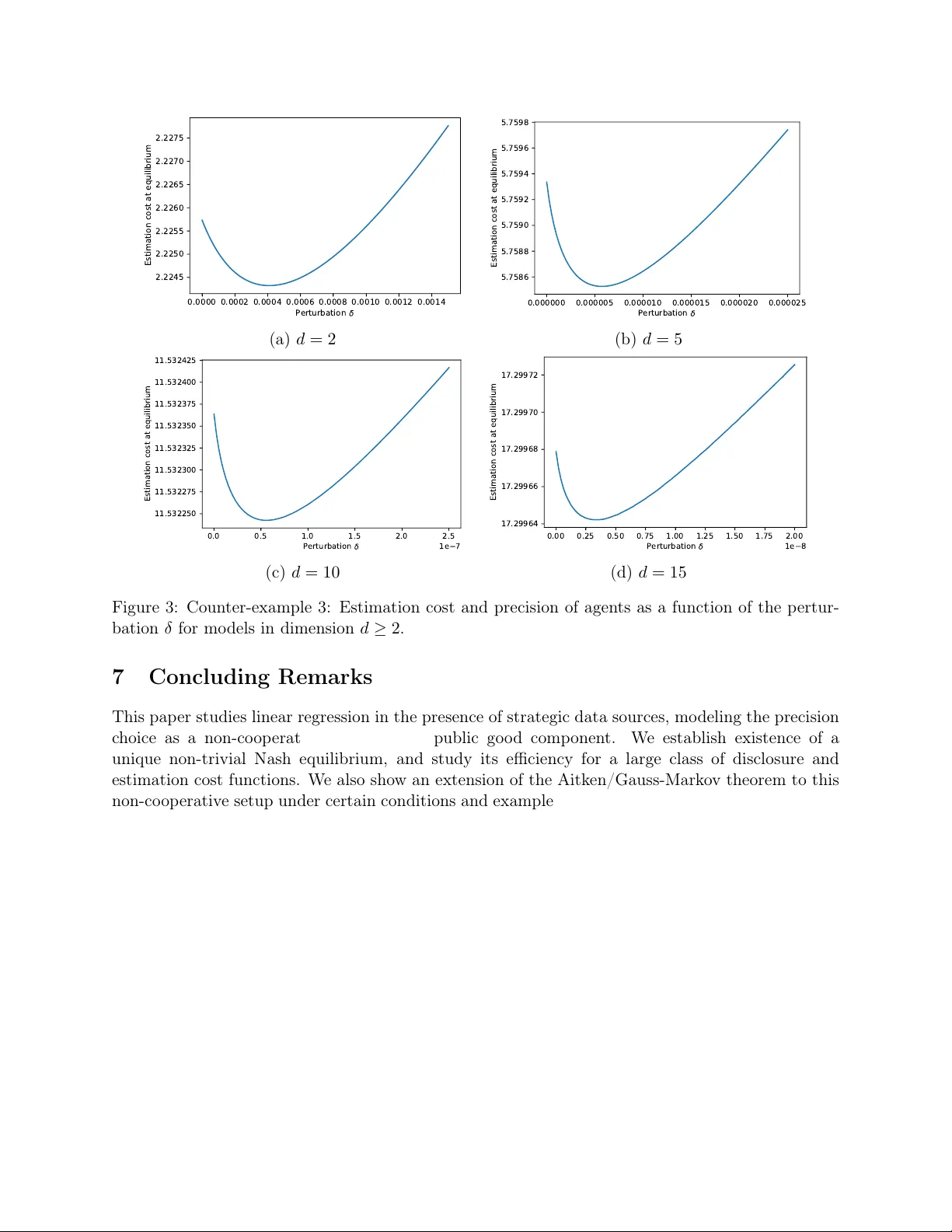

Authors: Nicolas Gast, Stratis Ioannidis, Patrick Loiseau