Learning dynamical systems with particle stochastic approximation EM

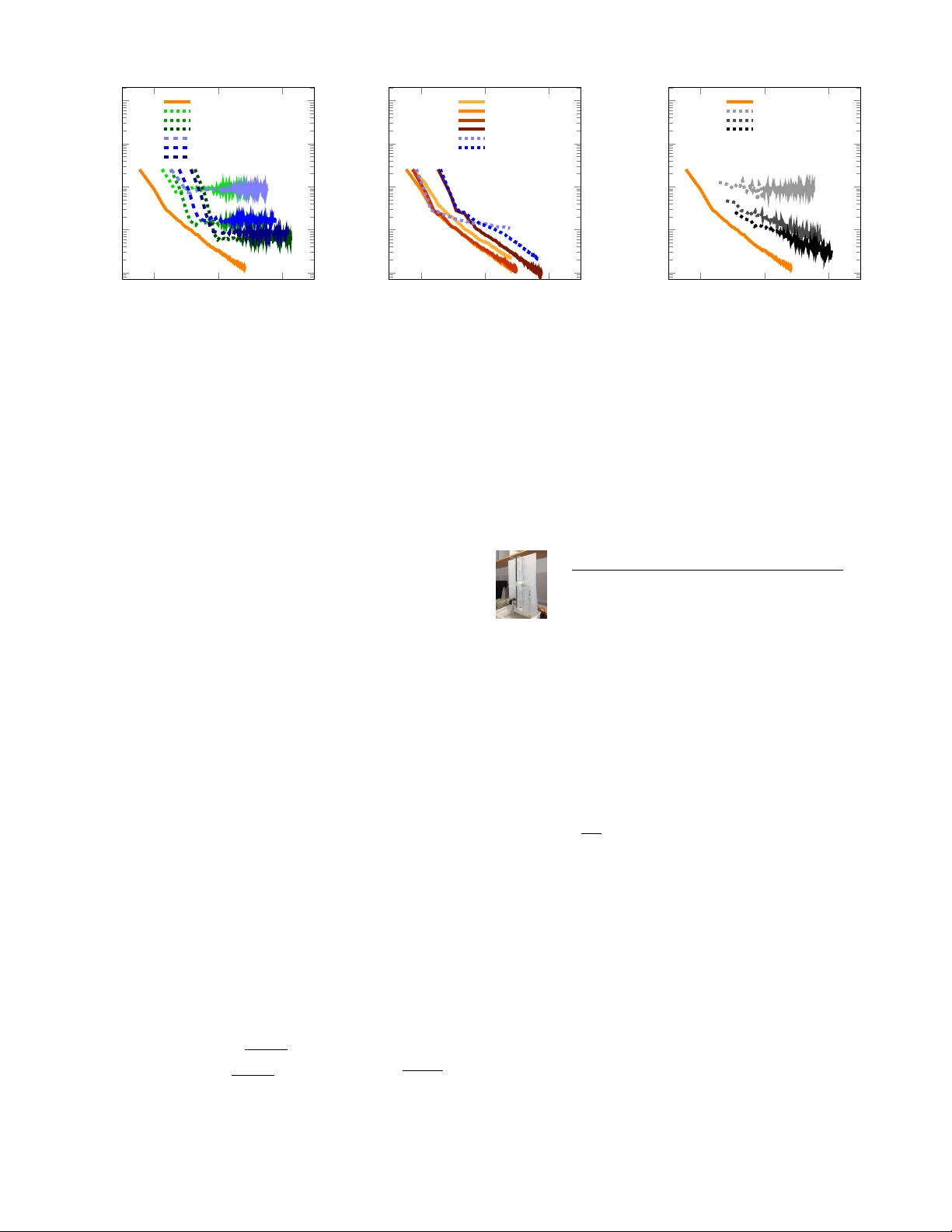

We present the particle stochastic approximation EM (PSAEM) algorithm for learning dynamical systems. The method builds on the EM algorithm, an iterative procedure for maximum likelihood inference in latent variable models. By combining stochastic…

Authors: Andreas Lindholm, Fredrik Lindsten