"The Human Body is a Black Box": Supporting Clinical Decision-Making with Deep Learning

Machine learning technologies are increasingly developed for use in healthcare. While research communities have focused on creating state-of-the-art models, there has been less focus on real-world implementation and the associated challenges to accuracy, fairness, accountability, and transparency that come from actual, situated use. Serious questions remain underexamined regarding how to ethically build models, interpret and explain model output, recognize and account for biases, and minimize disruptions to professional expertise and work cultures. We address this gap in the literature and provide a detailed case study covering the development, implementation, and evaluation of Sepsis Watch, a machine learning-driven tool that assists hospital clinicians in the early diagnosis and treatment of sepsis. We, the team that developed and evaluated the tool, discuss our conceptualization of the tool not as a model deployed in the world but instead as a socio-technical system requiring integration into existing social and professional contexts. Rather than focusing on model interpretability to ensure fair and accountable machine learning, we point toward four key values and practices that should be considered when developing machine learning to support clinical decision-making: rigorously define the problem in context, build relationships with stakeholders, respect professional discretion, and create ongoing feedback loops with stakeholders. Our work has significant implications for future research regarding mechanisms of institutional accountability and considerations for designing machine learning systems. Our work underscores the limits of model interpretability as a solution to ensure transparency, accuracy, and accountability in practice. Instead, our work demonstrates other means and goals to achieve FATML values in design and in practice.

💡 Research Summary

The paper presents a comprehensive case study of “Sepsis Watch,” a machine‑learning‑driven clinical decision‑support tool designed to aid early detection and treatment of sepsis in a real‑world hospital setting. The authors argue that the dominant research focus on building ever more accurate deep‑learning models has neglected the practical challenges of deploying these models in complex clinical environments, where issues of accuracy, fairness, accountability, and transparency (collectively FATML) are intertwined with professional workflows, institutional policies, and cultural norms. Rather than treating the model as a standalone artifact, the authors conceptualize the entire solution as a socio‑technical system that must be co‑designed with the people who will use it.

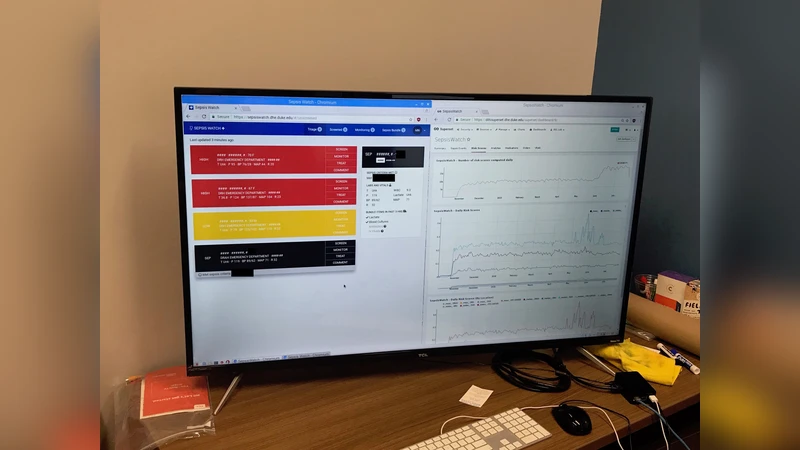

The development process is organized into four interrelated phases. First, the clinical problem is rigorously defined: the goal is to predict sepsis within a six‑hour horizon and to prompt timely therapeutic interventions. This definition emerges from close collaboration with ICU physicians, infectious disease specialists, and nursing staff, ensuring that the target outcome aligns with real clinical priorities. Second, a data pipeline is built that extracts time‑stamped vital signs, laboratory results, medication orders, and other relevant variables from the electronic health record (EHR). Labels are generated according to the Sepsis‑3 criteria and are validated by clinicians to mitigate labeling bias. Third, a deep‑learning architecture, specifically a Long Short‑Term Memory (LSTM) network, is trained on the longitudinal data. However, model performance metrics (AUROC, sensitivity, specificity) are treated as necessary but not sufficient conditions for deployment. Fourth, the model is integrated with hospital IT infrastructure, with real‑time alert generation and a user interface designed for rapid comprehension by nurses and physicians.
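The three metrics named above can be made concrete with a small sketch. This is an illustration only, not the authors' evaluation code: the function names, the toy scores, and the 0.5 threshold are all assumptions chosen for the example.

```python
from typing import Sequence, Tuple


def sensitivity_specificity(
    scores: Sequence[float], labels: Sequence[int], threshold: float
) -> Tuple[float, float]:
    """Sensitivity and specificity when alerting on scores >= threshold.

    labels use 1 for a sepsis case and 0 for a non-case.
    """
    tp = fp = tn = fn = 0
    for score, label in zip(scores, labels):
        alerted = score >= threshold
        if alerted and label == 1:
            tp += 1
        elif alerted and label == 0:
            fp += 1
        elif not alerted and label == 1:
            fn += 1
        else:
            tn += 1
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return sensitivity, specificity


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """AUROC as the probability that a random case outranks a random non-case.

    Ties count as half a win. This pairwise form is quadratic in the number
    of patients, which is fine for a small illustration.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUROC needs at least one case and one non-case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Toy data (hypothetical): six patients, three of whom developed sepsis.
toy_scores = [0.9, 0.8, 0.3, 0.6, 0.2, 0.1]
toy_labels = [1, 1, 1, 0, 0, 0]
sens, spec = sensitivity_specificity(toy_scores, toy_labels, threshold=0.5)
area = auroc(toy_scores, toy_labels)
```

Sensitivity and specificity depend on the chosen alert threshold, while AUROC summarizes ranking quality across all thresholds. That difference is one reason a strong AUROC alone says little about how an alert will behave in a clinical workflow.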

Four guiding values shape the entire effort. 1) Contextual problem definition ensures that the AI’s objective is rooted in the actual clinical decision‑making context rather than an abstract benchmark. 2) Stakeholder partnership brings physicians, nurses, IT staff, and hospital administrators into the design loop from day one, fostering shared ownership and early identification of workflow friction points. 3) Respect for professional discretion positions Sepsis Watch as an advisory system that provides risk scores and actionable suggestions while leaving final therapeutic decisions to the clinician, thereby preserving clinical autonomy and mitigating “automation bias.” 4) Continuous feedback loops create mechanisms for monitoring model drift, alert fatigue, and outcome impact; they include dashboards for performance tracking, regular user surveys, and a governance process for updating thresholds and retraining the model.

In the evaluation, Sepsis Watch achieved a modest improvement over the hospital’s legacy rule‑based alerts: sensitivity increased by 12% and the false‑positive rate decreased by 8%, translating into an average reduction of 2.3 hours in time to first antibiotic administration. Nonetheless, the deployment surfaced non‑technical challenges. Front‑line staff initially experienced alert fatigue, prompting the team to implement adaptive alert throttling, tiered severity levels, and targeted training sessions. Explainability tools such as SHAP values were provided but were deliberately positioned as supplemental information rather than the primary means of establishing trust. Accountability was instead anchored in institutional structures: a dedicated ethics committee reviewed the system’s use, and a data‑governance framework defined responsibilities for data quality, model updates, and adverse event reporting.
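To give a rough sense of how tiered severity levels and alert throttling can reduce repeated notifications, here is a minimal sketch. It does not describe the Sepsis Watch implementation: the tier cutoffs, the 60-minute cooldown, and every name below are hypothetical, and it uses a simple fixed cooldown with an escalation override rather than an adaptive scheme.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

# Hypothetical cutoffs, checked from most to least severe.
SEVERITY_TIERS = [(0.8, "high"), (0.5, "medium")]
TIER_RANK = {"medium": 1, "high": 2}


def severity_tier(risk: float) -> Optional[str]:
    """Map a risk score in [0, 1] to a tier name, or None if below all cutoffs."""
    for cutoff, name in SEVERITY_TIERS:
        if risk >= cutoff:
            return name
    return None


@dataclass
class AlertThrottle:
    """Suppress repeat alerts for a patient during a cooldown window.

    An alert still fires inside the window if the tier escalates,
    for example from "medium" to "high".
    """

    cooldown_minutes: float = 60.0
    _last_alert: Dict[str, Tuple[float, str]] = field(
        default_factory=dict, init=False
    )

    def should_alert(
        self, patient_id: str, risk: float, now_minutes: float
    ) -> Optional[str]:
        """Return the tier to alert on, or None if no alert should fire."""
        tier = severity_tier(risk)
        if tier is None:
            return None
        previous = self._last_alert.get(patient_id)
        if previous is not None:
            last_time, last_tier = previous
            escalated = TIER_RANK[tier] > TIER_RANK[last_tier]
            in_cooldown = (now_minutes - last_time) < self.cooldown_minutes
            if in_cooldown and not escalated:
                return None
        self._last_alert[patient_id] = (now_minutes, tier)
        return tier


# Usage: times are minutes since an arbitrary start, patient IDs are made up.
throttle = AlertThrottle(cooldown_minutes=60)
first = throttle.should_alert("patient-1", 0.55, now_minutes=0)
repeat = throttle.should_alert("patient-1", 0.60, now_minutes=30)
escalation = throttle.should_alert("patient-1", 0.85, now_minutes=40)
```

The design choice worth noting is the escalation override: suppressing duplicates addresses alert fatigue, but a worsening patient should not be silenced by a cooldown that was started at a lower severity.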

The authors conclude that model interpretability alone cannot guarantee FATML outcomes in practice. Achieving fairness, accuracy, transparency, and accountability requires an integrated design approach that aligns technical solutions with organizational processes, professional norms, and policy mechanisms. They suggest future work should explore scaling the socio‑technical framework to other high‑risk conditions, standardizing multi‑institutional data sharing, and formalizing legal and ethical liability structures for AI‑enabled clinical tools. This study thus shifts the conversation from “how to make the black box more explainable” to “how to embed AI responsibly within the fabric of clinical practice.”
