The Power and Pitfalls of Transparent Privacy Policies in Social Networking Service Platforms

Users disclose ever-increasing amounts of personal data on Social Network Service (SNS) platforms. Unless an SNS’s policies are privacy friendly, users who ignore those policies are left vulnerable to privacy risks. Designers and regulators have pushed for shorter, simpler, and more prominent privacy policies; however, evidence that transparent policies increase informed consent is lacking. To test whether they do, we conducted an online experiment with 214 regular Facebook users who were asked to join a fictitious SNS. We experimentally manipulated the privacy-friendliness of the SNS’s policy and varied threats of secondary data use and data visibility. Half of our participants incorrectly recalled even the most formally “perfect” and easy-to-read privacy policies. Mostly, users recalled policies as more privacy friendly than they were. Moreover, participants self-censored their disclosures when aware that visibility threats were present, but were less sensitive to threats of secondary data use. We present design recommendations to increase informed consent.

💡 Research Summary

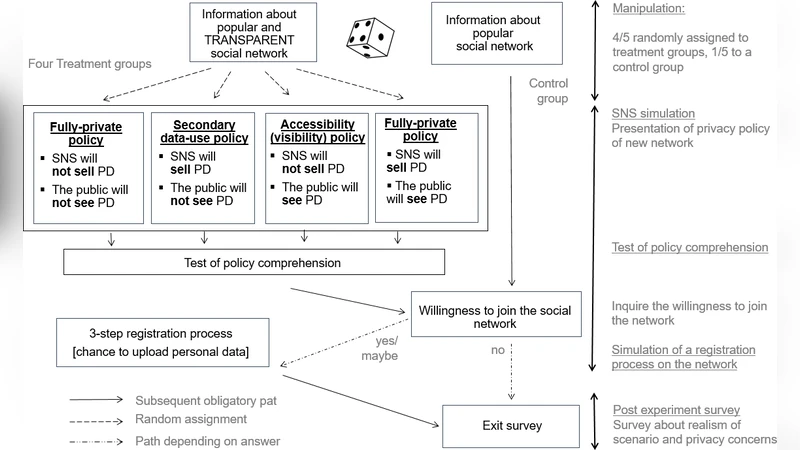

The paper investigates whether making privacy policies on social networking services (SNS) more transparent, concise, and prominent actually improves users’ informed consent and influences their self‑disclosure behavior. The authors note that while regulators and designers have advocated for shorter, simpler policies, empirical evidence supporting the claim that such policies lead to better user understanding is scarce. To fill this gap, they conducted a controlled online experiment with 214 regular Facebook users who were invited to join a fictitious SNS.

The experimental design employed a 2 × 2 factorial manipulation. The first factor was the “friendliness” of the privacy policy: a “perfectly friendly” version that was rewritten for maximum readability (short sentences, bolded key clauses, plain language) versus a “standard” version that resembled the original Facebook policy. The second factor introduced two distinct threat cues: (1) a visibility threat (“Your posts may be visible to people beyond your friends”) and (2) a secondary‑use threat (“Your data may be sold to advertisers”). Participants read the assigned policy, then completed a recall test, rated how privacy‑friendly they perceived the policy to be, and finally filled out a mock profile where they chose the audience for a sample post (e.g., friends‑only, public).
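The assignment step of such a design can be sketched in a few lines of Python. This is a minimal illustration, not the authors' procedure: the summary does not say how participants were allocated, so the balanced round-robin allocation, the factor labels, the `assign_conditions` helper, and the fixed seed are all assumptions made for this example.

```python
import random
from collections import Counter
from itertools import product

# Hypothetical labels for the two factors described in the summary.
POLICY_VERSIONS = ("friendly", "standard")
THREAT_CUES = ("visibility", "secondary_use")


def assign_conditions(participant_ids, seed=0):
    """Randomly assign participants to the 2 x 2 cells with balanced cell sizes.

    Assumption: balanced allocation. The source does not describe the
    actual allocation method used in the study.
    """
    cells = list(product(POLICY_VERSIONS, THREAT_CUES))
    rng = random.Random(seed)  # fixed seed only so the sketch is reproducible
    ids = list(participant_ids)
    rng.shuffle(ids)
    # Deal the shuffled participants round-robin across the four cells,
    # so cell sizes differ by at most one.
    return {pid: cells[i % len(cells)] for i, pid in enumerate(ids)}


# 214 participants, matching the sample size reported in the abstract.
assignment = assign_conditions(range(214))
cell_sizes = Counter(assignment.values())
```

With 214 participants and four cells, this scheme cannot produce perfectly equal groups, so two cells receive one participant more than the other two.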

Key findings are threefold. First, even the most readable, “perfectly friendly” policy was recalled correctly by only about half of the participants, which suggests that formal readability alone does not guarantee retention of policy content. Second, participants who misremembered mostly recalled the policy as more privacy‑friendly than it was, indicating a positivity bias: users tend to assume they are better protected than the policy actually provides. Third, participants who were aware of a visibility threat self‑censored, selecting more restrictive audience settings for their mock post. In contrast, the secondary‑use threat had a weaker effect on disclosure choices, implying that users are less sensitive to abstract risks of data resale than to concrete risks of unwanted exposure.

The authors discuss the implications of these results for policy design and regulation. They argue that policy reform should move beyond brevity and clarity to incorporate memory‑enhancing techniques such as repeated emphasis of key risks, visual icons, and interactive elements (e.g., check‑lists or short quizzes) that force users to actively engage with the most critical clauses. Highlighting visibility threats appears effective at prompting self‑censorship, supporting the adoption of privacy‑by‑default settings that limit public exposure unless the user explicitly opts in. Conversely, the low impact of secondary‑use warnings suggests that abstract statements about data selling need to be grounded in concrete scenarios or case studies to raise perceived risk.

Limitations are acknowledged: the experiment took place in a simulated environment that may not capture the full social and contextual pressures of real‑world SNS use; the sample consisted mainly of Facebook users, limiting generalizability to other platforms; and the study measured only immediate behavioral responses, not long‑term changes in privacy attitudes or practices.

In conclusion, the paper demonstrates that transparent, easy‑to‑read privacy policies are insufficient on their own to achieve genuine informed consent. Effective consent requires policies that are memorable, that clearly flag both direct visibility risks and indirect secondary‑use risks, and that are coupled with design interventions that encourage active user engagement. The authors propose concrete design recommendations: (1) use visual cues and repeated phrasing for high‑impact clauses, (2) embed interactive verification steps (e.g., short quizzes) before users can proceed, (3) set default sharing options to the most privacy‑protective level, (4) present secondary‑use threats through vivid, scenario‑based narratives, and (5) provide periodic policy summaries that prompt users to reconfirm their understanding. Implementing these measures could bridge the gap between policy transparency and actual user awareness, thereby strengthening the foundation of informed consent on social networking platforms.
