A Multiversion Programming Inspired Approach to Detecting Audio Adversarial Examples

Adversarial examples (AEs) are crafted by adding human-imperceptible perturbations to inputs such that a machine-learning-based classifier labels them incorrectly. They have become a severe threat to the trustworthiness of machine learning. While AEs in the image domain have been well studied, audio AEs have received less attention. Recently, multiple techniques have been proposed to generate audio AEs, which makes countermeasures against them urgent. Our experiments show that, given an AE, the transcriptions produced by different Automatic Speech Recognition (ASR) systems differ significantly, because these systems use different architectures, parameters, and training datasets. Inspired by Multiversion Programming, we propose a novel audio AE detection approach that uses multiple off-the-shelf ASR systems to determine whether an audio input is an AE. The evaluation shows that the detector achieves accuracies above 98.6%.

💡 Research Summary

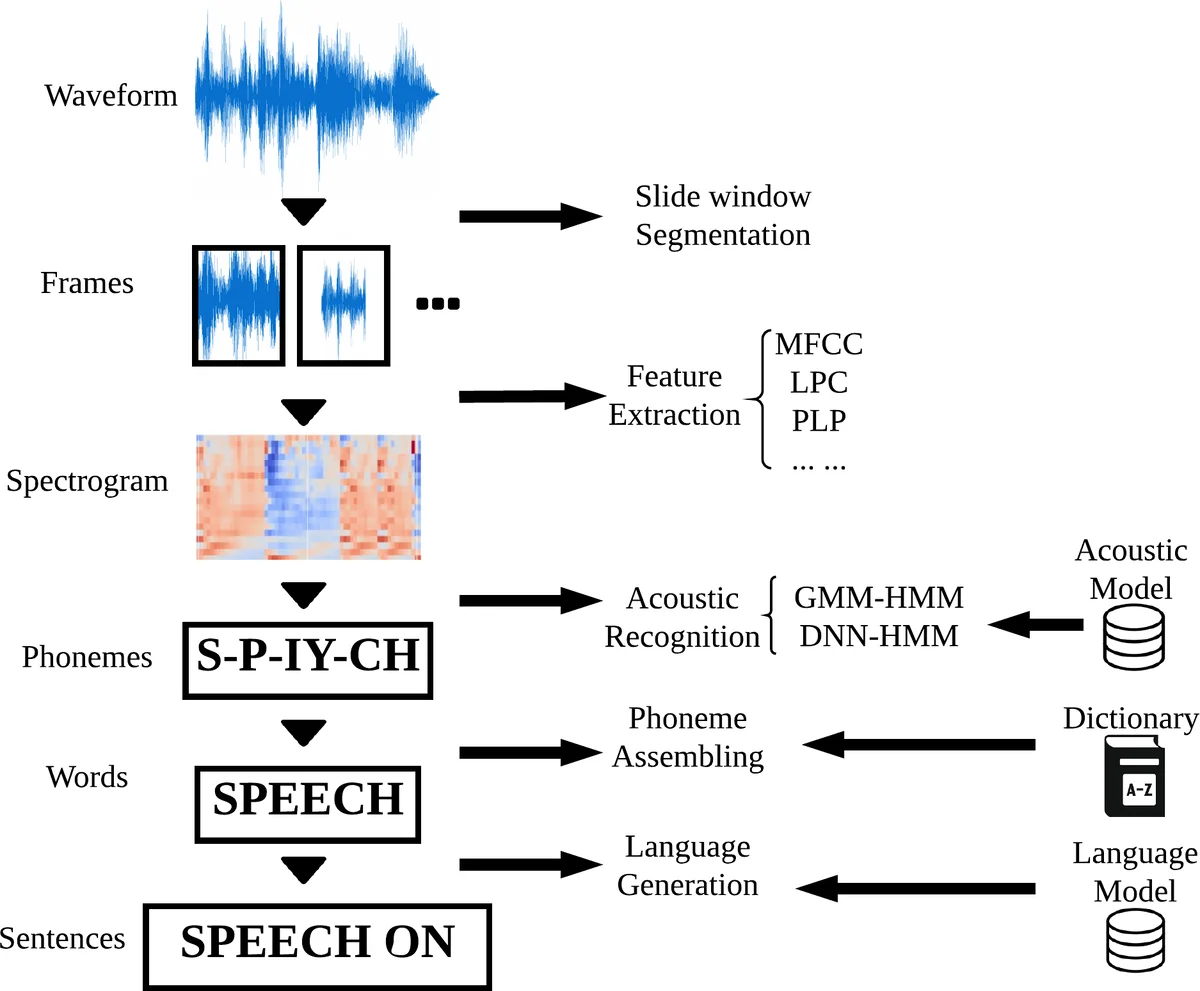

The paper addresses the emerging threat of audio adversarial examples (AEs) that can cause automatic speech recognition (ASR) systems to produce incorrect transcriptions. While adversarial attacks in the image domain have been extensively studied and are known to be highly transferable across different models, the authors observe that audio AEs exhibit poor transferability: the same adversarial audio often fails to fool multiple heterogeneous ASR systems. This observation forms the core hypothesis of the work.

Inspired by the software‑engineering technique of Multi‑Version Programming (MVP), the authors propose a detection framework called MVP‑EARS. The idea is simple: run several off‑the‑shelf ASR engines (e.g., DeepSpeech, Kaldi, wav2vec, or Google Speech‑to‑Text) in parallel on the same input audio, collect their transcriptions, and compute pairwise similarity scores between them (e.g., based on Levenshtein distance, BLEU, or Jaccard similarity). Benign speech typically yields closely matching transcriptions across engines and therefore high similarity scores, whereas adversarial audio leads to divergent transcriptions and low similarity scores. These scores are assembled into a feature vector that is fed to a binary classifier (e.g., Random Forest, SVM, or LightGBM), which decides whether the input is adversarial.
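To make the feature-extraction step concrete, here is a minimal Python sketch. It assumes the transcriptions have already been obtained from the ASR engines (no real ASR API is called) and uses word-level normalized Levenshtein similarity and Jaccard similarity as two of the metrics mentioned above; BLEU is omitted for brevity. The function names (`levenshtein_similarity`, `jaccard_similarity`, `similarity_features`) are hypothetical, and the paper's exact normalization and feature ordering may differ.

```python
from itertools import combinations


def levenshtein(a: list[str], b: list[str]) -> int:
    """Word-level edit distance between two token sequences."""
    prev = list(range(len(b) + 1))
    for i, tok_a in enumerate(a, start=1):
        curr = [i]
        for j, tok_b in enumerate(b, start=1):
            cost = 0 if tok_a == tok_b else 1
            curr.append(min(prev[j] + 1,          # delete tok_a
                            curr[j - 1] + 1,      # insert tok_b
                            prev[j - 1] + cost))  # substitute (or match)
        prev = curr
    return prev[-1]


def levenshtein_similarity(s: str, t: str) -> float:
    """1 - normalized word edit distance, in [0, 1] (hypothetical metric choice)."""
    a, b = s.lower().split(), t.lower().split()
    longest = max(len(a), len(b))
    if longest == 0:
        return 1.0  # two empty transcriptions agree trivially
    return 1.0 - levenshtein(a, b) / longest


def jaccard_similarity(s: str, t: str) -> float:
    """Jaccard index of the two transcriptions' word sets."""
    a, b = set(s.lower().split()), set(t.lower().split())
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def similarity_features(transcripts: list[str]) -> list[float]:
    """Pairwise similarity scores over all ASR pairs, in a fixed pair order.

    Hypothetical helper: the resulting vector is what a binary classifier
    would consume; the paper's exact feature layout may differ.
    """
    features = []
    for s, t in combinations(transcripts, 2):
        features.append(levenshtein_similarity(s, t))
        features.append(jaccard_similarity(s, t))
    return features
```

For three engines that agree on "turn on the lights" apart from one plural, `similarity_features` returns `[1.0, 1.0, 0.75, 0.6, 0.75, 0.6]`; for divergent transcriptions such as "open the front door" versus "turn on the lights", as one would expect from an audio AE, the scores for that pair drop to 0.0 (edit similarity) and 1/7 (Jaccard).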

A key advantage of this approach is that the classifier does not need to be trained on real adversarial audio. The authors build a large benchmark dataset comprising over 10,000 audio AEs generated by state‑of‑the‑art white‑box (Carlini et al.) and black‑box (Taori et al.) attacks, together with benign audio samples. For each sample they record the transcriptions from five different ASR systems, providing a rich resource for the community. To prepare the classifier for future “transferable” AEs—audio that could simultaneously fool multiple ASR engines—the authors synthesize “hypothetical transferable AE” vectors by artificially setting high similarity scores across ASRs. Training on a mixture of benign data and these synthetic vectors enables the detector to remain effective even if transferable AEs appear later.
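The summary does not spell out how the hypothetical transferable AE vectors are constructed, so the Python sketch below is only one possible interpretation. It rests on an assumption of ours: a hypothetical transferable AE fools a subset of at least two (but not all) ASRs into agreeing, so similarity is high within that subset and low for any pair involving an unfooled ASR. The score ranges, the benign profile, and the helper names (`hypothetical_transferable_ae`, `build_training_set`) are illustrative and not taken from the paper.

```python
import random
from itertools import combinations


def hypothetical_transferable_ae(num_asrs: int, fooled: set[int],
                                 rng: random.Random) -> list[float]:
    """Synthesize one pairwise-similarity vector for a hypothetical transferable AE.

    ASSUMPTION (not from the paper): ASRs in `fooled` emit the same target
    phrase, so pairs inside that subset score high; any pair that involves a
    non-fooled ASR scores low.
    """
    vector = []
    for i, j in combinations(range(num_asrs), 2):
        if i in fooled and j in fooled:
            vector.append(rng.uniform(0.9, 1.0))  # fooled ASRs agree
        else:
            vector.append(rng.uniform(0.0, 0.4))  # an unfooled ASR disagrees
    return vector


def build_training_set(n_per_class: int, num_asrs: int = 5,
                       seed: int = 0) -> tuple[list[list[float]], list[int]]:
    """Mix benign vectors (label 0) with synthetic transferable-AE vectors (label 1)."""
    rng = random.Random(seed)  # seeded for reproducibility
    num_pairs = num_asrs * (num_asrs - 1) // 2
    X, y = [], []
    for _ in range(n_per_class):
        # Assumed benign profile: every ASR pair agrees closely.
        X.append([rng.uniform(0.8, 1.0) for _ in range(num_pairs)])
        y.append(0)
        # Fool a random proper subset of at least two ASRs.
        k = rng.randint(2, num_asrs - 1)
        fooled = set(rng.sample(range(num_asrs), k))
        X.append(hypothetical_transferable_ae(num_asrs, fooled, rng))
        y.append(1)
    return X, y
```

The resulting `X` and `y` could then be passed to any binary classifier, for example scikit-learn's `RandomForestClassifier`, instead of (or alongside) features extracted from real adversarial audio.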

Experimental results show that MVP‑EARS achieves detection accuracies of up to 99.88%, with precision and recall above 99.8% on the held‑out test set. The system maintains a detection rate above 95% on newly generated AEs that were not seen during training, demonstrating strong generalization. The authors also analyze the computational overhead: running multiple ASRs increases latency and, for cloud‑based services, adds API costs, but they argue that the trade‑off is justified by the near‑perfect detection performance.
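For reference, these metrics follow the standard definitions when "adversarial" is treated as the positive class; the detection rate on unseen AEs typically corresponds to recall on adversarial inputs. The small Python helper below (hypothetical name `detection_metrics`) makes the arithmetic explicit; the labels in the example are illustrative, not the paper's data.

```python
def detection_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Accuracy, precision and recall, with label 1 = adversarial (positive class)."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # AEs caught
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # benign passed
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false alarms
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # AEs missed
    total = tp + tn + fp + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }
```

For `y_true = [1, 1, 1, 1, 0, 0, 0, 0]` and `y_pred = [1, 1, 1, 0, 0, 0, 0, 0]` the helper gives accuracy 0.875, precision 1.0, and recall 0.75: one missed AE lowers recall and accuracy, while the absence of false alarms keeps precision at 1.0.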

The paper discusses limitations and future work. The main vulnerability is the potential emergence of truly transferable audio AEs that can deceive all employed ASR engines; while none have been demonstrated yet, the authors acknowledge this risk and propose proactive training with synthetic data as a mitigation strategy. They also suggest exploring lightweight local ASR models to reduce latency, extending the framework to multimodal inputs (audio‑visual commands), and establishing an open benchmark for continuous evaluation of both attacks and defenses.

In summary, the authors successfully adapt the MVP concept to the audio security domain, providing a practical, high‑accuracy detection method for current audio adversarial examples and a forward‑looking strategy to stay ahead of future, more sophisticated attacks. This work constitutes a significant step toward securing voice‑controlled AI services against adversarial manipulation.
