Join Query Optimization with Deep Reinforcement Learning Algorithms

Join query optimization is a complex task and is central to the performance of query processing. In fact it belongs to the class of NP-hard problems. Traditional query optimizers use dynamic programming (DP) methods combined with a set of rules and restrictions to avoid exhaustive enumeration of all possible join orders. However, DP methods are very resource intensive. Moreover, given simplifying assumptions of attribute independence, traditional query optimizers rely on erroneous cost estimations, which can lead to suboptimal query plans. Recent success of deep reinforcement learning (DRL) creates new opportunities for the field of query optimization to tackle the above-mentioned problems. In this paper, we present our DRL-based Fully Observed Optimizer (FOOP) which is a generic query optimization framework that enables plugging in different machine learning algorithms. The main idea of FOOP is to use a data-adaptive learning query optimizer that avoids exhaustive enumerations of join orders and is thus significantly faster than traditional approaches based on dynamic programming. In particular, we evaluate various DRL-algorithms and show that Proximal Policy Optimization significantly outperforms Q-learning based algorithms. Finally we demonstrate how ensemble learning techniques combined with DRL can further improve the query optimizer.

💡 Research Summary

The paper tackles the long‑standing problem of join order optimization, which is known to be NP‑hard and traditionally addressed by dynamic programming (DP) combined with heuristic pruning and cost‑based estimation. While DP‑based optimizers such as those in System R, Volcano, and PostgreSQL can find good plans, they suffer from two major drawbacks: (1) exhaustive enumeration of join permutations quickly becomes infeasible for queries with many tables, leading to aggressive search space reductions (e.g., left‑deep trees only) that may miss the true optimum; and (2) the underlying cost model relies on cardinality estimates that assume attribute independence and uniform data distributions, which are often violated in real‑world workloads, causing systematic estimation errors.

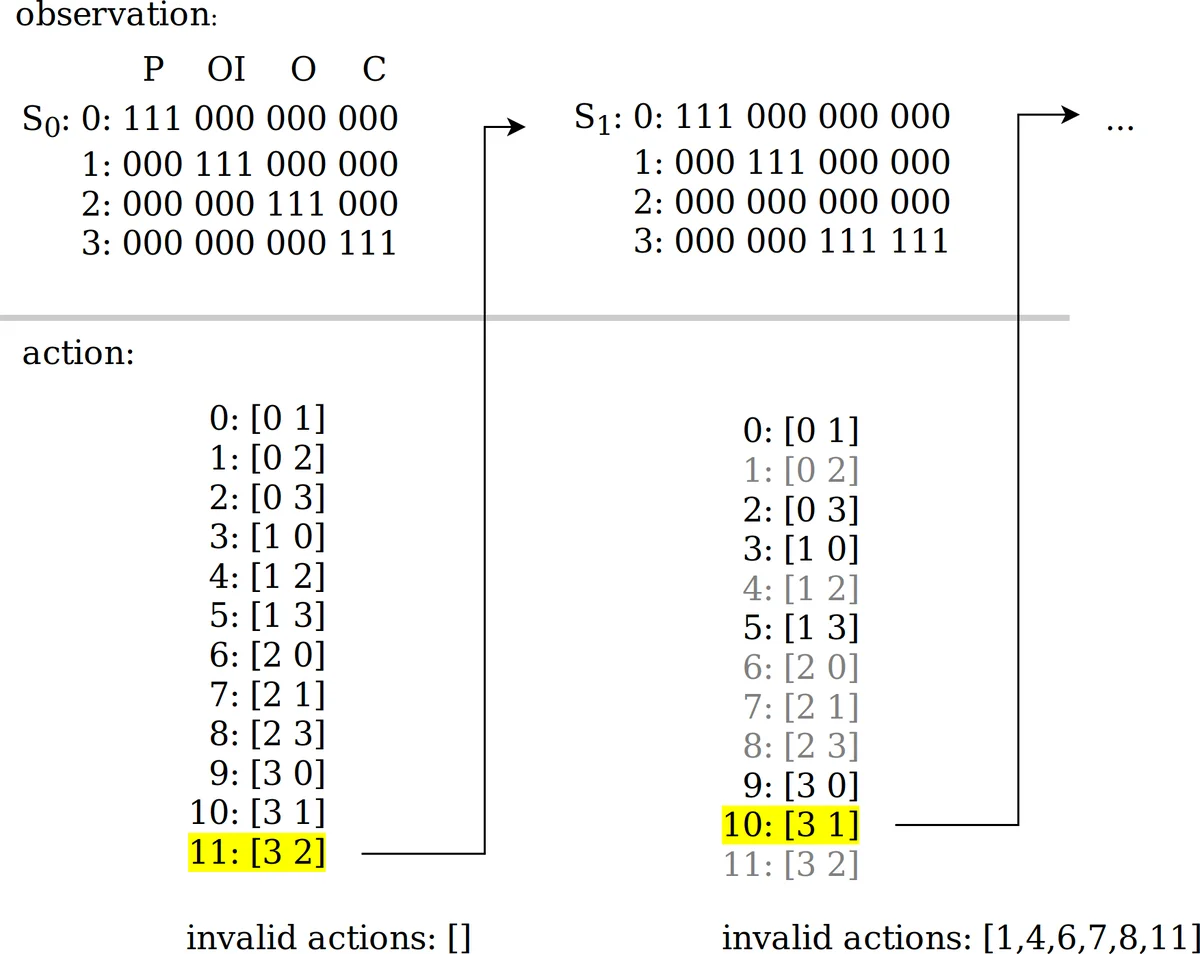

To overcome these limitations, the authors introduce FOOP (Fully Observed Optimizer), a generic framework that casts join order selection as a fully observable Markov Decision Process (MDP). In FOOP, every relation and every intermediate result is part of the observable state, actions correspond to selecting the next relation to join, and the reward is defined as the inverse of the final plan cost (or actual execution time). By exposing the full state to the reinforcement learning (RL) agent, the optimizer can learn policies that directly reflect the true performance impact of each decision, rather than relying on potentially inaccurate cost estimates.

FOOP is deliberately placed in the application layer, making it DBMS‑independent. The authors integrate it with PostgreSQL’s planner and cost model, but the framework could be attached to any relational engine. The state representation includes table cardinalities, selectivities, current partial join tree structure, and estimated sizes of intermediate results. The action space consists of the remaining tables that have not yet been joined. After a sequence of actions terminates (i.e., all tables are joined), the final plan is evaluated by the native cost model, and the reward is fed back to the RL algorithm.

The core contribution is a systematic comparison of three deep‑RL algorithms within the same FOOP environment: (1) a vanilla Deep Q‑Network (DQN), (2) Double DQN with Prioritized Experience Replay, and (3) Proximal Policy Optimization (PPO). DQN is a value‑based method that learns a Q‑function approximating the expected return of each state‑action pair. Double DQN mitigates the over‑estimation bias of DQN, while Prioritized Replay focuses learning on high‑TD‑error transitions, accelerating convergence. PPO, by contrast, is a policy‑gradient method that directly optimizes a stochastic policy using a clipped surrogate objective, offering better sample efficiency and more stable updates.

Experimental evaluation uses a suite of benchmark queries of varying size (3‑way to 8‑way joins) and includes different physical join algorithms (nested‑loop, hash, sort‑merge). The results show that PPO consistently outperforms the Q‑learning variants: it reaches lower final plan costs (5‑10 % improvement over PostgreSQL’s DP planner) and does so with dramatically reduced optimization time (roughly 3‑5× faster). DQN and Double DQN achieve modest gains but exhibit higher variance and slower convergence, especially on larger join graphs where the state‑action space explodes.

Beyond single‑algorithm performance, the authors explore an ensemble approach: multiple RL agents (e.g., one PPO, one Double DQN) are trained in parallel, and a meta‑learner selects the best candidate plan from the set of proposals. This ensemble yields additional cost reductions (2‑4 % over the best single agent) and improves robustness, particularly on queries with complex join topologies or heterogeneous join operator choices.

The paper also discusses practical considerations and limitations. FOOP currently relies on offline training using historical query logs; online learning would introduce additional overhead and stability concerns that need further study. The reward function still depends on the underlying cost model, so any systematic bias in that model can affect learning unless the reward is calibrated with actual execution feedback. Moreover, the state representation, while comprehensive, may become high‑dimensional for very large schemas, suggesting a need for feature‑selection or representation learning techniques.

In summary, the authors present a novel, modular framework that brings modern deep reinforcement learning to the heart of join order optimization. By treating the problem as a fully observable MDP, FOOP enables the direct comparison of state‑of‑the‑art RL algorithms, demonstrates the superiority of PPO over traditional Q‑learning approaches, and shows that ensemble techniques can further enhance plan quality. The work provides strong empirical evidence that DRL can reduce both optimization latency and plan cost, paving the way for future integration of learning‑based optimizers into production database systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment