Coulomb Autoencoders

Learning the true density in high-dimensional feature spaces is a well-known problem in machine learning. In this work, we consider generative autoencoders based on maximum-mean discrepancy (MMD) and provide theoretical insights. In particular, (i) we prove that MMD coupled with Coulomb kernels has optimal convergence properties, which are similar to convex functionals, thus improving the training of autoencoders, and (ii) we provide a probabilistic bound on the generalization performance, highlighting some fundamental conditions to achieve better generalization. We validate the theory on synthetic examples and on the popular dataset of celebrities’ faces, showing that our model, called Coulomb autoencoders, outperform the state-of-the-art.

💡 Research Summary

The paper “Coulomb Autoencoders” tackles the long‑standing problem of learning true data densities in high‑dimensional spaces by improving maximum‑mean‑discrepancy (MMD) based autoencoders. Traditional variational autoencoders (VAEs) rely on the Kullback‑Leibler (KL) divergence and require stochastic encoders, which can cause conflicts during reconstruction. In contrast, MMD‑based Wasserstein autoencoders (WAEs) allow deterministic encoders, offering more stable training, but the MMD objective is generally non‑convex and prone to local minima.

The authors propose to replace the usual Gaussian or inverse‑multiquadratic kernels with a Coulomb kernel, i.e., a kernel that satisfies Poisson’s equation (-\nabla^{2}k(z,z’)=\lambda\delta(z-z’)). In dimensions (h>2) this yields a kernel proportional to (|z-z’|^{-(h-2)}); in two dimensions it reduces to a logarithmic form. This choice gives the MMD term a physical interpretation: samples from the prior (p_Z) act as positive charges, while samples generated by the encoder act as negative charges, and the kernel induces global attraction/repulsion forces analogous to electrostatics.

Theoretical contributions

-

Convergence properties (Theorem 1). Under mild assumptions (sample size (N>h), distinct prior samples, and a Poisson‑satisfying kernel), the empirical MMD functional is harmonic everywhere except at the data points. By the maximum principle for harmonic functions, every local extremum is global, and the set of saddle points has Lebesgue measure zero. Consequently, gradient‑based local search methods almost surely converge to a global minimum. The global minima are precisely the configurations where the encoder’s latent sample set (D_{f_z}) equals the prior sample set (D_z); thus the encoder perfectly matches the prior distribution.

-

Generalization bound (Theorem 2). Assuming the reconstruction error (|x-g(f(x))|^{2}) can be uniformly bounded by a small constant (\xi), the authors derive a high‑probability bound on the deviation between the empirical loss (\hat L) and its expectation (L). The bound consists of four exponential terms that vanish as the number of samples (N) grows. The first term depends on (\xi) and dominates the bound, highlighting that controlling reconstruction error—by limiting network capacity or improving architecture—is crucial for good generalization.

Empirical validation

- Synthetic 1‑D and 2‑D particle experiments illustrate that Gaussian and IMQ kernels generate multiple local minima, whereas the Coulomb kernel yields a unique global minimum, confirming the theoretical predictions.

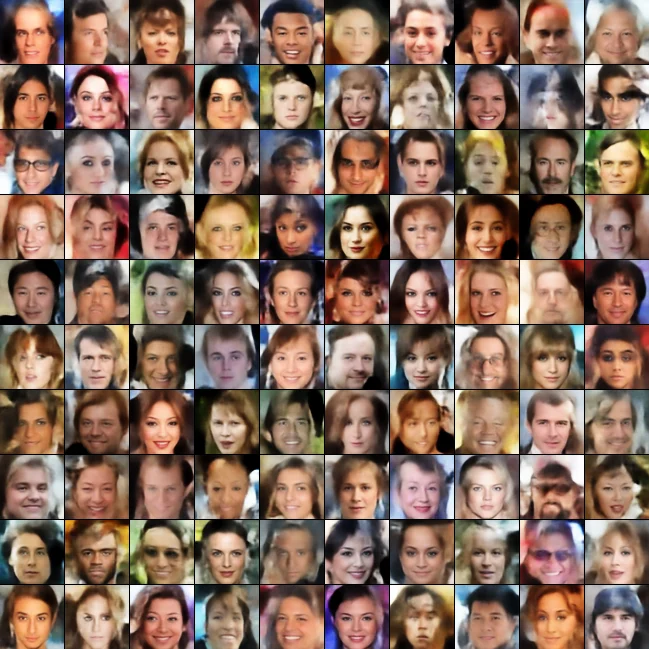

- CelebA face generation: Using a 5‑layer convolutional encoder/decoder with a 32‑dimensional latent space, the Coulomb autoencoder achieves lower Fréchet Inception Distance (FID ≈ 23.1) and higher Inception Score (IS ≈ 3.9) compared to standard WAEs, VAEs, and several GAN variants. Training curves show rapid loss reduction and stable convergence, with reduced sensitivity to the regularization weight (\lambda).

Limitations and future work

The convergence proof holds in function space; neural‑network parameter spaces remain non‑convex, so local minima could still arise in practice, though experiments suggest they are rare. The impact of the kernel hyper‑parameter (\lambda) and of high latent dimensions (>100) on numerical stability is not fully explored. Future directions include investigating other physics‑inspired kernels (e.g., gravitational), extending the framework to conditional generation, and deeper analysis of saddle‑point geometry.

Conclusion

By introducing a Coulomb kernel into the MMD term, the authors provide a principled objective that enjoys global‑optimality guarantees and a clear probabilistic generalization bound. Empirical results on both synthetic and real‑world image data demonstrate superior sample quality and training stability over existing MMD‑based autoencoders and other generative models. This work represents a significant step toward reliable, high‑dimensional density estimation and generative modeling.

Comments & Academic Discussion

Loading comments...

Leave a Comment