Forgery-Resistant Touch-based Authentication on Mobile Devices

Mobile devices store a diverse set of private user data and have gradually become a hub to control users’ other personal Internet-of-Things devices. Access control on mobile devices is therefore highly important. The widely accepted solution is to protect access by asking for a password. However, password authentication is tedious, e.g., a user needs to input a password every time she wants to use the device. Moreover, existing biometrics such as face, fingerprint, and touch behaviors are vulnerable to forgery attacks. We propose a new touch-based biometric authentication system that is passive and secure against forgery attacks. In our touch-based authentication, a user’s touch behaviors are a function of some random “secret”. The user can subconsciously know the secret while touching the device’s screen. However, an attacker cannot know the secret at the time of attack, which makes it challenging to perform forgery attacks even if the attacker has already obtained the user’s touch behaviors. We evaluate our touch-based authentication system by collecting data from 25 subjects. Results are promising: the random secrets do not influence user experience and, for targeted forgery attacks, our system achieves 0.18 smaller Equal Error Rates (EERs) than previous touch-based authentication.

💡 Research Summary

The paper addresses the growing need for secure yet usable authentication on mobile devices, where traditional passwords are cumbersome and conventional biometrics (face, fingerprint, voice) are vulnerable to forgery. Recent work has shown that users’ touch interactions can serve as a behavioral biometric, but existing touch‑based schemes rely on a single, static screen setting, making them susceptible to replay attacks in which an adversary records a victim’s strokes and replays them using a robot.

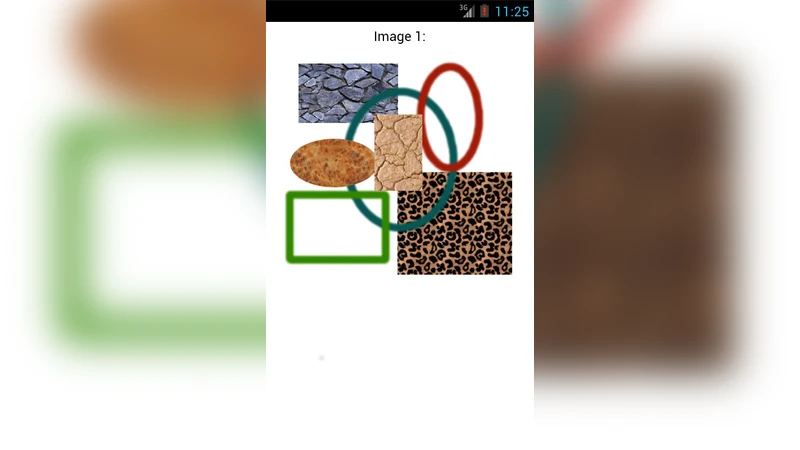

To overcome this weakness, the authors propose an adaptive, continuous authentication system that treats the screen’s geometric transformation (e.g., X‑axis or Y‑axis distortion) as a random “secret”. The operating system applies a chosen distortion to raw touch coordinates before they reach applications. Because the first touch point of any interaction is not transformed, users must unconsciously adjust the subsequent motion of their finger to achieve the intended on‑screen effect. Consequently, the same user produces different raw touch patterns under different settings, while the perceived application behavior remains consistent.
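The coordinate transformation described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: it assumes the distortion is a multiplicative scaling of each point's displacement from the first touch point, and the names `distort_stroke`, `required_raw_stroke`, `ax`, and `ay` are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def distort_stroke(raw: List[Point], ax: float, ay: float) -> List[Point]:
    """Map raw touch points to the coordinates an application would receive.

    The first point passes through unchanged. Every later point has its
    displacement from the first point scaled by (ax, ay). The scaling model
    is an assumption made for illustration only.
    """
    if not raw:
        return []
    x0, y0 = raw[0]
    return [(x0 + ax * (x - x0), y0 + ay * (y - y0)) for x, y in raw]


def required_raw_stroke(intended: List[Point], ax: float, ay: float) -> List[Point]:
    """Inverse map: the raw finger path needed to produce `intended` on screen.

    This models the adjustment the user makes subconsciously under a setting.
    """
    if not intended:
        return []
    x0, y0 = intended[0]
    return [(x0 + (x - x0) / ax, y0 + (y - y0) / ay) for x, y in intended]
```

Under this model, a user who wants a 50-pixel horizontal swipe with `ax = 0.8` has to move the finger about 62.5 pixels, so the raw stroke differs from the one the same user would produce with `ax = 1.0` even though the on-screen result is identical.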

Two key properties are identified. Stability: the inter‑user variation within a single setting exceeds the intra‑user variation across settings, ensuring that users can still be distinguished. Sensitivity: a user’s touch patterns differ enough across settings to be separable in feature space, allowing a classifier to detect mismatched settings. The system selects a set of screen settings that satisfy both properties and, during a short registration phase (approximately two minutes of data per setting), trains a distinct classifier for each setting.

During authentication, the system randomly selects one of the pre‑defined settings at each time interval and applies the corresponding classifier to the incoming touch data. An attacker who has harvested the victim’s touch strokes, whether from the same or different settings, cannot know which setting is active at attack time and therefore cannot reliably replay the strokes. The threat model assumes the attacker can analyze the authentication code offline and can use a commercial programmable robot (e.g., a Lego robot) to replay touch events, but cannot obtain runtime screen‑setting information without privileged access.
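The authentication loop described here can be sketched as follows. This is a hedged illustration under stated assumptions: the class `SettingAuthenticator`, its methods, and the score threshold are hypothetical, and each per-setting classifier is modeled as a plain function that returns a score where higher means "more likely the legitimate user".

```python
import secrets
from typing import Callable, Dict, Sequence

Features = Sequence[float]
Classifier = Callable[[Features], float]  # hypothetical: higher score = more genuine


class SettingAuthenticator:
    """Pick a random screen setting per interval and score strokes against it."""

    def __init__(self, classifiers: Dict[str, Classifier], threshold: float):
        if not classifiers:
            raise ValueError("at least one screen setting is required")
        self._classifiers = dict(classifiers)
        self._settings = sorted(self._classifiers)
        self._threshold = threshold
        self.current = secrets.choice(self._settings)

    def next_interval(self) -> str:
        """Draw the setting for the next time interval from a CSPRNG."""
        self.current = secrets.choice(self._settings)
        return self.current

    def verify(self, features: Features) -> bool:
        """Accept the stroke only if the active setting's classifier accepts it."""
        score = self._classifiers[self.current](features)
        return score >= self._threshold
```

The sketch draws the setting with `secrets.choice` rather than `random.choice` because the active setting plays the role of the secret: if an attacker could predict it, strokes recorded under that setting could be replayed.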

The authors evaluated the approach with 25 participants. Each participant performed swipe gestures under a grid of 5 horizontal and 5 vertical distortion levels (25 total settings). From each stroke, the authors extracted 31 features similar to those used in prior work (including velocity, pressure, start/end locations, and direction) and built a classification model for each setting. Compared with prior single‑setting touch authentication, the proposed method reduced the mean Equal Error Rate (EER) by 0.02–0.09 for random forgery attacks and by 0.17–0.18 for targeted forgery attacks. Moreover, increasing the number of settings further lowered EER, indicating that security scales with setting diversity. User studies indicated that participants did not notice the screen distortions and experienced no degradation in usability.
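For readers unfamiliar with the metric, the EER is the error rate at the decision threshold where the false acceptance rate (impostor strokes accepted) equals the false rejection rate (genuine strokes rejected). The sketch below shows one common way to estimate it from classifier scores. It is not taken from the paper; the function name and the convention that higher scores mean "more genuine" are assumptions.

```python
from typing import Sequence


def equal_error_rate(genuine: Sequence[float], impostor: Sequence[float]) -> float:
    """Estimate the EER by sweeping a threshold over all observed scores.

    A stroke is accepted when its score is >= the threshold. The estimate is
    the mean of FAR and FRR at the threshold where the two are closest.
    """
    if not genuine or not impostor:
        raise ValueError("both score lists must be non-empty")
    thresholds = sorted(set(genuine) | set(impostor))
    thresholds.append(thresholds[-1] + 1.0)  # a threshold that rejects everything
    best_gap, best_eer = float("inf"), 1.0
    for t in thresholds:
        far = sum(s >= t for s in impostor) / len(impostor)  # false accepts
        frr = sum(s < t for s in genuine) / len(genuine)     # false rejects
        gap = abs(far - frr)
        if gap < best_gap:
            best_gap, best_eer = gap, (far + frr) / 2
    return best_eer
```

With this definition, a reduction of 0.17–0.18 is an absolute difference in error rate, i.e. 17 to 18 percentage points.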

The paper also discusses limitations: a sophisticated attacker equipped with high‑resolution sensors or computer‑vision techniques might infer the current setting, and certain UI elements that depend on absolute coordinates could behave oddly under distortion. Future work is suggested to explore defenses against real‑time setting inference, extend protection to single‑tap interactions, and test the framework on commercial devices.

In summary, this work introduces a novel paradigm that embeds a secret into the device’s low‑level screen transformation, forcing touch‑based biometric signatures to become setting‑dependent. By leveraging the simultaneously observed stability and sensitivity of touch behavior, the system achieves continuous, passive authentication that is markedly more resistant to both random and targeted forgery attacks, while preserving user experience.
