Sample-Efficient Reinforcement Learning with Maximum Entropy Mellowmax Episodic Control


Authors: Marta Sarrico, Kai Arulkumaran, Andrea Agostinelli, Pierre Richemond, Anil A. Bharath

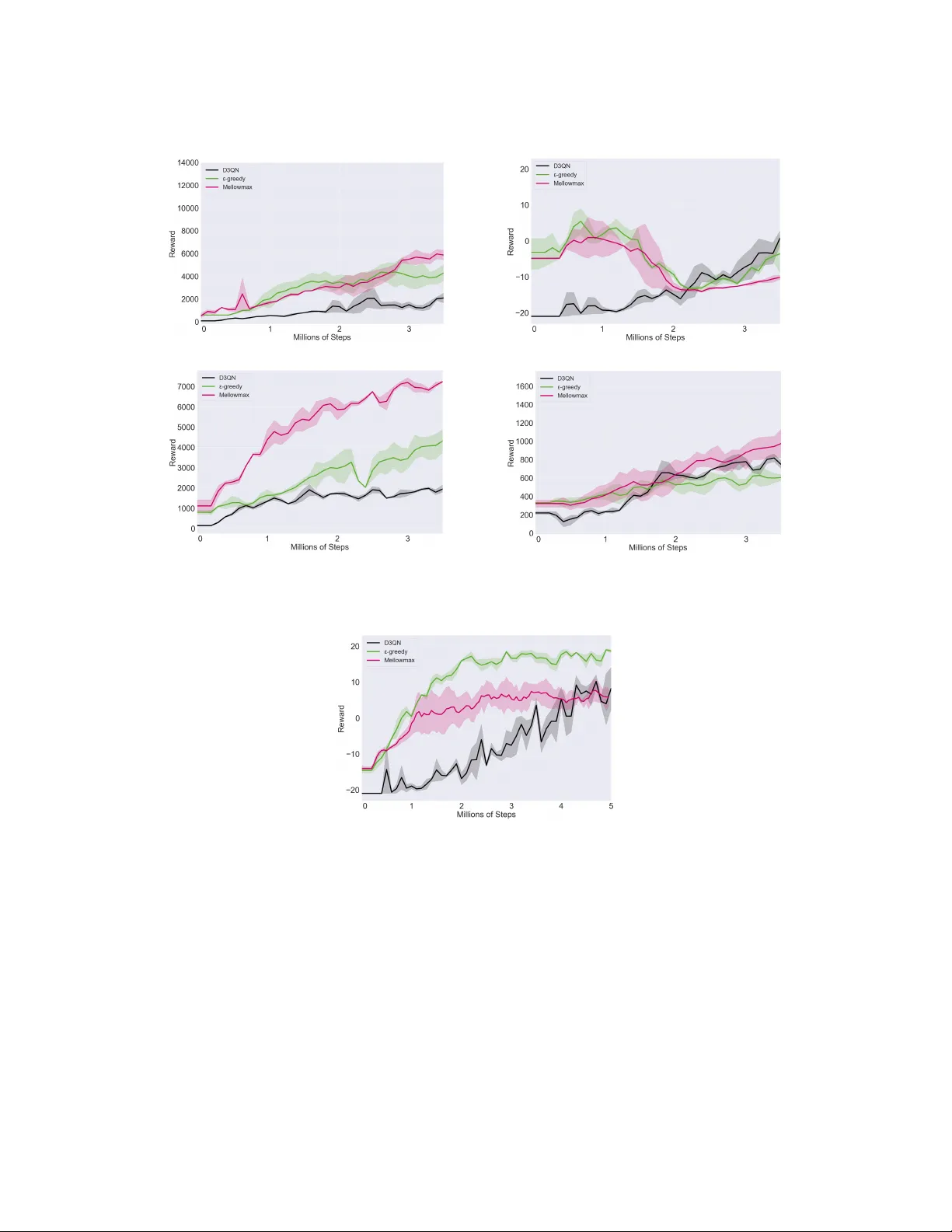

Marta Sarrico, Department of Bioengineering, Imperial College London, mvs918@ic.ac.uk
Kai Arulkumaran, Department of Bioengineering, Imperial College London, kailash.arulkumaran13@imperial.ac.uk
Andrea Agostinelli, Department of Bioengineering, Imperial College London, aa7918@ic.ac.uk
Pierre Richemond, Data Science Institute, Imperial College London, phr17@ic.ac.uk
Anil A. Bharath, Department of Bioengineering, Imperial College London, a.bharath@imperial.ac.uk

Abstract

Deep networks have enabled reinforcement learning to scale to more complex and challenging domains, but these methods typically require large quantities of training data. An alternative is to use sample-efficient episodic control methods: neuro-inspired algorithms which use non-/semi-parametric models that predict values based on storing and retrieving previously experienced transitions. One way to further improve the sample efficiency of these approaches is to use more principled exploration strategies. In this work, we therefore propose maximum entropy mellowmax episodic control (MEMEC), which samples actions according to a Boltzmann policy with a state-dependent temperature. We demonstrate that MEMEC outperforms other uncertainty- and softmax-based exploration methods on classic reinforcement learning environments and Atari games, achieving both more rapid learning and higher final rewards.

1 Introduction

Despite the successes of deep reinforcement learning (DRL) agents [1], these models have a sample-efficiency limitation: DRL agents typically require hundreds of times more experience than a human to reach similar levels of performance, suggesting a large gap between current DRL algorithms and the operation of the human brain [33, 15]. Recently, new neuro-inspired episodic control (EC) algorithms have demonstrated rapid learning compared to state-of-the-art DRL methods [5, 27].
These algorithms were inspired by human long-term memory, which can be divided into semantic and episodic memory: the former is responsible for storing general knowledge and facts about the world, whilst the latter is related to recollecting our personal experiences [11]. EC, introduced by Lengyel & Dayan [17], is inspired by this biological episodic memory. It models one of several different control systems that neuroscience research suggests are used for behavioural decisions [9]. Unlike other RL systems, EC can learn a policy rapidly from sparse amounts of experience.

Another factor in the sample efficiency of RL methods is the existence of an effective exploration policy that can collect diverse experiences from the environment to learn from. The deep Q-network (DQN) [21], a notable DRL algorithm, as well as current EC algorithms [5, 27], use the naive ε-greedy exploration policy [29]. More principled exploration methods, such as upper confidence bound (UCB) [3, 8] and Thompson sampling (TS) [25], sample actions based on uncertainty over their consequences. Another alternative is to sample from a distribution over actions, such as the Boltzmann distribution [29], which weights actions in proportion to the Gibbs-Boltzmann weights of their state-action values. Yet another solution is to use an alternative objective, yielding a policy that balances between maximising both the expected return and its entropy over states [2, 13]. As in supervised learning settings, the latter approach corresponds to an entropic regularisation over the solution [10, 22, 23, 28]. In this work, we hence propose maximum entropy mellowmax episodic control (MEMEC), which combines EC models with the maximum entropy mellowmax policy [2] for principled exploration.

Workshop on Biological and Artificial Reinforcement Learning, NeurIPS 2019.
We do not resort to maximum entropy RL, and hence decouple the exploration benefits from the objective. Even so, we show that this softmax-based exploration strategy can improve both the sample efficiency and the final returns of EC methods, while retaining their simple, low-bias Monte Carlo returns. We test MEMEC on a wide variety of domains. It shows improvements over the original EC methods, and over the same EC methods with alternative exploration policies.

2 Background

Reinforcement Learning: The RL setting is formalised by Markov decision processes (MDPs). An MDP is characterised by a tuple $\langle \mathcal{S}, \mathcal{A}, R, T, \gamma \rangle$, where $\mathcal{S}$ is the set of states, $\mathcal{A}$ is the set of actions, $R$ is a reward function which gives the immediate, intrinsic desirability of a certain state, $T$ is the transition dynamics, and $\gamma \in [0, 1)$ is a discount factor that trades off the value of current and future rewards. The goal of RL is to find the optimal policy, $\pi^*$, that maximises the expected cumulative discounted return when followed from any state $s \in \mathcal{S}$. Q-learning [32], a widely used temporal difference (TD) method, can learn value functions by bootstrapping. The DQN uses Q-learning, updating a neural-network-based state-action value function, $Q^\pi(s, a; \theta)$, parameterised by $\theta$. As a baseline across all environments we used a strong DRL method: the dueling double DQN (D3QN) [21, 30, 31], further detailed in Section 7.

Figure 1: Architecture of NEC (for Atari games): a convolutional neural network receives a state (4 frames of the game) as input and outputs a key $h$, an embedding of that state. Lookup: key $h$ is compared to the stored keys in the differentiable neural dictionary (DND) and the $k$ most similar ones ($k = 3$ in this case) are retrieved. $Q_h$ is computed as a weighted sum of the Q-values associated with the retrieved keys.
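The lookup in Figure 1 can be sketched as a brute-force nearest-neighbour search over one action's memory. This is a minimal illustration, not the NEC implementation. The class and method names (`SimpleDND`, `lookup`, `write`) are hypothetical. The weights are the inverse distance kernel of Equation 5, normalised over the retrieved keys as in NEC, and the in-place update follows Equation 6. Returning 0.0 from an empty memory is an assumption. A real DND would use an approximate nearest-neighbour index rather than a linear scan.

```python
from typing import List, Sequence, Tuple

# Hypothetical helper names; a sketch, not the code used in this work.


def inverse_distance_kernel(h: Sequence[float], h_i: Sequence[float], delta: float = 1e-3) -> float:
    """k(h, h_i) = 1 / (||h - h_i||_2^2 + delta)."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(h, h_i))
    return 1.0 / (sq_dist + delta)


class SimpleDND:
    """Brute-force stand-in for one per-action differentiable neural dictionary."""

    def __init__(self, k: int = 11, delta: float = 1e-3, alpha: float = 0.1) -> None:
        self.k = k          # number of neighbours to retrieve
        self.delta = delta  # kernel delta
        self.alpha = alpha  # memory learning rate (Equation 6)
        self.keys: List[Tuple[float, ...]] = []
        self.values: List[float] = []

    def lookup(self, h: Sequence[float]) -> float:
        """Return sum_i w_i Q_i over the k stored keys most similar to h."""
        if not self.keys:
            return 0.0  # assumption: an empty memory predicts zero
        # A larger kernel value means a closer key, so sort in descending kernel order.
        scored = sorted(
            ((inverse_distance_kernel(h, key, self.delta), q) for key, q in zip(self.keys, self.values)),
            key=lambda pair: pair[0],
            reverse=True,
        )[: self.k]
        total = sum(kv for kv, _ in scored)
        # Normalised kernel values act as the weights w_i.
        return sum(kv * q for kv, q in scored) / total

    def write(self, h: Sequence[float], target: float) -> None:
        """Append a new key, or apply Q_i <- Q_i + alpha * (target - Q_i) to an existing one."""
        key = tuple(h)
        if key in self.keys:
            i = self.keys.index(key)
            self.values[i] += self.alpha * (target - self.values[i])
        else:
            self.keys.append(key)
            self.values.append(target)
```

Suppose a query lies at equal distance from the two stored keys and `k=2`. The two weights are then equal, and the prediction is the mean of the two stored values.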
Episodic Control: EC models use a memory buffer that stores state-action pairs and their associated episodic returns, $(s, a, R)$. Control is performed by replaying the most rewarding actions, based on the similarity between the current and stored states. Implementations of these EC methods include the non-parametric model-free EC (MFEC) [5] and the semi-parametric neural EC (NEC) [27]. Further details on MFEC and NEC can be found in Figure 1 and Section 7.

3 Exploration Strategies

For both MFEC and NEC, the agent follows an ε-greedy policy to trade off exploration and exploitation in the environment. Under this sampling strategy, the agent either picks uniformly over all actions with probability ε, or chooses the action that maximises the predicted return.

Uncertainty-based: UCB and TS are two exploration strategies that sample actions based on predicted uncertainties over the value of the return, and hence require learning a distribution over each Q-value. Common practice is to assume a (sub-)Gaussian distribution over Q-values, which is convenient and also yields tight regret lower bounds [14]. In UCB, the action chosen at each timestep maximises the sum of the value (the estimated mean of the distribution) and its uncertainty $U(s, a)$, a function of the estimated standard deviation of the distribution:

$$\operatorname{argmax}_{a \in \mathcal{A}} \; Q(s, a) + U(s, a).$$

TS can be implemented by sampling from a posterior over Q-functions:

$$\tilde{Q}(s, a) \sim \mathcal{N}\big(Q(s, a), \tilde{\sigma}(Q(s, a))\big) \quad \forall a \in \mathcal{A},$$

where $\tilde{\sigma}(Q(s, a))$ is the estimated standard deviation of the Q-value, and we assume that the state-action value functions are independent. The argmax action is then chosen greedily from the sampled Q-function: $\operatorname{argmax}_{a \in \mathcal{A}} \tilde{Q}(s, a)$.
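The three action-selection rules above can be sketched as follows. This is a minimal illustration, not the code used in this work, and the function names are hypothetical. The caller supplies the uncertainty bonus $U(s, a)$ and the standard deviations $\tilde{\sigma}$, because how they are estimated (for example, from the nearest-neighbour keys) is left open here. Sampling each action's Gaussian independently reflects the independence assumption stated above.

```python
import random
from typing import Sequence

# Hypothetical helper names; a sketch, not the code used in this work.


def epsilon_greedy(q: Sequence[float], epsilon: float, rng: random.Random) -> int:
    """With probability epsilon pick uniformly over all actions, otherwise the argmax."""
    if rng.random() < epsilon:
        return rng.randrange(len(q))
    return max(range(len(q)), key=lambda a: q[a])


def ucb_action(q: Sequence[float], bonus: Sequence[float]) -> int:
    """argmax_a Q(s, a) + U(s, a), with U(s, a) supplied by the caller."""
    return max(range(len(q)), key=lambda a: q[a] + bonus[a])


def thompson_action(q: Sequence[float], sigma: Sequence[float], rng: random.Random) -> int:
    """Sample Q~(s, a) ~ N(Q(s, a), sigma(s, a)) independently per action, then act greedily."""
    samples = [rng.gauss(mu, sd) for mu, sd in zip(q, sigma)]
    return max(range(len(q)), key=lambda a: samples[a])
```

The sketch also shows the failure mode reported later in this work. If `bonus` is roughly constant across actions, `ucb_action` reduces to the greedy choice. If `sigma` is large, `thompson_action` is dominated by sampling noise.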
The difficulty with these methods is that they require learning a probabilistic Q-function, and learning well-calibrated uncertainties with neural networks is still a challenging problem [24]. Furthermore, the actions are sampled independently per timestep, so the resulting policy can be inconsistent over time [26].

Softmax-based: An alternative that does not have these disadvantages is a softmax-based policy, which only requires learning the expected Q-values. One can apply the Boltzmann operator (with inverse temperature $\beta$) to the Q-values,

$$\mathrm{boltz}_\beta(Q) = \frac{\sum_{i=1}^{n} Q_i e^{\beta Q_i}}{\sum_{i=1}^{n} e^{\beta Q_i}},$$

and sample actions from the resulting Boltzmann distribution over actions, so that more promising actions are sampled more frequently. However, the Boltzmann operator is not a non-expansion (it is not Lipschitz with constant $\le 1$) for all values of the temperature parameter [19]. The non-expansion property is critical to convergence proofs in TD learning: together with a discount factor $\gamma < 1$, it is a sufficient condition to guarantee convergence to a unique fixed point. As a solution, Asadi & Littman [2] introduced the mellowmax operator (with hyperparameter $\omega$),

$$\mathrm{mm}_\omega(Q) = \frac{\log\left(\frac{1}{n}\sum_{i=1}^{n} e^{\omega Q_i}\right)}{\omega},$$

as an alternative softmax function for value function optimisation, which is a non-expansion in the infinity norm for all temperatures. The mellowmax operator can return the maximum (as $\omega \to \infty$) or minimum (as $\omega \to -\infty$) of a set of values, but it does not by itself define a distribution over actions. Asadi & Littman [2] therefore also proposed the policy with maximum entropy whose expected Q-value equals $\mathrm{mm}_\omega(Q(s, \cdot))$. This results in the maximum entropy mellowmax policy:

$$\pi_{\mathrm{mm}}(a \mid s) = \frac{e^{\beta Q(s, a)}}{\sum_{a' \in \mathcal{A}} e^{\beta Q(s, a')}}.$$

This policy has the same functional form as the Boltzmann policy, but the optimal $\beta$ is solved for with a root-finding algorithm, such as Brent's method [6]. The equation that is solved is

$$\sum_{a \in \mathcal{A}} e^{\beta\left(Q(s, a) - \mathrm{mm}_\omega(Q(s, \cdot))\right)}\left(Q(s, a) - \mathrm{mm}_\omega(Q(s, \cdot))\right) = 0.$$
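The operators and the root-finding step above can be sketched in plain Python. This is a minimal sketch under stated assumptions, not the authors' implementation, and the function names are hypothetical. It assumes $\omega > 0$, as in all the values searched in this work. It also uses bisection instead of Brent's method, which is valid here for three reasons when $\omega > 0$:

- The left-hand side of the root equation is non-positive at $\beta = 0$, because mellowmax is at least the mean by Jensen's inequality.
- It is positive for large $\beta$.
- It is strictly increasing in $\beta$, because its derivative is a positively weighted sum of squared differences.

```python
import math
from typing import List, Sequence

# Hypothetical helper names; bisection stands in for Brent's method.


def mellowmax(q: Sequence[float], omega: float) -> float:
    """mm_omega(Q) = log((1/n) * sum_i exp(omega * Q_i)) / omega, via a stable log-sum-exp."""
    scaled = [omega * x for x in q]
    m = max(scaled)
    log_sum_exp = m + math.log(sum(math.exp(s - m) for s in scaled))
    return (log_sum_exp - math.log(len(q))) / omega


def boltzmann_policy(q: Sequence[float], beta: float) -> List[float]:
    """pi(a|s) proportional to exp(beta * Q(s, a)); shifting by max(Q) avoids overflow."""
    top = max(q)
    weights = [math.exp(beta * (x - top)) for x in q]
    total = sum(weights)
    return [w / total for w in weights]


def boltz(q: Sequence[float], beta: float) -> float:
    """Boltzmann operator: the expected Q-value under the Boltzmann policy."""
    return sum(p * x for p, x in zip(boltzmann_policy(q, beta), q))


def max_entropy_mellowmax_beta(q: Sequence[float], omega: float, tol: float = 1e-10) -> float:
    """Solve sum_a exp(beta * d_a) * d_a = 0, d_a = Q(s, a) - mm_omega(Q(s, .)); assumes omega > 0."""
    mm = mellowmax(q, omega)
    d = [x - mm for x in q]
    d_max = max(d)
    if d_max - min(d) < 1e-12:
        return 0.0  # all actions equally valued: any beta solves it; use the uniform policy

    def f(beta: float) -> float:
        # Scaling by exp(-beta * d_max) > 0 keeps the sign of the sum and avoids overflow.
        return sum(math.exp(beta * (x - d_max)) * x for x in d)

    lo, hi = 0.0, 1.0
    for _ in range(100):  # grow the bracket until f(hi) > 0
        if f(hi) > 0:
            break
        lo, hi = hi, 2.0 * hi
    for _ in range(200):  # bisection; the iteration cap guards against float stagnation
        if hi - lo <= tol:
            break
        mid = 0.5 * (lo + hi)
        if f(mid) > 0:
            hi = mid
        else:
            lo = mid
    return 0.5 * (lo + hi)
```

At the solved $\beta$, `boltz(q, beta)` matches `mellowmax(q, omega)`, which is the constraint that defines the policy. Once a sign-changing bracket is found, an off-the-shelf Brent solver such as `scipy.optimize.brentq(f, lo, hi)` could replace the bisection loop.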
MEMEC uses the maximum entropy mellowmax policy for exploration. It therefore benefits from softmax-based exploration, in which actions are chosen as a function of their estimated value, with a state-dependent temperature on the Boltzmann distribution over actions. As we show in our experiments, this approach outperforms both the original EC methods with ε-greedy exploration and the alternative exploration methods outlined here. We do not train on a maximum entropy objective, and thereby use the maximum entropy mellowmax policy purely for exploration.

4 Experiments

Environments: We evaluated EC methods with different exploration strategies, as well as a D3QN baseline, in three sets of domains. The first set comprises CartPole and Acrobot, two classic RL problems implemented in OpenAI Gym [7]. The second comprises OpenRoom and FourRoom, gridworld domains re-implemented from Machado et al. [20]. For these domains we trained the agents for 100,000 steps, and evaluated them every 500 steps. The third comprises Pong, Space Invaders, Q*bert, Bowling, and Ms. Pac-Man, video games with high-dimensional visual observations from the Arcade Learning Environment [4]. Due to computational resource limitations, Atari training was performed for 5,000,000 steps (20,000,000 frames) for MFEC and 3,500,000 steps (12,000,000 frames) for NEC; evaluation was performed every 100,000 steps. For Atari games, we use standard preprocessing for the state [21]. Following the same work, we repeat each action for 4 game frames (= 1 agent step). We used Gaussian random projections for MFEC, as this obtained better results than the variational autoencoder embeddings in the original work [5]. For all experiments we report the mean and standard deviation of each method, calculated over three random seeds.

Hyperparameters: For all EC methods, we primarily used the original hyperparameters [5, 27], but chose to keep some consistent across MFEC and NEC.
These were: the more robust inverse distance weighted kernel (Equation 5) for both MFEC and NEC, $k = 11$ nearest neighbours, and a discount factor $\gamma = 0.99$ across all domains. For the episodic memories, the buffer size was set to 150 for the room domains and 10,000 for the classic control domains. For the Atari games it was set to 100,000, due to computational resource limitations. We used the original hyperparameters for D3QN on Atari games [31], and tuned them manually for the other domains. The full details are given in Section 11.

ω Hyperparameter: The $\omega$ hyperparameter in the mellowmax operator requires tuning per domain [2, 13]. With high $\omega$, $\mathrm{mm}_\omega$ acts as a max operator, and with $\omega$ approaching zero, $\mathrm{mm}_\omega$ acts as a mean operator. Thus, $\omega$ should be neither too large, or the agent will act greedily, nor too small, or the agent will behave randomly. To tune this hyperparameter we performed a grid search over the following $\omega$ values:

- For the gridworld and classic control environments: $\omega \in \{5, 7, 9, 12\}$.
- For the Atari domain: $\omega \in \{10, 20, 25, 30, 40, 50, 60\}$, depending on the game.

As in prior work [2, 13], we show results for MEMEC with the best $\omega$. We set $\omega$ to 7.5 for all non-Atari domains, 25 for Pong, 40 for Q*Bert and Space Invaders, 50 for Bowling and 60 for Ms. Pac-Man.

Uncertainty-based Exploration: For the simpler, non-Atari domains, we tested ε-greedy, UCB, TS, Boltzmann and maximum entropy mellowmax exploration strategies. While UCB sometimes performed well, TS performed extremely badly. We computed the uncertainty estimates over the Q-values via the covariance matrix defined over the inverse distance kernel [27] embeddings of the nearest neighbour keys. On closer examination, these estimates had two problems:

- They were relatively constant, meaning that UCB was close to ε-greedy with small ε.
- They were relatively large, causing TS's poor performance (high-variance samples for the Q-values).
We were unable to improve the uncertainty estimates by fitting a Gaussian process to the nearest neighbours and optimising over $\delta$ in the inverse distance weighted kernel. Theoretically, UCB and TS often have similar frequentist regret bounds [16], but our experiments demonstrate how much these methods depend on well-calibrated uncertainties.¹ Due to computational limitations, and as a result of these experiments on the simpler domains, we only evaluated ε-greedy and mellowmax, as well as the D3QN baseline, on the Atari games.

5 Results

Figure 2: Learning curve on Acrobot with MFEC-based MEMEC.

Classic Control: All five methods solved Cartpole (see Section 8), as it is a relatively simple environment where even purely random exploration can reach highly rewarding states. In Figure 2 we show the methods' performance in Acrobot, using both MFEC and NEC. In both cases, MEMEC outperformed the other exploration strategies: it reached higher final rewards and also started learning sooner. The next best strategy, the Boltzmann policy, had high variance over random seeds. As discussed in Section 4, UCB performed poorly, which we believe is due to poorly calibrated uncertainties. The D3QN baseline learned more slowly, and converged to a suboptimal policy.

Gridworld: MFEC performed best with softmax-based exploration strategies, whilst nearly all methods tended to perform poorly with NEC (see Section 9). Whilst MFEC with ε-greedy was able to solve OpenRoom, it had high variance for FourRoom. In contrast to most of our other results, NEC performed best with ε-greedy, but still performed poorly on FourRoom. D3QN was not able to consistently solve OpenRoom, and failed to solve FourRoom.

Atari: Figure 3 and Table 1 show the performance of our method on five Atari games, compared to the D3QN baseline and to the ε-greedy policy with EC.
Overall, MEMEC outperformed the other methods in most of these games, both in the maximum reward achieved and in learning speed (see Section 10 for additional learning curves). For Space Invaders, Q*Bert and Ms. Pac-Man, our MEMEC agents, for both EC methods, outperformed the other algorithms, showing more rapid learning and higher final scores. For Bowling, MFEC-based MEMEC performed similarly to ε-greedy; in Pong it performed somewhat worse. NEC-based agents, with either ε-greedy or mellowmax policies, failed to solve Bowling and Pong. Due to computational limitations, we were unable to use the very large buffer sizes from the original works. This may also explain why we could not reproduce the performance of ε-greedy EC. It remains to be seen whether larger buffer sizes would similarly improve the performance of MEMEC.

Table 1: Final averaged rewards for the Atari games: the values indicate the mean of the last 5 evaluations, averaged over 3 initial random seeds, and their corresponding standard deviations.

| Environment | MFEC ε-greedy | MFEC Mellowmax | D3QN | NEC ε-greedy | NEC Mellowmax | D3QN |
|---|---|---|---|---|---|---|
| Bowling | 62 ± 0.8 | **68 ± 0.7** | 26 ± 12 | 11 ± 9 | 9 ± 7 | **22 ± 16** |
| Q*Bert | 3896 ± 710 | **11610 ± 898** | 3743 ± 1100 | 3951 ± 1321 | **5654 ± 483** | 1480 ± 271 |
| Ms. Pac-Man | 4178 ± 510 | **6968 ± 787** | 2101 ± 56 | 3900 ± 852 | **6997 ± 256** | 1851 ± 98 |
| Space Invaders | 672 ± 13 | **1029 ± 157** | 737 ± 29 | 598 ± 110 | **916 ± 228** | 756 ± 30 |
| Pong | **17 ± 2** | 7 ± 4 | 6 ± 4 | −7 ± 0.9 | −11 ± 0.5 | **−5 ± 6** |

Figure 3: Learning curves for MFEC-based MEMEC agents on 4 Atari games.

¹ Despite this, we have retained the results for UCB in any experiments conducted with this method.
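The aggregation described in the Table 1 caption can be sketched as follows. This is an illustrative reading, not the authors' evaluation script, and the function name is hypothetical. The caption does not say whether the standard deviation is taken across the per-seed means, or whether it is the population or the sample estimate. This sketch assumes the population standard deviation across per-seed means.

```python
import statistics
from typing import Sequence, Tuple

# Hypothetical helper; one plausible reading of the Table 1 caption.


def final_score(eval_returns_per_seed: Sequence[Sequence[float]], last_n: int = 5) -> Tuple[float, float]:
    """Average the last `last_n` evaluation returns per seed, then summarise across seeds."""
    per_seed = [statistics.fmean(runs[-last_n:]) for runs in eval_returns_per_seed]
    # Assumption: population standard deviation across the per-seed means.
    return statistics.fmean(per_seed), statistics.pstdev(per_seed)
```

Switching to `statistics.stdev` would give the sample estimate instead. With only 3 seeds, that version is noticeably larger.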
6 Discussion

In this work we have investigated the use of more principled exploration methods in combination with EC. We proposed MEMEC, a method that addresses one of the main limitations of state-of-the-art RL models, namely sample efficiency. We show over a range of domains, including Atari games, that MEMEC can reliably achieve higher rewards faster, with stable performance across seeds. One limitation of mellowmax-based policies is their sensitivity to the value of $\omega$ across different domains [2, 13]. The searches needed to find good values are prohibitive in domains such as Atari. Implementing methods to automatically determine and tune $\omega$ will be an important area for future work.

References

[1] Kai Arulkumaran, Marc Peter Deisenroth, Miles Brundage, and Anil Anthony Bharath. Deep reinforcement learning: A brief survey. IEEE Signal Processing Magazine, 34(6):26–38, 2017.
[2] Kavosh Asadi and Michael L Littman. An alternative softmax operator for reinforcement learning. In International Conference on Machine Learning, pages 243–252, 2017.
[3] Peter Auer, Nicolo Cesa-Bianchi, and Paul Fischer. Finite-time analysis of the multiarmed bandit problem. Machine Learning, 47(2-3):235–256, 2002.
[4] Marc G Bellemare, Yavar Naddaf, Joel Veness, and Michael Bowling. The arcade learning environment: An evaluation platform for general agents. Journal of Artificial Intelligence Research, 47:253–279, 2013.
[5] Charles Blundell, Benigno Uria, Alexander Pritzel, Yazhe Li, Avraham Ruderman, Joel Z Leibo, Jack Rae, Daan Wierstra, and Demis Hassabis. Model-free episodic control. arXiv preprint arXiv:1606.04460, 2016.
[6] Richard P Brent. Algorithms for minimization without derivatives. Courier Corporation, 2013.
[7] Greg Brockman, Vicki Cheung, Ludwig Pettersson, Jonas Schneider, John Schulman, Jie Tang, and Wojciech Zaremba. OpenAI Gym. arXiv preprint, 2016.
[8] Richard Y Chen, Szymon Sidor, Pieter Abbeel, and John Schulman. UCB exploration via Q-ensembles. arXiv preprint arXiv:1706.01502, 2017.
[9] Nathaniel D Daw, Yael Niv, and Peter Dayan. Uncertainty-based competition between prefrontal and dorsolateral striatal systems for behavioral control. Nature Neuroscience, 8(12):1704, 2005.
[10] Roy Fox, Ari Pakman, and Naftali Tishby. Taming the noise in reinforcement learning via soft updates. In Conference on Uncertainty in Artificial Intelligence, 2016.
[11] Daniel L Greenberg and Mieke Verfaellie. Interdependence of episodic and semantic memory: evidence from neuropsychology. Journal of the International Neuropsychological Society, 16(5):748–753, 2010.
[12] Hado V Hasselt. Double Q-learning. In Advances in Neural Information Processing Systems, pages 2613–2621, 2010.
[13] Seungchan Kim, Kavosh Asadi, Michael Littman, and George Konidaris. DeepMellow: Removing the Need for a Target Network in Deep Q-Learning. In International Joint Conference on Artificial Intelligence, 2019.
[14] Tze Leung Lai and Herbert Robbins. Asymptotically efficient adaptive allocation rules. Advances in Applied Mathematics, 6(1):4–22, 1985.
[15] Brenden M Lake, Tomer D Ullman, Joshua B Tenenbaum, and Samuel J Gershman. Building machines that learn and think like people. Behavioral and Brain Sciences, 40, 2017.
[16] Tor Lattimore and Csaba Szepesvári. Bandit algorithms. Cambridge University Press, 2018.
[17] Máté Lengyel and Peter Dayan. Hippocampal contributions to control: the third way. In Advances in Neural Information Processing Systems, pages 889–896, 2008.
[18] Long-Ji Lin. Self-improving reactive agents based on reinforcement learning, planning and teaching. Machine Learning, 8(3-4):293–321, 1992.
[19] Michael L Littman and Csaba Szepesvári. A generalized reinforcement-learning model: Convergence and applications. In International Conference on Machine Learning, volume 96, pages 310–318, 1996.
[20] Marlos C Machado, Marc G Bellemare, and Michael Bowling. A Laplacian framework for option discovery in reinforcement learning. In International Conference on Machine Learning, pages 2295–2304, 2017.
[21] Volodymyr Mnih, Koray Kavukcuoglu, David Silver, Andrei A Rusu, Joel Veness, Marc G Bellemare, Alex Graves, Martin Riedmiller, Andreas K Fidjeland, Georg Ostrovski, et al. Human-level control through deep reinforcement learning. Nature, 518(7540):529, 2015.
[22] Ofir Nachum, Mohammad Norouzi, Kelvin Xu, and Dale Schuurmans. Bridging the gap between value and policy based reinforcement learning. In Advances in Neural Information Processing Systems, pages 2775–2785, 2017.
[23] Gergely Neu, Anders Jonsson, and Vicenç Gómez. A unified view of entropy-regularized Markov decision processes. arXiv preprint arXiv:1705.07798, 2017.
[24] Ian Osband. Risk versus uncertainty in deep learning: Bayes, bootstrap and the dangers of dropout. In NIPS Workshop on Bayesian Deep Learning, 2016.
[25] Ian Osband, Daniel Russo, and Benjamin Van Roy. (More) efficient reinforcement learning via posterior sampling. In Advances in Neural Information Processing Systems, pages 3003–3011, 2013.
[26] Ian Osband, Charles Blundell, Alexander Pritzel, and Benjamin Van Roy. Deep exploration via bootstrapped DQN. In Advances in Neural Information Processing Systems, pages 4026–4034, 2016.
[27] Alexander Pritzel, Benigno Uria, Sriram Srinivasan, Adria Puigdomenech Badia, Oriol Vinyals, Demis Hassabis, Daan Wierstra, and Charles Blundell. Neural episodic control. In International Conference on Machine Learning, pages 2827–2836, 2017.
[28] Pierre H Richemond and Brendan Maginnis. A short variational proof of equivalence between policy gradients and soft Q-learning. arXiv preprint, 2017.
[29] Richard S Sutton and Andrew G Barto. Reinforcement learning: An introduction. MIT Press, 2018.
[30] Hado Van Hasselt, Arthur Guez, and David Silver. Deep reinforcement learning with double Q-learning. In AAAI Conference on Artificial Intelligence, 2016.
[31] Ziyu Wang, Tom Schaul, Matteo Hessel, Hado Hasselt, Marc Lanctot, and Nando Freitas. Dueling network architectures for deep reinforcement learning. In International Conference on Machine Learning, pages 1995–2003, 2016.
[32] Christopher JCH Watkins and Peter Dayan. Q-learning. Machine Learning, 8(3-4):279–292, 1992.
[33] Yang Yu. Towards sample efficient reinforcement learning. In International Joint Conference on Artificial Intelligence, pages 5739–5743, 2018.

7 Additional Background

Deep Q-network: The DQN uses Q-learning, updating a neural-network-based state-action value function, $Q(s, a; \theta)$, parameterised by $\theta$. The deep Q-network receives the state $s$ as input and outputs a vector with the state-action values $Q(s, a; \theta)\ \forall a \in \mathcal{A}$. To improve the stability of RL with function approximators, the original authors proposed using experience replay [18] and a target network [21]. The target network, parameterised by $\theta^-$, is an older copy of the online network, updated every $\tau$ steps. The TD target for the DQN is defined by:

$$y^{\mathrm{DQN}}_t = r_{t+1} + \gamma \max_a Q(s_{t+1}, a; \theta^-_t). \tag{1}$$

The experience replay is a cyclic buffer that stores the $(s_t, a_t, r_{t+1}, s_{t+1})$ transitions that the agent observes. Samples are retrieved from it uniformly at regular intervals to train the network.

Several extensions have been proposed to improve this algorithm. Firstly, the max operator in Q-learning is responsible for both the selection and the evaluation of state-action values, and hence can result in overoptimistic Q-value estimates. In double Q-learning [12], an alternative update rule for Q-learning, the selection of actions and the evaluation of their values are performed by separate estimators.
To do so, two value functions are implemented, parameterised by $\theta$ and $\theta'$: one is used for the greedy selection of actions, whereas the other is used to determine the corresponding value. For the double DQN algorithm, the online and target networks are used as the two estimators [30]. In this case, the TD target can be written as:

$$y^{\mathrm{DDQN}}_t = r_{t+1} + \gamma\, Q\big(s_{t+1}, \operatorname{argmax}_a Q(s_{t+1}, a; \theta_t); \theta^-_t\big). \tag{2}$$

Another extension based on the DQN is the dueling double DQN (D3QN) algorithm [31]. The dueling architecture extends the standard DQN by calculating separate advantage $A_\theta$ and value $V_\theta$ streams, sharing the same convolutional neural network as a base. This imposes the form that state-action values can be calculated as offsets ("advantages") from the state value. The Q-function is then computed as:

$$Q_\theta(s, a) = V_\theta(s) + \Big(A_\theta(s, a) - \frac{1}{|\mathcal{A}|}\sum_{a'} A_\theta(s, a')\Big). \tag{3}$$

Model-free episodic control: In MFEC, the episodic controller $Q^{EC}(s, a)$ is represented by a fixed-size table for each action. Each entry corresponds to a state-action pair and the highest return ever obtained from taking action $a$ from state $s$. Whenever a new state is encountered, the return is estimated based on the average of the observed returns from the $k$ nearest states $s^{(i)}$:

$$\widehat{Q}^{EC}(s, a) = \begin{cases} \frac{1}{k}\sum_{i=1}^{k} Q^{EC}(s^{(i)}, a) & \text{if } (s, a) \notin Q^{EC}, \\ Q^{EC}(s, a) & \text{otherwise}. \end{cases} \tag{4}$$

The state-action value function $Q^{EC}(s, a)$ is updated at the end of each episode: new state-action pairs and their episodic returns replace the least-recently-used transitions. If the pair $(s, a)$ already exists in the table, the stored value is updated to the maximum of the stored return and the newly observed return. For high-dimensional state spaces, the original authors used Gaussian random projections to reduce the dimensionality of the states before using them in the episodic controller.
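The MFEC table described above can be sketched as follows. This is a minimal illustration with hypothetical names (`discounted_returns`, `random_projection`, `project`, `MFECActionTable`), not the original implementation. It uses the unweighted average of Equation 4, whereas the experiments in this work used the inverse distance weighted kernel for MFEC. The following choices are all assumptions:

- State similarity is measured with Euclidean distance.
- An empty table returns 0.0.
- Projection entries are drawn from a standard normal distribution.

```python
import math
import random
from collections import OrderedDict
from typing import List, Sequence, Tuple

# Hypothetical helper names; a sketch of Equation 4 and the max/LRU update rule.


def discounted_returns(rewards: Sequence[float], gamma: float) -> List[float]:
    """Monte Carlo return from every timestep of a finished episode."""
    returns: List[float] = []
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
        returns.append(g)
    return returns[::-1]


def random_projection(dim_in: int, dim_out: int, rng: random.Random) -> List[List[float]]:
    """Gaussian random projection matrix of shape (dim_out, dim_in); N(0, 1) entries assumed."""
    return [[rng.gauss(0.0, 1.0) for _ in range(dim_in)] for _ in range(dim_out)]


def project(matrix: Sequence[Sequence[float]], x: Sequence[float]) -> Tuple[float, ...]:
    """Multiply the projection matrix by a flattened observation."""
    return tuple(sum(w * xi for w, xi in zip(row, x)) for row in matrix)


class MFECActionTable:
    """One per-action table mapping a (projected) state to the highest return observed."""

    def __init__(self, capacity: int, k: int = 11) -> None:
        self.capacity = capacity
        self.k = k
        self.table: "OrderedDict[Tuple[float, ...], float]" = OrderedDict()

    def estimate(self, state: Sequence[float]) -> float:
        """Equation 4: the stored value if present, else the mean over the k nearest stored states."""
        key = tuple(state)
        if key in self.table:
            self.table.move_to_end(key)  # mark as recently used
            return self.table[key]
        if not self.table:
            return 0.0  # assumption: an empty table predicts zero
        nearest = sorted(self.table, key=lambda s: math.dist(s, key))[: self.k]
        return sum(self.table[s] for s in nearest) / len(nearest)

    def update(self, state: Sequence[float], episodic_return: float) -> None:
        """Keep the maximum return for known states; insert new ones, evicting the least recently used."""
        key = tuple(state)
        if key in self.table:
            self.table[key] = max(self.table[key], episodic_return)
            self.table.move_to_end(key)
            return
        if len(self.table) >= self.capacity:
            self.table.popitem(last=False)  # the oldest entry is the least recently used
        self.table[key] = episodic_return
```

At the end of an episode, one would call `discounted_returns` on the episode's rewards. Each resulting return is then written with `update` into the table of the action taken at that step.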
Neural Episodic Control: NEC consists of two components: a neural network for learning a feature mapping, and a differentiable neural dictionary (DND) per action (see Figure 1). The neural network receives the state $s$ as input and outputs a key/embedding $h$. Each DND memory performs a lookup based on the current key $h$. It compares $h$ with the keys $h_i$ already stored in the DND and retrieves the $k$ most similar keys with their corresponding $Q_i$ values. The predicted $Q(s, a)$ value, based on $h$, is the weighted sum of the retrieved $Q_i$ values: $\sum_i w_i Q_i$. Each weight is the inverse distance weighted kernel normalised over the retrieved keys, $w_i = k(h, h_i) / \sum_j k(h, h_j)$, where

$$k(h, h_i) = \frac{1}{\|h - h_i\|_2^2 + \delta}. \tag{5}$$

Updating the DND involves appending new key-value pairs to the dictionary. If the same state already exists in that dictionary, the value of $Q(s, a)$ is instead updated as in Q-learning:

$$Q_i \leftarrow Q_i + \alpha\big(R^{(n)}(s, a) - Q_i\big), \tag{6}$$

where $\alpha$ is the learning rate, and $R^{(n)}$ is the $n$-step return [29] bootstrapped from the predicted Q-values from NEC during each episode. The parameters of the neural network are trained by minimising the mean squared error loss between the predicted Q-values and the stored returns. This uses a random sample of $(s_t, a_t, R^{(n)}_t)$ tuples stored in experience replay [18], in a similar fashion to the DQN algorithm.

8 Cartpole Results

Figure 4: Learning curves on Cartpole. (a) MFEC-based algorithms. (b) NEC-based algorithms.

9 GridWorld Results

Figure 5: Learning curves on OpenRoom. (a) MFEC-based algorithms. (b) NEC-based algorithms.

Figure 6: Learning curves on FourRoom. (a) MFEC-based algorithms. (b) NEC-based algorithms.

10 Atari Results

Figure 7: Learning curves for NEC-based algorithms. (a) Q*Bert. (b) Pong. (c) Ms. Pac-Man. (d) Space Invaders.

Figure 8: Learning curves for MFEC-based algorithms on Pong.

11 Hyperparameters

Table 2: Hyperparameters used for the Gridworld and the Classic Control Domains.

| Parameter | D3QN | MFEC | NEC |
|---|---|---|---|
| ε initial | 1 | 1 | 1 |
| ε final | 5 × 10⁻³ | 5 × 10⁻³ | 5 × 10⁻³ |
| ε anneal start (steps) | 1 | 5 × 10³ | 5 × 10³ |
| ε anneal end (steps) | 5 × 10⁴ | 25 × 10³ | 25 × 10³ |
| Discount factor γ | 0.99 | 0.99 | 0.99 |
| Reward clip | Yes | No | No |
| Number of neighbours k | n/a | 11 | 11 |
| Kernel delta δ | n/a | 10⁻³ | 10⁻³ |
| Experience replay size | 10⁵ | n/a | 10⁵ |
| RMSprop learning rate | 25 × 10⁻⁵ | n/a | 7.92 × 10⁻⁶ |
| RMSprop momentum | 0.95 | n/a | 0.95 |
| RMSprop ε | 10⁻² | n/a | 10⁻² |
| Training start (steps) | 5 × 10³ | n/a | 10³ |
| Batch size | 32 | n/a | 32 |
| Target network update (steps) | 7.5 × 10³ | n/a | n/a |
| Observation projection | n/a | None | n/a |
| Projection key size | n/a | None | n/a |
| Memory learning rate α | n/a | n/a | 0.1 |
| n-step return | n/a | n/a | 100 |
| Key size | n/a | n/a | 64 |

Table 3: Hyperparameters used for the Atari games.

| Parameter | D3QN | MFEC | NEC |
|---|---|---|---|
| ε initial | 1 | 1 | 1 |
| ε final | 10⁻² | 5 × 10⁻³ | 10⁻³ |
| ε anneal start (steps) | 1 | 5 × 10³ | 5 × 10³ |
| ε anneal end (steps) | 10⁶ | 25 × 10³ | 25 × 10³ |
| Discount factor γ | 0.99 | 0.99 | 0.99 |
| Reward clip | Yes | No | No |
| Number of neighbours k | n/a | 11 | 11 |
| Kernel delta δ | n/a | 10⁻³ | 10⁻³ |
| Experience replay size | 10⁶ | n/a | 10⁵ |
| RMSprop learning rate | 25 × 10⁻⁵ | n/a | 7.92 × 10⁻⁶ |
| RMSprop momentum | 0.95 | n/a | 0.95 |
| RMSprop ε | 10⁻² | n/a | 10⁻² |
| Training start (steps) | 12.5 × 10³ | n/a | 5 × 10⁴ |
| Batch size | 32 | n/a | 32 |
| Target network update (steps) | 10³ | n/a | n/a |
| Observation projection | n/a | Gaussian | n/a |
| Projection key size | n/a | 128 | n/a |
| Memory learning rate α | n/a | n/a | 0.1 |
| n-step return | n/a | n/a | 100 |
| Key size | n/a | n/a | 128 |
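The ε rows of Tables 2 and 3 give only the start and end points of the exploration schedule. The sketch below assumes a linear anneal between the start and end steps; the tables do not state the schedule's shape. The function name is hypothetical.

```python
# Hypothetical helper; the linear shape of the schedule is an assumption.


def epsilon_at(step: int, eps_initial: float, eps_final: float, anneal_start: int, anneal_end: int) -> float:
    """Exploration rate at a given agent step, linearly annealed between anneal_start and anneal_end."""
    if step <= anneal_start:
        return eps_initial
    if step >= anneal_end:
        return eps_final
    frac = (step - anneal_start) / (anneal_end - anneal_start)
    return eps_initial + frac * (eps_final - eps_initial)
```

Take the MFEC and NEC values from Table 2, where ε goes from 1 to 5 × 10⁻³ between steps 5 × 10³ and 25 × 10³. Under this assumption, ε is still 1 at step 5,000 and is about 0.50 halfway through, at step 15,000.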
