Integrating Automated Play in Level Co-Creation

In level co-creation, an AI and a human work together to create a video game level. One open challenge in level co-creation is how to empower human users to ensure particular qualities of the final level, such as challenge. There has been significant prior research into automated pathing and automated playtesting for video game levels, but not on how to incorporate these into tools. In this demonstration we present an improvement of the Morai Maker mixed-initiative level editor for Super Mario Bros. that includes automated pathing and challenge approximation features.

💡 Research Summary

The paper presents an extension to the Morai Maker mixed‑initiative level editor for Super Mario Bros., addressing two persistent usability concerns identified in prior user studies. First, the AI's level suggestions are not grounded in an explicit physics model, so its additions can render a level unplayable. Second, designers must manually play through a partially built level to verify reachability, which interrupts creative flow and makes the assessment of playability depend on the designer's own playing skill.

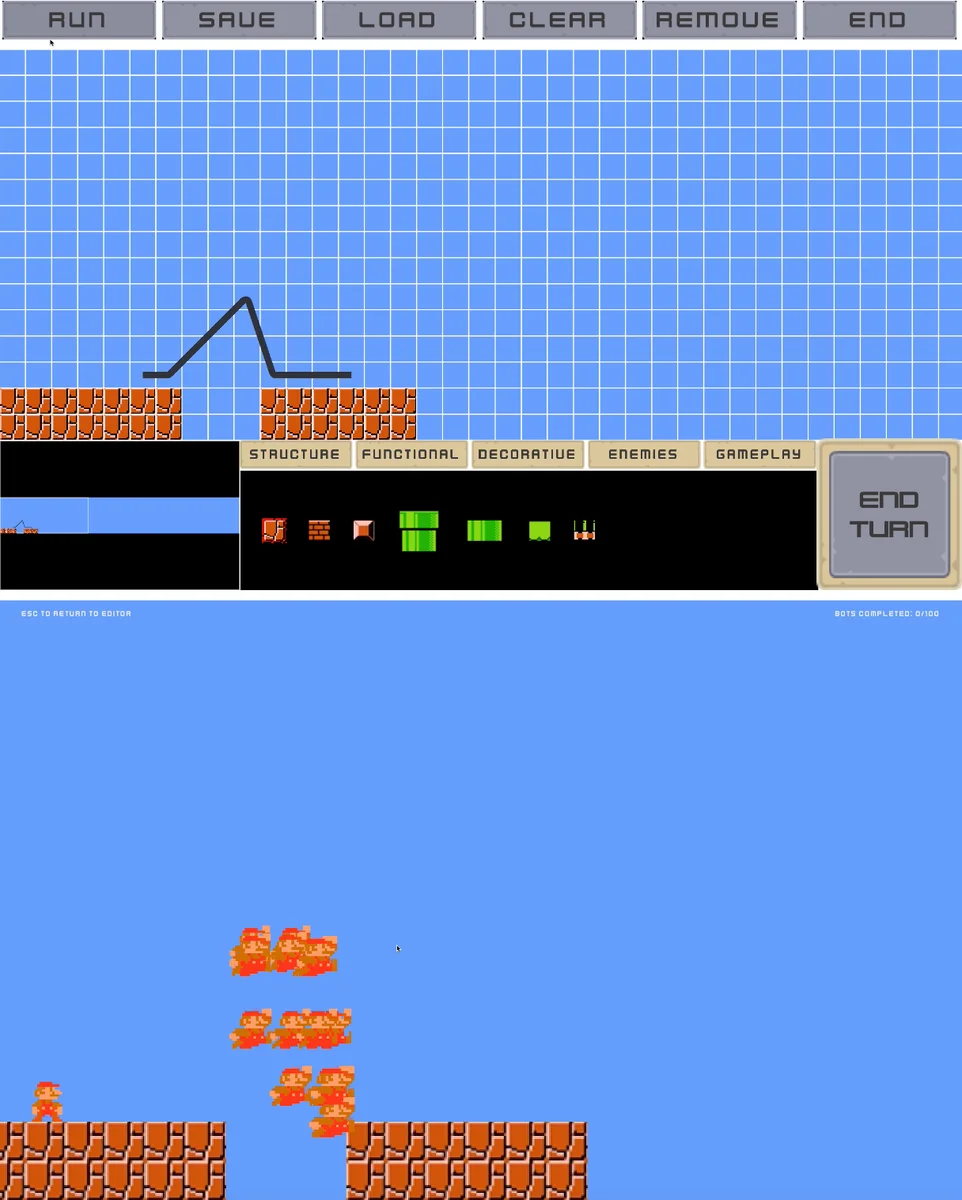

To mitigate these issues, the authors introduce two A*‑based tools. The A* Reachability Check encodes Mario’s movement mechanics (gravity, jump arcs, collision handling) into a set of search operators. When a user presses “P” and clicks two points in the editor, the system runs an A* search to determine whether a feasible path exists between them. If one does, the optimal path is drawn as a black line; otherwise no line appears, immediately signaling that the segment is unreachable. Because the check can be run at any stage of level construction, designers can validate partial layouts without full playtesting.
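The following is a minimal sketch of such a reachability check. It assumes a toy tile-level physics model rather than the Mario AI Benchmark's finer-grained physics. Each search state is Mario's tile position plus the remaining rise of the current jump. Each operator is one tick that moves Mario at most one tile horizontally and one tile vertically. The names `solid`, `step`, `reachable`, and `JUMP_HEIGHT` are hypothetical, as are the conventions that rows grow downward, that `#` marks a solid tile, and that the top edge acts as a ceiling.

```python
import heapq

# Toy physics (an assumption, not the paper's model): one tile per tick,
# rows grow downward, '#' is solid, '.' is empty.
JUMP_HEIGHT = 4  # hypothetical: tiles Mario can rise during one jump


def solid(grid, x, y):
    """Side edges and the top edge act as walls; below the bottom row is a pit."""
    if x < 0 or x >= len(grid[0]) or y < 0:
        return True
    if y >= len(grid):
        return False
    return grid[y][x] == "#"


def step(grid, state, dx, jump):
    """One search operator: apply a single physics tick.

    Returns the new (x, y, rise_left) state, or None if a jump is attempted
    while airborne or Mario falls out of the level.
    """
    x, y, rise = state
    if jump:
        if not solid(grid, x, y + 1):
            return None               # can only jump while standing on a tile
        rise = JUMP_HEIGHT
    if rise > 0:                      # rising part of the jump arc
        if solid(grid, x, y - 1):
            rise = 0                  # bumped a block overhead: start falling
        else:
            y, rise = y - 1, rise - 1
    elif not solid(grid, x, y + 1):   # gravity: fall one tile
        y += 1
    if dx and not solid(grid, x + dx, y):
        x += dx                       # horizontal move unless blocked
    if y >= len(grid):
        return None                   # fell into a pit
    return (x, y, rise)


def reachable(grid, start, goal):
    """A* over physics states; returns the tile path start->goal or None."""
    gx, gy = goal

    def h(s):
        # At most one tile per axis per tick, so this never overestimates.
        return max(abs(s[0] - gx), abs(s[1] - gy))

    s0 = (start[0], start[1], 0)
    frontier = [(h(s0), 0, s0)]
    parent, cost = {s0: None}, {s0: 0}
    while frontier:
        _, g, s = heapq.heappop(frontier)
        if g > cost[s]:
            continue                  # stale queue entry
        if (s[0], s[1]) == (gx, gy):
            path = []
            while s is not None:      # walk parents back to the start
                path.append((s[0], s[1]))
                s = parent[s]
            return path[::-1]
        for dx in (-1, 0, 1):
            for jump in (False, True):
                n = step(grid, s, dx, jump)
                if n is not None and n != s and g + 1 < cost.get(n, float("inf")):
                    cost[n], parent[n] = g + 1, s
                    heapq.heappush(frontier, (g + 1 + h(n), g + 1, n))
    return None


if __name__ == "__main__":
    level = ["..........",
             "..........",
             "..........",
             "..........",
             "####..####"]            # two-tile pit in the floor
    print(reachable(level, (0, 3), (9, 3)))   # a jump arc over the pit
    walled = [".......#.."] * 4 + ["##########"]
    print(reachable(walled, (0, 3), (9, 3)))  # None: no line would be drawn
```

A `None` result plays the role of the editor drawing no line. Every tick costs 1 and shifts Mario by at most one tile per axis, so the Chebyshev heuristic is consistent. The returned path is therefore a shortest one, but only under this toy model.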

The second tool, A*‑based Survival Analysis, builds on the reachability path. It spawns a configurable number of agents (default 100) that follow the A* path, each with a randomly assigned tolerance threshold that dictates how close it must be to a node before attempting to advance to the next. This stochastic variation simulates sub‑optimal human behavior and provides a rough estimate of challenge. After the agents traverse the path, the editor displays how many reached the goal. This quantitative “survival rate” lets designers compare difficulty across level sections. The authors caution that this metric is not a direct proxy for human‑perceived difficulty, but it serves as a useful relative indicator.
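The survival analysis can be sketched as a Monte Carlo loop under a deliberately simple stand-in for the game. Agents move in straight lines toward path nodes at a fixed speed and switch to the next node once within their tolerance of the current one. An agent dies if its position enters a designated hazard tile. This death rule, the `speed` value, the uniform tolerance distribution, and the names `simulate_agent` and `survival_analysis` are all hypothetical. In the editor, the agents play under Mario's actual mechanics. Only the default of 100 agents and the survivor count mirror the description above.

```python
import math
import random

# Toy model (an assumption, not the paper's simulation): agents move in a
# straight line toward path nodes; an agent "dies" if its position falls
# strictly inside a designated hazard tile.


def simulate_agent(path, hazards, tolerance, speed=0.25, max_ticks=10_000):
    """Return True if one agent reaches the final path node alive."""
    pos = [float(path[0][0]), float(path[0][1])]
    i, last = 0, len(path) - 1
    for _ in range(max_ticks):
        # Sloppy following: move on to the next node as soon as the agent
        # is within `tolerance` of the current one.
        while i < last and math.dist(pos, path[i]) <= tolerance:
            i += 1
        tx, ty = path[i]
        d = math.dist(pos, (tx, ty))
        if d <= speed:
            pos = [float(tx), float(ty)]          # snap onto the node
        else:
            pos[0] += (tx - pos[0]) * speed / d
            pos[1] += (ty - pos[1]) * speed / d
        if any(abs(pos[0] - hx) < 0.5 and abs(pos[1] - hy) < 0.5
               for hx, hy in hazards):
            return False                           # touched a hazard tile
        if i == last and pos == [float(tx), float(ty)]:
            return True                            # reached the goal
    return False                                   # gave up: count as failure


def survival_analysis(path, hazards, n_agents=100, max_tolerance=2.0, seed=0):
    """Count survivors among agents with random tolerance thresholds."""
    rng = random.Random(seed)
    return sum(simulate_agent(path, hazards, rng.uniform(0.0, max_tolerance))
               for _ in range(n_agents))


if __name__ == "__main__":
    # Hypothetical hand-written jump arc over a spike at (4, 3); rows grow downward.
    arc = [(0, 3), (1, 3), (2, 3), (3, 2), (4, 1), (5, 2), (6, 3), (7, 3), (8, 3)]
    spikes = {(4, 3)}
    print(survival_analysis(arc, spikes, max_tolerance=0.0))   # 100
    print(survival_analysis(arc, spikes, max_tolerance=10.0))  # fewer than 100
```

Zero-tolerance agents retrace the path exactly, so all of them survive. Larger tolerances let agents cut under the arc and into the spike. This is how random thresholds turn one optimal path into a spread of outcomes.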

Technical implementation draws from the Mario AI Benchmark, where A* agents have been shown to outperform other agents when given full rule access. By translating Mario’s physics into search operators, the system guarantees optimality of the computed path, a property lacking in general GVGAI agents used in tools like Cicero. The survival analysis leverages Isaksen et al.’s concept of running many agents through a level to gauge difficulty, adapting it to a platformer context.
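The optimality guarantee is the standard A* property. It is stated here in general form because the summary does not give the editor's cost function or heuristic. A* expands states in order of

$$
f(n) = g(n) + h(n),
$$

where $g(n)$ is the cost of the best known path from the start to $n$ and $h(n)$ estimates the remaining cost to the goal. The returned path has minimum cost when the heuristic is consistent:

$$
h(n) \le h^*(n) \quad \text{(admissible)}, \qquad h(n) \le c(n, n') + h(n') \quad \text{(consistent)},
$$

where $h^*(n)$ is the true remaining cost and $c(n, n')$ is the cost of the operator from $n$ to a successor $n'$. Admissibility alone suffices if closed states may be reopened. For instance, suppose every operator costs 1 and moves Mario at most one tile along each axis. Then $h(n) = \max(|x_n - x_g|, |y_n - y_g|)$ satisfies both conditions. "Optimal" thus means optimal with respect to the encoded physics operators.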

The paper situates its contributions within the broader PCGML (Procedural Content Generation via Machine Learning) literature, noting that while many mixed‑initiative editors exist, few incorporate automated playtesting or challenge estimation. It also references prior mixed‑initiative frameworks that rely on grammar‑based or search‑based generation, highlighting the novelty of integrating real‑time physics‑aware pathfinding and stochastic difficulty assessment.

Limitations are acknowledged. The A* model may still miss edge cases of Mario’s physics, leading to false positives or negatives in reachability. The random tolerance thresholds, while introducing variability, do not capture the full spectrum of human player strategies, reaction times, or learning curves. Moreover, the current implementation is tailored to 2‑D side‑scrollers and would require substantial adaptation for other genres or more complex physics engines.

Suggested future work includes refining the physics model, incorporating machine‑learning predictors of human difficulty, extending the tools to multi‑player or 3‑D environments, and conducting larger user studies to evaluate how these automated checks affect design quality and creative satisfaction.

In summary, the authors successfully embed automated playability verification and approximate difficulty measurement into a mixed‑initiative level editor, transforming traditionally separate playtesting and difficulty tuning steps into an integrated, real‑time feedback loop that empowers both novice and expert designers to create more robust and appropriately challenging game levels.
