Multi-domain Conversation Quality Evaluation via User Satisfaction Estimation

An automated metric to evaluate dialogue quality is vital for optimizing data driven dialogue management. The common approach of relying on explicit user feedback during a conversation is intrusive and sparse. Current models to estimate user satisfac…

Authors: Praveen Kumar Bodigutla, Lazaros Polymenakos, Spyros Matsoukas

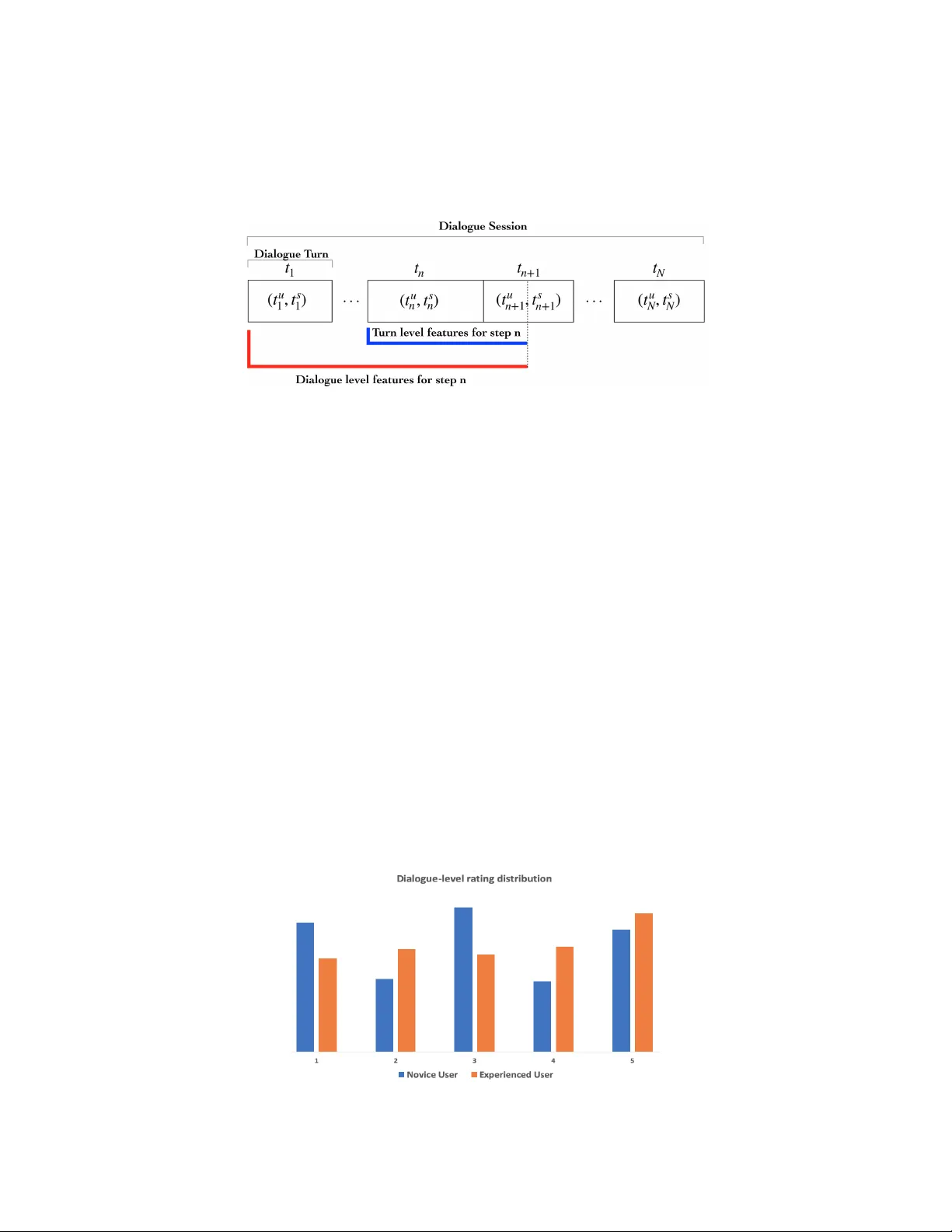

Praveen Kumar Bodigutla (pbodigut@amazon.com), Lazaros Polymenakos (polyml@amazon.com), Spyros Matsoukas (matsouka@amazon.com)

Abstract

An automated metric to evaluate dialogue quality is vital for optimizing data-driven dialogue management. The common approach of relying on explicit user feedback during a conversation is intrusive and sparse. Current models to estimate user satisfaction use limited feature sets and employ annotation schemes with limited generalizability to conversations spanning multiple domains. To address these gaps, we created a new Response Quality annotation scheme, introduced five new domain-independent feature sets and experimented with six machine learning models to estimate User Satisfaction at both turn and dialogue level. Response Quality ratings achieved significantly high correlation (0.76) with explicit turn-level user ratings. Using the new feature sets we introduced, the Gradient Boosting Regression model achieved the best rating (1-5) prediction performance on 26 seen domains (linear correlation ∼0.79) and one new multi-turn domain (linear correlation 0.67). We observed a 16% relative improvement (68% → 79%) in binary (“satisfactory/dissatisfactory”) class prediction accuracy of a domain-independent dialogue-level satisfaction estimation model after including predicted turn-level satisfaction ratings as features.

1 Introduction

Automatic turn and dialogue level quality evaluation of end user interactions with Spoken Dialogue Systems (SDS) is vital for identifying problematic conversations and for optimizing dialogue policy using a data-driven approach, such as reinforcement learning. One of the main obstacles to designing data-driven policies is the lack of an objective function to measure the success of a particular interaction.
Existing methods to measure dialogue success, along with their limitations, can be categorized into five groups: 1) Using sparse sentiment for end-to-end dialogue system training; 2) Using task success as dialogue evaluation criteria, which does not capture frustration caused in intermediate turns and assumes the end user's goal is known in advance; 3) Explicitly soliciting feedback from the user, which is intrusive and may cause dissatisfaction; 4) Using a popular approach, such as PARADISE [Walker et al., 2000], to predict dialogue-level satisfaction ratings provided by surveyed users, which has limited generalizability to a diverse (novice and experienced) user population [Deriu et al., 2019]; and 5) Estimating per-turn dialogue quality using trained Interaction Quality (IQ) [Schmitt et al., 2012] estimation models. Per-turn discrete 1-5 scale IQ labels are provided by the annotator while keeping track of dialogue quality till that turn.

Prior attempts to model user satisfaction at turn level as a continuous process evolving over time employed either users [Engelbrecht et al., 2009] or expert annotators [Higashinaka et al., 2010] to rate each turn in a dialogue. Both approaches suffered from a manual feature extraction process, which IQ addressed. Features used in the IQ estimation models were derived from the current turn, the dialogue history, and output from three Spoken Language Understanding (SLU) components, namely: Automatic Speech Recognition (ASR), Natural Language Understanding (NLU), and the dialogue manager. IQ annotations provided by expert annotators are reliable in comparison to explicit ratings provided by the end users. Various models have been explored to predict IQ, including Hidden Markov Models [Ultes et al., 2014], Support Vector Machines (SVM) [Schmitt et al., 2011], Support Vector Ordinal Regression (SVOR) [Asri et al., 2014], Recurrent Neural Networks (RNN) [Pragst et al., 2017], and Long Short-Term Memory Networks (LSTM) [Rach et al., 2017].

33rd Conference on Neural Information Processing Systems (NeurIPS 2019), Vancouver, Canada.

Conversations users have with modern-day SDS span multiple domains in a single dialogue session (Table 1). The IQ annotation scheme, which was developed using within-domain conversations, has limited generalizability to dialogues that span multiple domains.

| Utterance | Comment |
|---|---|
| User: Play latest hits. {domain: Music} | User makes a popular request |
| System: Shuffling from your playlist. | System addresses the request successfully |
| User: Stop. Cancel my evening appointment. {domain: Calendar} | User barges-in |
| System: Sorry I don’t know that one. | System identifies un-actionable request |
| User: Cancel my 7pm event if it is raining today. {domain: Calendar, Weather} | User paraphrases |
| System: Today in Seattle, there is 60% chance of rain. Should I cancel your 7pm event - Park Visit? | System informs and asks a question |
| User: Yes please. {domain: Global} | User confirms |
| System: Alright, 7pm event - Park visit Canceled. | System executes user request |

Table 1: Conversation spanning multiple domains.¹

The IQ approach of rating turns in a dialogue incrementally increases cognitive load on the annotators and lowers inter-annotator reliability. We also hypothesize that the features used in IQ estimation models are limited, and that including additional contextual signals improves the performance of dialogue quality estimation models for both single-turn and multi-turn conversations.²

To address the aforementioned gaps in existing dialogue quality estimation methods, we propose an end-to-end User Satisfaction Estimation (USE) metric which predicts a user satisfaction rating for each dialogue turn on a continuous 1-5 scale. To obtain a consistent, simple and generalizable annotation scheme that easily scales to multi-domain conversations, we introduce the turn-level Response Quality (RQ) annotation scheme.
To improve the performance of the satisfaction estimation models, we design five new feature sets, which are: 1) user request paraphrasing indicators, 2) cohesion between user request and system response, 3) diversity of topics discussed in a session, 4) un-actionable user request indicators, and 5) aggregate popularity of domains and topics across the entire population of the users.

Using the RQ annotation scheme, annotators rated dialogue turns from 26 single-turn and multi-turn sampled Alexa domains³ (e.g., Music, Calendar, Weather, Movie booking). We trained USE machine learning models using the annotated RQ ratings. To explain the model-predicted rating using the features' values, we experimented with four interpretable models that rank features by their importance. We benchmarked the performance of these models against two state-of-the-art dialogue quality prediction models. Using ablation studies, we showed improvement in the best performing USE model's performance using the new contextual features we introduced. In order to test the generalization performance of the model, we reserved one “new” multi-turn Alexa domain in the test set. Furthermore, we trained a dialogue-level satisfaction estimation model with explicit session-level ratings provided by both novice and experienced users on multi-turn dialogues from 14 domains.

¹ Due to confidentiality considerations, this example is not a real user conversation but was authored to mimic a true dialogue.

² In single-turn conversations the entire context is expected to be present in the same turn, while in a multi-turn case the context is carried from previous turns to address the user's request in the current turn.

³ A multi-turn domain supports multi-turn conversations, whereas a single-turn domain treats each user query as an independent request where context is not carried forward from previous turns.
We evaluated the impact of including turn-level satisfaction ratings as features on the performance of this domain-independent dialogue-level satisfaction estimation model.

The outline of the paper is as follows: Section 2 introduces the Response Quality annotation scheme and discusses its effectiveness in terms of predicting turn-level user satisfaction ratings. Section 3 summarizes the Response Quality annotated data, discusses dialogue-level satisfaction estimation using ratings provided by novice and experienced users, and presents our experimentation setup. Section 4 provides an empirical study of turn and dialogue-level satisfaction estimation models' performance and discusses results from the feature ablation study. Section 5 concludes.

2 Response Quality Annotation

We designed the Response Quality (RQ) annotation scheme to generate training data for our turn-level User Satisfaction Estimation (USE) model.

2.1 RQ annotation Scheme and Comparison with IQ

In RQ, similar to IQ annotations, annotators listened to raw audio and provided a per-turn system RQ rating on a 5-point scale. The scale we asked annotators to follow was:

1 = Terrible (system fails to understand and fulfill the user's request)
2 = Bad (understands the request but fails to satisfy it in any way)
3 = OK (understands the user's request and either partially satisfies the request or provides information on how the request can be fulfilled)
4 = Good (understands and satisfies the user request, but provides more information than what the user requested or takes extra turns before meeting the request)
5 = Excellent (understands and satisfies the user request completely and efficiently)

Using a 5-point scale over a binary scale provides an option to the annotators to factor in their subjective interpretation of the extent of success or failure of the system's response to satisfy a user's request. Annotators rated conversations that spanned multiple domains.
They were instructed to use the follow-up feedback from the user (e.g., the user expresses frustration or rephrases an initial request) in making judgements. Unlike the IQ annotation scheme, we removed the constraint on the annotators to keep track of the quality of the dialogue so far while determining RQ ratings for a given turn. This relaxation in constraint, coupled with making the full conversation context available to the annotators, reduced the cognitive load on them. This simplified annotation scheme not only helped in scaling RQ to multiple domains but also enabled precise identification of defective turns, which is not straightforward in the case of IQ, where an individual turn's IQ rating depends on the prior turns' ratings.

2.2 Inter Annotator Agreement (IAA) and Correlation with user satisfaction rating

We conducted a user study to verify the accuracy of the RQ and IQ [Schmitt et al., 2012] annotation processes. In the study, eight users were asked to achieve 30 pre-determined goals. Dialogue sessions to achieve the given goals spanned six single-turn and two multi-turn Alexa domains. For 15 out of the 30 goals, we asked the users to provide a satisfaction rating on a discrete (1-5) scale based on the turn's system response. For the remaining 15 goals, we asked the users to rate each turn incrementally based on their perception of the interaction so far. Then we sent the same utterances to six annotators (3 annotators per annotation type) for obtaining RQ (950 turns) and IQ (700 turns) annotations. The IQ annotations are fewer in number than the RQ annotations because we excluded turns where the users were unsure about the per-turn incremental satisfaction ratings they provided. We did not impose any restriction on the number of turns required to achieve a given goal, which led to an unequal number of turns per dialogue session across users who were assigned the same goal.
Since IQ annotation guidelines suggest rating unrelated queries independently, the annotators providing IQ annotations were also instructed to identify independent interactions within a given dialogue session. Specifically, annotators marked the beginning of a new interaction if they felt that the user's goal in the current turn was unrelated to the one he/she tried to achieve in the previous turn.

We found that the [1-5] scale RQ ratings provided by 3 annotators were highly correlated (Spearman's rho 0.94)⁴ with each other, suggesting high IAA. The mean RQ ratings were significantly (at the 95% confidence level) correlated (0.76) with surveyed users' ratings of their satisfaction with the system's response. In the case of IQ ratings, mean IAA and correlation with user ratings dropped to 0.20 and 0.32 respectively, suggesting limited generalizability of the IQ annotation scheme to multi-domain conversations. We used Cohen's kappa to measure IAA between IQ annotators on the binary annotations they provided to indicate the beginning of an interaction within a dialogue session. The low IAA (Cohen's kappa 0.16) achieved on these binary annotations indicates the ambiguity faced by the annotators in identifying the end user's goal from a sequence of turns within a dialogue.

⁴ We also asked 3 novice annotators to provide RQ ratings for 400 single and multi-turn conversations' turns; the IAA was still high, with a mean Spearman's rho of 0.75.

3 Data and Experimental Setup

This section describes our turn-level and dialogue-level datasets, details the list of features derived from various signals, and explains our experimentation setup.
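As an illustration of the agreement statistics reported in Section 2.2, the following is a minimal sketch of Spearman's rho (for the 1-5 ratings) and Cohen's kappa (for the binary "new interaction" marks). It is not the authors' code: the function names, the averaging of pairwise rho values across annotators, and the toy inputs are assumptions made for this example, and degenerate inputs (for example, an annotator who gives every turn the same rating) are not handled.

```python
from collections import Counter
from itertools import combinations
from math import sqrt


def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson's linear correlation coefficient of two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation computed on the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def mean_pairwise_rho(ratings_by_annotator):
    """Assumed IAA summary: average rho over all annotator pairs."""
    pairs = list(combinations(ratings_by_annotator, 2))
    return sum(spearman_rho(a, b) for a, b in pairs) / len(pairs)


def cohens_kappa(a, b):
    """Cohen's kappa for two annotators' categorical labels."""
    n = len(a)
    observed = sum(1 for p, q in zip(a, b) if p == q) / n
    count_a, count_b = Counter(a), Counter(b)
    expected = sum(count_a[c] * count_b[c] for c in set(a) | set(b)) / (n * n)
    return (observed - expected) / (1 - expected)
```

For instance, `cohens_kappa([1, 1, 0, 0], [1, 0, 1, 0])` evaluates to 0.0, since the two annotators agree on exactly as many items as chance would predict.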
3.1 Turn-level Response Quality Data

To demonstrate that our RQ annotation scheme and predictive models are domain-independent and effective for both single-turn and multi-turn dialogues, we used 30,500 dialogue turns randomly sampled from 26 single-turn (90%) and multi-turn (10%) domains that are representative of end user interactions with Alexa. The imbalance towards single-turn dialogues is due to annotation priority. We also tested the model's generalization performance on 200 dialogue turns sampled from a “new” multi-turn goal-oriented application. Figure 1 shows the diversity in rating distribution between the single-turn and multi-turn dialogues.

Figure 1: Distribution of RQ annotation for single-turn, multi-turn domains and new multi-turn application. Exact percentage on y-axis is masked for confidentiality.

3.2 Features

To estimate the turn-level user satisfaction score, we used features derived from the turn, the dialogue context, and the Spoken Language Understanding (SLU) components' output, similar to turn-level IQ prediction models [Ultes et al., 2017]. As shown in Figure 2, we define a dialogue turn at time $n$ as $t_n = (t^u_n, t^s_n)$, where $t^u_n$ and $t^s_n$ represent the user request and system response on turn $n$. A dialogue session of $N$ turns is defined as $(t_1 : t_N)$. To improve the performance of the USE models across single and multi-turn conversations spanning multiple domains, we introduced the following 5 sets of domain-independent features:

1. User request paraphrasing – Calculated by measuring syntactic and semantic (NLU predicted intent) similarity between consecutive turns' user utterances.
2. Cohesion between response and request – Cohesiveness of the system response with a user request is computed by calculating the Jaccard similarity score between the user request and the system response. The system response “Here is a sci-fi movie” will get a higher cohesion score than “Here is a comedy movie” if the user request was “recommend a sci-fi movie”.
3. Aggregate topic popularity – Usage statistics, such as aggregate domain and intent usage count and the ratio of usage count to number of customers, provide a prior on the popularity of a topic across all users of Alexa.
4. Un-actionable user request – Identifies if the user request could not be fulfilled, by searching for phrases indicating an apology and negation in the system response (e.g., “sorry I don't know how to do that”).
5. Diversity of topics in a session – This dialogue-level feature is calculated using the percentage of unique intents till the current dialogue turn.

Figure 2: Dialogue and turn definitions. The blue and red lines indicate the history used for generating turn-based and dialogue-based features for the user satisfaction estimation on turn $t_n$.

3.3 Dialogue-level satisfaction estimation using predicted turn-level ratings

To show that predicted turn-level satisfaction ratings are effective in estimating overall dialogue-level satisfaction, we trained dialogue-level quality estimation models to predict explicit dialogue-level ratings provided by users. Dialogue-level labels were obtained from ratings provided by 10 users (5 novice and 5 experienced) who were asked to achieve 40 multi-turn goals spanning 14 {commercial conversation agent's} domains (example goals in Appendix Table 5). Since earlier attempts to estimate explicit dialogue-level satisfaction ratings did not generalize to different user populations (see Section 1), we collected data from users belonging to both “novice” and “experienced” groups. A novice user has minimal experience conversing with the SDS and has never used the functionality provided by the 14 domains prior to the study. An experienced user is a seasoned user of Alexa and its domains.
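Three of the feature sets listed in Section 3.2 (cohesion, un-actionable request, and topic diversity) are simple enough to sketch in a few lines. The code below is an illustrative approximation rather than the authors' implementation: the tokenizer, the apology and negation word lists, and the function names are all assumptions, and topic diversity is returned as a fraction rather than a percentage.

```python
import re

# Hypothetical phrase lists; the paper does not enumerate the phrases it searches for.
APOLOGY_PATTERN = re.compile(r"\b(sorry|apologize|apologies)\b")
NEGATION_PATTERN = re.compile(r"\b(don't|do not|can't|cannot|unable|not)\b")


def tokens(text):
    """Lowercase word tokens as a set (assumed tokenization)."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def cohesion(user_request, system_response):
    """Jaccard similarity between request and response token sets."""
    req, resp = tokens(user_request), tokens(system_response)
    if not req and not resp:
        return 0.0
    return len(req & resp) / len(req | resp)


def is_unactionable(system_response):
    """True when the response contains both an apology and a negation."""
    text = system_response.lower().replace("\u2019", "'")
    return bool(APOLOGY_PATTERN.search(text)) and bool(NEGATION_PATTERN.search(text))


def topic_diversity(intents_so_far):
    """Fraction of unique intents among the turns seen so far."""
    return len(set(intents_so_far)) / len(intents_so_far)


request = "recommend a sci-fi movie"
print(cohesion(request, "Here is a sci-fi movie"))   # higher score
print(cohesion(request, "Here is a comedy movie"))   # lower score
print(is_unactionable("Sorry I don't know that one."))
print(topic_diversity(["PlayMusic", "PlayMusic", "CancelEvent", "GetWeather"]))
```

With this tokenization the sci-fi response shares four of seven distinct tokens with the request, while the comedy response shares two of eight, which reproduces the ordering described in item 2.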
Users provided their satisfaction rating with the dialogue on a discrete [1-5] scale at the end of each session, irrespective of the outcome. The rating scale we asked the users to follow was 1 = Very dissatisfied, 2 = Dissatisfied, 3 = Moderately Satisfied (or Slightly dissatisfied), 4 = Satisfied and 5 = Extremely Satisfied. We collected 1,042 dialogue-level satisfaction ratings in total. 45% of these ratings came from novice users and the rest were provided by the experienced users. Figure 3 shows the distribution of dialogue-level ratings from both sets of users. Features to train the dialogue-level satisfaction model included turn-level features computed on the last turn ($t_N$), aggregate statistics (e.g., average ASR confidence score, number of barge-ins) computed over all turns ($t_1 : t_N$) in a dialogue session, and the average estimated turn-level satisfaction rating calculated across all turns in the same session.

Figure 3: Distribution of dialogue-level satisfaction ratings. Exact percentage on y-axis is masked for confidentiality.

3.4 Experimental Setup

This section describes the experimental setup we used for training and evaluating turn and dialogue-level satisfaction estimation models.

3.4.1 Turn-level satisfaction estimation

We obtained a turn's RQ rating by averaging the discrete 1-5 labels provided by 3 annotators. Hence, we considered regression models for experimentation, which predicted the satisfaction rating on a continuous 1-5 scale. To achieve interpretability, in our experiments we selected four models that rank features by their importance: LASSO [Tibshirani, 1996], Decision Tree Regression [Agrawal et al., 1993], Random Forest Regression [Breiman, 2001] and Gradient Boosting Regression [Friedman, 2001]. For benchmarking we used Multi-layer Perceptron (MLP) [Gardner and Dorling, 1998] and Support Vector Regression (SVR)⁵.
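The dialogue-level feature construction described in Section 3.3 (last-turn features, session aggregates, and the average estimated turn-level rating) might look roughly like the sketch below. The dictionary keys (`asr_confidence`, `barge_in`) and the function name are hypothetical; the paper names the kinds of signals used but not a data schema.

```python
from statistics import mean


def dialogue_features(turns, predicted_turn_ratings):
    """Build one dialogue-level feature row from per-turn signals.

    turns: list of dicts with hypothetical keys 'asr_confidence' (float)
           and 'barge_in' (bool), ordered from the first to the last turn.
    predicted_turn_ratings: turn-level model outputs on the 1-5 scale,
           one per turn.
    """
    if not turns or len(turns) != len(predicted_turn_ratings):
        raise ValueError("need one predicted rating per turn")
    last_turn = turns[-1]
    return {
        # Turn-level feature computed on the last turn of the session.
        "last_turn_asr_confidence": last_turn["asr_confidence"],
        # Aggregate statistics computed over all turns in the session.
        "avg_asr_confidence": mean(t["asr_confidence"] for t in turns),
        "num_barge_ins": sum(1 for t in turns if t["barge_in"]),
        "num_turns": len(turns),
        # Average estimated turn-level satisfaction rating.
        "avg_estimated_turn_rating": mean(predicted_turn_ratings),
    }


session = [
    {"asr_confidence": 0.9, "barge_in": False},
    {"asr_confidence": 0.6, "barge_in": True},
    {"asr_confidence": 0.9, "barge_in": False},
]
print(dialogue_features(session, [4.5, 2.0, 4.0]))
```

Keeping the average estimated turn rating as a single scalar mirrors the description above; richer summaries (minimum rating, rating of the last turn) would be a different design and are not claimed by the paper.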
Data was randomly split into training (60%), validation (20%) and test (20%) sets; however, turns from the “new” multi-turn application were included only in the test set.

3.4.2 Dialogue-level satisfaction estimation

We trained the same four interpretable regression models (LASSO, Decision Tree, Random Forest and Gradient Boosting) to predict explicit dialogue-level ratings on a continuous [1-5] scale. Since ratings obtained at dialogue level from users are sparse (3.5% of the total number of turn-level RQ annotations), we performed 9-fold cross validation on a randomly sampled 90% of the data and evaluated the performance of the trained model on the remaining 10% test data.

3.4.3 Evaluation Criteria

We used Pearson's linear correlation coefficient ($r$) for evaluating each model's 1-5 prediction performance. For the use case of identifying problematic turns from an end user's perspective, it is sufficient to identify satisfactory (rating ≥ 3) and dissatisfactory (rating < 3) interactions. For evaluating turn-level satisfaction models, we used the F-score for the dissatisfactory class as the binary classification metric. Identifying dissatisfactory turns is of more importance and is in general a difficult task, as the majority of turns belong to the satisfactory class (Figure 1). We used satisfactory and dissatisfactory binary class prediction accuracy as an additional metric to evaluate the performance of dialogue-level satisfaction estimation models since, unlike turn-level data, the dialogue ratings are not concentrated around one class (Figure 3).

4 Results and Analysis

In this section we present a performance analysis of both turn-level and dialogue-level satisfaction estimation models.
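The evaluation criteria of Section 3.4.3 can be sketched as follows. This is a minimal illustration, assuming that "F-score" means the balanced F1 score and that both predicted and reference ratings are binarized with the same threshold of 3; the function names and example ratings are invented for this sketch.

```python
from math import sqrt

SATISFACTORY_THRESHOLD = 3.0  # rating >= 3 is satisfactory, < 3 dissatisfactory


def pearson_r(predicted, reference):
    """Pearson's linear correlation between predicted and reference ratings."""
    n = len(predicted)
    mp, mr = sum(predicted) / n, sum(reference) / n
    cov = sum((p - mp) * (r - mr) for p, r in zip(predicted, reference))
    sp = sqrt(sum((p - mp) ** 2 for p in predicted))
    sr = sqrt(sum((r - mr) ** 2 for r in reference))
    return cov / (sp * sr)


def f_dissatisfactory(predicted, reference):
    """F1 score (assumed) with the dissatisfactory class as the positive class."""
    tp = fp = fn = 0
    for p, r in zip(predicted, reference):
        pred_bad = p < SATISFACTORY_THRESHOLD
        true_bad = r < SATISFACTORY_THRESHOLD
        if pred_bad and true_bad:
            tp += 1
        elif pred_bad:
            fp += 1
        elif true_bad:
            fn += 1
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def binary_accuracy(predicted, reference):
    """Share of items whose satisfactory/dissatisfactory class is predicted correctly."""
    hits = sum(
        (p < SATISFACTORY_THRESHOLD) == (r < SATISFACTORY_THRESHOLD)
        for p, r in zip(predicted, reference)
    )
    return hits / len(predicted)


reference = [1.0, 2.3, 4.0, 5.0, 3.0]
predicted = [1.5, 3.2, 4.1, 4.8, 2.9]
print(pearson_r(predicted, reference))
print(f_dissatisfactory(predicted, reference))
print(binary_accuracy(predicted, reference))
```

In the toy data one dissatisfactory turn is caught, one is missed, and one satisfactory turn is flagged in error, so precision and recall are both 0.5 and three of five turns are classified correctly.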
4.1 Turn-level satisfaction estimation results

| Model \ Metric | Single-turn: Correlation | Single-turn: F-dissatisfactory | Multi-turn: Correlation | Multi-turn: F-dissatisfactory | Multi-turn new application: Correlation | Multi-turn new application: F-dissatisfactory |
|---|---|---|---|---|---|---|
| Lasso | 0.70 ± 0.01 | 0.69 ± 0.02 | 0.68 ± 0.05 | 0.74 ± 0.06 | 0.62 ± 0.07 | 0.74 ± 0.06 |
| Decision Tree | 0.73 ± 0.01 | 0.70 ± 0.03 | 0.67 ± 0.05 | 0.71 ± 0.06 | 0.58 ± 0.07 | 0.73 ± 0.05 |
| Random Forest | 0.77 ± 0.01 | 0.74 ± 0.02 | 0.74 ± 0.05 | 0.75 ± 0.05 | 0.61 ± 0.07 | 0.76 ± 0.05 |
| G.Boost | 0.80 ± 0.01 | 0.77 ± 0.02 | 0.79 ± 0.04 | 0.78 ± 0.05 | 0.67 ± 0.06 | 0.79 ± 0.05 |
| SVR | 0.73 ± 0.01 | 0.72 ± 0.02 | 0.75 ± 0.04 | 0.71 ± 0.06 | 0.64 ± 0.07 | 0.76 ± 0.05 |
| MLP | 0.75 ± 0.01 | 0.73 ± 0.02 | 0.74 ± 0.04 | 0.73 ± 0.05 | 0.67 ± 0.06 | 0.79 ± 0.05 |

Table 2: Six machine learning regression models' performance on turns from single-turn, multi-turn conversations and the new multi-turn test application. Each cell shows the mean and 95% bootstrap confidence interval with the highest mean in bold.

⁵ Recurrent Neural Networks and Long Short-term Memory Networks [Rach et al., 2017] [Drucker et al., 1997] showed non-significant improvement (≤ 0.02 difference in Spearman's rho) over state-of-the-practice MLP and SVM models in predicting IQ.

Amongst the six models we experimented with, Gradient Boosting Regression achieves superior performance on single-turn domains and multi-turn domains ($r \approx 0.79$ and F-dissatisfaction = 0.77). On the new multi-turn application, both the Gradient Boosting Regression and MLP models achieve better performance ($r = 0.67$ and F-dissatisfaction = 0.79) in comparison to the other four models we experimented with (results in Table 2).
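Table 2 reports each metric as a mean with a 95% bootstrap confidence interval. The paper does not say which bootstrap variant was used, so the sketch below assumes the simple percentile bootstrap over test items; `bootstrap_ci`, its parameters, and the `mean_absolute_error` metric used in the demo are hypothetical names chosen for this example.

```python
import random
from statistics import mean


def bootstrap_ci(predicted, reference, metric, n_resamples=1000, alpha=0.05, seed=0):
    """Percentile bootstrap (assumed variant) for a paired evaluation metric.

    Resamples (prediction, reference) pairs with replacement, recomputes the
    metric on each resample, and returns (mean, lower bound, upper bound).
    """
    rng = random.Random(seed)
    n = len(predicted)
    scores = []
    for _ in range(n_resamples):
        idx = [rng.randrange(n) for _ in range(n)]
        scores.append(metric([predicted[i] for i in idx],
                             [reference[i] for i in idx]))
    scores.sort()
    lower = scores[int((alpha / 2) * n_resamples)]
    upper = scores[min(n_resamples - 1, int((1 - alpha / 2) * n_resamples))]
    return mean(scores), lower, upper


def mean_absolute_error(predicted, reference):
    """Stand-in metric for the demo; any paired metric function can be passed."""
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(predicted)


reference = [1.0, 2.3, 4.0, 5.0, 3.0, 4.7, 2.0, 3.3]
predicted = [1.5, 3.2, 4.1, 4.8, 2.9, 4.0, 2.4, 3.0]
center, low, high = bootstrap_ci(predicted, reference, mean_absolute_error)
print(f"{center:.2f} (95% CI {low:.2f} to {high:.2f})")
```

A correlation or F-score function can be passed as `metric` in the same way, although very small samples can produce resamples on which such metrics are undefined (for example, when every resampled rating is identical).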
| Features Removed \ Metric | Single-turn: Correlation | Single-turn: F-dissatisfactory | Multi-turn: Correlation | Multi-turn: F-dissatisfactory | Multi-turn new application: Correlation | Multi-turn new application: F-dissatisfactory |
|---|---|---|---|---|---|---|
| None | 0.80 ± 0.01 | 0.77 ± 0.02 | 0.79 ± 0.04 | 0.78 ± 0.05 | 0.67 ± 0.06 | 0.79 ± 0.05 |
| Aggregate topic popularity | 0.74 ± 0.02 | 0.72 ± 0.02 | 0.75 ± 0.04 | 0.72 ± 0.06 | 0.62 ± 0.07 | 0.74 ± 0.06 |
| Un-actionable request identifier | 0.76 ± 0.01 | 0.74 ± 0.02 | 0.78 ± 0.05 | 0.77 ± 0.06 | 0.50 ± 0.08 | 0.70 ± 0.05 |
| Cohesion between request and response | 0.78 ± 0.01 | 0.77 ± 0.02 | 0.76 ± 0.04 | 0.77 ± 0.05 | 0.63 ± 0.07 | 0.77 ± 0.06 |
| Topic diversity | 0.79 ± 0.01 | 0.77 ± 0.01 | 0.77 ± 0.04 | 0.75 ± 0.05 | 0.67 ± 0.05 | 0.78 ± 0.05 |
| User request paraphrasing | 0.78 ± 0.01 | 0.76 ± 0.02 | 0.78 ± 0.04 | 0.76 ± 0.05 | 0.65 ± 0.05 | 0.76 ± 0.05 |

Table 3: Ablation study results on turn-level satisfaction estimation performance. Each cell shows the mean and 95% bootstrap confidence interval with the highest mean in bold. Performance is measured using linear correlation between predicted and annotated RQ ratings and F-score on the dissatisfactory class (F-dissatisfactory). Bold highlights indicate statistically significant difference in performance.

Based on the ablation study using the Gradient Boosting Regression model, we found that the new features improved every single metric on the test set. On single-turn dialogues, features corresponding to “aggregate topic popularity” caused the largest statistically significant relative improvement, ∼7%, in linear correlation (0.741 → 0.796) and F-dissatisfaction (0.72 → 0.77) scores. On the new multi-turn application, the ∼35% relative improvement in linear correlation (0.496 → 0.67) shows the significant impact of the “Un-actionable request” feature on generalization performance (Table 3). The five new feature sets we introduced occur in the top 10 sets of important features (Appendix Table 7) returned by the model based on their computed importance score.
4.2 Dialogue-level satisfaction estimation results

| Model \ Metric | Without average estimated turn-level rating feature: Accuracy | Without: F-dissatisfactory | Without: Correlation | With average estimated turn-level rating feature: Accuracy | With: F-dissatisfactory | With: Correlation |
|---|---|---|---|---|---|---|
| Lasso | 0.73 ± 0.05 | 0.64 ± 0.09 | 0.45 ± 0.13 | 0.78 ± 0.07 | 0.71 ± 0.09 | 0.58 ± 0.11 |
| Decision Tree | 0.64 ± 0.11 | 0.56 ± 0.12 | 0.31 ± 0.11 | 0.73 ± 0.06 | 0.63 ± 0.08 | 0.52 ± 0.10 |
| Random Forest | 0.67 ± 0.06 | 0.61 ± 0.12 | 0.47 ± 0.11 | 0.71 ± 0.07 | 0.64 ± 0.11 | 0.58 ± 0.10 |
| G.Boost | 0.68 ± 0.05 | 0.61 ± 0.12 | 0.58 ± 0.11 | 0.79 ± 0.07 | 0.73 ± 0.08 | 0.60 ± 0.09 |

Table 4: Dialogue-level satisfaction estimation performance obtained by four machine learning models with and without the average estimated turn-level satisfaction rating as a feature. Each cell shows the mean and 95% bootstrap confidence interval with the highest mean in bold. Wider confidence intervals are due to small sample size.

Across all four models we experimented with, we observed an improvement in dialogue-level satisfaction estimation results by including the average estimated turn-level satisfaction rating as a feature (results in Table 4). Turn-level satisfaction ratings were estimated using the Gradient Boosting Regression model described in the previous section (Section 4.1). The best performing dialogue-level Gradient Boosting Regression model achieved a 16% relative improvement (68% → 79%) in binary “satisfactory/dissatisfactory” class prediction accuracy and a ∼3.5% relative improvement (0.58 → 0.60) in correlation between predicted and true dialogue-level labels. Based on feature importance scores returned by the Gradient Boosting Regression model, the average predicted turn-level satisfaction rating score is the most important feature for predicting the dialogue-level satisfaction rating, followed by average ASR scores and average aggregate domain popularity (top 10 features in Appendix Table 9).
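The relative improvements quoted in Sections 4.1 and 4.2 follow from the before and after values by the usual formula, (after - before) / before. The short sketch below recomputes them from the rounded figures printed in the text; the function name is ours, and small differences from the quoted percentages are presumably due to rounding of the underlying scores.

```python
def relative_improvement(before, after):
    """Relative change from `before` to `after`, expressed in percent."""
    return (after - before) / before * 100.0


reported = {
    "single-turn correlation (aggregate topic popularity)": (0.741, 0.796),
    "single-turn F-dissatisfaction (aggregate topic popularity)": (0.72, 0.77),
    "new-application correlation (un-actionable request)": (0.496, 0.67),
    "dialogue-level binary accuracy": (0.68, 0.79),
    "dialogue-level correlation": (0.58, 0.60),
}

for name, (before, after) in reported.items():
    print(f"{name}: {relative_improvement(before, after):.1f}%")
```

From these rounded inputs the five values come out at about 7.4%, 6.9%, 35.1%, 16.2% and 3.4%, in line with the ∼7%, ∼35%, 16% and ∼3.5% quoted in the text.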
5 Conclusion

In this paper, we described a user-centric and domain-independent approach for evaluating user satisfaction in multi-domain conversations users have with an AI assistant. We introduced the Response Quality (RQ) annotation scheme, which is highly correlated ($r = 0.76$) with explicit turn-level user satisfaction ratings. By designing five additional new features, we achieved a high linear correlation of ∼0.79 between annotated RQ and predicted User Satisfaction ratings with Gradient Boosting Regression as the User Satisfaction Estimation (USE) model, for both single-turn and multi-turn dialogues. Gradient Boosting Regression and Multi-Layer Perceptron (MLP) models generalized to the new domain better ($r = 0.67$) than other models. By including turn-level satisfaction predictions as features, we observed a relative 16% improvement (68% → 79%) in binary “dissatisfactory/satisfactory” class prediction accuracy of a domain-independent dialogue-level satisfaction estimation model. The Gradient Boosting Regression based dialogue-level model estimated explicit dialogue-level ratings provided by novice and experienced users on conversations spanning 14 domains and achieved a significant (at the 95% confidence level) 0.60 correlation between predicted and true user ratings. With statistically significant ∼7% and ∼35% relative improvements in linear correlation on existing domains and the new multi-turn application respectively, our ablation study supported our hypothesis that the new features improve model prediction performance.

On multi-domain conversations, we plan to explore the use of Deep Neural Net models to reduce handcrafting of features [Rach et al., 2017] and to jointly estimate end user satisfaction at turn and dialogue level, though these models reduce interpretability.
To learn dialogue policies using reinforcement learning, we plan to experiment with the proposed RQ-based User Satisfaction metric as an alternative for reward modeling.

Acknowledgments

We thank Alborz Geramifard and the NeurIPS Conversation AI workshop reviewers for their insightful feedback and guidance. We also thank Longshaokan Wang, Swanand Joshi and Joshua Levy for helping with data procurement and turn-level satisfaction estimation setup. Finally, we thank Alison Bauter Engel, Kate Ridgeway and the Alexa Data Services-RAMP team for their significant help with user studies and data annotations.

References

Rakesh Agrawal, Tomasz Imieliński, and Arun Swami. Mining association rules between sets of items in large databases. SIGMOD Rec., 22(2):207–216, June 1993.

L. E. Asri, H. Khouzaimi, R. Laroche, and O. Pietquin. Ordinal regression for interaction quality prediction. In 2014 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 3221–3225, May 2014.

Leo Breiman. Random forests. Machine Learning, 45(1):5–32, 2001.

Jan Deriu, Álvaro Rodrigo, Arantxa Otegi, Guillermo Echegoyen, Sophie Rosset, Eneko Agirre, and Mark Cieliebak. Survey on evaluation methods for dialogue systems. CoRR, abs/1905.04071, 2019.

Harris Drucker, Christopher J. C. Burges, Linda Kaufman, Alex J. Smola, and Vladimir Vapnik. Support vector regression machines. In M. C. Mozer, M. I. Jordan, and T. Petsche, editors, Advances in Neural Information Processing Systems 9, pages 155–161. MIT Press, 1997.

Klaus-Peter Engelbrecht, Florian Gödde, Felix Hartard, Hamed Ketabdar, and Sebastian Möller. Modeling user satisfaction with hidden Markov models. In Proceedings of the SIGDIAL 2009 Conference, pages 170–177, London, UK, September 2009. Association for Computational Linguistics.

Jerome H. Friedman. Greedy function approximation: a gradient boosting machine. Annals of Statistics, pages 1189–1232, 2001.

M. W. Gardner and S. R. Dorling. Artificial neural networks (the multilayer perceptron) – a review of applications in the atmospheric sciences. Atmospheric Environment, 32(14-15):2627–2636, 1998.

Ryuichiro Higashinaka, Yasuhiro Minami, Kohji Dohsaka, and Toyomi Meguro. Issues in predicting user satisfaction transitions in dialogues: Individual differences, evaluation criteria, and prediction models. In IWSDS, 2010.

Louisa Pragst, Stefan Ultes, and Wolfgang Minker. Recurrent Neural Network Interaction Quality Estimation, pages 381–393. Springer Singapore, Singapore, 2017.

Niklas Rach, Wolfgang Minker, and Stefan Ultes. Interaction quality estimation using long short-term memories. In SIGDIAL Conference, 2017.

Alexander Schmitt, Benjamin Schatz, and Wolfgang Minker. Modeling and predicting quality in spoken human-computer interaction. In SIGDIAL Conference, 2011.

Alexander Schmitt, Stefan Ultes, and Wolfgang Minker. A parameterized and annotated spoken dialog corpus of the CMU Let's Go bus information system. In Nicoletta Calzolari (Conference Chair), Khalid Choukri, Thierry Declerck, Mehmet Uğur Doğan, Bente Maegaard, Joseph Mariani, Asuncion Moreno, Jan Odijk, and Stelios Piperidis, editors, Proceedings of the Eight International Conference on Language Resources and Evaluation (LREC'12), Istanbul, Turkey, May 2012. European Language Resources Association (ELRA).

Robert Tibshirani. Regression shrinkage and selection via the lasso. Journal of the Royal Statistical Society. Series B (Methodological), 58(1):267–288, 1996.

Stefan Ultes, Robert ElChab, and Wolfgang Minker. Application and evaluation of a conditioned hidden Markov model for estimating interaction quality of spoken dialogue systems. In Joseph Mariani, Sophie Rosset, Martine Garnier-Rizet, and Laurence Devillers, editors, Natural Interaction with Robots, Knowbots and Smartphones, pages 303–312, New York, NY, 2014. Springer New York.
Stefan Ultes, Pawel Budzianowski, Iñigo Casanueva, Nikola Mrksic, Lina Maria Rojas-Barahona, Pei-Hao Su, Tsung-Hsien Wen, Milica Gasic, and Steve J. Young. Domain-independent user satisfaction reward estimation for dialogue policy learning. In INTERSPEECH, 2017.

Marilyn Walker, Candace Kamm, and Diane Litman. Towards developing general models of usability with PARADISE. Nat. Lang. Eng., 6(3-4):363–377, September 2000.

A Appendices

| Domain/Application | Goal |
|---|---|
| Multi-turn conversational bot for movies | Get ratings of two other movies directed by the director of a movie you liked watching recently. |
| Multi-turn conversational bot for movies | Ask for movie recommendations and navigate through them until you get a recommendation you like. |
| Multi-turn conversational bot for movies → Music | Find details about the cast of your favorite movie and play the movie's soundtrack. |
| Ticket-booking third party application | Get a list of theaters near you and find out what is playing in at least 2 theaters returned by the application for the same query. |
| Music → Knowledge | Find a song by an artist and find out in which movie it was used. |
| Music → Music | Find a popular album by an artist and play it. |
| Knowledge → Local Search | Ask for a fact about a historical monument and find its address. |
| Recipe | Ask for a general type of recipe (e.g. cookies, pasta dishes, etc.), choose a recipe from the list using your voice, and then add the ingredients to your shopping list. |
| Ticket-booking third party application | Try purchasing a ticket for a movie playing in theaters near you. |
| Ticket-booking third party application | Find what's playing in a theater outside your current city (or outside the default city from your device). |
| Notification | Add an appointment to the calendar and then change the time (requires the user to first cancel and then create a new appointment). |

Table 5: Example multi-turn goals for the user study.

| Model | Hyperparameter: optimal value |
|---|---|
| Lasso | alpha: 0.001 |
| Decision Trees | max-depth: 33, min-samples-leaf: 31, min-samples-split: 23 |
| Random Forest | max-depth: 49, min-samples-leaf: 11, min-samples-split: 27 |
| Gradient Boosting Decision Trees | max-depth: 23, min-samples-leaf: 17, min-samples-split: 59 |
| SVR | c: 2, gamma: 0.024 |
| MLP | n-layers: 3, batch-size: 128, hidden size: 100, solver: 'sgd', activation: 'relu' |

Table 6: Optimal hyperparameter values used for training turn-level User Satisfaction Estimation (USE) models.

| Feature set description | Turn(s) the feature set is computed on |
|---|---|
| ASR & NLU confidence scores | $t^u_n$ |
| Length of user request and system response | $t^u_n$, $t^s_n$ |
| Time between consecutive user requests | $t^u_n$ - $t^u_{n+1}$ |
| Aggregate - intent popularity | $t^u_n$ |
| Un-actionable user request | $t^s_n$ |
| Cohesion between system response and user request | $t^u_n$, $t^s_n$ |
| Length of dialogue | $t_0$ - $t_n$ |
| Topic diversity | $t^u_0$ - $t^u_n$ |
| User paraphrasing his/her request | $t^u_n$ - $t^u_{n+1}$ |

Table 7: Based on the turn-level Gradient Boosting Regression model's output, top 10 feature sets⁶ ranked by importance score. In bold are the new feature sets we introduced.

| Model | Hyperparameter: optimal value |
|---|---|
| Lasso | alpha: 0.01 |
| Decision Trees | max-depth: 2, min-samples-leaf: 5, min-samples-split: 2 |
| Random Forest | max-depth: 4, min-samples-leaf: 8, min-samples-split: 13 |
| Gradient Boosting Decision Trees | max-depth: 2, min-samples-leaf: 8, min-samples-split: 17 |

Table 8: Optimal hyperparameter values used for training dialogue-level satisfaction estimation models.
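The turn-level settings in Table 6 can be written down as keyword arguments for a machine learning toolkit. The sketch below assumes scikit-learn, which the paper does not name; the choice rests only on the resemblance between the table's parameter names and scikit-learn's estimator arguments. The names `TABLE_6`, `to_kwargs` and `build_turn_level_models` are hypothetical, and reading "n-layers: 3" as input, one hidden and output layer is also an assumption.

```python
# Turn-level hyperparameters transcribed from Table 6.
TABLE_6 = {
    "Lasso": {"alpha": 0.001},
    "Decision Trees": {"max-depth": 33, "min-samples-leaf": 31, "min-samples-split": 23},
    "Random Forest": {"max-depth": 49, "min-samples-leaf": 11, "min-samples-split": 27},
    "Gradient Boosting Decision Trees": {
        "max-depth": 23, "min-samples-leaf": 17, "min-samples-split": 59,
    },
    "SVR": {"c": 2, "gamma": 0.024},
    "MLP": {"n-layers": 3, "batch-size": 128, "hidden size": 100,
            "solver": "sgd", "activation": "relu"},
}


def to_kwargs(params):
    """Convert Table 6 style names into scikit-learn style keyword arguments."""
    kwargs = {name.replace("-", "_").replace(" ", "_"): value
              for name, value in params.items()}
    if "c" in kwargs:
        # scikit-learn spells the SVR regularization parameter as upper-case C.
        kwargs["C"] = kwargs.pop("c")
    if "n_layers" in kwargs:
        # Assumption: n-layers counts input, hidden and output layers, so
        # 3 layers means a single hidden layer of `hidden size` units.
        n_hidden = kwargs.pop("n_layers") - 2
        kwargs["hidden_layer_sizes"] = (kwargs.pop("hidden_size"),) * n_hidden
    return kwargs


def build_turn_level_models():
    """Instantiate one regressor per Table 6 row (requires scikit-learn)."""
    from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
    from sklearn.linear_model import Lasso
    from sklearn.neural_network import MLPRegressor
    from sklearn.svm import SVR
    from sklearn.tree import DecisionTreeRegressor

    estimators = {
        "Lasso": Lasso,
        "Decision Trees": DecisionTreeRegressor,
        "Random Forest": RandomForestRegressor,
        "Gradient Boosting Decision Trees": GradientBoostingRegressor,
        "SVR": SVR,
        "MLP": MLPRegressor,
    }
    return {name: estimators[name](**to_kwargs(params))
            for name, params in TABLE_6.items()}
```

Calling `build_turn_level_models()` returns unfitted estimators keyed by the model names used in Table 6. The dialogue-level values in Table 8 could be handled the same way by swapping in that table's numbers.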
| Feature set description | Turn(s) the feature set is computed on |
|---|---|
| Average estimated turn-level satisfaction ratings | $t_0$ - $t_N$ |
| Average ASR confidence | $t_0$ - $t_N$ |
| Last turn's ASR confidence | $t_N$ |
| Average aggregate - domain popularity | $t_0$ - $t_N$ |
| Number of question prompts from the system side | $t^s_0$ - $t^s_N$ |
| Average time difference between consecutive utterances | $t^u_0$ - $t^u_N$ |
| Average NLU confidence | $t_0$ - $t_N$ |
| Length of the dialogue | $t_0$ - $t_N$ |
| Last turn's aggregate intent popularity | $t_N$ |
| Average aggregate - intent popularity | $t_0$ - $t_N$ |

Table 9: Based on the dialogue-level Gradient Boosting Regression model's output, top 10 features⁷ ranked by importance score.

⁶ For confidentiality we are not mentioning the entire list of feature sets used in the model.

⁷ For confidentiality we are not mentioning the entire list of features used in the model.
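Several of the Table 9 features are simple aggregates over the turns $t_0$ to $t_N$ of a dialogue. The following is a minimal sketch of that aggregation, not the authors' implementation: the function name `dialogue_features`, the per-turn dictionary keys, and the definition of dialogue length as the number of turns are all assumptions made for illustration.

```python
from statistics import mean


def dialogue_features(turns):
    """Aggregate per-turn values into a few Table 9 style dialogue-level features.

    `turns` is an ordered, non-empty list of dicts with hypothetical keys:
      predicted_rating   - estimated turn-level satisfaction rating (1-5 scale)
      asr_conf, nlu_conf - recognition and understanding confidence scores
      system_prompted    - True if the system response was a question prompt
    """
    last = turns[-1]
    return {
        "avg_turn_rating": mean([t["predicted_rating"] for t in turns]),
        "avg_asr_conf": mean([t["asr_conf"] for t in turns]),
        "last_asr_conf": last["asr_conf"],
        "avg_nlu_conf": mean([t["nlu_conf"] for t in turns]),
        "n_question_prompts": sum(1 for t in turns if t["system_prompted"]),
        # Assumption: dialogue length is measured as the number of turns.
        "dialogue_length": len(turns),
    }


example = [
    {"predicted_rating": 4.0, "asr_conf": 0.5, "nlu_conf": 0.75, "system_prompted": True},
    {"predicted_rating": 2.0, "asr_conf": 0.25, "nlu_conf": 0.25, "system_prompted": False},
    {"predicted_rating": 3.0, "asr_conf": 0.75, "nlu_conf": 0.5, "system_prompted": True},
]
features = dialogue_features(example)
```

The resulting dictionary could serve as one input row for a dialogue-level model, with the predicted turn-level ratings supplied by a separately trained turn-level estimator.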
