FastSpeech: Fast, Robust and Controllable Text to Speech

Neural network based end-to-end text to speech (TTS) has significantly improved the quality of synthesized speech. Prominent methods (e.g., Tacotron 2) usually first generate mel-spectrogram from text, and then synthesize speech from the mel-spectrog…

Authors: Yi Ren, Yangjun Ruan, Xu Tan

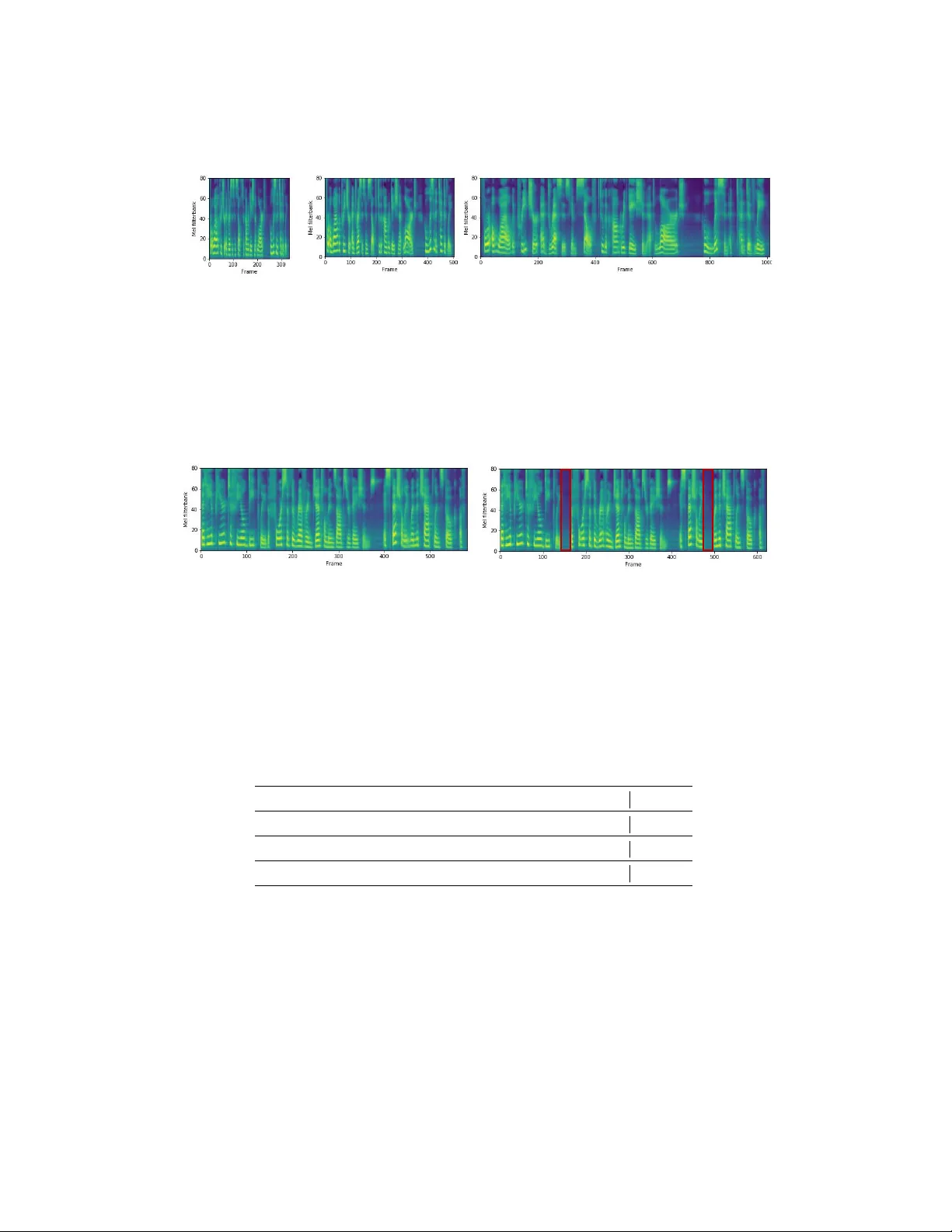

Yi Ren∗, Zhejiang University, rayeren@zju.edu.cn
Yangjun Ruan∗, Zhejiang University, ruanyj3107@zju.edu.cn
Xu Tan, Microsoft Research, xuta@microsoft.com
Tao Qin, Microsoft Research, taoqin@microsoft.com
Sheng Zhao, Microsoft STC Asia, Sheng.Zhao@microsoft.com
Zhou Zhao†, Zhejiang University, zhaozhou@zju.edu.cn
Tie-Yan Liu, Microsoft Research, tyliu@microsoft.com

Abstract

Neural network based end-to-end text to speech (TTS) has significantly improved the quality of synthesized speech. Prominent methods (e.g., Tacotron 2) usually first generate a mel-spectrogram from text, and then synthesize speech from the mel-spectrogram using a vocoder such as WaveNet. Compared with traditional concatenative and statistical parametric approaches, neural network based end-to-end models suffer from slow inference speed, and the synthesized speech is usually not robust (i.e., some words are skipped or repeated) and lacks controllability (voice speed or prosody control). In this work, we propose a novel feed-forward network based on Transformer to generate mel-spectrograms in parallel for TTS. Specifically, we extract attention alignments from an encoder-decoder based teacher model for phoneme duration prediction, which is used by a length regulator to expand the source phoneme sequence to match the length of the target mel-spectrogram sequence for parallel mel-spectrogram generation. Experiments on the LJSpeech dataset show that our parallel model matches autoregressive models in terms of speech quality, nearly eliminates the problem of word skipping and repeating in particularly hard cases, and can adjust voice speed smoothly. Most importantly, compared with autoregressive Transformer TTS, our model speeds up mel-spectrogram generation by 270x and end-to-end speech synthesis by 38x. Therefore, we call our model FastSpeech.³
∗ Equal contribution. † Corresponding author.
³ Synthesized speech samples can be found at https://speechresearch.github.io/fastspeech/.
33rd Conference on Neural Information Processing Systems (NeurIPS 2019), Vancouver, Canada.

1 Introduction

Text to speech (TTS) has attracted a lot of attention in recent years due to advances in deep learning. Deep neural network based systems have become increasingly popular for TTS, such as Tacotron [27], Tacotron 2 [22], Deep Voice 3 [19], and the fully end-to-end ClariNet [18]. These models usually first generate mel-spectrograms autoregressively from text input and then synthesize speech from the mel-spectrograms using a vocoder such as Griffin-Lim [6], WaveNet [24], Parallel WaveNet [16], or WaveGlow [20]⁴. Neural network based TTS has outperformed conventional concatenative and statistical parametric approaches [9, 28] in terms of speech quality.

In current neural network based TTS systems, the mel-spectrogram is generated autoregressively. Due to the long sequence of the mel-spectrogram and the autoregressive nature, those systems face several challenges:

• Slow inference speed for mel-spectrogram generation. Although CNN and Transformer based TTS [14, 19] can speed up training over RNN-based models [22], all models generate a mel-spectrogram conditioned on the previously generated mel-spectrograms and suffer from slow inference speed, given that the mel-spectrogram sequence usually has a length of hundreds or thousands.
• Synthesized speech is usually not robust. Due to error propagation [3] and wrong attention alignments between text and speech in autoregressive generation, the generated mel-spectrogram often suffers from word skipping and repeating [19].
• Synthesized speech lacks controllability.
Previous autoregressive models generate mel-spectrograms one by one automatically, without explicitly leveraging the alignments between text and speech. As a consequence, it is usually hard to directly control the voice speed and prosody in autoregressive generation.

Considering the monotonic alignment between text and speech, to speed up mel-spectrogram generation, in this work, we propose a novel model, FastSpeech, which takes a text (phoneme) sequence as input and generates mel-spectrograms non-autoregressively. It adopts a feed-forward network based on the self-attention in Transformer [25] and 1D convolution [5, 11, 19]. Since a mel-spectrogram sequence is much longer than its corresponding phoneme sequence, in order to solve the problem of length mismatch between the two sequences, FastSpeech adopts a length regulator that up-samples the phoneme sequence according to the phoneme duration (i.e., the number of mel-spectrograms that each phoneme corresponds to) to match the length of the mel-spectrogram sequence. The regulator is built on a phoneme duration predictor, which predicts the duration of each phoneme.

Our proposed FastSpeech can address the above-mentioned three challenges as follows:

• Through parallel mel-spectrogram generation, FastSpeech greatly speeds up the synthesis process.
• The phoneme duration predictor ensures hard alignments between a phoneme and its mel-spectrograms, which is very different from the soft and automatic attention alignments in autoregressive models. Thus, FastSpeech avoids the issues of error propagation and wrong attention alignments, consequently reducing the ratio of skipped and repeated words.
• The length regulator can easily adjust voice speed by lengthening or shortening the phoneme duration to determine the length of the generated mel-spectrograms, and can also control part of the prosody by adding breaks between adjacent phonemes.
We conduct experiments on the LJSpeech dataset to test FastSpeech. The results show that in terms of speech quality, FastSpeech nearly matches the autoregressive Transformer model. Furthermore, FastSpeech achieves a 270x speedup on mel-spectrogram generation and a 38x speedup on final speech synthesis compared with the autoregressive Transformer TTS model, almost eliminates the problem of word skipping and repeating, and can adjust voice speed smoothly. We attach some audio files generated by our method in the supplementary materials.

⁴ Although ClariNet [18] is fully end-to-end, it still first generates mel-spectrograms autoregressively and then synthesizes speech in one model.

2 Background

In this section, we briefly overview the background of this work, including text to speech, sequence to sequence learning, and non-autoregressive sequence generation.

Text to Speech. TTS [1, 18, 21, 22, 27], which aims to synthesize natural and intelligible speech given text, has long been a hot research topic in the field of artificial intelligence. Research on TTS has shifted from early concatenative synthesis [9] and statistical parametric synthesis [13, 28] to neural network based parametric synthesis [1] and end-to-end models [14, 18, 22, 27], and the quality of the speech synthesized by end-to-end models is close to human parity. Neural network based end-to-end TTS models usually first convert the text to acoustic features (e.g., mel-spectrograms) and then transform the mel-spectrograms into audio samples. However, most neural TTS systems generate mel-spectrograms autoregressively, which suffers from slow inference speed, and the synthesized speech usually lacks robustness (word skipping and repeating) and controllability (voice speed or prosody control). In this work, we propose FastSpeech to generate mel-spectrograms non-autoregressively, which effectively addresses the above problems.
Sequence to Sequence Learning. Sequence to sequence learning [2, 4, 25] is usually built on the encoder-decoder framework: the encoder takes the source sequence as input and generates a set of representations. The decoder then estimates the conditional probability of each target element given the source representations and its preceding elements. The attention mechanism [2] is further introduced between the encoder and decoder to determine which source representations to focus on when predicting the current element, and is an important component of sequence to sequence learning. In this work, instead of using the conventional encoder-attention-decoder framework for sequence to sequence learning, we propose a feed-forward network to generate a sequence in parallel.

Non-Autoregressive Sequence Generation. Unlike autoregressive sequence generation, non-autoregressive models generate a sequence in parallel, without explicitly depending on the previous elements, which can greatly speed up the inference process. Non-autoregressive generation has been studied in some sequence generation tasks such as neural machine translation [7, 8, 26] and audio synthesis [16, 18, 20]. Our FastSpeech differs from the above works in two aspects: 1) Previous works adopt non-autoregressive generation in neural machine translation or audio synthesis mainly for inference speedup, while FastSpeech focuses on both inference speedup and improving the robustness and controllability of the synthesized speech in TTS. 2) For TTS, although Parallel WaveNet [16], ClariNet [18] and WaveGlow [20] generate audio in parallel, they are conditioned on mel-spectrograms, which are still generated autoregressively. Therefore, they do not address the challenges considered in this work. There is a concurrent work [17] that also generates mel-spectrograms in parallel.
However, it still adopts the encoder-decoder framework with an attention mechanism, which 1) requires 2∼3x model parameters compared with the teacher model and thus achieves a smaller inference speedup than FastSpeech; 2) cannot totally solve the problems of word skipping and repeating, while FastSpeech nearly eliminates these issues.

3 FastSpeech

In this section, we introduce the architecture design of FastSpeech. To generate a target mel-spectrogram sequence in parallel, we design a novel feed-forward structure, instead of using the encoder-attention-decoder based architecture adopted by most sequence to sequence based autoregressive [14, 22, 25] and non-autoregressive [7, 8, 26] generation. The overall model architecture of FastSpeech is shown in Figure 1. We describe the components in detail in the following subsections.

3.1 Feed-Forward Transformer

The architecture for FastSpeech is a feed-forward structure based on self-attention in Transformer [25] and 1D convolution [5, 19]. We call this structure the Feed-Forward Transformer (FFT), as shown in Figure 1a. The Feed-Forward Transformer stacks multiple FFT blocks for phoneme to mel-spectrogram transformation, with N blocks on the phoneme side and N blocks on the mel-spectrogram side, and a length regulator (described in the next subsection) in between to bridge the length gap between the phoneme and mel-spectrogram sequences. Each FFT block consists of a self-attention network and a 1D convolutional network, as shown in Figure 1b. The self-attention network consists of a multi-head attention to extract the cross-position information. Different from the 2-layer dense network in Transformer, we use a 2-layer 1D convolutional network with ReLU activation.
The motivation is that the adjacent hidden states are more closely related in the character/phoneme and mel-spectrogram sequence in speech tasks. We evaluate the effectiveness of the 1D convolutional network in the experimental section. Following Transformer [25], residual connections, layer normalization, and dropout are added after the self-attention network and 1D convolutional network respectively.

[Figure 1 panels: (a) Feed-Forward Transformer (Phoneme, Phoneme Embedding, N× FFT Block, Length Regulator, N× FFT Block, Linear Layer); (b) FFT Block (Multi-Head Attention, Add & Norm, Conv1D, Add & Norm); (c) Length Regulator (Duration Predictor, α = 1.0, 𝒟 = [2, 2, 3, 1]); (d) Duration Predictor (Phoneme, Conv1D + Norm, Conv1D + Norm, Linear Layer, MSE Loss, Autoregressive Transformer TTS, Duration Extractor, Training)]

Figure 1: The overall architecture for FastSpeech. (a) The feed-forward Transformer. (b) The feed-forward Transformer block. (c) The length regulator. (d) The duration predictor. MSE loss denotes the loss between predicted and extracted duration, which only exists in the training process.

3.2 Length Regulator

The length regulator (Figure 1c) is used to solve the problem of length mismatch between the phoneme and spectrogram sequence in the Feed-Forward Transformer, as well as to control the voice speed and part of prosody. The length of a phoneme sequence is usually smaller than that of its mel-spectrogram sequence, and each phoneme corresponds to several mel-spectrograms. We refer to the length of the mel-spectrograms that corresponds to a phoneme as the phoneme duration (we will describe how to predict phoneme duration in the next subsection).
Based on the phoneme duration $d$, the length regulator repeats the hidden state of each phoneme $d$ times, so that the total length of the hidden states equals the length of the mel-spectrograms. Denote the hidden states of the phoneme sequence as $\mathcal{H}_{pho} = [h_1, h_2, ..., h_n]$, where $n$ is the length of the sequence. Denote the phoneme duration sequence as $\mathcal{D} = [d_1, d_2, ..., d_n]$, where $\sum_{i=1}^{n} d_i = m$ and $m$ is the length of the mel-spectrogram sequence. We denote the length regulator $\mathcal{LR}$ as

$$\mathcal{H}_{mel} = \mathcal{LR}(\mathcal{H}_{pho}, \mathcal{D}, \alpha), \tag{1}$$

where $\alpha$ is a hyperparameter that determines the length of the expanded sequence $\mathcal{H}_{mel}$, thereby controlling the voice speed. For example, given $\mathcal{H}_{pho} = [h_1, h_2, h_3, h_4]$ and the corresponding phoneme duration sequence $\mathcal{D} = [2, 2, 3, 1]$, the expanded sequence $\mathcal{H}_{mel}$ based on Equation 1 becomes $[h_1, h_1, h_2, h_2, h_3, h_3, h_3, h_4]$ if $\alpha = 1$ (normal speed). When $\alpha = 1.3$ (slow speed) and $\alpha = 0.5$ (fast speed), the duration sequences become $\mathcal{D}_{\alpha=1.3} = [2.6, 2.6, 3.9, 1.3] \approx [3, 3, 4, 1]$ and $\mathcal{D}_{\alpha=0.5} = [1, 1, 1.5, 0.5] \approx [1, 1, 2, 1]$, and the expanded sequences become $[h_1, h_1, h_1, h_2, h_2, h_2, h_3, h_3, h_3, h_3, h_4]$ and $[h_1, h_2, h_3, h_3, h_4]$ respectively. We can also control the break between words by adjusting the duration of the space characters in the sentence, so as to adjust part of the prosody of the synthesized speech.

3.3 Duration Predictor

Phoneme duration prediction is important for the length regulator. As shown in Figure 1d, the duration predictor consists of a 2-layer 1D convolutional network with ReLU activation, each layer followed by layer normalization and dropout, and an extra linear layer that outputs a scalar, which is exactly the predicted phoneme duration.
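Returning to the length regulator of Section 3.2, the expansion in Equation 1 can be sketched in plain Python as below. This is a minimal illustration rather than the authors' implementation: the function name `regulate_length` is hypothetical, hidden states are stood in for by strings, and the round-half-up rule is an assumption inferred from the worked example (0.5 becomes 1 and 1.5 becomes 2), since the text does not state the rounding rule explicitly.

```python
import math


def regulate_length(hidden_states, durations, alpha=1.0):
    """Repeat each phoneme hidden state according to its alpha-scaled duration."""
    expanded = []
    for h, d in zip(hidden_states, durations):
        # Assumed rounding rule: round half up, which reproduces the worked
        # example in Section 3.2 (0.5 -> 1, 1.5 -> 2).
        repeats = math.floor(d * alpha + 0.5)
        expanded.extend([h] * repeats)
    return expanded


H_pho = ["h1", "h2", "h3", "h4"]  # placeholders for hidden-state vectors
D = [2, 2, 3, 1]

normal = regulate_length(H_pho, D, alpha=1.0)
slow = regulate_length(H_pho, D, alpha=1.3)
fast = regulate_length(H_pho, D, alpha=0.5)
print(normal, slow, fast, sep="\n")
```

Python's built-in `round` rounds halves to the nearest even integer, so `round(0.5)` is 0 and `h4` would disappear at α = 0.5; the sketch uses `floor(x + 0.5)` to stay consistent with the example in the text.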
Note that the duration predictor is stacked on top of the FFT blocks on the phoneme side and is jointly trained with the FastSpeech model to predict the length of mel-spectrograms for each phoneme with the mean square error (MSE) loss. We predict the lengths in the logarithmic domain, which makes them more Gaussian and easier to train. Note that the trained duration predictor is only used in the TTS inference phase, because we can directly use the phoneme duration extracted from an autoregressive teacher model in training (see the following discussion).

In order to train the duration predictor, we extract the ground-truth phoneme duration from an autoregressive teacher TTS model, as shown in Figure 1d. We describe the detailed steps as follows:

• We first train an autoregressive encoder-attention-decoder based Transformer TTS model following [14].
• For each training sequence pair, we extract the decoder-to-encoder attention alignments from the trained teacher model. There are multiple attention alignments due to the multi-head attention [25], and not all attention heads demonstrate the diagonal property (the phoneme and mel-spectrogram sequences are monotonically aligned). We propose a focus rate $F$ to measure how close an attention head is to diagonal: $F = \frac{1}{S} \sum_{s=1}^{S} \max_{1 \le t \le T} a_{s,t}$, where $S$ and $T$ are the lengths of the ground-truth spectrograms and phonemes, and $a_{s,t}$ denotes the element in the $s$-th row and $t$-th column of the attention matrix. We compute the focus rate for each head and choose the head with the largest $F$ as the attention alignments.
• Finally, we extract the phoneme duration sequence $\mathcal{D} = [d_1, d_2, ..., d_n]$ according to the duration extractor $d_i = \sum_{s=1}^{S} [\arg\max_t a_{s,t} = i]$. That is, the duration of a phoneme is the number of mel-spectrograms attended to it according to the attention head selected in the above step.
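The head selection and duration extraction steps above can be sketched as follows. This is a minimal pure-Python illustration under stated assumptions: attention matrices are nested lists with one row per mel-spectrogram frame and one column per phoneme, the function names (`focus_rate`, `extract_durations`, `durations_from_teacher`) are hypothetical, and the toy attention values are made up for illustration rather than taken from a trained teacher model.

```python
def focus_rate(attn):
    """F = (1/S) * sum over frames s of the max over phonemes t of attn[s][t]."""
    return sum(max(row) for row in attn) / len(attn)


def extract_durations(attn, num_phonemes):
    """d_i = number of mel frames whose strongest attention falls on phoneme i."""
    durations = [0] * num_phonemes
    for row in attn:
        attended = max(range(num_phonemes), key=lambda t: row[t])  # argmax over t
        durations[attended] += 1
    return durations


def durations_from_teacher(heads, num_phonemes):
    """Select the head with the largest focus rate, then read durations off it."""
    best_head = max(heads, key=focus_rate)
    return extract_durations(best_head, num_phonemes)


# Toy example (made-up values): S = 4 mel frames, T = 2 phonemes, two heads.
diffuse_head = [[0.5, 0.5], [0.5, 0.5], [0.5, 0.5], [0.5, 0.5]]
sharp_head = [[0.9, 0.1], [0.8, 0.2], [0.3, 0.7], [0.1, 0.9]]

durations = durations_from_teacher([diffuse_head, sharp_head], num_phonemes=2)
print(focus_rate(diffuse_head), focus_rate(sharp_head), durations)
```

Because every frame is assigned to exactly one phoneme, the extracted durations always sum to the number of mel frames, which matches the constraint $\sum_{i=1}^{n} d_i = m$ from Section 3.2.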
4 Experimental Setup

4.1 Datasets

We conduct experiments on the LJSpeech dataset [10], which contains 13,100 English audio clips and the corresponding text transcripts, with a total audio length of approximately 24 hours. We randomly split the dataset into 3 sets: 12,500 samples for training, 300 samples for validation and 300 samples for testing. In order to alleviate the mispronunciation problem, we convert the text sequence into the phoneme sequence with our internal grapheme-to-phoneme conversion tool [23], following [1, 22, 27]. For the speech data, we convert the raw waveform into mel-spectrograms following [22]. Our frame size and hop size are set to 1024 and 256, respectively.

In order to evaluate the robustness of our proposed FastSpeech, we also choose 50 sentences which are particularly hard for TTS systems, following the practice in [19].

4.2 Model Configuration

FastSpeech model. Our FastSpeech model consists of 6 FFT blocks on both the phoneme side and the mel-spectrogram side. The size of the phoneme vocabulary is 51, including punctuation. The dimension of phoneme embeddings and the hidden sizes of the self-attention and 1D convolution in the FFT block are all set to 384. The number of attention heads is set to 2. The kernel sizes of the 1D convolution in the 2-layer convolutional network are both set to 3, with input/output sizes of 384/1536 for the first layer and 1536/384 for the second layer. The output linear layer converts the 384-dimensional hidden states into 80-dimensional mel-spectrograms. In our duration predictor, the kernel sizes of the 1D convolution are set to 3, with input/output sizes of 384/384 for both layers.
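To make the FastSpeech configuration above concrete, the sketch below collects the quoted hyperparameters in one place and derives the size of the convolutional part of a single FFT block. The class and field names are hypothetical, and the parameter counts assume a standard 1D convolution with bias terms; the paper does not report these counts, so they are plain arithmetic on the stated layer sizes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FastSpeechConfig:
    """Hyperparameters quoted in Section 4.2 (field names are hypothetical)."""
    fft_blocks_per_side: int = 6
    phoneme_vocab_size: int = 51
    hidden_size: int = 384
    attention_heads: int = 2
    conv_kernel_size: int = 3
    conv_filter_size: int = 1536
    mel_dim: int = 80
    duration_predictor_kernel_size: int = 3
    duration_predictor_filter_size: int = 384


def conv1d_params(in_channels, out_channels, kernel_size, bias=True):
    """Weight (and optional bias) count of a standard 1D convolution layer."""
    return in_channels * out_channels * kernel_size + (out_channels if bias else 0)


cfg = FastSpeechConfig()

# 2-layer 1D convolutional network inside one FFT block: 384 -> 1536 -> 384.
fft_conv_params = (
    conv1d_params(cfg.hidden_size, cfg.conv_filter_size, cfg.conv_kernel_size)
    + conv1d_params(cfg.conv_filter_size, cfg.hidden_size, cfg.conv_kernel_size)
)
# Output linear layer: 384-dimensional hidden states -> 80-dimensional mel frames.
output_linear_params = cfg.hidden_size * cfg.mel_dim + cfg.mel_dim
print(fft_conv_params, output_linear_params)
```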
Autoregressive Transformer TTS model. The autoregressive Transformer TTS model serves two purposes in our work: 1) to extract the phoneme duration as the target to train the duration predictor; 2) to generate mel-spectrograms in the sequence-level knowledge distillation (which will be introduced in the next subsection). We refer to [14] for the configuration of this model, which consists of a 6-layer encoder and a 6-layer decoder, except that we use a 1D convolution network instead of the position-wise FFN. The number of parameters of this teacher model is similar to that of our FastSpeech model.

4.3 Training and Inference

We first train the autoregressive Transformer TTS model on 4 NVIDIA V100 GPUs, with a batch size of 16 sentences on each GPU. We use the Adam optimizer with $\beta_1 = 0.9$, $\beta_2 = 0.98$, $\varepsilon = 10^{-9}$ and follow the same learning rate schedule as in [25]. Training takes 80k steps until convergence. We feed the text and speech pairs in the training set to the model again to obtain the encoder-decoder attention alignments, which are used to train the duration predictor. In addition, we also leverage sequence-level knowledge distillation [12], which has achieved good performance in non-autoregressive machine translation [7, 8, 26], to transfer the knowledge from the teacher model to the student model. For each source text sequence, we generate the mel-spectrograms with the autoregressive Transformer TTS model and take the source text and the generated mel-spectrograms as the paired data for FastSpeech model training.

We train the FastSpeech model together with the duration predictor. The optimizer and other hyper-parameters for FastSpeech are the same as for the autoregressive Transformer TTS model. The FastSpeech model training takes about 80k steps on 4 NVIDIA V100 GPUs. In the inference process, the output mel-spectrograms of our FastSpeech model are transformed into audio samples using the pretrained WaveGlow [20]⁵.
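The learning rate schedule of [25] referenced in Section 4.3 can be sketched as follows. The formula is the warmup followed by inverse-square-root decay defined in [25]; using the 384-dimensional hidden size of Section 4.2 as `d_model` and 4000 warmup steps (the value used in [25]) are assumptions here, since the text does not state them, and the function name `transformer_lr` is hypothetical.

```python
def transformer_lr(step, d_model=384, warmup_steps=4000):
    """Linear warmup for warmup_steps, then decay proportional to step ** -0.5."""
    step = max(step, 1)  # guard against 0 ** -0.5 on the very first update
    return d_model ** -0.5 * min(step ** -0.5, step * warmup_steps ** -1.5)


# Learning rate at a few points of an 80k-step run (warmup length is assumed).
for step in (1, 1000, 4000, 20000, 80000):
    print(step, f"{transformer_lr(step):.2e}")
```

Under these assumptions the rate rises linearly until step 4000, where both arguments of `min` coincide, and then decays for the rest of training.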
5 Results

In this section, we evaluate the performance of FastSpeech in terms of audio quality, inference speedup, robustness, and controllability.

Audio Quality. We conduct the MOS (mean opinion score) evaluation on the test set to measure the audio quality. We keep the text content consistent among different models so as to exclude other interference factors, only examining the audio quality. Each audio sample is rated by at least 20 testers, who are all native English speakers. We compare the MOS of the audio samples generated by our FastSpeech model with other systems, which include 1) GT, the ground truth audio; 2) GT (Mel + WaveGlow), where we first convert the ground truth audio into mel-spectrograms, and then convert the mel-spectrograms back to audio using WaveGlow; 3) Tacotron 2 [22] (Mel + WaveGlow); 4) Transformer TTS [14] (Mel + WaveGlow); 5) Merlin [28] (WORLD), a popular parametric TTS system with WORLD [15] as the vocoder. The results are shown in Table 1. It can be seen that our FastSpeech can nearly match the quality of the Transformer TTS model and Tacotron 2⁶.

| Method | MOS |
| --- | --- |
| GT | 4.41 ± 0.08 |
| GT (Mel + WaveGlow) | 4.00 ± 0.09 |
| Tacotron 2 [22] (Mel + WaveGlow) | 3.86 ± 0.09 |
| Merlin [28] (WORLD) | 2.40 ± 0.13 |
| Transformer TTS [14] (Mel + WaveGlow) | 3.88 ± 0.09 |
| FastSpeech (Mel + WaveGlow) | 3.84 ± 0.08 |

Table 1: The MOS with 95% confidence intervals.

Inference Speedup. We evaluate the inference latency of FastSpeech compared with the autoregressive Transformer TTS model, which has a similar number of model parameters to FastSpeech. We first show the inference speedup for mel-spectrogram generation in Table 2. It can be seen that FastSpeech speeds up mel-spectrogram generation by 269.40x, compared with the Transformer TTS model. We then show the end-to-end speedup when using WaveGlow as the vocoder. It can be seen that FastSpeech can still achieve a 38.30x speedup for audio generation.
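The speedups quoted here are ratios of mean latencies. As a quick check, the sketch below recomputes them from the rounded mean latencies reported in Table 2; the dictionary keys are just labels chosen for this sketch. With the rounded values, the end-to-end ratio comes out at roughly 38.31, which agrees with the reported 38.30× up to rounding of the published latencies.

```python
# Mean latencies in seconds, as reported in Table 2.
latency = {
    "transformer_tts_mel": 6.735,
    "fastspeech_mel": 0.025,
    "transformer_tts_mel_waveglow": 6.895,
    "fastspeech_mel_waveglow": 0.180,
}

mel_speedup = latency["transformer_tts_mel"] / latency["fastspeech_mel"]
end_to_end_speedup = (
    latency["transformer_tts_mel_waveglow"] / latency["fastspeech_mel_waveglow"]
)
# Share of FastSpeech's end-to-end latency that is spent in the vocoder.
vocoder_time_fastspeech = (
    latency["fastspeech_mel_waveglow"] - latency["fastspeech_mel"]
)
print(f"{mel_speedup:.2f}x  {end_to_end_speedup:.2f}x  {vocoder_time_fastspeech:.3f}s")
```

The difference between the two FastSpeech rows shows why the end-to-end speedup is much smaller than the mel-spectrogram speedup: once mel-spectrogram generation takes about 0.025 s, the vocoder accounts for most of the remaining 0.180 s.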
⁵ https://github.com/NVIDIA/waveglow
⁶ According to our further comprehensive experiments on our internal datasets, the voice quality of FastSpeech can always match that of the teacher model on multiple languages and multiple voices, if we use more unlabeled text for knowledge distillation.

| Method | Latency (s) | Speedup |
| --- | --- | --- |
| Transformer TTS [14] (Mel) | 6.735 ± 3.969 | / |
| FastSpeech (Mel) | 0.025 ± 0.005 | 269.40× |
| Transformer TTS [14] (Mel + WaveGlow) | 6.895 ± 3.969 | / |
| FastSpeech (Mel + WaveGlow) | 0.180 ± 0.078 | 38.30× |

Table 2: The comparison of inference latency with 95% confidence intervals. The evaluation is conducted on a server with 12 Intel Xeon CPU, 256GB memory, 1 NVIDIA V100 GPU and a batch size of 1. The average lengths of the generated mel-spectrograms for the two systems are both about 560.

We also visualize the relationship between the inference latency and the length of the predicted mel-spectrogram sequence in the test set. Figure 2 shows that the inference latency barely increases with the length of the predicted mel-spectrogram for FastSpeech, while it increases substantially for Transformer TTS. This indicates that the inference speed of our method is not sensitive to the length of the generated audio, due to parallel generation.

Figure 2: Inference time (second) vs. mel-spectrogram length for FastSpeech and Transformer TTS. (a) FastSpeech. (b) Transformer TTS.

Robustness. The encoder-decoder attention mechanism in the autoregressive model may cause wrong attention alignments between phoneme and mel-spectrogram, resulting in instability with word repeating and word skipping. To evaluate the robustness of FastSpeech, we select 50 sentences which are particularly hard for TTS systems⁷. Word error counts are listed in Table 3. It can be seen that Transformer TTS is not robust to these hard cases and gets a 34% error rate, while FastSpeech can effectively eliminate word repeating and skipping to improve intelligibility.
| Method | Repeats | Skips | Error Sentences | Error Rate |
| --- | --- | --- | --- | --- |
| Tacotron 2 | 4 | 11 | 12 | 24% |
| Transformer TTS | 7 | 15 | 17 | 34% |
| FastSpeech | 0 | 0 | 0 | 0% |

Table 3: The comparison of robustness between FastSpeech and other systems on the 50 particularly hard sentences. Each kind of word error is counted at most once per sentence.

⁷ These cases include single letters, spellings, repeated numbers, and long sentences. We list the cases in the supplementary materials.

Length Control. As mentioned in Section 3.2, FastSpeech can control the voice speed as well as part of the prosody by adjusting the phoneme duration, which cannot be supported by other end-to-end TTS systems. We show the mel-spectrograms before and after the length control, and also put the audio samples in the supplementary material for reference.

Voice Speed. The mel-spectrograms generated with different voice speeds, obtained by lengthening or shortening the phoneme duration, are shown in Figure 3. We also attach several audio samples in the supplementary material for reference. As demonstrated by the samples, FastSpeech can adjust the voice speed from 0.5x to 1.5x smoothly, with stable and almost unchanged pitch.

Figure 3: The mel-spectrograms of the voice with 1.5x, 1.0x and 0.5x speed respectively: (a) 1.5x voice speed; (b) 1.0x voice speed; (c) 0.5x voice speed. The input text is "For a while the preacher addresses himself to the congregation at large, who listen attentively".

Breaks Between Words. FastSpeech can add breaks between adjacent words by lengthening the duration of the space characters in the sentence, which can improve the prosody of the voice. We show an example in Figure 4, where we add breaks in two positions of the sentence to improve the prosody.

Figure 4: The mel-spectrograms before and after adding breaks between words: (a) original mel-spectrograms; (b) mel-spectrograms after adding breaks.
The corresponding text is "that he appeared to feel deeply the force of the reverend gentleman's observations, especially when the chaplain spoke of". We add breaks after the words "deeply" and "especially" to improve the prosody. The red boxes in Figure 4b correspond to the added breaks.

Ablation Study. We conduct ablation studies to verify the effectiveness of several components in FastSpeech, including 1D convolution and sequence-level knowledge distillation. We conduct CMOS evaluation for these ablation studies.

| System | CMOS |
| --- | --- |
| FastSpeech | 0 |
| FastSpeech without 1D convolution in FFT block | -0.113 |
| FastSpeech without sequence-level knowledge distillation | -0.325 |

Table 4: CMOS comparison in the ablation studies.

1D Convolution in FFT Block. We propose to replace the original fully connected layer (adopted in Transformer [25]) with 1D convolution in the FFT block, as described in Section 3.1. Here we conduct experiments to compare the performance of 1D convolution with that of a fully connected layer with a similar number of parameters. As shown in Table 4, replacing 1D convolution with a fully connected layer results in -0.113 CMOS, which demonstrates the effectiveness of 1D convolution.

Sequence-Level Knowledge Distillation. As described in Section 4.3, we leverage sequence-level knowledge distillation for FastSpeech. We conduct CMOS evaluation to compare the performance of FastSpeech with and without sequence-level knowledge distillation, as shown in Table 4. We find that removing sequence-level knowledge distillation results in -0.325 CMOS, which demonstrates the effectiveness of sequence-level knowledge distillation.

6 Conclusions

In this work, we have proposed FastSpeech: a fast, robust and controllable neural TTS system. FastSpeech has a novel feed-forward network to generate mel-spectrograms in parallel, which consists of several key components including feed-forward Transformer blocks, a length regulator and a duration predictor.
Experiments on the LJSpeech dataset demonstrate that our proposed FastSpeech can nearly match the autoregressive Transformer TTS model in terms of speech quality, speed up mel-spectrogram generation by 270x and end-to-end speech synthesis by 38x, almost eliminate the problem of word skipping and repeating, and adjust voice speed (0.5x-1.5x) smoothly.

For future work, we will continue to improve the quality of the synthesized speech, and apply FastSpeech to multi-speaker and low-resource settings. We will also train FastSpeech jointly with a parallel neural vocoder to make it fully end-to-end and parallel.

Acknowledgments

This work was supported by the National Natural Science Foundation of China under Grant No.61602405, No.61836002. This work was also supported by the China Knowledge Centre of Engineering Sciences and Technology.

References

[1] Sercan O Arik, Mike Chrzanowski, Adam Coates, Gregory Diamos, Andrew Gibiansky, Yongguo Kang, Xian Li, John Miller, Andrew Ng, Jonathan Raiman, et al. Deep voice: Real-time neural text-to-speech. arXiv preprint, 2017.
[2] Dzmitry Bahdanau, Kyunghyun Cho, and Yoshua Bengio. Neural machine translation by jointly learning to align and translate. ICLR 2015, 2015.
[3] Samy Bengio, Oriol Vinyals, Navdeep Jaitly, and Noam Shazeer. Scheduled sampling for sequence prediction with recurrent neural networks. In Advances in Neural Information Processing Systems, pages 1171–1179, 2015.
[4] William Chan, Navdeep Jaitly, Quoc Le, and Oriol Vinyals. Listen, attend and spell: A neural network for large vocabulary conversational speech recognition. In Acoustics, Speech and Signal Processing (ICASSP), 2016 IEEE International Conference on, pages 4960–4964. IEEE, 2016.
[5] Jonas Gehring, Michael Auli, David Grangier, Denis Yarats, and Yann N Dauphin. Convolutional sequence to sequence learning. In Proceedings of the 34th International Conference on Machine Learning-Volume 70, pages 1243–1252. JMLR.org, 2017.
[6] Daniel Griffin and Jae Lim. Signal estimation from modified short-time fourier transform. IEEE Transactions on Acoustics, Speech, and Signal Processing, 32(2):236–243, 1984.
[7] Jiatao Gu, James Bradbury, Caiming Xiong, Victor OK Li, and Richard Socher. Non-autoregressive neural machine translation. arXiv preprint, 2017.
[8] Junliang Guo, Xu Tan, Di He, Tao Qin, Linli Xu, and Tie-Yan Liu. Non-autoregressive neural machine translation with enhanced decoder input. In AAAI, 2019.
[9] Andrew J Hunt and Alan W Black. Unit selection in a concatenative speech synthesis system using a large speech database. In 1996 IEEE International Conference on Acoustics, Speech, and Signal Processing Conference Proceedings, volume 1, pages 373–376. IEEE, 1996.
[10] Keith Ito. The lj speech dataset. https://keithito.com/LJ-Speech-Dataset/, 2017.
[11] Zeyu Jin, Adam Finkelstein, Gautham J Mysore, and Jingwan Lu. Fftnet: A real-time speaker-dependent neural vocoder. In 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 2251–2255. IEEE, 2018.
[12] Yoon Kim and Alexander M Rush. Sequence-level knowledge distillation. arXiv preprint arXiv:1606.07947, 2016.
[13] Hao Li, Yongguo Kang, and Zhenyu Wang. Emphasis: An emotional phoneme-based acoustic model for speech synthesis system. arXiv preprint arXiv:1806.09276, 2018.
[14] Naihan Li, Shujie Liu, Yanqing Liu, Sheng Zhao, Ming Liu, and Ming Zhou. Close to human quality tts with transformer. arXiv preprint, 2018.
[15] Masanori Morise, Fumiya Yokomori, and Kenji Ozawa. World: a vocoder-based high-quality speech synthesis system for real-time applications. IEICE TRANSACTIONS on Information and Systems, 99(7):1877–1884, 2016.
[16] Aaron van den Oord, Yazhe Li, Igor Babuschkin, Karen Simonyan, Oriol Vinyals, Koray Kavukcuoglu, George van den Driessche, Edward Lockhart, Luis C Cobo, Florian Stimberg, et al. Parallel wavenet: Fast high-fidelity speech synthesis. arXiv preprint arXiv:1711.10433, 2017.
[17] Kainan Peng, Wei Ping, Zhao Song, and Kexin Zhao. Parallel neural text-to-speech. arXiv preprint arXiv:1905.08459, 2019.
[18] Wei Ping, Kainan Peng, and Jitong Chen. Clarinet: Parallel wave generation in end-to-end text-to-speech. In International Conference on Learning Representations, 2019.
[19] Wei Ping, Kainan Peng, Andrew Gibiansky, Sercan O. Arik, Ajay Kannan, Sharan Narang, Jonathan Raiman, and John Miller. Deep voice 3: 2000-speaker neural text-to-speech. In International Conference on Learning Representations, 2018.
[20] Ryan Prenger, Rafael Valle, and Bryan Catanzaro. Waveglow: A flow-based generative network for speech synthesis. In ICASSP 2019-2019 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 3617–3621. IEEE, 2019.
[21] Yi Ren, Xu Tan, Tao Qin, Sheng Zhao, Zhou Zhao, and Tie-Yan Liu. Almost unsupervised text to speech and automatic speech recognition. In ICML, 2019.
[22] Jonathan Shen, Ruoming Pang, Ron J Weiss, Mike Schuster, Navdeep Jaitly, Zongheng Yang, Zhifeng Chen, Yu Zhang, Yuxuan Wang, RJ Skerry-Ryan, et al. Natural tts synthesis by conditioning wavenet on mel spectrogram predictions. In 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 4779–4783. IEEE, 2018.
[23] Hao Sun, Xu Tan, Jun-Wei Gan, Hongzhi Liu, Sheng Zhao, Tao Qin, and Tie-Yan Liu. Token-level ensemble distillation for grapheme-to-phoneme conversion. In INTERSPEECH, 2019.
[24] Aäron Van Den Oord, Sander Dieleman, Heiga Zen, Karen Simonyan, Oriol Vinyals, Alex Graves, Nal Kalchbrenner, Andrew W Senior, and Koray Kavukcuoglu. Wavenet: A generative model for raw audio. SSW, 125, 2016.
[25] Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. Attention is all you need.
In Advances in Neural Informa- tion Pr ocessing Systems , pages 5998–6008, 2017. [26] Y iren W ang, Fei T ian, Di He, T ao Qin, ChengXiang Zhai, and T ie-Y an Liu. Non-autoregressi ve machine translation with auxiliary regularization. In AAAI , 2019. [27] Y uxuan W ang, RJ Skerry-Ryan, Daisy Stanton, Y onghui W u, Ron J W eiss, Navdeep Jaitly , Zongheng Y ang, Y ing Xiao, Zhifeng Chen, Samy Bengio, et al. T acotron: T o wards end-to-end speech synthesis. arXiv pr eprint arXiv:1703.10135 , 2017. [28] Zhizheng W u, Oli ver W atts, and Simon King. Merlin: An open source neural network speech synthesis system. In SSW , pages 202–207, 2016. 10 A ppendices A Model Hyperparameters T able 5: Hyperparameters of Transformer TTS and FastSpeech. Encoder and Decoder are for T ransformer TTS, Phoneme-Side and Mel-Side FFT are for FastSpeech. Hyperparameter T ransformer TTS F astSpeech Phoneme Embedding Dimension 384 384 Pre-net Layers 3 / Pre-net Hidden 384 / Encoder/Phoneme-Side FFT Layers 6 6 Encoder/Phoneme-Side FFT Hidden 384 384 Encoder/Phoneme-Side FFT Con v1D Kernel 3 3 Encoder/Phoneme-Side FFT Con v1D Filter Size 1024 1536 Encoder/Phoneme-Side FFT Attention Heads 2 2 Decoder/Mel-Side FFT Layers 6 6 Decoder/Mel-Side FFT Hidden 384 384 Decoder/Mel-Side FFT Con v1D Kernel 3 3 Decoder/Mel-Side FFT Con v1D Filter Size 1024 1536 Decoder/Mel-Side FFT Attention Headers 2 2 Duration Predictor Con v1D Kernel / 3 Duration Predictor Con v1D Filter Size / 256 Dropout 0.1 0.1 Batch Size 64 (16 * 4GPUs) 64 (16 * 4GPUs) T otal Number of Parameters 30.7M 30.1M B 50 Particularly Hard Sentences The 50 particularly hard sentences mentioned in Section 5 are listed below: 01. a 02. b 03. c 04. H 05. I 06. J 07. K 08. L 09. 22222222 hello 22222222 10. S D S D Pass zero - zero Fail - zero to zero - zero - zero Cancelled - fifty nine to three - two - sixty four T otal - fifty nine to three - two - 11. 
S D S D Pass - zero - zero - zero - zero F ail - zero - zero - zero - zero Cancelled - four hundred and sixteen - sev enty six - 12. zero - one - one - two Cancelled - zero - zero - zero - zero T otal - two hundred and eighty six - nineteen - sev en - 13. forty one to fi ve three hundred and ele ven F ail - one - one to zero two Cancelled - zero - zero to zero zero T otal - 14. zero zero one , MS03 - zero twenty fi ve , MS03 - zero thirty two , MS03 - zero thirty nine , 15. 1b204928 zero zero zero zero zero zero zero zero zero zero zero zero zero zero one se ven ole32 11 16. zero zero zero zero zero zero zero zero two se ven nine eight F three forty zero zero zero zero zero six four two eight zero one eight 17. c fi ve eight zero three three nine a zero bf eight F ALSE zero zero zero bba3add2 - c229 - 4cdb - 18. Calendaring agent f ailed with error code 0x80070005 while saving appointment . 19. Exit process - break ld - Load module - output ud - Unload module - ignore ser - System error - ignore ibp - Initial breakpoint - 20. Common DB connectors include the DB - nine , DB - fifteen , DB - nineteen , DB - twenty five , DB - thirty sev en , and DB - fifty connectors . 21. T o deli ver interf aces that are significantly better suited to create and process RFC eight twenty one , RFC eight twenty two , RFC nine se venty se ven , and MIME content . 22. int1 , int2 , int3 , int4 , int5 , int6 , int7 , int8 , int9 , 23. se ven _ ctl00 ctl04 ctl01 ctl00 ctl00 24. Http0XX , Http1XX , Http2XX , Http3XX , 25. config file must contain A , B , C , D , E , F , and G . 26. mondo - deb ug mondo - ship motif - deb ug motif - ship sts - deb ug sts - ship Comparing local files to checkpoint files ... 27. Rusb vts . dll Dsaccessbvts . dll Exchmembvt . dll Draino . dll Im trying to deploy a ne w topology , and I keep getting this error . 28. 
Y ou can call me directly at four tw o fi ve se ven zero three sev en three four four or my cell four two fiv e four four four se ven four se ven four or send me a meeting request with all the appropriate information . 29. F ailed zero point zero zero percent < one zero zero one zero zero zero zero Internal . Exchange . ContentFilter . BVT ContentFilter . BVT_log . xml Error ! Filename not specified . 30. C colon backslash o one tw o f c p a r t y backslash d e v one two backslash oasys backslash legac y backslash web backslash HELP 31. src backslash mapi backslash t n e f d e c dot c dot o l d backslash backslash m o z a r t f one backslash e x fiv e 32. copy backslash backslash j o h n f a n four backslash scratch backslash M i c r o s o f t dot S h a r e P o i n t dot 33. T ake a look at h t t p colon slash slash w w w dot granite dot a b dot c a slash access slash email dot 34. backslash bin backslash premium backslash forms backslash r e g i o n a l o p t i o n s dot a s p x dot c s Raj , DJ , 35. Anuraag backslash backslash r a d u r five backslash d e b u g dot one eight zero nine underscore P R two h dot s t s contains 36. p l a t f o r m right bracket backslash left bracket f l a v o r right bracket backslash s e t u p dot e x e 37. backslash x eight six backslash Ship backslash zero backslash A d d r e s s B o o k dot C o n t a c t s A d d r e s 38. Mine is here backslash backslash g a b e h a l l hyphen m o t h r a backslash S v r underscore O f f i c e s v r 39. h t t p colon slash slash teams slash sites slash T A G slash def ault dot aspx As always , any feedback , comments , 40. two thousand and fiv e h t t p colon slash slash news dot com dot com slash i slash n e slash f d slash two zero zero three slash f d 41. backslash i n t e r n a l dot e x c h a n g e dot m a n a g e m e n t dot s y s t e m m a n a g e 42. 
I think Rich’ s post highlights that we could have been more strate gic about how the sum total of XBO X three hundred and sixtys were distributed . 43. 64X64 , 8K , one hundred and eighty four ASSEMBL Y , DIGIT AL VIDEO DISK DRIVE , INTERN AL , 8X , 44. So we are back to Extended MAPI and C++ because . Extended MAPI does not hav e a dual interface VB or VB .Net can read . 45. Thanks , Bor ge Trongmo Hi gurus , Could you help us E2K ASP guys with the following issue ? 46. Thanks J RGR Are you using the LDDM dri ver for this system or the in the b uild XDDM driv er ? 12 47. Btw , you might remember me from our discussion about O W A automation and OW A readiness day a year ago . 48. empidtool . ex e creates HKEY_CURRENT_USER Softw are Microsoft Of fice Common QMPersNum in the registry , queries AD , and the populate the registry with MS employment ID if av ailable else an error code is logged . 49. Thursday , via a joint press release and Microsoft AI Blog, we will announce Microsoft’ s continued partnership with Shell leveraging cloud, AI, and collaboration technology to dri ve industry innov ation and transformation. 50. Actress Fan Bingbing attends the screening of ’Ash Is Purest White (Jiang Hu Er Nv)’ during the 71st annual Cannes Film Festiv al 13
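
As a supplement to Appendix A, the FastSpeech column of Table 5 could be carried into code roughly as follows. This is a minimal sketch, not the authors' implementation: the class and field names (`FFTStackConfig`, `FastSpeechConfig`, `head_dim`, `global_batch_size`) are hypothetical, and only the numeric values come from Table 5. The per-head dimension assumes the hidden size is split evenly across attention heads, which is the common Transformer convention rather than something Table 5 states.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class FFTStackConfig:
    """One stack of feed-forward Transformer (FFT) blocks (names are hypothetical)."""
    layers: int = 6                 # "FFT Layers" in Table 5
    hidden: int = 384               # "FFT Hidden"
    conv1d_kernel: int = 3          # "FFT Conv1D Kernel"
    conv1d_filter_size: int = 1536  # "FFT Conv1D Filter Size" (FastSpeech column)
    attention_heads: int = 2        # "FFT Attention Heads"

    @property
    def head_dim(self) -> int:
        # Assumption: the hidden size is divided evenly among the heads.
        if self.hidden % self.attention_heads != 0:
            raise ValueError("hidden must be divisible by attention_heads")
        return self.hidden // self.attention_heads


@dataclass(frozen=True)
class FastSpeechConfig:
    """FastSpeech column of Table 5 as a configuration object (illustrative only)."""
    phoneme_embedding_dim: int = 384
    phoneme_side: FFTStackConfig = field(default_factory=FFTStackConfig)
    mel_side: FFTStackConfig = field(default_factory=FFTStackConfig)
    duration_predictor_conv1d_kernel: int = 3
    duration_predictor_conv1d_filter_size: int = 256
    dropout: float = 0.1
    batch_size_per_gpu: int = 16
    num_gpus: int = 4

    @property
    def global_batch_size(self) -> int:
        # Table 5 reports the batch size as 64 (16 * 4 GPUs).
        return self.batch_size_per_gpu * self.num_gpus


config = FastSpeechConfig()
print(config.global_batch_size, config.phoneme_side.head_dim)
```

Freezing the dataclasses keeps the published values from being changed by accident; an experiment that deviates from Table 5 would construct a new object with explicit overrides.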
