Hebbian Synaptic Modifications in Spiking Neurons that Learn

In this paper, we derive a new model of synaptic plasticity, based on recent algorithms for reinforcement learning (in which an agent attempts to learn appropriate actions to maximize its long-term average reward). We show that these direct reinforce…

Authors: Peter L. Bartlett, Jonathan Baxter

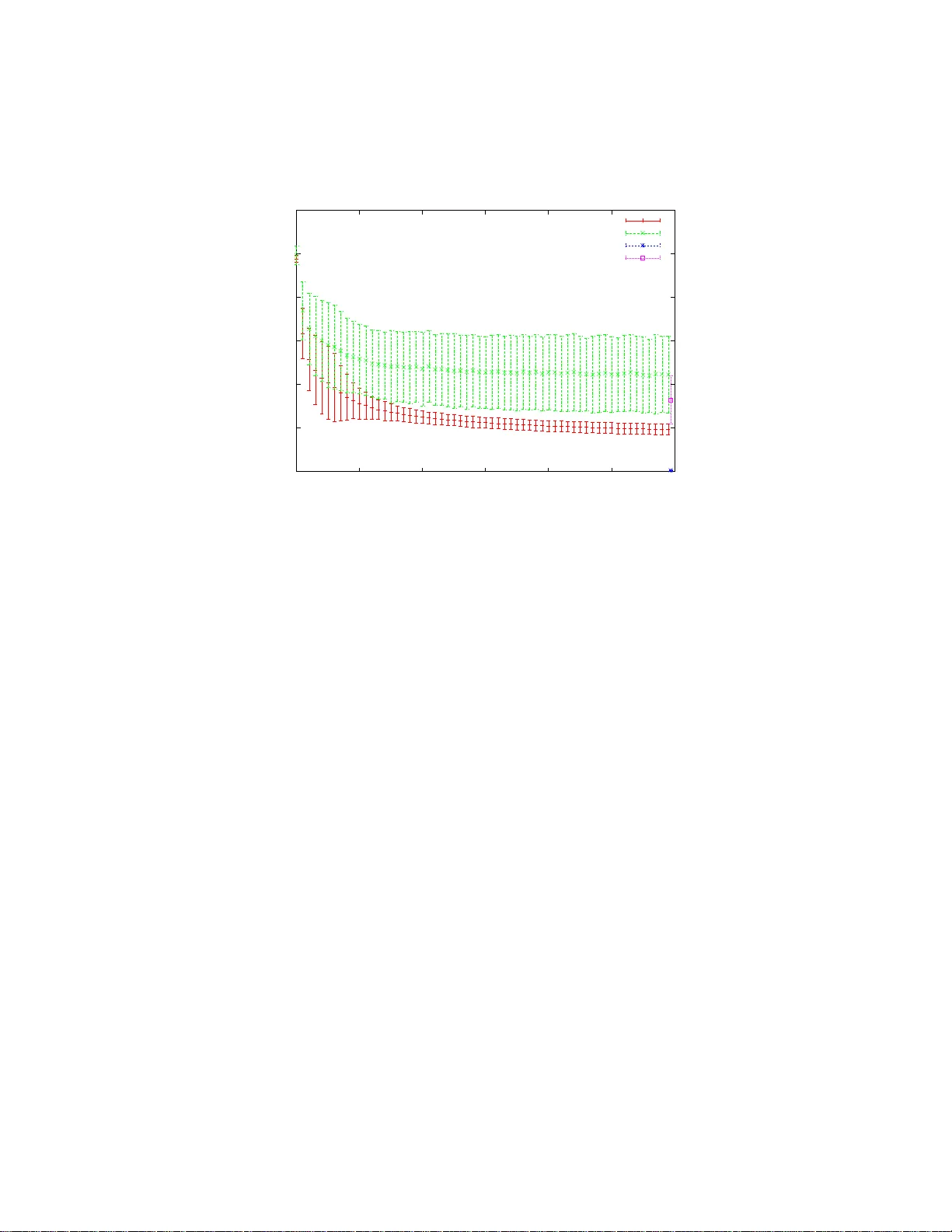

Peter L. Bartlett and Jonathan Baxter
Research School of Information Sciences and Engineering, Australian National University
Peter.Bartlett@anu.edu.au, Jonathan.Baxter@anu.edu.au
November 27, 1999

Abstract

In this paper, we derive a new model of synaptic plasticity, based on recent algorithms for reinforcement learning (in which an agent attempts to learn appropriate actions to maximize its long-term average reward). We show that these direct reinforcement learning algorithms also give locally optimal performance for the problem of reinforcement learning with multiple agents, without any explicit communication between agents. By considering a network of spiking neurons as a collection of agents attempting to maximize the long-term average of a reward signal, we derive a synaptic update rule that is qualitatively similar to Hebb's postulate. This rule requires only simple computations, such as addition and leaky integration, and involves only quantities that are available in the vicinity of the synapse. Furthermore, it leads to synaptic connection strengths that give locally optimal values of the long term average reward. The reinforcement learning paradigm is sufficiently broad to encompass many learning problems that are solved by the brain. We illustrate, with simulations, that the approach is effective for simple pattern classification and motor learning tasks.

1 What is a good synaptic update rule?

It is widely accepted that the functions performed by neural circuits are modified by adjustments to the strength of the synaptic connections between neurons. In the 1940s, Donald Hebb speculated that such adjustments are associated with simultaneous (or nearly simultaneous) firing of the presynaptic and postsynaptic neurons [14]:

> When an axon of cell A ... persistently takes part in firing [cell B], some growth process or metabolic change takes place [to increase] A's efficacy as one of the cells firing B.

Although this postulate is rather vague, it provides the important suggestion that the computations performed by neural circuits could be modified by a simple cellular mechanism. Many candidates for Hebbian synaptic update rules have been suggested, and there is considerable experimental evidence of such mechanisms (see, for instance, [7, 23, 16, 17, 19, 21]).

Hebbian modifications to synaptic strengths seem intuitively reasonable as a mechanism for modifying the function of a neural circuit. However, it is not clear that these synaptic updates actually improve the performance of a neural circuit in any useful sense. Indeed, simulation studies of specific Hebbian update rules have illustrated some serious shortcomings (see, for example, [20]).

In contrast with the "plausibility of cellular mechanisms" approach, most artificial neural network research has emphasized performance in practical applications. Synaptic update rules for artificial neural networks have been devised that minimize a suitable cost function. Update rules such as the backpropagation algorithm [22] (see [12] for a more detailed treatment) perform gradient descent in parameter space: they modify the connection strengths in a direction that maximally decreases the cost function, and hence leads to a local minimum of that function. Through appropriate choice of the cost function, these parameter optimization algorithms have allowed artificial neural networks to be applied (with considerable success) to a variety of pattern recognition and predictive modelling problems.

Unfortunately, there is little evidence that the (rather complicated) computations required for the synaptic update rule in parameter optimization procedures like the backpropagation algorithm can be performed in biological neural circuits. In particular, these algorithms require gradient signals to be propagated backwards through the network.

This paper presents a synaptic update rule that provably optimizes the performance of a neural network, but requires only simple computations involving signals that are readily available in biological neurons. This synaptic update rule is consistent with Hebb's postulate.

Related update rules have been proposed in the past. For instance, the updates used in the adaptive search elements (ASEs) described in [4, 2, 1, 3] are of a similar form (see also [25]). However, it is not known in what sense these update rules optimize performance. The update rule we present here is based on similar foundations to the REINFORCE class of algorithms introduced by Williams [27]. However, when applied to spiking neurons such as those described here, REINFORCE leads to parameter updates in the steepest ascent direction in two limited situations: when the reward depends only on the current input to the neuron and the neuron outputs do not affect the statistical properties of the inputs, and when the reward depends only on the sequence of inputs since the arrival of the last reward value. Furthermore, in both cases the parameter updates must be carefully synchronized with the timing of the reward values, which is especially problematic for networks with more than one layer of neurons.

In Section 2, we describe reinforcement learning problems, in which an agent aims to maximize the long-term average of a reward signal.
Reinforcement learning is a useful abstraction that encompasses many diverse learning problems, such as supervised learning for pattern classification or predictive modelling, time series prediction, adaptive control, and game playing. We review the direct reinforcement learning algorithm we proposed in [5] and show in Section 3 that, in the case of multiple independent agents cooperating to optimize performance, the algorithm conveniently decomposes in such a way that the agents are able to learn independently with no need for explicit communication. In Section 4, we consider a network of model neurons as a collection of agents cooperating to solve a reinforcement learning problem, and show that the direct reinforcement learning algorithm leads to a simple synaptic update rule, and that the decomposition property implies that only local information is needed for the updates. Section 5 discusses possible mechanisms for the synaptic update rule in biological neural networks.

The parsimony of requiring only one simple mechanism to optimize parameters for many diverse learning problems is appealing (cf. [26]). In Section 6, we present results of simulation experiments, illustrating the performance of this update rule for pattern recognition and adaptive control problems.

2 Reinforcement learning

'Reinforcement learning' refers to a general class of learning problems in which an agent attempts to improve its performance at some task. For instance, we might want a robot to sweep the floor of an office; to guide the robot, we provide feedback in the form of occasional rewards, perhaps depending on how much dust remains on the floor. This section explains how we can formally define this class of problems and shows that it includes as special cases many conventional learning problems. It also reviews a general-purpose learning method for reinforcement learning problems.

We can model the interactions between an agent and its environment mathematically as a partially observable Markov decision process (POMDP). Figure 1 illustrates the features of a POMDP. At each (discrete) time step $t$, the agent and the environment are in a particular state $x_t$ in a state space $S$. For our cleaning robot, $x_t$ might include the agent's location and orientation, together with the location of dust and obstacles in the office. The state at time $t$ determines an observation vector $y_t$ (from some set $Y$) that is seen by the agent. For instance, in the cleaning example, $y_t$ might consist of visual information available at the agent's current location. Since observations are typically noisy, the relationship between the state and the corresponding observation is modelled as a probability distribution $\nu(x_t)$ over observation vectors. Notice that the probability distribution depends on the state.

When the agent sees an observation vector $y_t$, it decides on an action $u_t$ from some set $U$ of available actions. In the office cleaning example, the available actions might consist of directions in which to move or operations of the robot's broom.

A mapping from observations to actions is referred to as a policy. We allow the agent to choose actions using a randomized policy. That is, the observation vector $y_t$ determines a probability distribution $\mu(y_t)$ over actions, and the action is chosen randomly according to this distribution. We are concerned with randomized policies that depend on a vector $\theta \in \mathbb{R}^k$ of $k$ parameters (and we write the probability distribution over actions as $\mu(y_t, \theta)$).

The agent's actions determine the evolution of states, possibly in a probabilistic way. To model this, each action determines the probabilities of transitions from the current state to possible subsequent states.
For a finite state space $S$, we can write these probabilities as a transition probability matrix, $P(u_t)$. Here, the $(i, j)$-th entry of $P(u_t)$, written $p_{ij}(u_t)$, is the probability of making a transition from state $i$ to state $j$ given that the agent took action $u_t$ in state $i$. In the office, the actions chosen by the agent determine its location and orientation and the location of dust and obstacles at the next time instant, perhaps with some random element to model the probability that the agent slips or bumps into an obstacle.

Finally, in every state, the agent receives a reward signal $r_t$, which is a real number. For the cleaning agent, the reward might be zero most of the time, but take a positive value when the agent removes some dust.

[Figure 1: Partially observable Markov decision process (POMDP). Labels: environment (state $x_t$; state transition $P(u_t)$; observation process $\nu(x_t)$; reward process), agent (parameters $\theta$; policy $\mu(\theta, y_t)$), observation $y_t$, reward $r_t$, action $u_t$.]

The aim of the agent is to choose a policy (that is, the parameters that determine the policy) so as to maximize the long-term average of the reward,

$$\eta = \lim_{T \to \infty} E\left[ \frac{1}{T} \sum_{t=1}^{T} r_t \right]. \tag{1}$$

(Here, $E$ is the expectation operator.) This problem is made more difficult by the limited information that is available to the agent. We assume that at each time step the agent sees only the observations $y_t$ and the reward $r_t$ (and is aware of its policy and the actions $u_t$ that it chooses to take). It has no knowledge of the underlying state space, how the actions affect the evolution of states, how the reward signals depend on the states, or how the observations depend on the states.

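The ingredients above (hidden state, noisy observation, randomized policy, transition, reward) and the average in Equation (1) can be made concrete with a small simulation. The following is a minimal sketch, not taken from the paper: the two-state environment, the 0.8 observation accuracy, the flip probabilities, the reward rule and the function name `average_reward` are all illustrative assumptions.

```python
import math
import random


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def average_reward(theta, steps=20000, seed=0):
    """Monte Carlo estimate of eta in Equation (1) for a toy two-state POMDP."""
    rng = random.Random(seed)
    x = 0          # hidden state x_t in S = {0, 1}
    total = 0.0
    for _ in range(steps):
        # Observation process nu(x_t) (assumed): state seen correctly with prob. 0.8.
        y = x if rng.random() < 0.8 else 1 - x
        # Randomized policy mu(y_t, theta): Pr(u_t = 1) = sigmoid(theta[y_t]).
        u = 1 if rng.random() < sigmoid(theta[y]) else 0
        # Reward process (assumed): 1 when the action matches the hidden state.
        matched = (u == x)
        total += 1.0 if matched else 0.0
        # State transition P(u_t) (assumed): a matching action makes a flip less likely.
        flip_prob = 0.1 if matched else 0.5
        if rng.random() < flip_prob:
            x = 1 - x
    return total / steps
```

In this toy environment a policy that follows its observation, such as `theta = [-3.0, 3.0]`, should obtain a clearly higher average reward than the uninformed `theta = [0.0, 0.0]`, even though the policy never sees the state `x` itself.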
2.1 Other learning tasks viewed as reinforcement learning

Clearly, the reinforcement learning problem described above provides a good model of adaptive control problems, such as the acquisition of motor skills. However, the class of reinforcement learning problems is broad, and includes a number of other learning problems that are solved by the brain. For instance, the supervised learning problems of pattern recognition and predictive modelling require labels (such as an appropriate classification) to be associated with patterns. These problems can be viewed as reinforcement learning problems with reward signals that depend on the accuracy of each predicted label. Time series prediction, the problem of predicting the next item in a sequence, can be viewed in the same way, with a reward signal that corresponds to the accuracy of the prediction. More general filtering problems can also be viewed in this way. It follows that a single mechanism for reinforcement learning would suffice for the solution of a considerable variety of learning problems.

2.2 Direct reinforcement learning

A general approach to reinforcement learning problems was presented recently in [5, 6]. Those papers considered agents that use parameterized policies, and introduced general-purpose reinforcement learning algorithms that adjust the parameters in the direction that maximally increases the average reward. Such algorithms converge to policies that are locally optimal, in the sense that any further adjustment to the parameters in any direction cannot improve the policy's performance. This section reviews the algorithms introduced in [5, 6]. The next two sections show how these algorithms can be applied to networks of spiking neurons.

The direct reinforcement learning approach presented in [5], building on ideas due to a number of authors [27, 9, 10, 15, 18], adjusts the parameters $\theta$ of a randomized policy that, on being presented with the observation vector $y_t$, chooses actions according to a probability distribution $\mu(y_t, \theta)$. The approach involves the computation of a vector $z_t$ of $k$ real numbers (one component for each component of the parameter vector $\theta$) that is updated according to

$$z_{t+1} = \beta z_t + \frac{\nabla \mu_{u_t}(y_t, \theta)}{\mu_{u_t}(y_t, \theta)}, \tag{2}$$

where $\beta$ is a real number between 0 and 1, $\mu_{u_t}(y_t, \theta)$ is the probability of the action $u_t$ under the current policy, and $\nabla$ denotes the gradient with respect to the parameters $\theta$ (so $\nabla \mu_{u_t}(y_t, \theta)$ is a vector of $k$ partial derivatives). The vector $z_t$ is used to update the parameters, and can be thought of as an average of the 'good' directions in parameter space in which to adjust the parameters if a large value of reward occurs at time $t$. The first term in the right-hand side of (2) ensures that $z_t$ remembers past values of the second term. The numerator in the second term is in the direction in parameter space which leads to the maximal increase of the probability of the action $u_t$ taken at time $t$. This direction is divided by the probability of $u_t$ to ensure more "popular" actions don't end up dominating the overall update direction for the parameters. Updates to the parameters correspond to weighted sums of these normalized directions, where the weighting depends on future values of the reward signal. Theorems 3 and 6 in [5] show that if $\theta$ remains constant, the long-term average of the product $r_t z_t$ is a good approximation to the gradient of the average reward with respect to the parameters, provided $\beta$ is sufficiently close to 1. It is clear from Equation (2) that as $\beta$ gets closer to 1, $z_t$ depends on measurements further back in time.
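To illustrate Equation (2), the sketch below computes the normalized gradient and the trace update for one particular policy. The policy is an assumption made for the example: a two-action (Bernoulli) policy with $\Pr(u = 1) = \sigma(\theta \cdot y)$ and $\sigma(a) = 1/(1 + e^{-a})$, for which $\nabla \mu_u / \mu_u = (u - \sigma(\theta \cdot y))\, y$. The function names `score` and `update_trace` are hypothetical.

```python
import math


def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))


def score(theta, y, u):
    """grad mu_u(y, theta) / mu_u(y, theta) for the assumed Bernoulli policy."""
    p = sigmoid(sum(t * yi for t, yi in zip(theta, y)))  # Pr(u = 1)
    return [(u - p) * yi for yi in y]


def update_trace(z, theta, y, u, beta):
    """Equation (2): z_{t+1} = beta * z_t + grad mu / mu."""
    g = score(theta, y, u)
    return [beta * zi + gi for zi, gi in zip(z, g)]
```

Unrolling the recursion shows that a term added $k$ steps ago is weighted by $\beta^k$, which is the sense in which a value of $\beta$ closer to 1 makes $z_t$ depend on measurements further back in time.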
Theorem 4 in [5] shows that, for a good approximation to the gradient of the average reward, it suffices if $1/(1 - \beta)$, the time constant in the update of $z_t$, is large compared with a certain time constant of the POMDP, the mixing time. (It is useful, although not quite correct, to think of the mixing time as the time from the occurrence of an action until the effects of that action have died away.)

This gives a simple way to compute an appropriate direction to update the parameters $\theta$. An on-line algorithm (OLPOMDP) was presented in [6] that updates the parameters $\theta$ according to

$$\theta_t = \theta_{t-1} + \gamma r_t z_t, \tag{3}$$

where the small positive real number $\gamma$ is the size of the steps taken in parameter space. If these steps are sufficiently small, so that the parameters change slowly, this update rule modifies the parameters in the direction that maximally increases the long-term average of the reward.

3 Direct reinforcement learning with independent agents

[Figure 2: POMDP controlled by $n$ independent agents. Labels: environment (state $x_t$; state transition $P(u_t)$; observation processes; reward process), reward $r_t$, and for each of Agent 1, ..., Agent $n$ a policy with its parameters, an observation $y^i_t$ and an action $u^i_t$.]

Suppose that, instead of a single agent, there are $n$ independent agents, all cooperating to maximize the average reward (see Figure 2). Suppose that each of these agents sees a distinct observation vector, and has a distinct parameterized randomized policy that depends on its own set of parameters. This multi-agent reinforcement learning problem can also be modelled as a POMDP by considering this collection of agents as a single agent, with an observation vector that consists of the $n$ observation vectors of each independent agent, and similarly for the parameter vector and action vector.
For example, if the $n$ agents are cooperating to clean the floor in an office, the state vector would include the location and orientation of the $n$ agents, the observation vector for agent $i$ might consist of the visual information available at that agent's current location, and the actions chosen by all $n$ agents determine the state vector at the next time instant. The following decomposition theorem follows from a simple calculation.

Theorem 1. For a POMDP controlled by multiple independent agents, the direct reinforcement learning update equations (2) and (3) for the combined agent are equivalent to those that would be used by each agent if it ignored the existence of the other agents. That is, if we let $y^i_t$ denote the observation vector for agent $i$, $u^i_t$ denote the action it takes, and $\theta^i$ denote its parameter vector, then the update equation (3) is equivalent to the system of $n$ update equations,

$$\theta^i_t = \theta^i_{t-1} + \gamma r_t z^i_t, \tag{4}$$

where the vectors $z^1_t, \ldots, z^n_t \in \mathbb{R}^k$ are updated according to

$$z^i_{t+1} = \beta z^i_t + \frac{\nabla \mu_{u^i_t}(y^i_t, \theta^i)}{\mu_{u^i_t}(y^i_t, \theta^i)}. \tag{5}$$

Here, $\nabla$ denotes the gradient with respect to the agent's parameters $\theta^i$.

Effectively, each agent treats the other agents as a part of the environment, and can update its own behaviour while remaining oblivious to the existence of the other agents. The only communication that occurs between these cooperating agents is via the globally distributed reward, and via whatever influence agents' actions have on other agents' observations. Nonetheless, in the space of parameters of all $n$ agents, the updates (4) adjust the complete parameter vector (the concatenation of the vectors $\theta^i$) in the direction that maximally increases the average reward.
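The calculation behind Theorem 1 is not spelled out in the text. One way to see it, sketched here under the assumption that "independent" means the agents choose their actions independently given their observations (so that the joint policy is a product of the individual policies), is the following.

```latex
% Assumption: the joint policy of the combined agent factorizes over agents.
\mu_{u_t}(y_t, \theta) = \prod_{m=1}^{n} \mu_{u^m_t}(y^m_t, \theta^m),
\qquad \theta = (\theta^1, \ldots, \theta^n).

% The ratio in (2) is the gradient of a logarithm, and the log of a product is a sum:
\frac{\nabla \mu_{u_t}(y_t, \theta)}{\mu_{u_t}(y_t, \theta)}
  = \nabla \log \mu_{u_t}(y_t, \theta)
  = \sum_{m=1}^{n} \nabla \log \mu_{u^m_t}(y^m_t, \theta^m).

% Only the i-th term depends on \theta^i, so the block belonging to agent i is
\nabla_{\theta^i} \log \mu_{u_t}(y_t, \theta)
  = \frac{\nabla_{\theta^i} \mu_{u^i_t}(y^i_t, \theta^i)}{\mu_{u^i_t}(y^i_t, \theta^i)}.
```

The right-hand side is exactly the second term of (5), so the $i$-th block of the combined trace $z_t$ obeys (5), and the $i$-th block of the combined update (3) is (4).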
We shall see in the next section that this convenient property leads to a synaptic update rule for spiking neurons that involves only local quantities, plus a global reward signal.

4 Direct reinforcement learning in neural networks

This section shows how we can model a neural network as a collection of agents solving a reinforcement learning problem, and apply the direct reinforcement learning algorithm to optimize the parameters of the network. The networks we consider contain simple models of spiking neurons (see Figure 3).

[Figure 3: Model of a neuron. Labels: synapse with connection strength $w_j$; presynaptic activity $u^j \in \{0, 1\}$; potential $v = \sum_j w_j u^j$; activity $u \in \{0, 1\}$ with $\Pr(u = 1) = \sigma(v)$.]

We consider discrete time, and suppose that each neuron in the network can choose one of two actions at time step $t$: to fire, or not to fire. We represent these actions with the notation $u_t = 1$ and $u_t = 0$, respectively.¹

¹ The actions can be represented by any two distinct real values, such as $u_t \in \{\pm 1\}$. An essentially identical derivation gives a similar update rule.

We use a simple probabilistic model for the behaviour of the neuron. Define the potential $v_t$ in the neuron at time $t$ as

$$v_t = \sum_j w_j u^j_{t-1}, \tag{6}$$

where $w_j$ is the connection strength of the $j$th synapse and $u^j_{t-1}$ is the activity at the previous time step of the presynaptic neuron at the $j$th synapse. The potential $v$ represents the voltage at the cell body (the postsynaptic potentials having been combined in the dendritic tree). The probability of activity in the neuron is a function of the potential $v$. A squashing function $\sigma$ maps from the real-valued potential to a number between 0 and 1, and the activity $u_t$ obeys the following probabilistic rule:

$$\Pr(\text{neuron fires at time } t) = \Pr(u_t = 1) = \sigma(v_t). \tag{7}$$

We assume that the squashing function satisfies $\sigma(\alpha) = 1/(1 + e^{-\alpha})$.

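Equations (6) and (7) translate almost directly into code. The following is a minimal sketch of a single model neuron; the function names `potential` and `fire` and the example weights are illustrative assumptions rather than anything specified in the paper.

```python
import math
import random


def sigmoid(alpha):
    """Squashing function sigma(alpha) = 1 / (1 + exp(-alpha))."""
    return 1.0 / (1.0 + math.exp(-alpha))


def potential(weights, presyn_activity):
    """Equation (6): v_t = sum_j w_j * u^j_{t-1}."""
    return sum(w * u for w, u in zip(weights, presyn_activity))


def fire(weights, presyn_activity, rng):
    """Equation (7): sample u_t in {0, 1} with Pr(u_t = 1) = sigma(v_t)."""
    v = potential(weights, presyn_activity)
    u = 1 if rng.random() < sigmoid(v) else 0
    return u, v
```

For example, with weights `[0.5, -1.0, 2.0]` and presynaptic activities `[1, 0, 1]` the potential is 2.5, so repeated calls to `fire` with a `random.Random` instance should produce a spike on roughly a fraction $\sigma(2.5)$ of the time steps.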
We are interested in computation in networks of these spiking neurons, so we need to specify the network inputs, on which the computation is performed, and the network outputs, where the results of the computation appear. To this end, some neurons in the network are distinguished as input neurons, which means their activity $u_t$ is provided as an external input to the network. Other neurons are distinguished as output neurons, which means their activity represents the result of a computation performed by the network.

A real-valued global reward signal $r_t$ is broadcast to every neuron in the network at time $t$. We view each (non-input) neuron as an independent agent in a reinforcement learning problem. The agent's (neuron's) policy is simply how it chooses to fire given the activities on its presynaptic inputs. The synaptic strengths ($w_j$) are the adjustable parameters of this policy. Theorem 1 shows how to update the synaptic strengths in the direction that maximally increases the long-term average of the reward. In this case, we have

$$\frac{\frac{\partial}{\partial w_j} \mu_{u_t}}{\mu_{u_t}} = \begin{cases} \dfrac{\sigma'(v_t)\, u^j_{t-1}}{\sigma(v_t)} & \text{if } u_t = 1, \\[1ex] -\dfrac{\sigma'(v_t)\, u^j_{t-1}}{1 - \sigma(v_t)} & \text{otherwise} \end{cases} \;=\; (u_t - \sigma(v_t))\, u^j_{t-1},$$

where the second equality follows from the property of the squashing function, $\sigma'(\alpha) = \sigma(\alpha)(1 - \sigma(\alpha))$. This results in an update rule for the $j$-th synaptic strength of

$$w_{j,t+1} = w_{j,t} + \gamma r_{t+1} z_{j,t+1}, \tag{8}$$

where the real numbers $z_{j,t}$ are updated according to

$$z_{j,t+1} = \beta z_{j,t} + (u_t - \sigma(v_t))\, u^j_{t-1}. \tag{9}$$

These equations describe the updates for the parameters in a single neuron. The pseudocode in Algorithm 1 gives a complete description of the steps involved in computing neuron activities and synaptic modifications for a network of such neurons.

Algorithm 1: Model of neural network activity and synaptic modification.
- Given: coefficient $\beta \in [0, 1)$, step size $\gamma$, initial synaptic connection strengths $w^i_{j,0}$ of the $i$-th neuron.
- for time $t = 0, 1, \ldots$ do
  - Set activities $u^j_t$ of input neurons.
  - for non-input neurons $i$ do
    - Calculate potential $v^i_{t+1} = \sum_j w^i_{j,t} u^j_t$.
    - Generate activity $u^i_{t+1} \in \{0, 1\}$ using $\Pr(u^i_{t+1} = 1) = \sigma(v^i_{t+1})$.
  - end for
  - Observe reward $r_{t+1}$ (which depends on network outputs).
  - for non-input neurons $i$ do
    - Set $z^i_{j,t+1} = \beta z^i_{j,t} + (u^i_t - \sigma(v^i_t))\, u^j_{t-1}$.
    - Set $w^i_{j,t+1} = w^i_{j,t} + \gamma r_{t+1} z^i_{j,t+1}$.
  - end for
- end for

Suitable values for the quantities $\beta$ and $\gamma$ required by Algorithm 1 depend on the mixing time of the controlled POMDP. The coefficient $\beta$ sets the decay rate of the variable $z_t$. For the algorithm to accurately approximate the gradient direction, the corresponding time constant, $1/(1 - \beta)$, should be large compared with the mixing time of the environment. The step size $\gamma$ affects the rate of change of the parameters. When the parameters are constant, the long term average of $r_t z_t$ approximates the gradient. Thus, the step size $\gamma$ should be sufficiently small so that the parameters are approximately constant over a time scale that allows an accurate estimate. Again, this depends on the mixing time. Loosely speaking, both $1/(1 - \beta)$ and $1/\gamma$ should be significantly larger than the mixing time.

5 Biological Considerations

In modifying the strength of a synaptic connection, the update rule described by Equations (8) and (9) involves two components (see Figure 4). There is a Hebbian component ($u_t u^j_{t-1}$) that helps to increase the synaptic connection strength when firing of the postsynaptic neuron follows firing of the presynaptic neuron.
When the firing of the presynaptic neuron is not followed by postsynaptic firing, this component is 0, while the second component ($-\sigma(v_t) u^j_{t-1}$) helps to decrease the synaptic connection strength.

The update rule has several attractive properties.

Locality. The modification of a particular synapse $w_j$ involves the postsynaptic potential $v$, the postsynaptic activity $u$, and the presynaptic activity $u^j$ at the previous time step. Certainly the postsynaptic potential is available at the synapse. Action potentials in neurons are transmitted back up the dendritic tree [24], so that (after some delay) the postsynaptic activity is also available at the synapse. Since the influence of presynaptic activity on the postsynaptic potential is mediated by receptors at the synapse, evidence of presynaptic activity is also available at the synapse. While Equation (9) requires information about the history of presynaptic activity, there is some evidence for mechanisms that allow recent receptor activation to be remembered [19, 21]. Hence, all of the quantities required for the computation of the variable $z_j$ are likely to be available in the postsynaptic region.

Simplicity. The computation of $z_j$ in (9) involves only additions and subtractions modulated by the presynaptic and postsynaptic activities, and combined in a simple first order filter. This filter is a leaky integrator which models, for instance, such common features as the concentration of ions in some region of a cell or the potential across a membrane. Similarly, the connection strength updates described by Equation (8) involve simply the addition of a term that is modulated by the reward signal.

Optimality. The results from [5], together with Theorem 1, show that this simple update rule modifies the network parameters in the direction that maximally increases the average reward, so it leads to parameter values that locally optimize the performance of the network.

[Figure 4: An illustration of synaptic updates. The presynaptic neuron is on the left, the postsynaptic on the right. The level inside the square in the postsynaptic neuron represents the quantity $z_j$. (The dashed line indicates the zero value.) The symbol r represents the presence of a positive value of the reward signal, which is assumed to take only two values here. (The postsynaptic location of $z_j$ and r is for convenience in the depiction, and has no other significance.) The size of the synapse represents the connection strength. Time proceeds from top to bottom. (a)–(d): A sequence through time illustrating changes in the synapse when no action potentials occur. In this case, $z_j$ steadily decays ((a)–(b)) towards zero, and when a reward signal arrives (c), the strength of the synaptic connection is not significantly adjusted. (e)–(h): Presynaptic action potential (e), but no postsynaptic action potential (f), leads to a larger decrease in $z_j$ (g), and subsequent decrease in connection strength on arrival of the reward signal (h). (i)–(l): Presynaptic action potential (i), followed by postsynaptic action potential (j), leads to an increase in $z_j$ (k) and subsequent increase in connection strength (l).]

There are some experimental results that are consistent with the involvement of the correlation component (the term $(u_t - \sigma(v_t))\, u^j_{t-1}$) in the parameter updates. For instance, a large body of literature on long-term potentiation (beginning with [7]) describes the enhancement of synaptic efficacy following association of presynaptic and postsynaptic activities. More recently, the importance of the relative timing of the EPSPs and APs has been demonstrated [19, 21].
In particular, the postsynaptic firing must occur after the EPSP for enhancement to occur. The backpropagation of the action potential up the dendritic tree appears to be crucial for this [17].

There is also experimental evidence that presynaptic activity without the generation of an action potential in the postsynaptic cell can lead to a decrease in the connection strength [23]. The recent finding [19, 21] that an EPSP occurring shortly after an AP can lead to depression is also consistent with this aspect of Hebbian learning. However, in the experiments reported in [19, 21], the presence of the AP appeared to be important. It is not clear if the significance of the relative timings of the EPSPs and APs is related to learning or to maintaining stability in bidirectionally coupled cells. Finally, some experiments have demonstrated a decrease in synaptic efficacy when the synapses were not involved in the production of an action potential [16].

The update rule also requires a reward signal that is broadcast to all neurons in the network. In all of the experiments mentioned above, the synaptic modifications were observed without any evidence of the presence of a plausible reward signal. However, there is limited evidence for such a signal in brains. It could be delivered in the form of particular neurotransmitters, such as serotonin or noradrenaline, to all neurons in a circuit. Both of these neurotransmitters are delivered to the cortex by small cell assemblies (the raphe nucleus and the locus coeruleus, respectively) that innervate large regions of the cortex. The fact that these assemblies contain few cell bodies suggests that they carry only limited information. It may be that the reward signal is transmitted first electrically from one of these cell assemblies, and then by diffusion of the neurotransmitter to all of the plastic synaptic connections in a neural circuit.
This would save the expense of a synapse delivering the reward signal to every plastic connection, but could be significantly slower. This need not be a disadvantage; for the purposes of parameter optimization, the required rate of delivery of the reward signal depends on the time constants of the task, and can be substantially slower than cell signalling times. There is evidence that the local application of serotonin immediately after limited synaptic activity can lead to long term facilitation [11].

6 Simulation Results

In this section, we describe the results of simulations of Algorithm 1 for a pattern classification problem and an adaptive control problem. In all simulation experiments, we used a symmetric representation, $u \in \{-1, 1\}$. The difference between this representation and the asymmetric $u \in \{0, 1\}$ is a simple transformation of the parameters, but this can be significant for gradient descent procedures.

6.1 Sonar signal classification

Algorithm 1 was applied to the problem of sonar return classification studied by Gorman and Sejnowski [13]. (The data set is available from the U. C. Irvine repository [8].) Each pattern consists of 60 real numbers in the range $[0, 1]$, representing the energy in various frequency bands of a sonar signal reflected from one of two types of underwater objects, rocks and metal cylinders. The data set contains 208 patterns, 97 labeled as rocks and 111 as cylinders. We investigated the performance of a two-layer network of spiking neurons on this task. The first layer of 8 neurons received the vector of 60 real numbers as inputs, and a single output neuron received the outputs of these neurons. This neuron's output at each time step was viewed as the prediction of the label corresponding to the pattern presented at that time step. The reward signal was 0 or 1, for an incorrect or correct prediction, respectively.
The parameters of the algorithm were β = 0.5 and γ = 10⁻⁴. Weights were initially set to random values uniformly chosen in the interval (−0.1, 0.1). Since it takes two time steps for the influence of the hidden unit parameters to affect the reward signal, it is essential for the value of β for the synapses in a hidden layer neuron to be positive. It can be shown that for a constant pattern vector, the optimal choice of β for these synapses is 0.5.

Figure 5: Learning curves for the sonar classification problem. (Plot: error (%) against training epochs; curves: training error, test error, Gorman and Sejnowski training error, Gorman and Sejnowski test error.)

Each time the input pattern changed, the delay through the network meant that the prediction corresponding to the new pattern was delayed by one time step. Because of this, in the experiments each pattern was presented for many time steps before it was changed.

Figure 5 shows the mean and standard deviation of training and test errors over 100 runs of the algorithm plotted against the number of training epochs. Each run involved an independent random split of the data into a test set (10%) and a training set (90%). For each training epoch, patterns in the training set were presented to the network for 1000 time steps each. The errors were calculated as the proportion of misclassifications during one pass through the data, with each pattern presented for 1000 time steps. Clearly, the algorithm reliably leads to parameter settings that give training error around 10%, without passing any gradient information through the network.

Gorman and Sejnowski [13] investigated the performance of sigmoidal neural networks on this data.
Although the networks they used were quite different (since they involved deterministic units with real-valued outputs), the training error and test error they reported for a network with 6 hidden units are also illustrated in Figure 5.

Figure 6: The inverted pendulum.

6.2 Controlling an inverted pendulum

We also considered a problem of learning to balance an inverted pendulum. Figure 6 shows the arrangement: a puck moves in a square region. On the top of the puck is a weightless rod with a weight at its tip. The puck has no internal dynamics.

We investigated the performance of Algorithm 1 on this problem. We used a network with four hidden units, each receiving real numbers representing the position and velocity of the puck and the angle and angular velocity of the pendulum. These units were connected to two more units, whose outputs were used to control the sign of two 10 N thrusts applied to the puck in the two axis directions. The reward signal was 0 when the pendulum was upright, and −1 when it hit the ground. Once the pendulum hit the ground, the puck was randomly located near the centre of the square with velocity zero, and the pendulum was reset to vertical with zero angular velocity.

In the simulation, the square was 5 × 5 metres, the dynamics were simulated in discrete time, with time steps of 0.02 s, the puck bounced elastically off the walls, gravity was 9.8 m s⁻², the puck radius was 50 mm, the puck height was 0, the puck mass was 1 kg, air resistance was neglected, the pendulum length was 500 mm, the pendulum mass was 100 g, the coefficient of friction of the puck on the ground was 5 × 10⁻⁴, and friction at the pendulum joint was set to zero. The algorithm parameters were γ = 10⁻⁶ and β = 0.995.
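The simulation settings listed above can be collected into a small configuration sketch. It is illustrative only: the class and function names (`PendulumConfig`, `thrust`, `reward`, `trace_horizon_steps`) are hypothetical, and the pendulum's equations of motion are not given in the text, so they are not reproduced. The last helper assumes that β acts as the decay factor of a leaky integrator of the form z ← βz + x, in which case 1/(1 − β) is its effective memory in time steps.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendulumConfig:
    """Constants quoted in the text, converted to SI units."""
    square_side_m: float = 5.0
    dt_s: float = 0.02
    gravity_ms2: float = 9.8
    puck_radius_m: float = 0.05
    puck_height_m: float = 0.0
    puck_mass_kg: float = 1.0
    pendulum_length_m: float = 0.5
    pendulum_mass_kg: float = 0.1
    puck_friction: float = 5e-4
    joint_friction: float = 0.0
    thrust_n: float = 10.0
    gamma: float = 1e-6   # step size of the algorithm
    beta: float = 0.995   # decay parameter of the algorithm


def thrust(cfg, out_x, out_y):
    """Map two unit outputs in {-1, +1} to the force applied along each axis."""
    return (cfg.thrust_n * out_x, cfg.thrust_n * out_y)


def reward(hit_ground):
    """Reward is -1 on the step the pendulum hits the ground, 0 otherwise."""
    return -1.0 if hit_ground else 0.0


def trace_horizon_steps(beta):
    """Effective memory of a leaky integrator z <- beta*z + x (an assumption)."""
    return 1.0 / (1.0 - beta)
```

Under that assumption, β = 0.995 gives a memory of roughly 200 time steps, which is about 4 s of simulated time at 0.02 s per step.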
Figure 7: A typical learning curve for the inverted pendulum problem. (Plot: average time between failures, in seconds, against iterations.)

Figure 7 shows a typical learning curve: the average time before the pendulum falls (in a simulation of 100000 iterations = 2000 seconds), as a function of total simulated time. Initial weights were chosen uniformly from the interval (−0.05, 0.05).

7 Further work

The most interesting questions raised by these results are concerned with possible biological mechanisms for update rules of this type. Some aspects of the update rule are supported by experimental results. Others, such as the reward signal, have not been investigated experimentally.

One obvious direction for this work is the development of update rules for more realistic models of neurons. First, the model assumes discrete time. Second, it ignores some features that biological neurons are known to possess. For instance, the location of synapses in the dendritic tree allows timing relationships between action potentials in different presynaptic cells to affect the resulting postsynaptic potential. Other features of dendritic processing, such as nonlinearities, are also ignored by the model presented here. It is not clear which of these features are important for the computational properties of neural circuits.

8 Conclusions

The synaptic update rule presented in this paper requires only simple computations involving only local quantities plus a global reward signal. Furthermore, it adjusts the synaptic connection strengths to locally optimize the average reward received by the network. The reinforcement learning paradigm encompasses a considerable variety of learning problems. Simulations have shown the effectiveness of the algorithm for a simple pattern classification problem and an adaptive control problem.

References

[1] A. G. Barto, C. W. Anderson, and R. S. Sutton. Synthesis of nonlinear control surfaces by a layered associative search network. Biological Cybernetics, 43:175–185, 1982.
[2] A. G. Barto and R. S. Sutton. Landmark learning: An illustration of associative search. Biological Cybernetics, 42:1–8, 1981.
[3] A. G. Barto, R. S. Sutton, and C. W. Anderson. Neuronlike adaptive elements that can solve difficult learning control problems. IEEE Transactions on Systems, Man, and Cybernetics, SMC-13:834–846, 1983.
[4] A. G. Barto, R. S. Sutton, and P. S. Brouwer. Associative search network: A reinforcement learning associative memory. Biological Cybernetics, 40:201–211, 1981.
[5] J. Baxter and P. L. Bartlett. On some algorithms for infinite-horizon policy-gradient estimation. Journal of Artificial Intelligence Research, 14, March 2001.
[6] J. Baxter, P. L. Bartlett, and L. Weaver. Gradient-ascent algorithms and experiments with infinite-horizon, policy-gradient estimation. Journal of Artificial Intelligence Research, 14, March 2001.
[7] T. V. Bliss and T. Lomo. Long-lasting potentiation of synaptic transmission in the dentate area of the anaesthetized rabbit following stimulation of the perforant path. Journal of Physiology (London), 232:331–356, 1973.
[8] E. K. C. Blake and C. Merz. UCI repository of machine learning databases, 1998. http://www.ics.uci.edu/~mlearn/MLRepository.html.
[9] X.-R. Cao and H.-F. Chen. Perturbation Realization, Potentials, and Sensitivity Analysis of Markov Processes. IEEE Transactions on Automatic Control, 42:1382–1393, 1997.
[10] X.-R. Cao and Y.-W. Wan. Algorithms for Sensitivity Analysis of Markov Chains Through Potentials and Perturbation Realization. IEEE Transactions on Control Systems Technology, 6:482–492, 1998.
[11] G. A. Clark and E. R. Kandel. Induction of long-term facilitation in Aplysia sensory neurons by local application of serotonin to remote synapses. Proc. Natl. Acad. Sci. USA, 90:11411–11415, 1993.
[12] T. L. Fine. Feedforward Neural Network Methodology. Springer, New York, 1999.
[13] R. P. Gorman and T. J. Sejnowski. Analysis of hidden units in a layered network trained to classify sonar targets. Neural Networks, 1:75–89, 1988.
[14] D. O. Hebb. The Organization of Behavior. Wiley, New York, 1949.
[15] H. Kimura, K. Miyazaki, and S. Kobayashi. Reinforcement learning in POMDPs with function approximation. In D. H. Fisher, editor, Proceedings of the Fourteenth International Conference on Machine Learning (ICML'97), pages 152–160, 1997.
[16] Y. Lo and M. ming Poo. Activity-dependent synaptic competition in vitro: Heterosynaptic suppression of developing synapses. Science, 254:1019–1022, 1991.
[17] J. C. Magee and D. Johnston. A synaptically controlled, associative signal for Hebbian plasticity in hippocampal neurons. Science, 275:209–213, 1997.
[18] P. Marbach and J. N. Tsitsiklis. Simulation-Based Optimization of Markov Reward Processes. Technical report, MIT, 1998.
[19] H. Markram, J. Lübke, M. Frotscher, and B. Sakmann. Regulation of synaptic efficacy by coincidence of postsynaptic APs and EPSPs. Science, 275:213–215, 1997.
[20] J. F. Medina and M. D. Mauk. Simulations of cerebellar motor learning: Computational analysis of plasticity at the mossy fiber to deep nucleus synapse. The Journal of Neuroscience, 19(16):7140–7151, 1999.
[21] G. qiang Bi and M. ming Poo. Synaptic modifications in cultured hippocampal neurons: dependence on spike timing, synaptic strength, and postsynaptic cell type. The Journal of Neuroscience, 18(24):10464–10472, 1998.
[22] D. Rumelhart, G. Hinton, and R. Williams. Learning representations by back-propagating errors. Nature, 323:533–536, 1986.
[23] P. K. Stanton and T. J. Sejnowski. Associative long-term depression in the hippocampus induced by Hebbian covariance. Nature, 339:215–218, 1989.
[24] G. J. Stuart and B. Sakmann. Active propagation of somatic action potentials into neocortical pyramidal cell dendrites. Nature, 367:69–72, 1994.
[25] G. Tesauro. Simple neural models of classical conditioning. Biological Cybernetics, 55:187–200, 1986.
[26] L. G. Valiant. Circuits of the mind. Oxford University Press, 1994.
[27] R. J. Williams. Simple Statistical Gradient-Following Algorithms for Connectionist Reinforcement Learning. Machine Learning, 8:229–256, 1992.
