There is Limited Correlation between Coverage and Robustness for Deep Neural Networks

Deep neural networks (DNN) are increasingly applied in safety-critical systems, e.g., for face recognition, autonomous car control and malware detection. It is also shown that DNNs are subject to attacks such as adversarial perturbation and thus must…

Authors: Yizhen Dong, Peixin Zhang, Jingyi Wang

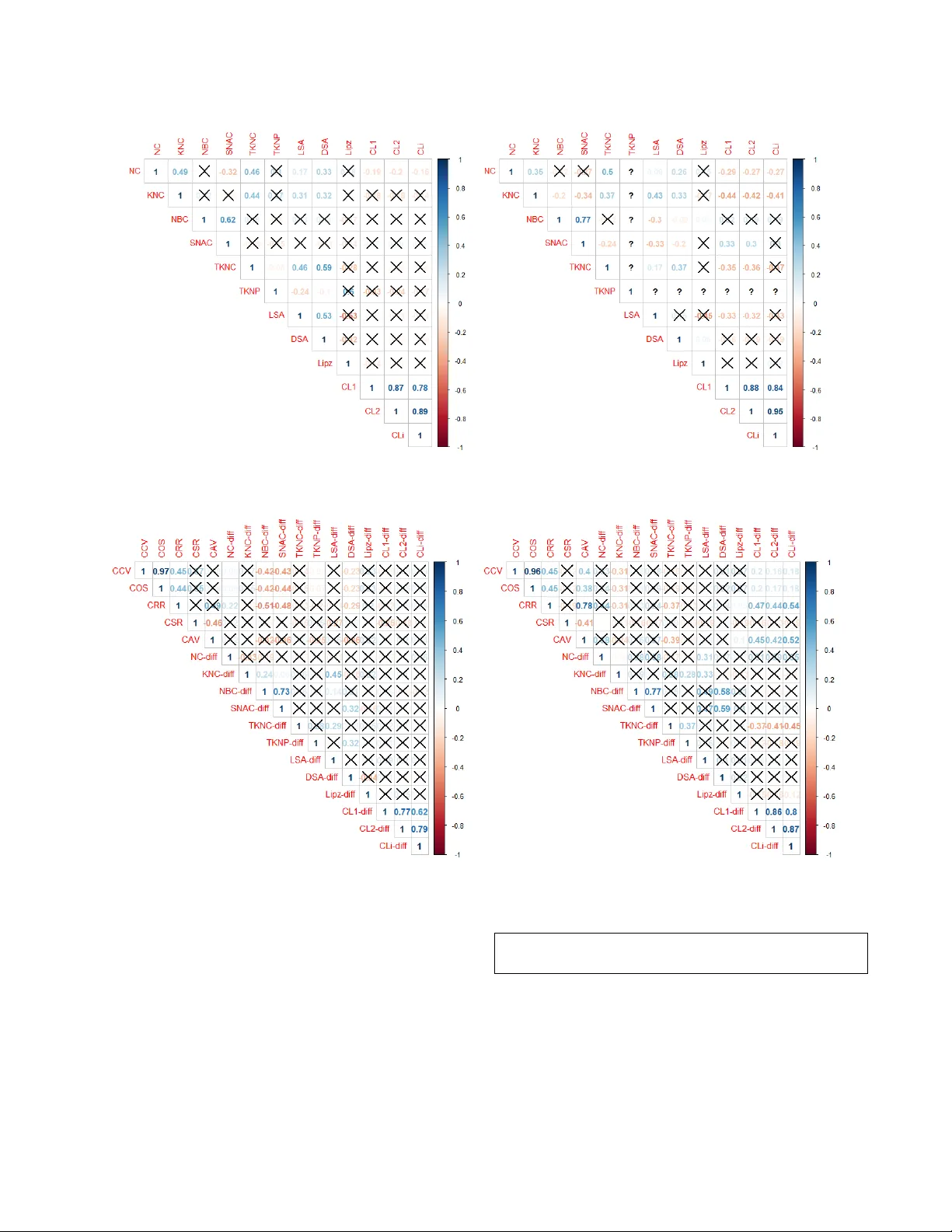

Yizhen Dong∗, Tianjin University, China, dyz386846383@163.com
Peixin Zhang∗, Zhejiang University, China, pxzhang94@zju.edu.cn
Jingyi Wang, National University of Singapore, wangjy@comp.nus.edu.sg
Shuang Liu, Tianjin University, shuang.liu@tju.edu.cn
Jun Sun, Singapore Management University, junsun@smu.edu.sg
Ting Dai, Huawei Corporate, daiting2@huawei.com
Xinyu Wang, Zhejiang University, wangxinyu@zju.edu.cn
Jianye Hao, Tianjin University, jianye.hao@tju.edu.cn
Li Wang, Tianjin University, wangli@tju.edu.cn
Jin Song Dong, National University of Singapore, dongjs@comp.nus.edu.sg

∗ Both authors contributed equally to this research.

ABSTRACT

Deep neural networks (DNN) are increasingly applied in safety-critical systems, e.g., for face recognition, autonomous car control and malware detection. It has also been shown that DNNs are subject to attacks such as adversarial perturbation and thus must be properly tested. Many coverage criteria for DNN have since been proposed, inspired by the success of code coverage criteria for software programs. The expectation is that if a DNN is well tested (and retrained) according to such coverage criteria, it is more likely to be robust. In this work, we conduct an empirical study to evaluate the relationship between coverage, robustness and attack/defense metrics for DNN. Our study is the largest to date and is systematically done based on 100 DNN models and 25 metrics. One of our findings is that there is limited correlation between coverage and robustness, i.e., improving coverage does not help improve the robustness. Our dataset and implementation have been made available to serve as a benchmark for future studies on testing DNN.

ACM Reference Format: Yizhen Dong, Peixin Zhang, Jingyi Wang, Shuang Liu, Jun Sun, Ting Dai, Xinyu Wang, Jianye Hao, Li Wang, and Jin Song Dong. 2019. There is Limited Correlation between Coverage and Robustness for Deep Neural Networks. In Proceedings of ACM Conference (Conference'17). ACM, New York, NY, USA, 12 pages. https://doi.org/10.1145/nnnnnnn.nnnnnnn

1 INTRODUCTION

Recent years have seen rapid development of deep learning techniques as well as applications in a variety of domains like computer vision [12, 36] and natural language processing [7]. There is a growing trend to apply deep learning to safety-critical tasks, such as face recognition [35], self-driving cars [3] and malware detection [50]. Unfortunately, deep neural networks (DNN) have been shown to be vulnerable to attacks and to lack robustness. For instance, they are easily subject to adversarial perturbation [5, 10], i.e., a DNN makes a wrong decision given a carefully crafted small perturbation on the original input. Such attacks have been demonstrated successfully in the physical world [19]. This suggests that DNN, just like software systems, must be properly analyzed and tested before they are applied in safety-critical systems. The software engineering community welcomed the challenge and opportunity.
Multiple software testing approaches, e.g., differential testing [34], mutation testing [27, 45] and concolic testing [39], have been adapted to the context of testing DNN. Inspired by the noticeable success of code coverage criteria in testing traditional software systems, multiple coverage criteria¹, e.g., neuron coverage [34, 42] and its extensions DeepGauge [26], MC/DC [38], and Surprise Adequacy [17], have been proposed. Coverage criteria quantitatively measure how well a DNN is tested and offer guidelines on how to create new test cases. The underlying assumption is that a DNN which is better tested, i.e., with higher coverage, is more likely to be robust.

¹ Metric and criterion are used interchangeably.

This assumption, however, is often not examined or is only evaluated with limited DNN models and structures, making it unclear whether the results generalize. Furthermore, how a test suite improves the quality of a DNN is different from how it improves a software system. A software system is improved by fixing bugs revealed by a test suite. A DNN is typically improved by retraining with the test suite. While existing studies show that retraining often improves a DNN's accuracy to some extent [34, 39], it is not clear whether there is a correlation between the coverage of the test suite and the improvement, i.e., does a set of inputs with higher coverage imply better improvement (on DNN robustness)?

Inspired by the work in [14], we conduct an empirical study to evaluate whether coverage is correlated with the robustness of DNN and with additional metrics which are associated with the quality of DNN [24]. In particular, we would like to answer the following research questions.
• Are there correlations between testing coverage criteria and the robustness of DNN?
• Are there correlations among different coverage criteria themselves?
• Are there correlations between the improvement of coverage criteria and the improvement in terms of robustness after the DNN is retrained?
• Are there metrics that are strongly correlated to the robustness of DNN or the robustness improvement after retraining?

Based on the answers to the above questions, we aim to provide practical guidelines for developing testing methods which contribute towards improving the robustness of DNN.

Conducting such an empirical study is highly non-trivial. First, we need a large set of real-world DNN for the study. However, training realistic DNN often takes a significant amount of time and resources. For instance, it takes 15 GPU hours to train a ResNet-101 model. Our study trained 100 state-of-the-art DNN models² with a variety of architectures on two popular datasets, i.e., MNIST [21] and CIFAR10 [18]. Obtaining these models took a total of 150 GPU hours. Second, we need to obtain adversarial samples by attacking the trained original models. We adopt 3 state-of-the-art attack methods, i.e., FGSM [10], JSMA [32] and C&W [5], to attack the original models, in order to obtain different adversarial sample sets and train different DNN models. Some of the adversarial attack methods, e.g., JSMA and C&W, are known to be time-consuming. It took us a total of 1,810 GPU hours to obtain adversarial samples for all the original models with the 3 attack methods. Last but not least, we need a systematic and automatic way of evaluating the coverage, robustness, and other associated metrics, which is not always straightforward. For instance, there are multiple definitions of robustness in the literature [48, 49], some of which are complicated and expensive to compute (e.g., it took 12 GPU hours to compute a CLEVER score [48] for GoogLeNet-22).
In this work, we develop a self-contained toolkit called DRTest (Deep Robustness Testing), which calculates a comprehensive set of metrics on DNN, including 1) 8 testing coverage criteria proposed for DNN, 2) 2 robustness metrics for DNN, and 3) a set of 15 attack and defense metrics for DNN. A total of 4,150 GPU hours were spent on computing these metrics based on the above-mentioned models.

Our empirical study is conducted as follows. For each dataset, we first train 25 diverse seed models (with state-of-the-art architectures), attack each seed model with different attacking methods to generate adversarial samples (with varying attack parameters), augment the training dataset with the generated adversarial samples, and retrain the model. We apply DRTest to calculate a range of metrics for every model. Afterwards, we apply a standard correlation analysis algorithm, Kendall's rank correlation coefficient [16], to analyze the correlations between the metrics.

² 25 seed models trained with the original dataset and 75 models retrained using the original dataset augmented with adversarial samples.

In summary, we make the following contributions.
• We conducted an empirical study to systematically investigate the correlation between coverage, robustness and related metrics for DNN. Based on the empirical study results, we discuss potential research directions on DNN testing.
• We implemented a self-contained and extensible toolkit which calculates a large set of metrics that can be used to quantitatively measure different aspects of DNN.
• We publish online our models, adversarial samples, and retrained models as well as DRTest, which can be used as a benchmark for future proposals on methods for DNN testing.

We organize the remainder of the paper as follows. Section 2 introduces the background knowledge of this work. Section 3 presents our research methodology. Section 4 shows details on our implementation.
Section 5 reports our findings on the research questions. We present related work in Section 6 and conclude in Section 7.

2 PRELIMINARIES

In this section, we briefly review preliminaries related to this work, which include Deep Neural Networks (DNN), adversarial attacks on DNN, testing methods for DNN, and robustness of DNN.

2.1 Deep Neural Networks

A DNN is an artificial neural network with multiple layers between the input and output layers. It can be denoted as a tuple $D = (I, L, F, T)$ where
• $I$ is the input layer;
• $L = \{L_j \mid j \in \{1, \ldots, J\}\}$ is a set of hidden layers and the output layer, each of which contains $s_j$ neurons; the $k$-th neuron in layer $L_j$ is denoted as $n_{j,k}$ and its value is $v_{j,k}$;
• $F$ is a set of activation functions;
• $T : L \times F \to L$ is a set of transitions between layers.

The output of each neuron is computed by applying the activation function to the weighted sum of its inputs, and the weights represent the strength of the connections between two linked neurons. In this work, we focus on DNN classifiers $D(X) : X \to Y$, where $X$ is a set of inputs and $Y$ is a finite set of labels. Given an input $x \in X$, a DNN classifier transforms information layer by layer and outputs a label $y \in Y$ for the input $x$. In this work, we try to cover a wide range of (including state-of-the-art) DNN architectures. We briefly introduce them in the following.

LeNet [22] is one of the most representative DNN architectures. As shown in Fig. 1, the basic modules include the convolution layers (Conv), the pooling layers (Pool) and the fully connected layers (FC). Conv aims to extract different local features, and Pool ensures that the same feature is obtained after a transformation, i.e., translation, rotation, and scaling. FC then maps the distributed feature representations from Conv and Pool to the label space.

Figure 1: LeNet-5 Structure

VGG [36] is an advanced architecture for extracting CNN features from images. Compared to LeNet, VGG utilizes smaller convolution kernels (e.g., 3 × 3 or 1 × 1) and pooling kernels (e.g., 2 × 2), which significantly increases the expressive power.

GoogLeNet [40] Unlike most popular DNN models, which obtain better accuracy by increasing the depth of the network, GoogLeNet introduces an inception module with a parallel topology to expand the width of the model instead. The inception module helps to extract richer features and reduce dimensions using 1 × 1 convolution kernels, and aggregates convolution results on multiple sizes to obtain features from different scales and accelerate the convergence rate.

ResNet [12] improves traditional sequential CNNs by solving the vanishing gradients problem that arises when expanding the number of layers. It utilizes short-cuts (also called skip connections), which add up the input and output of a layer and then pass the sum to the next layer as input.

2.2 Adversarial Attack

Since Szegedy et al. discovered that DNNs are intrinsically vulnerable to adversarial samples (i.e., sample inputs which are generated through perturbation with the intention to trick a DNN into wrong decisions) [41], many attacking approaches have been developed to craft adversarial samples. In the following, we briefly introduce 3 popular attacking algorithms that we adopt in our work.

FGSM Goodfellow et al. proposed the first and fastest attacking algorithm, the Fast Gradient Sign Method (FGSM) [10], which attempts to maximize the change of probability of the sample's original label by the gradient of its loss. FGSM is straightforward and efficient to implement:

$$x' = x + \epsilon \cdot \mathrm{sign}(\nabla_x J(x, c_x)) \qquad (1)$$

where $J$ is the loss function for training, $c_x$ is the prediction of $x$ and $\epsilon$ is a hyper-parameter to control the degree of perturbation.
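The FGSM update in Equation (1) can be illustrated with a minimal sketch. It is not the implementation used in the study: it assumes a toy binary logistic-regression model (the names `predict_proba` and `fgsm` and the weights are hypothetical), for which the gradient of the cross-entropy loss with respect to the input has the closed form $(p - c_x)\,w$.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(w, b, x):
    """Probability of class 1 under a toy logistic-regression model (assumption)."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(w, b, x, eps):
    """One FGSM step: x' = x + eps * sign(grad_x J(x, c_x))."""
    p = predict_proba(w, b, x)
    c_x = 1 if p >= 0.5 else 0            # c_x is the model's own prediction for x
    # For cross-entropy loss on a logistic model, dJ/dx = (p - c_x) * w.
    grad = [(p - c_x) * wi for wi in w]
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]

# Hypothetical weights and input, chosen only for illustration.
w, b = [2.0, -1.0], 0.0
x = [0.3, 0.2]
x_adv = fgsm(w, b, x, eps=0.3)
print(predict_proba(w, b, x) >= 0.5, predict_proba(w, b, x_adv) >= 0.5)
```

In this toy setting the perturbed input moves across the decision boundary while every coordinate changes by at most `eps`; for a real DNN the gradient would come from automatic differentiation rather than a closed form.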
JSMA The Jacobian-based Saliency Map Attack (JSMA) [32] is a targeted attack method. First, it calculates a saliency map based on the Jacobian matrix of a given sample. Each value of the map represents the impact of the corresponding pixel on the target prediction. Then it greedily picks the most influential features each time and maximizes their values until either an adversarial sample is successfully generated or the number of modified pixels exceeds the bound. We refer the readers to [32] for details.

C&W Carlini et al. [5] aim to craft adversarial samples with high confidence and small perturbation based on a certain distance metric by solving the following optimization problem directly:

$$\arg\min_{x'} \; \|x' - x\|_p + \lambda \cdot f(x', t) \qquad (2)$$

where $\|x' - x\|_p$ is the perturbation according to the $p$-norm measurement, e.g., $L_0$, $L_2$ and $L_\infty$; $t$ is the target label and $\lambda$ is a hyper-parameter to balance the objectives. In order to prevent adversarial samples from taking illegal values, they devised a group of clip functions and loss functions. Readers can refer to [5] for details.

2.3 Testing Deep Neural Networks

A variety of traditional software testing methods like differential testing [4, 29] and concolic testing [11] have been adapted to the context of testing DNN [34, 39] to find adversarial samples (in hope of revealing bugs in DNN). Note that in the setting of DNN testing, a test case is a sample input. In the following, we review some recently proposed coverage criteria for DNN.

Neuron Coverage Neuron coverage [34] is the first coverage criterion proposed for testing DNN. It quantifies the percentage of neurons activated by at least one test case in the test suite. The authors also proposed a differential testing method to generate test cases that improve neuron coverage.

DeepGauge Later, Ma et al. proposed DeepGauge [26], which extends neuron coverage with coverage criteria that are defined based on the activation values at two different levels. For instance, neuron-level coverage first obtains the upper and lower bounds of the values at each neuron during the training stage, divides that range into $k$ sections, and then evaluates whether each section is covered or the boundary has been crossed by the test suite. The layer-level coverage concerns how many neurons have been among the top-$k$ active neurons of their layer at least once (TKNC), or whether the pattern formed by sequences of top-$k$ active neurons on each layer (TKNP) is present.

Surprise Adequacy Based on the idea that a good test suite should be 'surprising' compared to the training set, Kim et al. [17] defined two measures of how surprising a testing input is with respect to the training set. One is based on kernel density estimation and evaluates the likelihood of the testing input with respect to the training set. The other measures the Euclidean distance between the neuron activation traces of a given input and those of the training set. Readers are referred to [17] for details.

2.4 Robustness of Deep Neural Networks

Given the existence of adversarial samples, adversarial robustness becomes an important desired property of a DNN, which measures its resilience against adversarial perturbations. Following the definitions proposed by Katz et al. [15], adversarial robustness can be categorized into local adversarial robustness and global adversarial robustness depending on the context.

Definition 2.1. (Local Adversarial Robustness) Given a sample input $x$, a DNN $D$ and a perturbation threshold $\epsilon$, $D$ is $\epsilon$-local robust iff for any sample input $x'$ such that $\|x - x'\|_p \le \epsilon$, we have $D(x) = D(x')$, where $\|\cdot\|_p$ is the $p$-norm to measure the distance between two sample inputs.

Definition 2.2. (Global Adversarial Robustness) For any sample inputs $x$ and $x'$, a DNN $D$ and two thresholds $\delta$, $\epsilon$, $D$ is $(\delta, \epsilon)$-robust iff for any $\|x - x'\|_p \le \delta$, we have $|D(x) - D(x')| \le \epsilon$.
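To make the local robustness definition concrete, the following is a minimal sketch of a sampling-based check. It is an illustration under stated assumptions, not a verification method from the paper: it uses the $L_\infty$ norm, a hypothetical two-feature `toy_classifier`, and random sampling, which can only falsify local robustness (by finding a counterexample) and can never prove it.

```python
import random

def find_counterexample(classify, x, eps, trials=1000, seed=0):
    """Search the L-infinity ball of radius eps around x for an input
    whose label differs from that of x. Returns it, or None if not found.
    A None result does NOT prove robustness; it only means sampling failed."""
    rng = random.Random(seed)
    label = classify(x)
    for _ in range(trials):
        x_prime = [xi + rng.uniform(-eps, eps) for xi in x]
        if classify(x_prime) != label:
            return x_prime
    return None

def toy_classifier(x):
    """Hypothetical classifier: label 1 iff the two features sum to at least 1."""
    return 1 if x[0] + x[1] >= 1.0 else 0

x = [0.2, 0.2]
# With eps = 0.1 the feature sum never exceeds 0.6, so no counterexample exists.
print(find_counterexample(toy_classifier, x, eps=0.1))
# With eps = 0.5 part of the ball lies beyond the decision boundary.
print(find_counterexample(toy_classifier, x, eps=0.5) is not None)
```

The gap between "no counterexample found" and "robust" is one reason formal verification and dedicated robustness metrics are used instead of naive sampling.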
Local robustness measures the robustness on a specific input, while global robustness measures the robustness on all inputs. Verifying whether a DNN satisfies local or global robustness is an active research area [9, 13, 15], and existing methods do not scale to state-of-the-art DNNs (especially for global robustness). Thus, multiple metrics have been proposed in the literature to empirically evaluate the adversarial robustness of a DNN [8, 44, 48, 49]. In the following, we introduce two widely used adversarial robustness metrics (covering both local [48] and global [49] robustness) which we adopt in this work.

Global Lipschitz Constant The Lipschitz constant [49] measures the sensitivity of a model to adversarial samples. Given a function $f$, its Lipschitz constant is only related to the parameters of $f$. In our context, the function is in the form of a DNN. Its Lipschitz constant can be calculated recursively layer by layer from the output layer all the way to the input layer, taking into consideration the short-cuts in ResNet and the inception module in GoogLeNet. For example, the Lipschitz constant of a DNN which has a structure similar to LeNet and VGG is the product of the Lipschitz constants of all the hidden layers and the output layer.

As an example, we introduce how the Lipschitz constant is calculated for a fully connected layer. Readers are referred to [6] for the calculation of convolution and aggregation layers. Let $v_{j-1}$ and $\hat{v}_{j-1}$ be two inputs of layer $L_j$; $v_j$ and $\hat{v}_j$ be their corresponding outputs; $\omega^{j}_{i,k}$ be the parameter of the connection between the $k$-th neuron in layer $L_j$ and the $i$-th neuron in layer $L_{j-1}$; and $s_j$ be the number of neurons of layer $L_j$. The Lipschitz constant for layer $L_j$ is defined as

$$\alpha = \max_k \sum_{i=1}^{s_j} |\omega^{j}_{i,k}|$$

so that layer $L_j$ satisfies $\|v_j - \hat{v}_j\|_\infty \le \alpha \, \|v_{j-1} - \hat{v}_{j-1}\|_\infty$.

CLEVER Score Another robustness metric we adopt is the CLEVER score (Cross-Lipschitz Extreme Value for nEtwork Robustness) [48], which is a recently proposed attack-independent robustness score for large-scale networks. Given a sample input $x_0$ and a DNN $D$, we say $x_a$ is a perturbed example of $x_0$ with perturbation $\delta$ if $x_a = x_0 + \delta$. Let $\Delta_p = \|\delta\|_p$ denote the $\ell_p$ norm of $\delta$. An adversarial example is a perturbed example $x_a$ that satisfies $D(x_0) \ne D(x_a)$. The minimum $\ell_p$ adversarial distortion of $x_0$, denoted as $\Delta_{p,\min}$, is defined as the smallest $\Delta_p$ over all adversarial examples of $x_0$. The idea is to approximately calculate the lower bound of $\Delta_{p,\min}$ of a given sample utilizing extreme value theory. The lower bound, denoted by $\beta_L$ where $\beta_L \le \Delta_{p,\min}$, is defined such that any perturbed example of $x_0$ with $\|\delta\|_p \le \beta_L$ is not an adversarial example. The CLEVER score has been experimentally evaluated and shown to be consistent with other robustness evaluation metrics, e.g., attack-induced distortion metrics. Readers are referred to [48] for details.

3 METHODOLOGY

3.1 Experiment Design

The overall workflow of our experiment is shown in Figure 2. We follow a common DNN testing process (e.g., by [26, 34]), as shown at the top of the figure, whilst extracting a variety of metrics (as shown in the middle of the figure) which are used for correlation analysis (as shown at the bottom). We start with training a model from a training set using state-of-the-art training methods. Afterwards, various adversarial attacks [5, 10, 32] are applied to generate new test cases. The last step is to augment the training set with the new test cases and obtain a retrained model.
We collect four different groups of metrics to characterize different components of the process, i.e., (1) a set of testing coverage metrics of both the original and the retrained models, (2) a set of attack metrics of different kinds of adversarial attacks on the original models, (3) a set of robustness metrics of both the original models and the retrained models, and (4) a set of defense metrics which measure the differences between the retrained model and the original model. We repeat the above (attack and retrain) process for the 25 seed models, obtain in total 100 models, calculate the corresponding metrics and then conduct correlation analysis on all these metrics. In the following, we illustrate the challenges and our design choices of each part in detail.

Adversarial Attacks We adopt three state-of-the-art DNN attack methods, i.e., FGSM [10], CW [5] and JSMA [32], which are introduced in Section 2.2, to generate adversarial samples. These attack methods are commonly used by previous DNN testing approaches, e.g., [17, 26]. The generated adversarial samples are combined with the original datasets as new (training and testing) datasets for model retraining.

Model Retraining For each original model, we obtain three sets of adversarial samples, one for each attack method. During model retraining, we combine the original training set with one set of the adversarial samples to obtain a new training set. We retrain the original model with the new training set to obtain a retrained model. As a result, we obtain 3 retrained models for each original model, one for each attacking method. We follow the standard partition of 6 : 1 for training and testing on the MNIST dataset and 5 : 1 for the CIFAR10 dataset.

Metric Calculation As our objective is to investigate the correlations between coverage, robustness and other metrics associated with DNN, we conducted a thorough survey of existing metrics and collected 25 metrics in total. These metrics are categorized into four groups, i.e., testing metrics, robustness metrics, attack metrics and defense metrics. They are summarized in Table 1. Note that the attack metrics measure to what extent the attacks are successful and imperceptible, whereas the defense metrics mainly measure how well the retrained models preserve the accuracy of the original model. For brevity, we refer the readers to the original papers for details. We calculate the values of all metrics based on their original definitions and use the default parameters according to their original papers.

Correlation Analysis We conduct correlation analysis, a statistical technique that shows whether and how strongly pairs of variables are correlated, on the metrics. We are particularly interested in observing which metrics are correlated with the robustness of a DNN model. The resulting correlation coefficient is a single value between −1 and +1, where +1 (and −1) means the most positively (and negatively) correlated, and 0 means no correlation. In this work, we adopt a commonly used correlation coefficient, Kendall's τ rank correlation coefficient [16], which is a rank-based correlation that measures the monotonic relationship between two variables, to measure the correlations between different metrics. Note that, compared to alternative methods like the Pearson product-moment correlation coefficient [33], Kendall's τ rank correlation coefficient does not require that the dataset follows a normal distribution or that the correlation is linear.
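As an illustration of the rank correlation described above, here is a minimal pure-Python sketch of Kendall's τ (the τ-b variant, which accounts for ties). It is not the DRTest implementation; the function name and the metric values are hypothetical, and the significance test (p-value) that accompanies the coefficient in the study is omitted.

```python
import math

def kendall_tau_b(xs, ys):
    """Kendall's tau-b: (C - D) / sqrt((C + D + Tx) * (C + D + Ty)),
    where C/D count concordant/discordant pairs and Tx/Ty count pairs
    tied only in xs / only in ys. Returns NaN if a variable is constant."""
    if len(xs) != len(ys):
        raise ValueError("xs and ys must have the same length")
    concordant = discordant = ties_x = ties_y = 0
    n = len(xs)
    for i in range(n):
        for j in range(i + 1, n):
            dx = xs[i] - xs[j]
            dy = ys[i] - ys[j]
            if dx == 0 and dy == 0:
                continue                 # tied in both: contributes to neither term
            elif dx == 0:
                ties_x += 1
            elif dy == 0:
                ties_y += 1
            elif dx * dy > 0:
                concordant += 1
            else:
                discordant += 1
    denom = math.sqrt((concordant + discordant + ties_x) *
                      (concordant + discordant + ties_y))
    if denom == 0:
        return float("nan")              # no variation in at least one variable
    return (concordant - discordant) / denom

# Hypothetical per-model values of one coverage metric and one robustness metric.
coverage = [0.62, 0.70, 0.75, 0.81]
robustness = [1.9, 2.4, 2.1, 2.8]
print(kendall_tau_b(coverage, robustness))
```

Because only the ordering of the values matters, any monotonic rescaling of either metric leaves τ unchanged, which is why no linearity or normality assumption is needed. When one metric is constant across models the coefficient is undefined, which the sketch reports as NaN.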
Since we adopt two popular datasets, MNIST and CIFAR10, to train 4 different families of DNN models, we calculate the correlations of different metrics for the two datasets separately, in order to avoid the potential impact due to the training data.

Figure 2: Overview of experiment design

Table 1: Summary of metrics

| Metric Type | Metric Name | Description |
|---|---|---|
| Testing | NC | Neuron Coverage [34] |
| Testing | KNC | K-multisection Neuron Coverage [26] |
| Testing | SNAC | Strong Neuron Activation Coverage [26] |
| Testing | NBC | Neuron Boundary Coverage [26] |
| Testing | TKNC | Top-k Dominant Neuron Coverage [26] |
| Testing | TKNP | Top-k Dominant Neuron Patterns Coverage [26] |
| Testing | LSA/DSA | Surprise adequacy to training set [17] |
| Robustness | Lipschitz constant | The global Lipschitz constant [49] |
| Robustness | CL1/CL2/CLi | CLEVER score with L1/L2/L∞ norm [48] |
| Attack | MR | Misclassification Ratio [24] |
| Attack | ACAC | Average Confidence of Adversarial Class [24] |
| Attack | ACTC | Average Confidence of True Class [24] |
| Attack | ALD_p | Average L_p Distortion [24] |
| Attack | ASS | Average Structural Similarity [46] |
| Attack | PSD | Perturbation Sensitivity Distance [25] |
| Attack | NTE | Noise Tolerance Estimation [25] |
| Attack | RGB | Robustness to Gaussian Blur [24] |
| Attack | RIC | Robustness to Image Compression [24] |
| Attack | CC | Computation Cost [24] |
| Defense | CAV | Classification Accuracy Variance [24] |
| Defense | CRR/CSR | Classification Rectify/Sacrifice Ratio [24] |
| Defense | CCV | Classification Confidence Variance [24] |
| Defense | COS | Classification Output Stability [24] |

4 IMPLEMENTATION AND CONFIGURATIONS

Our system is implemented based on the TensorFlow framework [1] and the architecture is shown in Figure 3. There are 4 layers, i.e., the data layer, the algorithm layer, the measurement layer and the analysis layer. Our implementation is designed to be extensible, i.e., each layer can be extended with new models and algorithms with little impact on the other layers. Our implementation, including all the data and algorithms, is open source on GitHub³.

³ https://github.com/icse-2020/DRTest

Figure 3: The architecture of our framework

The data layer maintains all data used in our study, including the original models, the adversarial samples generated for original models, and the retrained models. It interacts with all other layers. We use well-known models for image classification tasks in our experiment. To cover a range of different deep learning model structures, we adopt four different model families, including 3 LeNet family models (LeNet-1, LeNet-4 and LeNet-5 [22]), 4 VGG family models (VGG-11, VGG-13, VGG-16, VGG-19 [36]), 4 ResNet family models (ResNet-18, ResNet-34, ResNet-50, ResNet-101 [12]) and 3 GoogLeNet family models (GoogLeNet-12, GoogLeNet-16, GoogLeNet-22 [40]). In total, we have 14 model structures, which are representative image classification models.

Table 2: Hyper-parameters of attack methods

| dataset | attack method | model family | parameter | success rate |
|---|---|---|---|---|
| MNIST | FGSM | LeNet | 0.2, 0.3, 0.4 | 0.94 |
| MNIST | FGSM | VGG | 0.2, 0.3, 0.4 | 0.81 |
| MNIST | FGSM | ResNet | 0.2, 0.3, 0.4 | 0.83 |
| MNIST | FGSM | GoogLeNet | 0.2, 0.3, 0.4 | 0.71 |
| MNIST | CW | LeNet | 9, 10, 11 | 0.91 |
| MNIST | CW | VGG | 9, 10, 11 | 0.81 |
| MNIST | CW | ResNet | 9, 10, 11 | 0.91 |
| MNIST | CW | GoogLeNet | 9, 10, 11 | 0.90 |
| MNIST | JSMA | LeNet | 0.09, 0.1, 0.11 | 0.89 |
| MNIST | JSMA | VGG | 0.09, 0.1, 0.11 | 0.25 |
| MNIST | JSMA | ResNet | 0.09, 0.1, 0.11 | 0.75 |
| MNIST | JSMA | GoogLeNet | 0.09, 0.1, 0.11 | 0.52 |
| CIFAR | FGSM | VGG | 0.01, 0.02, 0.03 | 0.76 |
| CIFAR | FGSM | ResNet | 0.01, 0.02, 0.03 | 0.65 |
| CIFAR | FGSM | GoogLeNet | 0.01, 0.02, 0.03 | 0.75 |
| CIFAR | CW | VGG | 0.1, 0.2, 0.3 | 0.88 |
| CIFAR | CW | ResNet | 0.1, 0.2, 0.3 | 0.90 |
| CIFAR | CW | GoogLeNet | 0.1, 0.2, 0.3 | 0.90 |
| CIFAR | JSMA | VGG | 0.09, 0.1, 0.11 | 0.80 |
| CIFAR | JSMA | ResNet | 0.09, 0.1, 0.11 | 0.79 |
| CIFAR | JSMA | GoogLeNet | 0.09, 0.1, 0.11 | 0.75 |
W e adopt two p opular publicly-available datasets, i.e., MNIST [ 21 ] and CIF AR10 [ 18 ] to train DNN models in our work. MNIST is a set of handwritten digit images. It contains 70 , 000 images in total. Each image in MINIST dataset is single-channel of size 28 ∗ 28 ∗ 1 . CI- F AR10 is a set of color images. It contains 10 classes, each of which has 6 , 000 images, and the input size of each image is 32 ∗ 32 ∗ 3 . The algorithm layer contains a set of algorithms for attacking DNN as well as algorithms for defending DNN through retraining. For each traine d mo del, we adopt thr ee state-of-the-art attack meth- ods (e .g., FGSM, CW and JSMA) to generate adversarial samples. The principle of choosing parameters for each attack is to balance the imperceptibility and success rate of generating adv ersarial samples. For MNIST , we adopt the same parameters from cleverhans [ 31 ] for all three attacks. For CIF AR10, we slightly changed the param- eters of FGSM and CW in order to obtain b etter imperceptibility . The parameters chosen include the attack step size for FGSM, the initial tradeo-constant for tuning the relative importance of size of the perturbation and condence of classication for CW and the maximum percentage of perturbed features for JSMA. T o further avoid bias introduced by hyper-parameters, we run each attack method on the original dataset for 3 times, each time with a dierent hyper-parameter conguration. Then we combine the successful adversarial samples generated from 3 runs of attacks as the adversarial sample set for model retraining. T able 2 shows the details of the hyper-parameter congurations for each attack method, and the column hyperparameter summarizes the hyper- parameter congurations used in each run of attack. During training and retraining, we adopt a learning rate of 0 . 001 , a batch size of 128 for all models in the two datasets. For MNIST , a test accuracy above 98% is accepted in b oth training and retraining. 
For CIF AR10, a test accuracy above 80% is accepted during training process and a test accuracy above 85% is required for retraining. The measurement layer contains all implementation for calculat- ing the 25 metrics shown in T able 1. W e calculate four robustness values, i.e., Global Lipschitz Constant (Lipz) and the CLEVER score (CL1, CL and CLi) for each model. Note that LeNet is not feasible for CIF AR10. In our experiment, since calculate CLEVER score is e x- tremely time-consuming for GoogLeNet, we reduce the number of images to 50 and sampling parameter N b = 50 , as it is r eported that 50 or 100 samples are usually sucient to obtain a r easonably accu- rate robustness estimation [ 48 ]. W e calculate the coverage criteria of dierent DNN models with the same test suite (i.e., the original test suite of MINIST or CIF AR10) and obtain 14 ∗ 4 and 11 ∗ 4 values of each coverage criteria on MNIST and CIF AR10, respectively . Defense Metrics are calculated for all the defense enhanced mod- els, i.e., models after adversarial training, according to their original denitions [ 24 ]. For each dataset, W e obtain 14 ∗ 3 and 11 ∗ 3 values for each defense metric on MINIST and CIF AR10, respectively . At- tack Metrics are calculated for the generated adversarial examples of each attack method, all parameters of attack metrics ar e set based on their original denitions [ 24 ]. W e obtain 14 ∗ 3 and 11 ∗ 3 values for each attack metric on MINIST and CIF AR10, respectively . W e additionally calculate a set of ∆ -metrics, which are denoted as Metric-di. For instance, Lipz-di is the Lipschitz Constant of the retrained model minus that of the original model. W e obtain 14 ∗ 3 and 11 ∗ 3 ∆ -robustness for each robustness metric on MINIST and CIF AR10. Similarly , we calculate ∆ -coverage metrics by subtracting the coverage achiev ed by the augmented test set (i.e., the original test set plus the adversarial samples) from that of the original test set. 
We obtain 14 × 3 and 11 × 3 ∆-coverage values for each coverage metric on MNIST and CIFAR10.

The analysis layer implements the correlation analysis algorithm [16]. We first plot the data to observe the trend and then decide which correlation analysis method to use. The plots show that the data does not follow a linear trend. Therefore, we choose Kendall's τ rank correlation coefficient [16], which assumes neither that the data follows a normal distribution nor that the variables are linearly correlated.

All experiments were conducted on four GPU servers. Server 1 has 1 Intel Xeon 3.50GHz CPU, 64GB of system memory and 2 NVIDIA GTX 1080Ti GPUs. Server 2 has 2 Intel Xeon 2.50GHz CPUs, 126GB of system memory and 4 NVIDIA GTX 1080Ti GPUs. Server 3 has 2 Intel Xeon 2.50GHz CPUs, 96GB of system memory and 4 NVIDIA GTX 1080Ti GPUs. Server 4 has 1 Intel Xeon 2.50GHz CPU, 119GB of system memory and 2 Tesla P100 GPUs. The GPUs on the four servers were not always fully occupied; on average, 6 GPUs were in use during the experiment period. In total, the experiments took more than 6,100 GPU hours to finish.

Table 3 shows the time spent on the different steps, i.e., generating adversarial examples, training and retraining, and metric calculation, for each dataset and model family. The unit is 1 GPU hour. The time for correlation calculation is negligible compared to the other steps. The most time-consuming step is metric calculation, which took 1,350 hours for the ResNet family on CIFAR10. The most time-consuming metrics are the coverage criteria, whose cost varies significantly with the model structure. Adversarial sample generation is also time-consuming.
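As an illustration of the rank-based analysis used in the analysis layer, the following sketch computes Kendall's τ in plain Python. It uses the tie-corrected τ-b variant, which is an assumption here since the text does not say which variant is used. The function name and sample values are hypothetical; this is not the paper's implementation.

```python
import math
from itertools import combinations
from typing import Sequence


def kendall_tau_b(x: Sequence[float], y: Sequence[float]) -> float:
    """Kendall's tau-b: (concordant - discordant) pairs, corrected for ties."""
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    concordant = discordant = ties_x = ties_y = 0
    for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
        dx, dy = x1 - x2, y1 - y2
        if dx == 0:
            ties_x += 1
        if dy == 0:
            ties_y += 1
        if dx * dy > 0:
            concordant += 1
        elif dx * dy < 0:
            discordant += 1
    n_pairs = len(x) * (len(x) - 1) // 2
    denom = math.sqrt((n_pairs - ties_x) * (n_pairs - ties_y))
    if denom == 0:
        # One variable is constant (or fewer than two points): tau is undefined.
        return float("nan")
    return (concordant - discordant) / denom


# Hypothetical coverage and robustness values for four models.
coverage = [0.2, 0.4, 0.6, 0.8]
robustness = [0.9, 0.7, 0.8, 0.1]
tau = kendall_tau_b(coverage, robustness)
print(round(tau, 3))
```

Because only the ordering of the values matters, a monotone but non-linear relation such as y = x³ still gives τ = 1. In practice a library routine such as `scipy.stats.kendalltau` would normally be preferred, since it also reports a p-value.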
There is Limited Correlation between Coverage and Robustness for Deep Neural Networks. Conference'17, July 2017, Washington, DC, USA

Table 3: Time for different steps in the experiment (GPU hours)

dataset    model family    generate AE    train & retrain    metric calc
MNIST      LeNet           <0.5           <0.5               <0.5
MNIST      VGG             160            6                  420
MNIST      ResNet          240            12                 1200
MNIST      GoogLeNet       120            25                 300
CIFAR10    VGG             540            12                 550
CIFAR10    ResNet          450            45                 1350
CIFAR10    GoogLeNet       300            50                 330

5 FINDINGS

5.1 Research Questions

RQ1: Are there any correlations between existing test coverage criteria and the robustness of the DNN models?

To answer this question, we conduct correlation analysis on the coverage metrics and the robustness metrics of all models on the original test set. The results are shown in Figure 4. The number and the color represent the strength of the correlation. The correlation value is a number between −1 and 1. A positive number (and blue color) indicates a positive correlation and a negative number (and red color) indicates a negative correlation. The larger the absolute value, the stronger the correlation; likewise, the darker the color, the stronger the correlation. We measure the p-value on our sample data set and regard a p-value greater than 0.05 as insignificant. An "X" mark means that we cannot make a decision because the p-value is larger than 0.05 (i.e., insignificant), and a question mark "?" means that there are no valid results because the standard deviation of the data is 0. The same notations are used in subsequent figures as well.

We summarize our observations from Fig. 4 in two aspects below, reading correlation strength on the Guilford scale [2]: an absolute value of less than 0.4 means that the (positive or negative) correlation is low; an absolute value of 0.4-0.7 means that the correlation is moderate; and otherwise the correlation is high or very high (i.e., 0.7-0.9 or above 0.9, respectively).
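The cell notation and strength scale described above can be sketched as a small labelling function. This is a sketch under assumptions: the function name `label_cell` is hypothetical, a NaN coefficient stands in for the zero-variation case, and the treatment of values falling exactly on 0.7 or 0.9 is a choice made here, since the text leaves those boundaries open.

```python
import math


def label_cell(tau: float, p_value: float, alpha: float = 0.05) -> str:
    """Map a correlation coefficient and its p-value to a figure-style label."""
    if math.isnan(tau):
        return "?"  # no valid result, e.g. one variable has zero variation
    if p_value > alpha:
        return "X"  # insignificant: no decision can be made
    strength = abs(tau)
    if strength < 0.4:
        level = "low"
    elif strength < 0.7:
        level = "moderate"
    elif strength < 0.9:  # assumption: exactly 0.7 counts as "high"
        level = "high"
    else:  # assumption: exactly 0.9 counts as "very high"
        level = "very high"
    sign = "positive" if tau > 0 else "negative"
    return f"{level} {sign}"


# Hypothetical coefficient and p-value pairs.
for tau, p in [(-0.29, 0.01), (0.55, 0.03), (0.80, 0.20), (float("nan"), 0.5)]:
    print(tau, p, "->", label_cell(tau, p))
```

Under this reading, the neuron-coverage values between −0.16 and −0.29 reported for the CLEVER score fall in the "low negative" band whenever they are significant.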
First, coverage and robustness metrics are either not significantly correlated or negatively correlated. In particular, neuron coverage is negatively correlated (with a value between −0.16 and −0.29) with the CLEVER score and is not significantly correlated with the Lipschitz constant for both MNIST and CIFAR10. Moreover, KNC, TKNC and LSA also show negative correlations with the CLEVER score on CIFAR10. This suggests that a DNN is less robust if the test set achieves a larger neuron coverage (although the strength of the correlation is weak), which is unexpected. Second, there is no significant correlation between any of the other coverage criteria and any of the robustness metrics on the MNIST dataset. For the CIFAR10 dataset, positive correlation is observed only between SNAC and the CLEVER score, and its strength is low. This result suggests that a DNN model which achieves high coverage is not necessarily robust, and vice versa.

We further investigate the correlation among the test coverage criteria themselves. It can be observed from Fig. 4 that NC, KNC, TKNC, LSA and DSA are positively correlated with each other. NBC and SNAC are correlated with each other with medium or high strength, whereas they have no (or weak negative) correlation with the other metrics. The results are consistent with the observations reported in [26] and [17], which propose these coverage criteria. This suggests that although the coverage criteria are defined differently, they are in general correlated (except for the boundary coverage criteria).

We have the following answer to RQ1.

Different coverage criteria are correlated with each other. There is limited correlation between the coverage criteria and the robustness metrics.

RQ2: Does retraining with new test cases which improve the coverage criteria improve the robustness of a DNN model?
To answer this question, we conduct correlation analysis on the difference in coverage criteria and the difference in robustness metrics before and after retraining. The results are shown in Fig. 5. We observe that there is no correlation between the difference in any coverage criterion and the difference in any robustness metric, except for a negative correlation between TKNC-diff and the CLEVER scores for all the CIFAR10 models. This result casts a shadow over existing testing approaches, as they are designed to generate test cases for high coverage, in the hope that such test cases can be used to improve the adversarial robustness of DNN models. We thus have the following answer to RQ2.

Retraining with new test cases which improve the coverage criteria does not necessarily improve the model robustness.

RQ3: Are there metrics that are strongly correlated to the improvement of model robustness?

The above results show that existing test coverage criteria have limited correlation with the robustness of DNN models and that testing methods based on improving coverage do not necessarily improve the robustness of DNN models. The question is then: are there metrics which are correlated with the improvement of model robustness? To answer this question, we systematically conduct correlation analysis between all metrics (or the differences in the metrics before and after retraining) and the improvement of model robustness.

The correlations between the defense metrics and the improvement of robustness are shown in Fig. 5. We observe that there are positive correlations between the difference in the CLEVER scores and all the defense metrics on CIFAR10. In particular, the correlation is of medium level for CRR and CAV. CRR and CAV measure how much the defense-enhanced model preserves the functionality of the original model [24].
Intuitively, this indicates that a defense method leads to more robustness improvement if the original model is better preserved by the defense-enhanced model. Furthermore, given the huge cost of computing robustness metrics, such positive correlations potentially provide a lightweight way of estimating the effectiveness of a model enhancement method.

We additionally analyze the correlation between the attack metrics and the improvement of the coverage criteria. We have the following observations from the results shown in Fig. 6. There are correlations between the differences of TKNP and KNC and the attack metrics. Furthermore, NTE is positively correlated with KNC-diff, NBC-diff and SNAC-diff. RGB is positively correlated with NC-diff, NBC-diff and SNAC-diff. Intuitively, NTE and RGB measure the robustness of adversarial samples, which implies that more robust adversarial samples contribute more to the improvement of the coverage metrics. Lastly, there is no correlation between the robustness differences and the attack metrics for the CIFAR10 dataset. For the MNIST dataset, we observe negative correlations between the CLEVER score differences and ACAC, ALD2, RIC and NTE, and positive correlations with ASS and ACTC. These observations indicate that more confident, perceptible and robust adversarial samples contribute more to improving the coverage criteria.

Figure 4: Test coverage vs. robustness metrics. (a) MNIST; (b) CIFAR10.

Figure 5: Defense Metrics vs. Coverage Criteria Differences vs. Robustness Differences. (a) MNIST; (b) CIFAR10.

We have the following answer to RQ3.

Some defense metrics are positively correlated to the improvement of model robustness.

RQ4: Are the correlation results consistent across different datasets, model families and correlation analysis methods?
This question examines whether the correlation results are universal or instead vary across different datasets, model families or correlation analysis methods. To answer this question, we systematically conduct the correlation analyses using data obtained from different datasets and model families. For lack of space, we omit the details and refer the reader to the supplementary materials available at the online repository.

Figure 6: Coverage Criterion Difference vs. Robustness Difference vs. Attack Metric. (a) MNIST; (b) CIFAR10.

Overall, while the correlations between testing coverage and robustness on MNIST and CIFAR10 are mostly consistent, we do observe that some correlations vary slightly across the two datasets. For instance, the attack metrics (except ALDinf) show correlation with CL-diff on MNIST but not on CIFAR10. The defense metrics show strong correlation with robustness and robustness-diff on CIFAR10, which is not the case on MNIST.

There are also inconsistent correlation results across different model families. The correlation results on the LeNet, VGG and ResNet families are consistent, which is expected since they have similar model structures. However, it is surprising that models in the GoogLeNet family often show correlation results opposite to those of the LeNet, VGG and ResNet families, especially for the correlation between the attack metrics and the improvement of model robustness. This can be explained by GoogLeNet having a rather different architecture from LeNet, VGG and ResNet (GoogLeNet tends to have more neurons in a layer instead of having more layers).

The above-mentioned inconsistency suggests that the correlation may depend on the dataset and, more noticeably, the model architecture, which further complicates the picture.
Lastly, we apply different correlation analysis algorithms (including the Pearson product-moment correlation [33] and Spearman's rank-order correlation [37]) to observe whether the results are consistent. Overall, although the results are not identical, the differences are not significant and the results (e.g., whether a correlation is positive or negative, or whether it is strong or weak) remain consistent. We choose to present the Kendall correlation coefficients in this work, as Kendall's τ makes the fewest assumptions about the underlying data. The results of the other correlation analysis algorithms are presented in the supplementary materials online. We have the following answer to RQ4.

The correlation results are consistent across different correlation analysis algorithms but may vary across different datasets or model families.

5.2 Explanation

In the following, we aim to interpret and 'explain' the above-mentioned results. These explanations must, however, be taken with a grain of salt, as they should be properly examined in the future.

First, the reason that existing coverage criteria are not correlated with robustness may simply be that these coverage criteria are too weak to differentiate robust from non-robust DNN models. It has been shown that high neuron coverage can be easily achieved with a small number of samples [38], and similar conclusions are given by Odena et al. [30] for the coverage criteria proposed in DeepGauge, such as neuron boundary coverage. This finding is confirmed by another recent work [23], which reports that adversarial examples are pervasively distributed in the space divided by coverage criteria. The work [23] also suggests that using structural coverage to measure neural network robustness can be questionable.

Second, our results suggest that retraining with the test cases does not necessarily improve robustness.
For software systems, a test case which reveals a bug naturally leads to bug fixing, which "definitely" improves the 'robustness' of the system. This is not certain for DNN models, because the retrained model could be rather different from the original model, i.e., it is like a new model, due to how such models are trained (i.e., through optimization techniques which embody a lot of non-determinism and carry little theoretical guarantee).

Third, we consider it intuitive that defense metrics are correlated with robustness, as these defense metrics are indeed less formal ways of measuring robustness (i.e., in terms of how well a DNN model defends against adversarial attacks).

As for the answer to RQ4, we view the consistency between different correlation analysis algorithms positively, as it shows that our results are not an artifact of a particular 'biased' correlation analysis algorithm. The second part of the answer may suggest that a testing method has to be tailored to different DNN architectures.

5.3 Discussion

The results discussed so far are mostly negative, i.e., only a few defense metrics are correlated with the improvement of model robustness, and existing testing methods designed based on coverage have limited effectiveness in improving the robustness of DNN models. The results question the usefulness of the coverage criteria proposed for DNN models. Indeed, a DNN that is well tested (and improved by retraining) through existing testing methods might yield a new model with higher empirical accuracy on the testing set. However, the new model is not necessarily more robust than the original model against adversarial perturbations. In fact, a recent finding shows that DNN model robustness may be at odds with accuracy, since robust classifiers learn fundamentally different feature representations than standard classifiers [43]. For DNN models to be deployed in safety-critical applications, we believe that robustness is as important a property as accuracy, if not more so.

The real question thus remains: how should we test DNN models and make use of the testing results so that the robustness of the DNN models is improved? Or are there ways to improve the robustness of DNN models in general? We do not have a clear answer, and this remains an open question. It is possible that there are other coverage criteria which are correlated with model robustness, or whose associated testing methods can help improve model robustness. It is, however, important that no matter what coverage criterion is proposed, it is thoroughly analyzed to show its effect on model robustness.

Our view is that finding adversarial samples should not be the end of DNN testing. Rather, testing of DNN models should be designed with the model enhancement methods in mind, i.e., a testing method should produce test cases which are useful to the model enhancement methods. For instance, given the positive correlation between robustness and the defense metrics, we might want to generate test cases which help improve defense metrics such as CAV and CCV.

5.4 Threats to validity

First, there may be threats to validity due to the selected datasets and model structures. In this work, we regard each DNN model as a program of the same functionality and calculate different metrics on these models. We assume the metrics are valid across different DNN model structures and conduct correlation analysis on the obtained metrics. However, some metrics are not applicable to certain model structures (e.g., MC/DC is not applicable to ResNet and GoogLeNet).
Besides, since each model family has a limited number of models and datasets to analyze, the results may be biased towards these specific datasets and model structures, even though we adopt the most popular datasets and state-of-the-art model structures.

Second, there may be threats to validity due to the limited number of datasets, models and attack methods adopted. In this work, we use 14 different DNN model structures, 3 adversarial attack methods, 100 models, 2 datasets, and 25 different metrics. While we are working on more datasets, model structures, etc., we could not significantly increase the scale due to the huge cost (more than 6,100 GPU hours) of the empirical study. For more statistically significant results, more data points are helpful (or even necessary). We thus call upon the open source community to jointly scale up our study. To make sure that our correlation analysis results are valid, we only report the results beyond a certain significance level by measuring the p-value [47].

Third, the evaluation of DNN model robustness in general is still an open and challenging research problem [51]. Although we adopt the most popular robustness metrics, there might still be a threat to validity regarding the extent to which these metrics actually reflect the robustness of the models.

6 RELATED WORK

In this section, we review related work, with a focus on recent progress on 1) testing approaches which propose different testing criteria for DNN models, 2) different robustness metrics to evaluate the quality of DNN models, and 3) state-of-the-art adversarial attack and defense methods.

Testing of deep learning models. Several recent papers proposed different coverage criteria for evaluating the effectiveness of a test set, along with different methods to generate test cases to improve the coverage criteria.
For instance, DeepXplore [34] proposed the first testing criterion for DNN models, i.e., Neuron Coverage (NC), which calculates the percentage of activated neurons (w.r.t. an activation function) among all neurons. Later, DeepGauge [26] extended the idea and proposed a series of more fine-grained, multi-granularity testing criteria at both the neuron level and the layer level. Inspired by the MC/DC test criteria from traditional software testing, Sun et al. proposed four test criteria based on syntactic connections between neurons in adjacent layers and a concolic testing strategy to systematically improve the MC/DC coverage of DNN models [39]. More recently, two surprise adequacy criteria [17] were proposed to measure the level of 'surprise' of a new test case with respect to the training set, e.g., by measuring the distance between their activation vectors. Our work implemented and reviewed most of the above-mentioned coverage criteria for a comprehensive evaluation. Note that some are omitted as they are extremely costly to compute.

Robustness of deep learning models. In the machine learning and formal verification communities, multiple metrics are used to measure the robustness of DNN models. The Lipschitz constant was proved to be useful as a metric for feed-forward neural networks by Xu and Mannor [49]. Szegedy et al. [41] leveraged the product of the Lipschitz constants of each layer as a measure of DNN robustness, and Parseval Networks [6] were proposed to achieve improved robustness by maintaining a small Lipschitz constant at every hidden layer.

Adversarial manipulation, which looks at the required distortion of adversarial samples, is another direction. Hein and Andriushchenko
aimed to give a formal guarantee on the robustness of a classifier by obtaining a robustness lower bound using a local Lipschitz continuity condition [13]. Recently, Weng et al. [48] extended their work and proposed a robustness metric called the CLEVER score, which is calculated using extreme value theory. Our work adopted one recent metric from each direction.

Attack and defense for deep learning models. There is a large body of work on adversarial attack and defense in recent years, of which we are only able to cover the most relevant. In particular, we adopted three state-of-the-art attacks to generate adversarial samples, i.e., a gradient-based approach (the FGSM method [10]), a saliency map-based approach (JSMA [32]), and an optimization-based approach (the C&W attack [5]). On the defense side, multiple approaches are available to obtain a relatively robust model in the training phase or to detect adversarial samples at runtime. For instance, adversarial training takes adversarial samples into consideration during training [20]. Another relevant direction is robust training, which tries to train a robust DNN model by considering all possible perturbations in the training phase [28]. Besides, mutation testing is adopted to find adversarial samples at runtime [45]. Essentially, testing is complementary to these defense works.

7 CONCLUSION

In this work, we conducted a systematic and quantitative empirical study on 100 state-of-the-art DNN models to investigate the relevance and effectiveness of recently proposed testing criteria and approaches for deep neural networks. Our study is based on a self-contained toolkit which implements all the testing coverage criteria, two robustness metrics and a large set of metrics measurable along the adversarial attack and defense pipeline.
Our results, obtained from correlation analysis on all these metrics from different perspectives, suggest that existing testing coverage criteria have limited correlation with the robustness (or the improvement of the robustness) of DNN models. Furthermore, we provide potential directions to improve DNN testing in general through correlation analysis of robustness metrics and other kinds of metrics. While our results are mostly negative, we believe it is important that future proposed testing criteria and methods undergo similar evaluation so as to provide evidence of their relevance. Our models, adversarial samples, and programs for calculating the metrics are publicly available and can be used as a benchmark for evaluating future research in this direction.

REFERENCES

[1] Martín Abadi, Paul Barham, Jianmin Chen, Zhifeng Chen, Andy Davis, Jeffrey Dean, Matthieu Devin, Sanjay Ghemawat, Geoffrey Irving, Michael Isard, et al. Tensorflow: A system for large-scale machine learning. In 12th Symposium on Operating Systems Design and Implementation, pages 265–283, 2016.
[2] J. B. Stroud. Fundamental statistics in psychology and education. Journal of Educational Psychology, 42:318, May 1951.
[3] Mariusz Bojarski, Davide Del Testa, Daniel Dworakowski, Bernhard Firner, Beat Flepp, Prasoon Goyal, Lawrence D Jackel, Mathew Monfort, Urs Muller, Jiakai Zhang, et al. End to end learning for self-driving cars. arXiv preprint arXiv:1604.07316, 2016.
[4] Chad Brubaker, Suman Jana, Baishakhi Ray, Sarfraz Khurshid, and Vitaly Shmatikov. Using frankencerts for automated adversarial testing of certificate validation in SSL/TLS implementations. In IEEE Symposium on Security and Privacy, pages 114–129, 2014.
[5] Nicholas Carlini and David A. Wagner. Towards evaluating the robustness of neural networks.
In IEEE Symposium on Security and Privacy, pages 39–57, 2017.
[6] Moustapha Cissé, Piotr Bojanowski, Edouard Grave, Yann Dauphin, and Nicolas Usunier. Parseval networks: Improving robustness to adversarial examples. In Proceedings of the 34th International Conference on Machine Learning, pages 854–863, 2017.
[7] Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. Bert: Pre-training of deep bidirectional transformers for language understanding. In Annual Conference of the North American Chapter of the Association for Computational Linguistics, 2019.
[8] Mahyar Fazlyab, Alexander Robey, Hamed Hassani, Manfred Morari, and George J Pappas. Efficient and accurate estimation of lipschitz constants for deep neural networks. arXiv preprint, 2019.
[9] Timon Gehr, Matthew Mirman, Dana Drachsler-Cohen, Petar Tsankov, Swarat Chaudhuri, and Martin Vechev. AI2: Safety and robustness certification of neural networks with abstract interpretation. In IEEE Symposium on Security and Privacy, 2018.
[10] Ian J. Goodfellow, Jonathon Shlens, and Christian Szegedy. Explaining and harnessing adversarial examples. In 3rd International Conference on Learning Representations, 2015.
[11] Kelly Hayhurst, Dan Veerhusen, John Chilenski, and Leanna Rierson. A practical tutorial on modified condition/decision coverage. Technical report, NASA, 2001.
[12] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. Deep residual learning for image recognition. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 770–778, 2016.
[13] Matthias Hein and Maksym Andriushchenko. Formal guarantees on the robustness of a classifier against adversarial manipulation. In Advances in Neural Information Processing Systems, pages 2266–2276, 2017.
[14] Laura Inozemtseva and Reid Holmes. Coverage is not strongly correlated with test suite effectiveness. In 36th International Conference on Software Engineering, pages 435–445, 2014.
[15] Guy Katz, Clark Barrett, David L Dill, Kyle Julian, and Mykel J Kochenderfer. Reluplex: An efficient smt solver for verifying deep neural networks. In International Conference on Computer Aided Verification, pages 97–117. Springer, 2017.
[16] Maurice G Kendall. A new measure of rank correlation. Biometrika, 30(1/2):81–93, 1938.
[17] Jinhan Kim, Robert Feldt, and Shin Yoo. Guiding deep learning system testing using surprise adequacy. In Proceedings of the 41st International Conference on Software Engineering, 2019.
[18] Alex Krizhevsky and Geoffrey Hinton. Learning multiple layers of features from tiny images. Technical report, Citeseer, 2009.
[19] Alexey Kurakin, Ian Goodfellow, and Samy Bengio. Adversarial examples in the physical world. In 5th International Conference on Learning Representations, 2017.
[20] Alexey Kurakin, Ian J. Goodfellow, and Samy Bengio. Adversarial machine learning at scale. In 5th International Conference on Learning Representations, 2017.
[21] Yann LeCun. The mnist database of handwritten digits. http://yann.lecun.com/exdb/mnist/, 1998.
[22] Yann LeCun, Léon Bottou, Yoshua Bengio, and Patrick Haffner. Gradient-based learning applied to document recognition. Proceedings of the IEEE, 86(11):2278–2324, 1998.
[23] Zenan Li, Xiaoxing Ma, Chang Xu, and Chun Cao. Structural coverage criteria for neural networks could be misleading. In Proceedings of the 41st International Conference on Software Engineering: New Ideas and Emerging Results, pages 89–92. IEEE Press, 2019.
[24] Xiang Ling, Shouling Ji, Jiaxu Zou, Jiannan Wang, Chunming Wu, Bo Li, and Ting Wang. Deepsec: A uniform platform for security analysis of deep learning model. In IEEE Symposium on Security and Privacy, 2019.
[25] Bo Luo, Yannan Liu, Lingxiao Wei, and Qiang Xu. Towards imperceptible and robust adversarial example attacks against neural networks. In Proceedings of the Thirty-Second AAAI Conference on Artificial Intelligence, pages 1652–1659, 2018.
[26] Lei Ma, Felix Juefei-Xu, Fuyuan Zhang, Jiyuan Sun, Minhui Xue, Bo Li, Chunyang Chen, Ting Su, Li Li, Yang Liu, et al. Deepgauge: Multi-granularity testing criteria for deep learning systems. In Proceedings of the 33rd ACM/IEEE International Conference on Automated Software Engineering, pages 120–131. ACM, 2018.
[27] Lei Ma, Fuyuan Zhang, Jiyuan Sun, Minhui Xue, Bo Li, Felix Juefei-Xu, Chao Xie, Li Li, Yang Liu, Jianjun Zhao, et al. Deepmutation: Mutation testing of deep learning systems. In 29th International Symposium on Software Reliability Engineering, pages 100–111, 2018.
[28] Aleksander Madry, Aleksandar Makelov, Ludwig Schmidt, Dimitris Tsipras, and Adrian Vladu. Towards deep learning models resistant to adversarial attacks. In 6th International Conference on Learning Representations, 2018.
[29] William M. McKeeman. Differential testing for software. Digital Technical Journal, 10(1):100–107, 1998.
[30] Augustus Odena and Ian Goodfellow. Tensorfuzz: Debugging neural networks with coverage-guided fuzzing. arXiv preprint, 2018.
[31] Nicolas Papernot, Fartash Faghri, Nicholas Carlini, Ian Goodfellow, Reuben Feinman, Alexey Kurakin, Cihang Xie, Yash Sharma, Tom Brown, and Aurko Roy. Technical report on the cleverhans v2.1.0 adversarial examples library. arXiv preprint arXiv:1610.00768, 2016.
[32] Nicolas Papernot, Patrick D. McDaniel, Somesh Jha, Matt Fredrikson, Z. Berkay Celik, and Ananthram Swami. The limitations of deep learning in adversarial settings. In European Symposium on Security and Privacy, pages 372–387, 2016.
[33] Karl Pearson. Notes on the history of correlation. Biometrika, 13(1):25–45, 1920.
[34] Kexin Pei, Yinzhi Cao, Junfeng Yang, and Suman Jana. Deepxplore: Automated whitebox testing of deep learning systems. In Proceedings of the 26th Symposium on Operating Systems Principles, pages 1–18. ACM, 2017.
[35] Florian Schroff, Dmitry Kalenichenko, and James Philbin.
Facenet: A unified embedding for face recognition and clustering. In Proceedings of the IEEE conference on computer vision and pattern recognition, pages 815–823, 2015.
[36] Karen Simonyan and Andrew Zisserman. Very deep convolutional networks for large-scale image recognition. In 3rd International Conference on Learning Representations, 2015.
[37] Charles Spearman. "General intelligence," objectively determined and measured. The American Journal of Psychology, 15(2):201–292, 1904.
[38] Youcheng Sun, Xiaowei Huang, and Daniel Kroening. Testing deep neural networks. arXiv preprint, 2018.
[39] Youcheng Sun, Min Wu, Wenjie Ruan, Xiaowei Huang, Marta Kwiatkowska, and Daniel Kroening. Concolic testing for deep neural networks. In Proceedings of the 33rd ACM/IEEE International Conference on Automated Software Engineering, pages 109–119, 2018.
[40] Christian Szegedy, Wei Liu, Yangqing Jia, Pierre Sermanet, Scott Reed, Dragomir Anguelov, Dumitru Erhan, Vincent Vanhoucke, and Andrew Rabinovich. Going deeper with convolutions. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 1–9, 2015.
[41] Christian Szegedy, Wojciech Zaremba, Ilya Sutskever, Joan Bruna, Dumitru Erhan, Ian Goodfellow, and Rob Fergus. Intriguing properties of neural networks. In 2nd International Conference on Learning Representations, 2014.
[42] Yuchi Tian, Kexin Pei, Suman Jana, and Baishakhi Ray. Deeptest: Automated testing of deep-neural-network-driven autonomous cars. In Proceedings of the 40th international conference on software engineering, pages 303–314, 2018.
[43] Dimitris Tsipras, Shibani Santurkar, Logan Engstrom, Alexander Turner, and Aleksander Madry. Robustness may be at odds with accuracy. arXiv preprint arXiv:1805.12152, 2018.
[44] Aladin Virmaux and Kevin Scaman. Lipschitz regularity of deep neural networks: analysis and efficient estimation. In Advances in Neural Information Processing Systems, pages 3835–3844, 2018.
[45] Jingyi Wang, Guoliang Dong, Jun Sun, Xinyu Wang, and Peixin Zhang. Adversarial sample detection for deep neural network through model mutation testing. In Proceedings of the 41st International Conference on Software Engineering, 2019.
[46] Zhou Wang, Alan C. Bovik, Hamid R. Sheikh, and Eero P. Simoncelli. Image quality assessment: From error visibility to structural similarity. IEEE Transactions on Image Processing, 13(4):600–612, 2004.
[47] Ronald L Wasserstein, Nicole A Lazar, et al. The ASA's statement on p-values: Context, process, and purpose. The American Statistician, 70(2):129–133, 2016.
[48] Tsui-Wei Weng, Huan Zhang, Pin-Yu Chen, Jinfeng Yi, Dong Su, Yupeng Gao, Cho-Jui Hsieh, and Luca Daniel. Evaluating the robustness of neural networks: An extreme value theory approach. In 6th International Conference on Learning Representations, 2018.
[49] Huan Xu and Shie Mannor. Robustness and generalization. Machine learning, 86(3):391–423, 2012.
[50] Zhenlong Yuan, Yongqiang Lu, Zhaoguo Wang, and Yibo Xue. Droid-sec: Deep learning in android malware detection. In Conference of the ACM Special Interest Group on Data Communication, pages 371–372, 2014.
[51] Jiliang Zhang and Xiaoxiong Jiang. Adversarial examples: Opportunities and challenges. arXiv preprint, 2018.
