A Method with Feedback for Aggregation of Group Incomplete Pair-Wise Comparisons

A method for aggregating expert estimates in small groups is proposed. The method is based on a combinatorial approach to the decomposition of pair-wise comparison matrices and to the processing of expert data. It also uses the basic principles of the Analytic Hierarchy/Network Process approaches, such as building a criteria hierarchy to decompose and describe the problem and evaluating objects by means of pair-wise comparisons. It allows priorities to be derived from incomplete group pair-wise comparisons and feedback with experts to be organized in order to achieve sufficient agreement among their estimates. A double entropy inter-rater index is suggested as the agreement measure. For every single pair-wise comparison, each expert may use the scale whose degree of detail (number of points/grades) most adequately reflects that expert’s competence in the issue under consideration. The method takes into consideration all conceptual levels of individual expert competence (subject domain, specific problem, individual pair-wise comparison matrix, separate pair-wise comparison). The method is intended for use in strategic planning in weakly-structured subject domains.

💡 Research Summary

The paper proposes a novel framework for aggregating expert judgments when only incomplete pair‑wise comparison data are available, a situation common in small‑group strategic planning and other weakly‑structured domains. Building on the foundations of Analytic Hierarchy Process (AHP) and Analytic Network Process (ANP), the authors introduce four interlocking components: (1) hierarchical problem decomposition, (2) combinatorial decomposition of the incomplete comparison matrix, (3) multi‑level modeling of expert competence with flexible rating scales, and (4) an iterative feedback loop driven by a double‑entropy inter‑rater index.

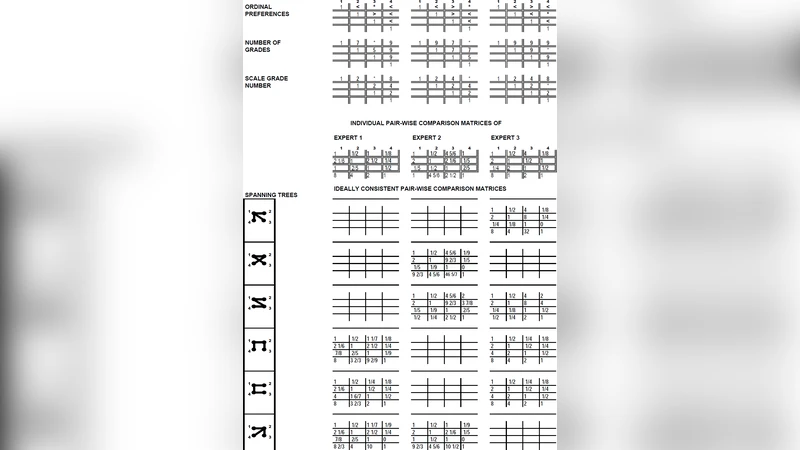

First, the decision problem is broken down into a hierarchy of goals, criteria, and alternatives. For each node a separate pair‑wise comparison matrix is elicited, but unlike in classical AHP the matrices are allowed to be incomplete. The core technical contribution is the combinatorial approach: the incomplete matrix is decomposed into all possible complete sub‑matrices (subsets of the available comparisons that together determine a full matrix). Each sub‑matrix is processed with standard AHP techniques (eigenvector or geometric mean) to obtain a local priority vector. These local vectors are then combined using a weighted average that reflects the number of comparisons in each sub‑matrix and the credibility of the experts who supplied them. This procedure yields a consistent global priority vector even when a substantial fraction of the pair‑wise judgments is missing.
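The combinatorial step can be illustrated with a minimal sketch. It assumes, since the summary does not spell this out, that each "complete sub‑matrix" corresponds to a spanning tree of the graph of available comparisons: a tree of n − 1 ratios is enough to reconstruct a fully consistent matrix, and hence a priority vector. The function names (`is_spanning_tree`, `weights_from_tree`, `combinatorial_priorities`) are hypothetical, and the unweighted geometric mean used to combine the vectors is a simplification of the weighted average described above.

```python
import itertools
import math


def is_spanning_tree(n, subset):
    """Return True if the n-1 edges in `subset` connect all n nodes (union-find)."""
    parent = list(range(n))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for i, j in subset:
        ri, rj = find(i), find(j)
        if ri == rj:
            return False  # this edge closes a cycle, so the subset is not a tree
        parent[ri] = rj
    return True


def weights_from_tree(n, tree, ratio):
    """Propagate weights along one tree; ratio[(i, j)] is the judgment w_i / w_j."""
    adj = {k: [] for k in range(n)}
    for i, j in tree:
        a = ratio[(i, j)]
        adj[i].append((j, 1.0 / a))  # w_j = w_i / a_ij
        adj[j].append((i, a))        # w_i = w_j * a_ij
    w = {0: 1.0}
    stack = [0]
    while stack:
        u = stack.pop()
        for v, factor in adj[u]:
            if v not in w:
                w[v] = w[u] * factor
                stack.append(v)
    total = sum(w.values())
    return [w[k] / total for k in range(n)]


def combinatorial_priorities(n, ratio):
    """Average the priority vectors of all spanning trees (assumed simplification:
    unweighted geometric mean, followed by normalization)."""
    trees = [t for t in itertools.combinations(ratio, n - 1)
             if is_spanning_tree(n, t)]
    if not trees:
        raise ValueError("comparison graph is not connected")
    vectors = [weights_from_tree(n, t, ratio) for t in trees]
    geo = [math.exp(sum(math.log(v[k]) for v in vectors) / len(vectors))
           for k in range(n)]
    total = sum(geo)
    return [g / total for g in geo]


# Incomplete matrix for 4 alternatives: comparisons (0,2) and (1,3) are missing.
ratio = {(0, 1): 4 / 3, (1, 2): 1.5, (2, 3): 2.0, (0, 3): 4.0}
priorities = combinatorial_priorities(4, ratio)
```

When the available judgments are mutually consistent, as in this example, every spanning tree reproduces the same weights, so the aggregate equals the underlying priority vector. Brute-force enumeration over edge subsets is only practical for small matrices.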

Second, the method acknowledges that experts differ in competence across four conceptual levels: (i) domain knowledge, (ii) problem‑specific knowledge, (iii) matrix‑level expertise, and (iv) individual comparison expertise. To capture this heterogeneity, each expert may select a different granularity of rating scale (e.g., 5‑point, 7‑point, or 9‑point) for each comparison. The chosen scale is treated as part of the data, allowing the aggregation algorithm to weight finer‑grained judgments more heavily while still preserving coarser inputs from less confident experts.
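One way to picture how scale granularity could enter the aggregation is sketched below. This is an illustration under stated assumptions, not the authors' formula: the weight of a judgment is taken to be the base-2 logarithm of the number of grades in the scale the expert chose, all judgments are assumed to be already expressed on a common ratio scale, and the names `scale_weight` and `aggregate_pair` are hypothetical.

```python
import math


def scale_weight(num_grades):
    """Assumed weight: information capacity (in bits) of a scale with num_grades grades."""
    return math.log2(num_grades)


def aggregate_pair(judgments):
    """Weighted geometric mean of several experts' judgments on one comparison.

    `judgments` is a list of (ratio, num_grades) tuples, where `ratio` is the
    preference ratio on a common ratio scale (an assumption of this sketch).
    """
    total = sum(scale_weight(k) for _, k in judgments)
    log_mean = sum(scale_weight(k) * math.log(r) for r, k in judgments) / total
    return math.exp(log_mean)


# Two experts compare the same pair: one on a coarse 3-point scale,
# one on a fine 9-point scale. The finer judgment pulls the result toward 6.
combined = aggregate_pair([(2.0, 3), (6.0, 9)])
```

With equal scales the function reduces to the ordinary geometric mean, so coarser inputs are down-weighted but never discarded.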

Third, agreement among experts is monitored with a double‑entropy index. The first entropy term measures the dispersion of an individual expert’s responses (intra‑rater uncertainty), while the second term quantifies the mutual entropy between different experts (inter‑rater disagreement). The two terms are linearly combined into an overall index I = α·H₁ + β·H₂. A predefined threshold τ determines whether consensus is sufficient. If I exceeds τ, the algorithm identifies the comparison items that contribute most to H₂ and prompts the relevant experts to re‑evaluate them. The process repeats until I ≤ τ, at which point the final priority vector is accepted.
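The feedback rule can be sketched as follows. The summary does not give exact definitions of H₁ and H₂, so this sketch makes loud assumptions: H₁ is the mean Shannon entropy of each expert's own grades, H₂ is the mean Shannon entropy of the grades that different experts assign to the same comparison item, and α = β = 0.5 by default. The names `entropy`, `double_entropy_index`, and `items_to_revisit` are hypothetical.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (bits) of the empirical distribution of `labels`."""
    total = len(labels)
    return sum((c / total) * math.log2(total / c)
               for c in Counter(labels).values())


def double_entropy_index(judgments, alpha=0.5, beta=0.5):
    """Return (I, per_item) where I = alpha * H1 + beta * H2.

    `judgments[expert][item]` is the grade an expert gave to a comparison item.
    H1 and H2 follow the assumed definitions stated in the lead-in.
    """
    experts = list(judgments)
    items = sorted({item for e in experts for item in judgments[e]})
    h1 = sum(entropy(list(judgments[e].values())) for e in experts) / len(experts)
    per_item = {
        item: entropy([judgments[e][item] for e in experts if item in judgments[e]])
        for item in items
    }
    h2 = sum(per_item.values()) / len(items)
    return alpha * h1 + beta * h2, per_item


def items_to_revisit(per_item, index, tau):
    """If the index exceeds tau, return the items with the largest disagreement."""
    if index <= tau:
        return []  # consensus is sufficient; no feedback round needed
    worst = max(per_item.values())
    return [item for item, h in per_item.items() if h == worst]


# Two experts agree on item "x" but disagree on item "y".
judgments = {"A": {"x": 3, "y": 5}, "B": {"x": 3, "y": 7}}
index, per_item = double_entropy_index(judgments)
revisit = items_to_revisit(per_item, index, tau=0.65)
```

In a full loop, the experts who rated the returned items would be asked to re-evaluate them, after which the index is recomputed and compared with τ again.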

A numerical illustration with four experts evaluating six alternatives on three criteria demonstrates the method’s practicality. About 30% of the pair‑wise entries were missing. The combinatorial decomposition generated twelve complete sub‑matrices, and their aggregated priorities produced an initial double‑entropy value of 0.78, above the threshold τ = 0.65. After two rounds of targeted feedback, the index fell to 0.61, below the threshold, and a stable set of priorities emerged. The case study shows how the approach can recover robust rankings despite sparse data and how the feedback loop actively reduces disagreement.

The authors discuss strengths such as robustness to missing data, explicit modeling of expert competence, and transparent consensus building. They also acknowledge limitations: the combinatorial step can become computationally intensive for larger groups or higher‑dimensional problems, the freedom to choose rating scales may complicate interpretation, and the selection of τ and the weighting coefficients α, β remains somewhat subjective. Future work is suggested in the form of approximation algorithms (e.g., sampling of sub‑matrices), scale‑normalization techniques, and extensive field trials to calibrate the entropy thresholds.

In conclusion, the proposed method extends AHP/ANP methodology to settings where complete pair‑wise data are unavailable and expert competence varies widely. By integrating combinatorial matrix reconstruction, flexible scaling, and a double‑entropy‑driven feedback mechanism, it offers a systematic, repeatable process for achieving consensus and deriving reliable priorities in strategic planning and other weakly‑structured decision contexts.
