Shared Memory Pipelined Parareal

For the parallel-in-time integration method Parareal, pipelining can be used to hide some of the cost of the serial correction step and improve its efficiency. The paper introduces a basic OpenMP implementation of pipelined Parareal and compares it to a standard MPI-based variant. Both versions yield almost identical runtimes, but, depending on the compiler, the OpenMP variant consumes about 7% less energy and has a significantly smaller memory footprint. However, its higher implementation complexity might make it difficult to use in legacy codes and in combination with spatial parallelisation.

💡 Research Summary

The paper investigates the use of pipelining to improve the efficiency of the parallel‑in‑time integration method Parareal and presents a shared‑memory implementation based on OpenMP. Parareal alternates between a costly fine propagator F (e.g., a high‑order Runge‑Kutta scheme) and a cheap coarse propagator G (e.g., forward Euler). In the classic algorithm the coarse propagation is performed serially for all time slices, which limits speed‑up according to Amdahl’s law. By overlapping the coarse step of one slice with the fine step of the next, the “pipeline” hides part of the coarse cost, reducing the effective serial fraction and increasing the theoretical speed‑up from equation (3) to equation (4).

While MPI naturally supports this pipeline because each process can progress independently, a naïve OpenMP parallel loop introduces implicit synchronisation that destroys the overlap. The authors therefore design an explicit OpenMP scheme that uses the directives parallel, do, ordered, lock, and nowait. Each thread creates a private lock for its slice, acquires the lock before writing the fine result, releases it, and then enters an ordered region where it computes the coarse prediction, the correction δq, and writes the updated start value for the next slice. The lock for slice p+1 prevents the following thread from overwriting the buffer before its fine integration has finished. This lock‑based approach guarantees data‑race‑free execution while preserving the pipeline flow.

The theoretical model shows that the pipeline reduces the coarse cost from P·c_c to c_c per iteration, but the initial prediction still costs P·c_c. Consequently, the benefit is most pronounced when the number of iterations K is much larger than the number of slices P. The authors fix K = 4 and P = 24 for their experiments, a regime where the pipeline yields a modest but measurable gain.

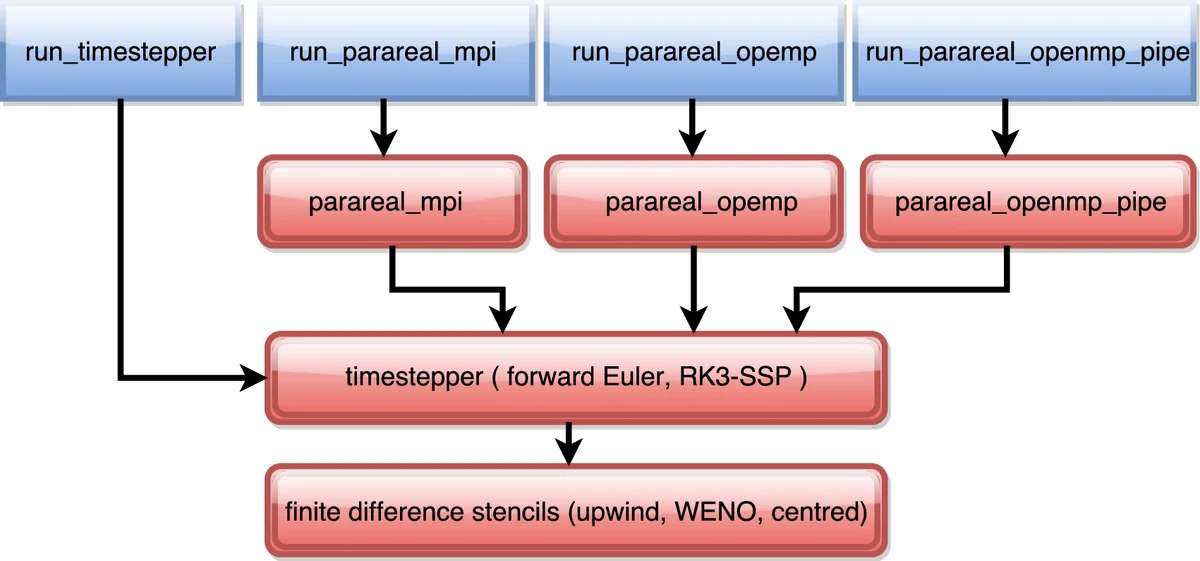

Numerical experiments solve the three‑dimensional Burgers equation on a 40³ grid using a fifth‑order WENO spatial discretisation and an RK3‑SSP time integrator for the fine propagator, and a first‑order upwind Euler scheme for the coarse propagator. The coarse step is roughly forty times faster than the fine step. With four Parareal iterations the error matches that of the serial fine integration (≈5.9 × 10⁻⁵), confirming correctness.

Performance is evaluated on two platforms: an 8‑core Intel Xeon workstation running Linux, and a node of the Cray XC40 “Piz Dora” (2 × 12‑core Broadwell CPUs). Both GCC and the Cray Fortran compiler are used. Runtime results show that OpenMP and MPI versions have almost identical execution times; the OpenMP version is slightly faster on the workstation for P = 8 cores. Speed‑up curves fall short of the theoretical bound because of overheads such as thread creation, lock management, and ordered region synchronisation. However, the OpenMP implementation consumes 6–8 % less energy and uses about 30 % less memory, owing to the absence of MPI message buffers and the shared‑memory data layout.

The authors discuss the trade‑offs. The OpenMP pipeline offers better energy and memory efficiency but introduces considerable code complexity: explicit lock handling, ordered sections, and nowait clauses make the program harder to read, debug, and maintain. Integrating this approach into legacy codes or coupling it with spatial domain decomposition (which typically uses MPI) would require nested parallelism or task‑based extensions, increasing the implementation burden. Moreover, the current code assumes a fixed number of iterations; an adaptive strategy that idles converged slices could improve efficiency but is not implemented.

In conclusion, the shared‑memory pipelined Parareal demonstrates that OpenMP can achieve comparable runtimes to MPI while reducing resource consumption, but its higher implementation complexity limits its immediate applicability to large, production‑level codes. Future work should explore task‑based OpenMP pipelines, dynamic iteration control, and seamless hybridisation with spatial parallelisation to fully exploit modern “fat‑node” architectures.

Comments & Academic Discussion

Loading comments...

Leave a Comment