Inference with Deep Generative Priors in High Dimensions

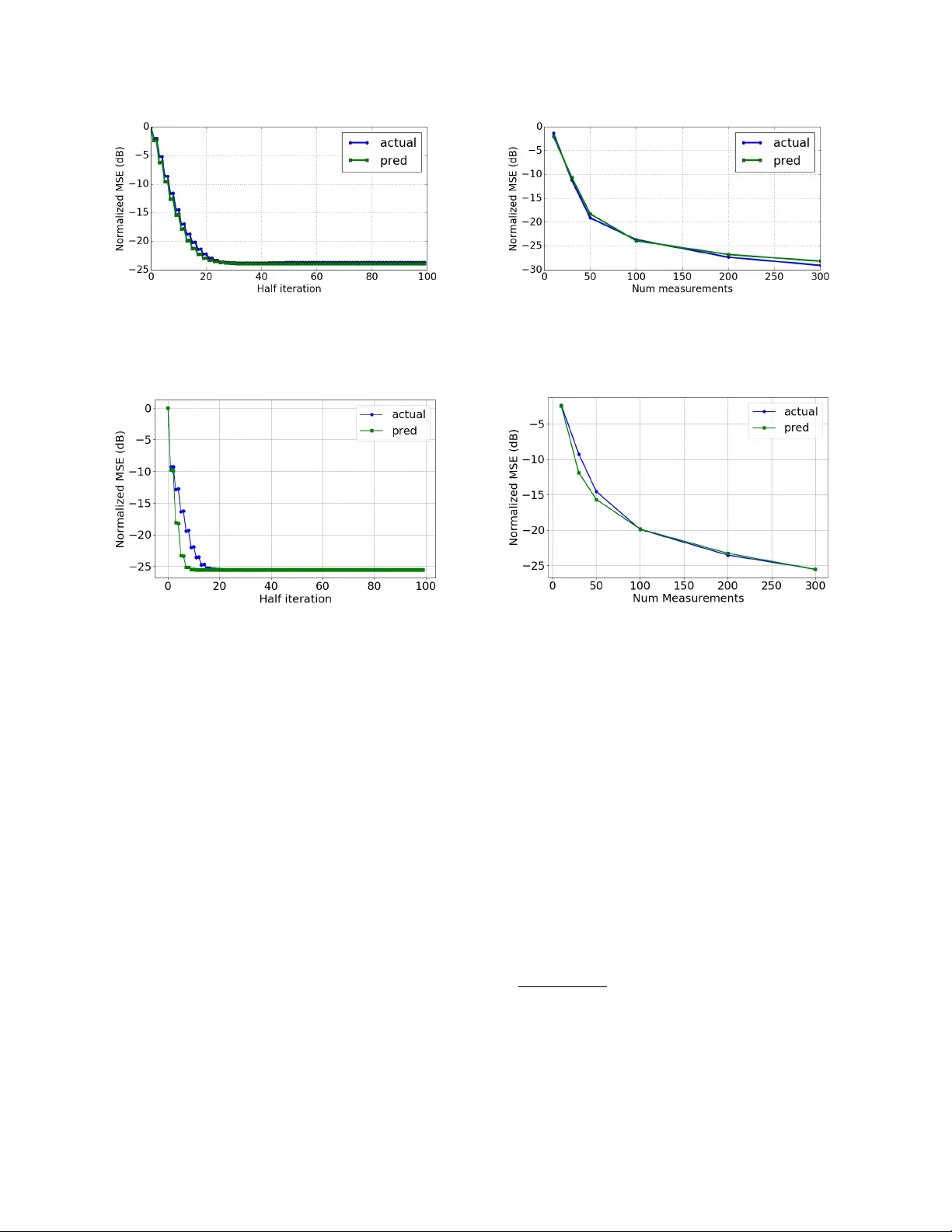

Deep generative priors offer powerful models for complex-structured data, such as images, audio, and text. Using these priors in inverse problems typically requires estimating the input and/or hidden signals in a multi-layer deep neural network from …

Authors: Parthe Pandit, Mojtaba Sahraee-Ardakan