Unsupervised Adversarial Domain Adaptation Based On The Wasserstein Distance For Acoustic Scene Classification

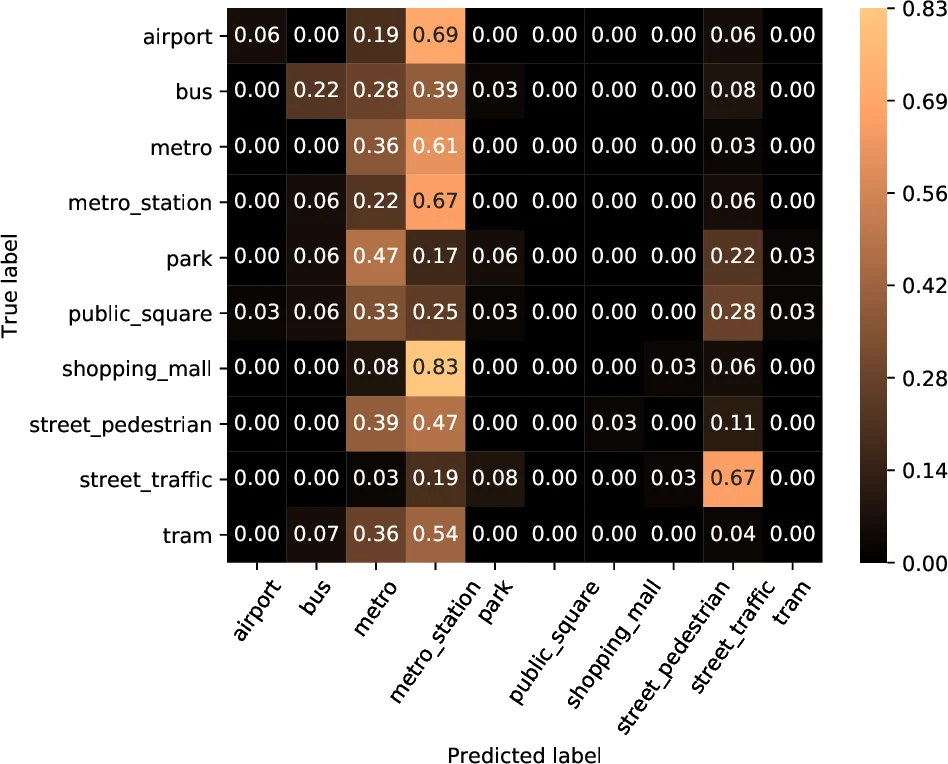

A challenging problem in deep learning-based machine listening is the degradation of performance on data recorded under unseen conditions. In this paper we focus on the acoustic scene classification (ASC) task and propose an adversarial deep learning method that adapts an ASC system to a new acoustic channel, namely data captured with a different recording device. We build upon the theoretical model of the HΔH-distance and a previous adversarial discriminative deep learning method for unsupervised domain adaptation in ASC, and we present an adversarial training method based on the Wasserstein distance. Using the TUT Acoustic Scenes dataset, we improve the state-of-the-art mean accuracy on data from the unseen conditions from 32% to 45%.
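The abstract names the Wasserstein distance, but this excerpt does not give the training objective. The sketch below is a hedged illustration only. It assumes Python with NumPy and uses hypothetical helper names (`wasserstein_1d`, `linear_critic_estimate`) that do not come from the paper. It computes the 1-Wasserstein distance exactly for 1-D samples, and then estimates it through the Kantorovich-Rubinstein dual form $W_1(\mathcal{D}_S, \mathcal{D}_T) = \sup_{\|f\|_L \le 1} \mathbb{E}_{x \sim \mathcal{D}_S}[f(x)] - \mathbb{E}_{x \sim \mathcal{D}_T}[f(x)]$. A deliberately simple linear critic stands in for the neural network critic that adversarial methods of this kind usually train.

```python
import numpy as np


def wasserstein_1d(x, y):
    """Exact W1 between two equal-size 1-D empirical samples (illustrative helper).

    In 1-D the optimal transport plan pairs the sorted samples, so W1 is the
    mean absolute difference between the sorted values.
    """
    x = np.sort(np.asarray(x, dtype=float))
    y = np.sort(np.asarray(y, dtype=float))
    if x.shape != y.shape:
        raise ValueError("this sketch assumes equal sample sizes")
    return float(np.mean(np.abs(x - y)))


def _as_features(a):
    """Treat a 1-D array as n samples with a single feature each."""
    a = np.asarray(a, dtype=float)
    return a.reshape(-1, 1) if a.ndim == 1 else a


def linear_critic_estimate(source, target, steps=200, lr=0.1):
    """Dual (Kantorovich-Rubinstein) estimate of W1 with a linear critic.

    Hypothetical helper: the critic is f(z) = z @ w with ||w||_2 <= 1, which
    keeps it 1-Lipschitz. Projected gradient ascent maximizes
    mean f(source) - mean f(target); the result is a lower bound on W1.
    """
    s, t = _as_features(source), _as_features(target)
    w = np.zeros(s.shape[1])
    for _ in range(steps):
        # Gradient of mean(s @ w) - mean(t @ w) with respect to w.
        grad = s.mean(axis=0) - t.mean(axis=0)
        w = w + lr * grad
        norm = np.linalg.norm(w)
        if norm > 1.0:
            # Project back onto the unit ball to enforce the Lipschitz constraint.
            w = w / norm
    return float(np.mean(s @ w) - np.mean(t @ w))
```

With samples `[-1, 1]` and `[0, 0]`, `wasserstein_1d` returns 1.0 while `linear_critic_estimate` returns 0.0, because the two samples share the same mean; a linear critic can only detect mean shifts, which is one reason such methods use a nonlinear critic. In a typical Wasserstein-based adversarial setup (assumed here, not confirmed by this excerpt), the critic is trained to maximize this estimate while the feature extractor is trained to minimize it alongside the source classification loss. How the paper enforces the Lipschitz constraint (for example, weight clipping or a gradient penalty) is not stated in this excerpt.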

💡 Research Summary

Acoustic scene classification (ASC) has become a cornerstone task in machine listening, enabling applications ranging from urban sound monitoring to context‑aware devices. While deep neural networks achieve high accuracy when trained and tested on data captured under identical conditions, their performance drops dramatically when the recording device changes, a phenomenon known as domain shift. This paper addresses the unsupervised domain adaptation (DA) problem for ASC, where only labeled source data (high‑quality recordings) are available and the target data (recordings from consumer‑grade devices) are unlabeled.

The authors build on the theoretical framework of the HΔH‑distance, which upper-bounds the target error by the sum of the source error, the HΔH‑distance between the source and target feature distributions, and a term involving the ideal joint classifier. Previous work (cited as
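For reference, the bound described above is commonly stated as follows, with the divergence term scaled by one half. The notation is the conventional one from the domain adaptation literature and is an assumption, since this excerpt does not reproduce the paper's own equation: $\epsilon_S(h)$ and $\epsilon_T(h)$ are the source and target errors of a classifier $h \in \mathcal{H}$, $\mathcal{D}_S$ and $\mathcal{D}_T$ are the source and target feature distributions, and $\lambda$ is the combined error of the ideal joint classifier.

$$
\epsilon_T(h) \le \epsilon_S(h) + \frac{1}{2}\, d_{\mathcal{H}\Delta\mathcal{H}}(\mathcal{D}_S, \mathcal{D}_T) + \lambda,
\qquad
\lambda = \min_{h' \in \mathcal{H}} \left[ \epsilon_S(h') + \epsilon_T(h') \right]
$$

Adversarial feature alignment aims to shrink the divergence term, while training on labeled source data keeps $\epsilon_S(h)$ low.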
